Jr guzman-item-analysis

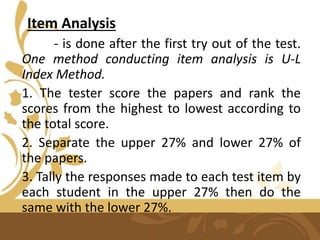

- 1. Item Analysis is done after the first tryout of the test. One method of conducting item analysis is the U-L Index Method:
     1. The tester scores the papers and ranks them from highest to lowest according to total score.
     2. Separate the upper 27% and the lower 27% of the papers.
     3. Tally the responses made to each test item by each student in the upper 27%, then do the same for the lower 27%.

- 2. 4. Compute the percentage of the upper group that got the item right. This is called U.
     5. Compute the percentage of the lower group that got the item right. This is called L.
     6. Average the U and L percentages. The result is the difficulty index.
     7. Subtract the L percentage from the U percentage. The result is the discrimination index.
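
The seven steps of the U-L Index Method can be sketched in Python as follows. This is a minimal illustration, not a standard implementation: the function name `ul_index`, the 0/1 scoring of each response, and the rounding of the 27% group size to the nearest whole student are all assumptions made for the example.

```python
def ul_index(total_scores, item_correct, fraction=0.27):
    """Return (difficulty, discrimination) for one item via the U-L method.

    total_scores: total test score of each student.
    item_correct: 1 if that student got the item right, else 0 (assumed coding).
    fraction: share of papers in each of the upper and lower groups.
    """
    if len(total_scores) != len(item_correct):
        raise ValueError("one item response is needed per student")
    n = len(total_scores)
    # Assumption: round the 27% group size to the nearest whole student.
    group_size = max(1, round(n * fraction))

    # Step 1: rank the papers from highest to lowest total score.
    order = sorted(range(n), key=lambda i: total_scores[i], reverse=True)
    # Step 2: separate the upper and lower groups.
    upper, lower = order[:group_size], order[-group_size:]

    # Steps 3-5: proportion of each group that got the item right.
    u = sum(item_correct[i] for i in upper) / group_size
    l = sum(item_correct[i] for i in lower) / group_size

    # Step 6: the difficulty index is the average of U and L.
    # Step 7: the discrimination index is U minus L.
    return (u + l) / 2, u - l


# Hypothetical data: 15 students, so each group holds 4 papers.
scores = list(range(15, 0, -1))
item = [1, 1, 1, 0, 1, 0, 1, 1, 0, 1, 0, 0, 1, 0, 0]
print(ul_index(scores, item))  # (0.5, 0.5)
```

Here three of the four upper-group students and one of the four lower-group students answered correctly, so U = .75 and L = .25.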

- 4. After the item analysis, the tester uses the following table of equivalents in interpreting the difficulty index:
     - .00 – .20: Very Difficult
     - .21 – .80: Moderately Difficult
     - .81 – 1.00: Very Easy
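
The table of equivalents can be expressed as a small lookup. This is a sketch only: the function name `interpret_difficulty` is hypothetical, and because the table leaves gaps between bands (for example between .20 and .21), the code assumes each band's upper limit is inclusive.

```python
def interpret_difficulty(index):
    """Map a difficulty index (a proportion from 0 to 1) to its label."""
    if not 0.0 <= index <= 1.0:
        raise ValueError("difficulty index must lie between 0 and 1")
    # Assumption: upper limits are inclusive, so .205 counts as moderate.
    if index <= 0.20:
        return "Very Difficult"
    if index <= 0.80:
        return "Moderately Difficult"
    return "Very Easy"


print(interpret_difficulty(0.50))  # Moderately Difficult
```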

- 5. Item Revision: On the basis of the item analysis data, test items are revised for improvement. After revising the items that need revision, the tester needs another tryout. The revised test must be administered to the same set of samples.

- 6. Third Tryout: After two revisions, the test is considered ready for its final form. The test is good in terms of its difficulty and discrimination indices. At this point, the test is ready for reliability testing.

- 7. How to Establish Reliability: Test reliability is an element of test construction and test standardization. It is the degree to which a measure consistently returns the same result when repeated under similar conditions.

- 8. Reliability does not imply validity. That is, a reliable measure is measuring something consistently, but not necessarily what it is supposed to be measuring.

- 9. Multiple-administration methods require that two assessments be administered. 1. Test-retest reliability is estimated as the Pearson product-moment correlation coefficient between two administrations of the same measure. This is sometimes known as the coefficient of stability.

- 10. 2. Alternate-forms reliability is estimated by the Pearson product-moment correlation coefficient between two different forms of a measure, usually administered together. This is sometimes known as the coefficient of equivalence.
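
Both multiple-administration estimates reduce to the same computation: a Pearson product-moment correlation between two lists of scores for the same students. The sketch below uses only the Python standard library. The function name `pearson_r` and the sample scores are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long lists of at least 2 scores")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Sums of cross-products and squared deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("scores must vary in both administrations")
    return sxy / sqrt(sxx * syy)


# Hypothetical scores of five students on two administrations.
first = [12, 15, 9, 20, 17]
second = [11, 16, 10, 19, 18]
print(round(pearson_r(first, second), 3))
```

Passing scores from two administrations of the same test gives the coefficient of stability. Passing scores from two different forms gives the coefficient of equivalence.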

- 11. Single-administration methods include split-half and internal consistency. 1. Split-half reliability treats the two halves of a measure as alternate forms. This “halves reliability” estimate is then stepped up to the full test length using the Spearman-Brown prediction formula. This is sometimes referred to as the coefficient of internal consistency.
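
A split-half estimate can be sketched as below. The slide does not say how the test is halved, so the odd-even item split is an assumption, as are the function names. For two halves of equal length, the Spearman-Brown step-up is 2r / (1 + r), where r is the correlation between the half scores.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equally long score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def spearman_brown(r_half):
    """Step a half-test correlation up to the full test length."""
    return 2 * r_half / (1 + r_half)


def split_half_reliability(item_scores):
    """item_scores: one row per student, one column per item.

    Assumption: the halves are the odd-numbered and even-numbered items.
    """
    odd_half = [sum(row[0::2]) for row in item_scores]
    even_half = [sum(row[1::2]) for row in item_scores]
    return spearman_brown(pearson_r(odd_half, even_half))


# A half-test correlation of .50 steps up to about .67 for the full test.
print(round(spearman_brown(0.5), 2))  # 0.67
```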

- 13. 2. The most common internal consistency measure is Cronbach’s alpha, which is usually interpreted as the mean of all possible split-half coefficients. Cronbach’s alpha is a generalization of an earlier form of estimating internal consistency, the Kuder-Richardson Formula 20.

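- 14. Cronbach’s alpha and the corresponding internal consistency:

      | Cronbach’s alpha | Internal consistency |
      | --- | --- |
      | α ≥ 0.9 | Excellent |
      | 0.9 > α ≥ 0.8 | Good |
      | 0.8 > α ≥ 0.7 | Acceptable |
      | 0.7 > α ≥ 0.6 | Questionable |
      | 0.6 > α ≥ 0.5 | Poor |
      | 0.5 > α | Unacceptable |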
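
Cronbach’s alpha and its interpretation can be sketched as follows. The slides do not give the formula, so the code assumes the usual variance form, α = k/(k − 1) × (1 − Σ item variances / variance of total scores), where k is the number of items. The function names and sample data are hypothetical.

```python
from statistics import pvariance


def cronbach_alpha(item_scores):
    """item_scores: one row per student, one column per item."""
    k = len(item_scores[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Variance of each item across students.
    item_vars = [pvariance([row[j] for row in item_scores]) for j in range(k)]
    # Variance of the students' total scores.
    total_var = pvariance([sum(row) for row in item_scores])
    if total_var == 0:
        raise ValueError("total scores must vary across students")
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def interpret_alpha(alpha):
    """Label alpha using the bands in the table above."""
    bands = [(0.9, "Excellent"), (0.8, "Good"), (0.7, "Acceptable"),
             (0.6, "Questionable"), (0.5, "Poor")]
    for lower_bound, label in bands:
        if alpha >= lower_bound:
            return label
    return "Unacceptable"


# Hypothetical 0/1 responses of three students to four items.
responses = [[1, 1, 1, 1], [1, 1, 0, 0], [0, 0, 0, 0]]
alpha = cronbach_alpha(responses)
print(round(alpha, 3), interpret_alpha(alpha))  # 0.889 Good
```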