JBEI January 2021 Research Highlights

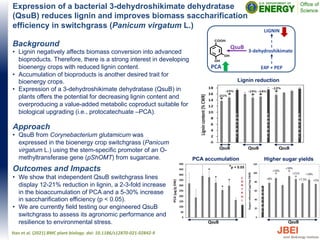

- 1. Expression of a bacterial 3-dehydroshikimate dehydratase (QsuB) reduces lignin and improves biomass saccharification efficiency in switchgrass (Panicum virgatum L.) Approach • QsuB from Corynebacterium glutamicum was expressed in the bioenergy crop switchgrass (Panicum virgatum L.) using the stem-specific promoter of an O- methyltransferase gene (pShOMT) from sugarcane. Outcomes and Impacts • We show that independent QsuB switchgrass lines display 12-21% reduction in lignin, a 2-3-fold increase in the bioaccumulation of PCA and a 5-30% increase in saccharification efficiency (p < 0.05). • We are currently field testing our engineered QsuB switchgrass to assess its agronomic performance and resilience to environmental stress. Hao et al. (2021) BMC plant biology. doi: 10.1186/s12870-021-02842-9 Lignin reduction PCA accumulation C O N T R O L Higher sugar yields C O N T R O L *p < 0.05 QsuB C O N T R O L C O N T R O L C O N T R O L QsuB QsuB QsuB QsuB C O N T R O L QsuB PCA E4P + PEP 3-dehydroshikimate LIGNIN Background • Lignin negatively affects biomass conversion into advanced bioproducts. Therefore, there is a strong interest in developing bioenergy crops with reduced lignin content. • Accumulation of bioproducts is another desired trait for bioenergy crops. • Expression of a 3-dehydroshikimate dehydratase (QsuB) in plants offers the potential for decreasing lignin content and overproducing a value-added metabolic coproduct suitable for biological upgrading (i.e., protocatechuate –PCA).

- 2. Multi-Omics Driven Metabolic Network Reconstruction and Analysis of Lignocellulosic Carbon Utilization in Rhodosporidium toruloides Background • An oleaginous yeast Rhodosporidium toruloides is a promising host for converting lignocellulosic biomass to bioproducts and biofuels • In this study, we performed multi-omics analysis and reconstructed the genome-scale metabolic network of R. toruloides to study the utilization of carbon sources derived from lignocellulosic biomass Approach • Reconstruction of the genome-scale metabolic network, manual curation of the reconstructed network, and validation of the metabolic model were performed using Jupyter notebooks in a fully reproducible manner • A multi-omics dataset including transcriptomics, proteomics, metabolomics, lipidomics, and RB-TDNA sequencing was generated and integrated with the metabolic model to investigate lignocellulosic carbon utilization Outcomes and Impacts • A large and comprehensive multi-omics dataset was generated for R. toruloides grown on glucose, xylose, arabinose, or p-coumaric acid as carbon sources found after deconstruction of lignocellulose • An accurate genome-scale metabolic network was developed for R. toruloides and validated against high-throughput growth phenotype and functional genomics data • The multi-omics dataset and genome-scale metabolic model will be utilized to maximize the use of the carbon in lignocellulosic biomass feedstocks and improve the production of biofuel and bioproduct precursors Kim et al. (2021) Front. Bioeng. Biotechnol. 8:612832, doi: 10.3389/fbioe.2020.612832 The metabolic reactions, genes, and their localization for p-coumaric acid degradation pathway in R. toruloides were proposed using the multi-omics dataset and metabolic network reconstruction

- 3. Efficient production of oxidized terpenoids via engineering fusion proteins of terpene synthase and cytochrome P450 Background • The functionalization of terpenes using cytochrome P450 enzymes is a versatile route to the production of useful derivatives that can be further converted to value-added products. • Many terpenes are hydrophobic and volatile making their availability as a substrate for P450 enzymes significantly limited during microbial production. Approach • This work developed a strategy to improve the accessibility of terpene molecules for the P450 reaction by linking terpene synthase and P450. • As a model system, fusion proteins of 1,8-cineole synthase (CS) and P450cin were investigated via experimental and structural analysis. Outcomes and Impacts • Fusion proteins of CS and P450cin showed an improved hydroxylation of the monoterpenoid 1,8-cineole up to 5.4-fold. • Structural analysis by SEC-SAXS indicated the linker length affects the flexibility, which eventually affects the catalytic activity, of the fusion enzymes. • The application of fusion strategy to the biosynthetic pathway for oxidized epi-isozizaene products resulted in a 90-fold increase. • This strategy could be widely applicable to improve the biosynthetic titer of the functionalized products from hydrophobic terpene intermediates. Wang et al. (2021) Metabolic Engineering, 64: 41–51. (doi.org/10.1016/j.ymben.2021.01.004) Enzyme fusions were engineered to improve substrate availability as terpenes are hydrophobic and easily lost by phase separation. Fusion proteins showed improved production of oxidized mono- and sesquiterpenoids.

- 4. Genomic mechanisms of climate adaptation in polyploid bioenergy switchgrass Background • Switchgrass (P. virgatum) is both a promising biofuel crop and an important component of the North American tallgrass prairie. • Biomass production is the principal breeding target for switchgrass as a forage and bioenergy crop Approach • Deep PacBio long-read sequencing coupled with deep short-read polishing and bacterial artificial chromosome (BAC) clone validation produced a highly contiguous ‘v5’ AP13 genome assembly. • Analysis of biomass and survival among 732 resequenced genotypes, which were grown across 10 common gardens that span 1,800 km of latitude to find evidence of climate adaptation. Outcomes and Impacts • Highly accurate and complete reference genome for tetraploid switchgrass was generated using long-read DNA sequencing technology • With the help of whole genome sequence it was estimated that the two parental species of switchgrass diverged from a common ancestor about 6.7 million years ago, and that the two genomes came back together in a whole-genome duplication at least 4.6 million years ago • Investigating patterns of climate adaptation, the genome resources and gene–trait associations developed here provide breeders with the necessary tools to increase switchgrass yield for the sustainable production of bioenergy. Geographical distribution of common gardens (n = 10) and plant collection locations (n = 700 georeferenced genotypes), and spatial distribution models of each ecotype. The ecotype color legend accompanies the representative images of each ecotype to the right of the map (images were taken at the end of the 2019 growing season and the background was removed with ImageJ (https://imagej.nih.gov/ij)). White-outlined points (coloured by ecotype, or in white if no ecotype assignment was made) indicate the georeferenced collection sites of the diversity panel. The labeled white circles with black crosses indicate the locations of the 10 experimental gardens. Scale bars, 1 m. Lovell, J.T., MacQueen, A.H., Mamidi, S. et al. Nature (2021). https://doi.org/10.1038/s41586-020- 03127-1

- 5. Technoeconomic analysis for biofuels and bioproducts Background • This article provides a review of current literature on Technoeconomic analysis (TEA), which is an approach for conducting process design and simulation, informed by empirical data, to estimate capital costs, operating costs, mass balances, and energy balances for a commercial scale biorefinery • TEA serves as a useful method to screen potential research priorities, identify cost bottlenecks at the earliest stages of research, and provide the mass and energy data needed to conduct life-cycle environmental assessments. Approach • We reviewed recently published work on TEA applied to biofuel and bioproduct production • We reviewed the challenges of integrating conventional process simulation software with uncertainty analysis and life-cycle assessment and noted recent examples of good implementations of this approach • We also reviewed the types of financial metrics that are most commonly used in industry to evaluate potential projects, in contrast with metrics used in academic and research settings Outcomes and Impacts • Recent studies have produced new tools and methods to enable faster iteration on potential designs, more robust uncertainty analysis, and greater accessibility through the use of open-source platforms. • There is also a trend toward more expansive system boundaries to incorporate the impact of policy incentives, use-phase performance differences, and potential impacts on global market supply. • Recent advances in high-throughput experimental pipelines have great potential if integrated with TEA to generate insights about commercial- scale implications. Scown et al. (2021) COBIOT, doi: 10.1016/j.copbio.2021.01.002 Figure 1. Scope of well-conducted technoeconomic analyses for biofuels and bioproducts