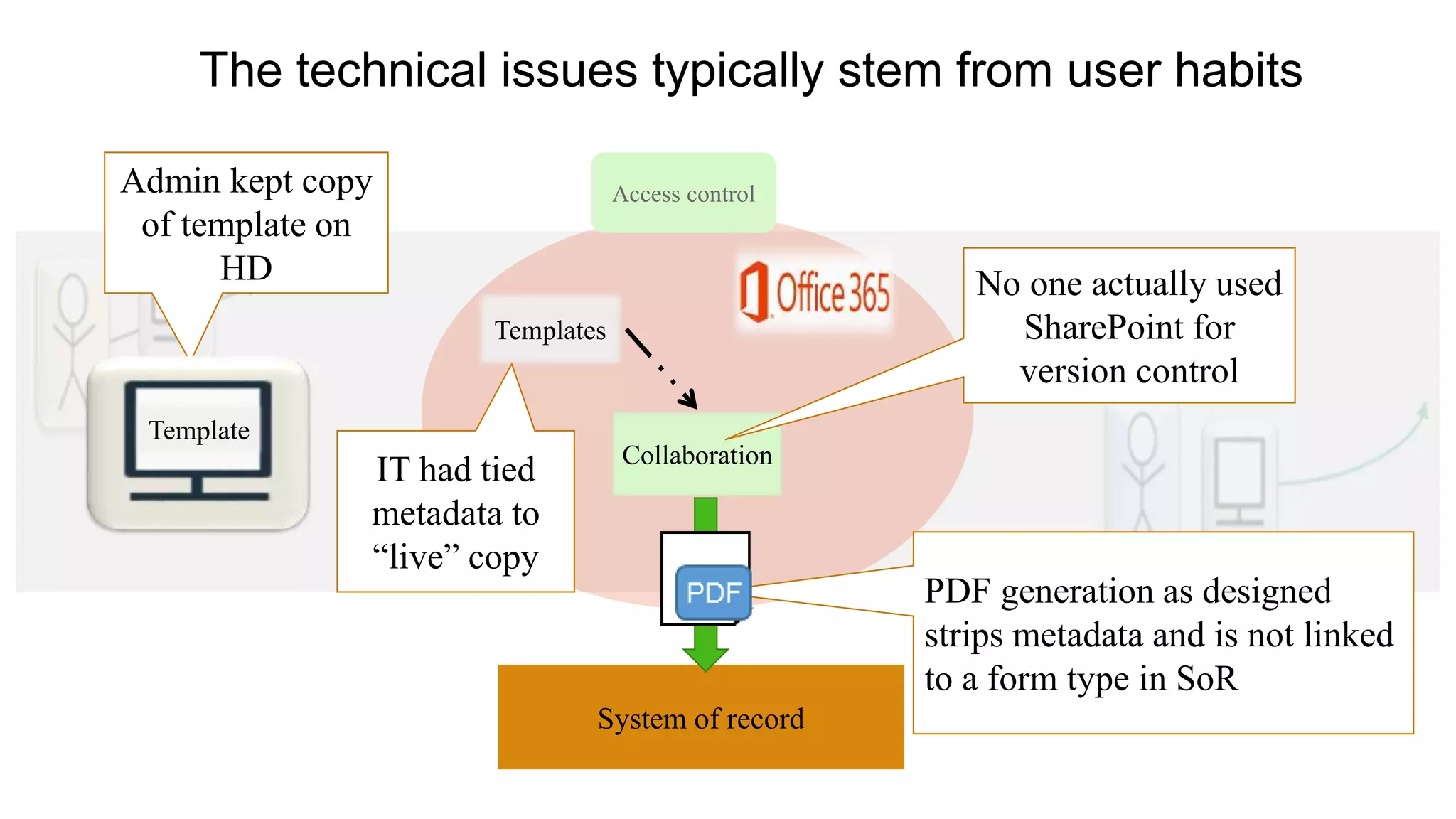

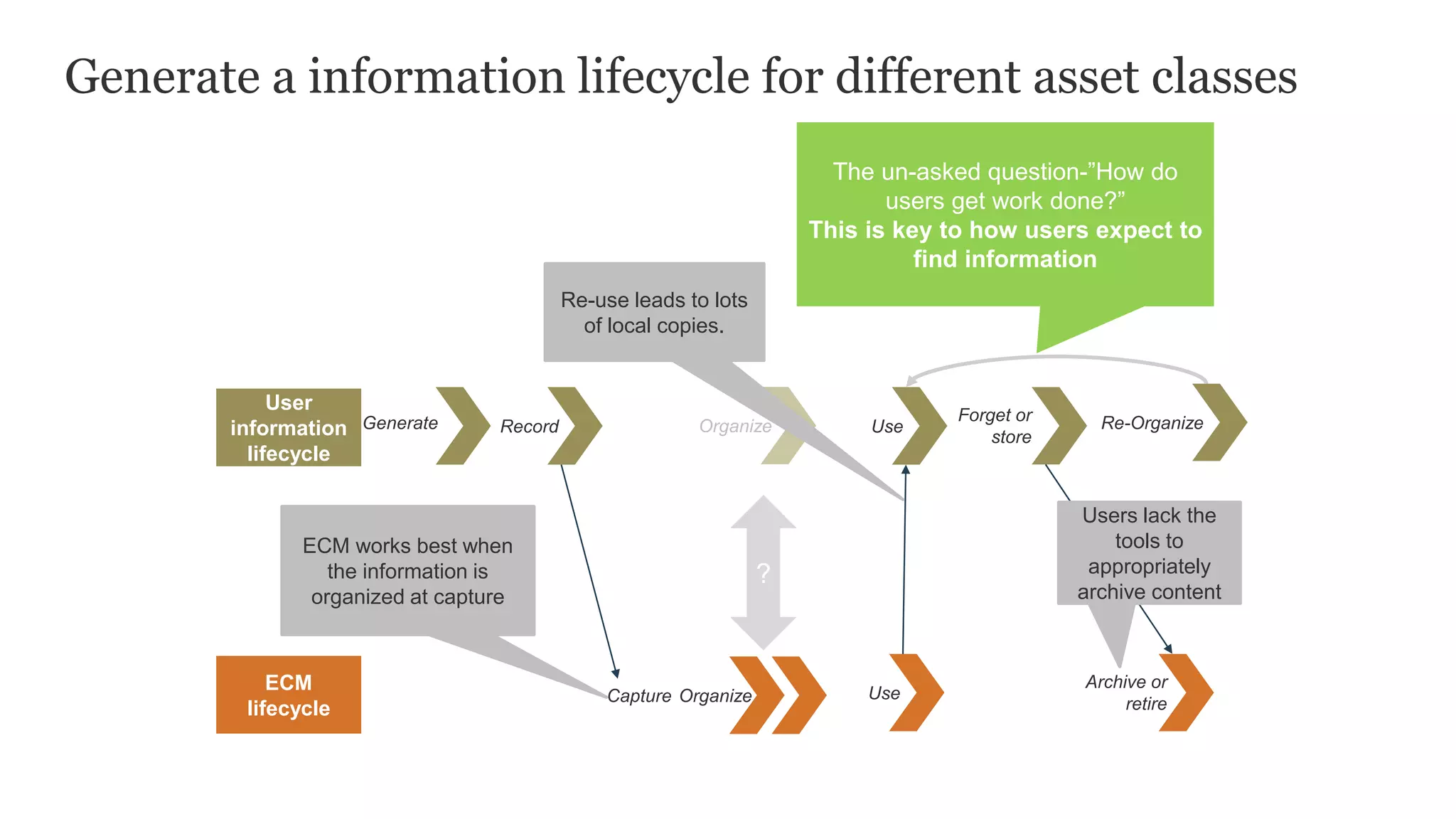

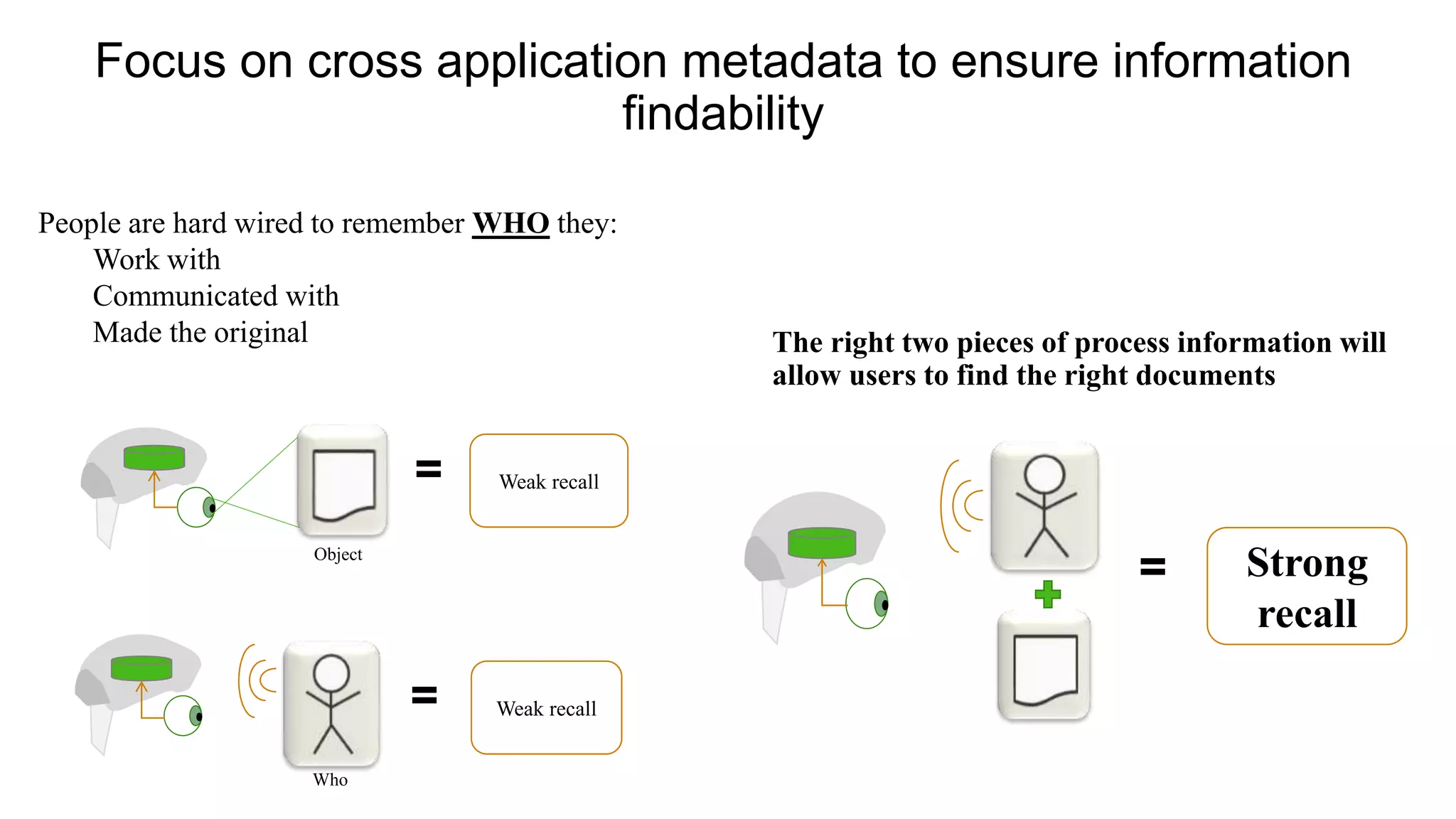

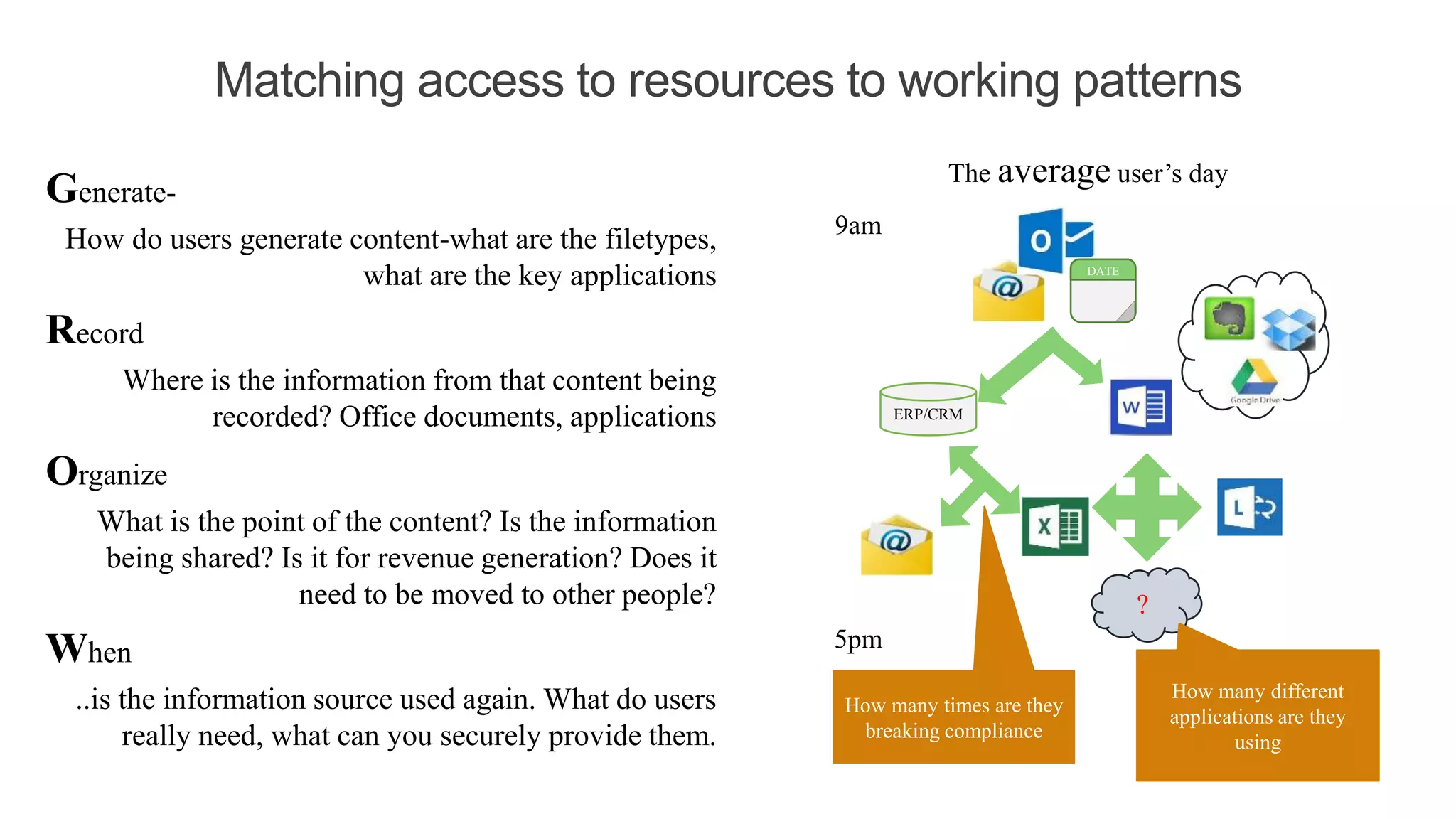

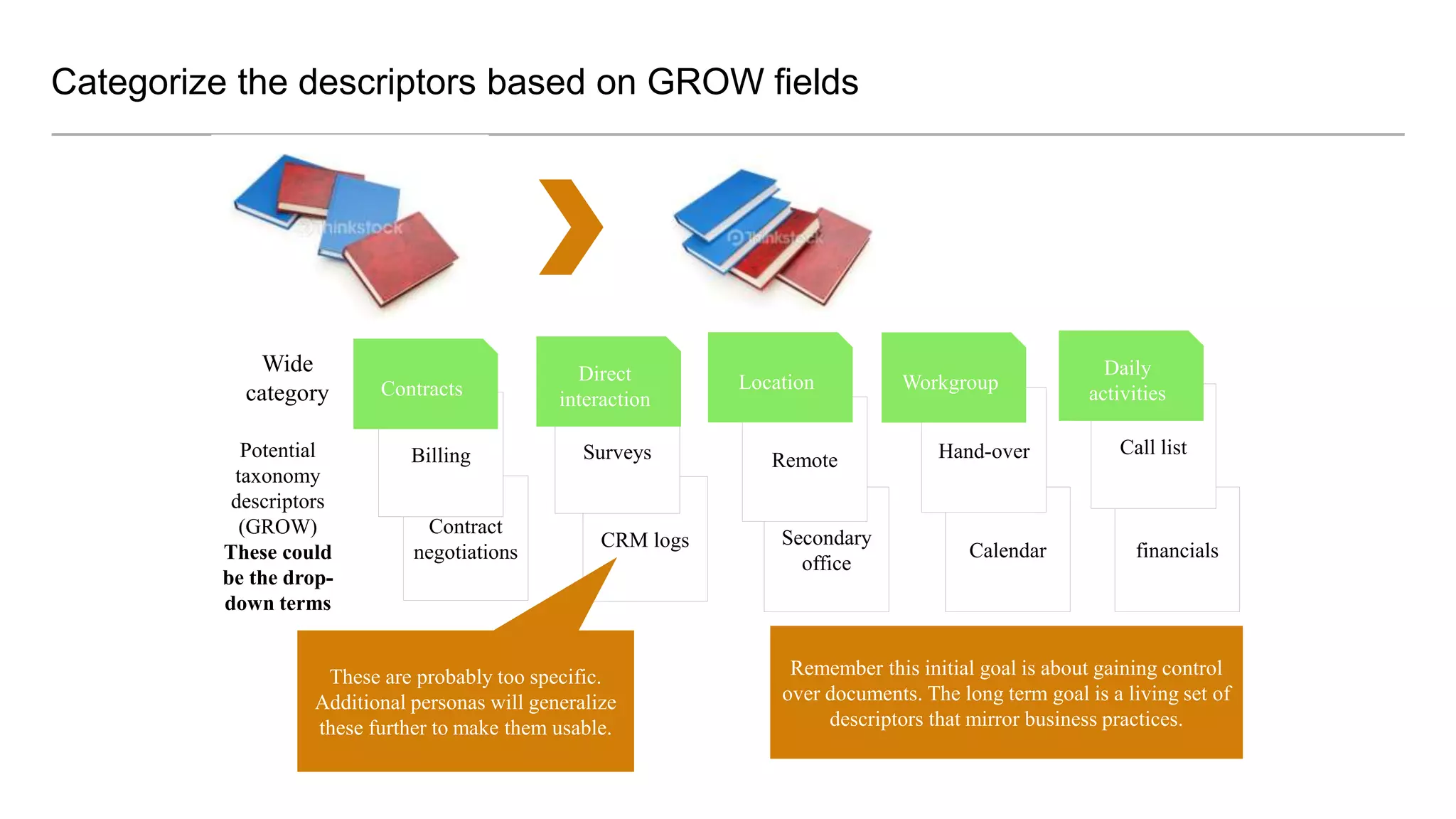

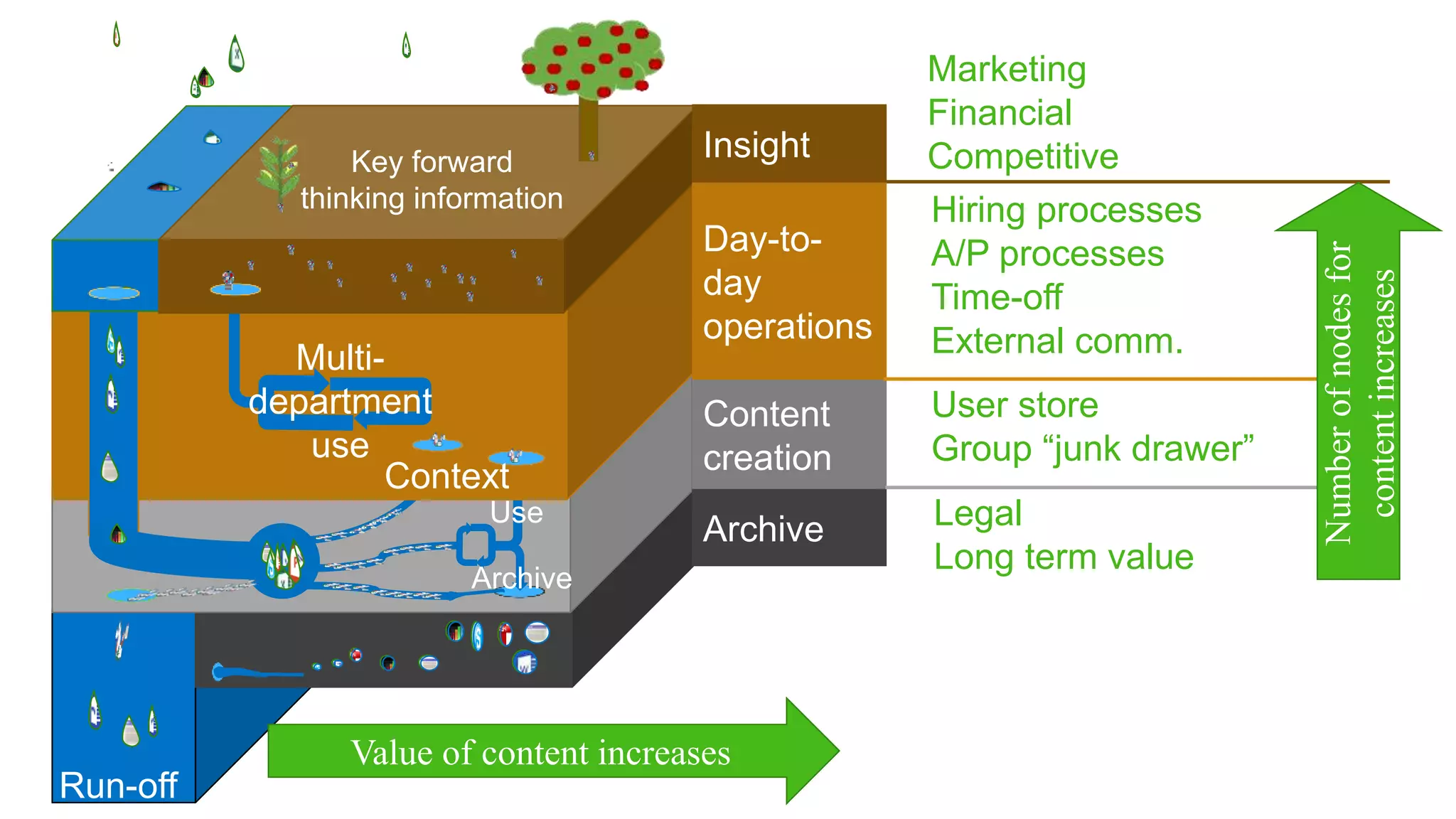

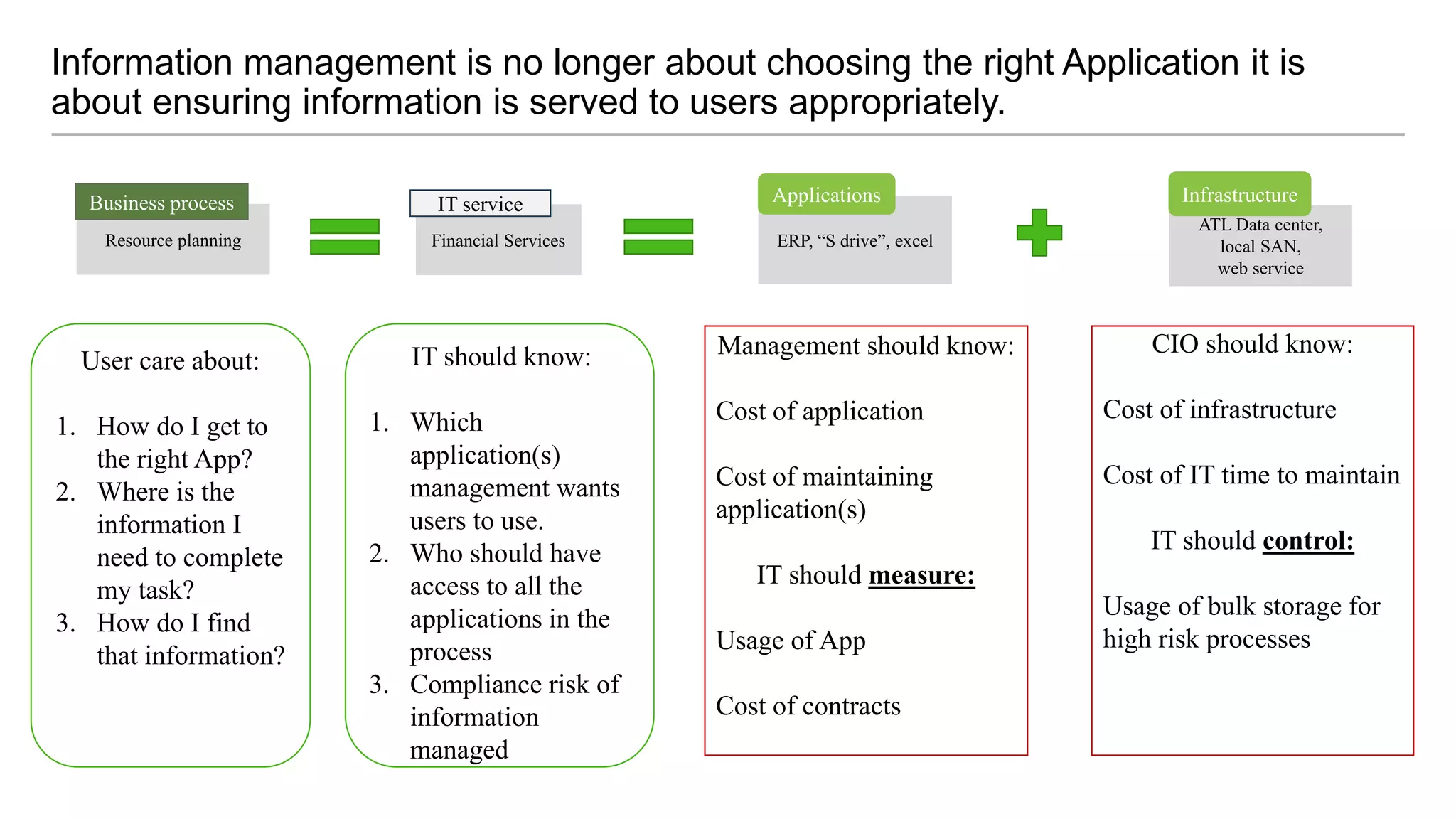

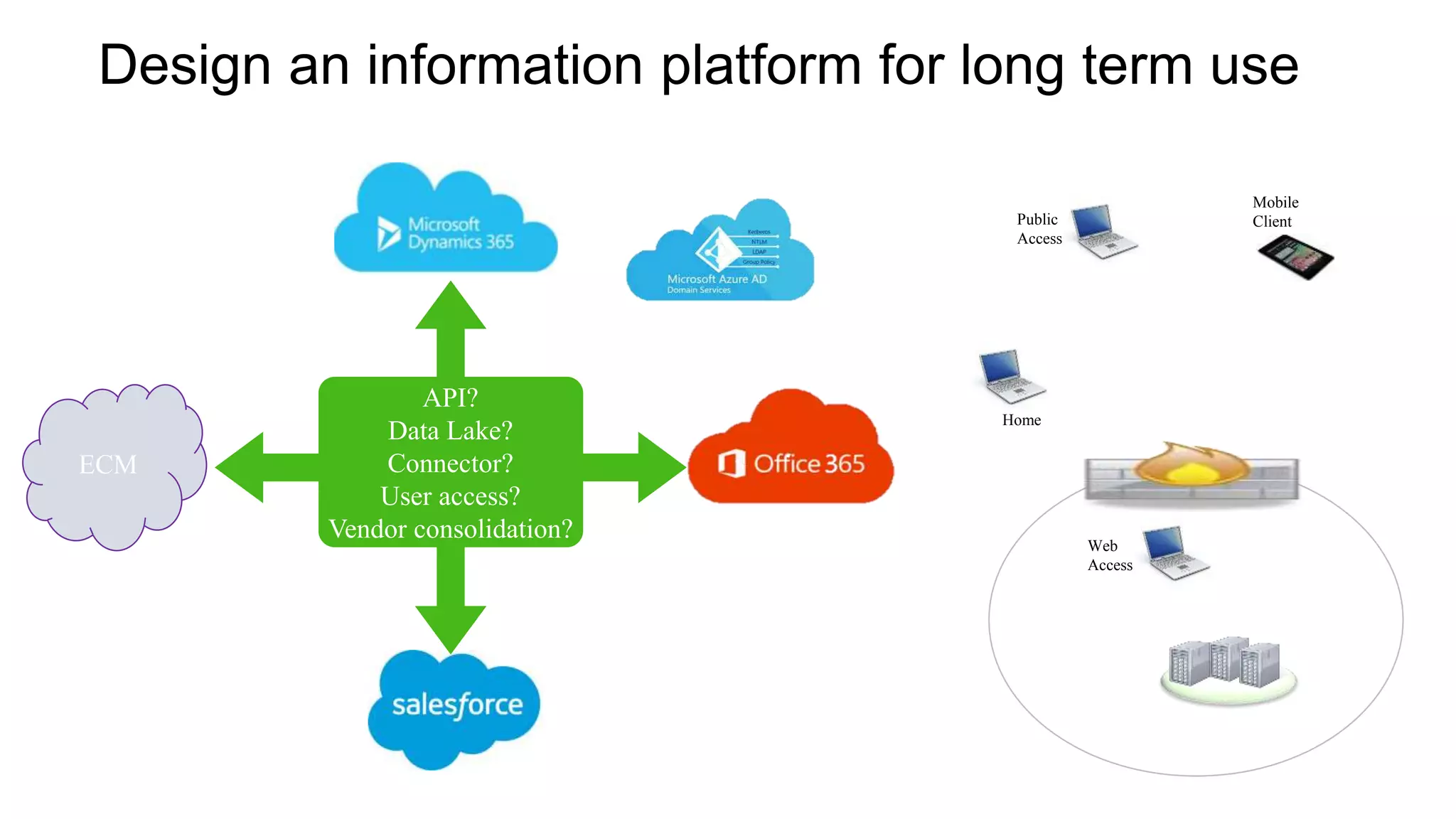

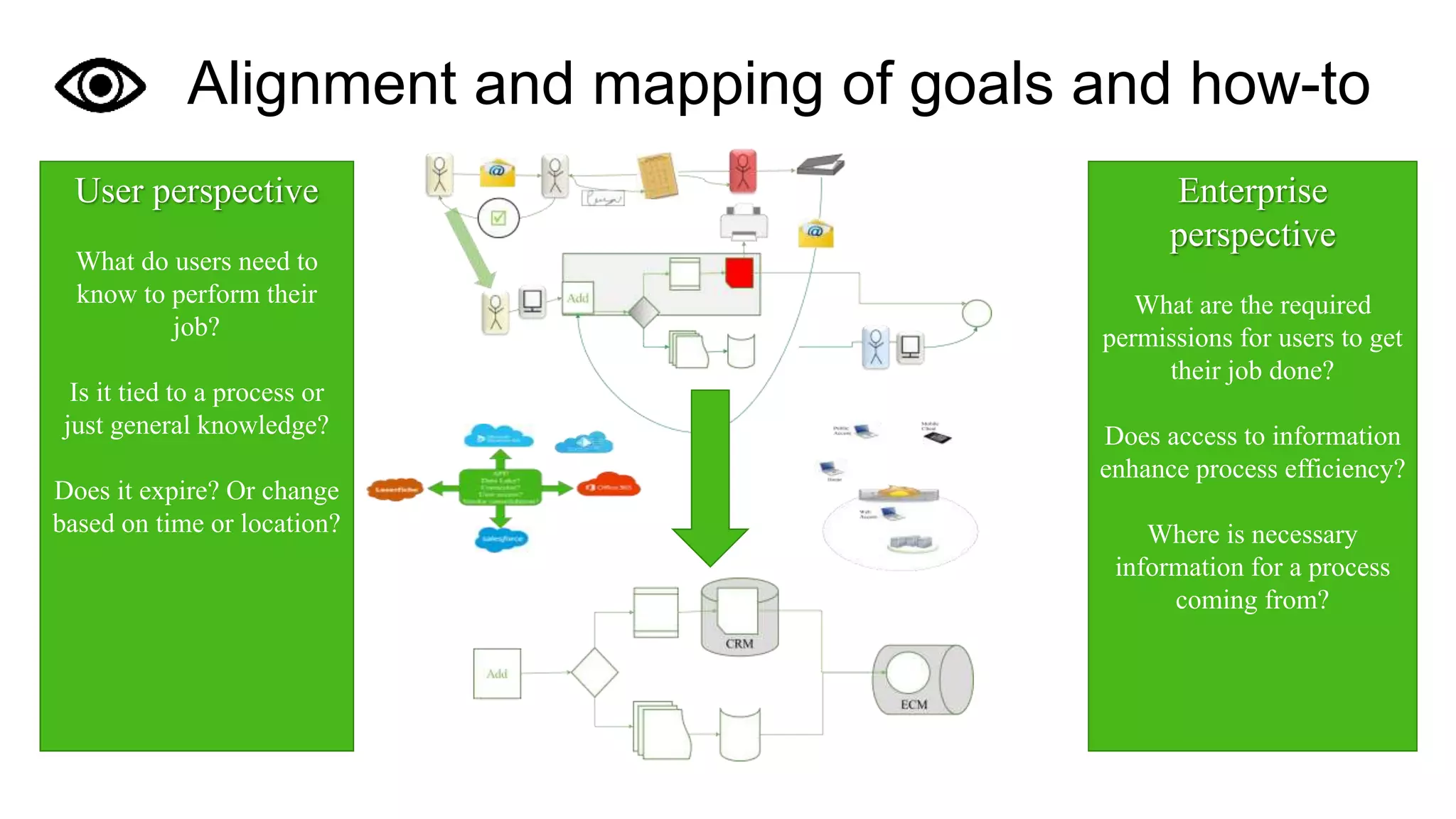

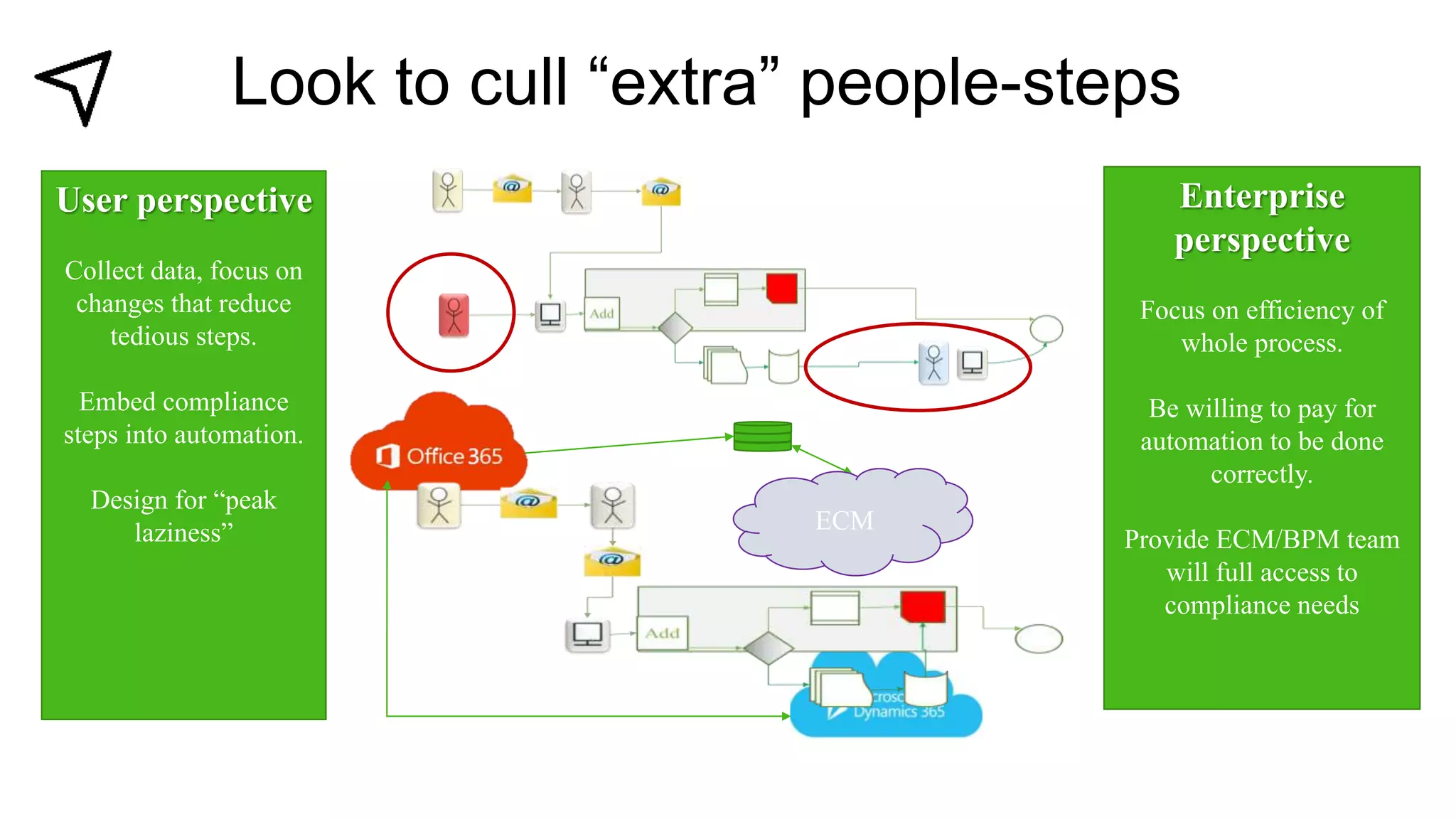

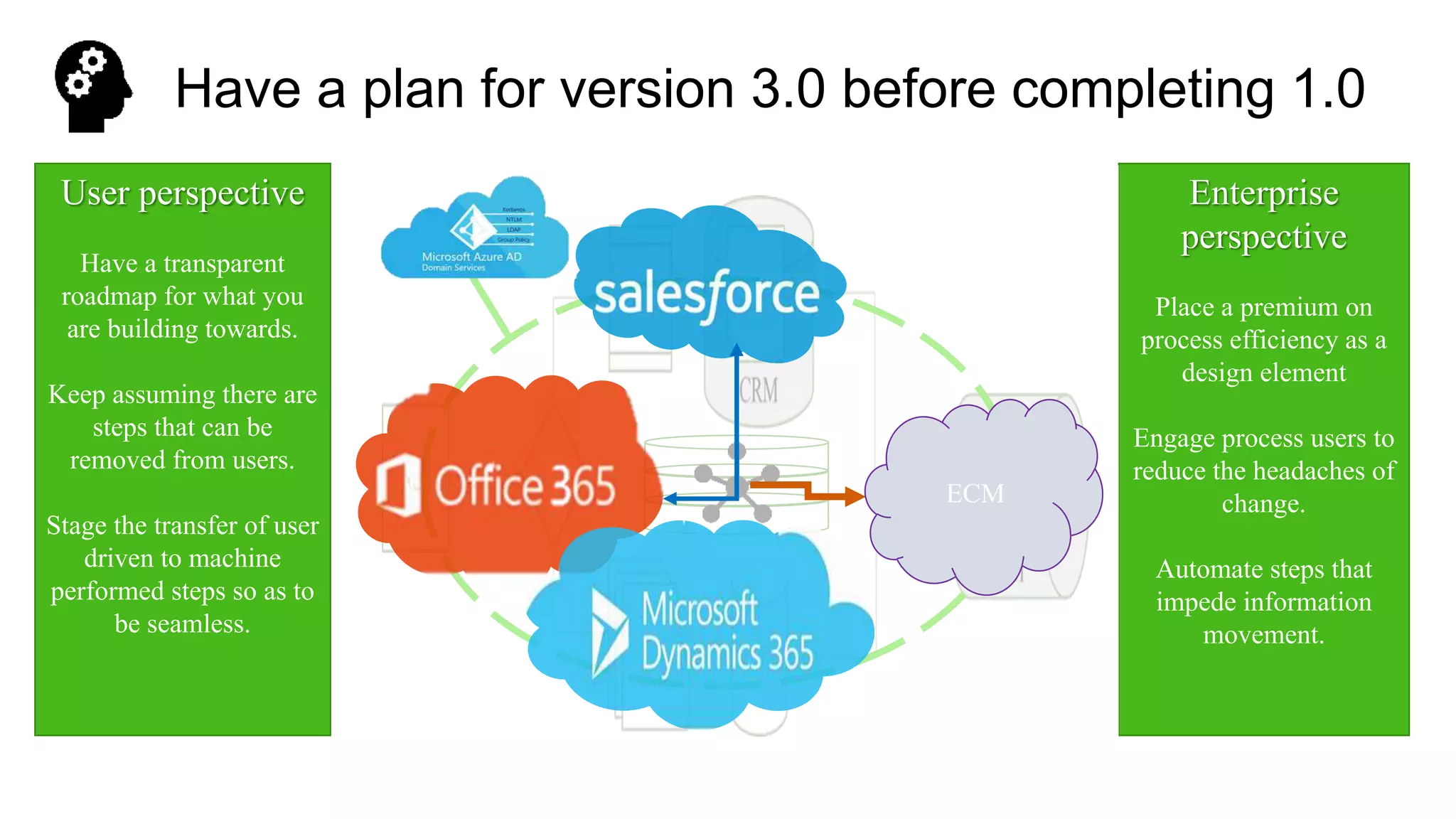

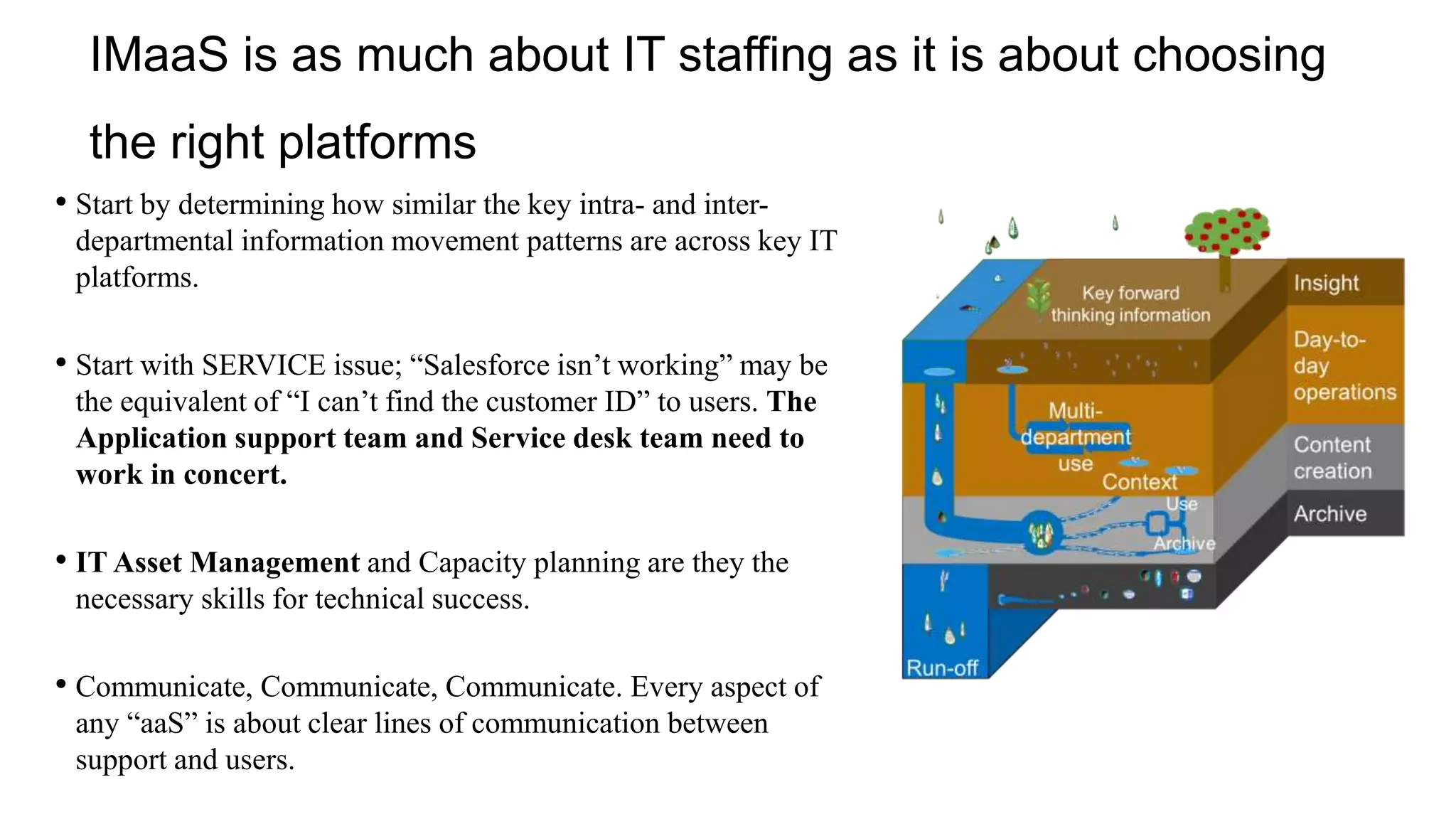

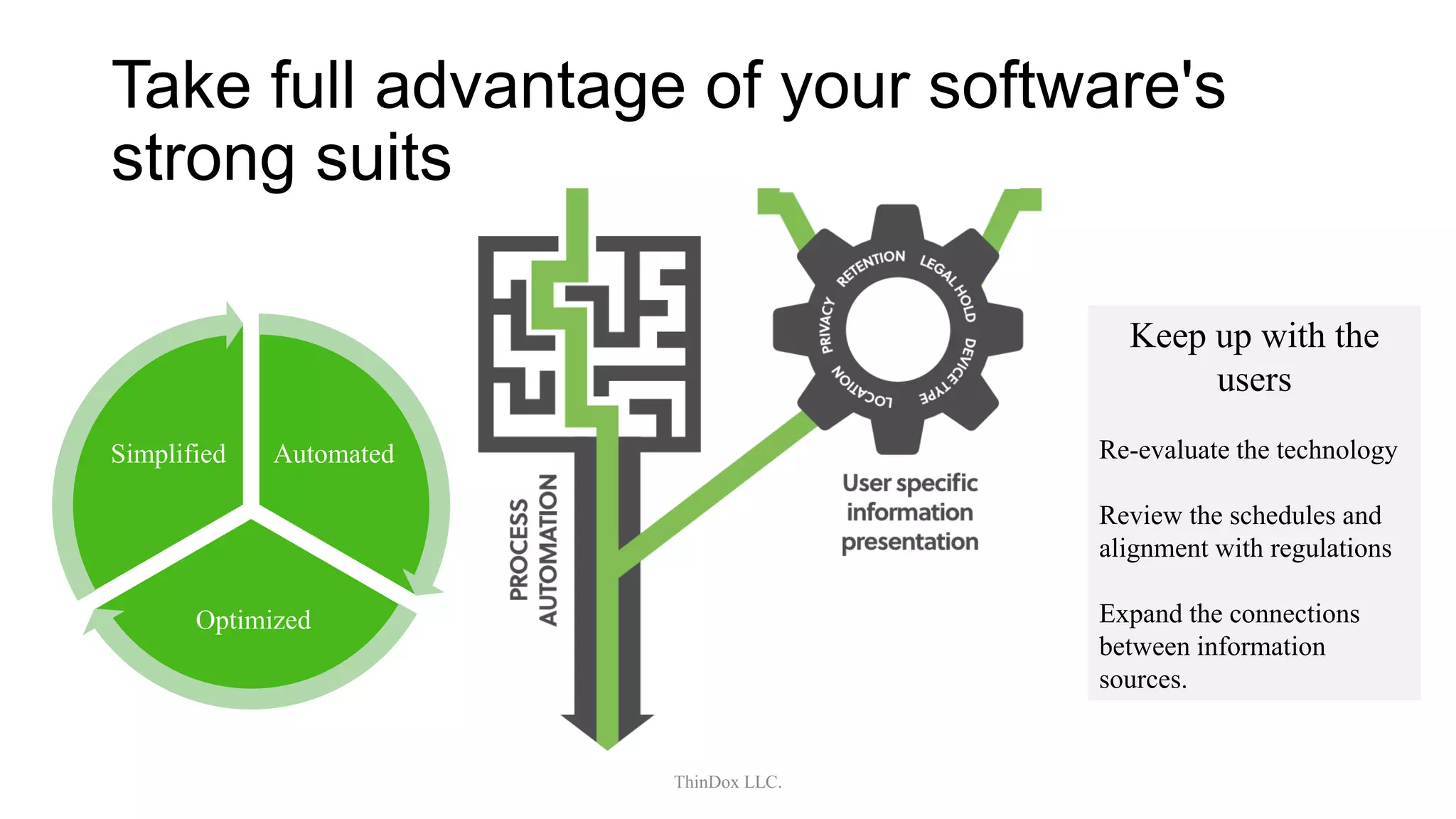

The document discusses the evolution of information management and argues that traditional hierarchical models are outdated, so organizations need to adapt to new patterns of work and information flow. It emphasizes aligning technology with business goals and giving users seamless access to information to improve their efficiency, while also addressing the governance and compliance challenges of managing data. Finally, it offers strategies for implementing effective information management as a service (IMaaS) that focus on user engagement, feedback, and continuous improvement.