Informatica perf points

•Download as PPT, PDF•

0 likes•113 views

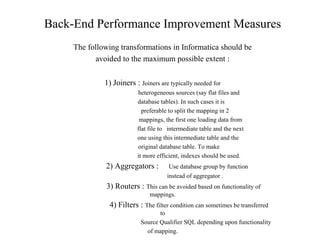

The document discusses various measures to improve back-end, front-end, and data model performance in Informatica and databases. For back-end performance, it recommends avoiding certain transformations like joiners and aggregators when possible, using indexes and transferring filters to source qualifiers. For databases, it suggests using clusters, partitioning, parallelism, and dynamic tuning. For front-end performance, it recommends indexes, aggregate tables, and dimensional modeling with global dimensions and translation tables.

- 1. Back-End Performance Improvement Measures. Avoid the following Informatica transformations as far as possible:
  1) Joiners: Joiners are typically needed for heterogeneous sources (for example, flat files and database tables). In such cases it is preferable to split the mapping into two mappings: the first loads data from the flat file into an intermediate table, and the second reads from this intermediate table and the original database table. Indexes on these tables make the second mapping more efficient.
  2) Aggregators: Use the database GROUP BY clause instead of an Aggregator.
  3) Routers: Routers can sometimes be avoided, depending on the functionality of the mapping.
  4) Filters: The filter condition can sometimes be moved into the Source Qualifier SQL, depending on the functionality of the mapping.
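Points 2 and 4 above both amount to pushing work into the SQL that reads the source. The following is a minimal sketch of that idea, using Python's built-in `sqlite3` module as a stand-in database; the `sales` table, its columns and its rows are hypothetical and only illustrate the shape of a query that could go into a Source Qualifier override.

```python
import sqlite3

# Hypothetical source table; names and values are illustrative only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, status TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("EU", "OK", 10.0),
        ("EU", "OK", 5.0),
        ("EU", "VOID", 99.0),
        ("US", "OK", 7.0),
    ],
)

# The filter (WHERE) and the aggregation (GROUP BY) both run inside the
# database, so only the summarised rows are handed to the ETL mapping.
pushed_down_sql = """
    SELECT region, SUM(amount) AS total_amount
    FROM sales
    WHERE status = 'OK'
    GROUP BY region
    ORDER BY region
"""
totals = conn.execute(pushed_down_sql).fetchall()
print(totals)
```

With this query the mapping would need neither a Filter nor an Aggregator for this step, since the database returns one row per region.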

- 2. Back-End Performance Improvement Measures
  5) Lookups: Whenever a lookup returns only one output port, make it an unconnected lookup and call it conditionally from another transformation (typically an Expression). If such lookups are reusable, the same object can be used in many mappings and mapplets, which makes the lookups much easier to maintain. This gives both better performance and a better development environment.
  6) Explain Plan: Run every SQL statement generated in the Source Qualifier through the explain plan utility provided by Oracle to confirm that it uses the most efficient execution plan, or at least that indexes are used wherever required. If a required index does not exist, create it.
  7) Session Properties: Many session properties can be modified to improve read and write throughput.
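The explain-plan check in point 6 can be sketched as follows. The slide refers to Oracle's utility; this sketch swaps in SQLite's `EXPLAIN QUERY PLAN` through Python's `sqlite3` module purely because it runs anywhere, so the plan text differs from what Oracle would print. The `orders` table, the index name and the `plan_details` helper are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL)"
)

# Hypothetical query, standing in for SQL generated by a Source Qualifier.
query = "SELECT order_id, amount FROM orders WHERE customer_id = 42"


def plan_details(connection, sql):
    """Return the human-readable detail column of SQLite's query plan."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return [row[-1] for row in rows]


# Without an index on customer_id the plan is a full table scan.
plan_before = plan_details(conn, query)

# After creating the missing index, the plan should name that index.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = plan_details(conn, query)

print(plan_before)
print(plan_after)
```

Comparing the plan before and after creating the index is the same review step the slide describes: confirm the index is picked up, and create it if it does not exist.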

- 3. Database Performance Improvement Measures
  1) Clusters: Using clusters improves performance because related sets of data are stored together.
  2) Partitioning: Partitioning tables is known to improve performance.
  3) Parallelism: Enabling the Parallel Query option improves performance.
  4) Dynamic Tuning: The DBAs can tune the database from time to time, depending on the state of the data warehouse at that point in time.

- 4. Front-End Performance Improvement Measures
  1) Indexes: Adding indexes wherever applicable can improve the performance of reports.
  2) Aggregate Tables: Reports are sometimes based on fact tables that contain a huge volume of data. Computing group-by operations such as SUM, MAX and AVG over this set of records at report time is slow. Adding an aggregate table to the data model shifts the whole computation to the back end, so reports based on the aggregate table run faster. In the front-end universe, a drill-down facility can be provided from the aggregate table to the original fact table.
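The aggregate-table idea in point 2 can be sketched like this, again using Python's `sqlite3` module as a stand-in database. The table names `fact_sales` and `agg_sales_daily`, the columns and the sample rows are assumptions made for illustration, not part of the original deck.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical fact table with one row per sale.
conn.execute(
    "CREATE TABLE fact_sales (sale_date TEXT, product_id INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO fact_sales VALUES (?, ?, ?)",
    [
        ("2024-01-01", 1, 10.0),
        ("2024-01-01", 1, 20.0),
        ("2024-01-01", 2, 5.0),
        ("2024-01-02", 1, 8.0),
    ],
)

# Back-end step: precompute the group-by results once, at load time.
conn.execute(
    """
    CREATE TABLE agg_sales_daily AS
    SELECT sale_date,
           product_id,
           SUM(amount) AS total_amount,
           MAX(amount) AS max_amount,
           AVG(amount) AS avg_amount
    FROM fact_sales
    GROUP BY sale_date, product_id
    """
)

# Front-end step: the report reads the small aggregate table.
report = conn.execute(
    "SELECT sale_date, product_id, total_amount "
    "FROM agg_sales_daily ORDER BY sale_date, product_id"
).fetchall()

# Drill-down: fetch the underlying fact rows for one aggregate row.
detail = conn.execute(
    "SELECT amount FROM fact_sales "
    "WHERE sale_date = ? AND product_id = ? ORDER BY amount",
    ("2024-01-01", 1),
).fetchall()

print(report)
print(detail)
```

The report query never touches the fact table; only the drill-down does, and only for the rows behind a single aggregate row.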

- 5. Data Model Improvement Measures
  1) Functionality: Check the data model to ensure that it serves the whole reporting requirement efficiently.
  2) Global Dimensions: Use global dimensions wherever possible. If the data coming from a source does not conform to the values in the global dimensions (for example, different codes referring to the same country), use translation tables to map the source values to the global ones.
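A translation table of the kind described in point 2 might look like the sketch below, written with Python's `sqlite3` module. The tables `dim_country`, `xlat_country` and `stg_customers`, the source-system names and the country codes are all hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Global (conformed) country dimension.
conn.execute(
    "CREATE TABLE dim_country (country_key INTEGER PRIMARY KEY, country_name TEXT)"
)
conn.executemany(
    "INSERT INTO dim_country VALUES (?, ?)", [(1, "Germany"), (2, "France")]
)

# Translation table: maps each source system's own code to the global key.
conn.execute(
    "CREATE TABLE xlat_country "
    "(source_system TEXT, source_code TEXT, country_key INTEGER)"
)
conn.executemany(
    "INSERT INTO xlat_country VALUES (?, ?, ?)",
    [("CRM", "DE", 1), ("ERP", "GER", 1), ("CRM", "FR", 2)],
)

# Staged source rows that use different codes for the same country.
conn.execute(
    "CREATE TABLE stg_customers "
    "(customer TEXT, source_system TEXT, country_code TEXT)"
)
conn.executemany(
    "INSERT INTO stg_customers VALUES (?, ?, ?)",
    [
        ("Anna", "CRM", "DE"),
        ("Bert", "ERP", "GER"),
        ("Cleo", "CRM", "FR"),
        ("Dirk", "ERP", "XX"),  # no translation exists for this code
    ],
)

# LEFT JOINs keep unmapped rows, so missing translations show up as NULL.
conformed = conn.execute(
    """
    SELECT s.customer, d.country_name
    FROM stg_customers AS s
    LEFT JOIN xlat_country AS x
           ON x.source_system = s.source_system
          AND x.source_code = s.country_code
    LEFT JOIN dim_country AS d
           ON d.country_key = x.country_key
    ORDER BY s.customer
    """
).fetchall()
print(conformed)
```

Both "DE" and "GER" resolve to the same dimension row, while the unmapped code comes back with no country name, which makes gaps in the translation table easy to spot during the load.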
