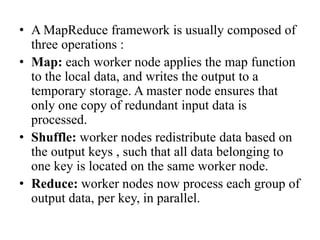

MapReduce is a programming model for processing large datasets in parallel across a cluster of machines. A MapReduce program defines two functions: a map function that turns each input key-value pair into zero or more intermediate key-value pairs, and a reduce function that merges all intermediate values associated with the same intermediate key. The framework handles everything else: parallelizing and scheduling tasks, reading input and writing output, and recovering from failures.
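To make the division of labor concrete, here is a minimal single-process sketch of the model using word counting as the example. It is only an illustration: the names `map_word_count`, `reduce_word_count`, and `run_mapreduce` are hypothetical, and the in-memory grouping step stands in for the shuffle, scheduling, and fault tolerance that a real framework would perform across many machines.

```python
from collections import defaultdict
from typing import Callable, Iterable, Iterator


def map_word_count(doc_id: str, text: str) -> Iterator[tuple[str, int]]:
    """Map: emit an intermediate (word, 1) pair for every word in the document."""
    for word in text.lower().split():
        yield word, 1


def reduce_word_count(word: str, counts: Iterable[int]) -> tuple[str, int]:
    """Reduce: merge all counts emitted for the same word into one total."""
    return word, sum(counts)


def run_mapreduce(
    inputs: Iterable[tuple[str, str]],
    map_fn: Callable[[str, str], Iterable[tuple[str, int]]],
    reduce_fn: Callable[[str, Iterable[int]], tuple[str, int]],
) -> dict[str, int]:
    """Toy driver (assumption: everything fits in memory on one machine)."""
    # Map phase, plus grouping of intermediate values by intermediate key.
    groups: defaultdict[str, list[int]] = defaultdict(list)
    for key, value in inputs:
        for intermediate_key, intermediate_value in map_fn(key, value):
            groups[intermediate_key].append(intermediate_value)

    # Reduce phase: one call per distinct intermediate key.
    return dict(reduce_fn(k, values) for k, values in sorted(groups.items()))


# Made-up sample input: (document id, document text) pairs.
documents = [
    ("doc1", "the quick brown fox"),
    ("doc2", "the lazy dog and the fox"),
]

word_counts = run_mapreduce(documents, map_word_count, reduce_word_count)
print(word_counts)
```

Note that the two user-supplied functions know nothing about how the work is distributed. In this sketch the driver is a simple loop, but the same `map_fn` and `reduce_fn` could in principle be run by a framework that splits the input across workers and re-executes failed tasks.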