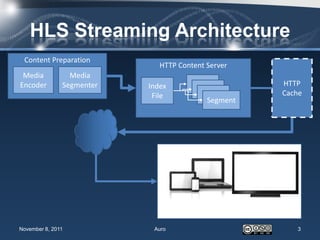

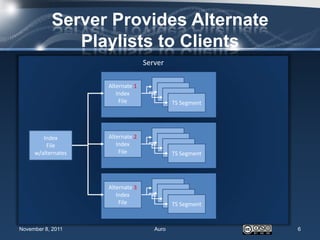

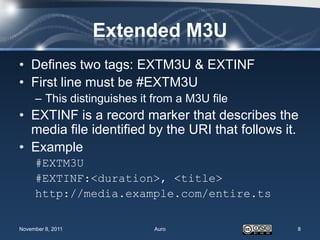

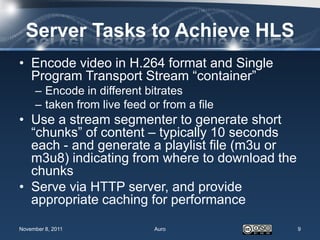

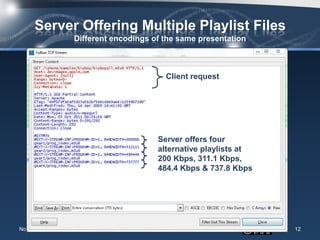

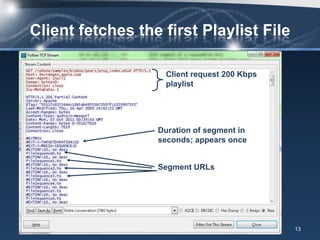

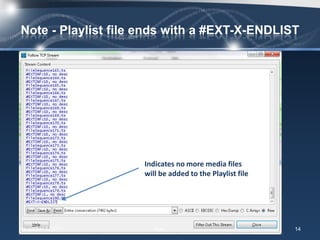

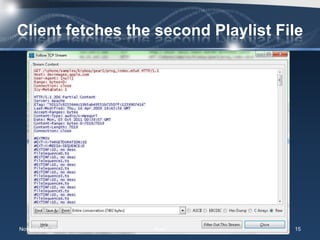

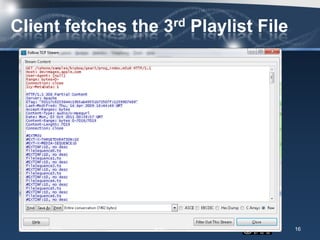

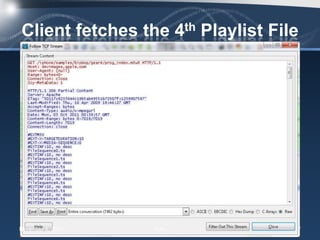

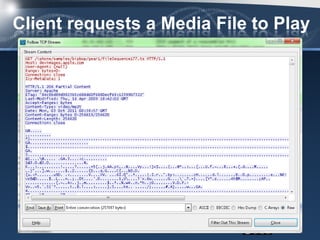

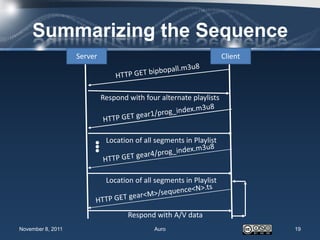

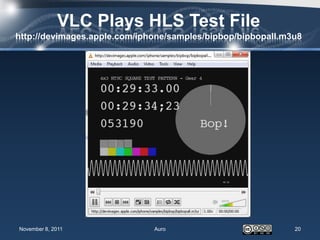

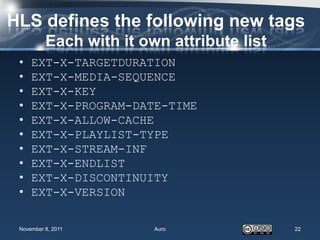

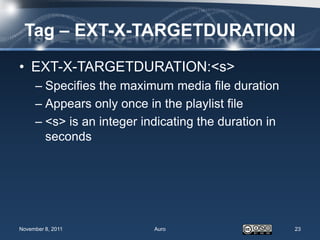

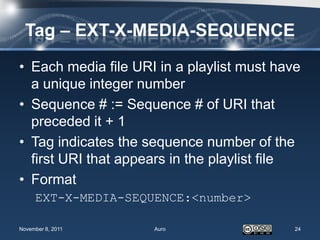

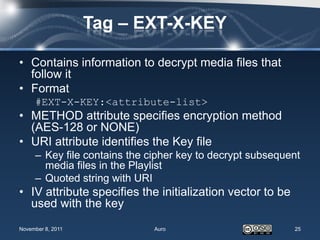

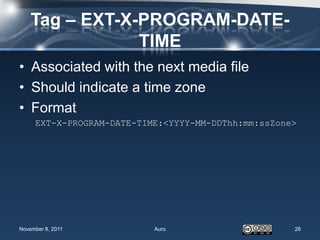

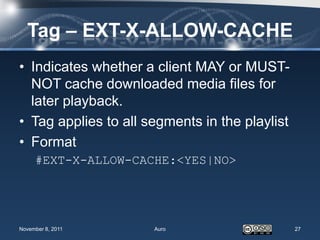

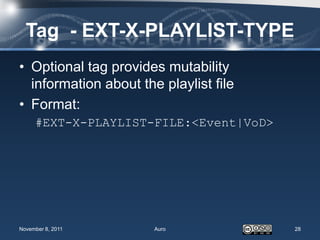

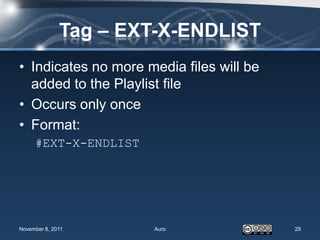

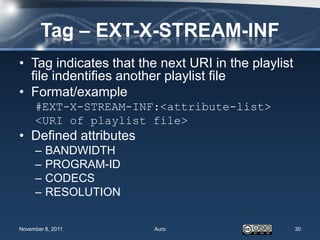

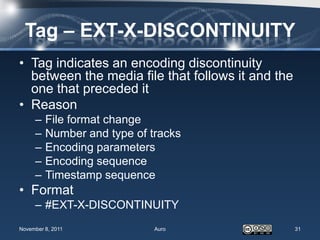

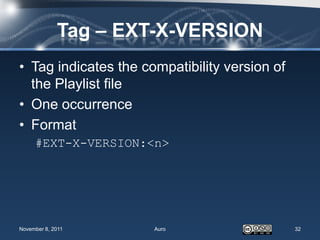

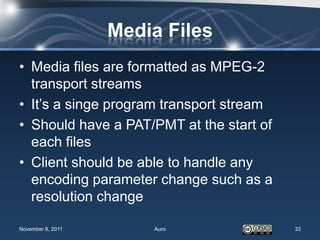

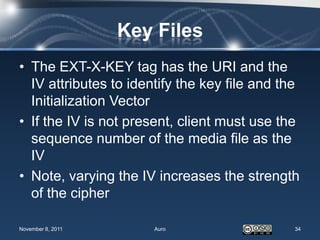

This document provides an overview of Apple's HTTP Live Streaming (HLS) protocol, which adapts video streaming dynamically to network conditions. It covers the basics of HLS: how content is prepared and served, how clients play a stream by fetching playlist files that list the available media segments, and the tags HLS adds to the M3U playlist format, such as EXT-X-TARGETDURATION and EXT-X-MEDIA-SEQUENCE. It also compares HLS with other adaptive streaming protocols and shows examples of analyzing an HLS stream with Wireshark.
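To make the playlist mechanics concrete, here is a minimal sketch in Python of how a client might read a media playlist and pull out the two tags named above. The sample playlist, the segment file names, and the names `MediaPlaylist` and `parse_media_playlist` are illustrative assumptions, not taken from the slides. The parser handles only a small subset of tags and is not a complete HLS implementation.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Hypothetical media playlist; the segment names are made up for illustration.
SAMPLE_PLAYLIST = """
#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:10
#EXT-X-MEDIA-SEQUENCE:2680
#EXTINF:9.009,
segment2680.ts
#EXTINF:9.009,
segment2681.ts
#EXTINF:3.003,
segment2682.ts
#EXT-X-ENDLIST
"""


@dataclass
class MediaPlaylist:
    target_duration: Optional[int] = None  # upper bound on segment duration, in seconds
    media_sequence: int = 0                # sequence number of the first listed segment
    # Each entry is (sequence number, duration in seconds, URI).
    segments: List[Tuple[int, Optional[float], str]] = field(default_factory=list)
    ended: bool = False                    # True once #EXT-X-ENDLIST is seen


def parse_media_playlist(text: str) -> MediaPlaylist:
    """Parse a small subset of media playlist tags (a sketch, not a full parser)."""
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    if not lines or lines[0] != "#EXTM3U":
        raise ValueError("a playlist must start with #EXTM3U")

    playlist = MediaPlaylist()
    pending_duration: Optional[float] = None
    for line in lines[1:]:
        if line.startswith("#EXT-X-TARGETDURATION:"):
            playlist.target_duration = int(line.split(":", 1)[1])
        elif line.startswith("#EXT-X-MEDIA-SEQUENCE:"):
            playlist.media_sequence = int(line.split(":", 1)[1])
        elif line.startswith("#EXTINF:"):
            # Format is "#EXTINF:<duration>,<optional title>".
            pending_duration = float(line.split(":", 1)[1].split(",", 1)[0])
        elif line == "#EXT-X-ENDLIST":
            playlist.ended = True
        elif not line.startswith("#"):
            # A non-tag line is a segment URI; number it relative to the
            # media sequence of the first segment in the playlist.
            seq = playlist.media_sequence + len(playlist.segments)
            playlist.segments.append((seq, pending_duration, line))
            pending_duration = None
    return playlist


if __name__ == "__main__":
    pl = parse_media_playlist(SAMPLE_PLAYLIST)
    print("target duration:", pl.target_duration)
    for seq, duration, uri in pl.segments:
        print(seq, duration, uri)
```

In a live stream the playlist has no `#EXT-X-ENDLIST` tag, so a client would reload it periodically and use the sequence numbers to tell which segments it has not yet downloaded.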