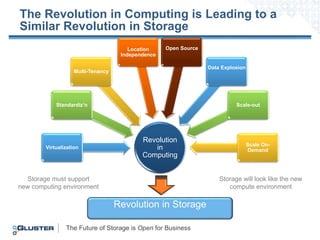

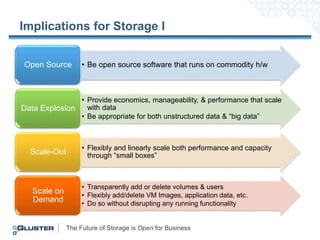

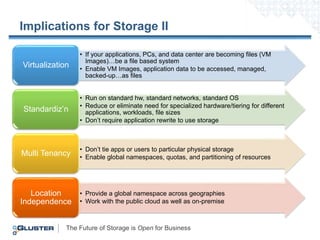

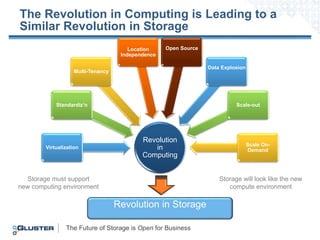

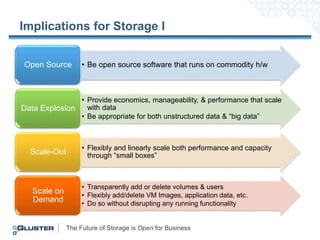

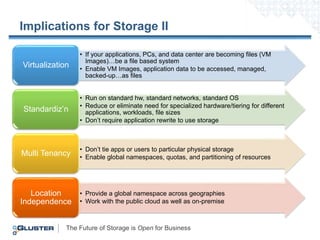

The document discusses the future of storage in light of advances in computing and argues for open-source software that runs on standard hardware. It emphasizes scalability, flexibility, and multi-tenancy, and suggests that storage systems must adapt to handle unstructured and big data efficiently. Overall, future storage solutions should improve manageability and performance while remaining compatible with evolving computing environments.