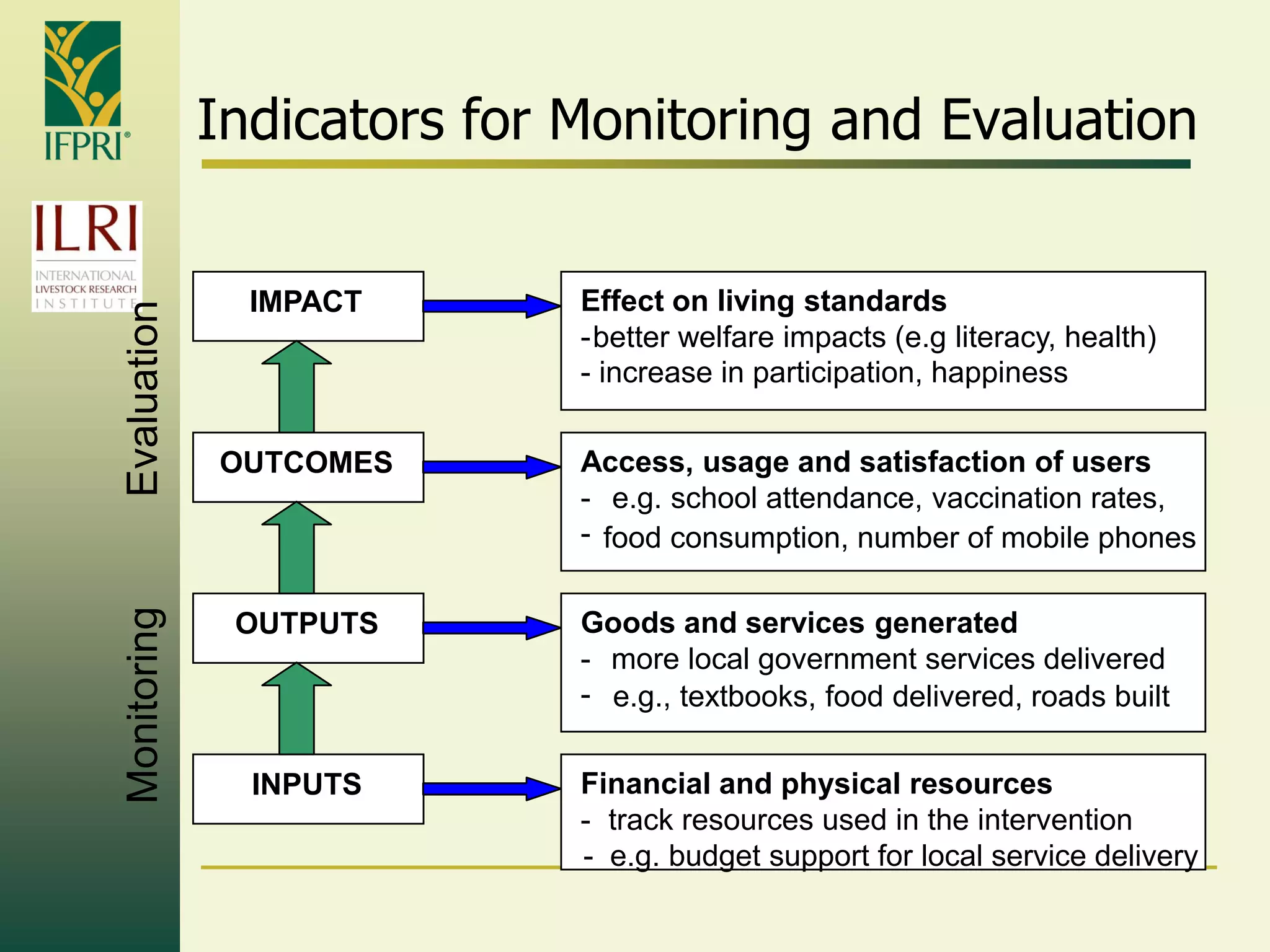

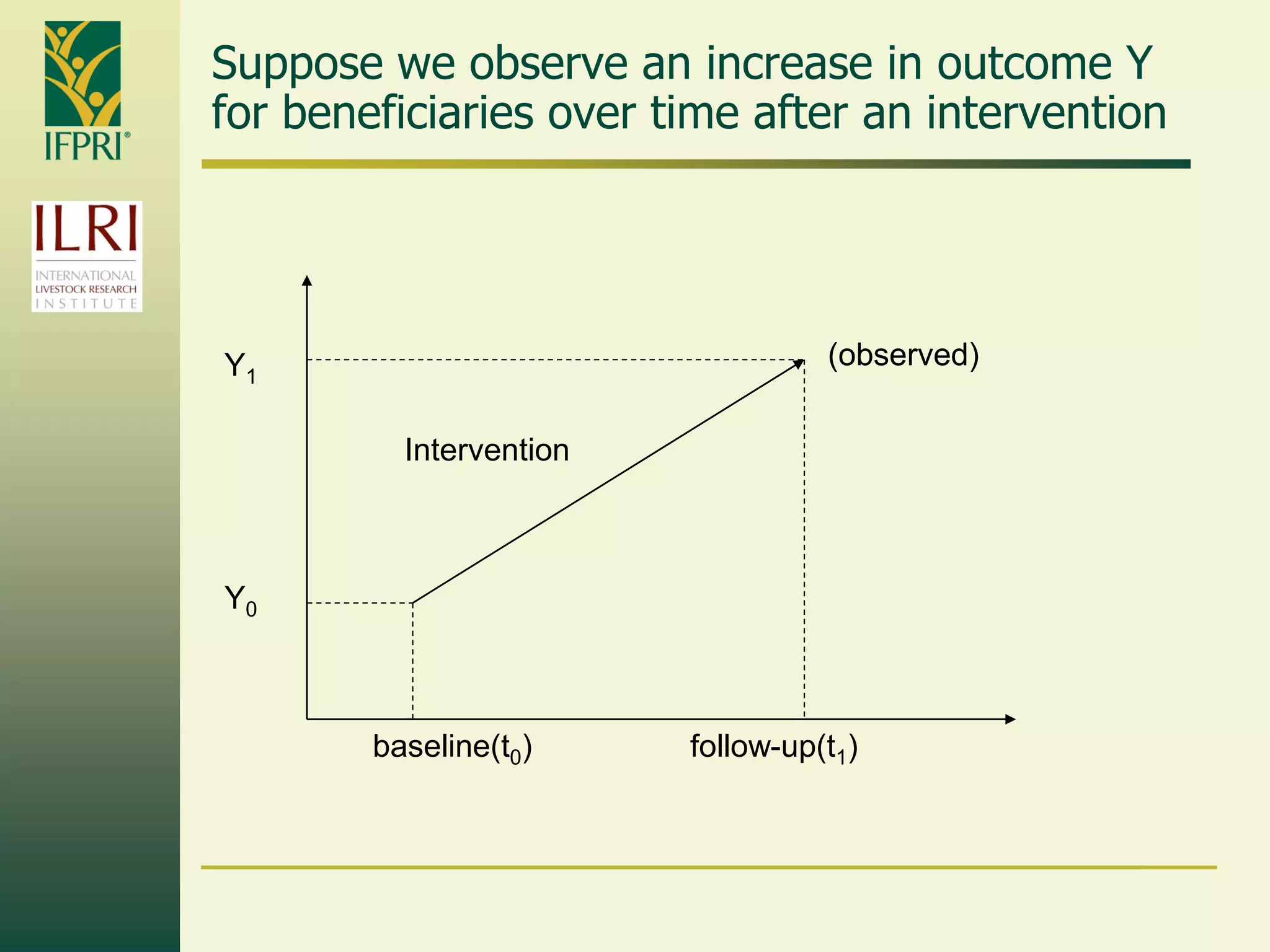

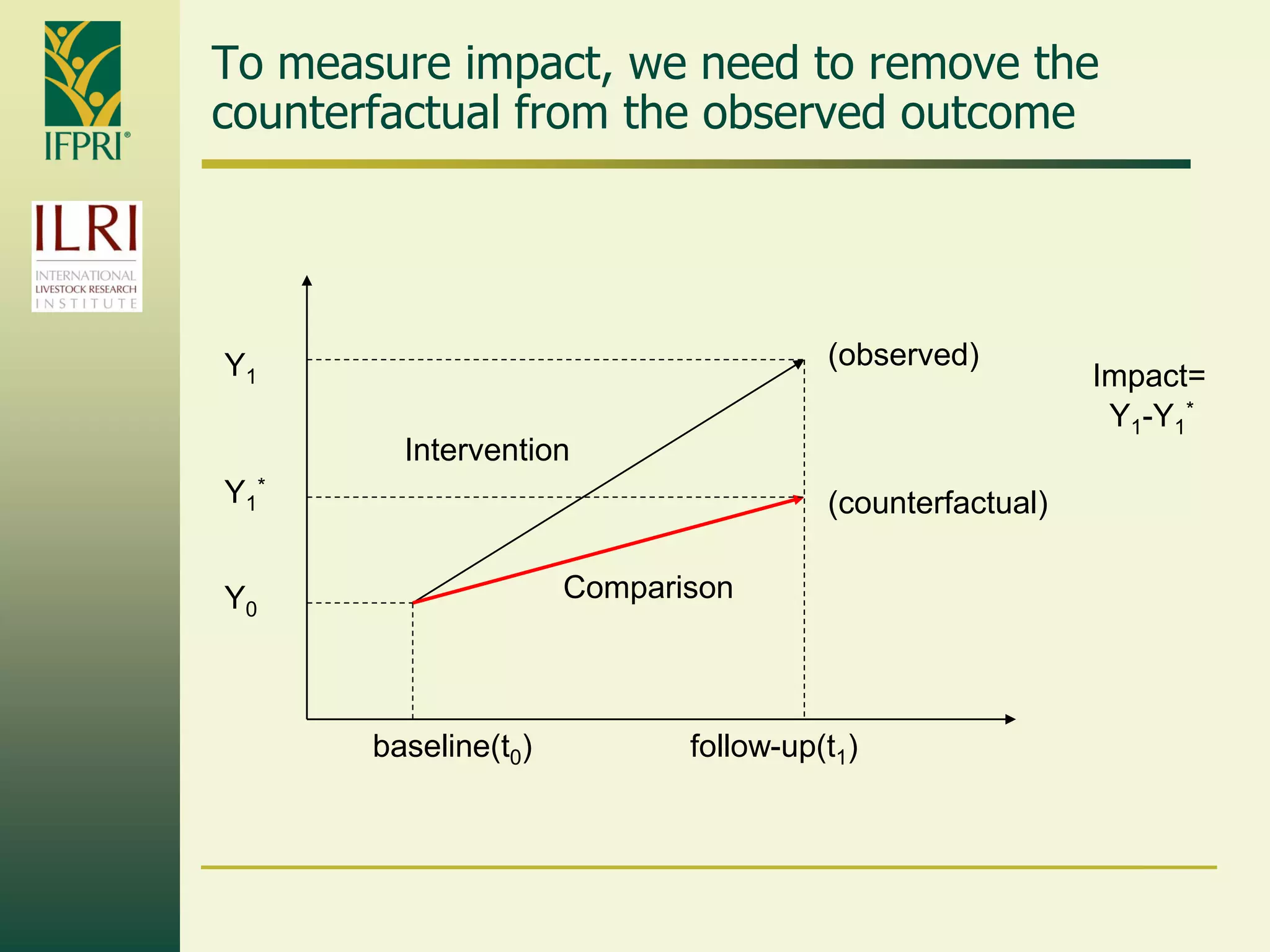

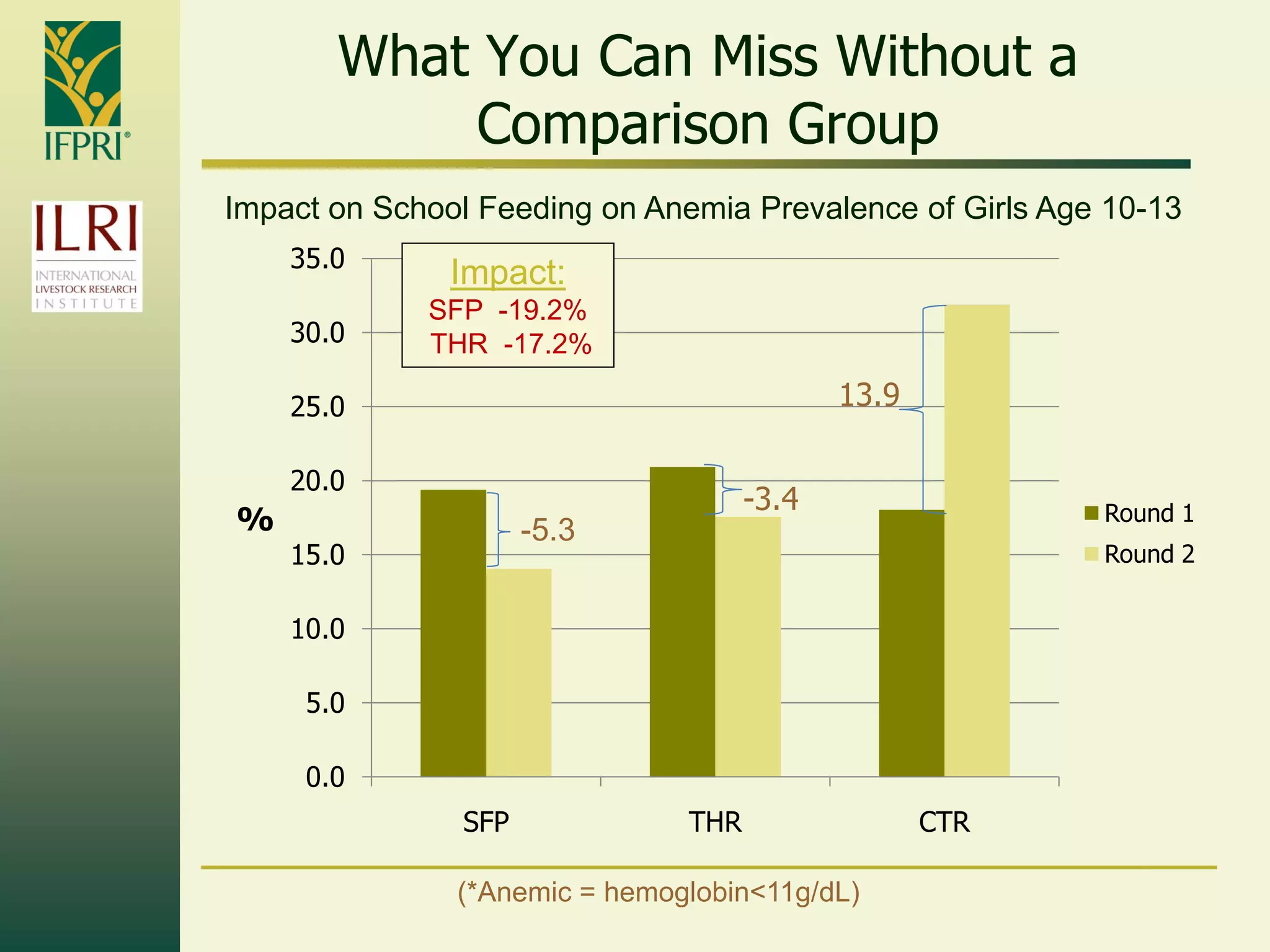

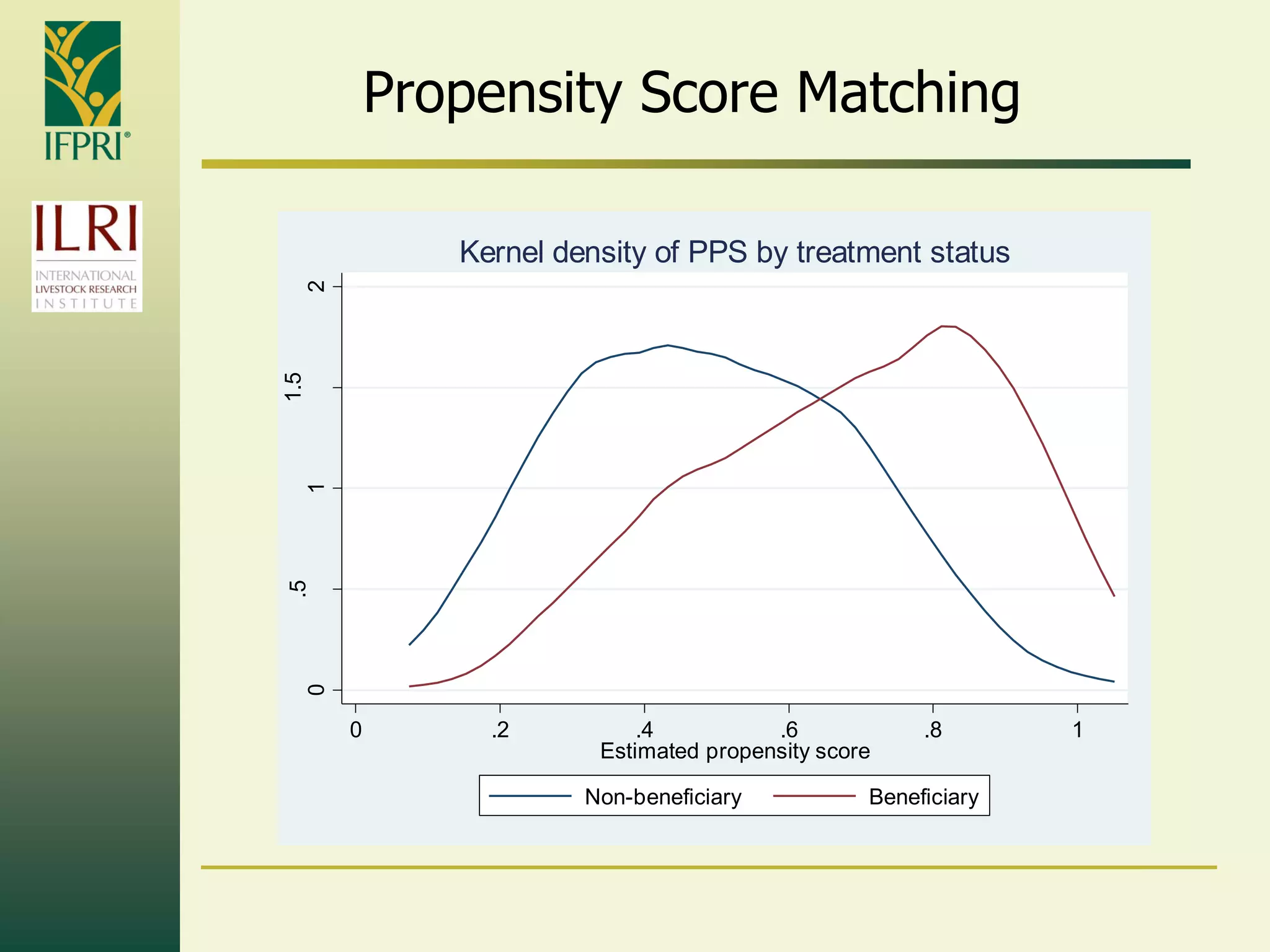

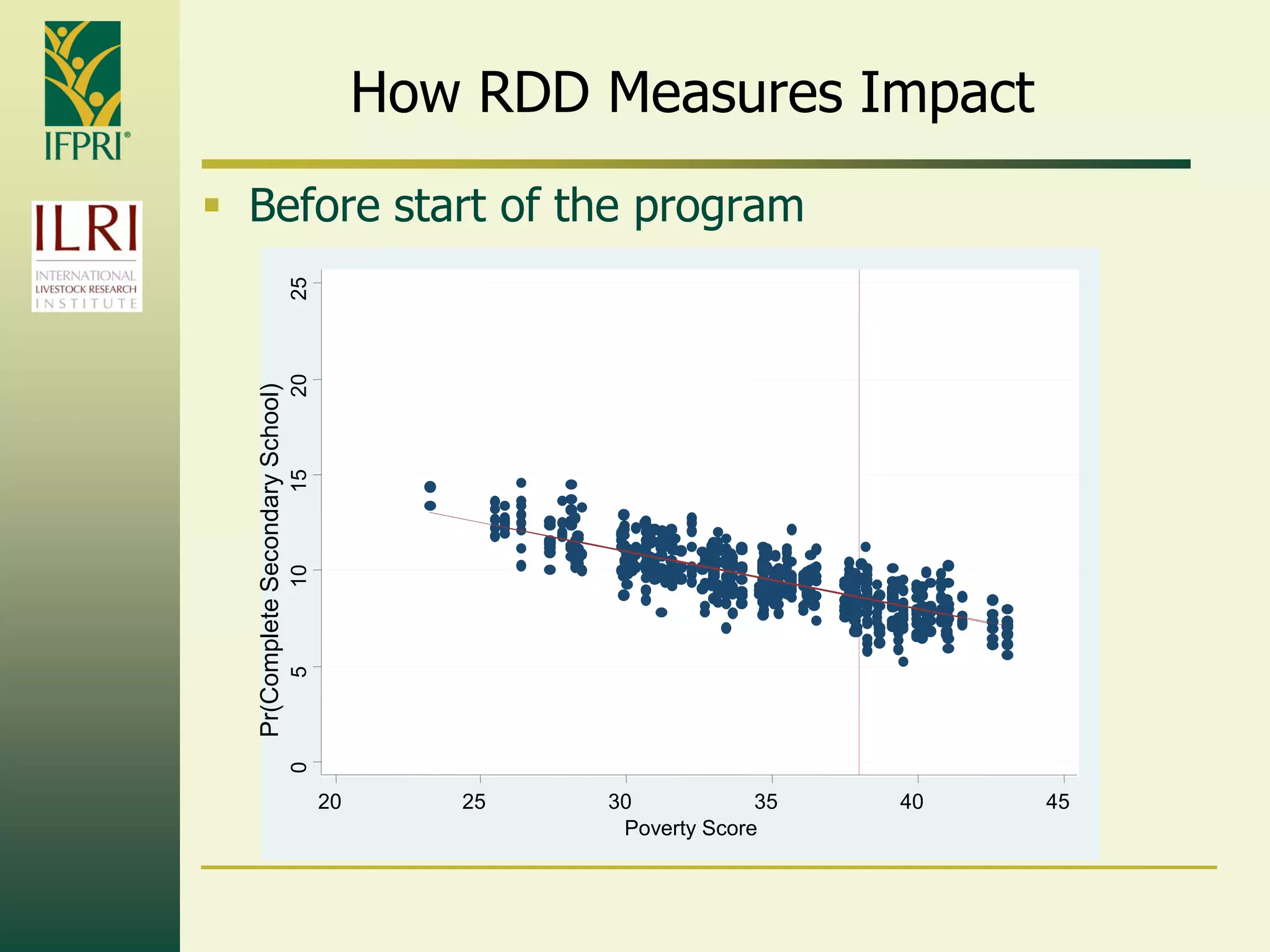

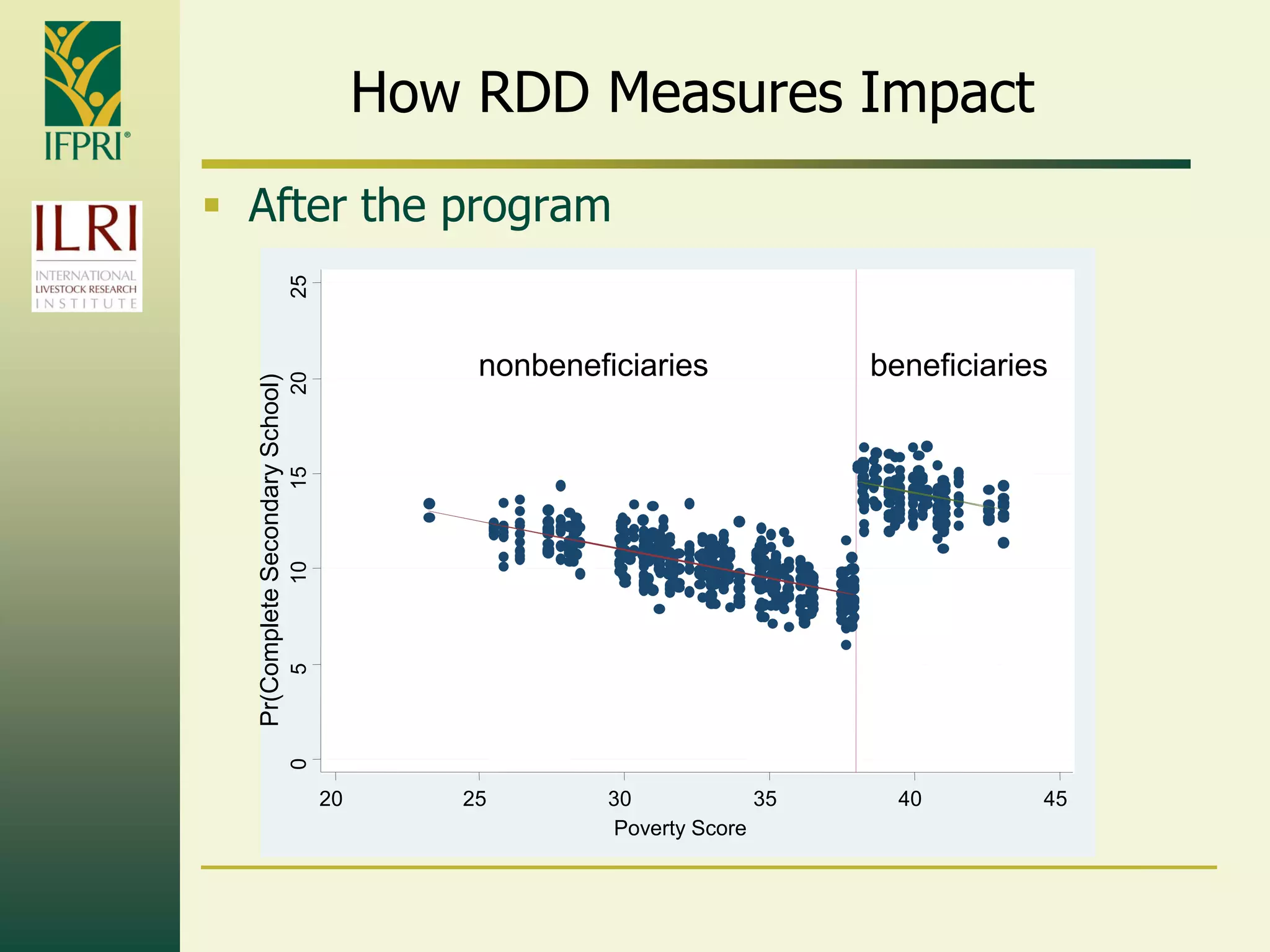

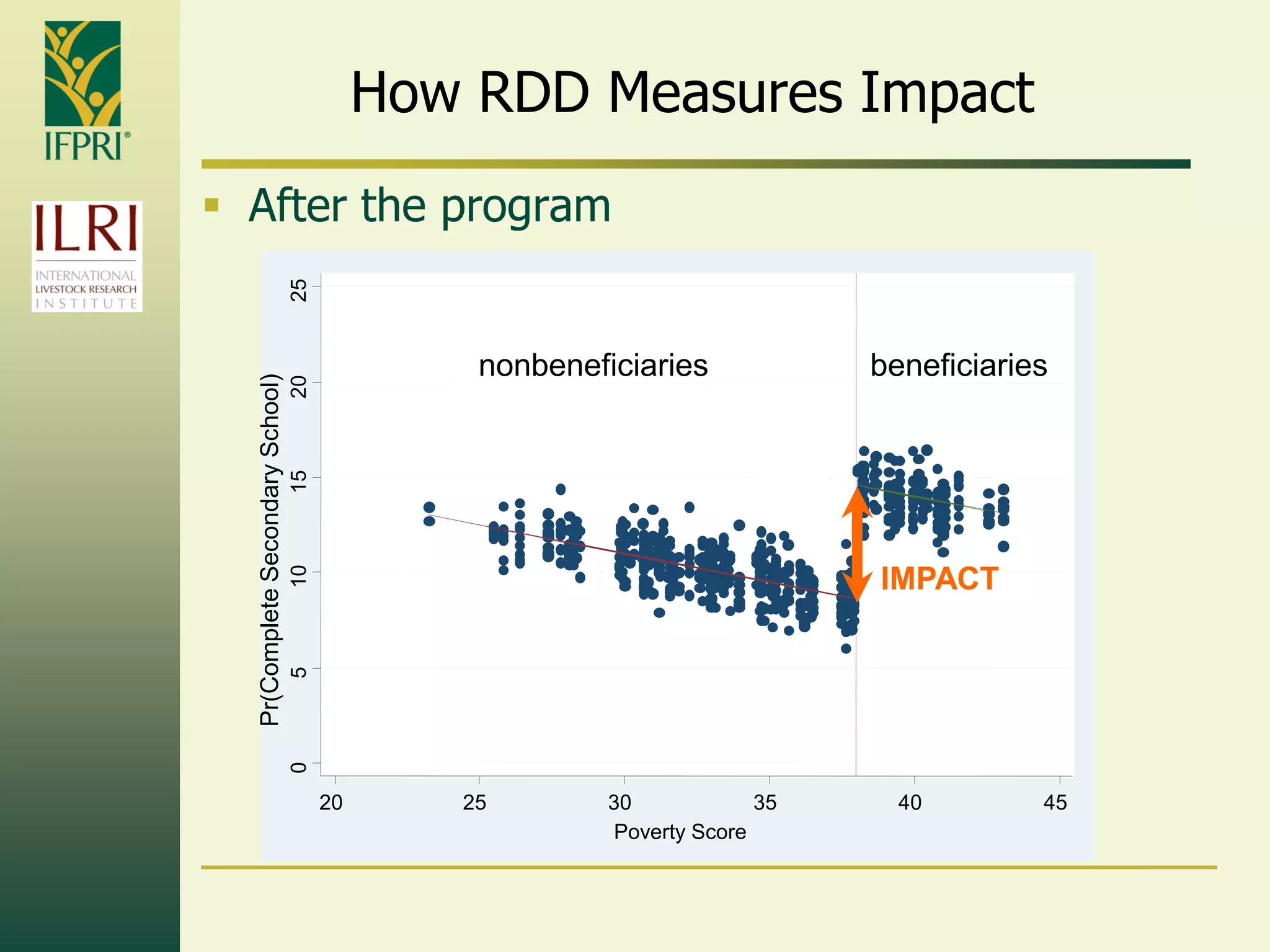

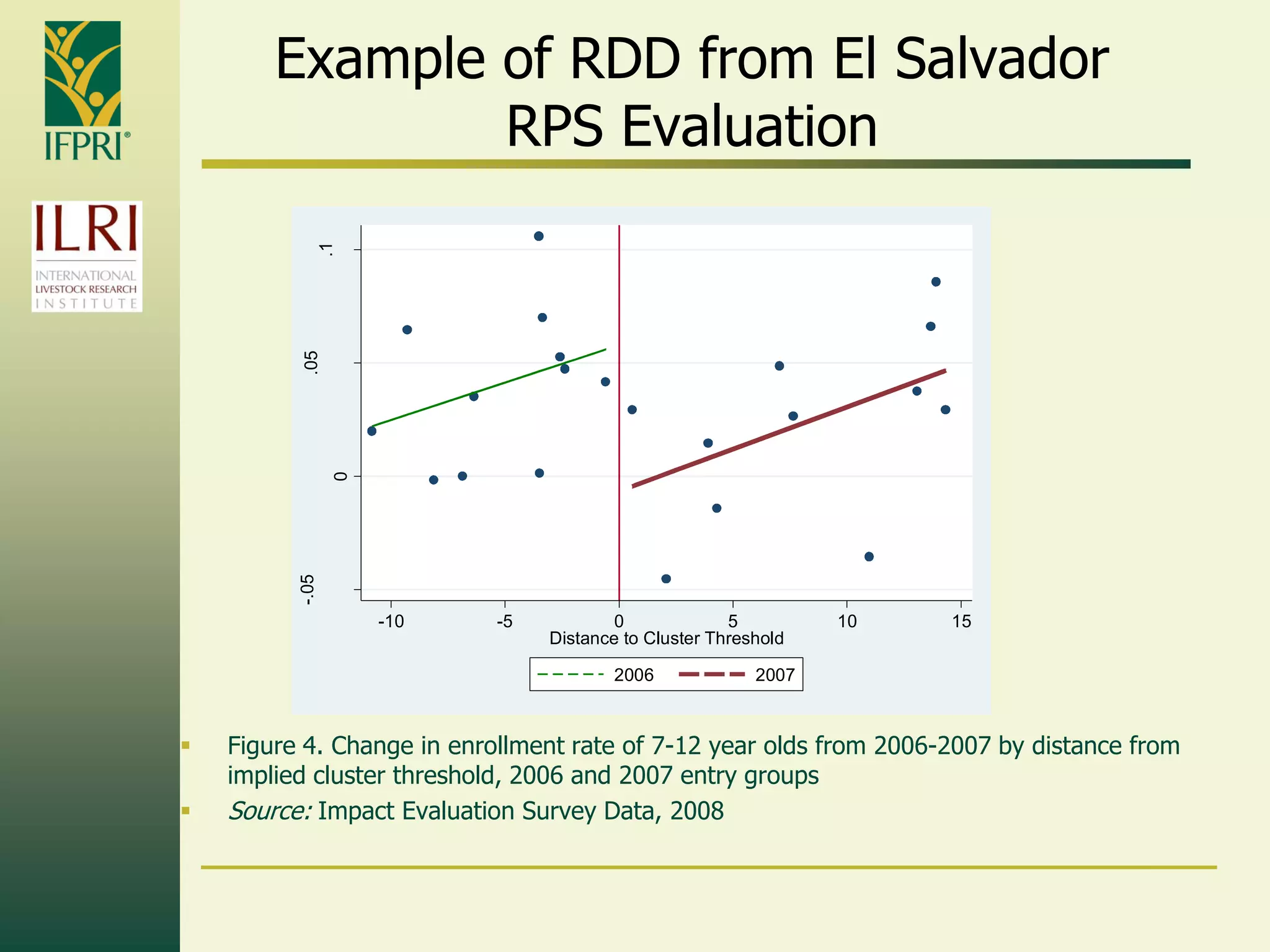

This document introduces quantitative impact evaluation methods. It explains why impact evaluations matter, how to design one, and which tools and methodologies are commonly used. Key points: impact evaluations measure a program's causal effects; they require a comparison group to estimate the counterfactual, that is, the outcomes participants would have had without the program; and they construct valid comparisons using methods such as randomization, matching, regression discontinuity, and difference-in-differences. Evaluations aim to measure impacts, assess cost-effectiveness, and identify which program components are most effective.
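The role of the comparison group can be illustrated with a minimal sketch of two of the estimators named above. The function names and the outcome values are hypothetical toy numbers chosen for illustration, not data from this document. A simple post-program difference in means is a valid impact estimate only when assignment is randomized. Difference-in-differences instead nets out pre-existing gaps between groups, under the assumption of parallel trends.

```python
from statistics import mean

def difference_in_means(treated, comparison):
    """Post-program gap between groups. Valid as an impact estimate only
    under random assignment, where the comparison mean stands in for the
    treated group's counterfactual outcome."""
    return mean(treated) - mean(comparison)

def difference_in_differences(treat_before, treat_after, comp_before, comp_after):
    """Change in the treated group minus change in the comparison group.
    Assumes parallel trends: without the program, both groups would have
    changed by the same amount."""
    treated_change = mean(treat_after) - mean(treat_before)
    comparison_change = mean(comp_after) - mean(comp_before)
    return treated_change - comparison_change

# Hypothetical toy outcomes (e.g., household income), not real data.
# The comparison group starts from a lower baseline than the treated group.
treat_before = [100, 110, 90]
treat_after = [130, 140, 120]
comp_before = [80, 90, 85]
comp_after = [90, 100, 95]

# Naive post-only comparison mixes the baseline gap into the "impact".
print(difference_in_means(treat_after, comp_after))  # prints 35
# Difference-in-differences removes the baseline gap of 15.
print(difference_in_differences(treat_before, treat_after, comp_before, comp_after))  # prints 20
```

Here the naive comparison overstates the effect because the groups already differed by 15 before the program. This is the kind of bias that randomization, or a method like difference-in-differences with its own assumptions, is meant to address.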