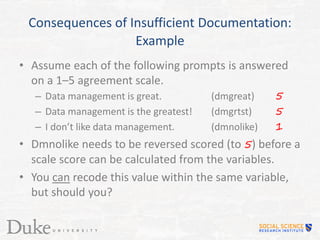

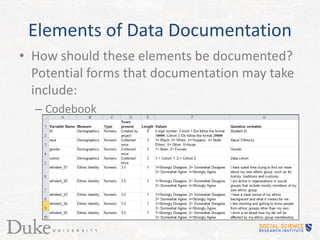

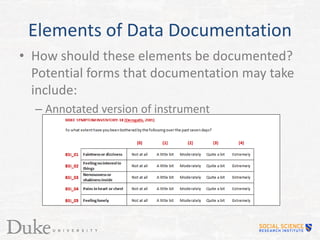

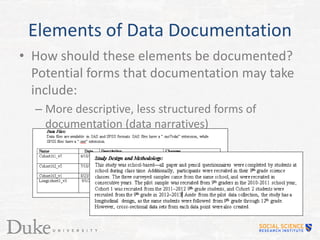

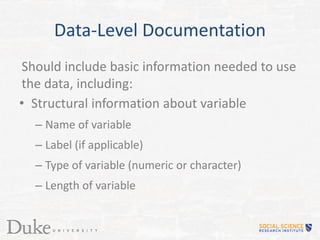

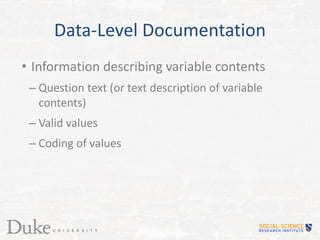

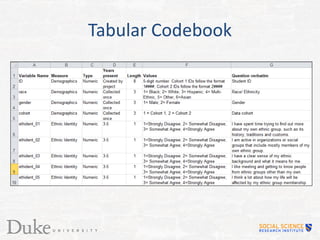

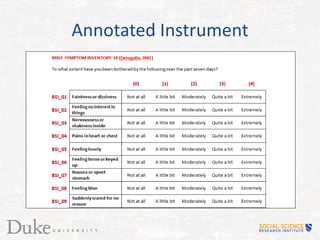

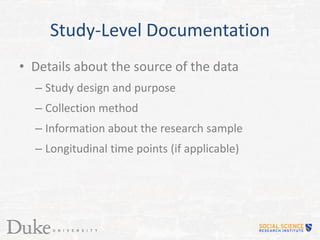

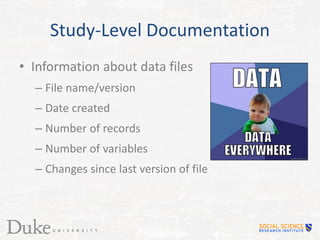

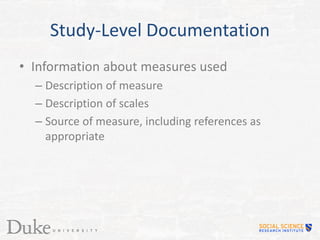

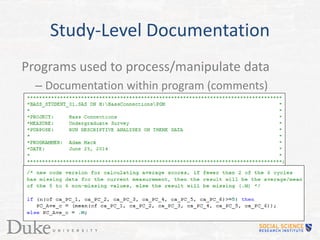

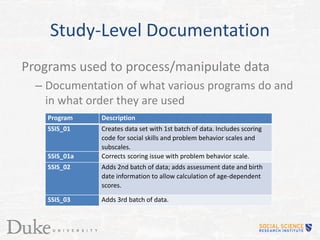

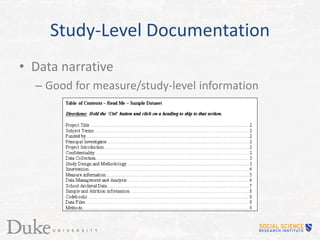

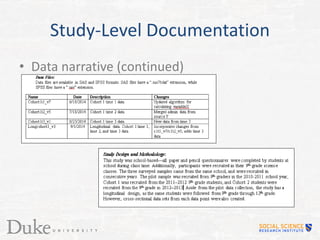

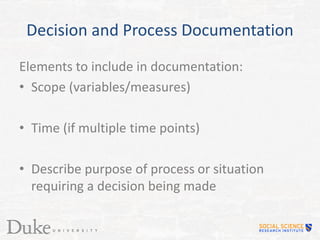

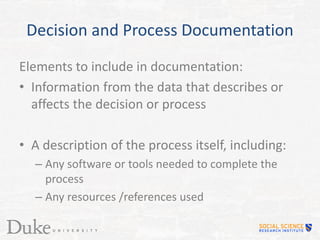

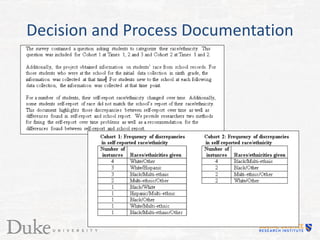

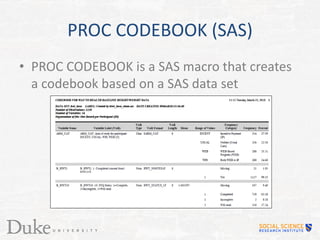

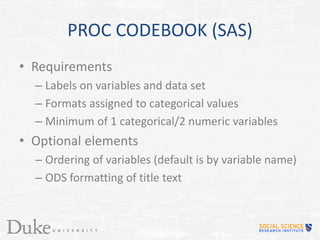

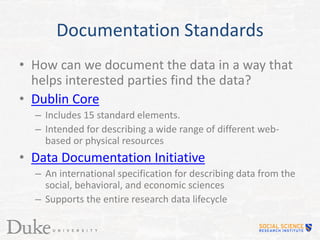

This document explains why research data must be properly documented. Documentation lets people outside the original project understand the data, and it keeps them from making inaccurate assumptions when data manipulations or variable meanings are unclear. Without sufficient documentation, data can be misinterpreted or become unusable. The document outlines the key things to document, including data elements, study details, and decisions made, and gives examples of documentation tools such as codebooks, annotated instruments, and data narratives. Thorough documentation keeps research data useful and understandable.
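As an illustration of one of the documentation tools named above, the following is a minimal sketch of a machine-readable codebook. Everything in it is an assumption made for the example rather than something taken from the slides: the variable names (`resp_id`, `age`, `sat_score`), the missing-value code `-9`, the sample rows, and the helper functions `undocumented_columns` and `render_codebook`. It shows one possible way to record what each variable means and to check that no column in a data file is left undocumented.

```python
import csv
import io

# Hypothetical survey extract; names and values are illustrative only.
RAW_CSV = """resp_id,age,sat_score
1,34,4
2,51,2
3,-9,5
"""

# Hypothetical codebook: one entry per variable, recording its meaning,
# its type, any value labels, and the code used for missing values.
CODEBOOK = {
    "resp_id": {"label": "Respondent identifier", "type": "int"},
    "age": {"label": "Age in years at interview", "type": "int", "missing": -9},
    "sat_score": {
        "label": "Overall satisfaction",
        "type": "int",
        "values": {
            1: "Very dissatisfied",
            2: "Dissatisfied",
            3: "Neutral",
            4: "Satisfied",
            5: "Very satisfied",
        },
    },
}


def undocumented_columns(csv_text, codebook):
    """Return the CSV column names that have no codebook entry."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [name for name in (reader.fieldnames or []) if name not in codebook]


def render_codebook(codebook):
    """Render the codebook as plain text for readers outside the project."""
    lines = []
    for name, entry in codebook.items():
        lines.append(f"{name}: {entry['label']} ({entry['type']})")
        if "missing" in entry:
            lines.append(f"  missing value code: {entry['missing']}")
        for code, meaning in entry.get("values", {}).items():
            lines.append(f"  {code} = {meaning}")
    return "\n".join(lines)


if __name__ == "__main__":
    print("Undocumented columns:", undocumented_columns(RAW_CSV, CODEBOOK))
    print(render_codebook(CODEBOOK))
```

Keeping the codebook next to the data in a structured form means the check for undocumented columns can be rerun whenever the data file changes. A codebook kept in a spreadsheet or text file would serve the same purpose.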