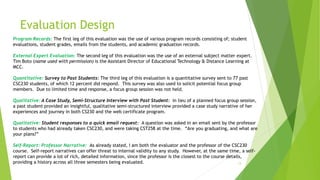

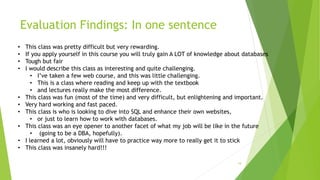

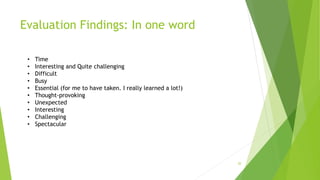

This document evaluates the CSC230 course at Manchester Community College, focusing on how effectively it prepares students for further study and career advancement in web development. It uses a mixed-methods approach, collecting quantitative and qualitative data from multiple student cohorts and external evaluations to assess student outcomes and course design. The findings reveal both strengths and areas for improvement in the course content, structure, and delivery, and include suggestions for lengthening the course and enhancing the learning materials.

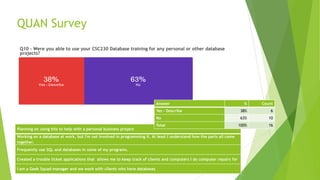

![Evaluation Findings: Outside Expert

21

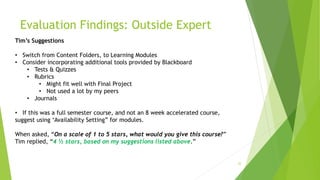

Tim’s Comments

“I am not seeing anything that is wrong, it is good overall.”

“Overall this course looks nice.”

(Appendix K1)“You use the ‘Getting Started” section to your own version, not just the canned version presented by the school.”

(Appendix K2) “You use announcements a lot” [this is good]

“You use embedded video.”

“Your use embedded links”

(Appendix K3) “Syllabus looks good, and provides the actual links to needed files for the course, and is high up on the syllabus.”

(Appendix K4) Course Content

“Has a roadmap in the front”

“You have videos in each module”

Laid out well, and consistent.

(Appendix K5) My Grades Section, he liked how they were organized.](https://image.slidesharecdn.com/paulgruhnedld808programevaluationonlineeducation-170317141725/85/EDLD808-Program-Evaluation-Final-Project-Online-Education-21-320.jpg)