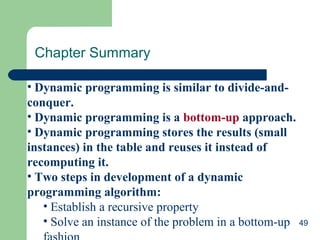

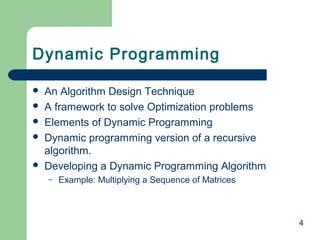

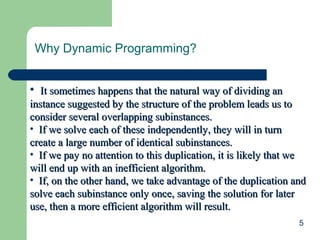

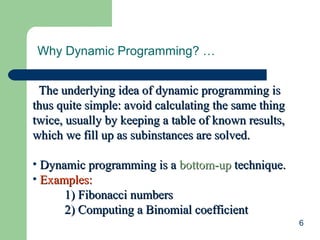

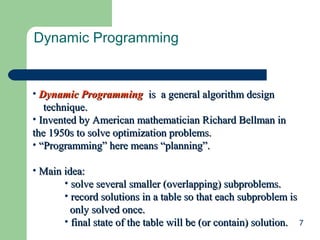

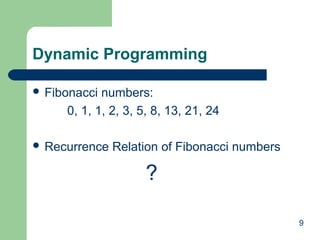

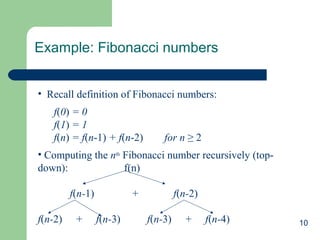

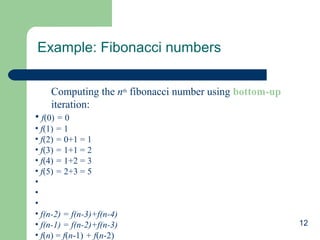

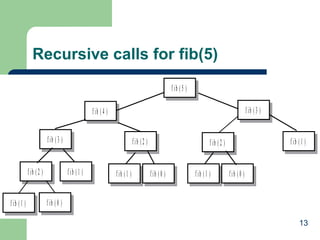

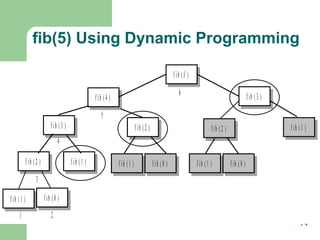

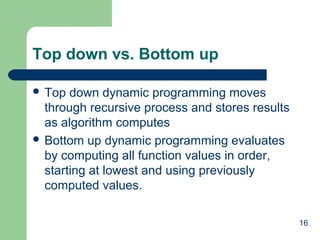

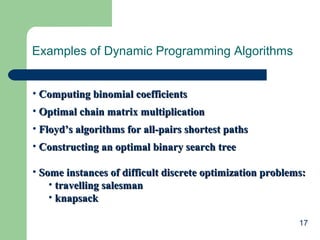

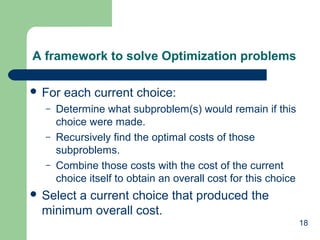

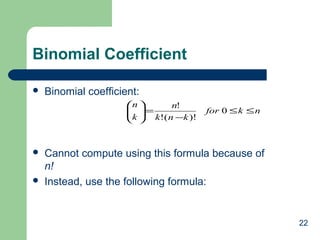

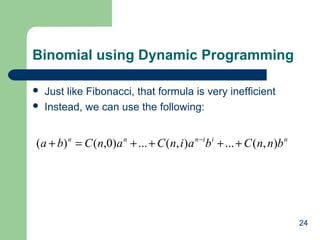

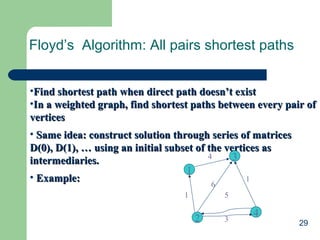

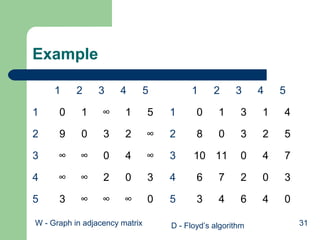

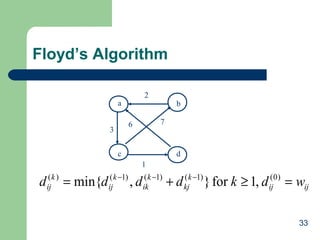

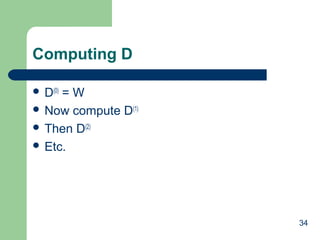

This document discusses dynamic programming and illustrates the technique with examples. It begins by defining dynamic programming as a bottom-up approach to problem solving in which solutions to smaller subproblems are stored and built upon to solve larger problems. It then presents dynamic programming algorithms for calculating Fibonacci numbers, computing binomial coefficients, and finding all-pairs shortest paths with Floyd's algorithm. It emphasizes the key aspects of dynamic programming: avoiding recomputation of subproblem solutions and storing intermediate results in tables.

![11

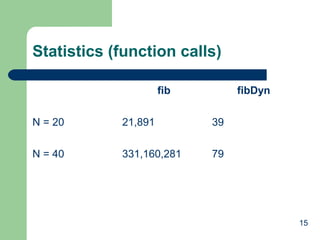

Fib vs. fibDyn

int fib(int n) {
    if (n <= 1) return n;                // stopping conditions
    else return fib(n-1) + fib(n-2);     // recursive step
}

int fibDyn(int n, vector<int>& fibList) {
    int fibValue;
    // check for a previously computed result and return it
    if (fibList[n] >= 0)
        return fibList[n];
    // otherwise execute the recursive algorithm to obtain the result
    if (n <= 1)       // stopping conditions
        fibValue = n;
    else              // recursive step
        fibValue = fibDyn(n-1, fibList) + fibDyn(n-2, fibList);
    // store the result and return its value
    fibList[n] = fibValue;
    return fibValue;

}](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-11-320.jpg)
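The memoized `fibDyn` above only works if the table is pre-filled with a negative sentinel before the first call. The following is a minimal sketch of how it might be driven, under these assumptions: `fibMemo` and `fibBottomUp` are hypothetical helper names that do not appear in the slides, the sentinel is `-1`, and results are limited to `int` range (n up to 46 on a platform with 32-bit `int`).

```cpp
#include <cassert>
#include <vector>

// Top-down memoized Fibonacci, following the slide: an entry < 0 means
// "not computed yet".
int fibDyn(int n, std::vector<int>& fibList) {
    if (fibList[n] >= 0) return fibList[n];          // reuse a stored result
    int fibValue = (n <= 1)
        ? n                                           // stopping conditions
        : fibDyn(n - 1, fibList) + fibDyn(n - 2, fibList);
    fibList[n] = fibValue;                            // store before returning
    return fibValue;
}

// Hypothetical wrapper (not in the slides): allocates the table with the
// sentinel -1 so callers do not have to.
int fibMemo(int n) {
    assert(n >= 0);
    std::vector<int> fibList(n + 1, -1);
    return fibDyn(n, fibList);
}

// Hypothetical bottom-up variant: builds from the smallest subproblems
// upward and keeps only the last two values.
int fibBottomUp(int n) {
    assert(n >= 0);
    if (n <= 1) return n;
    int prev = 0, curr = 1;
    for (int i = 2; i <= n; ++i) {
        int next = prev + curr;
        prev = curr;
        curr = next;
    }
    return curr;
}
```

Each value is computed once in both variants, so the work grows linearly with n, whereas the plain recursive `fib` recomputes the same subproblems repeatedly.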

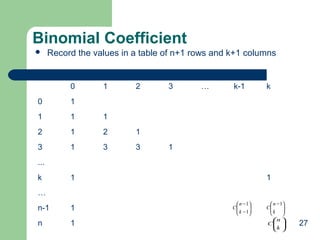

![25

Bottom-Up

Recursive property:

$$B[i][j] = \begin{cases} B[i-1][j-1] + B[i-1][j] & \text{if } 0 < j < i \\ 1 & \text{if } j = 0 \text{ or } j = i \end{cases}$$
](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-25-320.jpg)

![26

Pascal’s Triangle

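| i \ j | 0 | 1 | 2 | 3 | 4 | … | j | k |
|---|---|---|---|---|---|---|---|---|
| 0 | 1 | | | | | | | |
| 1 | 1 | 1 | | | | | | |
| 2 | 1 | 2 | 1 | | | | | |
| 3 | 1 | 3 | 3 | 1 | | | | |
| 4 | 1 | 4 | 6 | 4 | 1 | | | |
| … | | | | | | | B[i-1][j-1] + B[i-1][j] | |
| i | | | | | | | B[i][j] | |
| n | | | | | | | | |

](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-26-320.jpg)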

![28

Binomial Coefficient

ALGORITHM Binomial(n, k)
// Computes C(n, k) by the dynamic programming algorithm
// Input: A pair of nonnegative integers n ≥ k ≥ 0
// Output: The value of C(n, k)
for i ← 0 to n do
    for j ← 0 to min(i, k) do
        if j = 0 or j = i
            C[i, j] ← 1
        else C[i, j] ← C[i-1, j-1] + C[i-1, j]
return C[n, k]

$$A(n,k) = \sum_{i=1}^{k}\sum_{j=1}^{i-1} 1 + \sum_{i=k+1}^{n}\sum_{j=1}^{k} 1 = \sum_{i=1}^{k}(i-1) + \sum_{i=k+1}^{n} k = \frac{(k-1)k}{2} + k(n-k) \in \Theta(nk)$$
](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-28-320.jpg)
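The Binomial pseudocode above can be sketched in C++ as follows. This is one possible rendering, not the slide author's code: the function name `binomial`, the `unsigned long long` result type, and the choice to allocate only columns 0..k are assumptions made for illustration, and no overflow checking is attempted.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Bottom-up computation of C(n, k): fills rows 0..n of Pascal's triangle,
// but only columns 0..k, since later columns are never needed.
unsigned long long binomial(int n, int k) {
    assert(0 <= k && k <= n);
    std::vector<std::vector<unsigned long long>> C(
        n + 1, std::vector<unsigned long long>(k + 1, 0));
    for (int i = 0; i <= n; ++i) {
        for (int j = 0; j <= std::min(i, k); ++j) {
            if (j == 0 || j == i) {
                C[i][j] = 1;                               // edges of the triangle
            } else {
                C[i][j] = C[i - 1][j - 1] + C[i - 1][j];   // recursive property
            }
        }
    }
    return C[n][k];
}
```

For example, `binomial(4, 2)` reads off the entry in row 4, column 2 of Pascal's triangle. The table has (n+1)(k+1) entries and each is filled in constant time, which matches the Θ(nk) bound.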

![32

Meanings

$D^{(0)}[2][5] = \text{length}[v_2, v_5] = \infty$

$D^{(1)}[2][5] = \text{minimum}(\text{length}[v_2, v_5],\ \text{length}[v_2, v_1, v_5]) = \text{minimum}(\infty, 14) = 14$

$D^{(2)}[2][5] = D^{(1)}[2][5] = 14$

$D^{(3)}[2][5] = D^{(2)}[2][5] = 14$

$D^{(4)}[2][5] = \text{minimum}(\text{length}[v_2, v_1, v_5],\ \text{length}[v_2, v_4, v_5],\ \text{length}[v_2, v_1, v_4, v_5],\ \text{length}[v_2, v_3, v_4, v_5]) = \text{minimum}(14, 5, 13, 10) = 5$

$D^{(5)}[2][5] = D^{(4)}[2][5] = 5$
](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-32-320.jpg)

![35

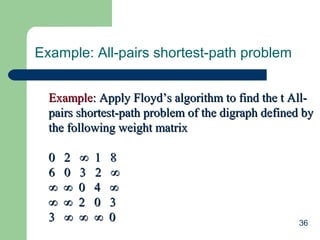

Floyd’s Algorithm: All pairs shortest paths

ALGORITHM Floyd(W[1 … n, 1 … n])
for k ← 1 to n do
    for i ← 1 to n do
        for j ← 1 to n do
            W[i, j] ← min{W[i, j], W[i, k] + W[k, j]}
return W

Efficiency = Θ(n³)](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-35-320.jpg)
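Floyd's triple loop might be rendered in C++ roughly as below. This is a sketch under stated assumptions: the names `Matrix`, `INF`, and `floyd` are illustrative and not from the slides, vertices are 0-indexed rather than 1-indexed, a missing edge is encoded as `INF`, the graph has no negative cycles, and finite path lengths are small enough not to overflow `long long`.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

using Matrix = std::vector<std::vector<long long>>;

// Sentinel for "no edge"; never added to anything, so it cannot overflow.
const long long INF = std::numeric_limits<long long>::max();

// Returns the all-pairs shortest-path lengths for the weight matrix W.
// W is taken by value, so the caller's matrix is left unchanged.
Matrix floyd(Matrix W) {
    const std::size_t n = W.size();
    for (const auto& row : W) {
        assert(row.size() == n);  // W must be square
    }
    for (std::size_t k = 0; k < n; ++k) {          // allowed intermediate vertex
        for (std::size_t i = 0; i < n; ++i) {
            if (W[i][k] == INF) continue;          // no path i -> k yet
            for (std::size_t j = 0; j < n; ++j) {
                if (W[k][j] == INF) continue;      // no path k -> j yet
                const long long viaK = W[i][k] + W[k][j];
                if (viaK < W[i][j]) W[i][j] = viaK;
            }
        }
    }
    return W;
}
```

After iteration k of the outer loop, `W[i][j]` holds the shortest path length from i to j that uses only the first k+1 vertices as intermediates, which is the meaning given to D(k) earlier. The three nested loops over n vertices give the Θ(n³) running time.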

![38

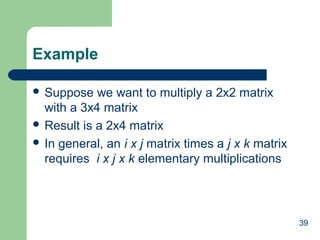

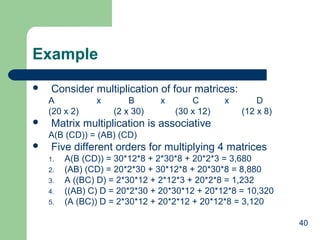

Chained Matrix Multiplication

Problem: Matrix-chain multiplication

– given a chain <A1, A2, …, An> of n matrices, where Ai has dimensions $p_{i-1} \times p_i$

– find a parenthesization that minimizes the number of scalar multiplications needed to compute the product A1A2…An

Strategy:

Break the problem into subproblems

– A1A2…Ak and Ak+1Ak+2…An

Recursively define the value of an optimal solution

– $m[i,j] = 0$ if $i = j$

– $m[i,j] = \min_{i \le k < j} \left( m[i,k] + m[k+1,j] + p_{i-1}\, p_k\, p_j \right)$ if $i < j$

– for $1 \le i \le j \le n$](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-38-320.jpg)
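As a worked instance of the recurrence above, assume a hypothetical chain of three matrices with dimensions $p_0 = 10$, $p_1 = 20$, $p_2 = 5$, $p_3 = 30$, so that $A_1$ is $10 \times 20$, $A_2$ is $20 \times 5$, and $A_3$ is $5 \times 30$. These numbers are illustrative only and do not come from the slides.

$$
\begin{aligned}
m[1,2] &= m[1,1] + m[2,2] + p_0\, p_1\, p_2 = 0 + 0 + 10 \cdot 20 \cdot 5 = 1000 \\
m[2,3] &= m[2,2] + m[3,3] + p_1\, p_2\, p_3 = 0 + 0 + 20 \cdot 5 \cdot 30 = 3000 \\
m[1,3] &= \min\{\, m[1,1] + m[2,3] + p_0\, p_1\, p_3,\;\; m[1,2] + m[3,3] + p_0\, p_2\, p_3 \,\} \\
       &= \min\{\, 0 + 3000 + 6000,\;\; 1000 + 0 + 1500 \,\} = 2500
\end{aligned}
$$

Under these assumed dimensions the minimum is reached at $k = 2$, so $(A_1 A_2) A_3$ costs 2500 scalar multiplications while $A_1 (A_2 A_3)$ costs 9000.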

![41

Algorithm

int minmult (int n, const int d[], index P[][])
{
    index i, j, k, diagonal;
    int M[1..n][1..n];

    for (i = 1; i <= n; i++)
        M[i][i] = 0;
    for (diagonal = 1; diagonal <= n-1; diagonal++)
        for (i = 1; i <= n-diagonal; i++) {
            j = i + diagonal;
            // minimum taken over i <= k <= j-1
            M[i][j] = minimum(M[i][k] + M[k+1][j] + d[i-1]*d[k]*d[j]);
            P[i][j] = a value of k that gave the minimum;
        }
    return M[1][n];

}](https://image.slidesharecdn.com/dynamicpgmming-140413223950-phpapp02/85/Dynamicpgmming-41-320.jpg)
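The `minmult` pseudocode above leaves the inner minimization and the array declarations informal. The following is one way it might be made compilable, with these assumptions: the signature is changed to take `std::vector` arguments (so n is derived from `d.size() - 1`), costs use `long long`, the tables keep the 1-based indexing of the pseudocode by leaving row and column 0 unused, and overflow is not checked.

```cpp
#include <cassert>
#include <limits>
#include <vector>

// d holds the n+1 dimensions: matrix A_i is d[i-1] x d[i].
// On return, P[i][j] is a split point k that achieved the minimum for A_i..A_j.
long long minmult(const std::vector<long long>& d,
                  std::vector<std::vector<int>>& P) {
    assert(d.size() >= 2);
    const int n = static_cast<int>(d.size()) - 1;
    // M[i][i] = 0 comes from the zero-initialization of the table.
    std::vector<std::vector<long long>> M(n + 1, std::vector<long long>(n + 1, 0));
    P.assign(n + 1, std::vector<int>(n + 1, 0));

    // Fill the table one diagonal at a time, so shorter chains come first.
    for (int diagonal = 1; diagonal <= n - 1; ++diagonal) {
        for (int i = 1; i <= n - diagonal; ++i) {
            const int j = i + diagonal;
            M[i][j] = std::numeric_limits<long long>::max();
            for (int k = i; k <= j - 1; ++k) {   // try every split point
                const long long cost =
                    M[i][k] + M[k + 1][j] + d[i - 1] * d[k] * d[j];
                if (cost < M[i][j]) {
                    M[i][j] = cost;
                    P[i][j] = k;
                }
            }
        }
    }
    return M[1][n];
}
```

A call passes the dimension list and an empty `P`, for example `minmult({10, 20, 5, 30}, P)` for three matrices of sizes 10×20, 20×5, and 5×30. The explicit loop over k makes the cost visible: there are Θ(n²) table entries and up to n−1 candidate splits per entry.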