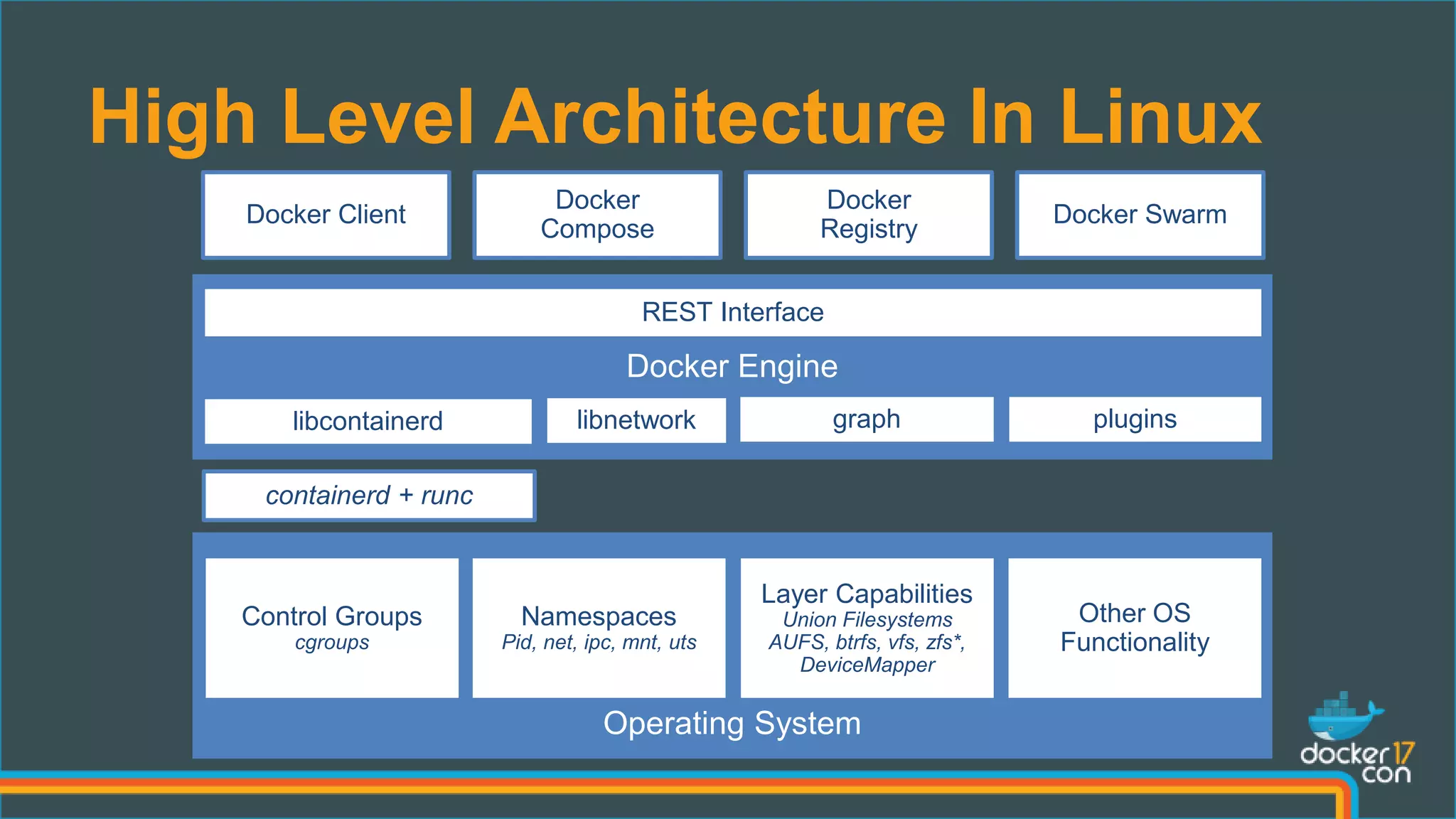

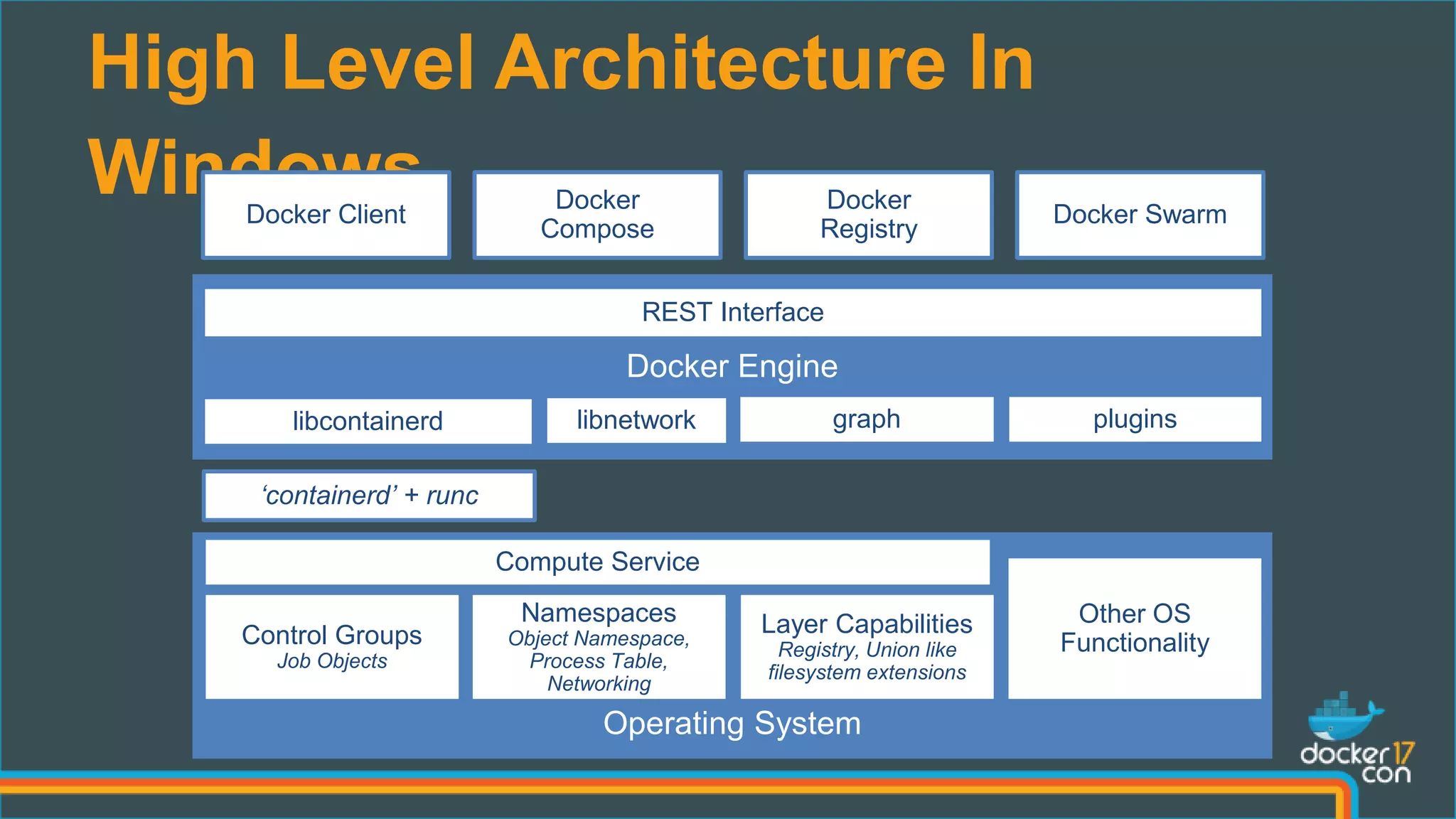

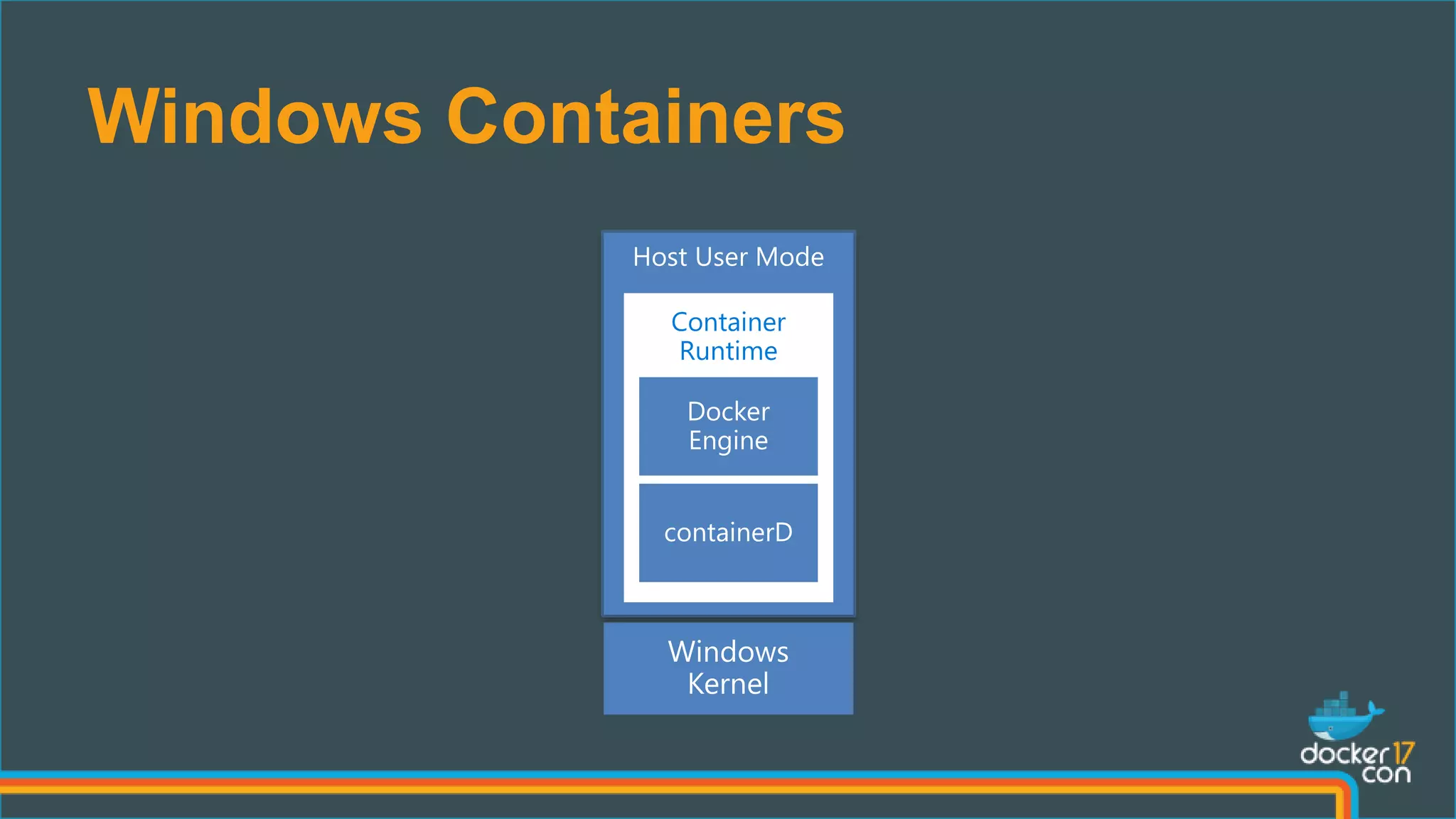

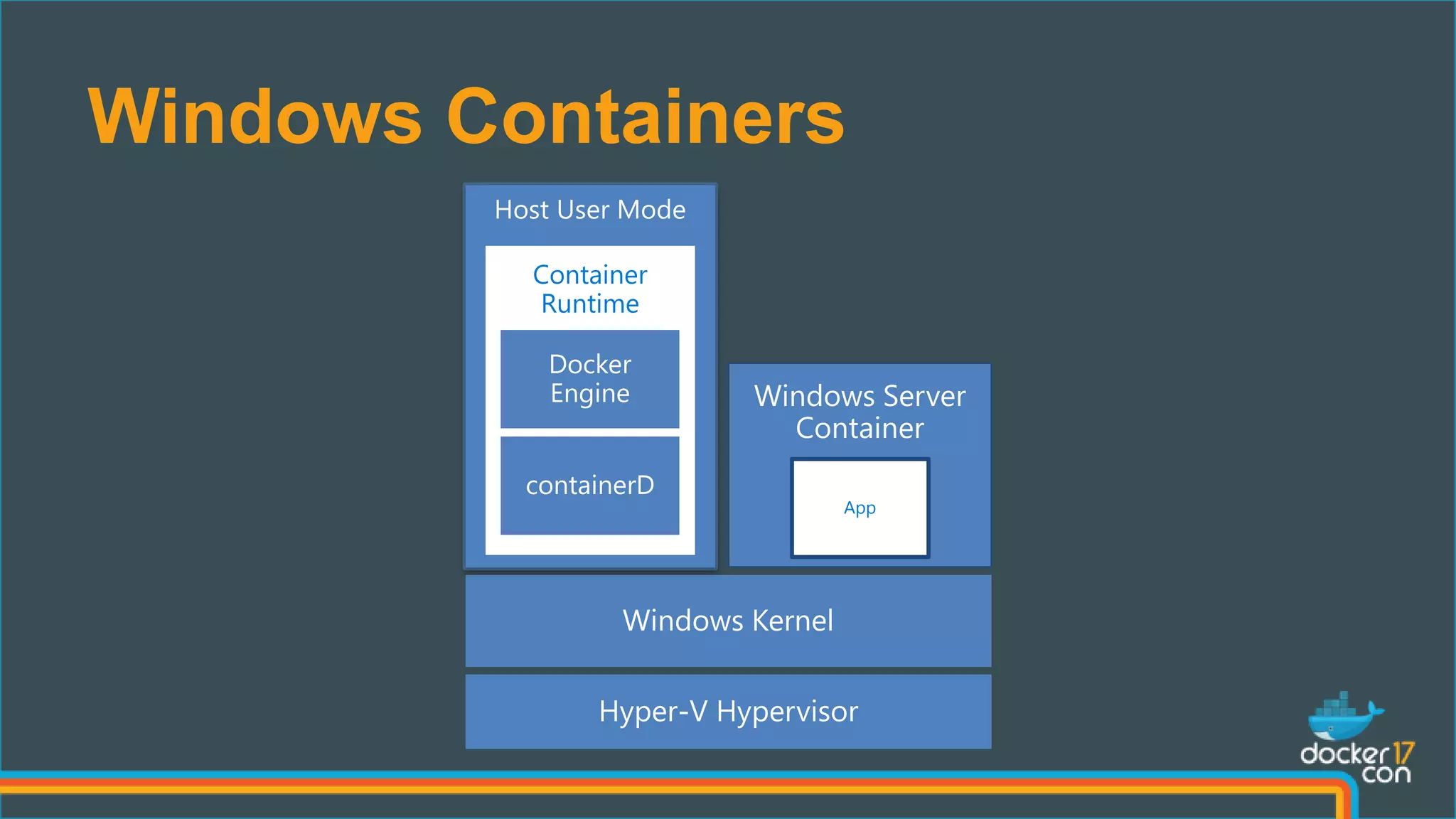

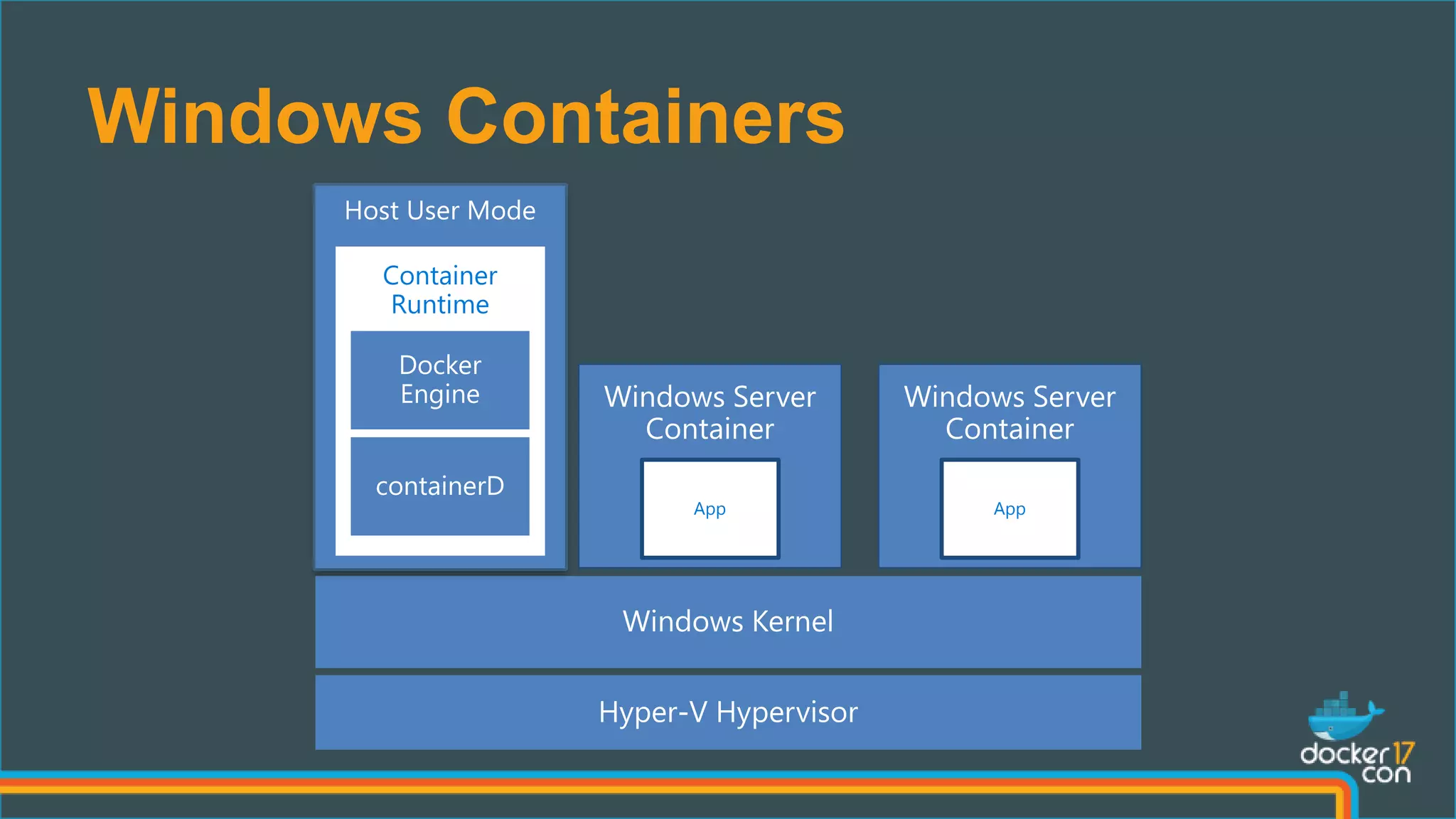

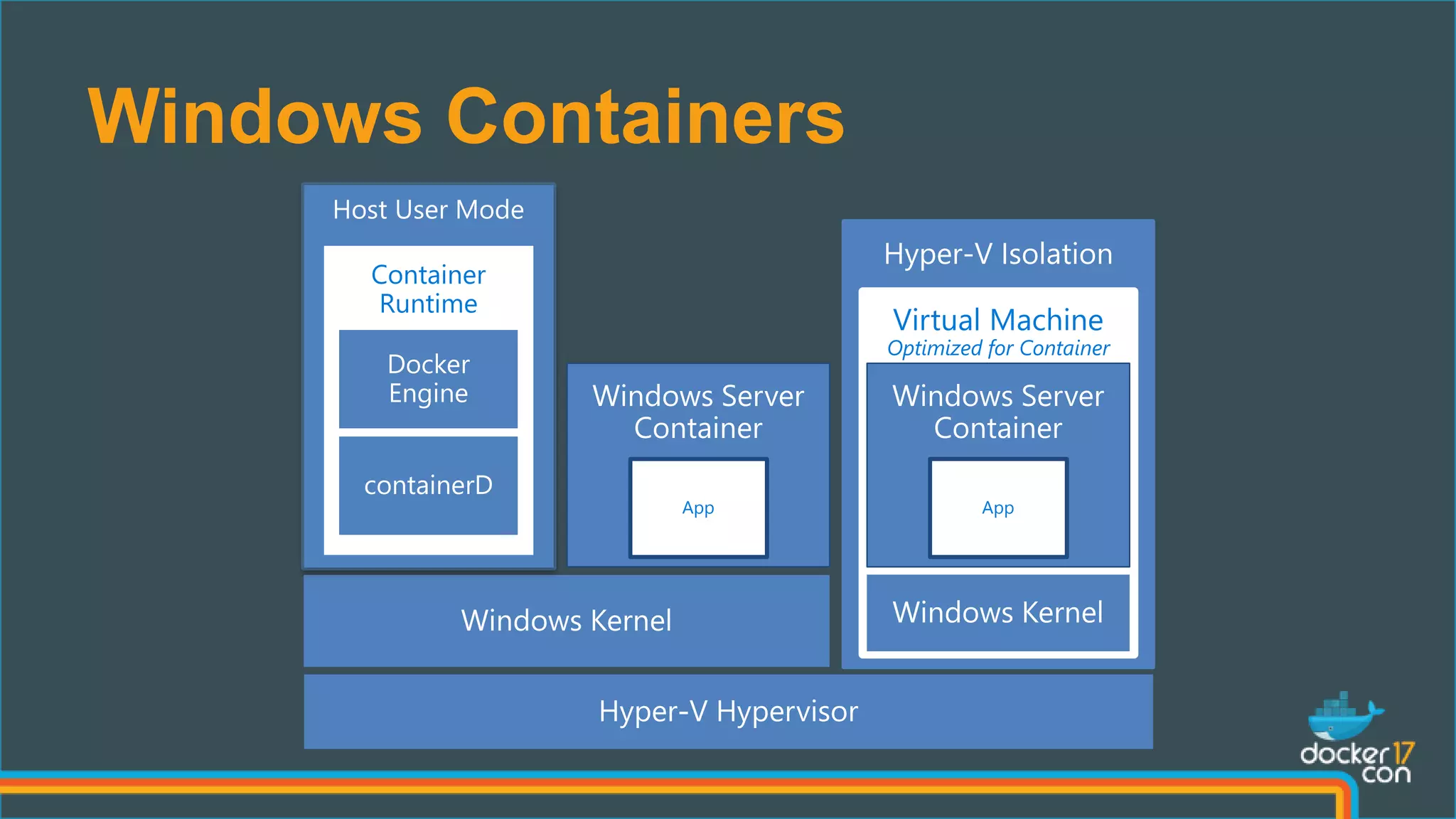

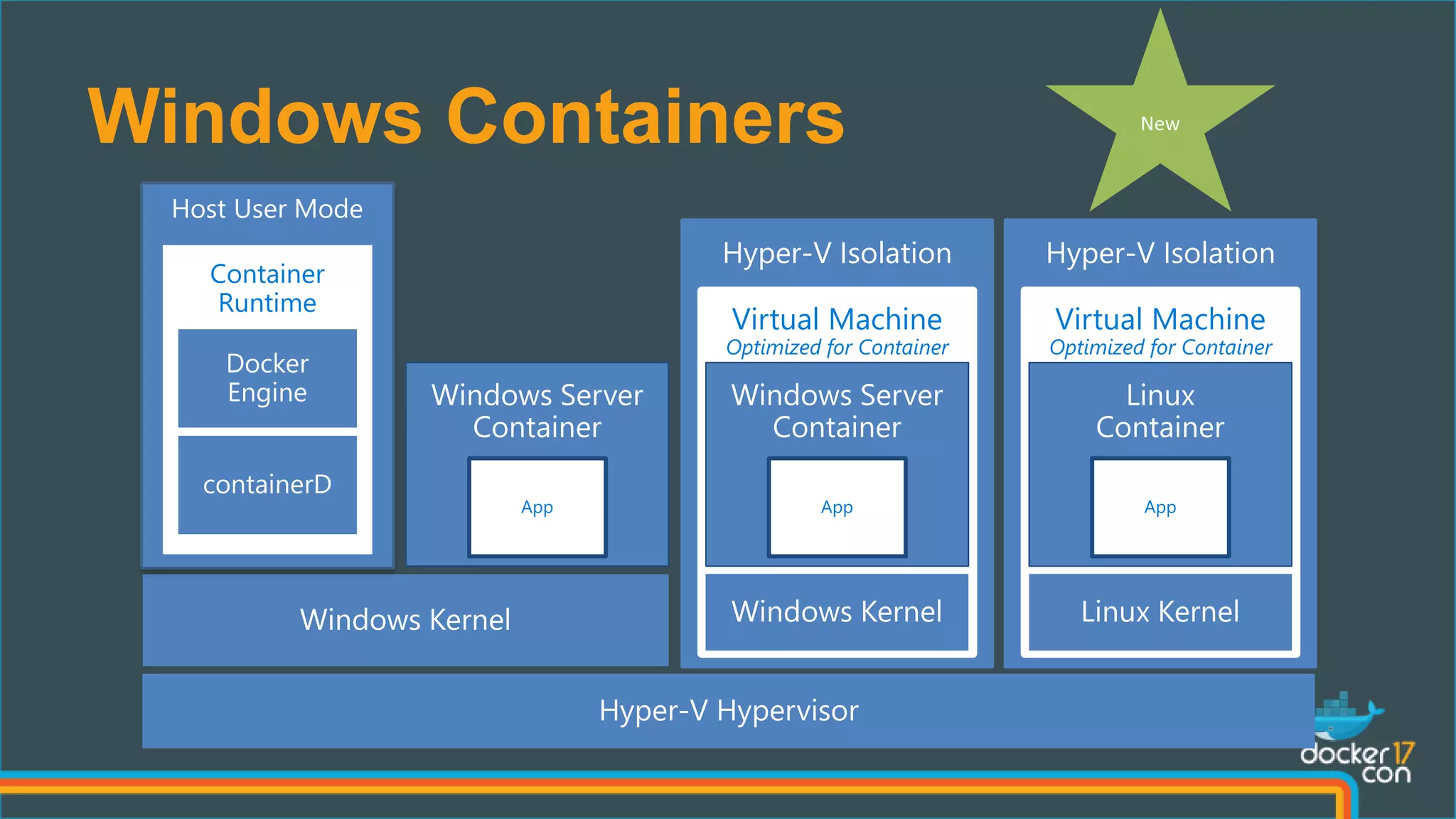

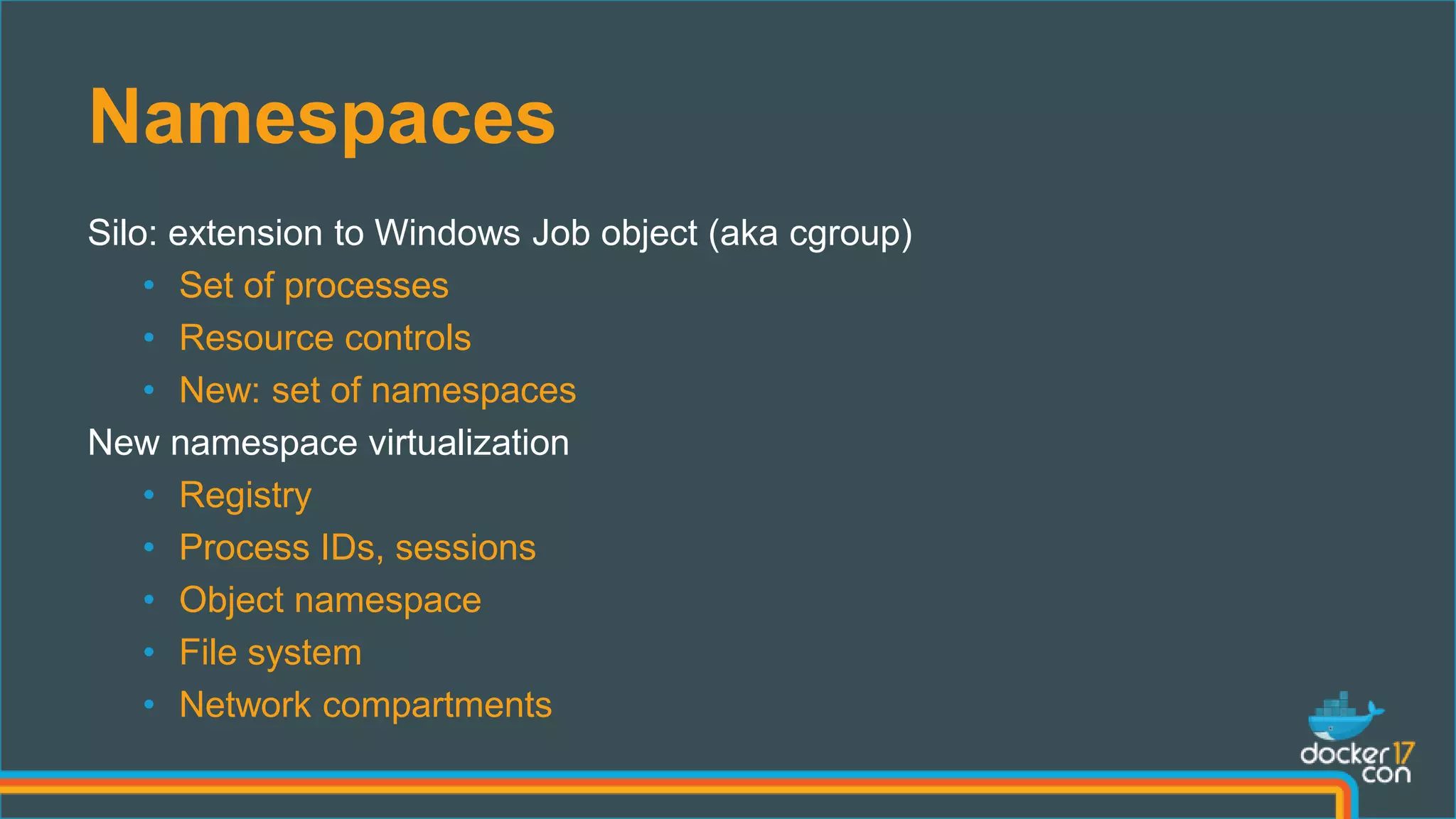

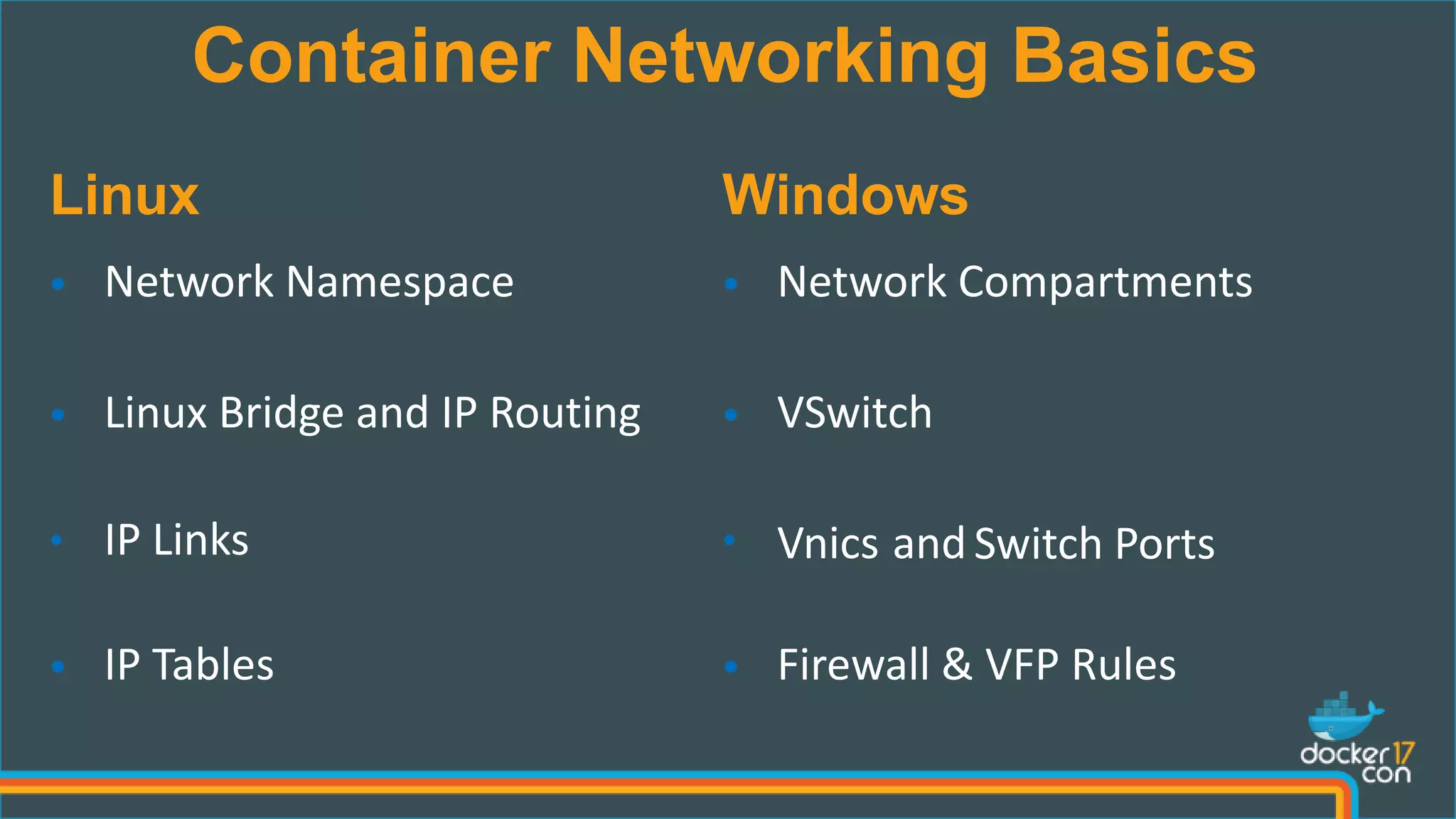

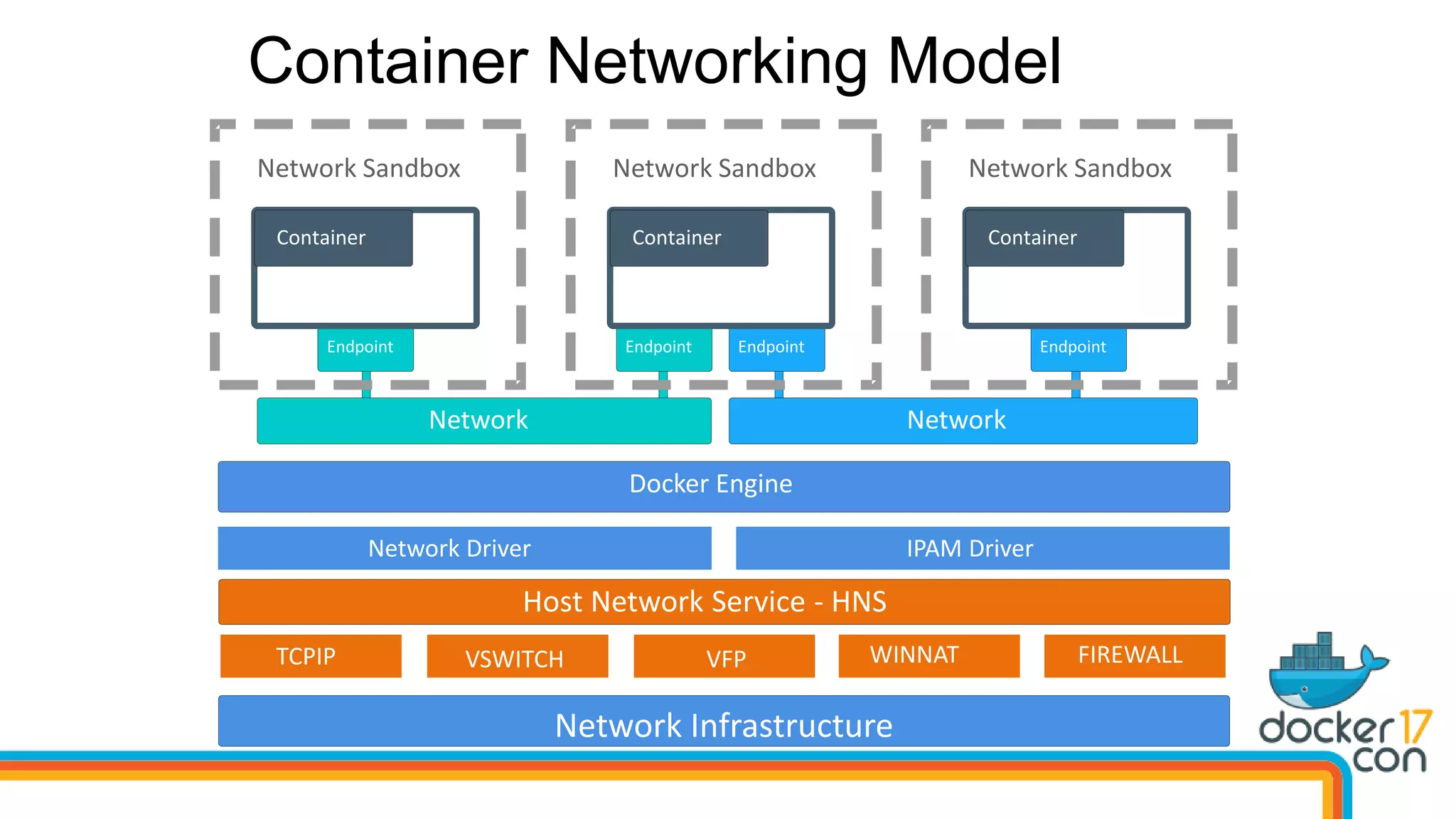

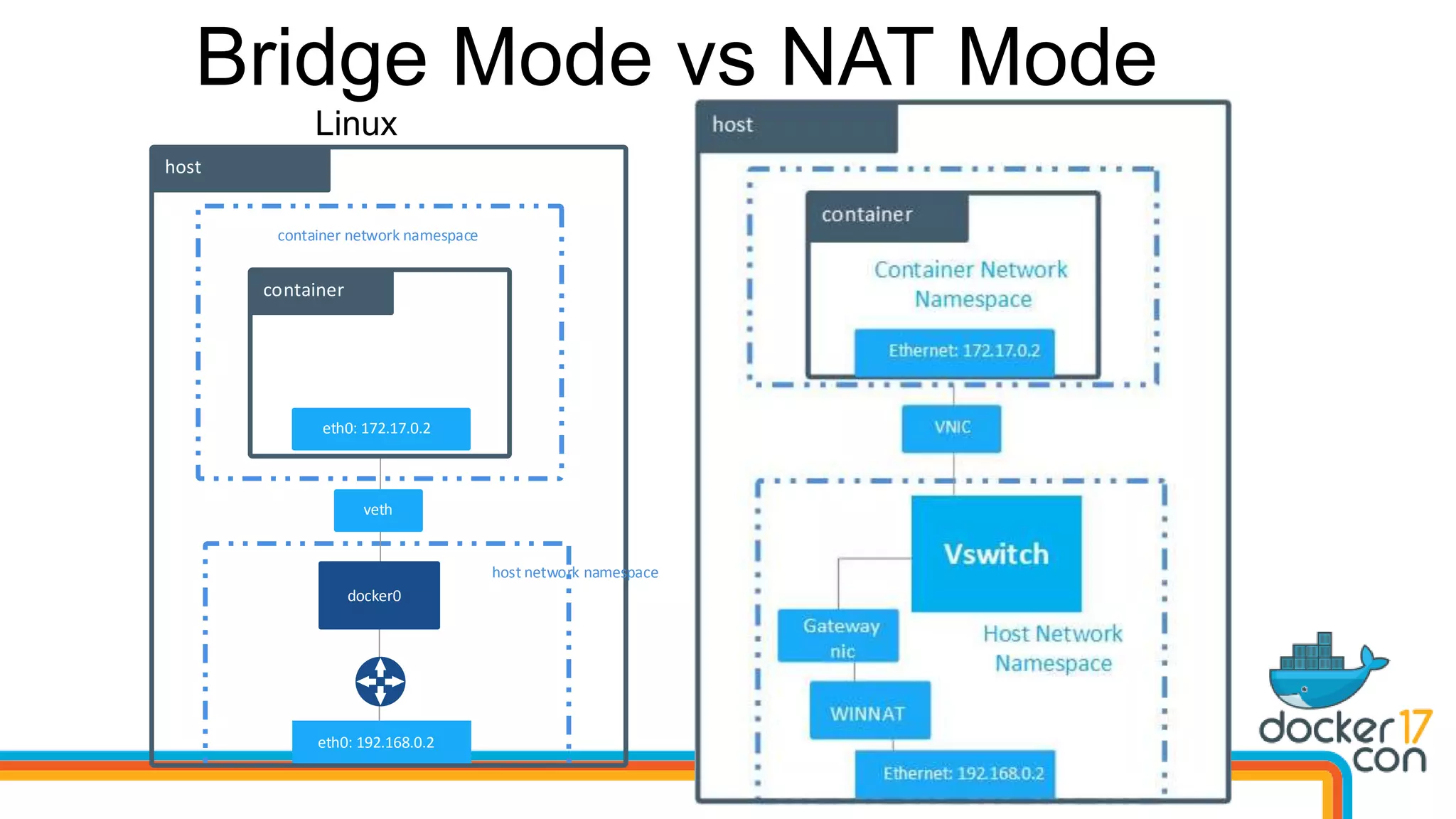

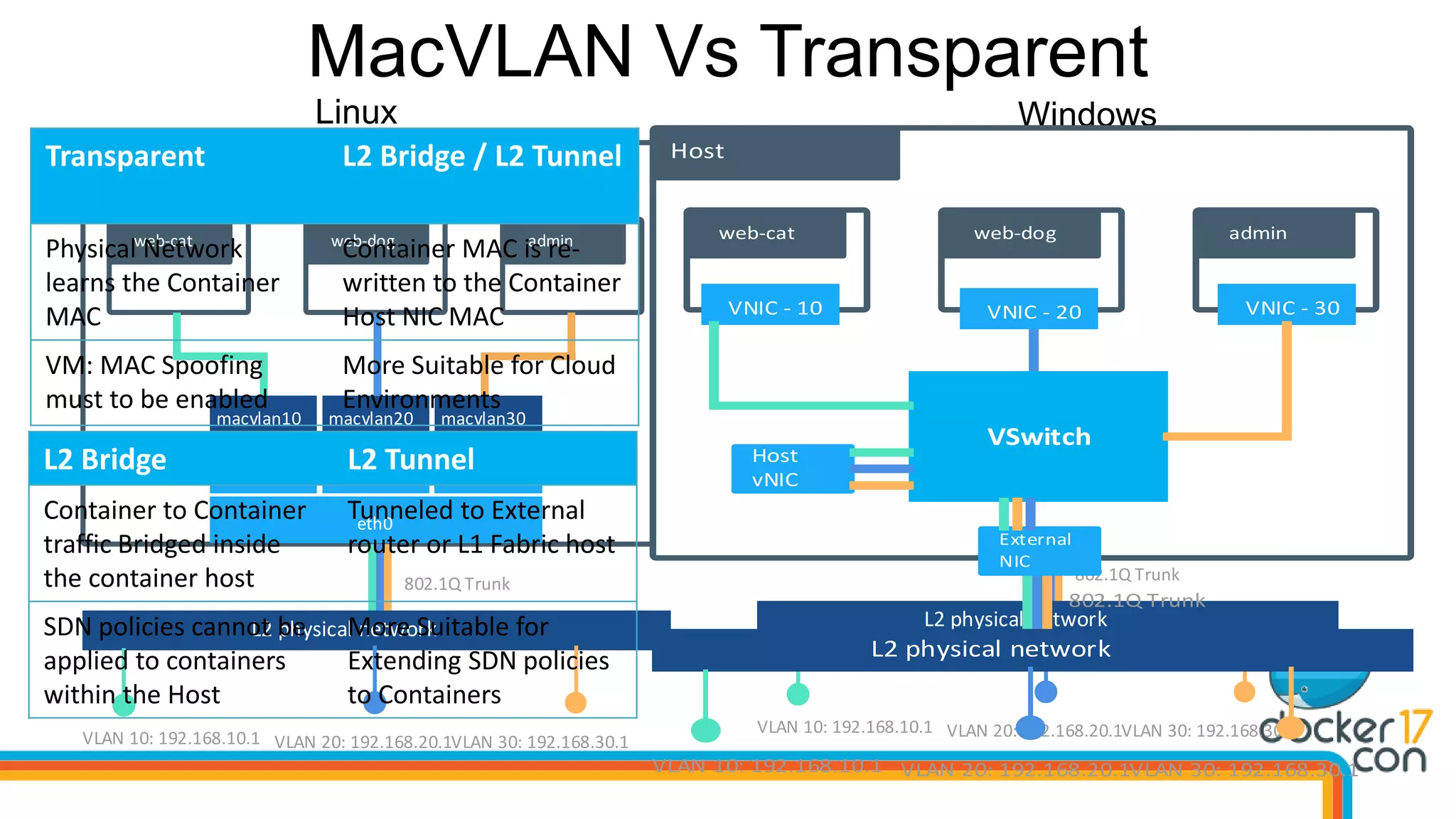

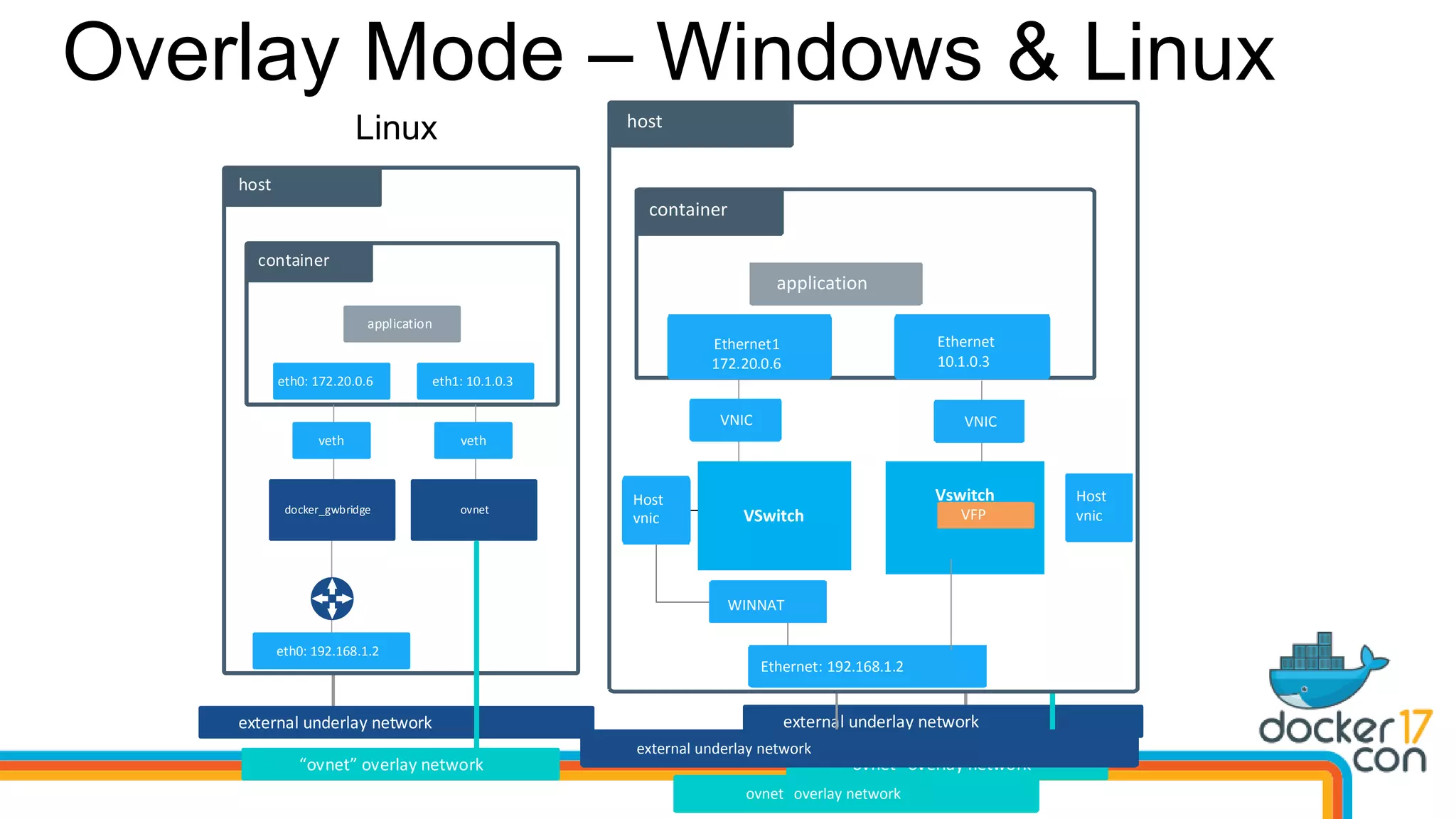

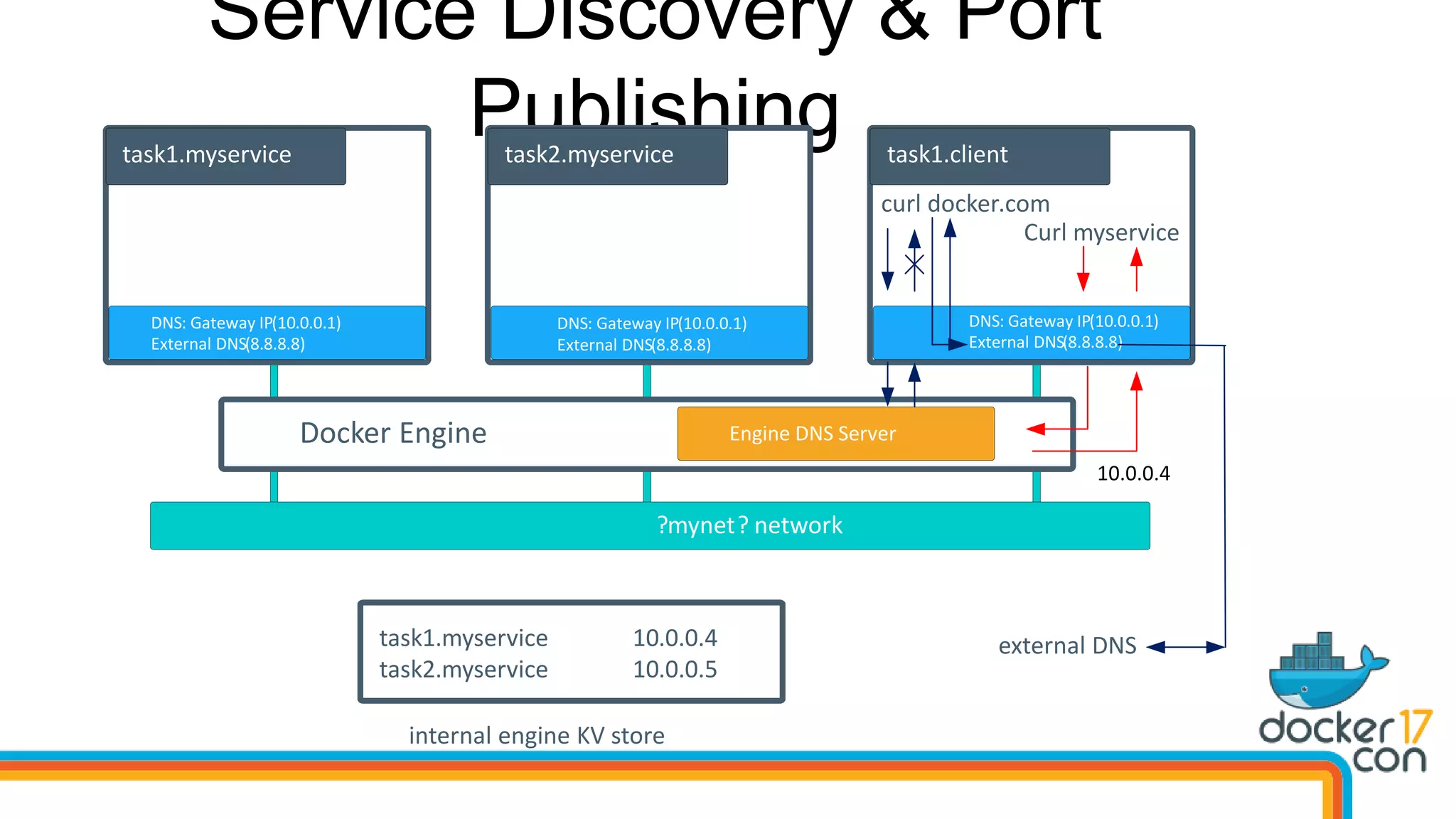

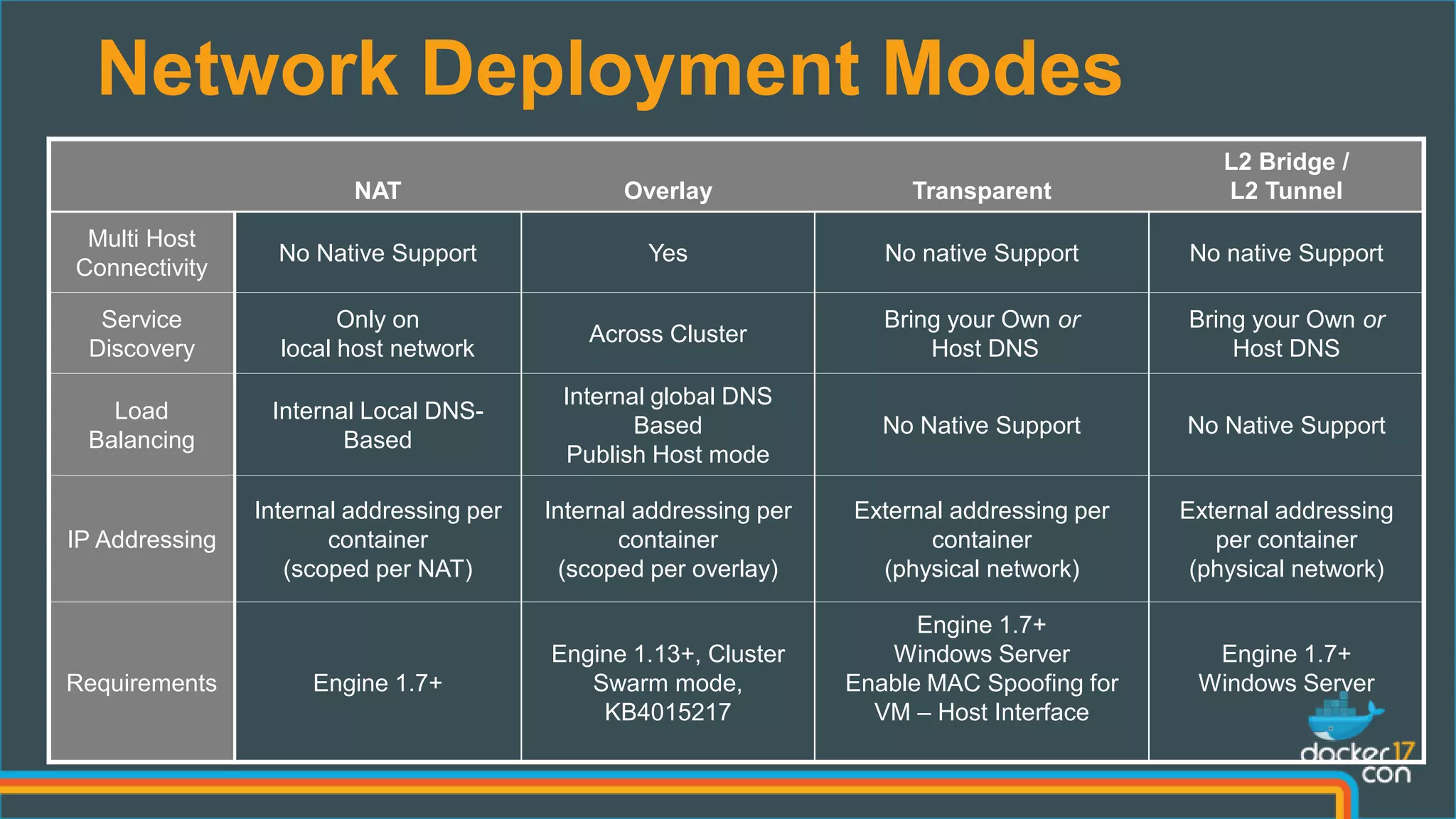

The document outlines the technical architecture and networking capabilities of Docker on Windows and Linux, and compares how each operating system implements containers. It covers the public interface to containers, Hyper-V isolation, and the NAT, macvlan, and overlay networking modes. It also discusses service discovery and port publishing in a Docker environment, and highlights the differences between local and multi-host connectivity.