Piotr Kieszczynski gave a presentation on network solutions for Docker. Some key points:

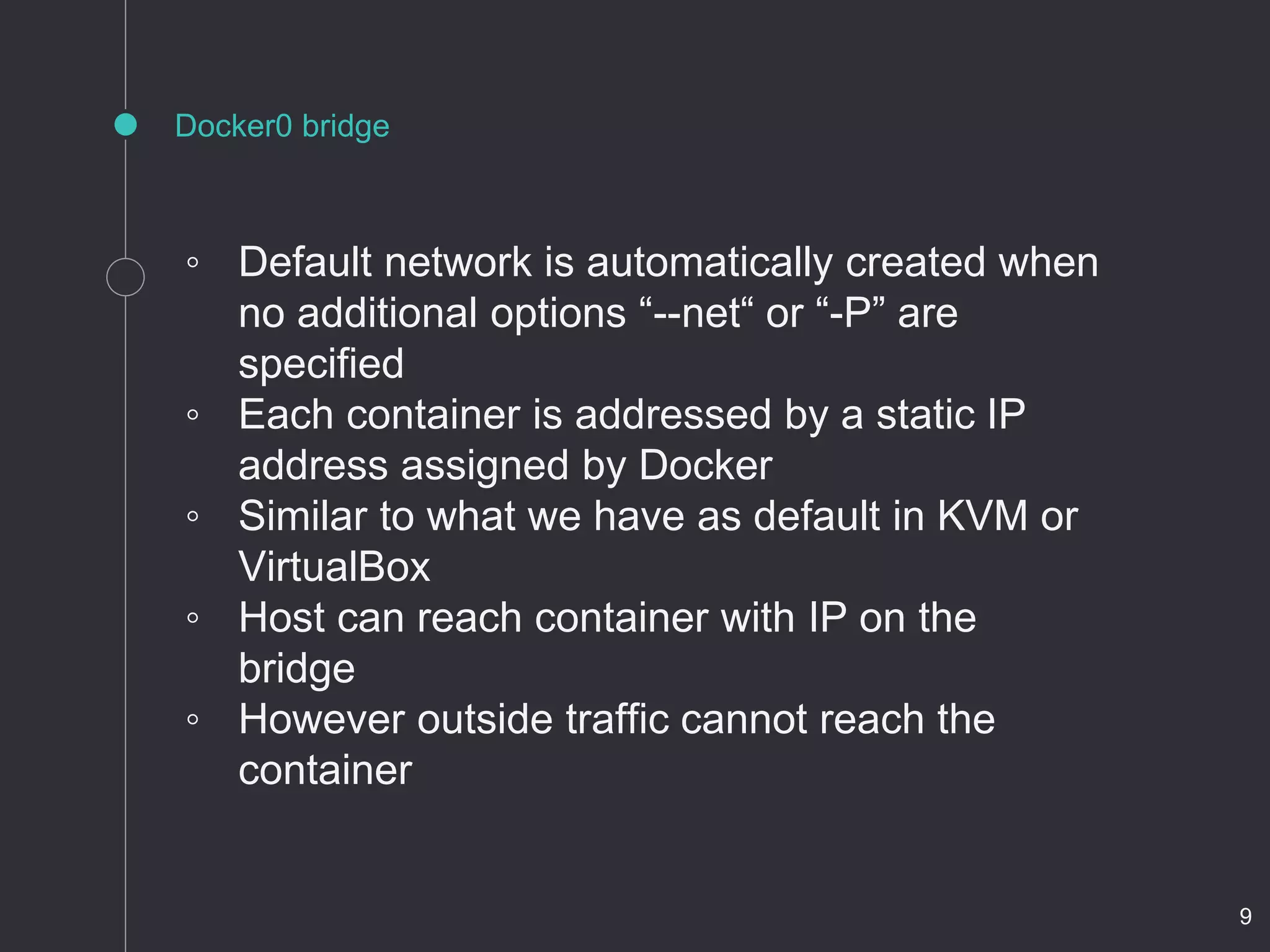

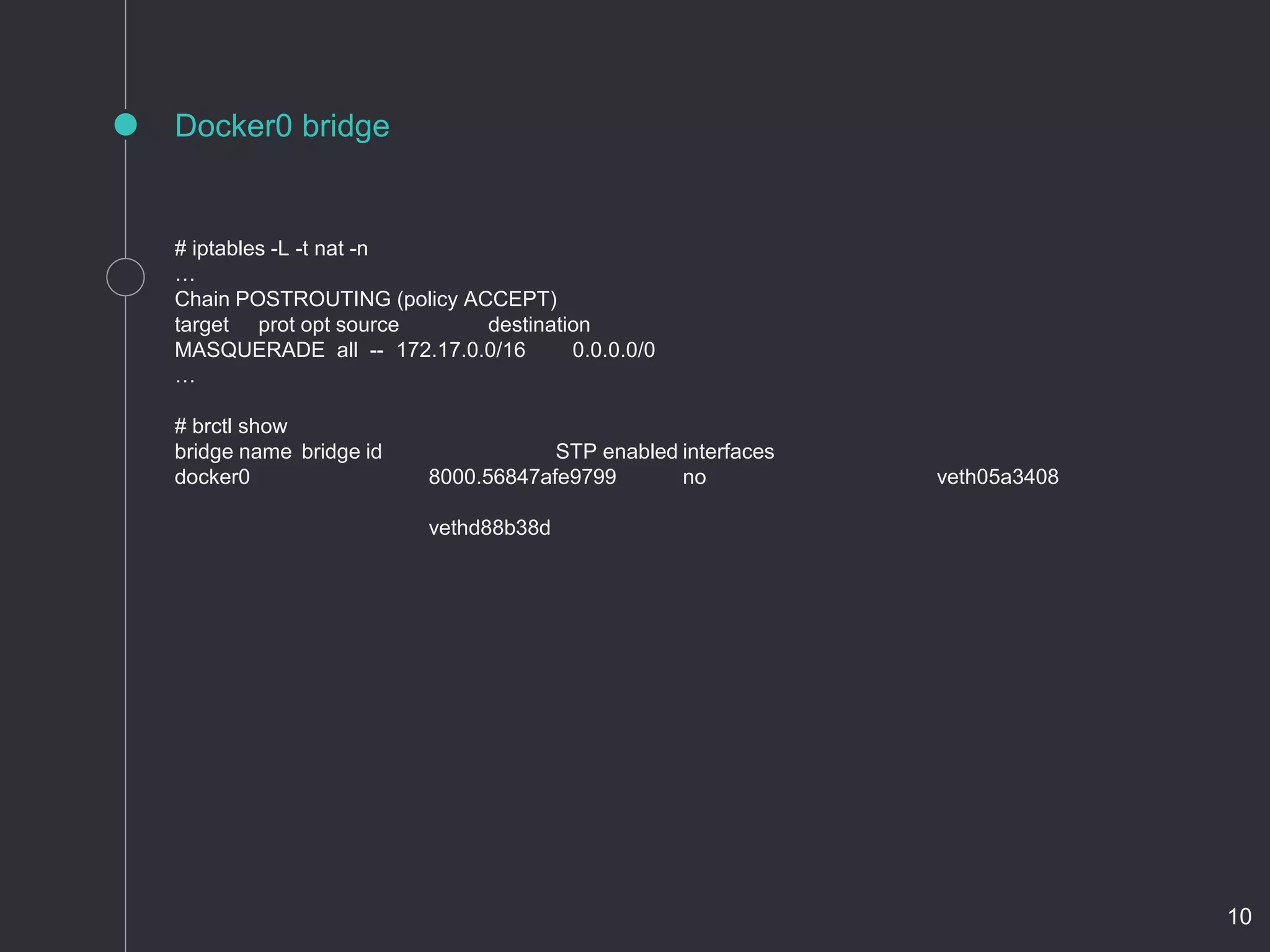

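- Docker's default network gives each container a private IP address on the Linux bridge docker0, allocated automatically from the bridge's subnet. Containers can reach the outside through NAT, but outside traffic cannot reach containers.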
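The address a container receives on docker0 can be read back with `docker inspect`. The following is a minimal sketch, not part of the talk: the helper names `container_ip` and `is_private_ip` and the overridable `DOCKER` variable are assumptions made for illustration. It shows why such an address is unreachable from outside: it typically falls in an RFC 1918 private range.

```shell
#!/bin/sh
# DOCKER can be overridden (an assumption of this sketch) so the helper
# can be exercised without a running Docker daemon.

# Print the address Docker assigned to a container on the default bridge.
container_ip() {
    "${DOCKER:-docker}" inspect -f '{{.NetworkSettings.IPAddress}}' "$1"
}

# Succeed if the IPv4 address lies in an RFC 1918 private range, that is,
# it is not routable from outside the host without NAT or port mapping.
is_private_ip() {
    case "$1" in
        10.*|192.168.*) return 0 ;;
        172.1[6-9].*|172.2[0-9].*|172.3[01].*) return 0 ;;
        *) return 1 ;;
    esac
}
```

For a running container with the hypothetical name `web`, `is_private_ip "$(container_ip web)" && echo private` would be expected to print `private` on a default installation.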

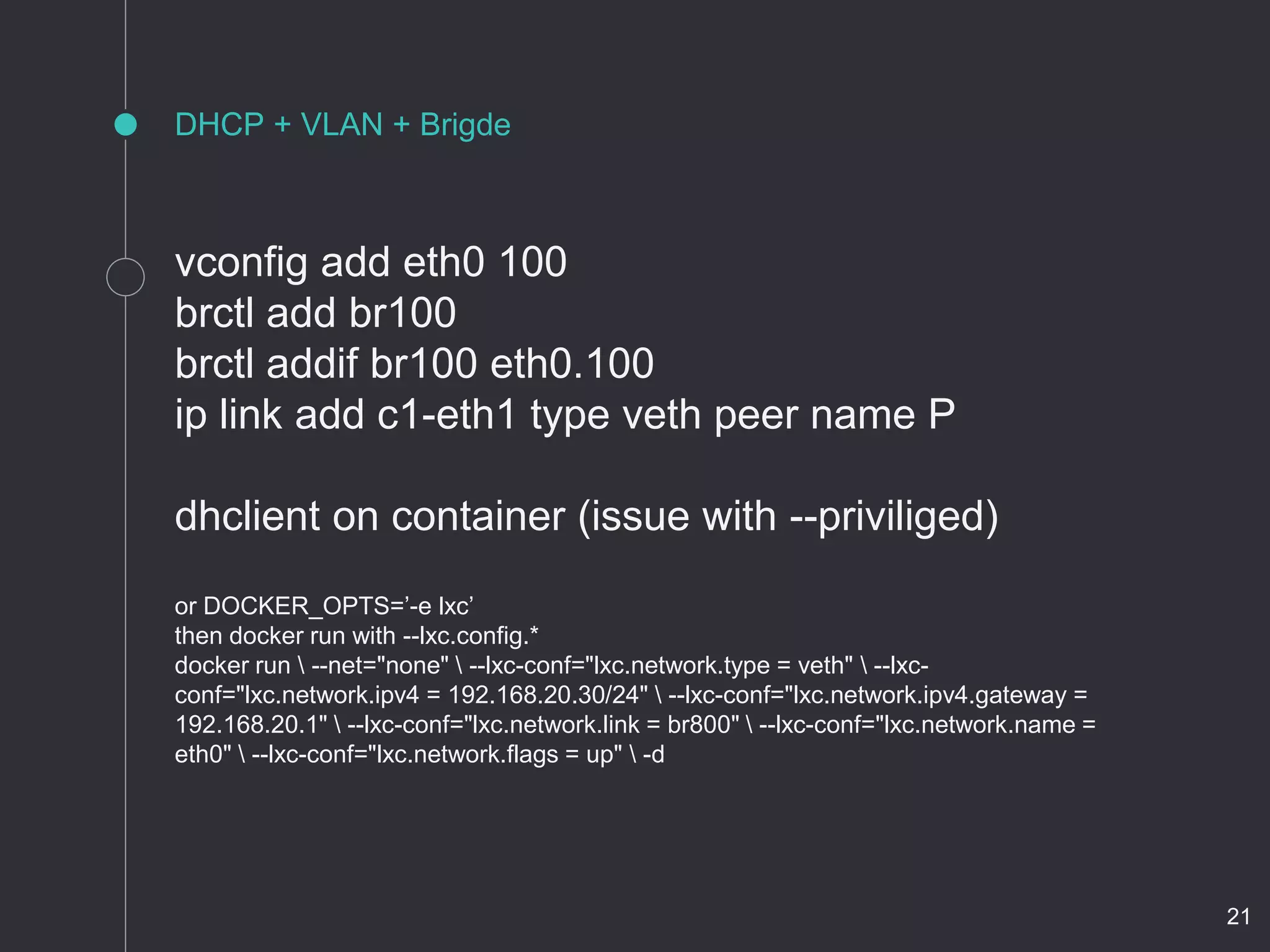

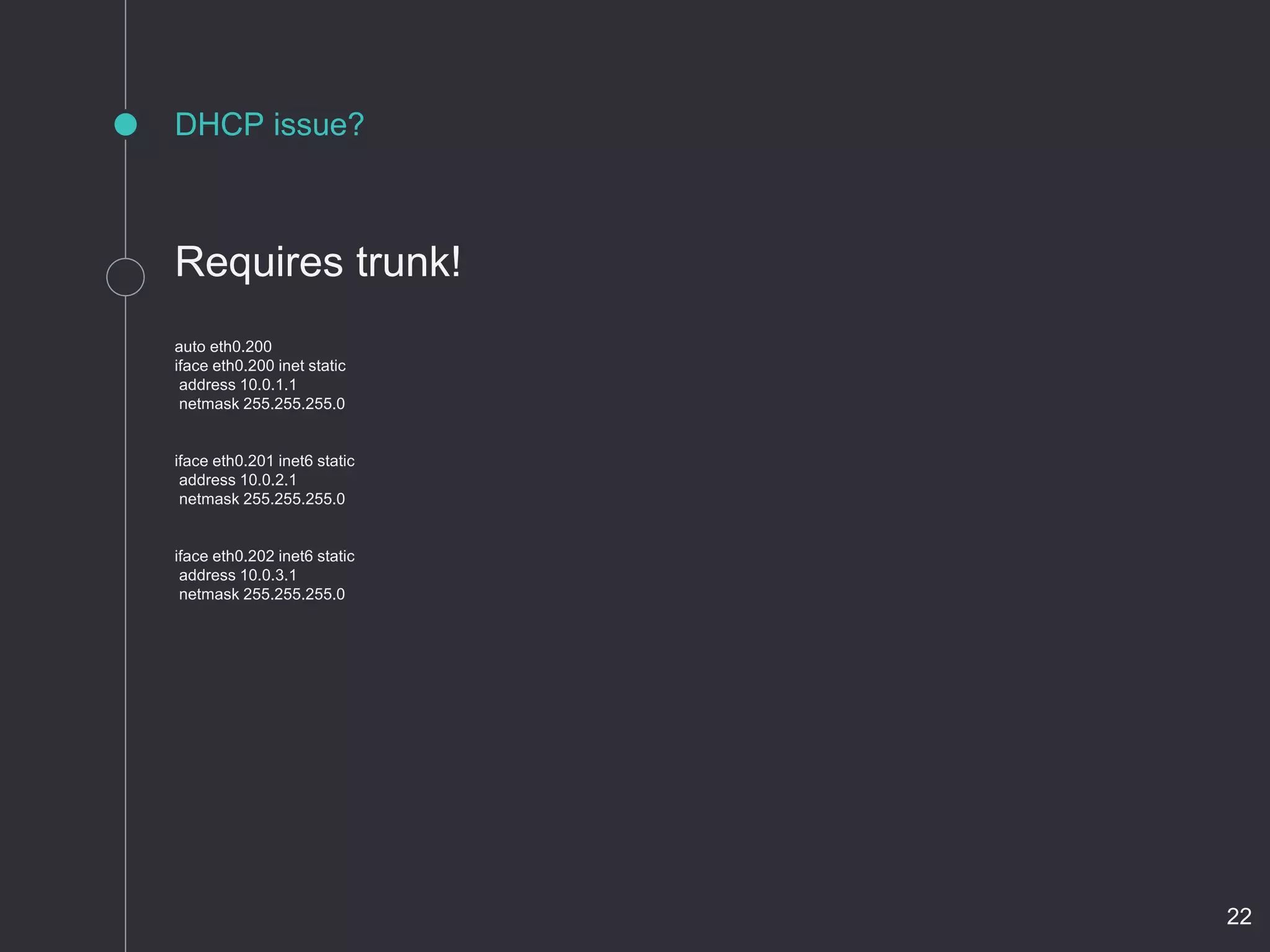

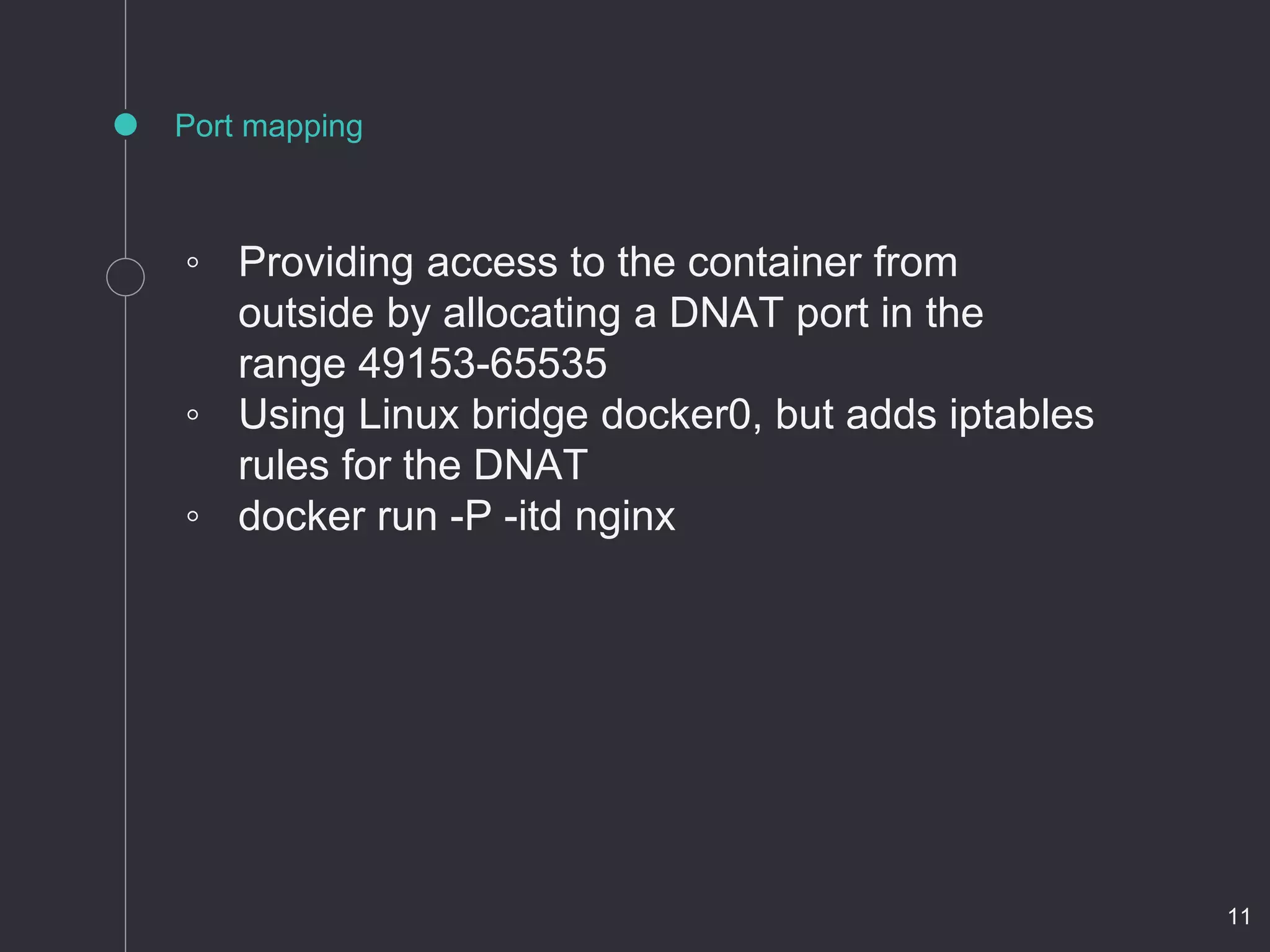

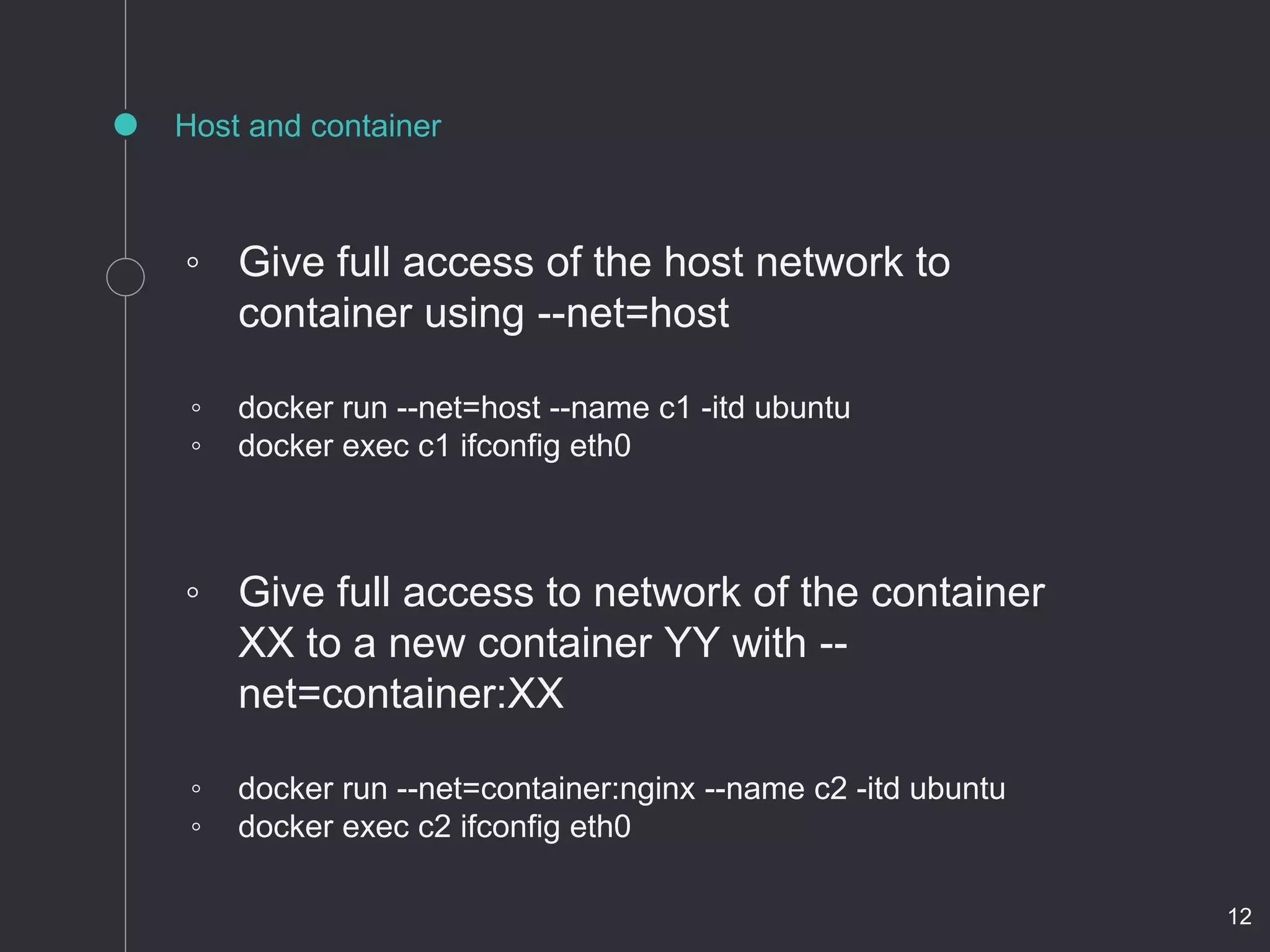

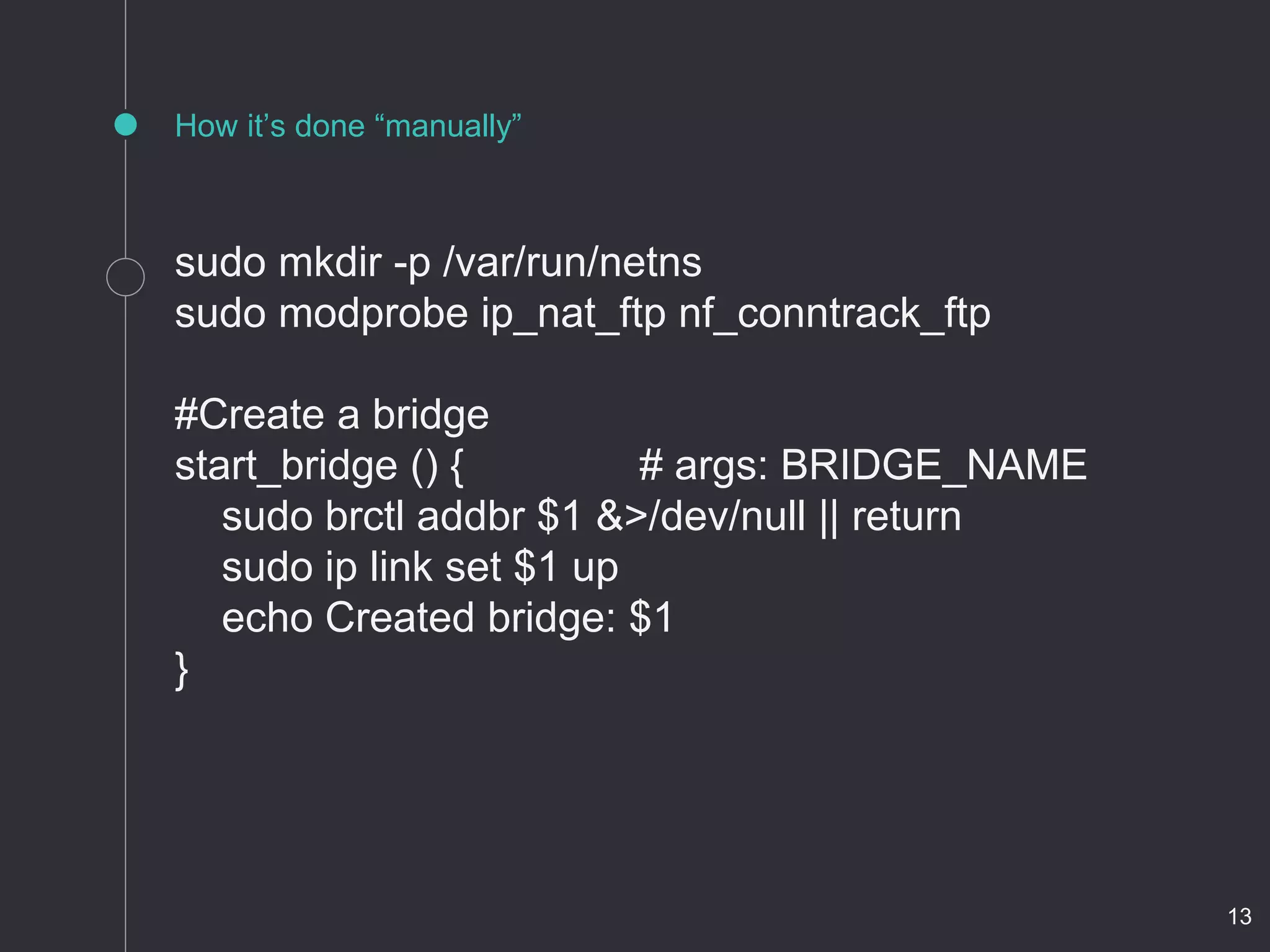

- Options such as port mapping, host networking, and connecting containers to each other allow external access, but ports and IP addresses then have to be managed by hand.
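Each of these options corresponds to a `docker run` flag. The sketch below maps a mode name to its flag. The function name `net_opts` and the mode names are hypothetical, and reading "connecting containers" as sharing another container's network namespace (`--net=container:NAME`) is an assumption; the talk may equally have meant container links.

```shell
#!/bin/sh
# Map a networking mode to the matching `docker run` option.
net_opts() {
    mode=$1
    arg=$2
    case "$mode" in
        publish)   echo "--publish=$arg" ;;        # arg is HOST_PORT:CONTAINER_PORT
        host)      echo "--net=host" ;;            # share the host's network stack
        container) echo "--net=container:$arg" ;;  # arg is an existing container's name
        *)         echo "unknown mode: $mode" >&2; return 1 ;;
    esac
}
```

A call such as `docker run -d $(net_opts publish 8080:80) IMAGE` would publish container port 80 on host port 8080. The port numbers are examples only, and someone still has to keep track of which host ports are taken.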

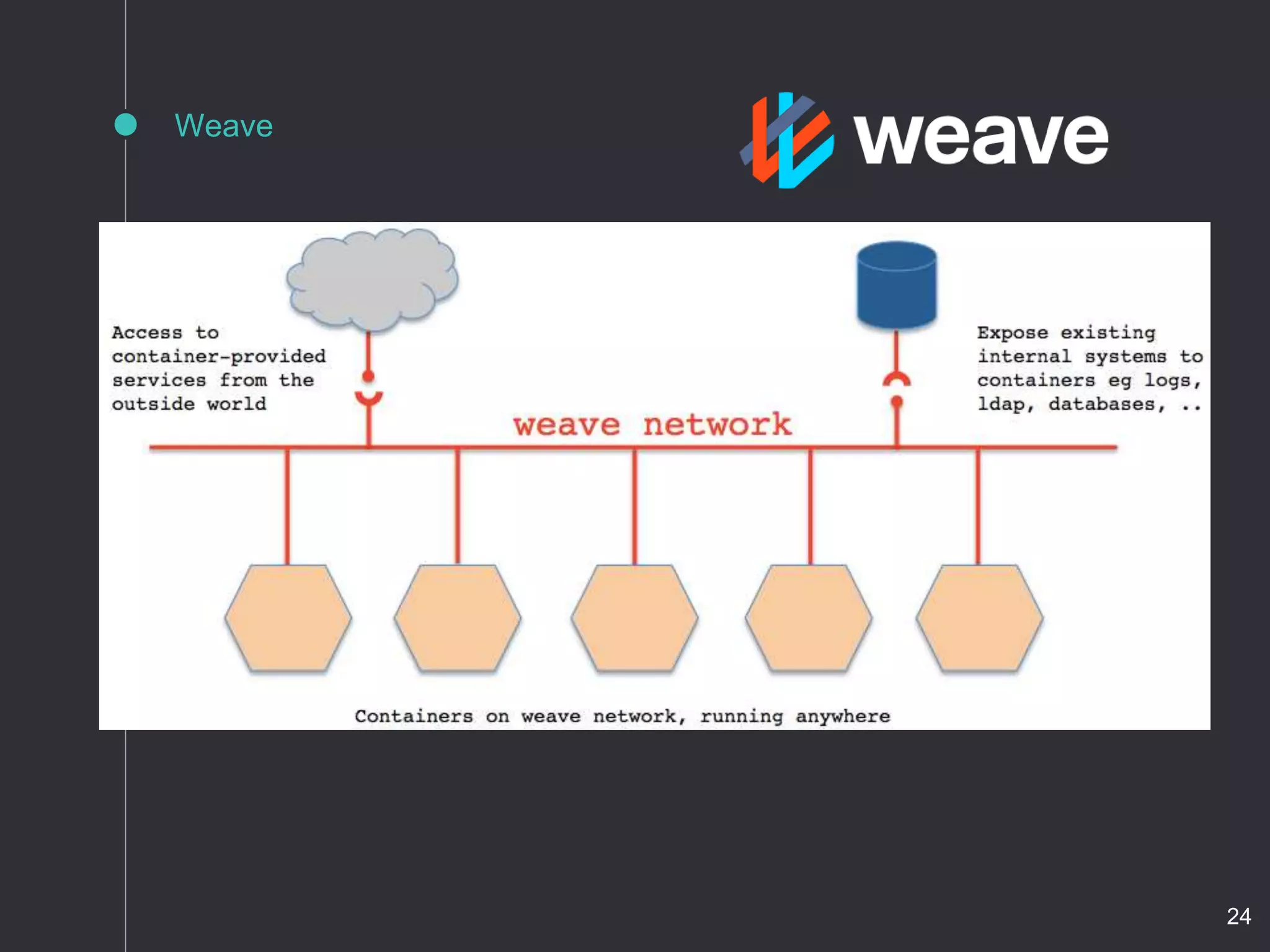

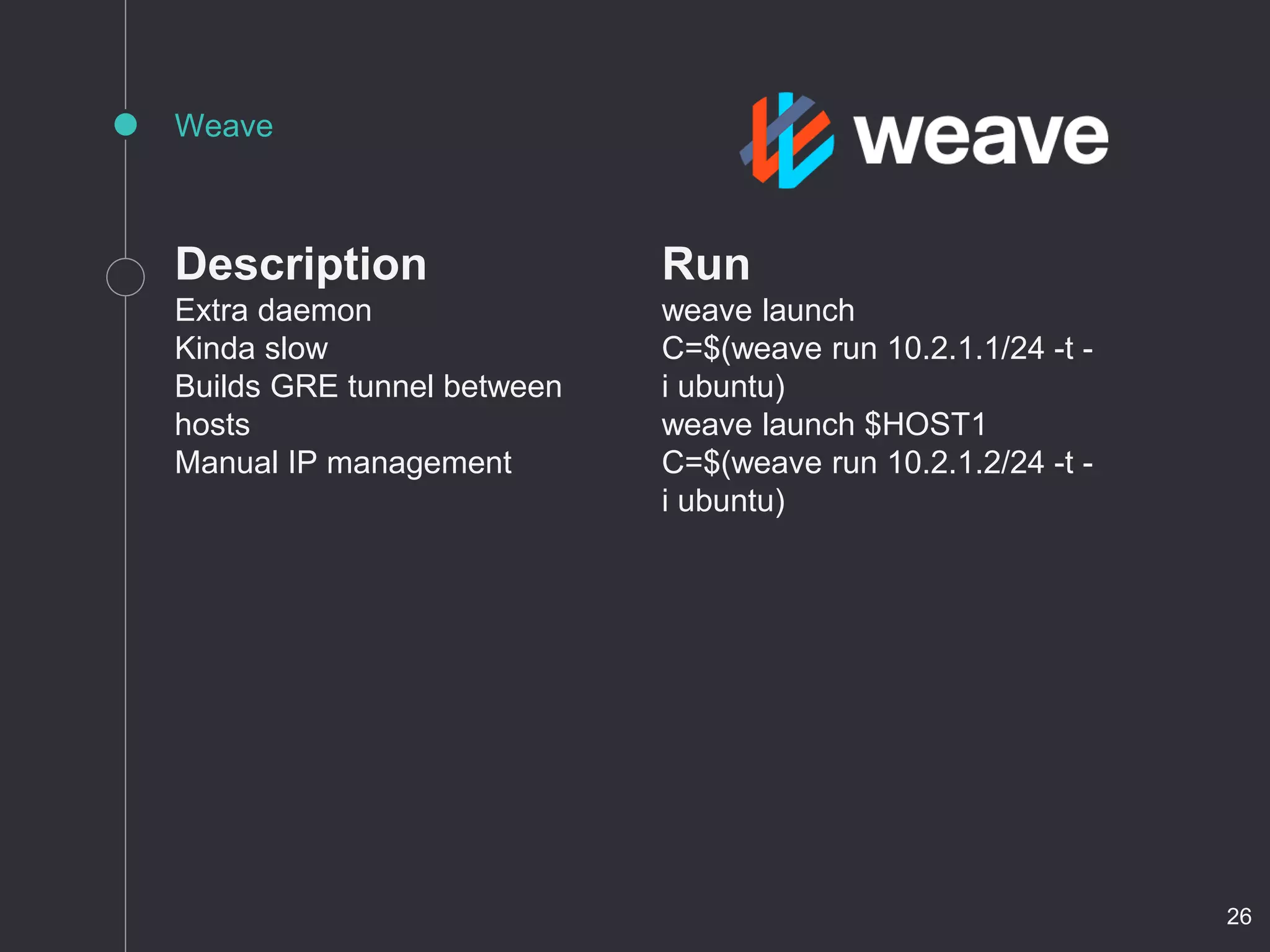

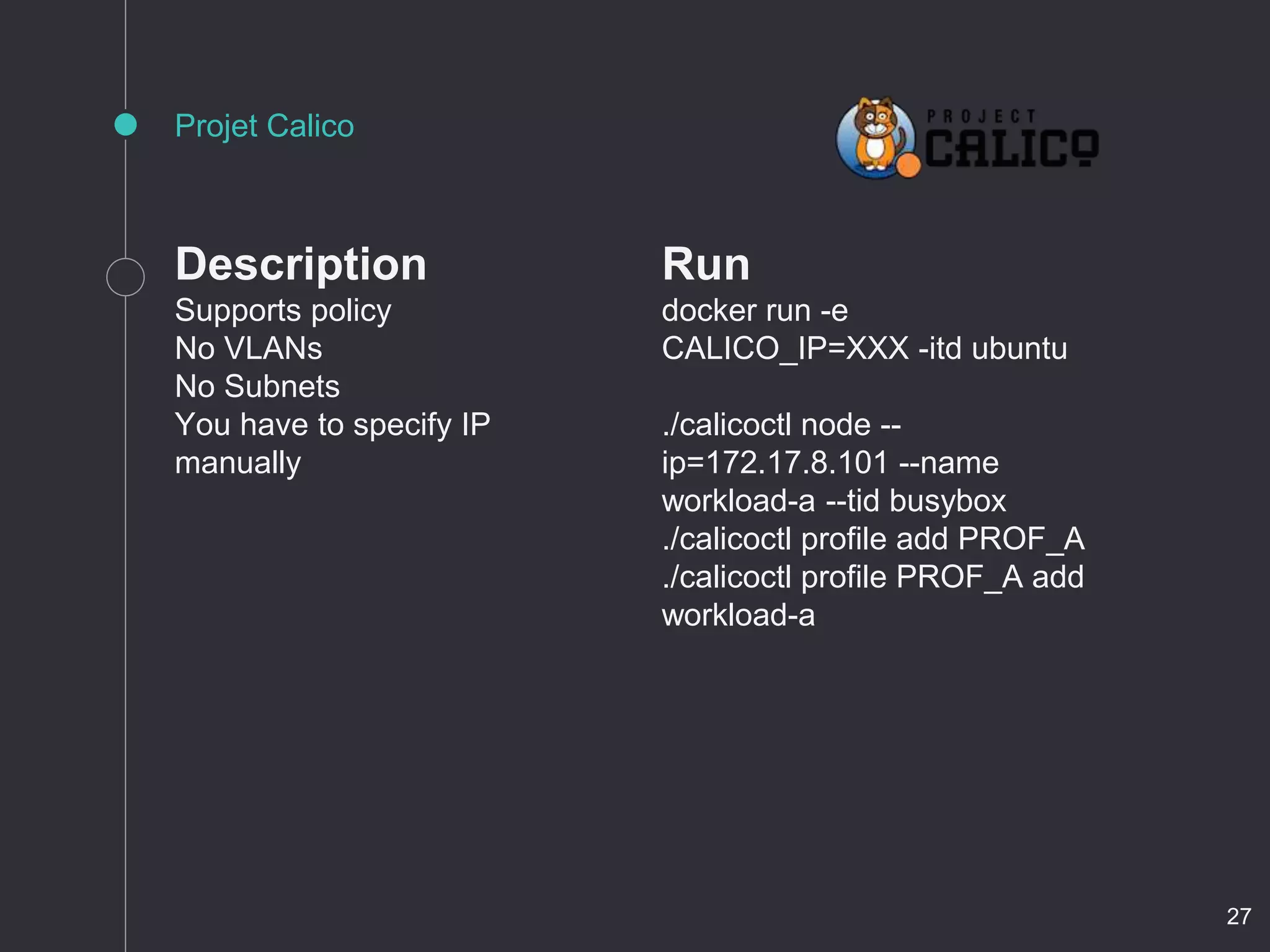

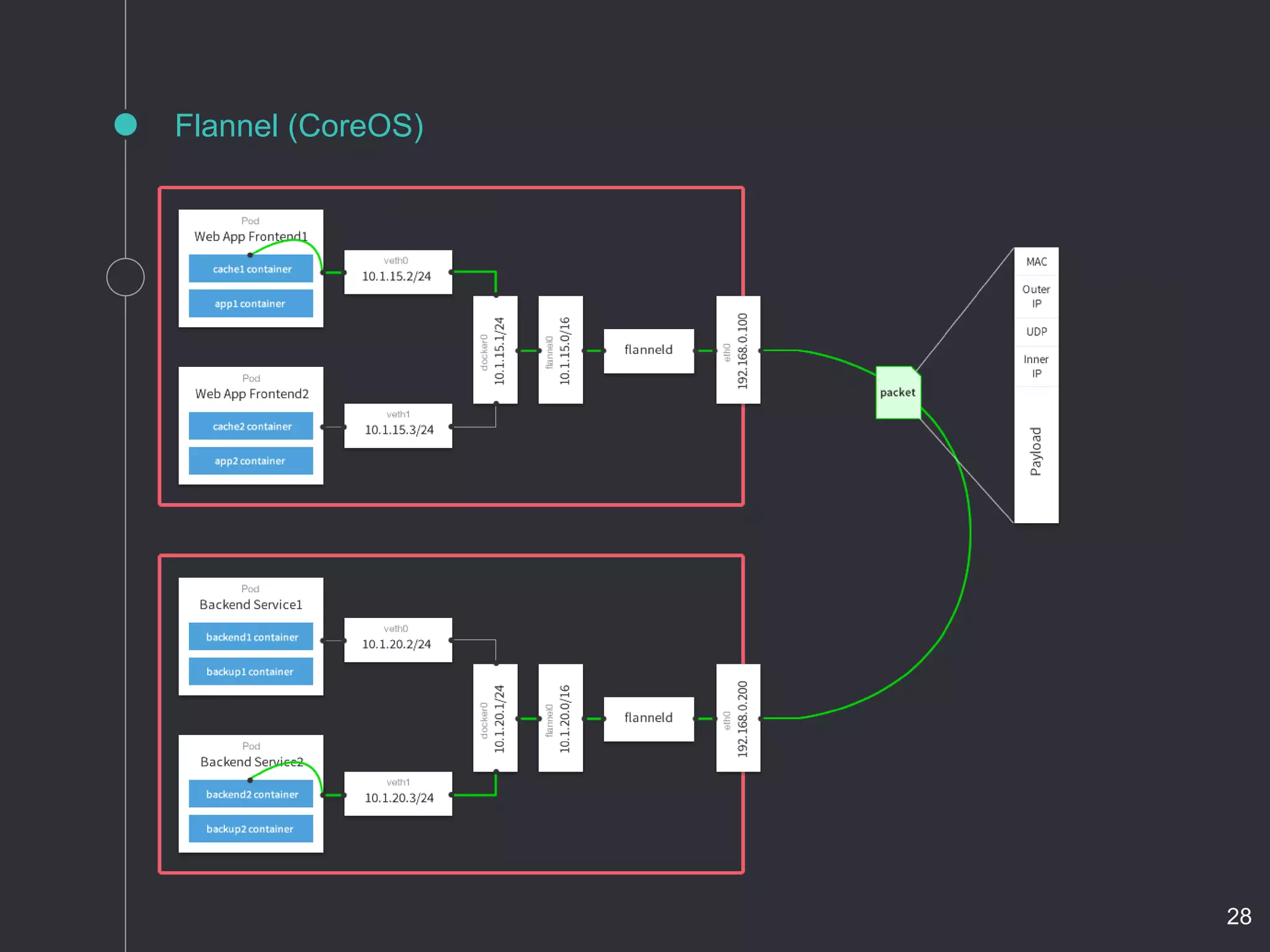

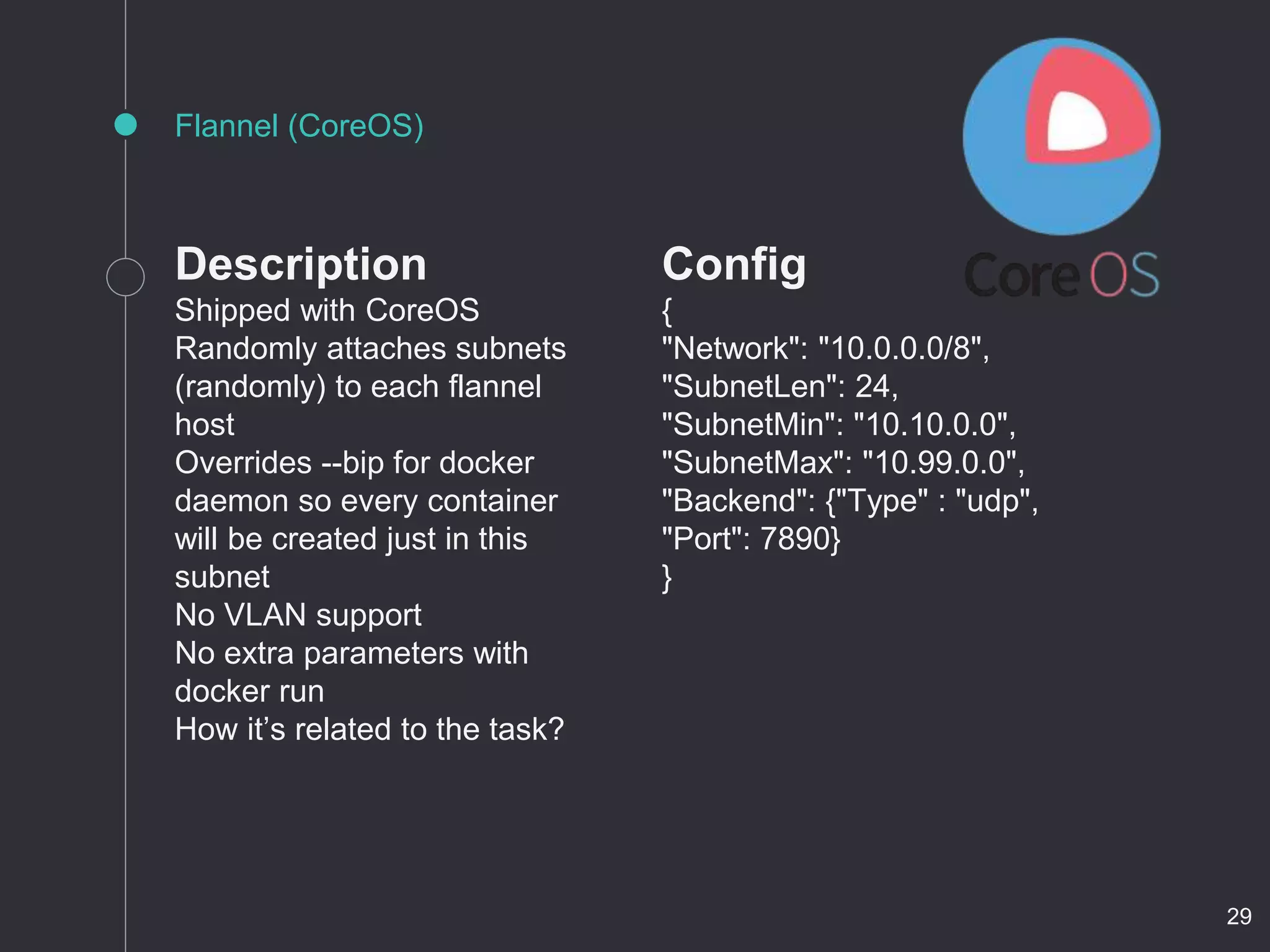

- Projects like Weave, Calico, Flannel, SocketPlane, and Pipework automate networking between containers and hosts, using techniques such as GRE tunnel overlays and Open vSwitch (OVS).
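To give a feel for what these projects automate, here is a rough sketch of joining the bridges of two hosts by hand with an Ethernet-over-GRE (gretap) link. It is not how any of the named projects is implemented. The function name `gre_overlay_cmds`, the interface name `gre-docker`, and the addresses are assumptions. The function only prints the `ip` commands so they can be reviewed before being run as root.

```shell
#!/bin/sh
# Print the iproute2 commands that create a gretap tunnel from this host
# to a peer and attach it to a local bridge.
gre_overlay_cmds() {
    local_ip=$1              # this host's address
    remote_ip=$2             # the peer host's address
    bridge=${3:-docker0}     # bridge the tunnel is attached to
    tunnel=${4:-gre-docker}  # hypothetical tunnel interface name
    echo "ip link add $tunnel type gretap local $local_ip remote $remote_ip"
    echo "ip link set $tunnel master $bridge"
    echo "ip link set $tunnel up"
}
```

Running `gre_overlay_cmds 192.0.2.10 192.0.2.20 | sudo sh` on one host, and the mirror image on the other, would put both bridges on one layer 2 segment. Container addresses on the two hosts would still have to be coordinated to avoid clashes, which is one of the chores the projects above take over.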

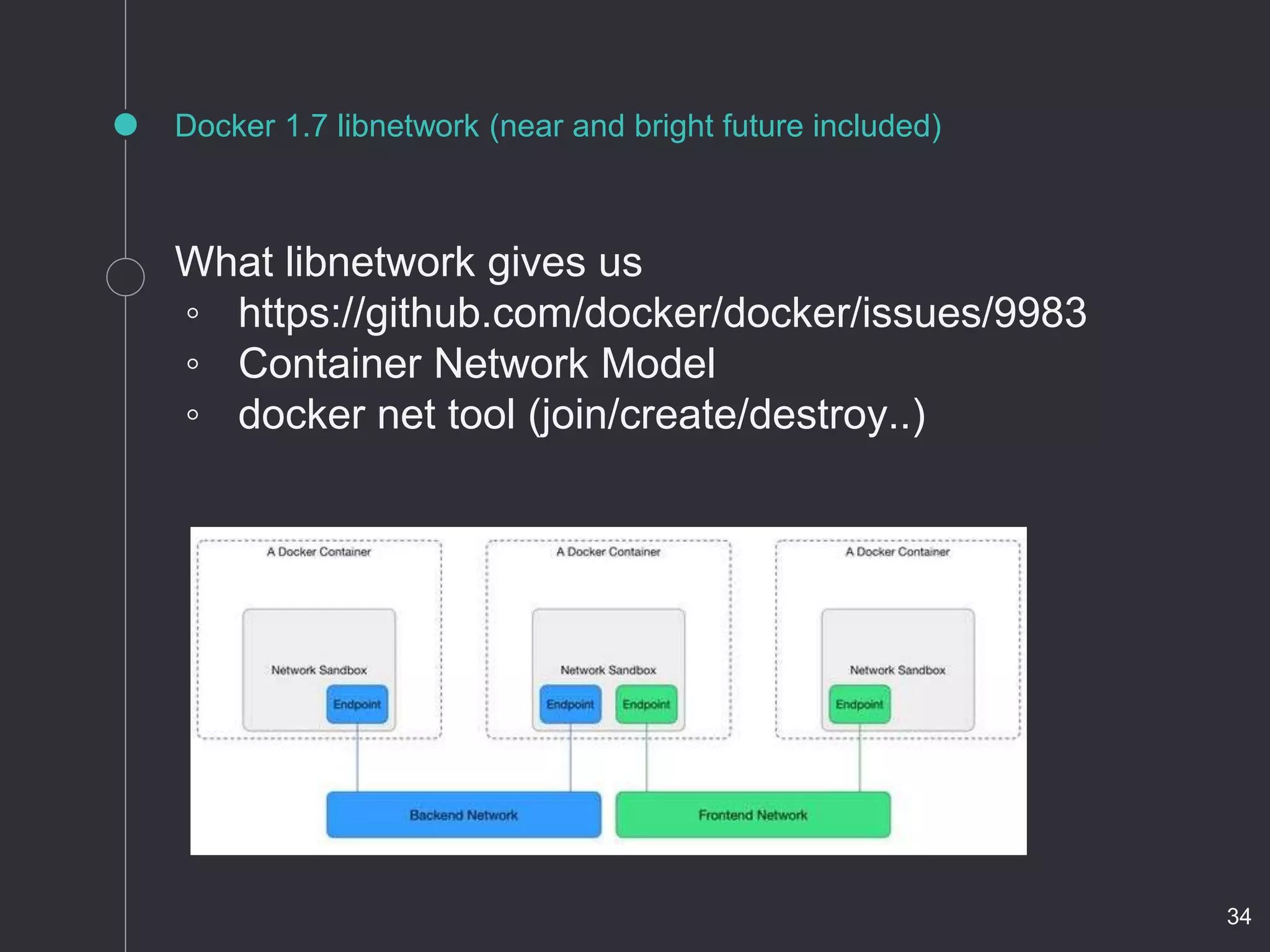

- Docker 1.7 includes libnetwork, a new library for container networking that provides a common network model and tools to manage networks.
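As an illustration of the network management this model enables, the sketch below prints `docker network` commands in the form they took in later Docker releases. Whether they are available in a stock 1.7 build is an assumption and should be checked against the installed version. The function name `network_cmds` and all argument values are hypothetical.

```shell
#!/bin/sh
# Print the commands that create a user-defined bridge network, start a
# container attached to it, and show the network's details.
network_cmds() {
    network=$1
    container=$2
    image=$3
    echo "docker network create -d bridge $network"
    echo "docker run -d --net=$network --name=$container $image"
    echo "docker network inspect $network"
}
```

For example, `network_cmds backend db IMAGE` prints three commands that can be reviewed and then pasted into a shell.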

![Slide 14: How it’s done “manually” #2](https://image.slidesharecdn.com/vx0mucltrmfsak6ngvag-signature-78f0ddc83ff2939e0ff24918d3acd6ac2bd494dc020f164cfa3c37a400171abe-poli-150625070818-lva1-app6891/75/Docker-SDN-software-defined-networking-JUG-14-2048.jpg)

    start_container () {
        hostname=$1
        image=$2
        port=$3
        # Container name is the host part of the FQDN
        container=${hostname%%.*}

        pid=$(docker inspect -f '{{.State.Pid}}' "$container" 2>/dev/null)
        if [ "$?" = "1" ]
        then
            # Container does not exist yet: create and start it
            if [ -n "$port" ]
            then netopts="--publish=$port:22"
            else netopts="--net=none"
            fi
            docker run --name="$container" --hostname="$hostname" \
                --dns=10.1.1.1 --dns-search=example.com "$netopts" \
                -d "$image"
        elif [ "$pid" = "0" ]
        then
            # Container exists but is stopped
            docker start "$container" >/dev/null
        else
            # Container is already running
            return
        fi

        # Expose the container's network namespace to `ip netns`
        pid=$(docker inspect -f '{{.State.Pid}}' "$container")
        sudo rm -f "/var/run/netns/$container"
        sudo ln -s "/proc/$pid/ns/net" "/var/run/netns/$container"
        echo "Container started: $container"
    }
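The symlink under `/var/run/netns` is what makes the container's namespace addressable by name with `ip netns`. As a hedged sketch of the usual next step, the function below prints the commands that would connect such a container to a host bridge through a veth pair. The function name `attach_cmds`, the interface names, and the address are assumptions, not taken from the slides. Renaming the interface to `eth0` presumes the container was started with `--net=none`, so no `eth0` exists yet.

```shell
#!/bin/sh
# Print the commands that connect a container's network namespace to a
# host bridge through a veth pair and assign the container an address.
attach_cmds() {
    container=$1             # name of the symlink in /var/run/netns
    bridge=$2                # existing host bridge
    addr=$3                  # address in CIDR notation
    host_if=${4:-veth-host}  # hypothetical host-side interface name
    cont_if=${5:-veth-cont}  # hypothetical container-side interface name
    echo "ip link add $host_if type veth peer name $cont_if"
    echo "ip link set $host_if master $bridge"
    echo "ip link set $host_if up"
    echo "ip link set $cont_if netns $container"
    echo "ip netns exec $container ip link set $cont_if name eth0"
    echo "ip netns exec $container ip addr add $addr dev eth0"
    echo "ip netns exec $container ip link set eth0 up"
}
```

Piping the output to `sudo sh`, for instance `attach_cmds web br800 10.1.1.5/24 | sudo sh`, would apply the commands. Printing them first keeps the sketch safe to try.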

![Slide 19: CoreOS (cloud-init)](https://image.slidesharecdn.com/vx0mucltrmfsak6ngvag-signature-78f0ddc83ff2939e0ff24918d3acd6ac2bd494dc020f164cfa3c37a400171abe-poli-150625070818-lva1-app6891/75/Docker-SDN-software-defined-networking-JUG-19-2048.jpg)

    # bridge
    - name: 20-br800.netdev
      runtime: true
      content: |
        [NetDev]
        Name=br800
        Kind=bridge
    # vlan
    - name: 00-vlan800.netdev
      runtime: true
      content: |
        [NetDev]
        Name=vlan800
        Kind=vlan

        [VLAN]
        Id=800

![Slide 20: CoreOS (cloud-init) #2](https://image.slidesharecdn.com/vx0mucltrmfsak6ngvag-signature-78f0ddc83ff2939e0ff24918d3acd6ac2bd494dc020f164cfa3c37a400171abe-poli-150625070818-lva1-app6891/75/Docker-SDN-software-defined-networking-JUG-20-2048.jpg)

    # subinterface
    - name: 10-eth1.network
      runtime: true
      content: |
        [Match]
        Name=eth1

        [Network]
        DHCP=yes
        VLAN=vlan800
    # attach
    - name: 30-attach.network
      runtime: true
      content: |
        [Match]
        Name=vlan800

        [Network]
        Bridge=br800
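For hosts that do not use systemd-networkd, roughly the same topology (a VLAN 800 subinterface on eth1 attached to bridge br800) can be built with iproute2. This is a sketch under assumptions: the function name `vlan_bridge_cmds` is hypothetical, and the DHCP setting on eth1 from the unit files is not reproduced. The function only prints the commands.

```shell
#!/bin/sh
# Print the iproute2 commands that create a bridge, a VLAN subinterface
# on the parent NIC, and attach the subinterface to the bridge.
vlan_bridge_cmds() {
    parent=${1:-eth1}
    vlan_id=${2:-800}
    vlan_if="vlan$vlan_id"
    bridge="br$vlan_id"
    echo "ip link add name $bridge type bridge"
    echo "ip link add link $parent name $vlan_if type vlan id $vlan_id"
    echo "ip link set $vlan_if master $bridge"
    echo "ip link set $parent up"
    echo "ip link set $vlan_if up"
    echo "ip link set $bridge up"
}
```

Called without arguments, it uses the names from the slides (eth1, vlan800, br800). Unlike the cloud-init units, the result does not persist across reboots.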