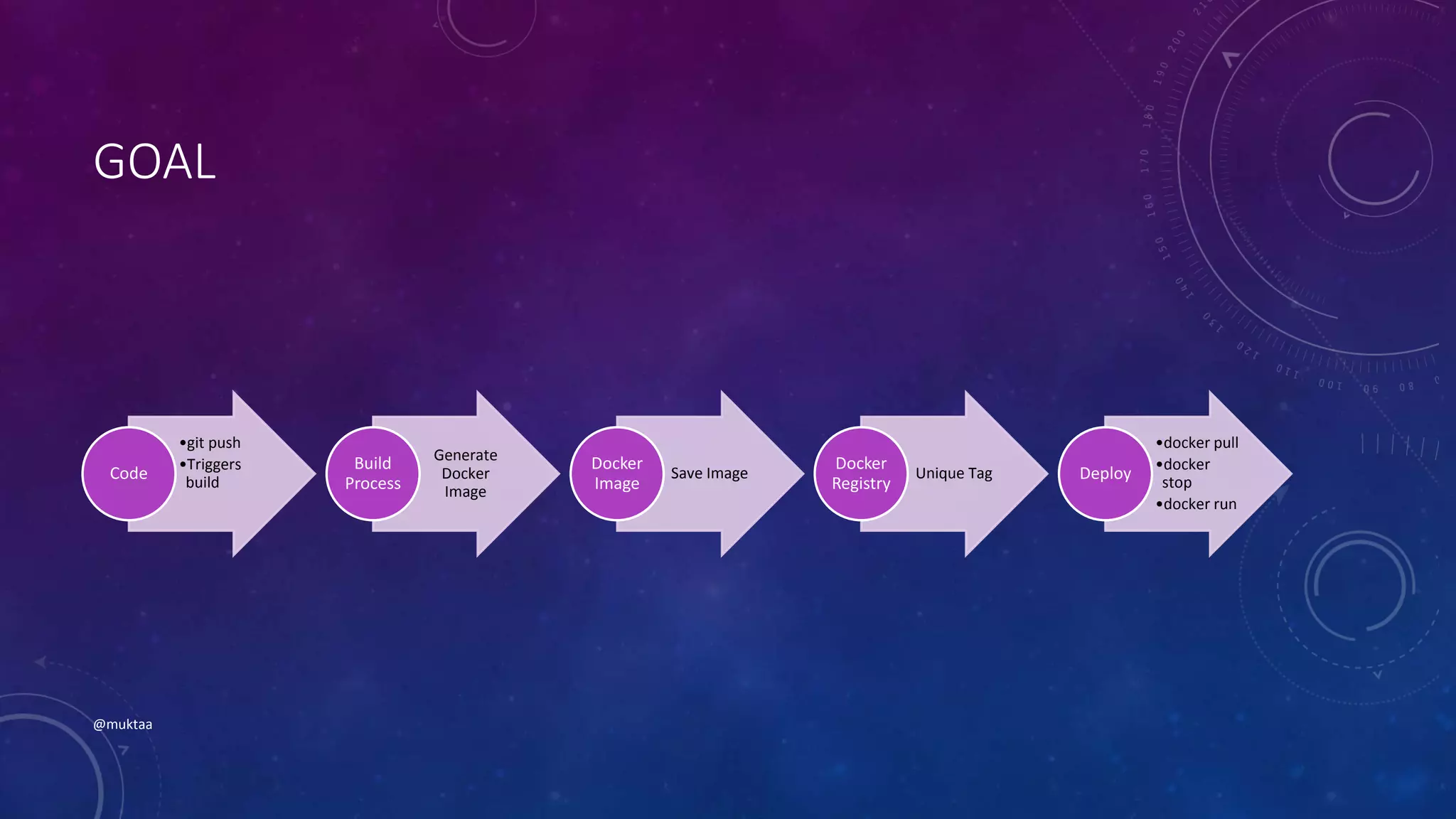

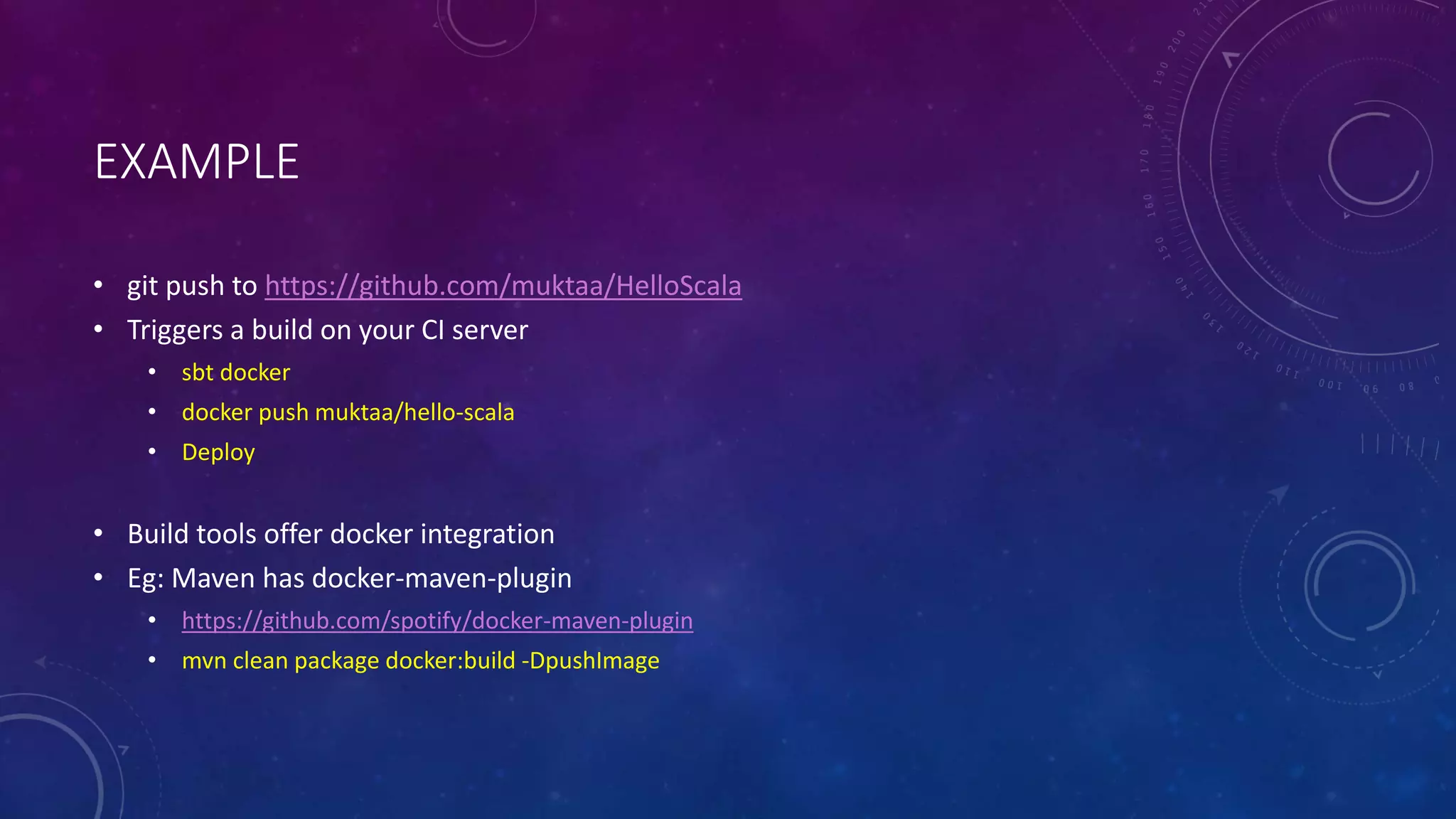

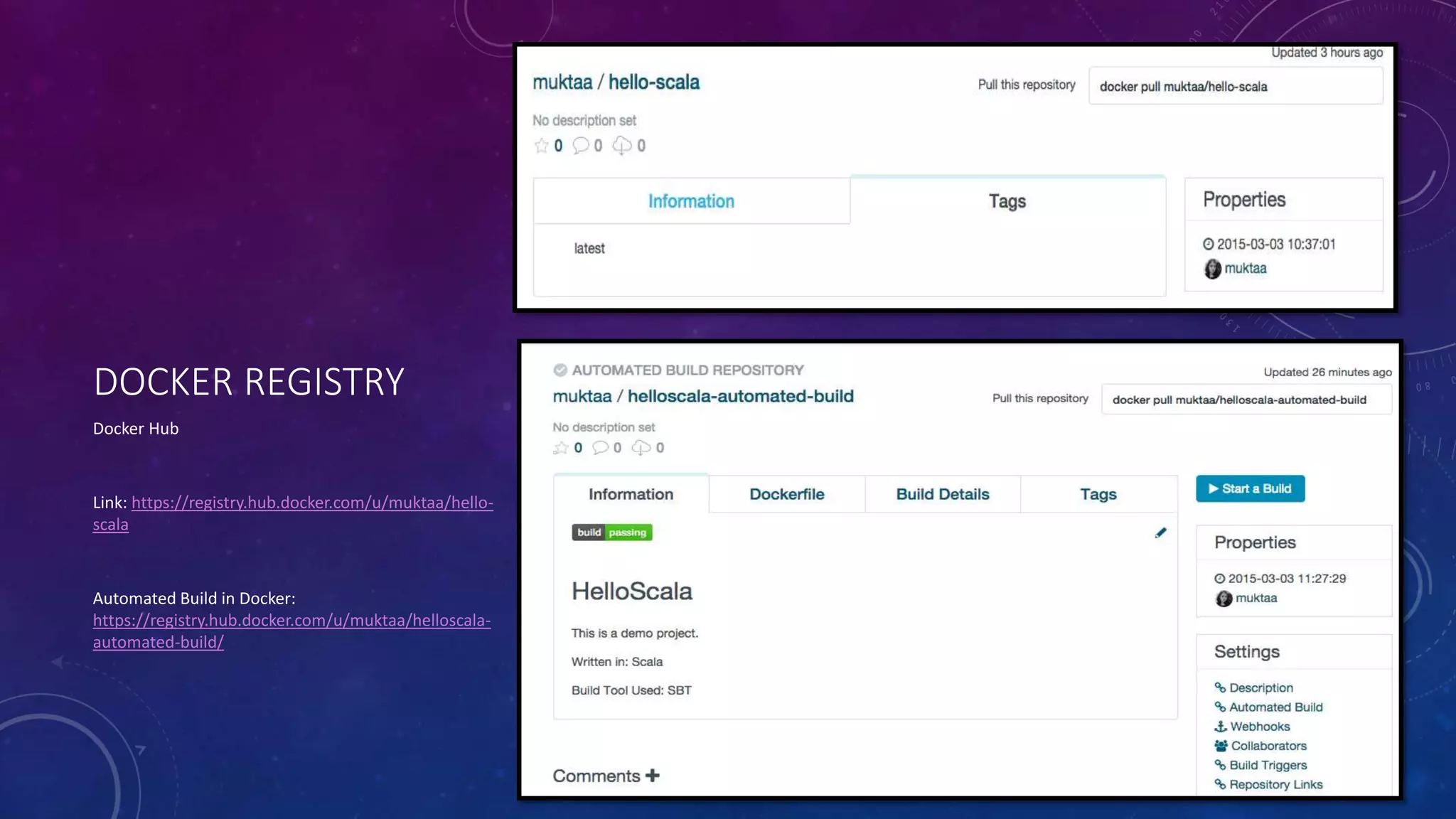

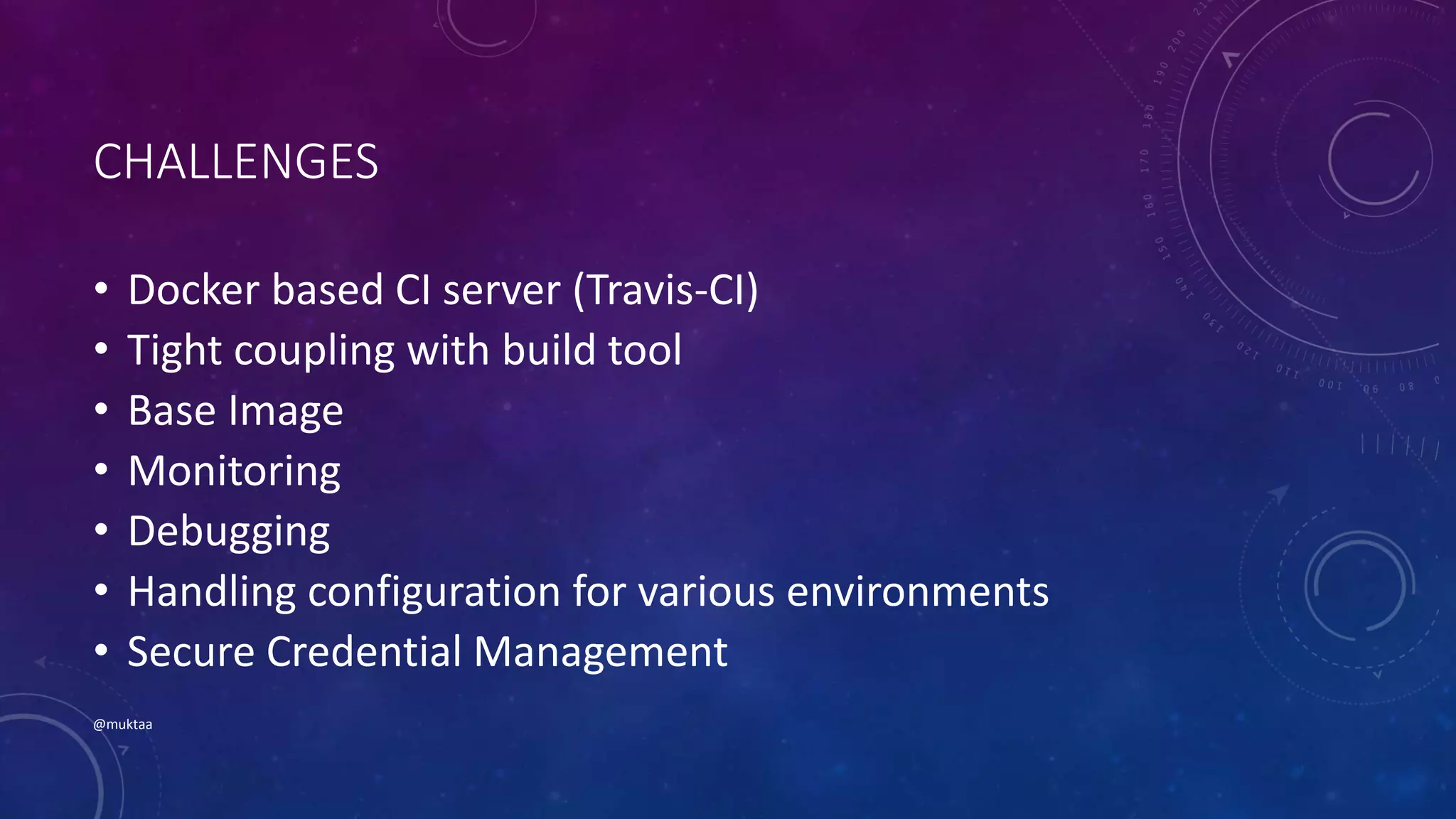

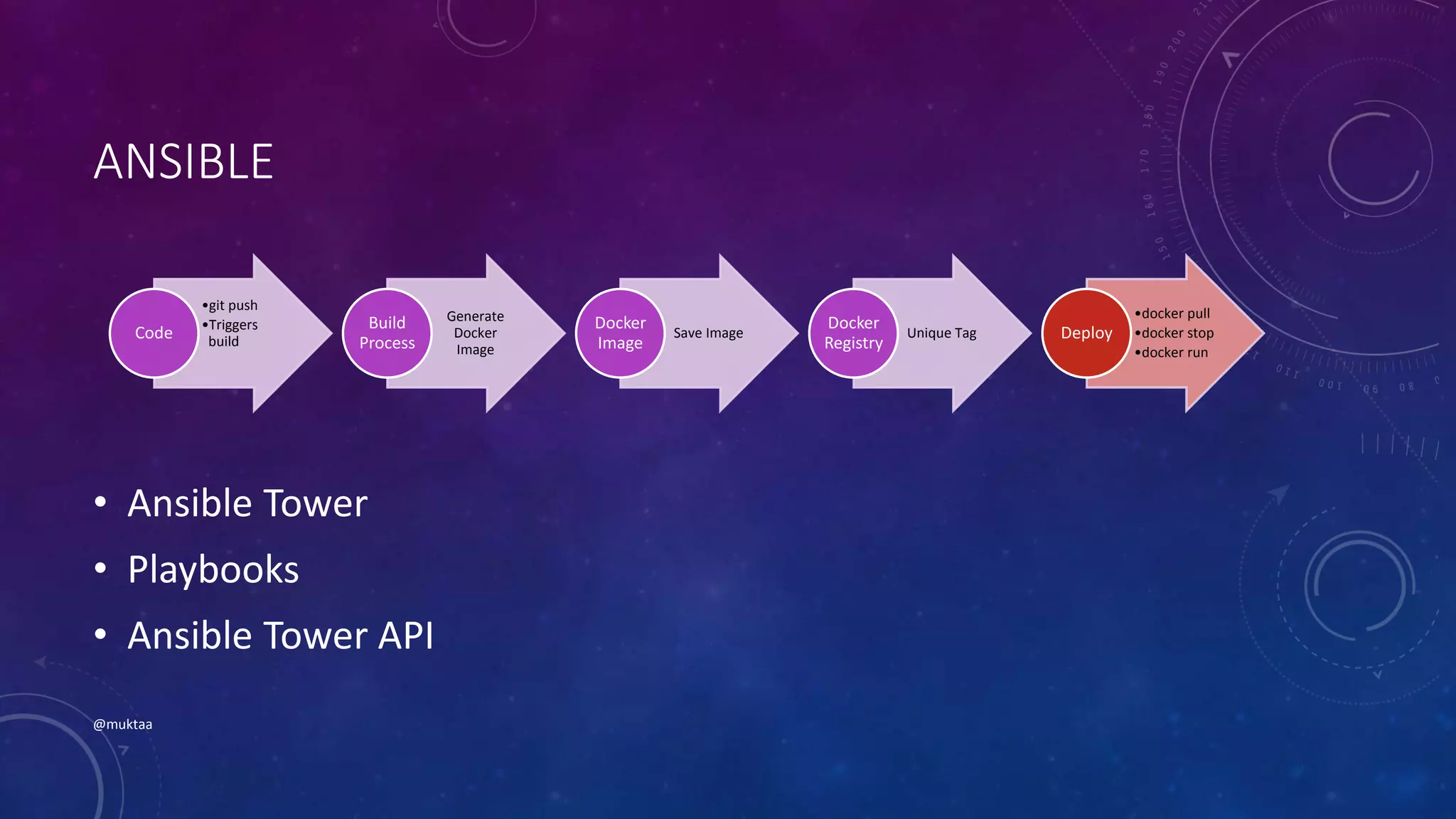

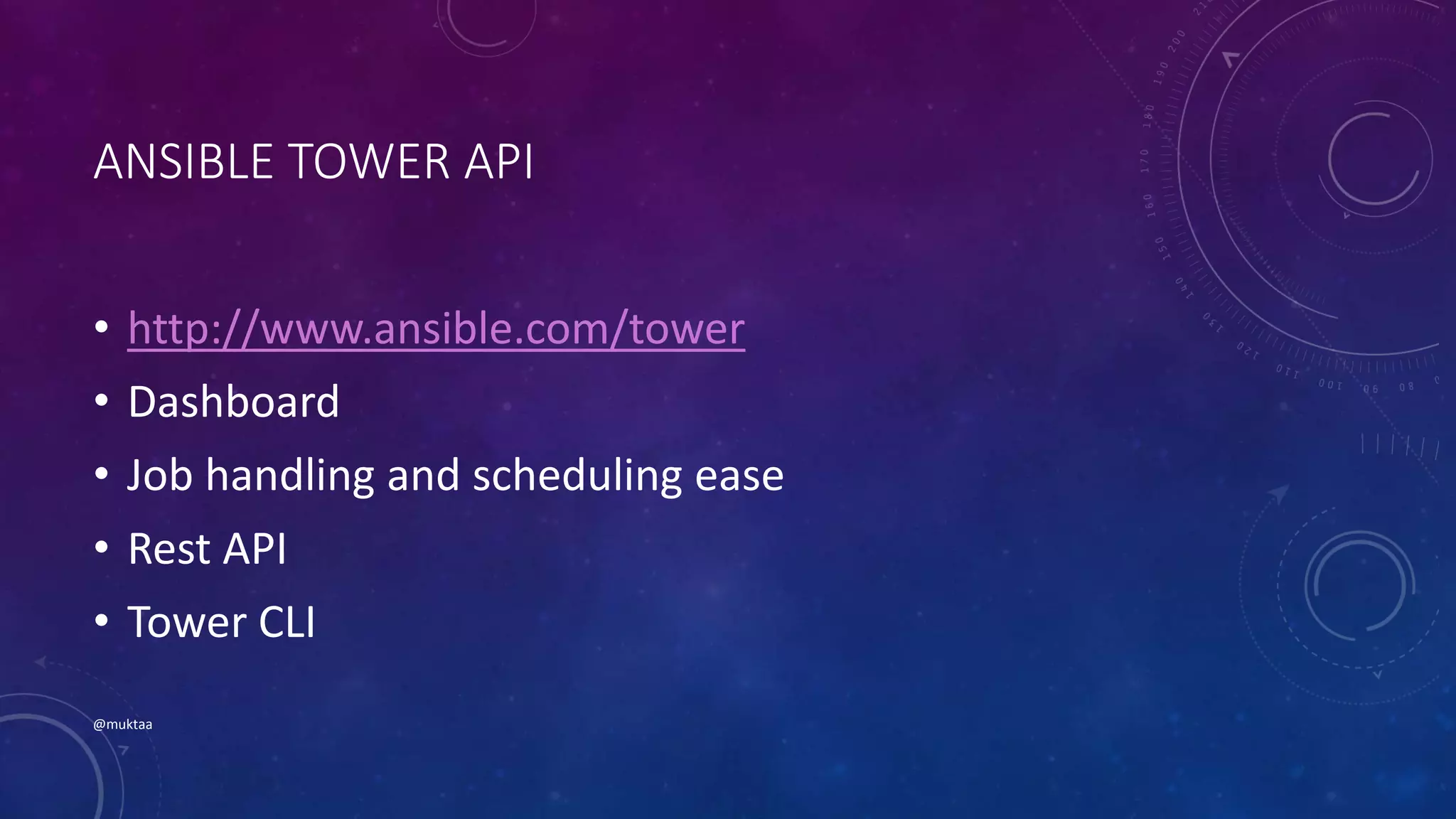

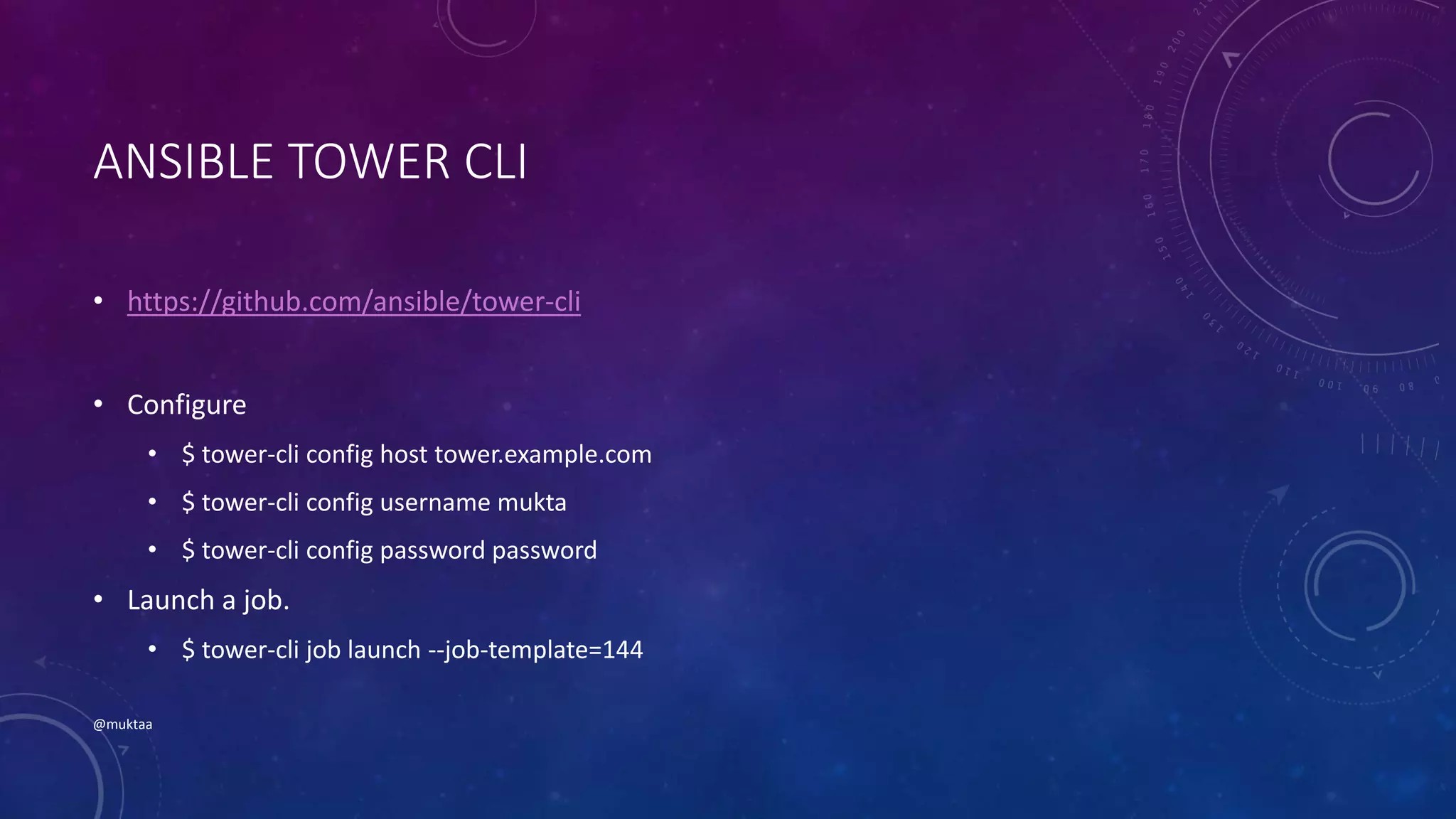

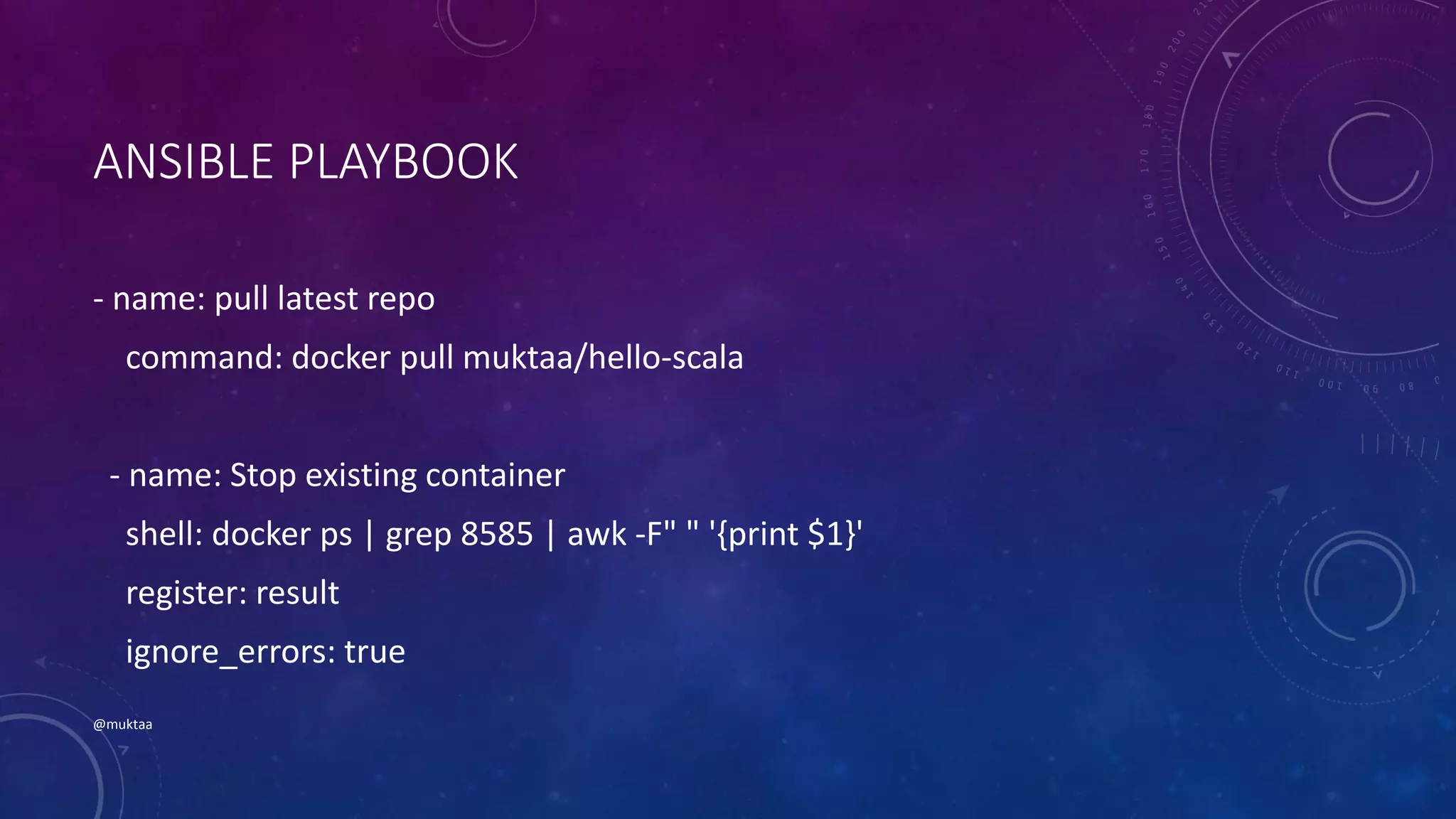

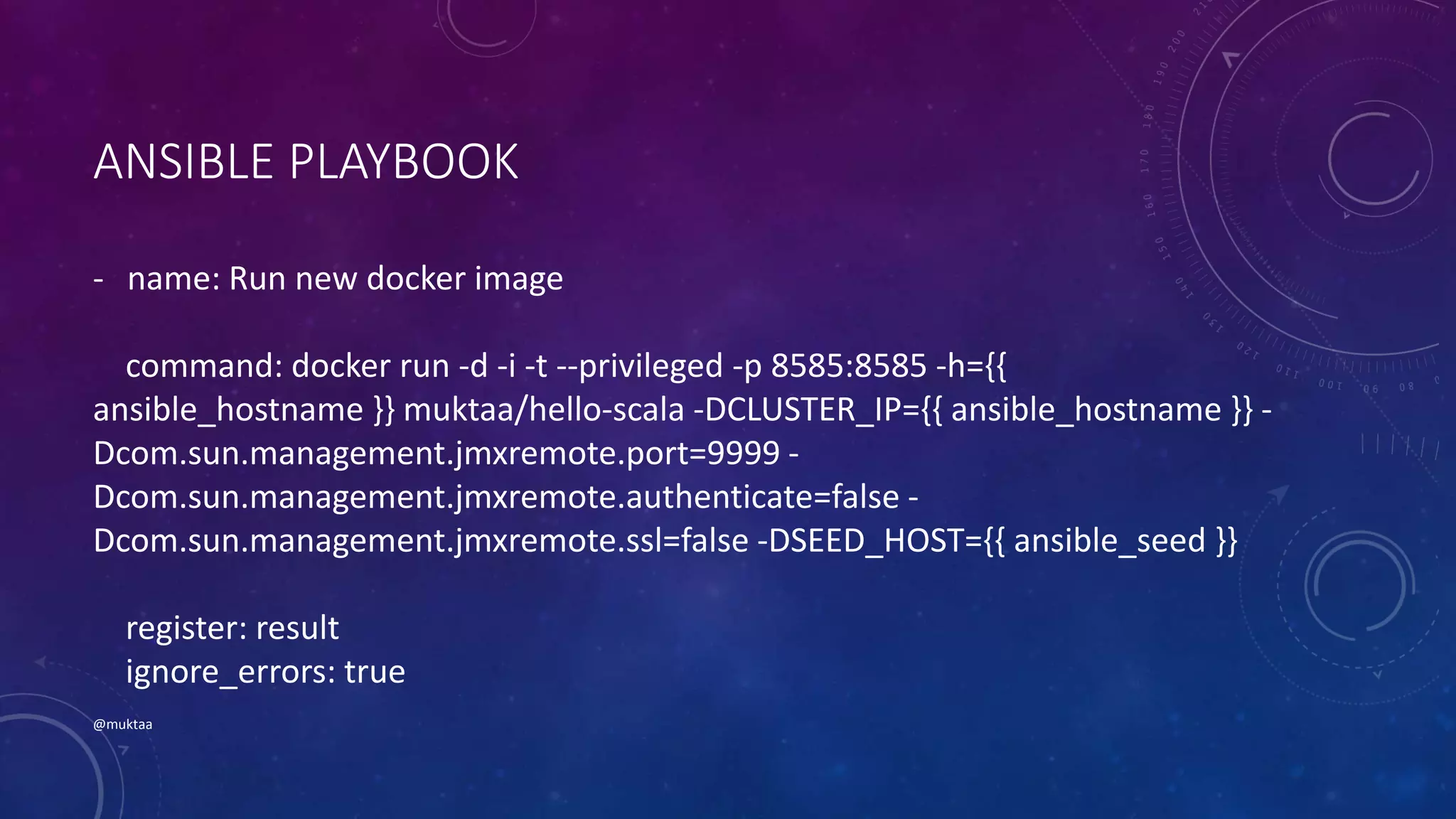

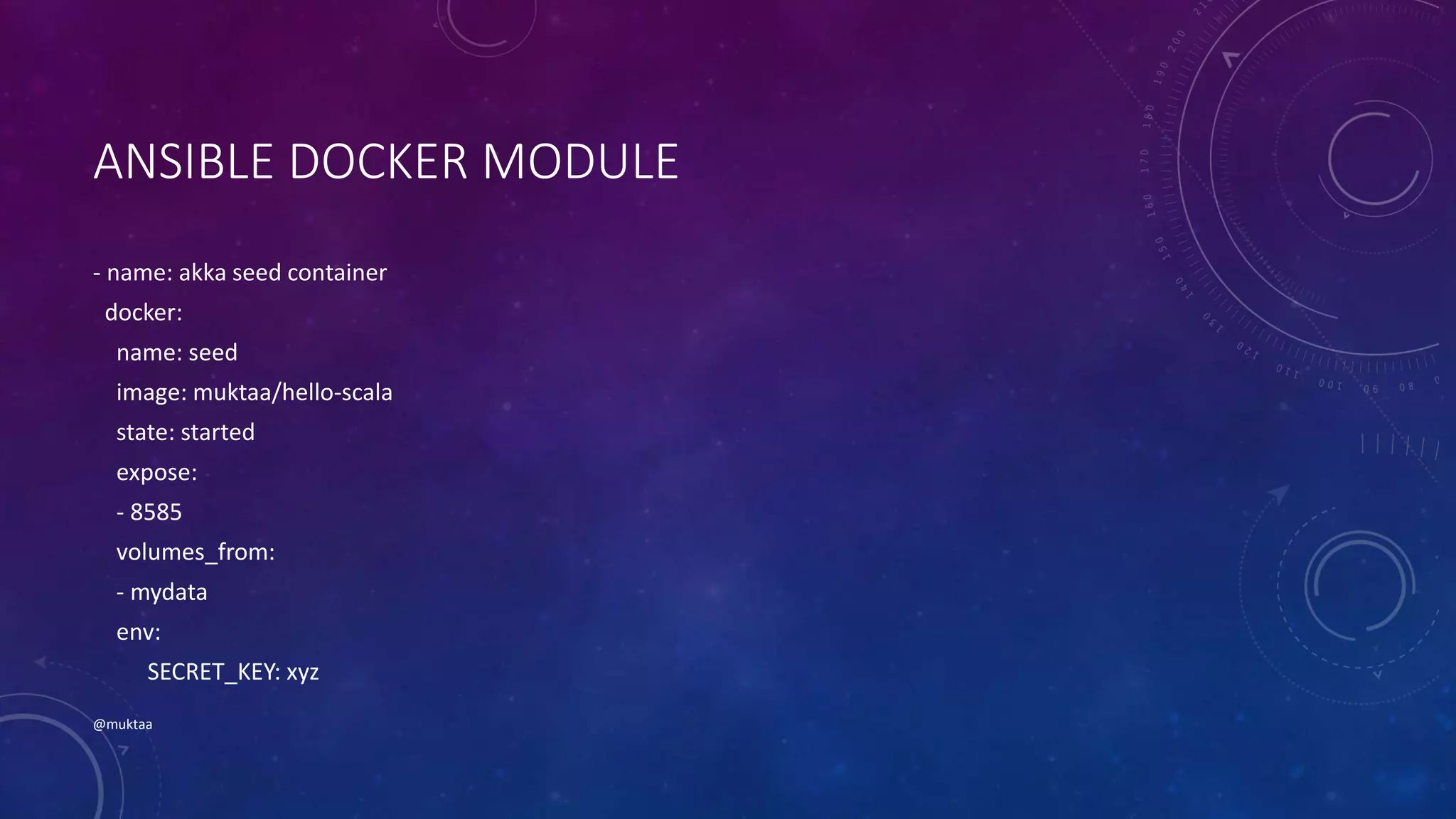

The document discusses using Docker together with configuration management tools such as Ansible and Chef. It shows how to build Docker images from code with build tools like SBT and Maven, and how Ansible playbooks and Chef recipes can automate pulling, running, and managing Docker containers. Configuration files can be generated from templates, and registry credentials can be kept in encrypted data bags. Together, these pieces form an automated, flexible deployment pipeline.

![~/github/HelloScala > sbt docker
[info] Loading project definition from /Users/muktaaphale/github/HelloScala/project
[info] Set current project to hello-scala (in build file:/Users/muktaaphale/github/HelloScala/)
[info] Creating docker image with name: 'muktaa/hello-scala'
:
[info] Sending build context to Docker daemon
[info] Step 0 : FROM dockerfile/java
[info] ---> 1126c85d8a06
[info] Step 1 : ADD /app/hello-scala_2.11-1.4-one-jar.jar /app/hello-scala_2.11-1.4-one-jar.jar
[info] ---> Using cache
[info] ---> 61871958f108
[info] Step 2 : ENTRYPOINT java -jar /app/hello-scala_2.11-1.4-one-jar.jar
[info] ---> Using cache
[info] ---> a8005b32ddc4
[info] Successfully built a8005b32ddc4
[info] Successfully built Docker image: muktaa/hello-scala
[success] Total time: 1 s, completed Mar 3, 2015 2:10:04 PM
~/github/HelloScala > docker images | grep hello-scala
muktaa/hello-scala latest a8005b32ddc4 12 hours ago 715 MB
~/github/HelloScala > docker run muktaa/hello-scala
Hello, world! #1
Hello, world! #2
Hello, world! #3](https://image.slidesharecdn.com/dockerandconfigurationmanagement-150427033117-conversion-gate02/75/Docker-and-configuration-management-5-2048.jpg)

![CREDENTIAL MANAGEMENT
# Load the shared secret, then decrypt the data bag item holding the registry credentials
secret = Chef::EncryptedDataBagItem.load_secret
docker_cred = Chef::EncryptedDataBagItem.load(
  node['docker']['creds']['databag'],
  node['docker']['user'],
  secret
)
# Log in to the registry with the decrypted credentials
docker_registry 'https://registry.hub.docker.com/u/muktaa/hello-scala/' do
  email docker_cred['email']
  username docker_cred['username']
  password docker_cred['password']
end](https://image.slidesharecdn.com/dockerandconfigurationmanagement-150427033117-conversion-gate02/75/Docker-and-configuration-management-19-2048.jpg)

![DOCKER_IMAGE
# Build a docker image using the docker_image resource
docker_image node['docker']['image'] do
  tag node['docker']['image']['tag']
  source '/var/docker'
  action :build
end
# Push the image to the docker registry
docker_image node['docker']['image'] do
  action :push
end
# Delete the image from the machine
docker_image node['docker']['image'] do
  action :remove
end](https://image.slidesharecdn.com/dockerandconfigurationmanagement-150427033117-conversion-gate02/75/Docker-and-configuration-management-20-2048.jpg)
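For readers less familiar with the `docker_image` resource, the three actions on this slide correspond roughly to `docker build`, `docker push`, and `docker rmi`. The sketch below only assembles those command lines in plain Ruby and does not execute them. The helper name `docker_commands` and the sample tag `latest` are assumptions made for illustration, not part of the cookbook.

```ruby
# Hypothetical helper (not part of the Chef docker cookbook): maps the
# build/push/remove actions onto docker CLI argument lists.
# Nothing is executed; the method only returns the argument arrays.
def docker_commands(image:, tag:, source:)
  ref = "#{image}:#{tag}"
  {
    build:  ['docker', 'build', '-t', ref, source],
    push:   ['docker', 'push', ref],
    remove: ['docker', 'rmi', ref]
  }
end

# Sample values: the image name and source directory come from the slides,
# the tag is an assumption.
commands = docker_commands(image: 'muktaa/hello-scala', tag: 'latest', source: '/var/docker')
commands.each { |action, argv| puts "#{action}: #{argv.join(' ')}" }
```

Keeping each command as an argument array rather than a single string avoids shell quoting problems if the arrays are later passed to `system`.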

![GENERATE DOCKERFILE
# Generate a Dockerfile from a template.
template "#{node['docker']['directory']}/Dockerfile" do
  source 'dockerfile.erb'
  variables image: node['docker']['base']['image']['name'],
            maintainer: docker_cred['maintainer'],
            email: docker_cred['email'],
            build_cmd: node['docker']['build']['commands'],
            entry_point: node['docker']['build']['entry_point']
  action :create
end](https://image.slidesharecdn.com/dockerandconfigurationmanagement-150427033117-conversion-gate02/75/Docker-and-configuration-management-22-2048.jpg)
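The slides do not show `dockerfile.erb` itself. As a minimal sketch of what such a template might contain, the plain-Ruby example below renders a Dockerfile with the standard library's ERB, using the same variable names the `template` resource passes in (`image`, `maintainer`, `email`, `build_cmd`, `entry_point`). The template body, the maintainer details, and the build command are assumptions; the base image and entry point are taken from the earlier `sbt docker` output.

```ruby
require 'erb'

# Assumed contents of dockerfile.erb; the real template is not in the slides.
DOCKERFILE_TEMPLATE = <<~'ERB'
  FROM <%= image %>
  LABEL maintainer="<%= maintainer %> (<%= email %>)"
  <% build_cmd.each do |cmd| -%>
  RUN <%= cmd %>
  <% end -%>
  ENTRYPOINT <%= entry_point %>
ERB

# Renders the template with the given variables.
# trim_mode '-' drops the newline after tags that end in -%>.
def render_dockerfile(vars)
  ERB.new(DOCKERFILE_TEMPLATE, trim_mode: '-').result_with_hash(vars)
end

puts render_dockerfile(
  image: 'dockerfile/java',
  maintainer: 'Example Maintainer',    # placeholder value
  email: 'maintainer@example.com',     # placeholder value
  build_cmd: ['apt-get update'],       # placeholder value
  entry_point: 'java -jar /app/hello-scala_2.11-1.4-one-jar.jar'
)
```

In a real cookbook Chef performs the rendering, so only the template file and the `variables` hash would be needed.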

![KNIFE CONTAINER DOCKER INIT
• gem install knife-container
• knife container docker init NAMESPACE/IMAGE_NAME [options]
  • -f base docker image (default is ubuntu 12.04); chef-container should already be installed on it
  • -r run list
  • -z chef-client local mode
  • -b use Berkshelf](https://image.slidesharecdn.com/dockerandconfigurationmanagement-150427033117-conversion-gate02/75/Docker-and-configuration-management-28-2048.jpg)
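To make the option list concrete, here is a minimal sketch that assembles a `knife container docker init` command line from the four flags on this slide. It only builds and prints the string. The helper name `knife_init_command`, its keyword names, and the cookbook name `hello_scala` are hypothetical; the image name and base image appear in the example output on the following slide.

```ruby
require 'shellwords'

# Hypothetical helper: builds a `knife container docker init` command line
# from the options listed on the slide (-f, -r, -z, -b).
# It returns a shell-escaped string and does not run anything.
def knife_init_command(image_name, base_image: nil, run_list: [], local_mode: false, berkshelf: false)
  argv = ['knife', 'container', 'docker', 'init', image_name]
  argv += ['-f', base_image] if base_image
  argv += ['-r', run_list.join(',')] unless run_list.empty?
  argv << '-z' if local_mode
  argv << '-b' if berkshelf
  Shellwords.join(argv)
end

puts knife_init_command(
  'muktaa/hello-scala-cc',
  base_image: 'chef/ubuntu-12.04',
  run_list: ['recipe[hello_scala]'],   # hypothetical cookbook name
  local_mode: true
)
```

`Shellwords.join` escapes the square brackets in the run list so the shell does not treat them as a glob pattern.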

![EXAMPLE
$ sudo knife container docker init muktaa/hello-scala-cc
Compiling Cookbooks...
Recipe: knife_container::docker_init
  * directory[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc] action create
  * template[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/Dockerfile] action create
    - update content in file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/Dockerfile from none to 943017
  * template[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/.dockerignore] action create
    - create new file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/.dockerignore
    - update content in file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/.dockerignore from none to e3b0c4
  * directory[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef] action create
    - create new directory /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef
  * template[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/client.rb] action create
    - create new file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/client.rb
    - update content in file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/client.rb from none to 7de61f
  * file[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/first-boot.json] action create
    - create new file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/first-boot.json
    - update content in file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/first-boot.json from none to 5269ef
  * template[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/.node_name] action create
    - create new file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/.node_name
    - update content in file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/.node_name from none to 4764d2
  * template[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/Berksfile] action create (skipped due to only_if)
  * directory[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/secure] action create
    - create new directory /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/secure
  * file[/home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/secure/validation.pem] action create
    - create new file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/secure/validation.pem
    - update content in file /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc/chef/secure/validation.pem from none to ec1f3e
    - change mode from '' to '0600'
Downloading base image: chef/ubuntu-12.04:latest. This process may take awhile...
Tagging base image chef/ubuntu-12.04 as muktaa/hello-scala-cc
Context Created: /home/ubuntu/chef-repo/dockerfiles/muktaa/hello-scala-cc](https://image.slidesharecdn.com/dockerandconfigurationmanagement-150427033117-conversion-gate02/75/Docker-and-configuration-management-29-2048.jpg)

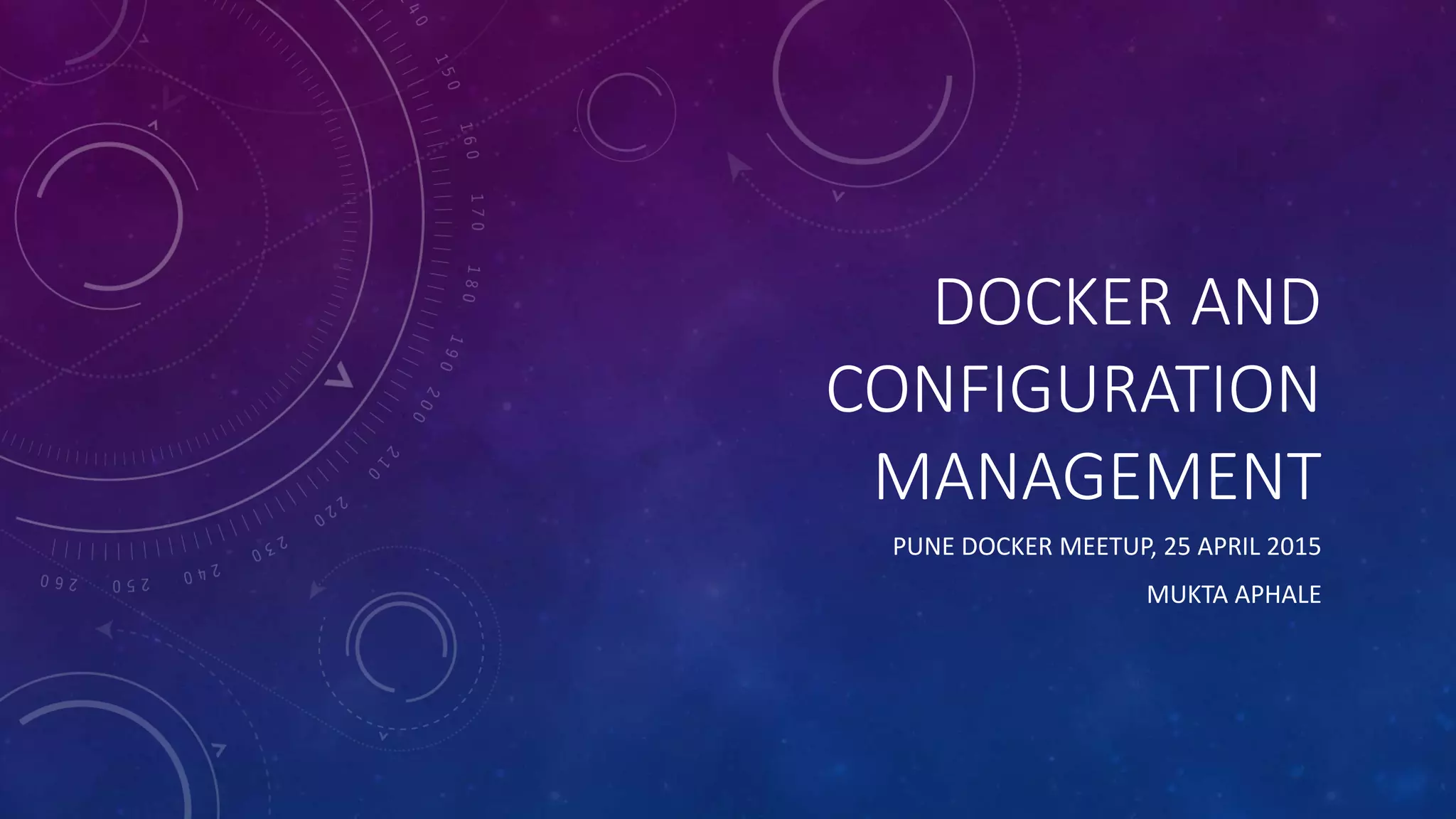

![EXAMPLE
$ sudo knife container docker build muktaa/hello-scala-cc
Sending build context to Docker daemon 9.728 kB
Sending build context to Docker daemon
Step 0 : FROM muktaa/hello-scala-cc
 ---> 50d3c5c9e133
Step 1 : ADD chef/ /etc/chef/
 ---> 4933cc9e13e0
Removing intermediate container da0a08413a91
Step 2 : RUN chef-init --bootstrap
 ---> Running in add27db609cc
[2015-03-31T21:44:44+00:00] INFO: Starting Supervisor...
[2015-03-31T21:44:44+00:00] INFO: Supervisor pid: 9
[2015-03-31T21:44:49+00:00] INFO: Starting chef-client run...
[2015-03-31T21:44:50+00:00] INFO: Forking chef instance to converge...
[2015-03-31T21:44:50+00:00] INFO: *** Chef 11.16.2 ***
[2015-03-31T21:44:50+00:00] INFO: Chef-client pid: 16
[2015-03-31T21:44:53+00:00] INFO: Client key /etc/chef/secure/client.pem is not present - registering
[2015-03-31T21:44:53+00:00] INFO: HTTP Request Returned 404 Object Not Found: error
[2015-03-31T21:44:54+00:00] INFO: Setting the run_list to [] from CLI options
[2015-03-31T21:44:54+00:00] INFO: Run List is []
[2015-03-31T21:44:54+00:00] INFO: Run List expands to []
[2015-03-31T21:44:54+00:00] INFO: Starting Chef Run for muktaa-hello-scala-cc-build
[2015-03-31T21:44:54+00:00] INFO: Running start handlers
[2015-03-31T21:44:54+00:00] INFO: Start handlers complete.
[2015-03-31T21:44:55+00:00] INFO: Loading cookbooks []
[2015-03-31T21:44:55+00:00] WARN: Node muktaa-hello-scala-cc-build has an empty run list.
[2015-03-31T21:44:55+00:00] INFO: Chef Run complete in 1.121705004 seconds
[2015-03-31T21:44:55+00:00] INFO: Running report handlers
[2015-03-31T21:44:55+00:00] INFO: Report handlers complete
[2015-03-31T21:44:55+00:00] INFO: Sending resource update report (run-id: 6f637baf-18cc-4620-b3e2-9afc90e8cd6b)
 ---> 2c2ec6fab1ef
Removing intermediate container add27db609cc
Step 3 : RUN rm -rf /etc/chef/secure/*
 ---> Running in 30a3611b083f
 ---> cab28d6eed90
Removing intermediate container 30a3611b083f
Step 4 : ENTRYPOINT ["chef-init"]
 ---> Running in 0a9f4e96bbf7
 ---> a8577b66b103
Removing intermediate container 0a9f4e96bbf7
Step 5 : CMD ["--onboot"]
 ---> Running in f9a444817229
 ---> 21b3800bc9b3
Removing intermediate container f9a444817229
Successfully built 21b3800bc9b3](https://image.slidesharecdn.com/dockerandconfigurationmanagement-150427033117-conversion-gate02/75/Docker-and-configuration-management-31-2048.jpg)
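The build output above implies the Dockerfile that `knife container docker init` generated, even though the file itself is not shown. As a rough sketch, the Ruby below recovers those instructions by collecting the `Step N : INSTRUCTION` lines from a log excerpt. The helper name `dockerfile_from_build_log` is hypothetical, and the pattern matches only the older `Step N :` format seen in this 2015 output, so other Docker versions may need a different pattern.

```ruby
# Excerpt of the build output shown above (Chef log lines omitted).
BUILD_LOG = <<~LOG
  Step 0 : FROM muktaa/hello-scala-cc
  Step 1 : ADD chef/ /etc/chef/
  Step 2 : RUN chef-init --bootstrap
  Step 3 : RUN rm -rf /etc/chef/secure/*
  Step 4 : ENTRYPOINT ["chef-init"]
  Step 5 : CMD ["--onboot"]
  Successfully built 21b3800bc9b3
LOG

# Hypothetical helper: returns the Dockerfile instructions, in order,
# found in "Step N : INSTRUCTION" lines of a docker build log.
def dockerfile_from_build_log(log)
  log.each_line
     .map { |line| line[/Step \d+ : (.+)/, 1] }  # capture text after "Step N : "
     .compact                                    # drop lines that are not steps
     .map(&:strip)
end

puts dockerfile_from_build_log(BUILD_LOG)
```

Read in order, the recovered instructions show the pattern the tool follows: copy the Chef configuration into the image, converge once with `chef-init --bootstrap`, delete the validation key material from `/etc/chef/secure`, and leave `chef-init --onboot` as the container's entry point.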