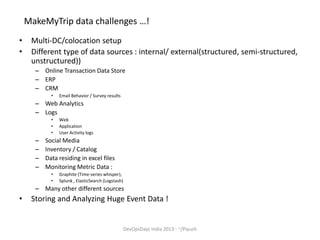

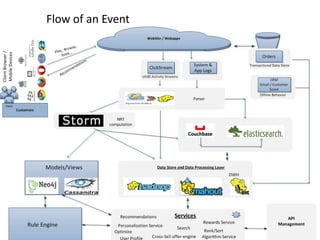

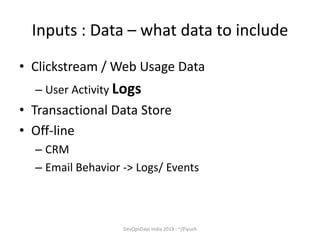

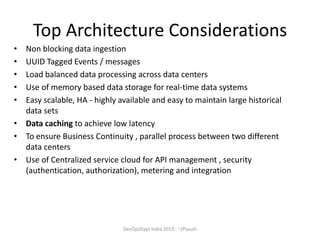

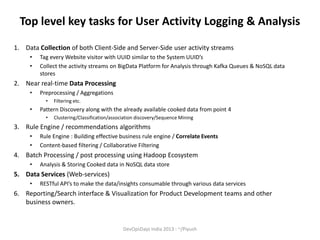

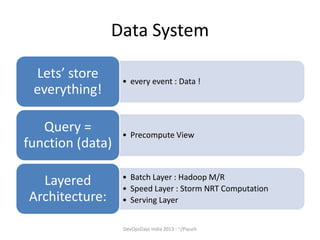

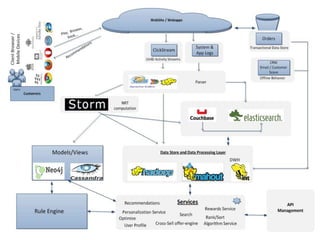

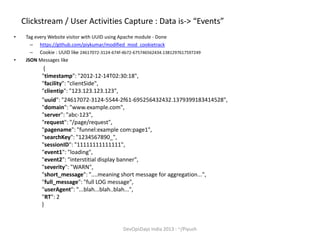

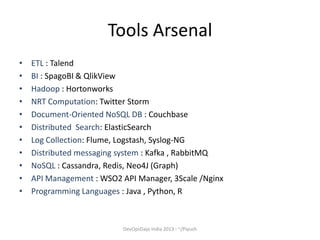

The document discusses the importance of centralized event collection and analysis using a big data platform. It describes the challenges MakeMyTrip faced in analyzing large volumes of data from many different sources. It recommends centralized logging of structured event data from all systems and applications to enable effective log analysis, troubleshooting, and personalization of the user experience. A data service platform is needed to integrate data from these sources and to power both real-time and batch processing for analytics and insights.
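
As a rough illustration of what "structured event data" could look like in practice, the following minimal sketch wraps each event in a common envelope and serializes it as one JSON line that a central collector could ingest. The field names (`event_id`, `timestamp_ms`, `source`, `event_type`, `payload`), the helper functions, and the sample service name are all hypothetical and are not taken from the slides.

```python
import json
import time
import uuid


def make_event(source, event_type, payload):
    """Build a structured event with a shared envelope.

    All field names here are illustrative assumptions, not a schema
    described in the original deck.
    """
    return {
        "event_id": str(uuid.uuid4()),            # unique id for deduplication
        "timestamp_ms": int(time.time() * 1000),  # event time in epoch milliseconds
        "source": source,                         # emitting system or application
        "event_type": event_type,                 # e.g. "search", "error"
        "payload": payload,                       # event-specific fields
    }


def serialize_event(event):
    """Serialize an event as a single JSON line for shipping to a collector."""
    return json.dumps(event, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical example: a search event from an assumed booking service.
    event = make_event("booking-service", "search", {"from": "DEL", "to": "BOM"})
    print(serialize_event(event))
```

Because every system emits the same envelope, downstream real-time and batch jobs can filter on `source` and `event_type` without parsing free-form log text.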