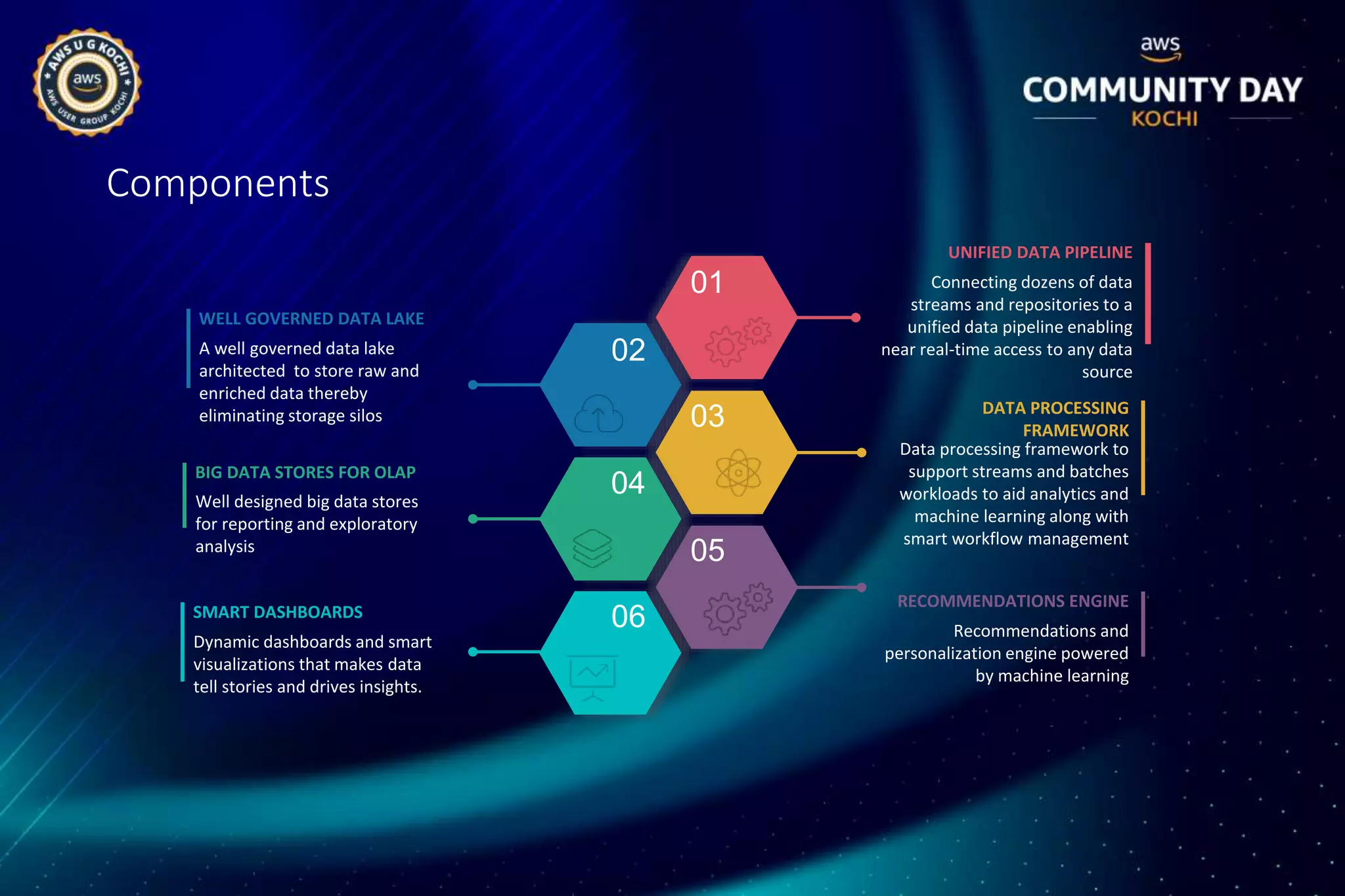

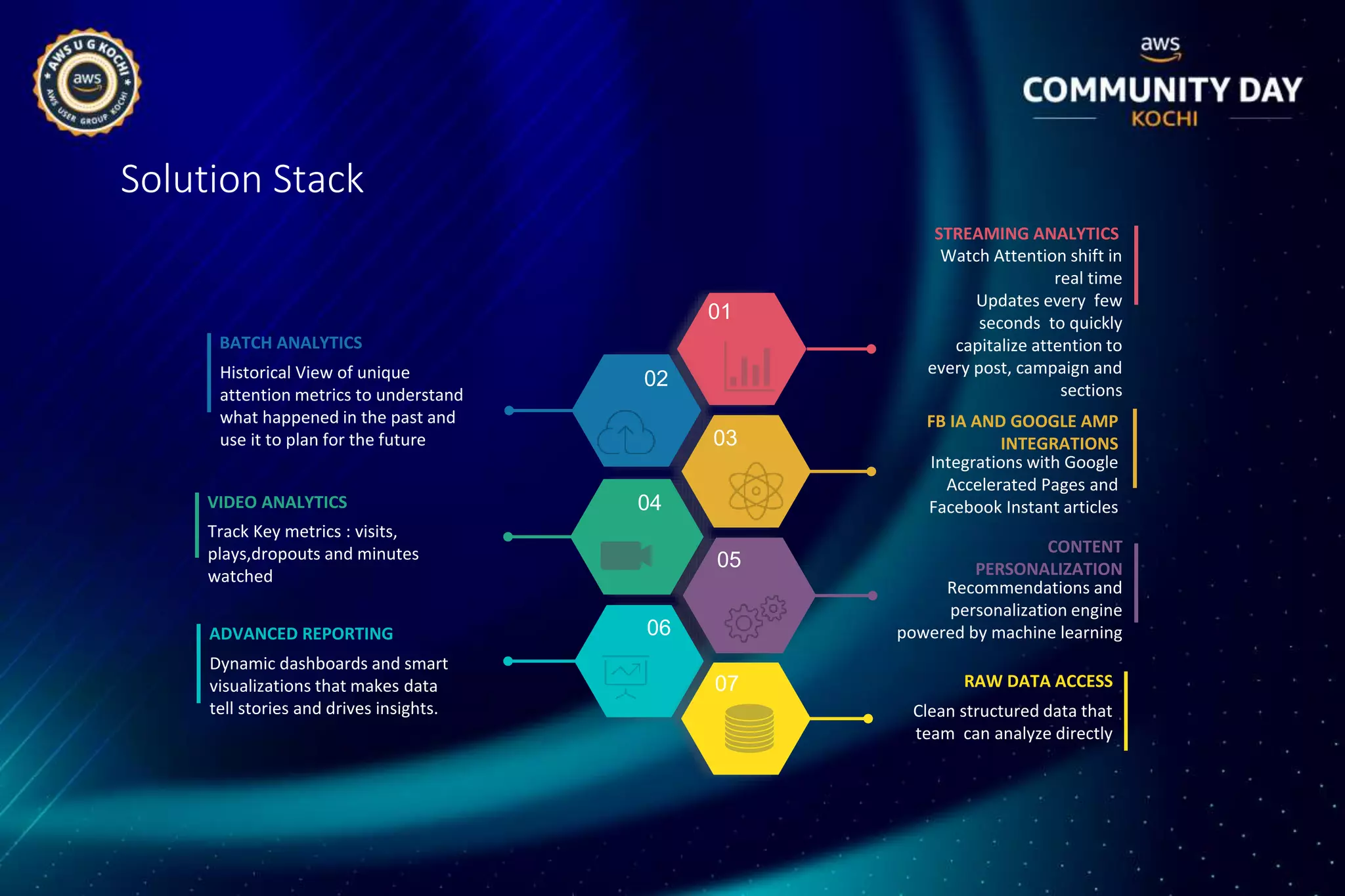

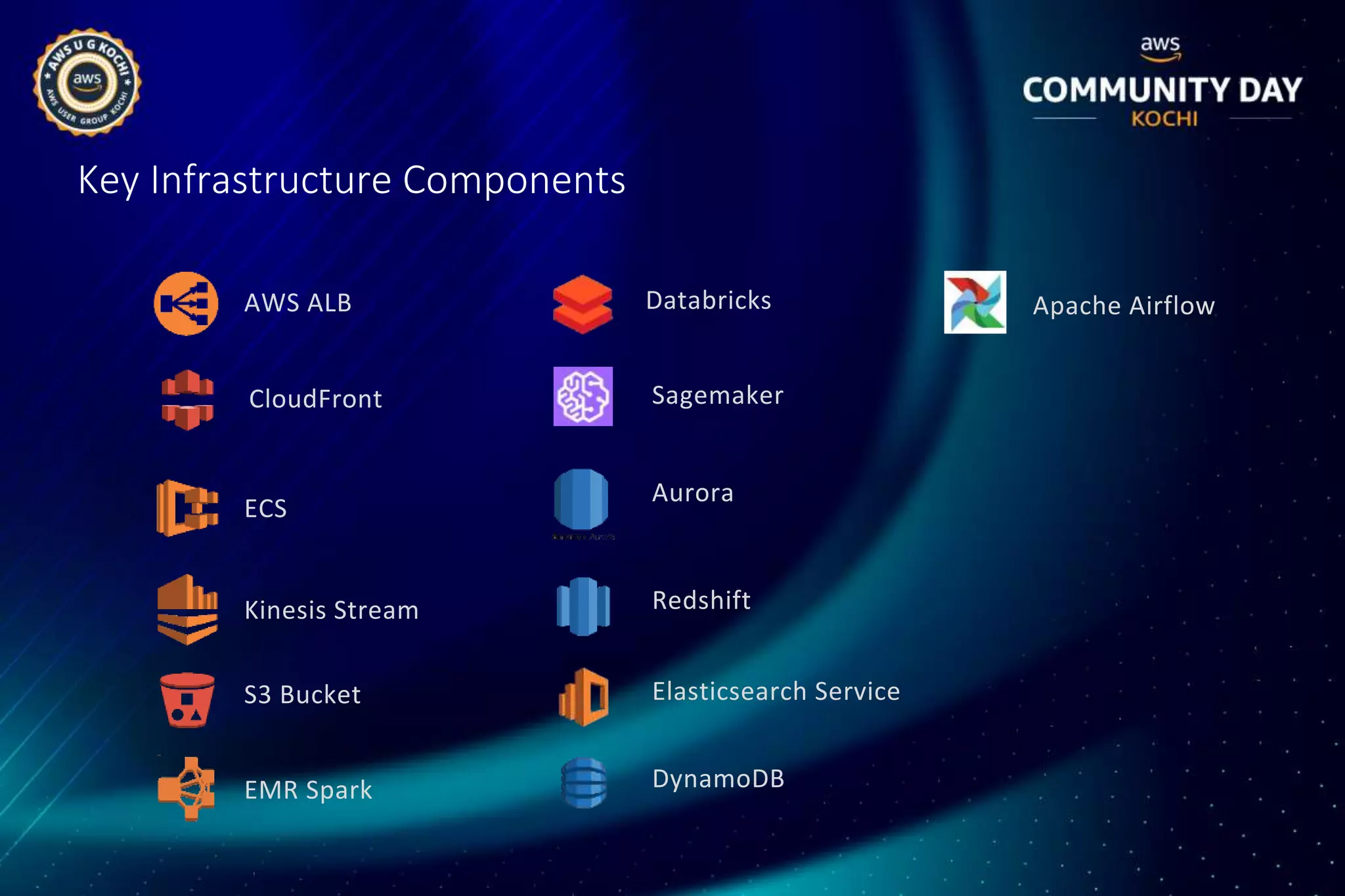

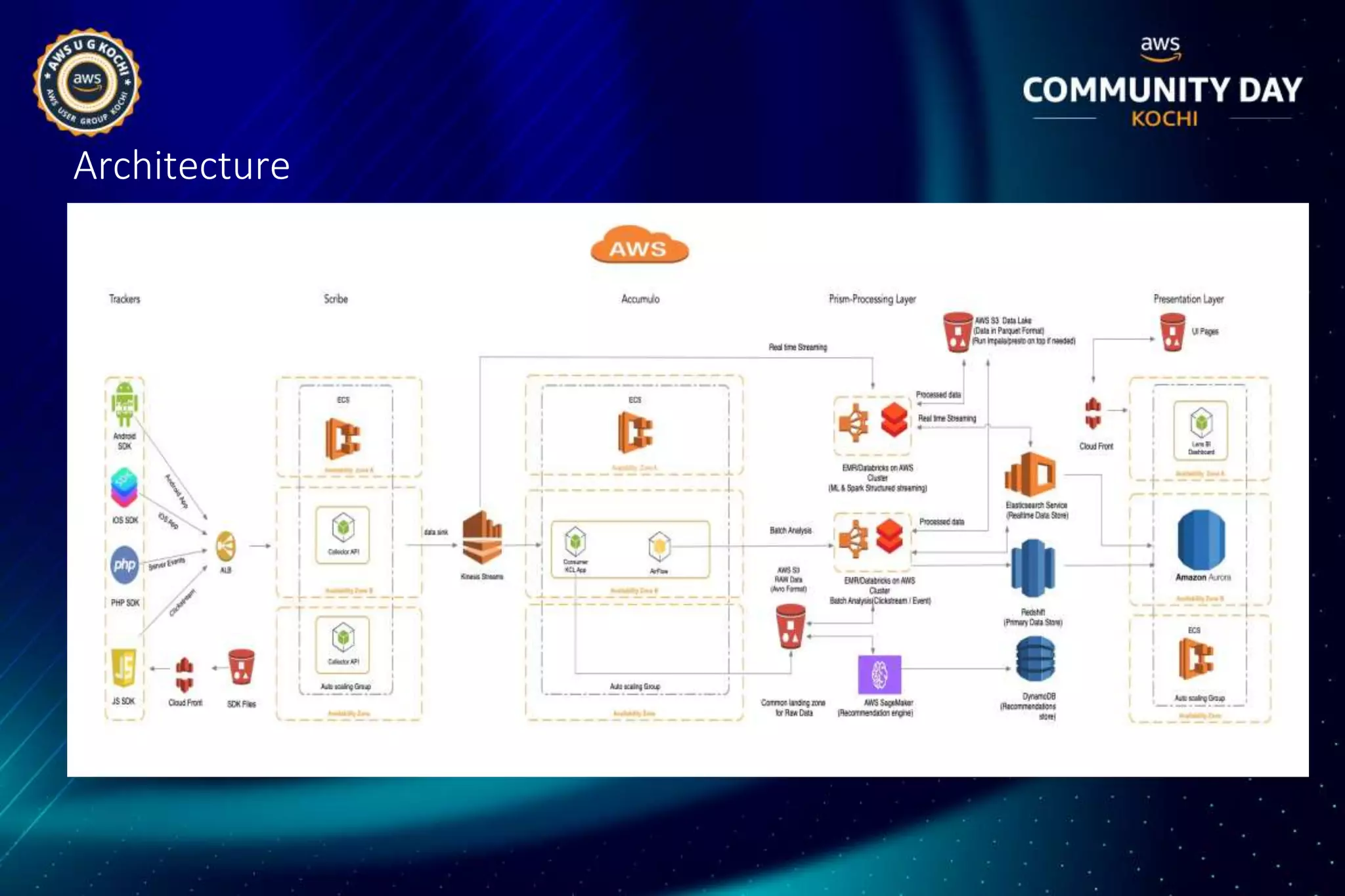

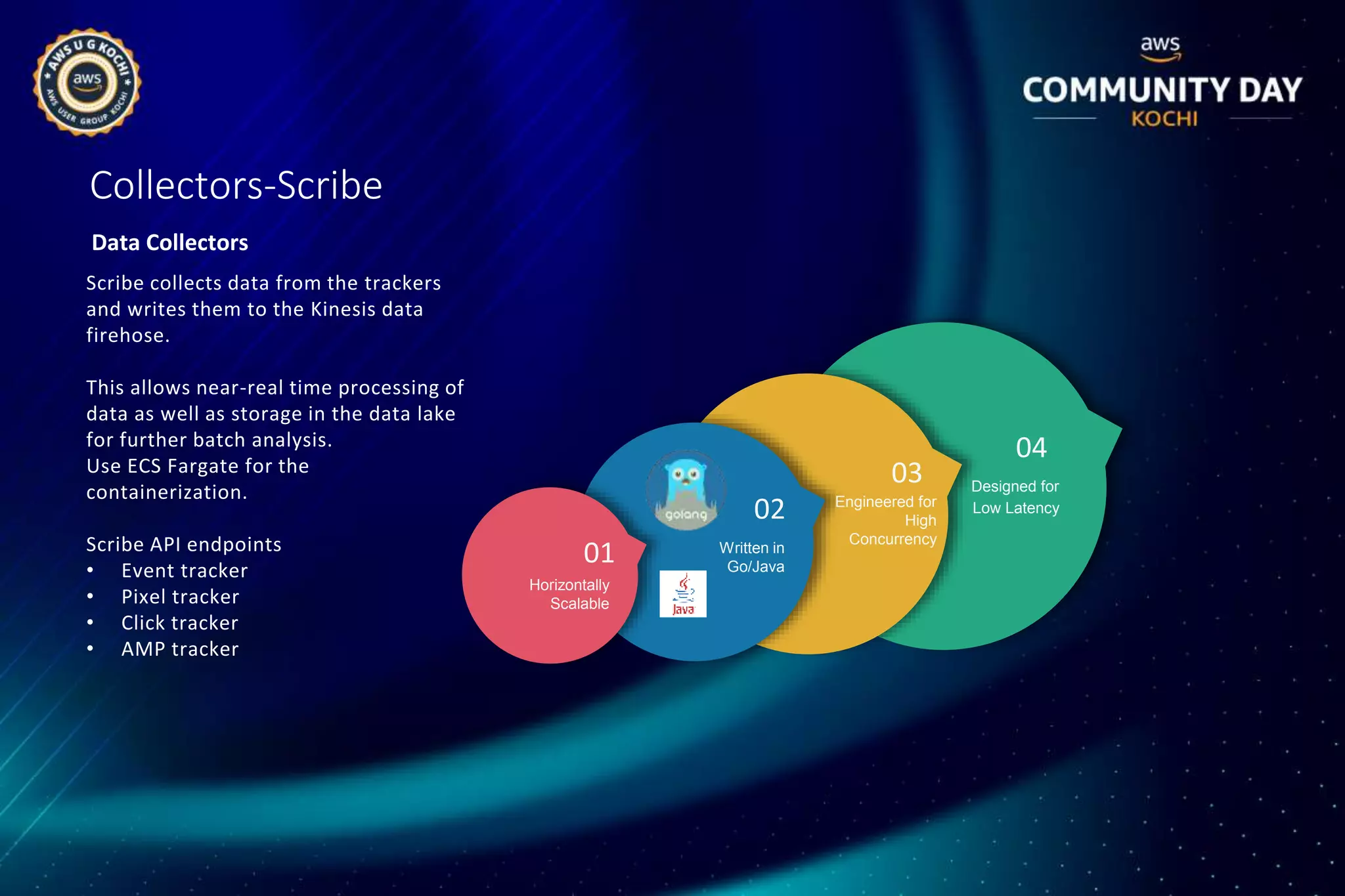

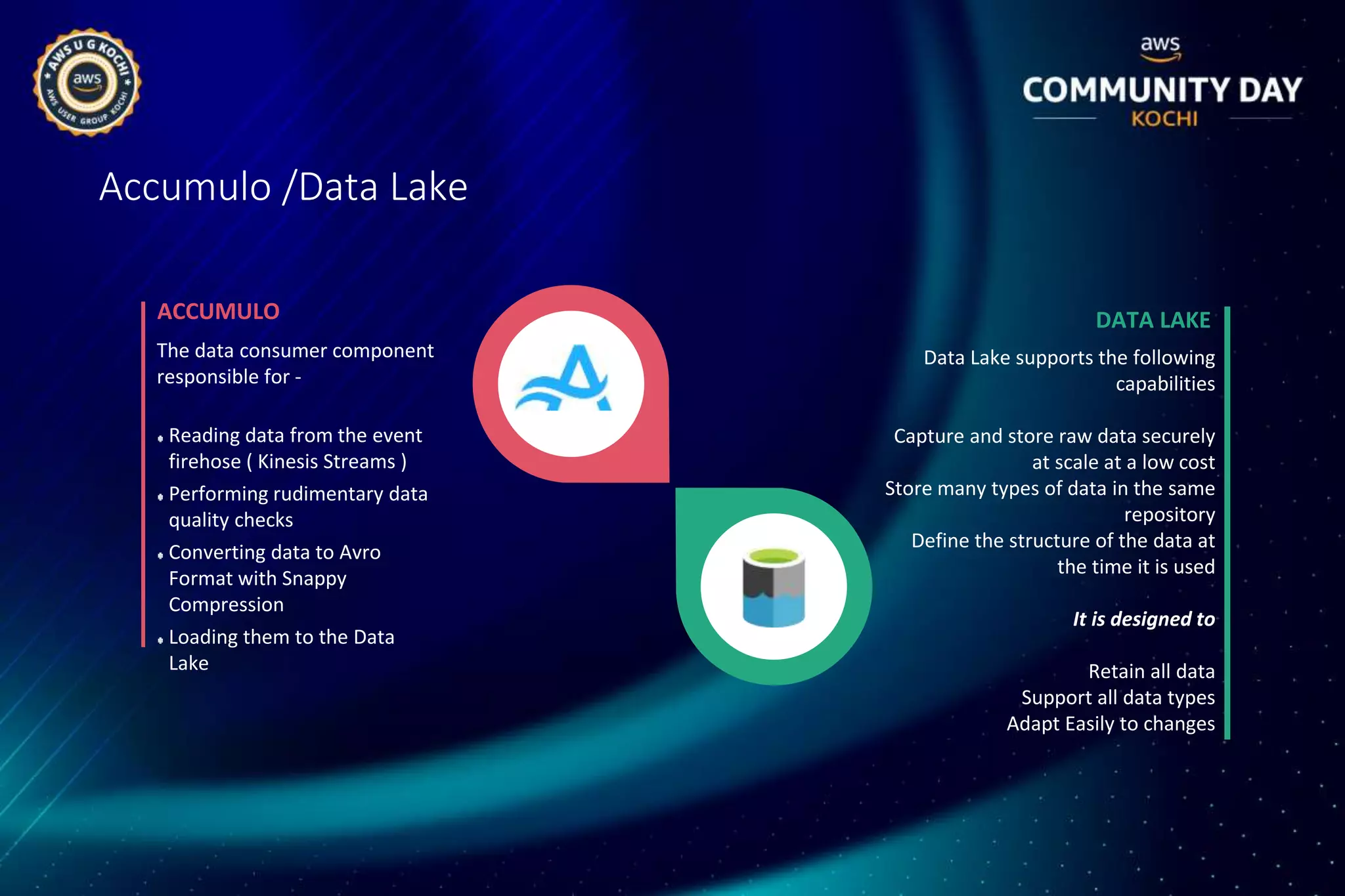

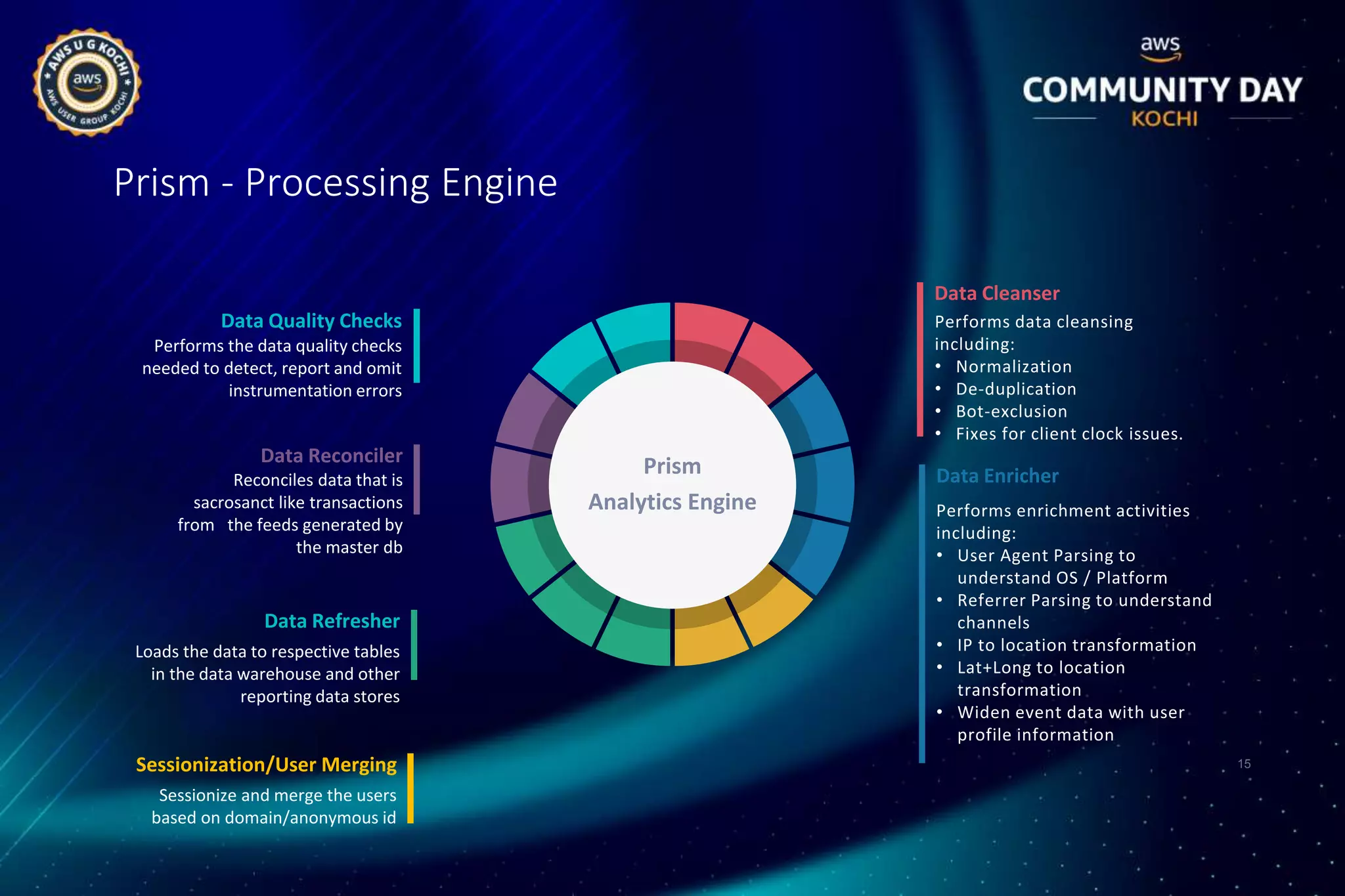

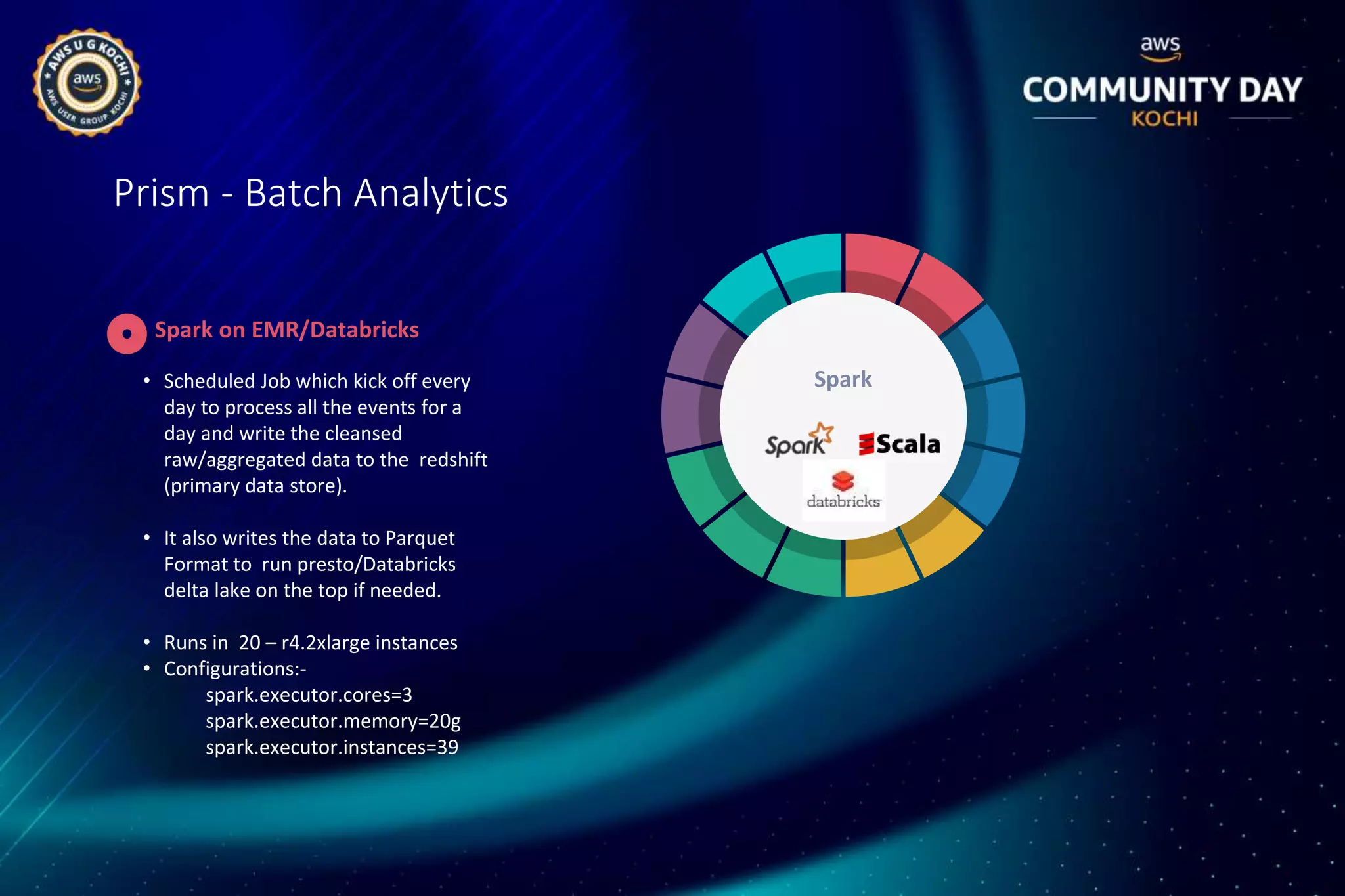

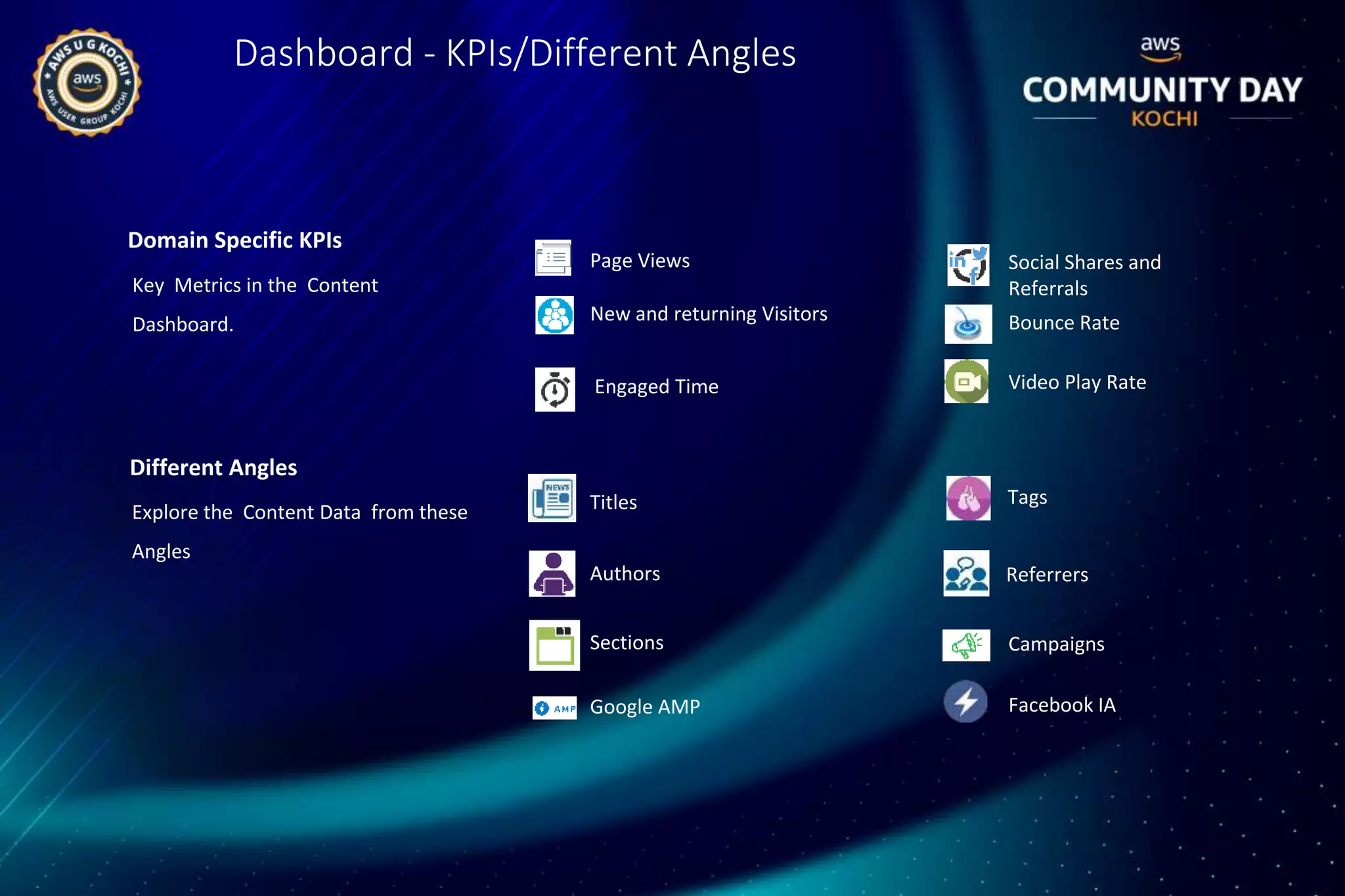

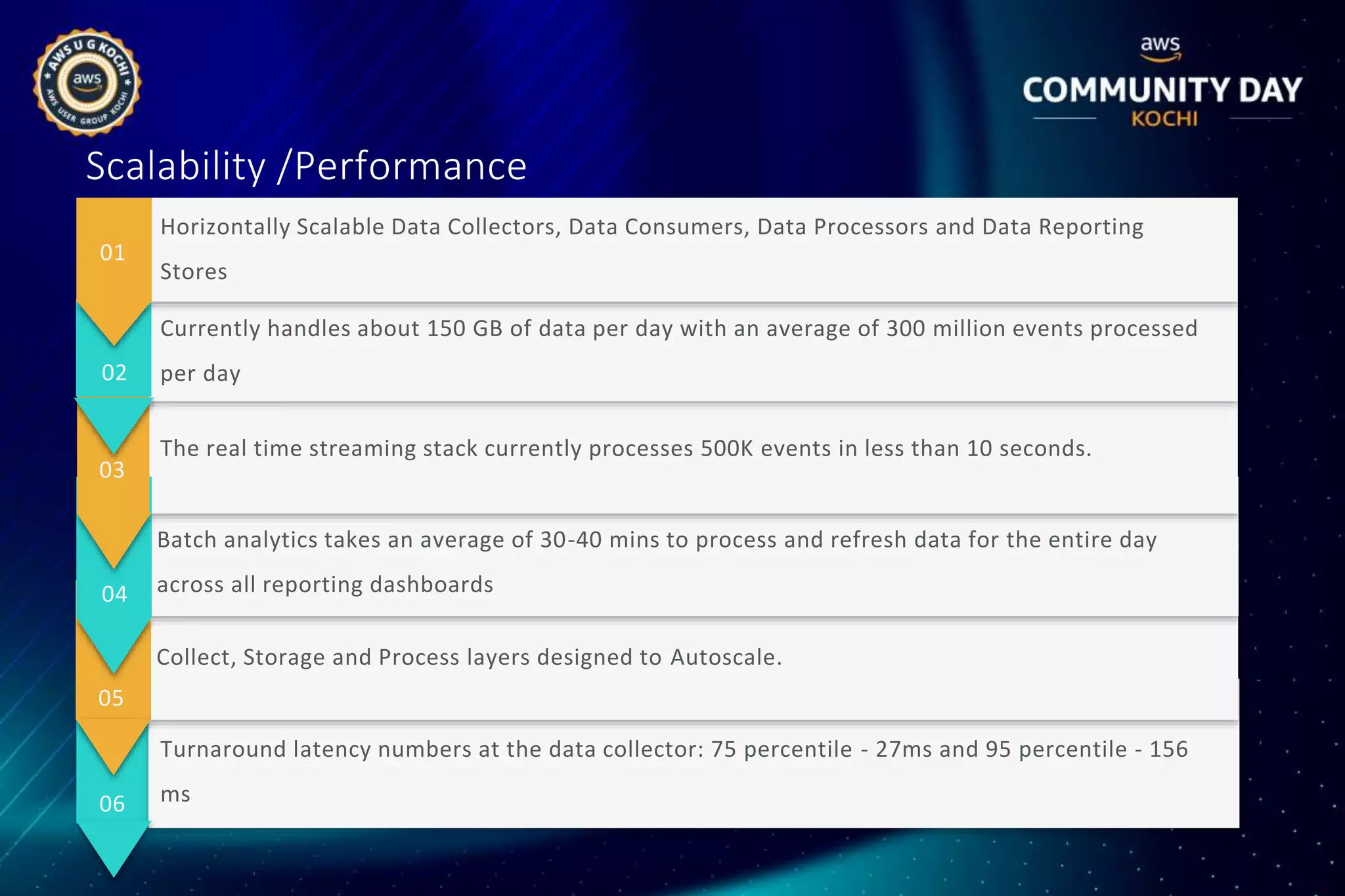

HiFX designed and implemented Vision Lens, a unified data analytics platform for Malayala Manorama that generates insights from large volumes of data across its multiple digital properties. The solution comprises a data lake, a data pipeline, a processing framework, and dashboards that provide both real-time and historical analytics. The platform helped Manorama improve user experiences, drive smarter marketing, and make better business decisions.