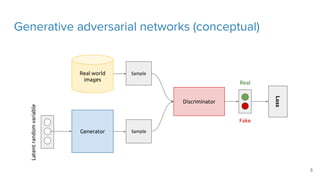

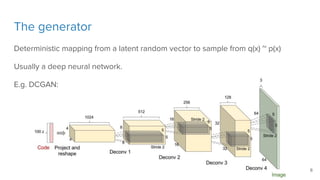

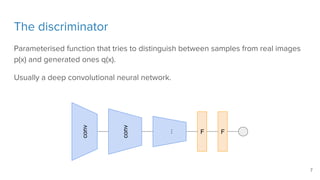

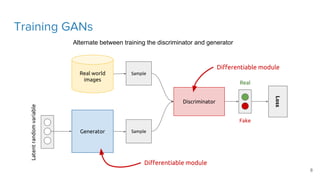

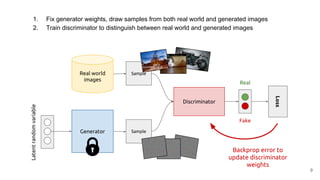

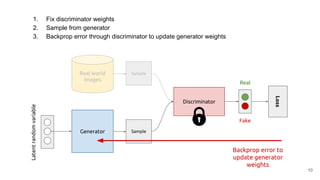

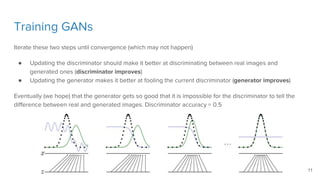

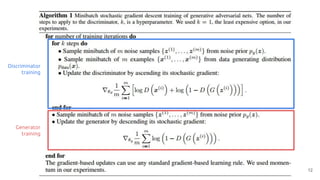

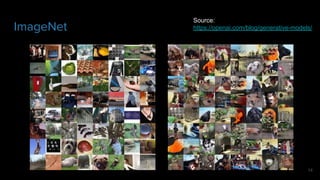

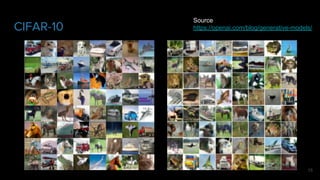

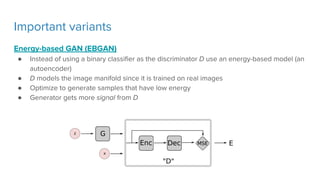

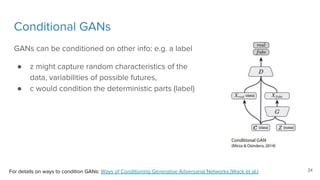

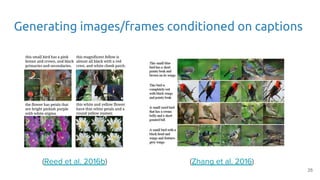

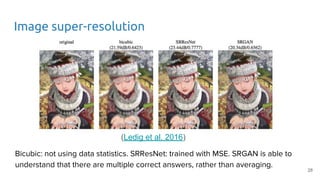

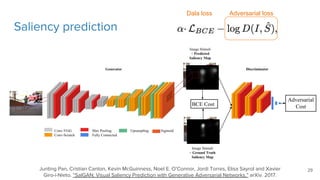

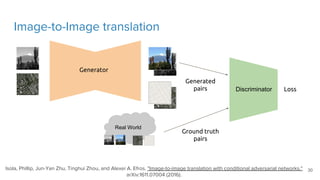

The document discusses generative models, focusing on generative adversarial networks (GANs), in which a generator and a discriminator are trained against each other. It covers why generative models matter and where they are applied, training techniques for GANs, challenges such as mode collapse, and variants such as Wasserstein GAN and Least Squares GAN. It also touches on advanced topics, including conditional GANs and their applications to future-frame prediction and image super-resolution.
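
To make the adversarial setup concrete, here is a minimal sketch of the two losses in the standard GAN formulation, assuming the discriminator outputs a probability that its input is real. The function names `discriminator_loss` and `generator_loss` are hypothetical and chosen for illustration, and the generator loss shown is the commonly used non-saturating form; the slides themselves may present a different variant.

```python
import math

EPS = 1e-12  # small constant to avoid log(0); an illustrative choice


def discriminator_loss(d_real, d_fake):
    """Cross-entropy loss for the discriminator.

    d_real: discriminator probabilities on real samples (should approach 1).
    d_fake: discriminator probabilities on generated samples (should approach 0).
    """
    real_term = -sum(math.log(p + EPS) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p + EPS) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: reward fakes that the discriminator rates as real."""
    return -sum(math.log(p + EPS) for p in d_fake) / len(d_fake)


if __name__ == "__main__":
    # A discriminator that cannot tell real from fake outputs 0.5 everywhere.
    print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))
    print(generator_loss([0.5, 0.5]))
```

In training, the two networks would alternate updates: the discriminator takes a step to lower `discriminator_loss`, then the generator takes a step to lower `generator_loss`. The generator's loss falls as the discriminator assigns higher probability to generated samples, which is the sense in which the two are trained against each other.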