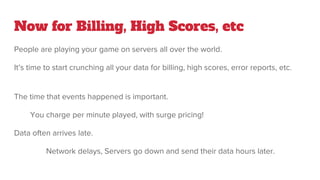

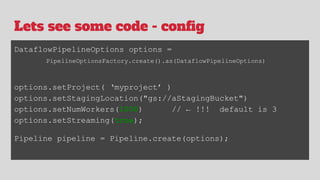

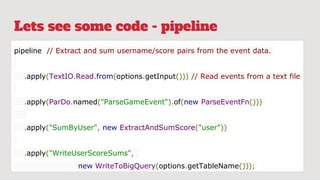

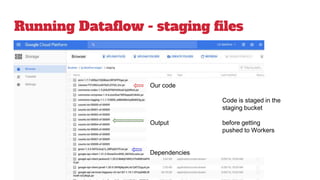

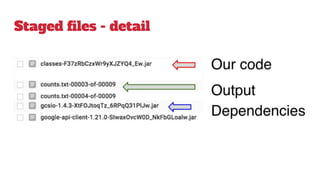

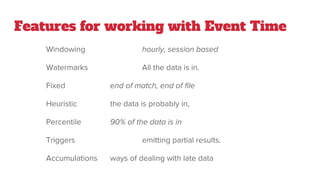

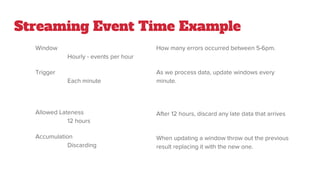

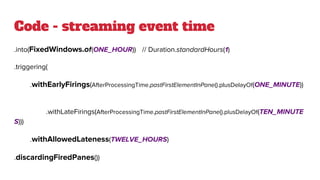

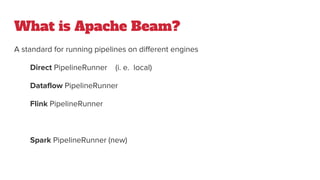

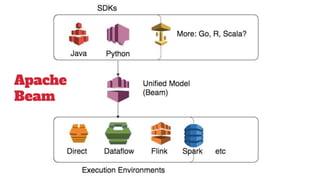

This document covers the use of Google Cloud Dataflow and Apache Beam to process data generated by video games, focusing on billing, high scores, and error reporting from servers around the world. It highlights Dataflow's support for large-scale distributed processing of both batch and streaming datasets, including events that arrive out of order. It includes code snippets for setting up a Dataflow pipeline, working with event time, and handling late data.
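
To make the ideas of event time and late data concrete, here is a minimal sketch in plain Python. It deliberately does not use the Apache Beam or Dataflow API; it only imitates the concept of assigning events to fixed windows by the time they occurred, not the time they arrived. The names `ScoreEvent` and `high_scores`, the 60-second window, the 30-second allowed lateness, and the "largest event time seen so far" watermark are all illustrative assumptions, and a real runner estimates its watermark in a more sophisticated way.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoreEvent:
    """Hypothetical game event; event_time is when the score happened (seconds)."""
    player: str
    score: int
    event_time: int


def window_start(event_time: int, size: int) -> int:
    """Return the start of the fixed window that contains event_time."""
    return event_time - event_time % size


def high_scores(events, window_size=60, allowed_lateness=30):
    """Process events in arrival order and keep the best score per window.

    Returns (best_by_window, dropped), where dropped holds events that
    arrived after their window's allowed lateness had expired.
    """
    best = {}
    dropped = []
    watermark = 0  # simplification: largest event time observed so far
    for ev in events:
        watermark = max(watermark, ev.event_time)
        start = window_start(ev.event_time, window_size)
        window_end = start + window_size
        # An event is too late once the watermark has passed the end of
        # its window by at least the allowed lateness.
        if watermark >= window_end + allowed_lateness:
            dropped.append(ev)
            continue
        best[start] = max(best.get(start, ev.score), ev.score)
    return best, dropped


# Events listed in ARRIVAL order; event times are out of order on purpose.
arrivals = [
    ScoreEvent("ana", 10, 5),
    ScoreEvent("bo", 40, 70),
    ScoreEvent("cy", 25, 50),    # out of order, but still within lateness
    ScoreEvent("dee", 5, 130),
    ScoreEvent("eve", 99, 20),   # arrives too late for window [0, 60)
]
best_by_window, dropped_events = high_scores(arrivals)
```

With these inputs, the out-of-order score from `cy` still updates the first window, while the score from `eve` is dropped because the watermark has already moved past that window's end plus the allowed lateness. A real pipeline could instead route such events to a separate output for later reconciliation.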