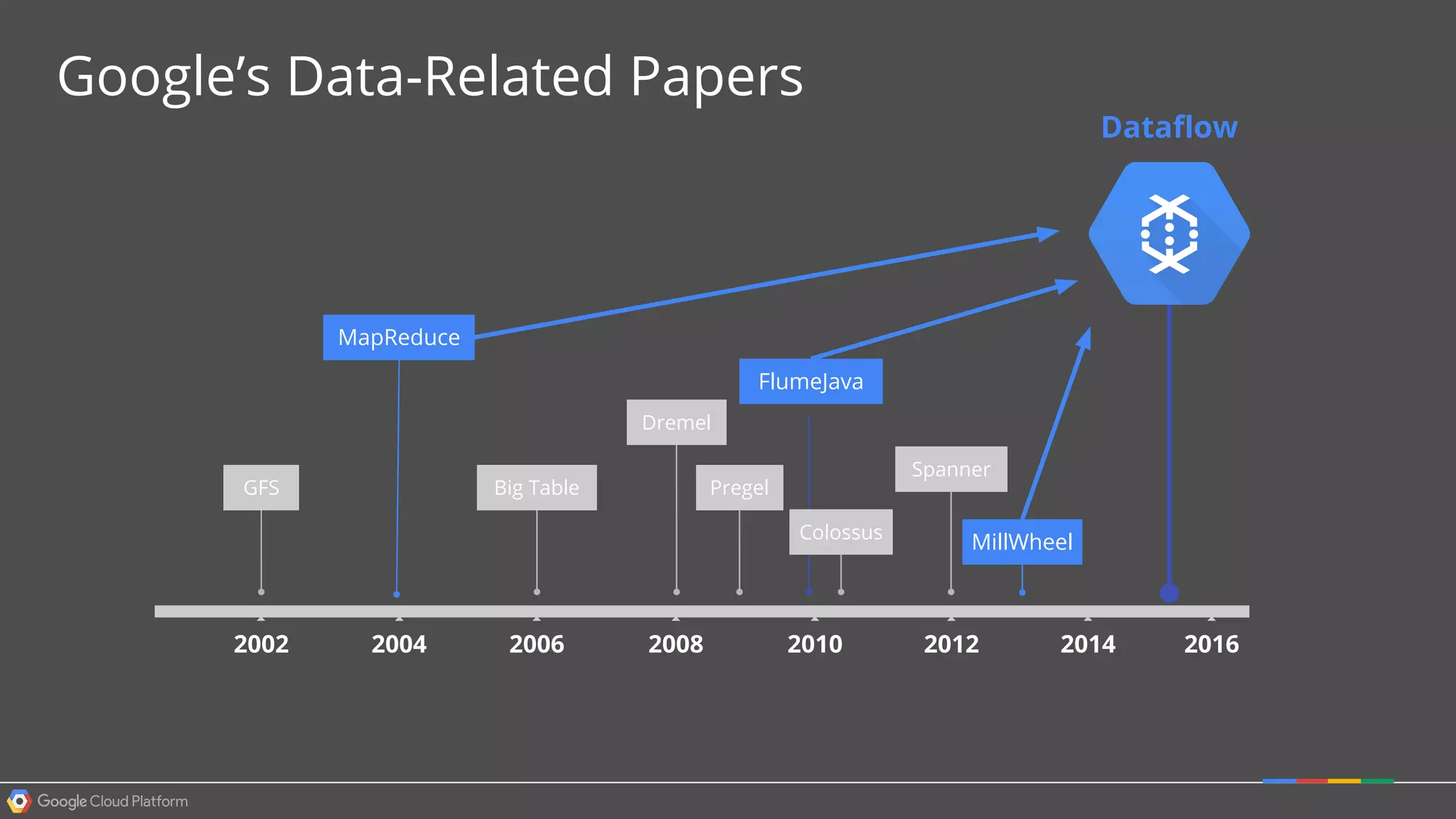

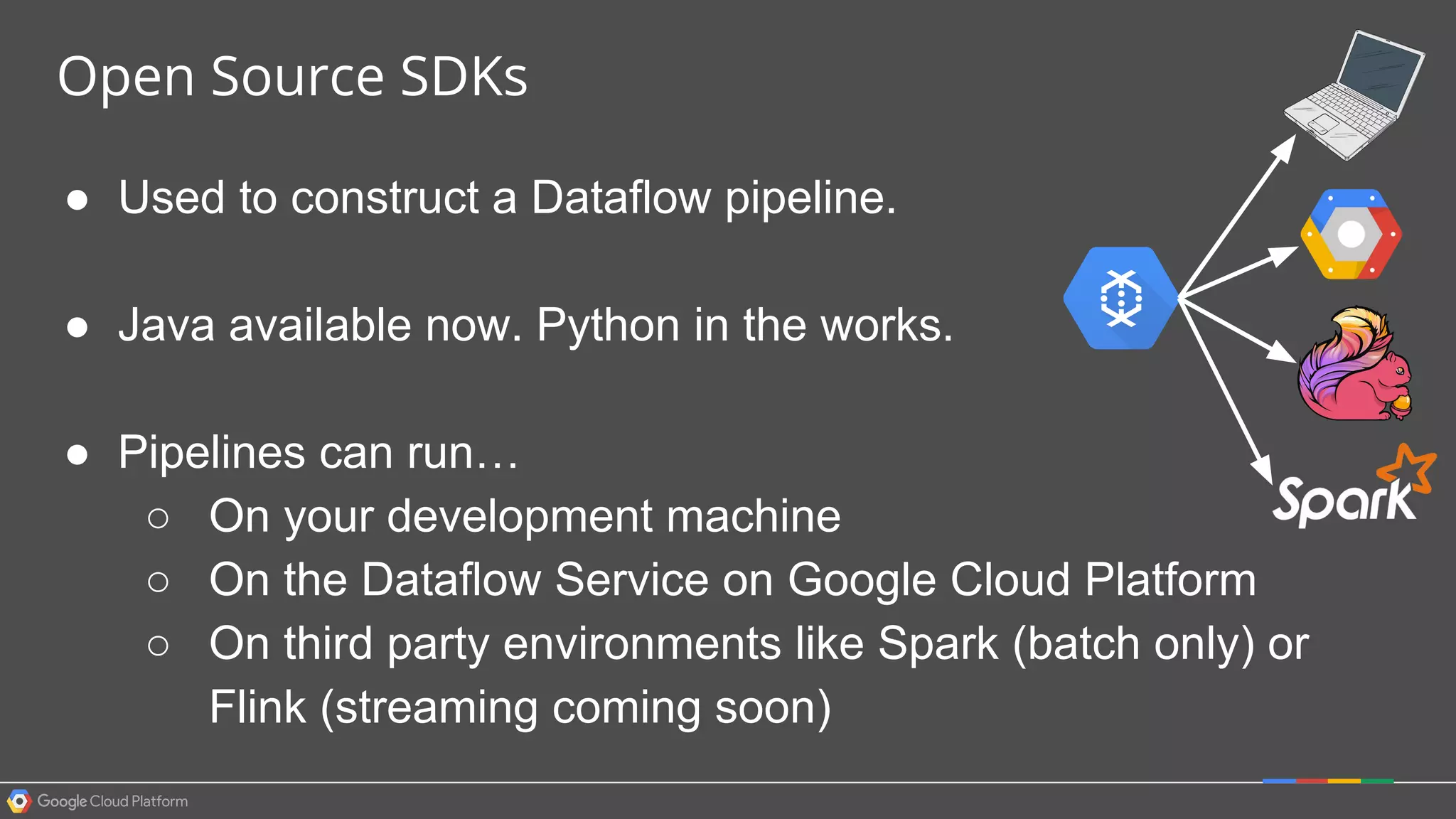

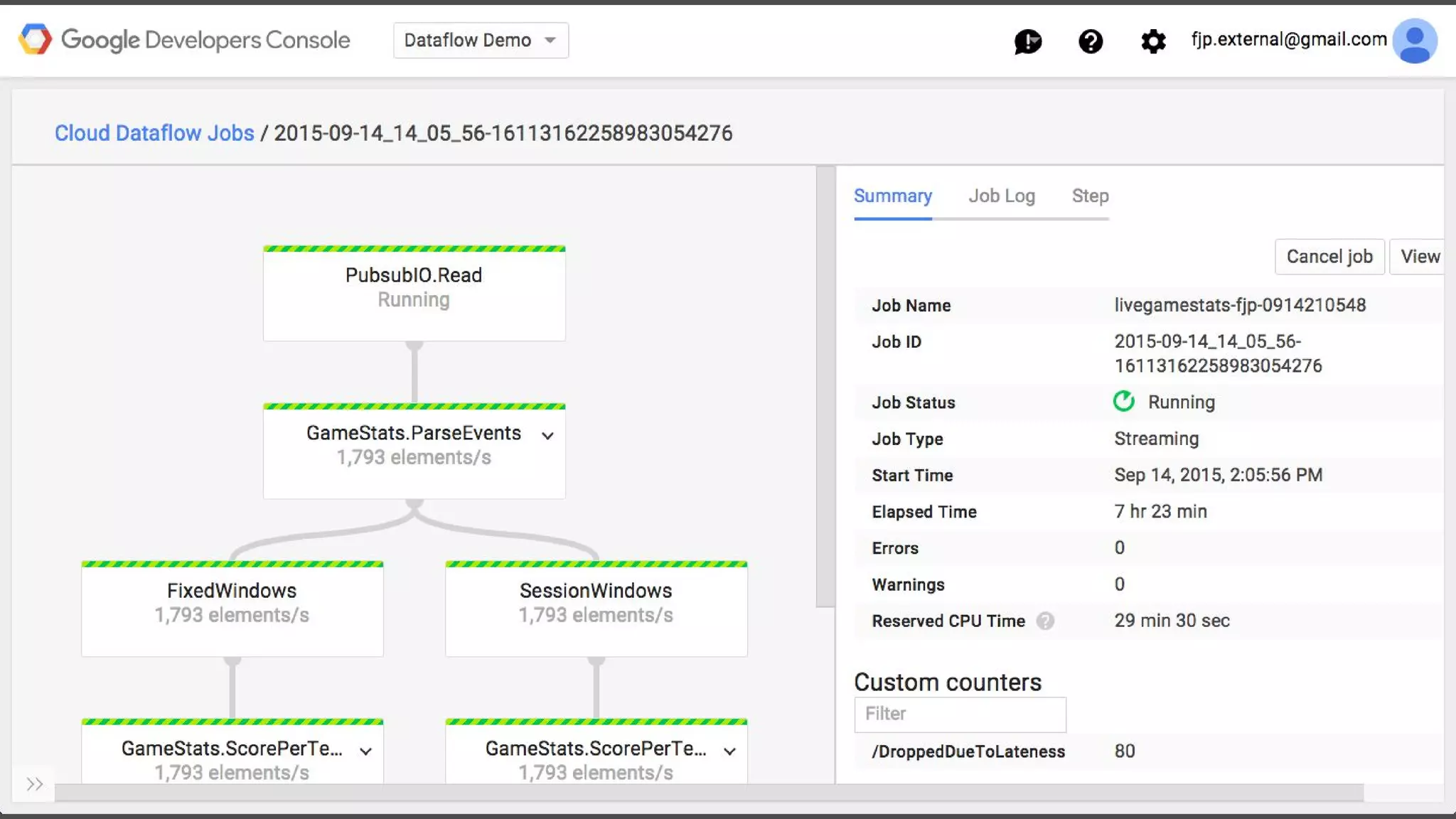

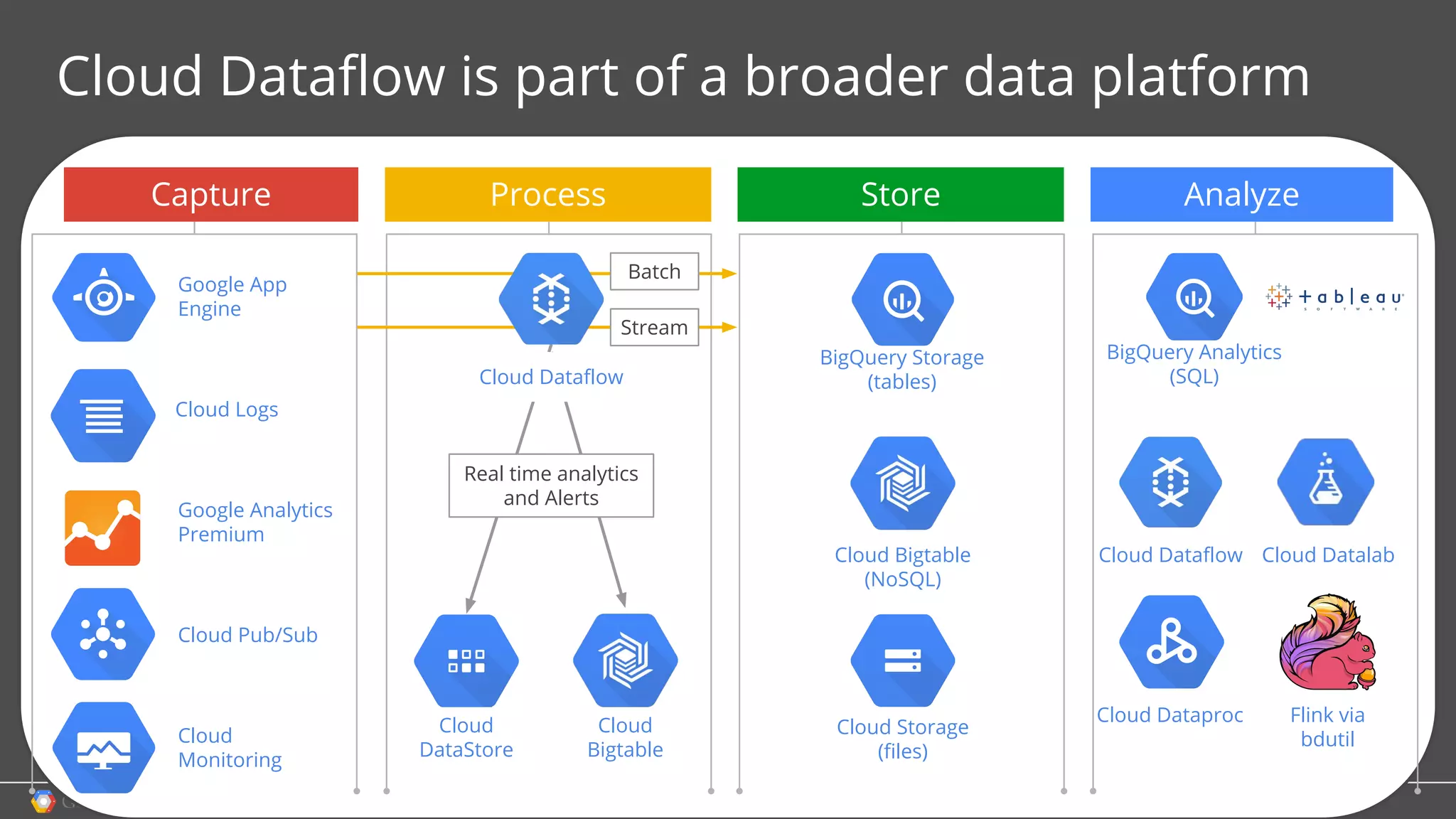

1. Google Cloud Dataflow is a fully managed service for defining and running data processing pipelines, as either batch or streaming computations.

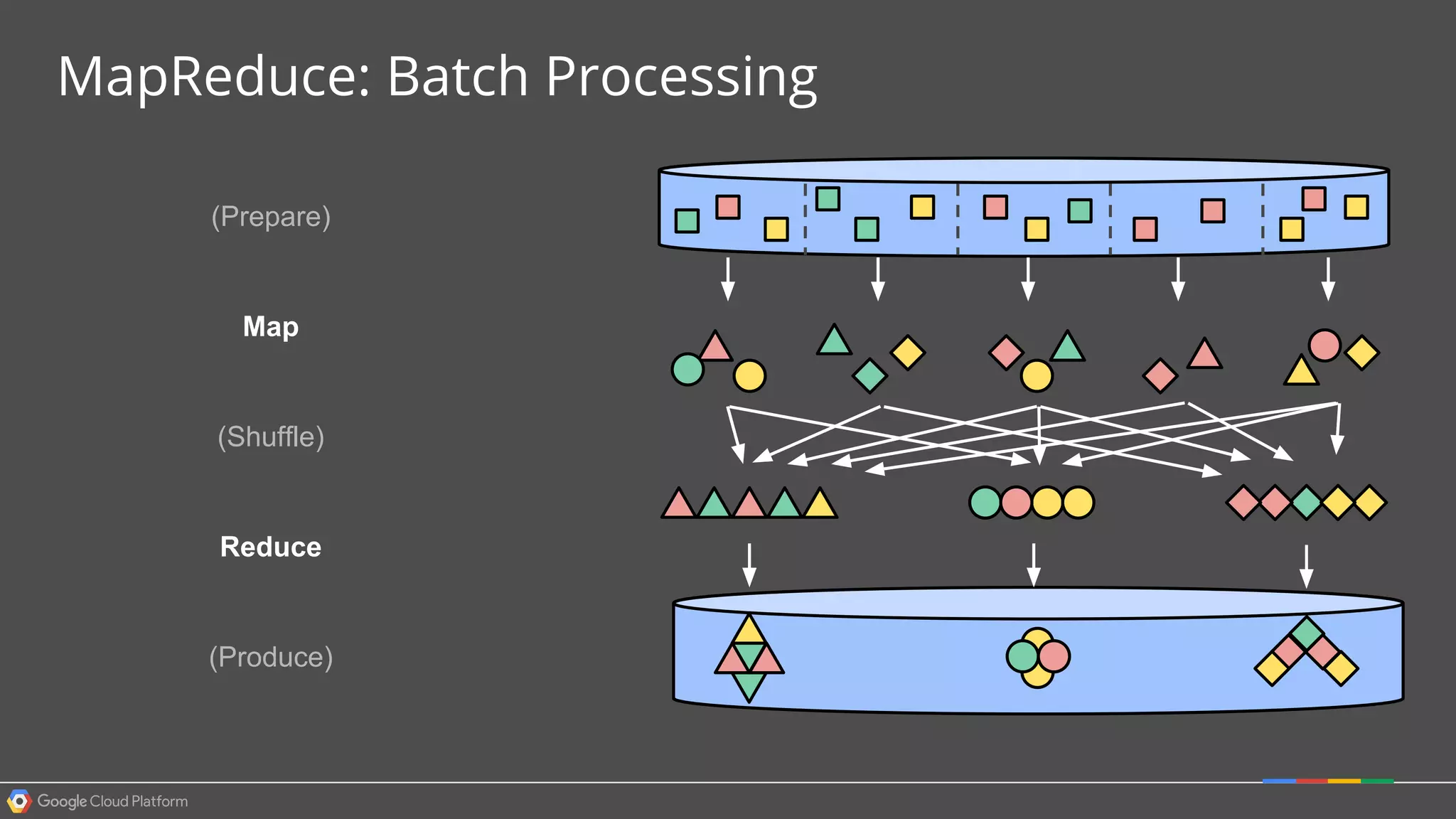

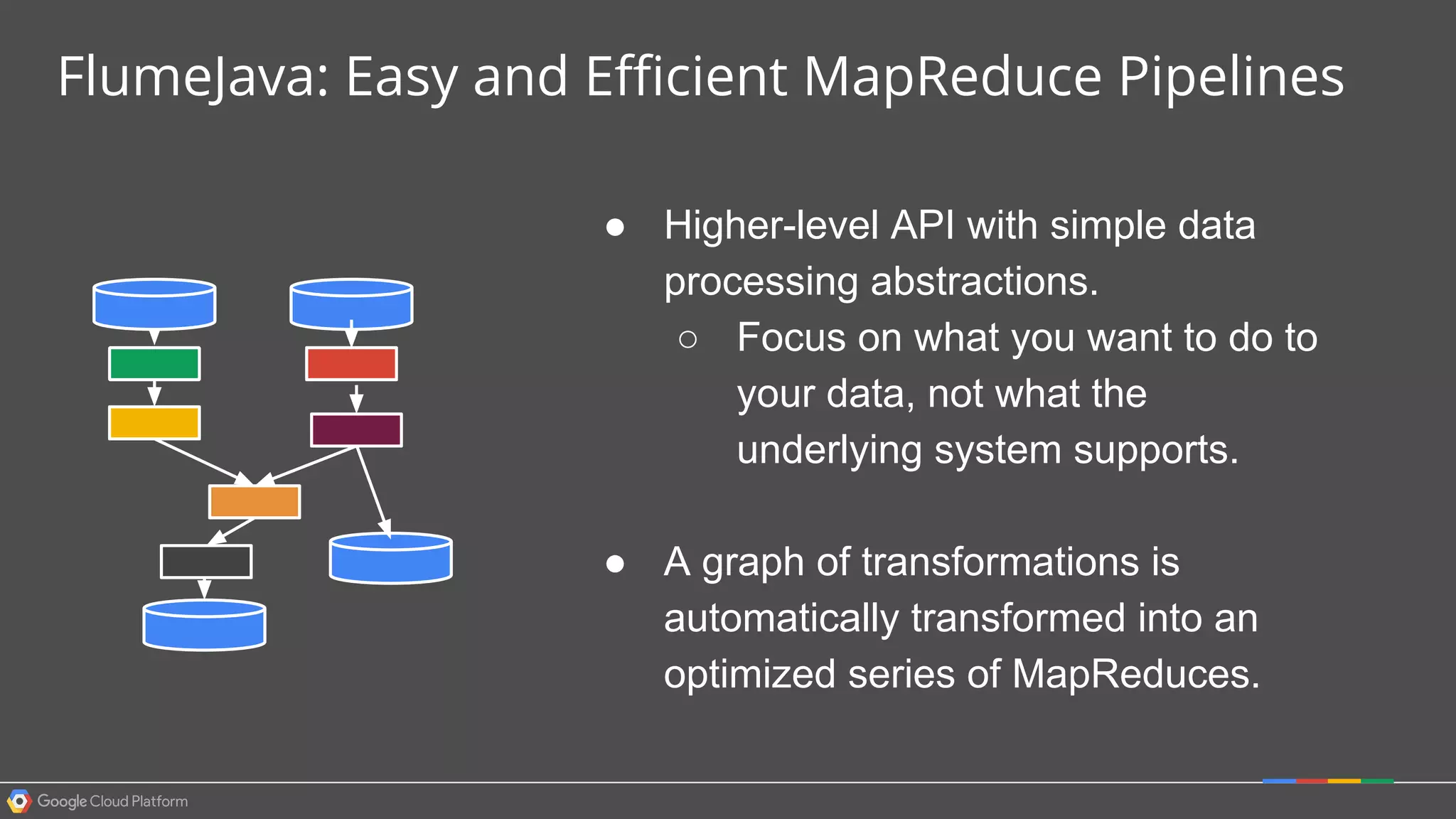

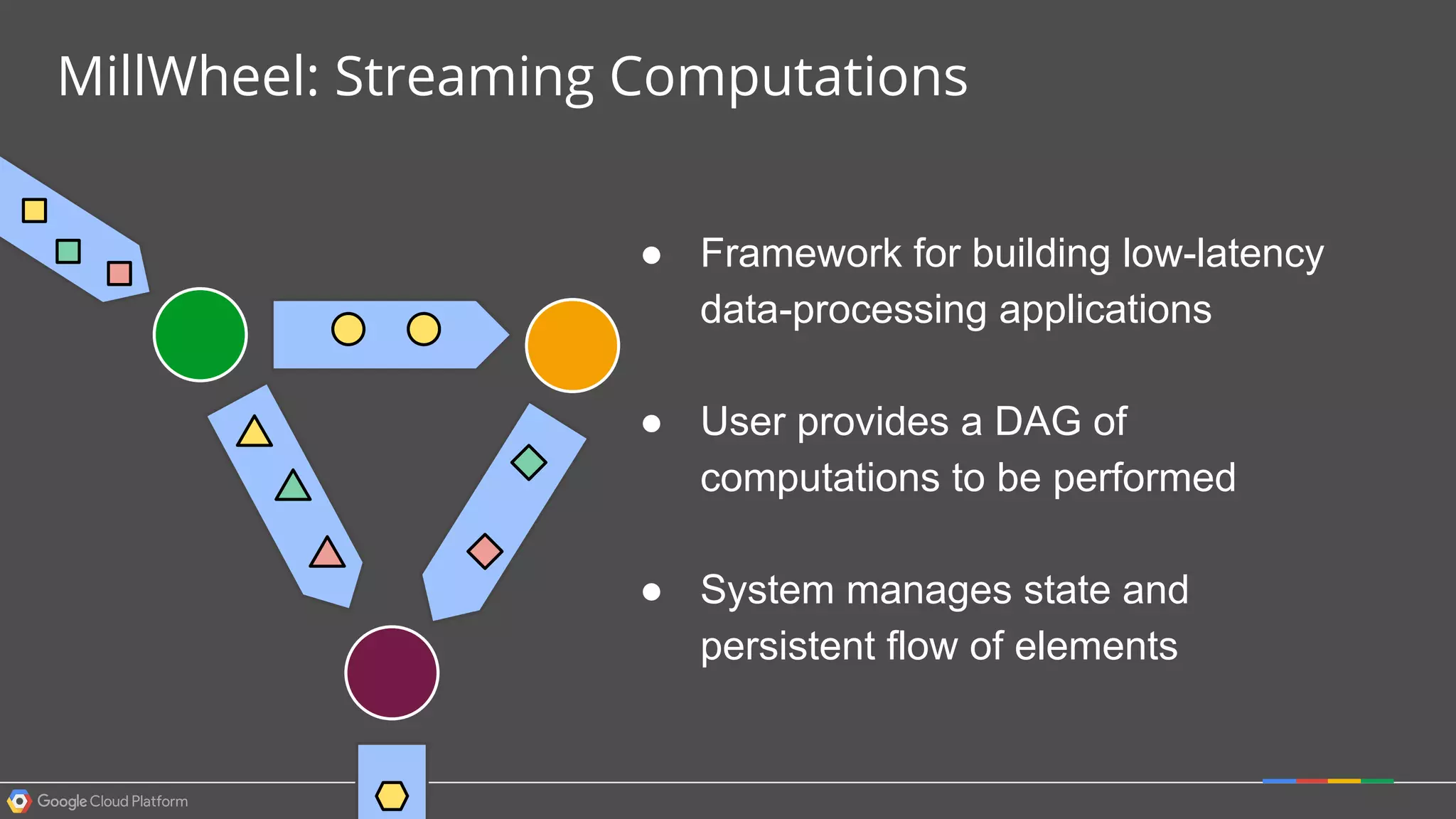

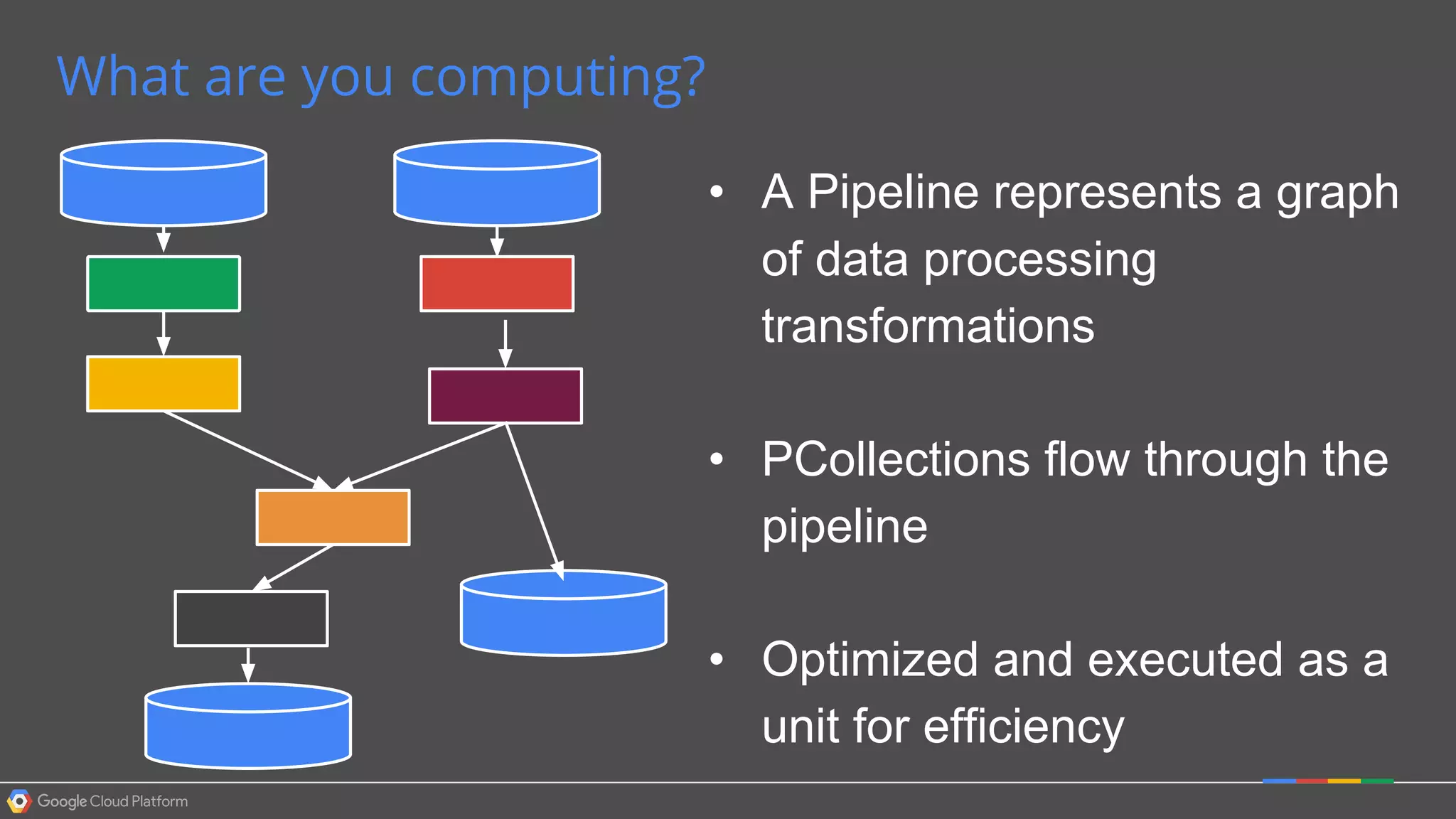

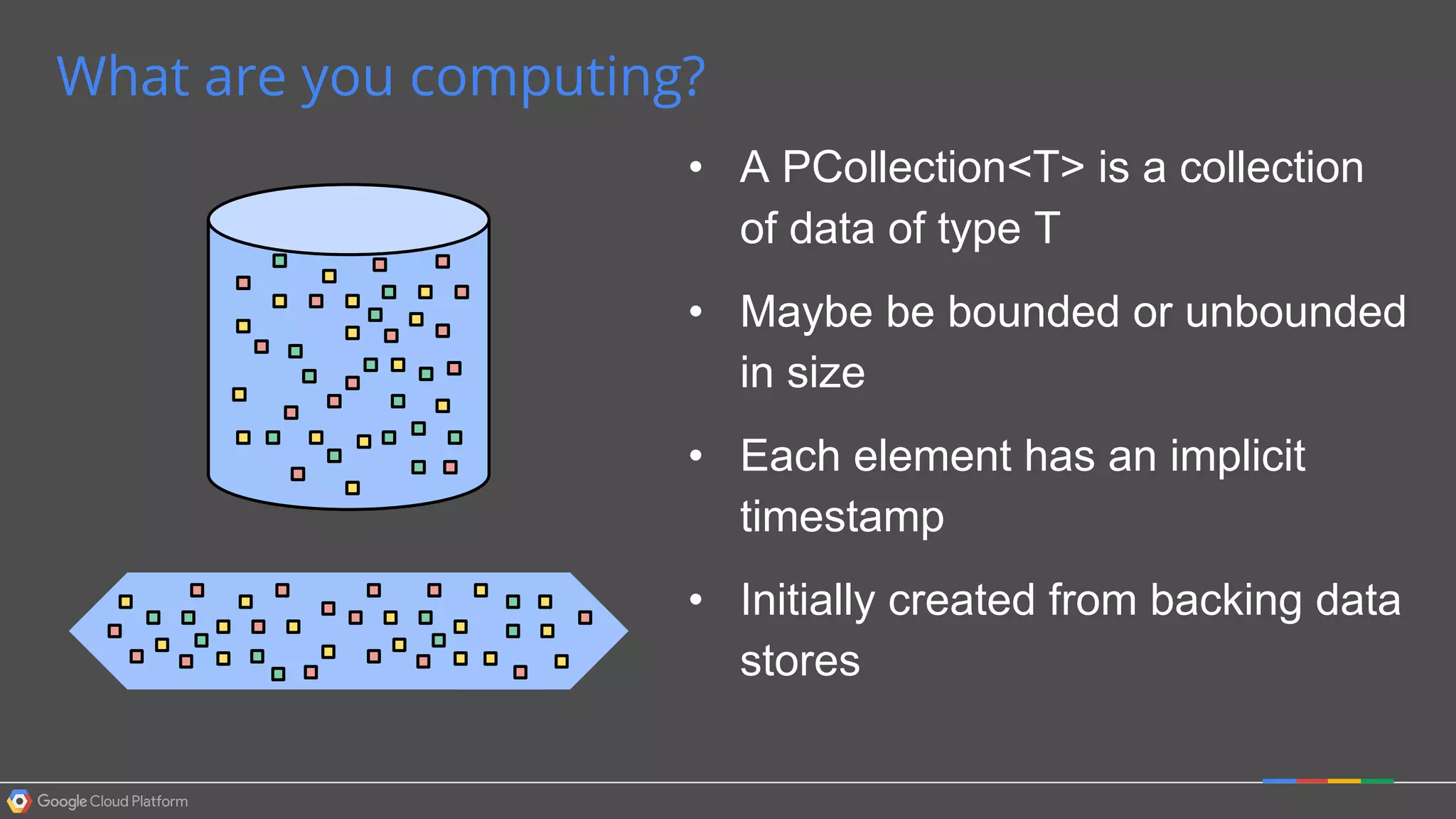

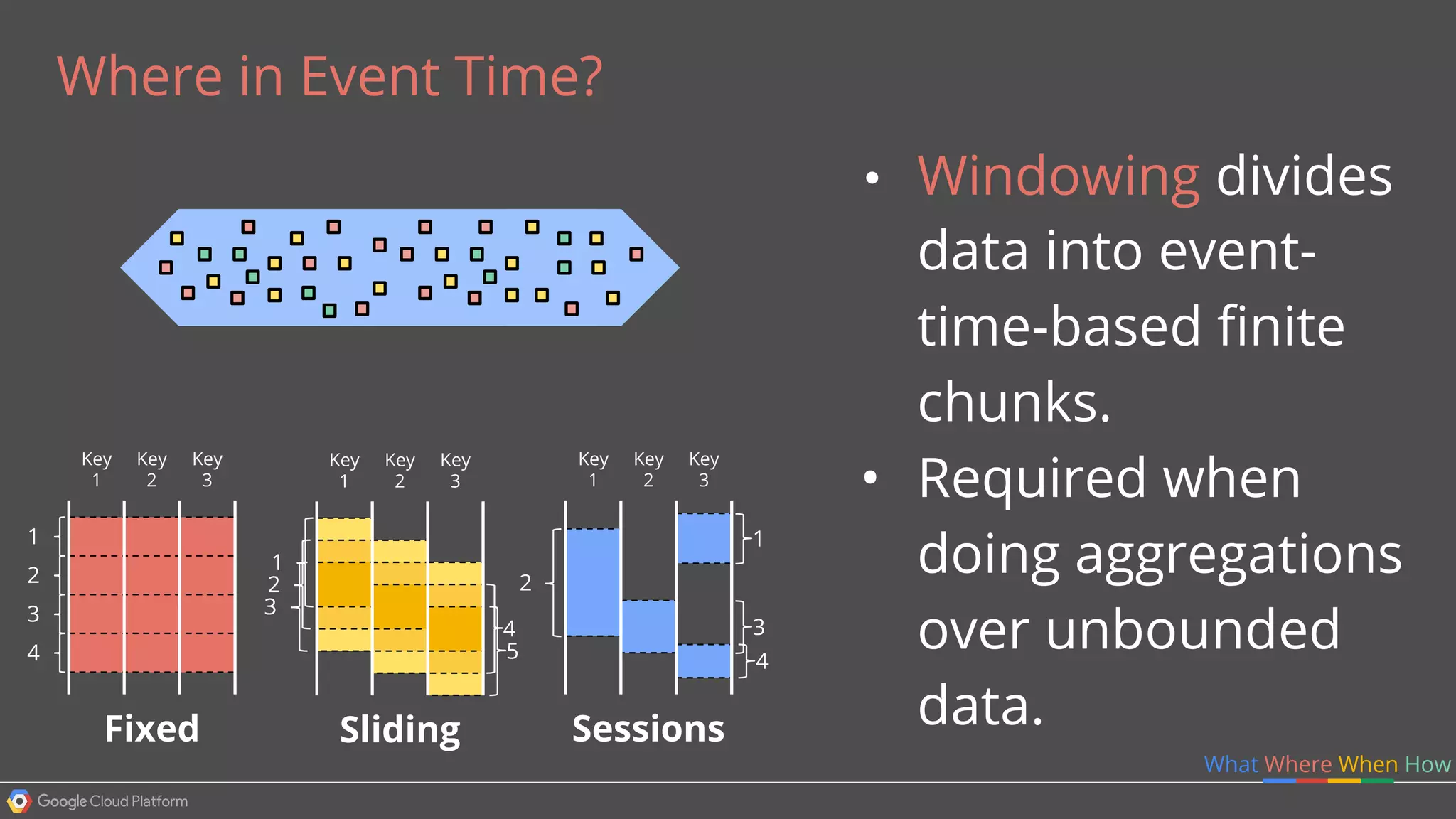

2. The Dataflow programming model defines a pipeline as a directed graph of transformations applied to collections of data elements. The same model is used to express both batch and streaming workloads.
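
To make the graph idea concrete, here is a minimal sketch in plain Python. It does not use the Dataflow SDK: `Collection`, `flat_map`, `map_elements`, and `count_per_element` are hypothetical names invented for this illustration, and the toy runs each transform eagerly, whereas a managed service would typically build the graph first and execute it later.

```python
from collections import Counter
from typing import Callable, Iterable, List


class Collection:
    """Hypothetical stand-in for a collection of data elements (an edge in the graph)."""

    def __init__(self, elements: Iterable):
        self.elements = list(elements)

    def apply(self, transform: Callable[[List], Iterable]) -> "Collection":
        # Each transform is a node: it consumes one collection and produces a new one.
        return Collection(transform(self.elements))


def flat_map(fn: Callable) -> Callable[[List], List]:
    # Element-wise transform that may emit zero or more outputs per input.
    return lambda elements: [out for e in elements for out in fn(e)]


def map_elements(fn: Callable) -> Callable[[List], List]:
    # Element-wise transform that emits exactly one output per input.
    return lambda elements: [fn(e) for e in elements]


def count_per_element() -> Callable[[List], List]:
    # Aggregating transform: groups equal elements and counts them.
    return lambda elements: sorted(Counter(elements).items())


# lines -> words -> counts
#               \-> lengths   (one collection feeding two transforms)
lines = Collection(["to be or", "not to be"])
words = lines.apply(flat_map(str.split))
counts = words.apply(count_per_element())
lengths = words.apply(map_elements(len))

print(counts.elements)
print(lengths.elements)
```

Because `words` feeds two different transforms, the result is a small directed graph with a branch, not just a linear chain.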

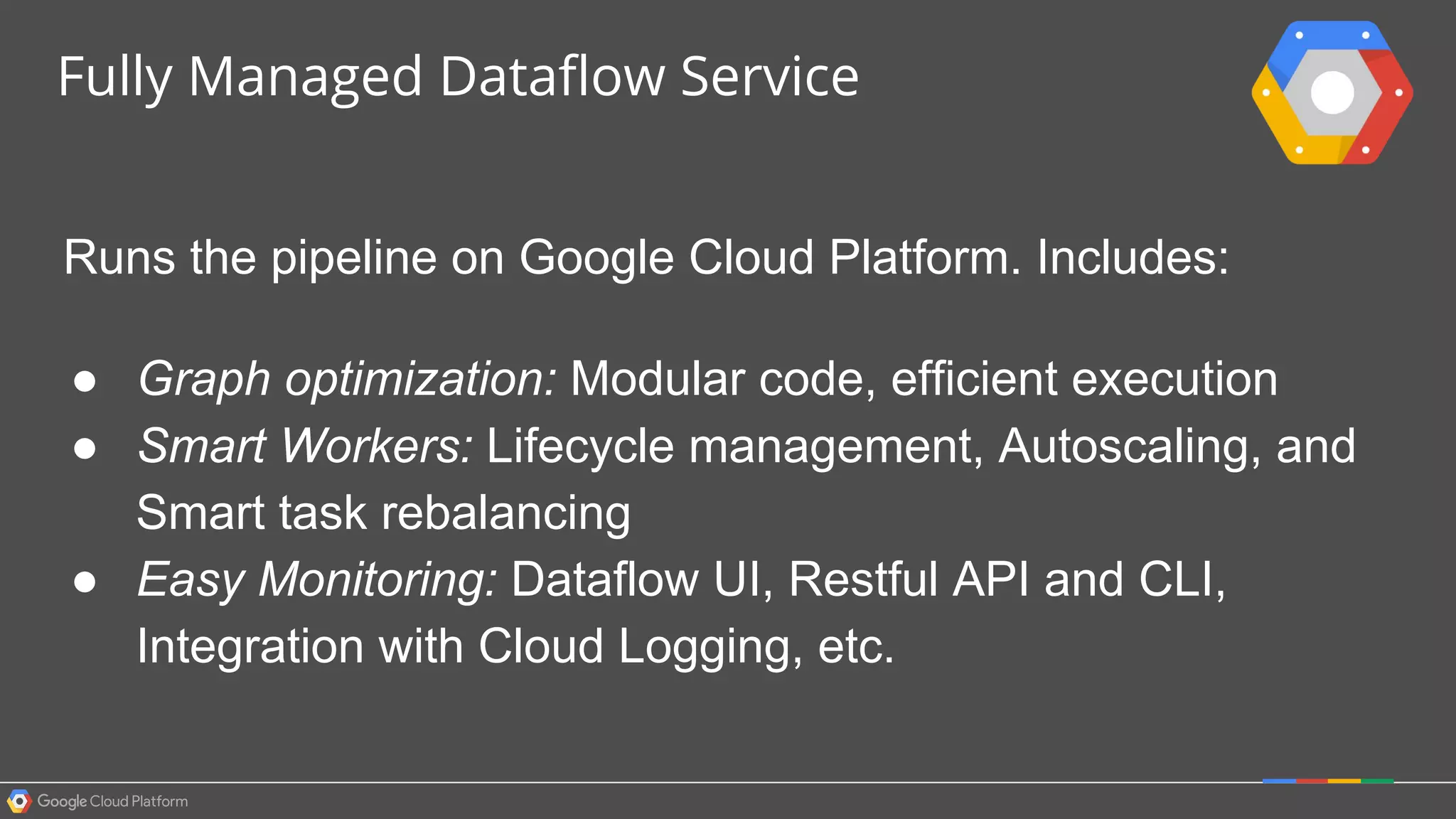

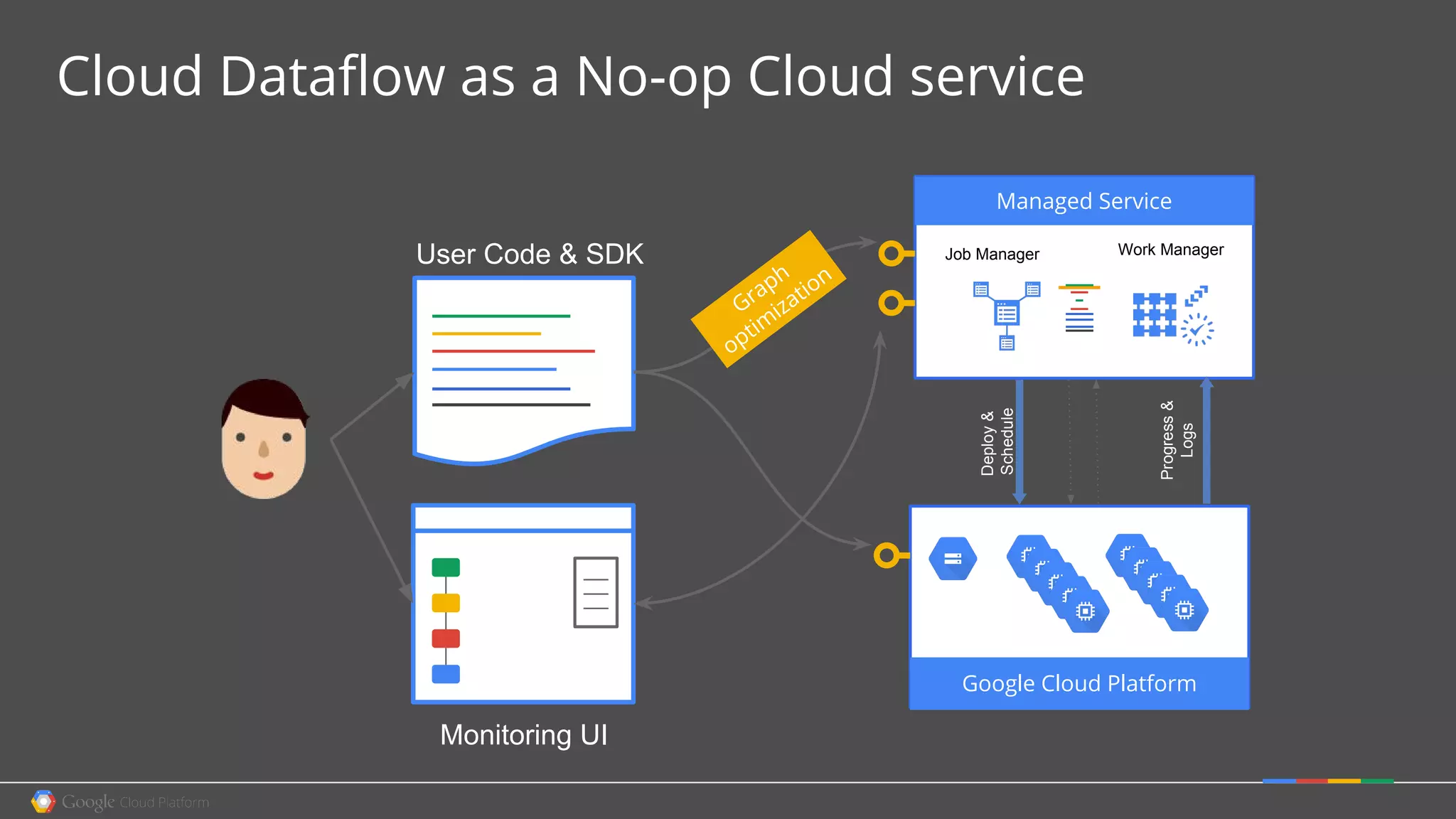

3. The Dataflow service handles graph optimization, scaling of workers, and monitoring of jobs to efficiently execute user-defined pipelines on Google Cloud Platform.
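
The slides do not say which graph optimizations the service applies. As one well-known example of the kind of rewrite an optimizer can make, the sketch below fuses consecutive element-wise steps into a single step so the data is traversed once, with no intermediate collections. The `fuse` helper and the sample data are assumptions made for illustration, not part of any Dataflow API.

```python
from typing import Callable, List


def fuse(steps: List[Callable]) -> Callable:
    """Compose consecutive element-wise steps into one function (hypothetical helper)."""
    def fused(element):
        for step in steps:
            element = step(element)
        return element
    return fused


steps = [str.strip, str.lower, len]
records = ["  Alpha ", "BETA", " gamma  "]

# Unfused execution: one full pass and one intermediate list per step.
unfused = records
for step in steps:
    unfused = [step(e) for e in unfused]

# Fused execution: a single pass that applies all steps to each element.
fused_step = fuse(steps)
fused = [fused_step(e) for e in records]

# Both plans compute the same result; only the execution strategy differs.
assert fused == unfused
print(fused)
```

The user-defined pipeline is unchanged in both cases. Only the execution plan differs, which is why this kind of optimization can be left to the service.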