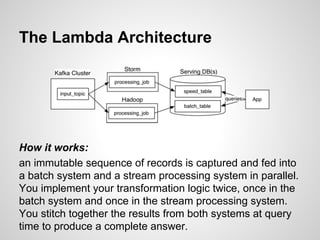

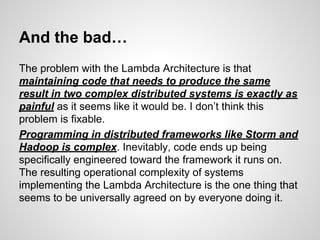

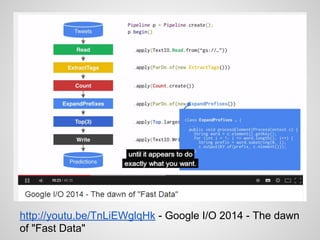

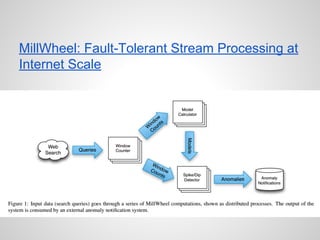

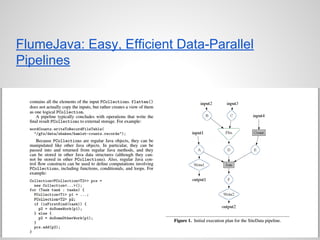

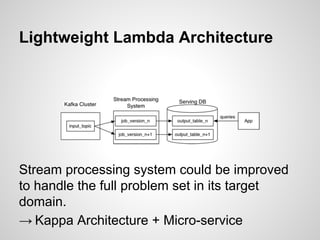

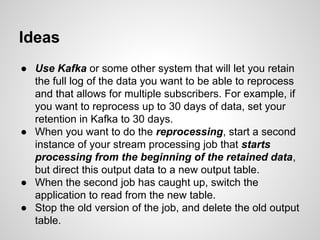

Google Cloud Dataflow is Google's successor to MapReduce. It is built on Google's internal Flume and MillWheel technologies and aims to make flexible analytics pipelines easier to build.

The Lambda Architecture runs the same logic in two places: a batch system and a stream-processing system. The difficulty is that the two implementations live in different frameworks and must be kept in sync. As stream processing matures, the Kappa Architecture and microservices may offer a better approach. Under this approach the raw event stream is retained, and when the processing logic changes, the retained events are replayed through the new version to reprocess them.
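The replay idea behind the Kappa Architecture can be sketched in a few lines. This is a minimal, in-memory illustration under stated assumptions, not a production design. `EventLog` is a hypothetical stand-in for a retained event stream such as a log-based message broker topic. `count_by_user_v1`, `count_by_user_v2` and `run_stream_job` are illustrative names, not part of any framework. The processing logic is written only once, as a streaming step. There is no separate batch code path. "Reprocessing" means replaying the retained log from offset 0 through a new version of that step.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterator, List

Step = Callable[[Counter, dict], Counter]


@dataclass
class EventLog:
    """Hypothetical append-only log standing in for a retained event stream."""
    events: List[dict] = field(default_factory=list)

    def append(self, event: dict) -> None:
        self.events.append(event)

    def read_from(self, offset: int = 0) -> Iterator[dict]:
        # Reading from offset 0 replays the full retained history.
        yield from self.events[offset:]


def count_by_user_v1(state: Counter, event: dict) -> Counter:
    # Version 1 of the (illustrative) logic: count every event per user.
    state[event["user"]] += 1
    return state


def count_by_user_v2(state: Counter, event: dict) -> Counter:
    # Version 2 changes the business rule: count only "click" events.
    if event["type"] == "click":
        state[event["user"]] += 1
    return state


def run_stream_job(log: EventLog, step: Step, offset: int = 0) -> Dict[str, int]:
    # One streaming job applies the step to each event in log order.
    state: Counter = Counter()
    for event in log.read_from(offset):
        state = step(state, event)
    return dict(state)


log = EventLog()
for e in [
    {"user": "a", "type": "click"},
    {"user": "a", "type": "view"},
    {"user": "b", "type": "click"},
]:
    log.append(e)

current = run_stream_job(log, count_by_user_v1)
reprocessed = run_stream_job(log, count_by_user_v2)  # replay with new logic
print(current)      # {'a': 2, 'b': 1}
print(reprocessed)  # {'a': 1, 'b': 1}
```

The events are never discarded, so changing the logic does not require a second batch implementation. The new version is run over the same retained history. In a real deployment, the reprocessed output would typically be written to a separate table and swapped in once it catches up. That step is omitted here.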