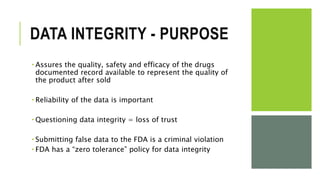

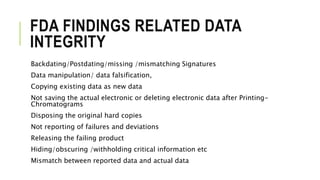

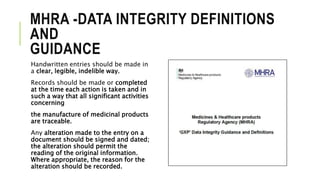

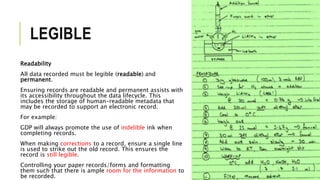

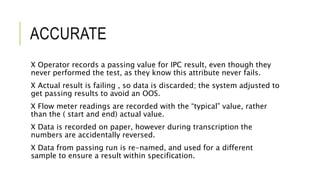

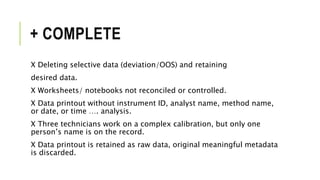

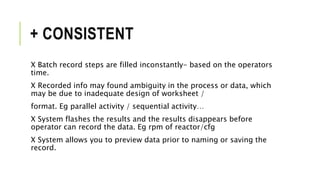

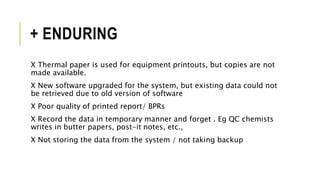

The document outlines the concept of data integrity in clinical pharmacy, emphasizing its importance in maintaining the quality, safety, and efficacy of pharmaceutical products. It covers definitions, principles such as ALCOA (attributable, legible, contemporaneous, original, accurate), implementation practices, regulatory requirements from bodies such as the FDA and MHRA, and the consequences of data integrity breaches. It highlights the need for a robust data integrity policy, training, and prevention systems to keep data reliable and trustworthy throughout the product lifecycle.