CVPR 2016 was held in Las Vegas from June 26 to July 1. The author attended and reports on trends in the papers presented. Deep learning, and CNNs in particular, was widely used for tasks such as object detection, segmentation, pose estimation, and re-identification. Fast R-CNN and Faster R-CNN models were common for object detection, and CNNs combined with CRFs were frequent for segmentation. Highlighted papers included 3D object detection from RGB-D data and dense 3D correspondence between human bodies using CNNs.

![Pose estimation: Convolutional Pose Machine](https://image.slidesharecdn.com/cvpr2016-160722104254/85/CVPR2016-15-320.jpg)

• Pose estimation with CNNs arranged in a cascade

– Each stage outputs a likelihood map for every joint position

• Each successive stage enlarges the region it attends to and outputs a more accurate likelihood map

– Stage t takes the likelihood map from stage t-1 and a feature map as input

Figure 2: Architecture and receptive fields of CPMs. We show a convolutional architecture and receptive fields across layers for a CPM with any T stages. The pose machine [29] is shown in insets (a) and (b), and the corresponding convolutional networks are shown in insets (c) and (d). Insets (a) and (c) …

Panels: (a) Stage 1 and (b) Stage ≥ 2 of the pose machine; (c) Stage 1 and (d) Stage ≥ 2 of the convolutional network (C = convolution, P = pooling; input image h × w × 3, maps of size h′ × w′ × (P + 1)); (e) effective receptive field, growing through 9 × 9, 26 × 26, 60 × 60, 96 × 96, 160 × 160, 240 × 240, 320 × 320, and 400 × 400.
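The growth of the receptive field from stage to stage can be checked with a little arithmetic. The following is a minimal sketch, not the authors' code: the layer order is assumed from the figure labels (the extracted text does not preserve it), the pooling window of 2 with stride 2 is an assumption (the figure only says "2×"), and the names `receptive_field`, `conv`, `POOL`, `stage1`, and `stage2_head` are hypothetical.

```python
def receptive_field(layers, rf=1, jump=1):
    """Propagate receptive-field size through (kind, kernel, stride) layers.

    rf   : receptive-field width (in input pixels) before the first layer
    jump : distance in input pixels between neighbouring units
    Returns the final rf, the final jump, and a per-layer trace.
    """
    trace = []
    for kind, kernel, stride in layers:
        rf += (kernel - 1) * jump  # a k-wide kernel adds (k - 1) steps of size `jump`
        jump *= stride             # striding spaces later units further apart
        trace.append((kind, kernel, rf))
    return rf, jump, trace


def conv(kernel):
    """A stride-1 convolution layer, labelled "C" as in the figure legend."""
    return ("C", kernel, 1)


POOL = ("P", 2, 2)  # assumption: 2x2 window, stride 2 (the figure only says "2x")

# Assumed layer order for stage 1, and for the part of stage 2 that follows
# the concatenation with the previous stage's likelihood map.
stage1 = [conv(9), POOL, conv(9), POOL, conv(9), POOL,
          conv(5), conv(9), conv(1), conv(1)]
stage2_head = [conv(11), conv(11), conv(11), conv(1), conv(1)]

rf1, jump1, trace1 = receptive_field(stage1)
rf2, _, trace2 = receptive_field(stage2_head, rf=rf1, jump=jump1)

# Keep the size after every convolution wider than 1x1, as the figure does.
sizes = [rf for kind, kernel, rf in trace1 + trace2 if kind == "C" and kernel > 1]
print(sizes)  # [9, 26, 60, 96, 160, 240, 320, 400]
```

Under these assumptions the trace reproduces the sizes listed in the figure: stage 1 ends with a 160 × 160 receptive field, and the three 11 × 11 convolutions of the next stage each add 10 × 8 = 80 pixels, reaching 400 × 400.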

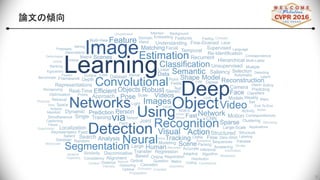

![Stacked Attention Networks for Image Question Answering](https://image.slidesharecdn.com/cvpr2016-160722104254/85/CVPR2016-19-320.jpg)

• A network that introduces an attention layer indicating where in the image to look in order to answer the question

– Stacking attention layers yields a more accurate estimate of where to attend

Paper excerpt shown on the slide (left edge cropped; visible authors: …feng Gao, Li Deng, Alex Smola; … Research, Redmond, WA 98052, USA):

[…] that learn to answer natural language questions from images. SANs use semantic representation of a question as query to search for the regions in an image that are related to the answer. We argue that image question answering (QA) often requires multiple steps of reasoning. Thus, we develop a multiple-layer SAN in which we query an image multiple times to infer the answer progressively. Experiments conducted on four image QA data sets demonstrate that the proposed SANs significantly outperform previous state-of-the-art approaches. The visualization of the attention layers illustrates the progress that the SAN locates the relevant visual clues that lead to the answer of the question layer-by-layer.

Introduction

With the recent advancement in computer vision and in natural language processing (NLP), image question answering (QA) becomes one of the most active research areas [7, 21, 18, 1, 19]. Unlike pure language based QA systems that have been studied extensively in the NLP community [28, 14, 4, 31, 3, 32], image QA systems are designed to automatically answer natural language questions according …

(a) Stacked Attention Network for Image QA. The question ("What are sitting in the basket on a bicycle?") is encoded by a CNN/LSTM into a query, a CNN extracts feature vectors of different parts of the image, the query passes through attention layer 1 and attention layer 2, and a softmax outputs the answer (dogs).

(b) Visualization of the learned multiple attention layers (panels: Original Image, First Attention Layer, Second Attention Layer). The stacked attention network first focuses on all referred concepts, e.g., bicycle, basket and objects in the basket (dogs) in the first attention layer and then further narrows down the focus in the second layer and finds out the answer dog.
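The idea of querying the image several times can be illustrated with a small sketch. This is a simplified stand-in, not the SAN architecture itself: a plain dot product replaces the learned scoring layers, which the slide does not spell out, and the function names and toy feature values are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_layer(regions, query):
    """One attention step: weight image regions by the query, then refine the query.

    Assumption: similarity is a dot product; the real model uses learned layers.
    """
    scores = [sum(r * q for r, q in zip(region, query)) for region in regions]
    weights = softmax(scores)
    # Attention-weighted sum of the region feature vectors.
    attended = [sum(w * region[d] for w, region in zip(weights, regions))
                for d in range(len(query))]
    # The "+" nodes in the figure: add the attended vector to the query.
    refined = [a + q for a, q in zip(attended, query)]
    return weights, refined


def stacked_attention(regions, query, num_layers=2):
    """Apply the attention step repeatedly, feeding each refined query forward."""
    all_weights = []
    for _ in range(num_layers):
        weights, query = attention_layer(regions, query)
        all_weights.append(weights)
    return all_weights, query


# Toy data: three image regions with 2-dimensional features and one question vector.
regions = [[2.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
question = [1.0, 0.5]

layer_weights, final_query = stacked_attention(regions, question)
for i, weights in enumerate(layer_weights, start=1):
    print(f"attention layer {i}:", [round(w, 3) for w in weights])
```

In this toy example the second layer places more weight on the first region than the first layer does, mirroring the narrowing of focus described in caption (b). In a full model the refined query would feed a softmax classifier over candidate answers.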