The document proposes a cost-aware approach to virtual machine placement across geo-distributed data centers using Bayesian networks. It first designs a Bayesian network that models expert knowledge on cloud infrastructure management. It then uses the Goal-Question-Metric (GQM) method to define measures for the decision criteria based on the Bayesian network's outputs. Finally, it applies multi-criteria decision analysis to build a utility function for virtual machine allocation and migration decisions. The approach was evaluated with a cloud simulation framework on real workload and infrastructure data and showed improvements of up to 69% in total cost compared to baseline algorithms.

![12th International Conference on Economics of Grids, Clouds, Systems, and Services (GECON’15), Romania, 15-17 Sep, 2015

Introduction

• Fast growth of the Cloud Computing industry: over 300% in the last 6 years

• 86% of companies use more than one type of Cloud Computing service

• 30 million Cloud servers geographically distributed all over the world

• Huge environmental impact of Cloud Computing (1-2% of the world's electricity usage)

• Energy usage plans are not optimal, although cost-efficient solutions are possible

• Challenges:

– high Quality-of-Service (QoS) expectations of Cloud customers

– Cloud providers face a cost vs. QoS trade-off

Introduction Approach Evaluation Conclusion

Fig. 1: Windows Azure CDN Locations [1]

[1] https://www.simple-talk.com/cloud/development/an-introduction-to-windows-azure-%28part-2%29/](https://image.slidesharecdn.com/gecon2015bayesian-network2015-09-15v5soodeh-150924180918-lva1-app6892/85/Cost-Aware-Virtual-Machine-Placement-across-Distributed-Data-Centers-using-Bayesian-Networks-2-320.jpg)

![Contributions

• An approach to reduce cloud operating costs by applying VM placement across geo-distributed DCs:

– Leverages cloud expert knowledge and models it in a Bayesian Network (BN)

– The outputs of the BN are used in the proposed VM allocation and consolidation algorithms

• A cloud simulation framework, CloudNet [1], with the following features:

– Simulation of cloud infrastructure

– Utilization and generation of various application workloads

– Usage of geo-distributed DCs

– Management of cooling systems

– Usage of synthetic and real weather data

– Scheduling of power outages

– SLA-aware simulation

– Prediction of resource usage

[1] https://github.com/dmitrygrig/CloudNet/

](https://image.slidesharecdn.com/gecon2015bayesian-network2015-09-15v5soodeh-150924180918-lva1-app6892/85/Cost-Aware-Virtual-Machine-Placement-across-Distributed-Data-Centers-using-Bayesian-Networks-5-320.jpg)

![Challenges of managing Cloud data centers

• Geo-distributed DCs

– Dynamic electricity market

– Various time zones

– Different weather conditions (e.g., temperature)

• Frequent power outages

• VM migration depends on dynamic factors such as VM RAM size and bandwidth

• Trade-off (multi-criteria decision problem): reduction of the DCs' energy cost vs. customer satisfaction in terms of QoS

Fig. 1: Windows Azure CDN Locations [1]

[1] https://www.simple-talk.com/cloud/development/an-introduction-to-windows-azure-%28part-2%29/

Example: Microsoft Azure

• Operates in several regions around the world

• Electrical downtime: from 6 min/year in Japan to 20 h/year in Brazil

• Energy prices differ by more than a factor of two between some locations

• Day/night energy price rates

• Outdoor temperatures from -35 °C to +35 °C

](https://image.slidesharecdn.com/gecon2015bayesian-network2015-09-15v5soodeh-150924180918-lva1-app6892/85/Cost-Aware-Virtual-Machine-Placement-across-Distributed-Data-Centers-using-Bayesian-Networks-6-320.jpg)
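The figures on this slide can be turned into rough numbers. The sketch below is not taken from the slides: it converts yearly downtime into an availability fraction and gives a lower bound on VM migration time as RAM size divided by bandwidth. The function names, the 1000 Mbit/s link, and the simplification that ignores pages dirtied during live migration are all assumptions made for illustration.

```python
HOURS_PER_YEAR = 365 * 24  # non-leap year


def availability(downtime_hours_per_year: float) -> float:
    """Fraction of the year during which grid power is available."""
    return 1.0 - downtime_hours_per_year / HOURS_PER_YEAR


def migration_seconds(ram_mb: float, bandwidth_mbit_s: float) -> float:
    """Lower bound on migration time: one full copy of the VM's RAM.

    Assumption: pages dirtied during a live migration are ignored,
    so a real migration would take at least this long.
    """
    if bandwidth_mbit_s <= 0:
        raise ValueError("bandwidth must be positive")
    return ram_mb * 8 / bandwidth_mbit_s  # MB -> Mbit, then divide by Mbit/s


if __name__ == "__main__":
    # Downtime figures from the slide: 6 min/year (Japan), 20 h/year (Brazil).
    print(f"Japan:  {availability(6 / 60):.6f}")
    print(f"Brazil: {availability(20):.6f}")
    # Hypothetical 1000 Mbit/s link, 768 MB VM.
    print(f"Migration: {migration_seconds(768, 1000):.3f} s")
```

Even this simplified model shows why migration cost is a dynamic factor: doubling the VM's RAM or halving the available bandwidth doubles the estimate.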

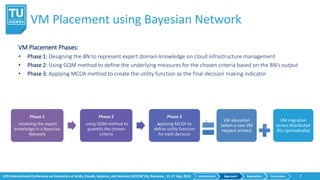

![Phase 2: using the GQM method

• definition of underlying measures for the chosen criteria $g_i(a)$

• based on the BN’s output

| Criterion | Low | Middle | High |
| --- | --- | --- | --- |
| Power outage | 1 | 0.3 | 0.1 |
| Energy price | 1 | 0.7 | 0.5 |

Table 4: Criteria mapped to values in the [0,1] interval using GQM

](https://image.slidesharecdn.com/gecon2015bayesian-network2015-09-15v5soodeh-150924180918-lva1-app6892/85/Cost-Aware-Virtual-Machine-Placement-across-Distributed-Data-Centers-using-Bayesian-Networks-12-320.jpg)
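A minimal sketch of how the Table 4 mapping could feed a utility function. The score values come from the slide. Everything else is an assumption for illustration: the weighted-sum form of the utility, the equal weights, the data center names, and the idea of feeding in a single state label per criterion (a Bayesian network would normally output a probability distribution over states). The slides do not give the utility function used in the paper.

```python
# Scores from Table 4: BN output state -> value in [0, 1].
SCORES = {
    "power_outage": {"low": 1.0, "middle": 0.3, "high": 0.1},
    "energy_price": {"low": 1.0, "middle": 0.7, "high": 0.5},
}


def criterion_score(criterion: str, level: str) -> float:
    """Look up the [0, 1] score for one criterion state."""
    return SCORES[criterion][level.lower()]


def utility(levels: dict, weights: dict) -> float:
    """Weighted-sum utility (assumed form), normalized by total weight."""
    total = sum(weights.values())
    return sum(w * criterion_score(c, levels[c]) for c, w in weights.items()) / total


def best_dc(candidates: dict, weights: dict) -> str:
    """Return the candidate data center with the highest utility."""
    return max(candidates, key=lambda name: utility(candidates[name], weights))


if __name__ == "__main__":
    weights = {"power_outage": 0.5, "energy_price": 0.5}  # hypothetical weights
    candidates = {  # hypothetical data centers and states
        "dc_a": {"power_outage": "low", "energy_price": "high"},
        "dc_b": {"power_outage": "middle", "energy_price": "low"},
    }
    for name, levels in candidates.items():
        print(name, round(utility(levels, weights), 3))
    print("chosen:", best_dc(candidates, weights))
```

With equal weights, the steep penalty on power outages in Table 4 (0.3 for "Middle") outweighs the milder penalty on energy price (0.5 for "High"), so `dc_a` is preferred in this example.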

![Evaluation input data

Real data traces used:

• temperature (http://forecast.io/)

• cooling modes (Mechanical, Air, Mixed)

• power outage statistics

• electricity prices

• PM power specifications (SPECpower benchmark)

Fig. 8a: Temperature data traces

Fig. 7: Power outage statistics [1]

[1] http://earlywarn.blogspot.co.at/2013/05/international-power-outage-comparisons.html

Fig. 8b: Cooling modes

Fig. 8c: Energy prices](https://image.slidesharecdn.com/gecon2015bayesian-network2015-09-15v5soodeh-150924180918-lva1-app6892/85/Cost-Aware-Virtual-Machine-Placement-across-Distributed-Data-Centers-using-Bayesian-Networks-15-320.jpg)

![Evaluation Setup

• Simulation period: 1 month (January 1, 2013 – February 1, 2013)

• Interval: 1 hour

• VM specs: 1000 MIPS, 768 MB RAM

• PM specs: 3000 MIPS, 4 GB RAM (HP ProLiant ML110 G3)

Table 6: Evaluation setup configuration

[1] http://www.spec.org/power_ssj2008/results/res2011q1/power_ssj2008-20110127-00342.html

Fig. 9: PM power specifications [1]](https://image.slidesharecdn.com/gecon2015bayesian-network2015-09-15v5soodeh-150924180918-lva1-app6892/85/Cost-Aware-Virtual-Machine-Placement-across-Distributed-Data-Centers-using-Bayesian-Networks-16-320.jpg)
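The setup figures imply two quantities that are easy to check: the number of simulation steps and the number of VMs a single PM can host. The short sketch below derives both. It assumes that the end date is exclusive, that 4 GB means 4096 MB, and that resources are not overcommitted. None of these assumptions is stated on the slide, and the function names are illustrative.

```python
from datetime import datetime, timedelta


def simulation_steps(start: datetime, end: datetime, step: timedelta) -> int:
    """Number of whole intervals in [start, end)."""
    return (end - start) // step


def max_vms_per_pm(pm_mips: int, pm_ram_mb: int, vm_mips: int, vm_ram_mb: int) -> int:
    """VMs that fit on one PM when neither CPU nor RAM is overcommitted."""
    return min(pm_mips // vm_mips, pm_ram_mb // vm_ram_mb)


if __name__ == "__main__":
    steps = simulation_steps(
        datetime(2013, 1, 1), datetime(2013, 2, 1), timedelta(hours=1)
    )
    print("simulation steps:", steps)
    # PM: 3000 MIPS, 4 GB RAM (taken as 4096 MB); VM: 1000 MIPS, 768 MB RAM.
    print("VMs per PM:", max_vms_per_pm(3000, 4096, 1000, 768))
```

Under these assumptions CPU, not RAM, is the binding resource: RAM alone would allow five VMs per PM, while the MIPS budget allows only three.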