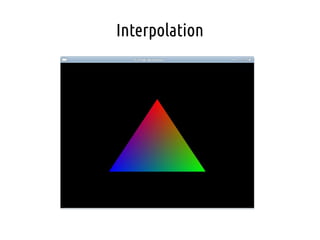

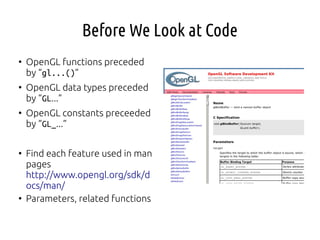

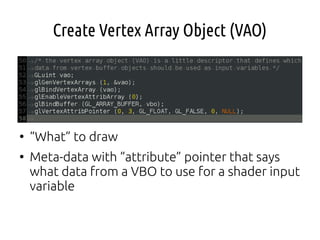

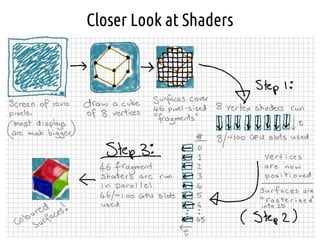

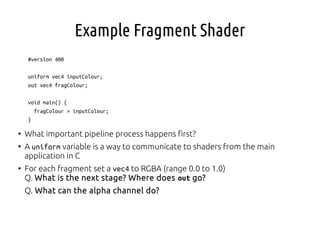

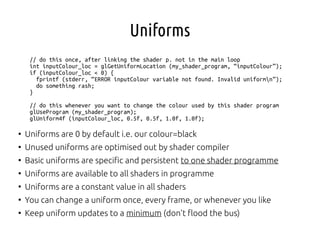

Here are a few key points about adding vertex colors to the example:

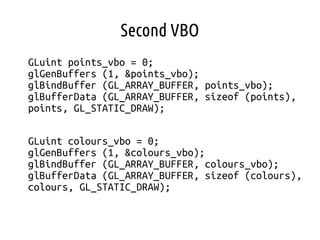

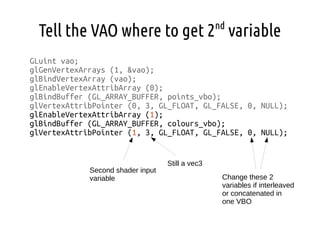

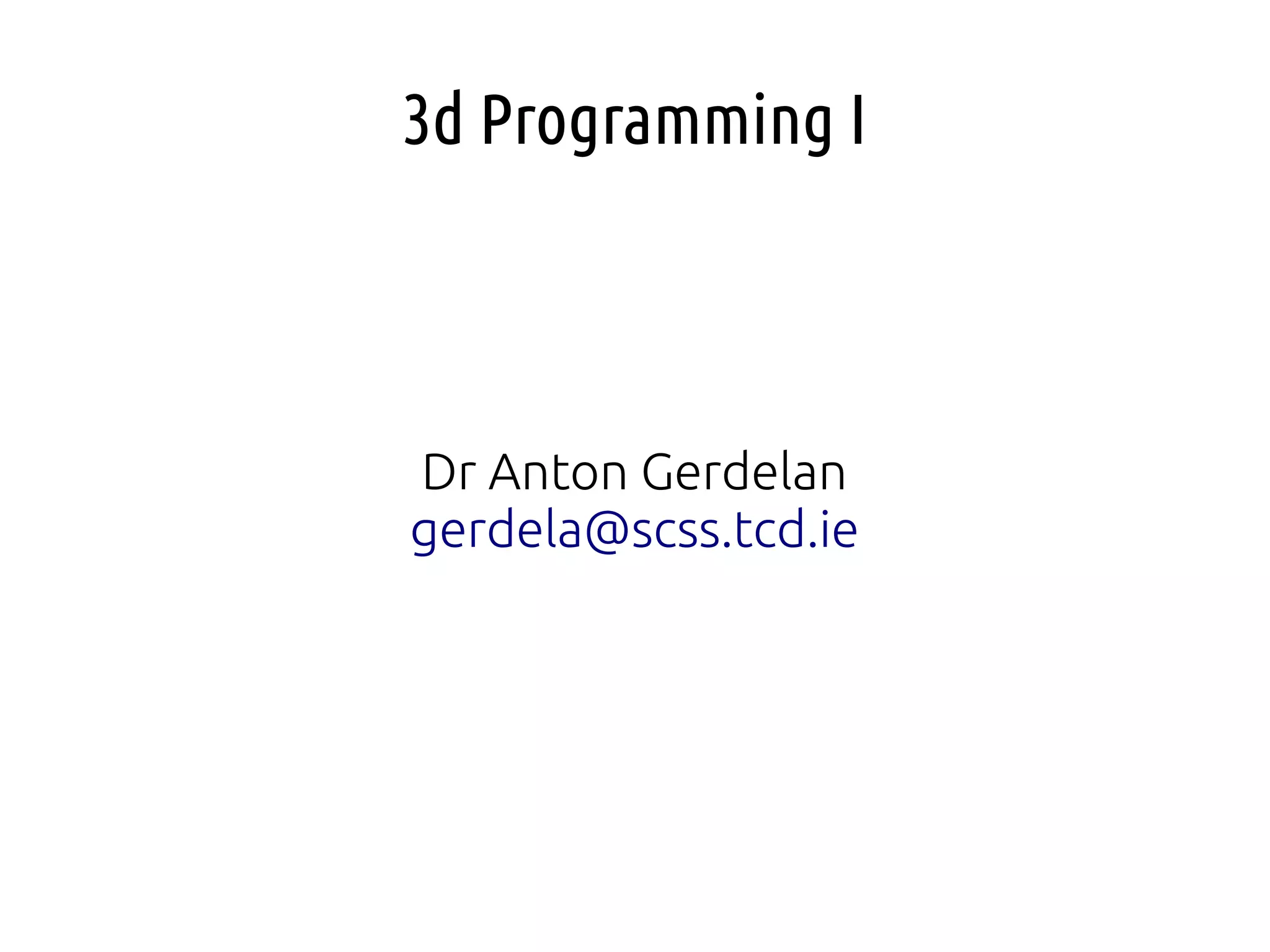

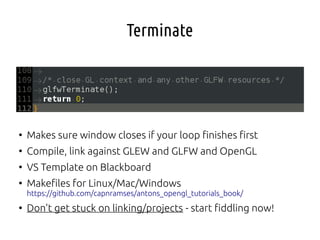

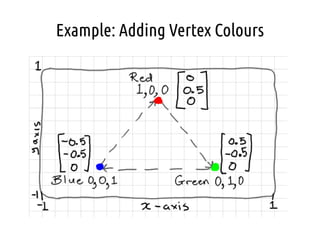

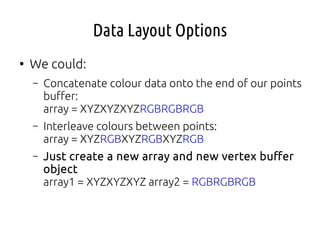

- Storing the color data in a separate buffer is simpler to set up than concatenating or interleaving it with the position data. Each buffer holds one tightly packed attribute, so no stride or offset arithmetic is needed.
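To make the layout difference concrete, here is a minimal sketch in plain C, with no OpenGL calls. It compares the stride and offset values one would hand to `glVertexAttribPointer` for the two arrangements. The `AttribLayout` and `InterleavedVertex` types and both helper functions are hypothetical names introduced only for this illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper type: the stride and offset one would pass to
   glVertexAttribPointer for a 3-float attribute. */
typedef struct {
    size_t stride; /* bytes from one vertex to the next; 0 means tightly packed */
    size_t offset; /* byte offset of the attribute's first component */
} AttribLayout;

/* Interleaved alternative: position and colour share one buffer. */
typedef struct {
    float position[3];
    float colour[3];
} InterleavedVertex;

/* Separate buffers: each buffer holds exactly one attribute, so both the
   position buffer and the colour buffer use stride 0 and offset 0. */
AttribLayout separate_layout(void) {
    AttribLayout layout = {0, 0};
    return layout;
}

/* Interleaved buffer: attribute_index 0 is position, 1 is colour. */
AttribLayout interleaved_layout(int attribute_index) {
    assert(attribute_index == 0 || attribute_index == 1);
    AttribLayout layout;
    layout.stride = sizeof(InterleavedVertex);
    layout.offset = (attribute_index == 0)
                        ? offsetof(InterleavedVertex, position)
                        : offsetof(InterleavedVertex, colour);
    return layout;
}
```

With separate buffers every attribute uses the same trivial layout. With an interleaved buffer the colour attribute needs a stride of six floats and an offset of three floats, which is the extra bookkeeping the bullet above refers to.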

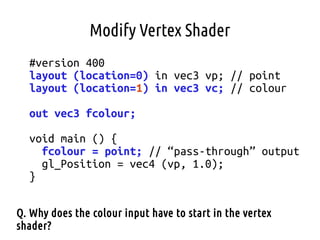

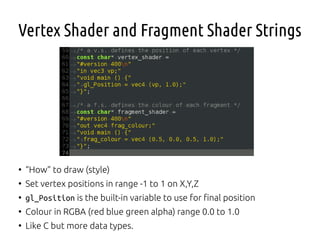

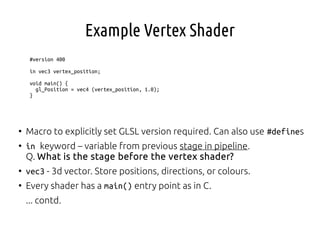

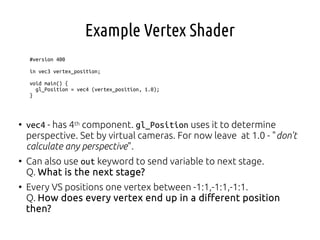

- The vertex shader now has inputs for both the position (vp) and color (vc) attributes.

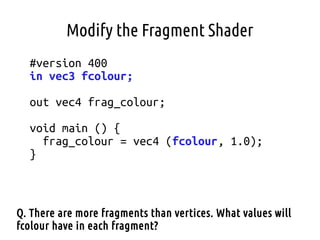

- The color is passed through as an output (fcolour) to the fragment shader.

- The position is still written to gl_Position, which determines where each vertex ends up on screen.

- The color input has to start in the vertex shader because that is the only stage that can read per-vertex attributes such as color. The vertex shader does not interpolate anything itself: it writes one color per vertex, and the rasterizer interpolates that output across the primitive before the fragment shader reads it.
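The points above can be sketched as a pair of GLSL shaders held in C string constants. The names `vp`, `vc`, and `fcolour` come from the text. The `#version 410` line, the attribute locations, and the `frag_colour` output name are assumptions made for this sketch. The `interpolate_colour` function is a hypothetical CPU-side illustration of the weighted blend applied between the two stages, ignoring perspective correction.

```c
#include <assert.h>
#include <stddef.h>

/* Vertex shader: reads position (vp) and colour (vc), passes the colour on. */
const char *const vertex_shader_src =
    "#version 410\n"
    "layout (location = 0) in vec3 vp;\n" /* assumed location */
    "layout (location = 1) in vec3 vc;\n" /* assumed location */
    "out vec3 fcolour;\n"
    "void main () {\n"
    "  fcolour = vc;\n"
    "  gl_Position = vec4 (vp, 1.0);\n"
    "}\n";

/* Fragment shader: receives the interpolated colour. */
const char *const fragment_shader_src =
    "#version 410\n"
    "in vec3 fcolour;\n"
    "out vec4 frag_colour;\n" /* assumed output name */
    "void main () {\n"
    "  frag_colour = vec4 (fcolour, 1.0);\n"
    "}\n";

/* Illustration only: blends three vertex colours with barycentric weights
   (w0 + w1 + w2 is expected to be 1), ignoring perspective correction. */
void interpolate_colour(const float c0[3], const float c1[3], const float c2[3],
                        float w0, float w1, float w2, float out[3]) {
    assert(out != NULL);
    for (int i = 0; i < 3; i++) {
        out[i] = w0 * c0[i] + w1 * c1[i] + w2 * c2[i];
    }
}
```

At a vertex the weights are (1, 0, 0), so the fragment gets exactly that vertex's color. Halfway along an edge it gets an even mix of the two end colors.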

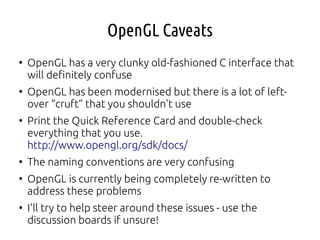

![OpenGL Global State Machine

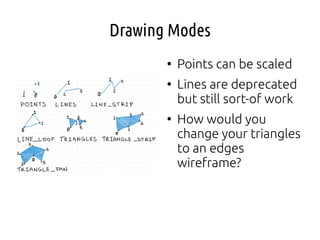

●

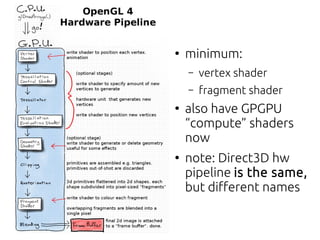

The OpenGL interface uses an architecture called a

“global state machine”

●

Instead of doing this sort of thing:

Mesh myMesh;

myMesh.setData (some_array);

opengl.drawMesh (myMesh);

●

in a G.S.M. we do this sort of thing [pseudo-code]:

unsigned int mesh_handle;

glGenMesh (&mesh_handle); // okay so far, just C style

glBindMesh (mesh_handle); // my mesh is now “the” mesh

// affects most recently “bound” mesh

glMeshData (some_array);

●

How this might cause a problem later?](https://image.slidesharecdn.com/013dproganton-170623132654/85/Computer-Graphics-Lecture-01-3D-Programming-I-12-320.jpg)
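One way to see how a global state machine can cause trouble later is to mock the pattern in plain C. Everything in this sketch is hypothetical: the `mock_*` functions stand in for the slide's pseudo-code and are not real OpenGL calls. The hazard it shows is that any function can silently change what is bound.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_MESHES 8

/* Hypothetical mock of a global state machine. */
static const float *mesh_data[MAX_MESHES]; /* data attached to each handle */
static unsigned int mesh_count = 0;        /* handles issued; 0 means "none" */
static unsigned int bound_mesh = 0;        /* the hidden global "current" mesh */

unsigned int mock_gen_mesh(void) {
    assert(mesh_count + 1 < MAX_MESHES);
    return ++mesh_count;
}

void mock_bind_mesh(unsigned int handle) {
    assert(handle <= mesh_count);
    bound_mesh = handle;
}

/* Affects whichever mesh was bound most recently. */
void mock_mesh_data(const float *data) {
    assert(bound_mesh != 0);
    mesh_data[bound_mesh] = data;
}

const float *mock_get_data(unsigned int handle) {
    assert(handle <= mesh_count);
    return mesh_data[handle];
}

/* A helper written elsewhere that leaves its own mesh bound on return. */
unsigned int make_other_mesh(const float *data) {
    unsigned int handle = mock_gen_mesh();
    mock_bind_mesh(handle);
    mock_mesh_data(data);
    return handle;
}
```

If the caller binds its own mesh, calls `make_other_mesh`, and then calls `mock_mesh_data`, the data lands on the helper's mesh, not the caller's. Nothing at the call site shows that the binding changed, which is why code written against this style tends to rebind before every operation.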

![File Naming Convention](https://image.slidesharecdn.com/013dproganton-170623132654/85/Computer-Graphics-Lecture-01-3D-Programming-I-40-320.jpg)

File Naming Convention

- Upgrade the template: load shader strings from text files.
- Post-fix the file names with:
  - .vert: vertex shader
  - .frag: fragment shader
  - .geom: geometry shader
  - .comp: compute shader
  - .tesc: tessellation control shader
  - .tese: tessellation evaluation shader
- This allows you to use a tool like the Glslang reference compiler to check for [obvious] errors.
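Loading shader strings from text files could look roughly like the following sketch, which uses only the C standard library. Both function names are hypothetical, and the stage names returned are plain strings chosen for illustration, not OpenGL enums.

```c
#include <assert.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/* Maps a file name to a shader stage name using the extensions listed above.
   Returns NULL for an unrecognised or missing extension. */
const char *shader_stage_from_filename(const char *filename) {
    static const char *const table[][2] = {
        {".vert", "vertex"},
        {".frag", "fragment"},
        {".geom", "geometry"},
        {".comp", "compute"},
        {".tesc", "tessellation control"},
        {".tese", "tessellation evaluation"}};
    assert(filename != NULL);
    const char *dot = strrchr(filename, '.');
    if (dot == NULL) {
        return NULL;
    }
    for (size_t i = 0; i < sizeof table / sizeof table[0]; i++) {
        if (strcmp(dot, table[i][0]) == 0) {
            return table[i][1];
        }
    }
    return NULL;
}

/* Reads a whole text file into a NUL-terminated heap buffer.
   Returns NULL on failure; the caller frees the result. */
char *load_shader_source(const char *path) {
    FILE *fp = fopen(path, "rb");
    if (fp == NULL) {
        return NULL;
    }
    char *text = NULL;
    if (fseek(fp, 0, SEEK_END) == 0) {
        long size = ftell(fp);
        if (size >= 0 && fseek(fp, 0, SEEK_SET) == 0) {
            text = malloc((size_t)size + 1);
            if (text != NULL) {
                size_t got = fread(text, 1, (size_t)size, fp);
                text[got] = '\0'; /* terminate at the bytes actually read */
            }
        }
    }
    fclose(fp);
    return text;
}
```

The returned string can then be handed to the shader compilation step in place of a hard-coded literal, and the same file can be checked on its own with an external validator.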

![Second Array](https://image.slidesharecdn.com/013dproganton-170623132654/85/Computer-Graphics-Lecture-01-3D-Programming-I-50-320.jpg)

Second Array

    GLfloat points[] = {
       0.0f,  0.5f, 0.0f,
       0.5f, -0.5f, 0.0f,
      -0.5f, -0.5f, 0.0f
    };

    GLfloat colours[] = {
      1.0f, 0.0f, 0.0f,
      0.0f, 1.0f, 0.0f,
      0.0f, 0.0f, 1.0f
    };