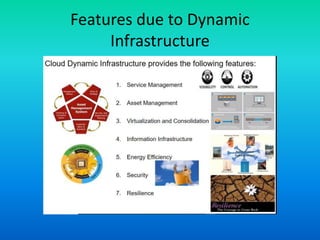

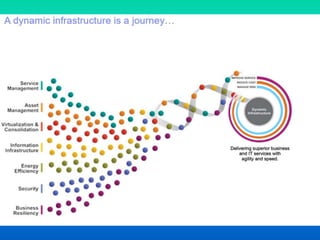

The document discusses dynamic infrastructure in cloud computing, focusing on service management, asset management, virtualization, energy efficiency, security, and business resiliency. It highlights benefits such as rapid, scalable deployment, significant cost reductions, and improved performance metrics. It also emphasizes the need for well-planned business continuity and disaster recovery strategies, noting that many companies lack effective measures.