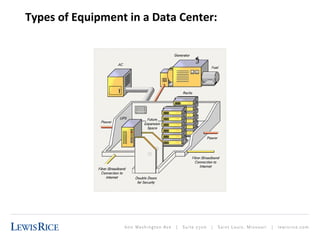

The document examines data center leases and service level agreements, focusing on the features that set data centers apart from other leased facilities: the need for uninterrupted power, climate control, data connectivity, physical security, and structural integrity. It outlines the trends driving the evolution of data centers, including the growth of cloud computing, virtualization, and rising consumer expectations for connectivity and data access. Throughout, it emphasizes how these factors shape lease terms and the technological requirements of data centers.