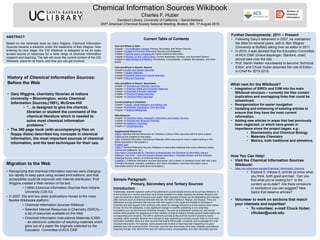

Chemical Information Sources Wikibook poster

Poster on the Chemical Information Sources Wikibook, presented at the 250th American Chemical Society National Meeting, Boston, MA on 17 August 2015.

Recommended

A Strategy for Sharing Your Research: Make Your Work Open Access

This document discusses how to make research work openly accessible. It recommends publishing in open access journals, which make articles freely available online, or depositing work in an open access repository like FAU Digital Library. Open access allows easy sharing of research. Examples are given of FAU researchers who have published in open access journals in various fields. The institutional repository at FAU aims to manage and disseminate digital materials created at the university to preserve and provide access to scholarship. Questions about open access can be directed to the contact provided.

High water raises all boats

The document discusses the University of Kentucky Libraries' efforts to build a digital repository by leveraging partnerships across campus. It outlines how the library advocated for a campus-wide repository model in 2007 and began populating the UKnowledge repository. As new data management requirements emerged from funders like NSF and NIH, the library explored technical options and settled on a microservices-based approach using Hydra, Archivematica, and CDL microservices. The library's roles include technical leadership, metadata, and data management plans, while IT provides storage and infrastructure and research provides policies and proposal support. The initial scope is serving research data needs, with potential future expansion to an enterprise repository.

UKSG Conference 2016 Breakout Session - Discovery and linking integrity – do ...

UKSG: connecting the knowledge community

How serendipitous is discovery for users? Like many a teenager, OpenURL linking can behave inappropriately. What can we do to smooth out the bumps on the road and what other tools are available? This breakout session will walk swiftly through linking to discovery targets, from OpenURL 0.1/1.0, to Index-Enhanced Direct Linking, Link 2.0 and beyond …

1476-4598-3-23

1) Molecular Cancer is an open access journal that aims to maximize the exchange of scientific information by making all of its content freely available.

2) Open access has several broad benefits including universal accessibility of articles online, copyright retention by authors, and permanent archiving of articles which can increase citations and dissemination.

3) Molecular Cancer accepts articles through a peer review process and publishes them online along with supporting materials, allowing for fast publication and wider dissemination of research.

Publishing Open Research Data

Presentation given at SciDataCon 2014, Data Papers and Their Applications workshop, New Delhi, November 2 2014.

Chemistryand web2 ma walker 2 5 10

This document discusses the transition of chemistry information from traditional closed "Web 1.0" resources to more open "Web 2.0" collaborations. It outlines initiatives like Wikipedia's Chemistry Project, ChemSpider, and Open Notebook Science that openly share chemical data. While traditional peer review and publishers may be threatened, open approaches allow broader validation and updating of information over time. The future of chemistry information sharing lies in open, collaborative websites and databases that motivate experts and nonexperts alike to contribute data.

Using wikipedia as a source of chemical information

Webinar for the Chemical Information Division of the American Chemical Society. Describes the types of chemical data in Wikipedia and how these are uploaded and maintained by the Wikipedia community.

Data Citation: A Critical Role for Publishers

The document discusses the critical role publishers play in data citation. It emphasizes the importance of publishers establishing clear guidelines for citing data, training copy editors to ensure data is properly cited, promoting the use of data papers to incentivize data sharing and reuse, and making data citations machine-readable through XML tagging or RDF to facilitate discovery and analysis of cited data.

UKSG Conference 2016 Breakout Session - Of Libraries and Labs: effecting user...

UKSG: connecting the knowledge community

This document summarizes Alex Humphreys' presentation on JSTOR Labs and their work on user-driven innovation projects with libraries and other partners. The presentation included three case studies: JSTOR Snap, a tool for capturing snippets from articles; JSTOR Sustainability, which developed topic pages on sustainability; and Understanding Shakespeare, a collaboration with Folger Shakespeare Library. Humphreys discussed JSTOR Labs' approach of rapid prototyping through "flash builds", gathering user feedback throughout the process, and continually iterating projects. He highlighted lessons for libraries and publishers in adopting more innovative practices.

Preparing Data for (Open) Publication

The document discusses best practices for preparing data for open publication. It recommends thinking openly and planning early by creating detailed data management plans. It provides examples of repositories like GenBank, ClinicalTrials.gov, FlyBase, Figshare, and Dryad that accept different types of data. The document emphasizes documenting data thoroughly with metadata and standards and following ethical guidelines for sharing and preserving data in the long term.

Building a scalable, sustainable service with OJS

Ubiquity Press needed a scalable platform to host multiple scholarly journals. They chose to modify the open source Open Journal Systems (OJS) software rather than build a new system or use expensive commercial options. Key modifications included improving scalability to support many journals, integrating external services like typesetting and metrics tracking, fixing issues, and adding new features to enhance functionality and customization. This resulted in a flexible system that can efficiently host a large number of journals with individualized needs.

NIH Public Access Policy

The document summarizes the NIH Public Access Policy, which requires researchers who receive NIH funding to submit final peer-reviewed manuscripts to PubMed Central. It discusses how the policy benefits researchers, patients, and the public. It also outlines how libraries can help by advising authors on copyright issues, assisting with publisher agreements, and coordinating compliance efforts. The library's role is presented as helping relieve burdens on researchers while supporting open access to the biomedical literature.

Ensuring the Scholarly Record is Kept Safe: Measured Progress with Serials

EDINA, University of Edinburgh

1) The document discusses roles and responsibilities in ensuring permanent access to scholarly works.

2) It notes that while access to works has improved online, continuity of access is challenged as content can disappear from the web.

3) The document reports on measured progress in archiving journal content through organizations like CLOCKSS and Portico, but notes that only 19% of identified online journals are currently being preserved.

NIH Public Access Policy: Ready to Publish

This document provides information about the NIH Public Access Policy, which requires authors to submit final peer-reviewed manuscripts from NIH-funded research to PubMed Central no later than 12 months after publication. It discusses finding copyright transfer information, potential examples of publisher policies, and the four methods for submitting manuscripts to PubMed Central - which include submission by the publisher, author, or directly to NIH. Additional resources are also provided.

Introducing PRIME:Publisher, Repository and Institutional Metadata Exchange

"Introducing PRIME:Publisher, Repository and Institutional Metadata Exchange" – Brian Hole, Ubiquity Press.

OpenAIRE Interoperability Workshop - University of Minho, Braga, Portugal, 8 February 2013

NIH Public Access Policy

The document summarizes the NIH Public Access Policy, which requires researchers receiving NIH funding to submit final peer-reviewed manuscripts to PubMed Central within 12 months of official publication. It outlines the three steps for compliance: 1) managing copyright by checking agreements and modifying if needed, 2) submitting manuscripts to the NIH system if required, and 3) including the PMCID in future NIH proposals. Benefits of complying include increased exposure and citation of work. Publishers have varying levels of support for submitting manuscripts.

Data Journals & Data Papers

Talk given at the “Shareable by Design: Making research data available for access” workshop, London School of Hygiene and Tropical Medicine, November 12 2014

DiFiore: JSTOR & Portico: Committed to preserving the scholarly record , Bing...

This document discusses JSTOR and Portico's efforts to preserve the scholarly record in digital format. It notes that scholarly communication is shifting from paper to digital, and libraries are moving to electronic-only collections. JSTOR digitizes journal backfiles to improve access and reduce storage costs. Portico serves as a "dark archive" to ensure permanent access to born-digital scholarly content. Both work to ensure the long-term preservation of and access to scholarly works in service of the academic community.

SPARC Overview and Update, October 2008

SPARC (Scholarly Publishing and Academic Resources Coalition) is an alliance of academic institutions that aims to provide alternatives to commercial scholarly journals and encourage open access to research. It works to enhance access to peer-reviewed scholarship through publisher partnerships, incubation of new publications, advocacy, and education. SPARC benefits researchers through high-quality, lower-cost access to research and benefits publishers through providing new models for scholarly communication. It partners with various open access journals and resources to provide alternatives to traditional subscription-based publications.

NIH Public Access policy

Librarians' role in NIH public access policy, its benefits, steps to compliance, author rights, and working with publishers.

Open Access Overview, Faculty Senate Library Committee, 10/21/08

Open Access publishing refers to making scholarly journal articles freely available online for anyone to read and use. It emerged as the internet made sharing content cheaper and easier than print. Traditionally, subscriptions funded journals but prices rose sharply in the 1990s limiting access. Now, some journals charge authors fees to make articles open while others use a hybrid model combining open access and subscription content, sometimes with embargo periods for new articles. This shift affects libraries who may see fewer subscriptions and more journals combining models, though embargo lengths will vary between journals and author choice.

Preserving the Integrity of the Scholarly Record

Presentation delivered by Peter Burnhill at the Edinburgh Digital Preservation meeting at the National Library of Scotland on 16 February 2015.

The Journal of Open Archaeology Data and PRIME: Incentivising Open Data Archi...

An introduction to the Journal of Open Archaeology Data (JOAD) and the Publisher, Repository and Institutional Metadata Exchange (PRIME) project, by Brian Hole. Presentation given at the 7th World Archaeological Congress (WAC 7), at the Dead Sea, Jordan, on 18 January 2013.

Sustainable, Successful Open Data Publication

Slides from a presentation given by Brian Hole from Ubiquity Press at the 9th International Digital Curation Conference, San Francisco, February 25 2014.

Obtaining Credit for Research Software

A talk from 11 February 2013, part of the University College London “Research Programming in Practice” seminar series. Brian Hole, founder of Ubiquity Press and creator of the Journal of Open Research Software, speaks about a thorny problem for computationally-focused researchers: how do you best build a publication record and enhance your academic reputation when your primary output as a researcher is software? The Journal of Open Research Software is one potential solution, associating a software entity with a peer-reviewed journal publication.

The Shift to Open Access Publishing

The document discusses the shift to open access publishing. It provides an overview of Ubiquity Press, which publishes open access. It then discusses the history and models of open access publishing, including gold and green open access. Government policies increasingly mandate open access for publicly funded research. While large publishers initially opposed open access mandates, researcher support for open access has grown.

UKSG Conference 2017 Breakout - Advancing the Research Paper of the Future: c...

UKSG: connecting the knowledge community

The vision for ‘the Research Paper of the Future’ promises to make scholarship more discoverable, transparent, inspectable, reusable and sustainable. Yet new forms of scientific output also challenge authors, librarians, publishers and service providers to register, validate, disseminate and preserve them as elements of the scholarly record. What constitutes authorship in a collaborative process of GitHub pull requests and commits? When to capture, reference and preserve dynamic data sets that change over time? How to package and render complex executable collections for review and delivery? This session considers key challenges in operationalising the Research Paper of the Future from the perspectives of a publisher, a library administrator and a scientist/developer of a collaborative authoring platform.

PRIME: Publisher, Repository & Institutional Metadata Exchange

A short introduction to the PRIME (Publisher, Repository and Institutional Metadata Exchange) project, by Brian Hole, at the JISC Managing Research Data programme launch workshop in Nottingham, UK, October 25th 2012.

SAA 2014 session 703

This SAA 2014 (session 703) http://sched.co/1hIEcE2 lightning talk highlights challenges and solutions to promoting access and discovery of web archives. Speakers discussed descriptive strategies towards integrating web archives with EAD finding aids, MARC records in library catalogs, and other discovery methods and tools.

finde datasets repository.pptx

This review demonstrates that using these websites can provide researchers with valuable sources of data and research, facilitating access to current literature and specialized scientific content. For optimal results, diversifying sources of research and using multiple search engines based on need and specialization is recommended.

Similar to Chemical Information Sources Wikibook poster

Libraries, collections, technology: presented at Pennylvania State University...

Library collections are changing in a network environment. This presentation considers how collections are being reconfigured, looks at research support services, and explores the shift from the purchased/licensed collection to the facilitated collection.

The commitment of arabic sites in the field of libraries and information that...

This document analyzes 106 Arabic websites related to libraries and information to assess their compliance with the Dublin Core metadata schema. It finds that university library websites make up the largest portion at 35.8%. Most sites neglect updating. It recommends increased cooperation between sites to design according to Dublin Core, make interfaces available in Arabic, and develop specialized sites like library networks and catalogs. Previous studies found Arabic library sites lack bookmarks, metadata use, and presence in global indexes due to neglect and lack of English interfaces.

How Libraries Use Publisher Metadata - Crossref Community Webinar

The document provides an overview of how libraries use publisher-provided metadata in library discovery systems. It discusses how libraries obtain MARC records and direct linking metadata from publishers and suppliers to incorporate content into library discovery services. It also describes how openURL linking and link resolvers allow libraries to provide access to publisher content through library discovery interfaces and services. Accurate metadata is important for successful linking to full text content.

Wikipedia and Libraries: Increasing your Library’s Visibilityi

How a partnership between The Wikipedia Library, OCLC, and 4 partner Universities is helping libraries expose their collections online.

ACRL/NY 2013 poster: Assessment of the Effectiveness of the Human Rights Web ...

Presented by: Anna Perricci, Web Archiving Project Librarian, and Pamela Graham, Director, Center for Human Rights Documentation & Research at Columbia University Libraries / Information Services

Event: ACRL / NY December 6, 2013

Poster: Assessment of the Effectiveness of the Human Rights Web Archive @ Columbia University (plus some information about web archiving collaborations)

http://hrwa.cul.columbia.edu/

Web archiving encompasses several challenges that we face in the midst of the radical changes that are the focus of the ACRL-NY 2013 Symposium. Like many other interdisciplinary, wide-ranging and highly networked fields, human rights scholarship relies extensively on web-based information, but much of this content is at risk of disappearing within a relatively short time.

To meet the needs of the scholarly community, the Human Rights Web Archive @ Columbia University (HRWA) was created. The HRWA is a searchable collection of archived copies of human rights websites created by non-governmental organizations, national human rights institutions, tribunals and individuals.

In this poster we will detail our early progress in the assessment of the effectiveness of the HRWA through user testing and a review of scholarly publishing in journals focusing on human rights research. We will also discuss how keeping users actively engaged is at the core of our evolving collecting policy for web archives. In sharing our experiences with a collection development policy centered in an active and agile feedback loop, we hope to shed light on strengths and opportunities for growth including via collaborative initiatives.

An introduction to Wikipedia and cataloguing issues

An introduction to Wikipedia and cataloguing issuesScottish Library & Information Council (SLIC), CILIP in Scotland (CILIPS)

An overview of Wikipedia and its potential for libraries, also covering cataloguing issues. Part of the Cataloguing and Indexing Group in Scotland (CIGS) seminar "Toto, I've got a feeling we're not in Kansas anymore": metadata issues and Web2.0 services.Strategies for LIS research

The document discusses various topics related to library and information science (LIS) research including focus areas, literature search tools, importance of research design, and citations patterns. It provides examples of pioneering LIS researchers in India and their contributions. It outlines potential areas for theoretical and applied LIS research and lists several online resources and gateways relevant to LIS research.

Internet-based Tools for Communication and Collaboration in Chemistry

Internet-based Tools for Communication and Collaboration in ChemistryUS Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

Web-based technologies coupled with a drive for improved communication between scientists has resulted in the proliferation of scientific opinion, data and knowledge at an ever-increasing rate. The availability of tools to host wikis and blogs has provided the necessary building blocks for scientists with only a rudimentary understanding of computer software science to communicate to the masses. This newfound freedom has the ability to speed up research and sharing of results, develop extensive collaborations, conduct science in public, and in near-real time. The technologies supporting Chemistry, while immature, are fast developing to support chemical structures and reactions, analytical data support, and integration to related data sources via supporting software technologies. Communication in chemistry is already witnessing a new revolution.Chemical Information Sources Wikibook

A description of the history and purposes of the Chemical Information Sources Wikibook, presented at the 250th American Chemical Society National Meeting in Boston, MA on 19 August 2015.

How Libraries Use Publisher Metadata Redux (Steven Shadle)

This document summarizes how libraries use publisher-provided metadata to provide access to content. It describes how metadata is used in the library catalog, link resolvers, and discovery systems. Publisher metadata must be accurate and distributed to various library systems and standards to effectively support discovery and access for users.

Using wikis in library liaison work: overview & trends

Gives introduction to wikis and overview of their application to library liaison work within medical settings.

NERM 2006: Introduction to the future of scholarly communication

The document summarizes key developments in scholarly communications over time including the evolution from printed manuscripts to digital formats online. It discusses factors driving this change such as rising journal costs, the growth of the internet, and advocacy for open access. It outlines groups affected by these changes and trends toward making more government-funded research openly available online through initiatives like institutional repositories and open access publishing models.

201399627 kovacs-collection-cyberspace

homework help,online homework help,online tutors,online tutoring,research paper help,do my homework,

https://www.homeworkping.com/

A Brief Review Of Studies Of Wikipedia In Peer-Reviewed Journals

This document summarizes and reviews peer-reviewed studies that have been conducted on Wikipedia. It discusses research on how and why Wikipedia works as a collaborative project, assessments of Wikipedia's reliability and content accuracy, uses of Wikipedia as a data source, and applications of the Wikipedia model. The studies examined range from introductory reviews of Wikipedia to more in-depth analyses of editorial processes, motivations of contributors, reliability comparisons with other encyclopedias, and uses of Wikipedia content and data across various domains. Overall, the document provides a comprehensive overview of the breadth of academic research that has been done on Wikipedia since its inception.

OUR space: the new world of metadata

Presented at Industry Symposium, IFLA, 14 August 2008. Describes a new environment of global information services using metadata, taxonomies, and knowledge organization. Makes the case that these changes will permanently affect what it means "to catalog" materials for the purpose of connecting citizens, students and scholars to the information they need, when and where they need it.

Institutional repositories notes

An institutional repository is defined as an online archive for collecting, preserving, and disseminating digital copies of the intellectual output of an institution. It aims to provide open access, long-term preservation, and promotion of scholarship. Early repositories focused on specific subjects like science, but now institutions house a variety of material like faculty research, student work, data sets, and more to increase visibility and collaboration both on and off campus. While concerns exist around costs and policies, repositories provide benefits like increased citations and sharing of knowledge if properly supported and promoted to the academic community.

"In the Early Days of a Better Nation": Enhancing the power of metadata today...

"In the Early Days of a Better Nation": Enhancing the power of metadata today...National Information Standards Organization (NISO)

NISO Two Day Virtual Conference:

Using the Web as an E-Content Distribution Platform:

Challenges and Opportunities

Oct 21-22, 2014

John Mark Ockerbloom, Digital Library Architect and Planner, University of PennsylvaniaCambridge university library ess update for ucs

The document summarizes developments in Cambridge University Library's transition to more digital resources and services. It discusses how the library has shifted significant portions of its materials budget to online journals and databases. It also describes the library's implementation of a new "resource discovery" platform to help users more easily search and access the library's diverse digital collections, which had previously been scattered across different systems. Additionally, the document outlines the library's "COMET" project to publish a large portion of its metadata as open linked data on the semantic web.

Chemical Information Sources Wikibook poster

Chemical Information Sources Wikibook
Charles F. Huber
Davidson Library, University of California – Santa Barbara
250th American Chemical Society National Meeting, Boston, MA
17 August 2015

ABSTRACT

Based on the landmark book by Gary Wiggins, Chemical Information Sources became a wikibook under the leadership of Ben Wagner. Now entering its next stage, the CIS Wikibook is designed to be an open access source of resources for a wide range of chemical information research and teaching. The talk will cover the current content of the CIS Wikibook, plans for its future, and how you can get involved.

History of Chemical Information Sources: Before the Web

• Gary Wiggins, chemistry librarian at Indiana University – Bloomington, wrote Chemical Information Sources (McGraw-Hill, 1991).
• “…is designed to give the chemist, librarian or student the command of the chemical literature which is needed to solve most chemical information problems.”
• The 360-page book (with accompanying files on floppy disks) described key concepts in chemical information, the most important sources of chemical information, and the best techniques for their use.

Migration to the Web

• Recognizing that chemical information sources were changing too rapidly for revised print editions to keep pace, and that accessibility could be improved with Internet distribution, Prof. Wiggins created a Web version of his text.
• 1994: Chemical Information Sources from Indiana University (CIS-IU)
• In 2007, this and two sister publications moved to the more flexible Wikibooks platform:
    • Chemical Information Sources Wikibook
    • Selected Internet Resources in Chemistry (SIRCh) – a list of resources available on the Web.
    • Chemical Information Instructional Materials (CIIM) – an electronic collection of teaching materials which grew out of a paper file originally collected by the Education Committee of ACS CINF.

Further Developments: 2011 – Present

• After retiring in 2007, Gary maintained the sites for several years; A. Ben Wagner (University at Buffalo) took over as editor in 2011.
• In 2014, it was decided that the Education Committee of ACS CINF (Grace Baysinger, Stanford, chair) should take over the site.
• Prof. Martin Walker volunteered to become Technical Editor, and Chuck Huber assumed the role of Editor-in-Chief for 2015-2018.

What next for the Wikibook?

• Integration of SIRCh and CIIM into the main Wikibook structure – currently the files contain duplication and overlapping links that could be streamlined.
• Reorganization for easier navigation.
• Updating and enhancing existing articles to ensure that they have the most current information.
• Adding new articles in areas that had previously been neglected, or which have grown in importance since the project began, e.g.:
    • Biochemistry and Chemical Biology
    • Materials Chemistry
    • Metrics, both traditional and altmetrics

How You Can Help!

• Visit the Chemical Information Sources Wikibook!
    • https://en.wikibooks.org/wiki/Chemical_Information_Sources
• Explore it, critique it, and let us know what you think, both good and bad. Can you find what you’re looking for? Is the content up-to-date? Are there omissions or corrections you can suggest? New topics that deserve articles?
• Volunteer to work on sections that match your interests and expertise!
• To volunteer, e-mail Chuck Huber, cfhuber@ucsb.edu

Current Table of Contents

How and Where to Start
Chapter 1 The Publication Process: Primary, Secondary, and Tertiary Sources
Chapter 2 Guides to Chemical Information Sources and Databases
Chapter 3 General Search Strategies for Online Chemical Information
Chapter 4 Keeping Up and Looking Back: Current Awareness, Reviews, and Document Delivery
Chapter 5 Deep Background Reading: Dictionaries, Encyclopedias, Treatises, Monographs, and Other Books

How and Where to Search: General
Chapter 6 Author and Citation Searches
Chapter 7 Subject Searches
Chapter 8 Chemical Name and Formula Searches
Chapter 9 Structure Searches

How and Where to Search: Specialized
Chapter 10 Synthesis and Reaction Searches
Chapter 11 Chemical Safety and Toxicology Searches
Chapter 12 Analytical Chemistry Searches
Chapter 13 Physical Property Searches
Chapter 14 Chemical Patent Searches

Communicating in Chemistry
Chapter 15 Blogs, Social Networks, and Mailing Lists
Chapter 16 Molecular Visualization Tools and Sites
Chapter 17 Science Writing Aids

Miscellaneous
Chapter 18 Chemical History, Biography, Directories, and Industry Sources
Chapter 19 Teaching and Studying Chemistry
Chapter 20 Careers in Chemistry
Chapter 21 Cheminformatics

Supplemental Resources
• SIRCh: Selected Internet Resources for Chemistry (links to Web resources with the same subject outline as the chapters on this page)
• CIIM: Chemical Information Instructional Materials (Web resources for more in-depth training on the topics discussed in the chapters)
• Problem Sets
• CRSD: Chemical Reference Sources Database (a searchable database that covers reference books, commercial databases, etc.)
• Information Competencies for Chemistry Undergraduates: the Elements of Information Literacy (Wikibook, July 2012- ; from the Special Libraries Association, Chemistry Division and the American Chemical Society, Division of Chemical Information)
• CHMINF-L: Chemical Information Sources Discussion List (a listserv in existence since 1991 with many chemistry librarians, chemists, publishers, and others interested in chemical information; has a searchable archive of all posts since its inception)

Sample Paragraph: Primary, Secondary and Tertiary Sources

Introduction

Traditionally, scientific research work is first published in journal articles (known as the primary literature). It is then picked up in various secondary tools whose purpose is to better organize the primary literature and make the retrieval of items of interest much easier. Most important of these are the abstracting and indexing (A&I) services such as Chemical Abstracts Service, the Web of Science, Reaxys, and Scopus. There are differences among secondary A&I services both with respect to the depth and breadth of coverage of chemistry and with respect to the swiftness with which the average reference to a new primary work makes its way into the A&I databases.

A very significant change in scientific publishing is now underway. Innovations such as the American Chemical Society's "As soon as publishable" process for new journal articles make possible the appearance of Web editions of original research articles several weeks before the corresponding print versions. The shift to electronic journals as the archival record of science is nearly complete. Many chemistry libraries have decided to stop subscribing to printed journals.

With so much new information available, there are other sources that help to sift through, condense, and re-package the most important discoveries. For example, some people write reviews of what has been happening in a given scientific area over a period of time. Of course, once the new discoveries have been validated and deemed important enough, they will find their way into various books, encyclopedias, and other secondary sources.