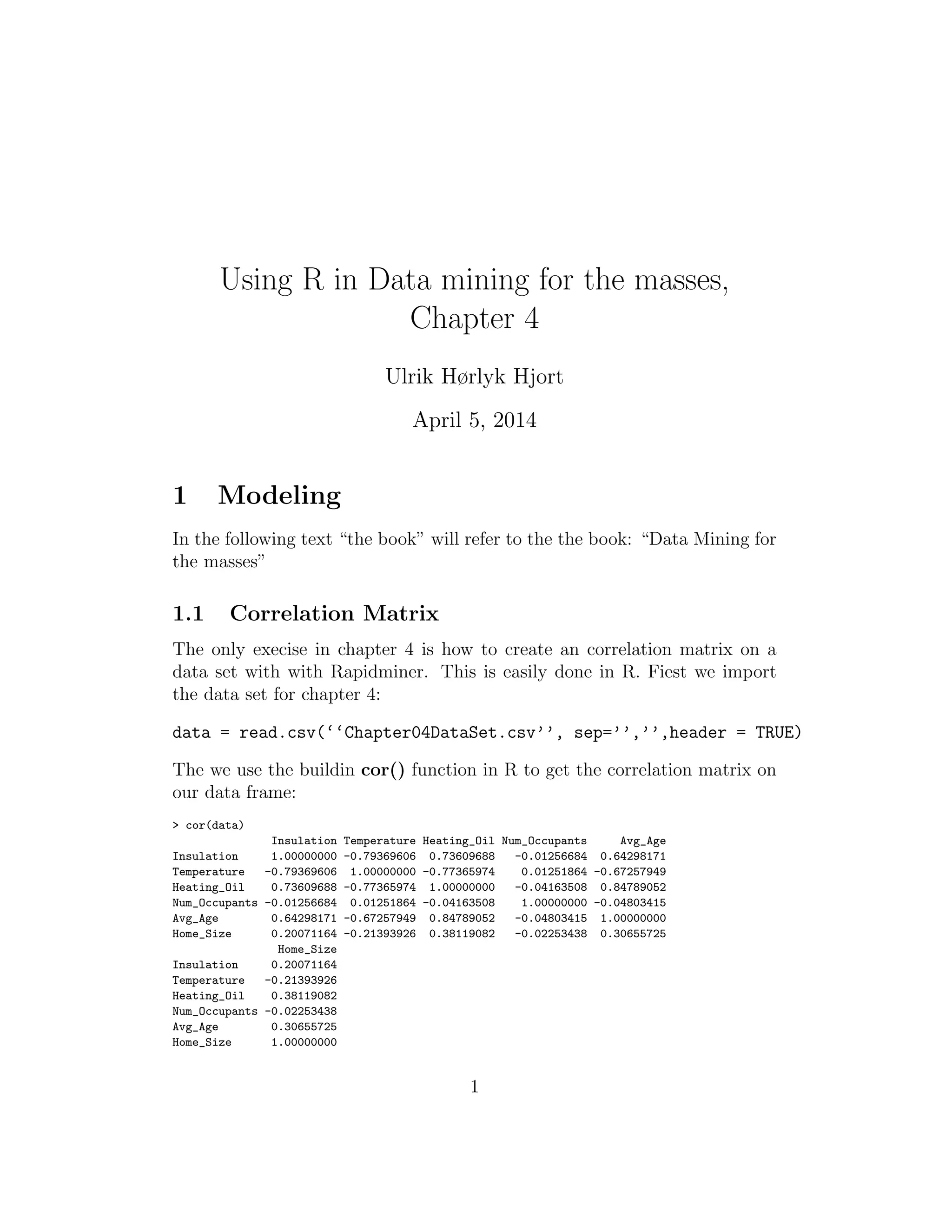

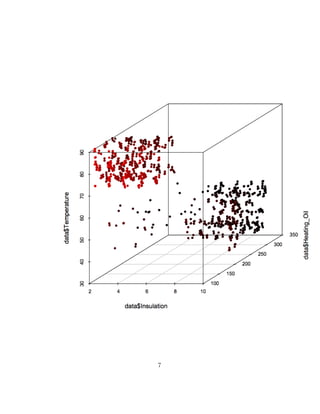

This document shows how to use R to reproduce the correlation analysis from chapter 4 of the book "Data Mining for the Masses". It covers importing the dataset into R, generating a correlation matrix with the built-in cor() function that matches the example in the book, and drawing 2D and 3D scatter plots of the dataset's attributes to illustrate the correlations. The plots can be customized with jitter, color, and other graphical parameters.
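As a rough illustration of that workflow, here is a minimal sketch in R. It does not use the book's dataset: the data frame `df` and its columns `x1`, `x2`, and `x3` are hypothetical stand-ins generated at random, and the file name in the commented-out `read.csv()` call is a placeholder. The 3D plot assumes the add-on `scatterplot3d` package, which is one common choice and not necessarily the one the document uses, so that step is skipped if the package is not installed.

```r
# With a real file, the import step might look like this
# (the file name is a placeholder, not the book's actual file):
# df <- read.csv("chapter04-dataset.csv")

# Hypothetical stand-in data so the sketch runs on its own.
set.seed(1)
n  <- 100
x1 <- sample(1:10, n, replace = TRUE)   # discrete attribute, values overlap
x2 <- x1 + rnorm(n)                     # positively related to x1
x3 <- -x2 + rnorm(n)                    # negatively related to x2
df <- data.frame(x1 = x1, x2 = x2, x3 = x3)

# Correlation matrix (Pearson by default) for all numeric columns.
cor_matrix <- cor(df)
print(round(cor_matrix, 3))

# 2D scatter plot; jitter() spreads out overlapping discrete values,
# and col/pch are ordinary graphical parameters.
plot(jitter(df$x1), df$x2,
     col = "steelblue", pch = 19,
     xlab = "x1 (jittered)", ylab = "x2",
     main = "x1 vs. x2")

# 3D scatter plot, only if the optional scatterplot3d package is available.
if (requireNamespace("scatterplot3d", quietly = TRUE)) {
  scatterplot3d::scatterplot3d(df$x1, df$x2, df$x3,
                               color = "steelblue", pch = 19,
                               xlab = "x1", ylab = "x2", zlab = "x3")
}
```

Jitter is applied only to the plotted x-coordinates, so the correlation matrix is still computed from the unmodified values.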