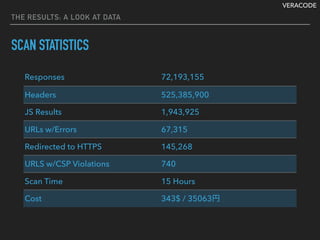

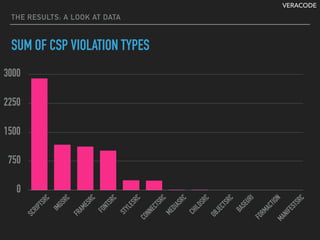

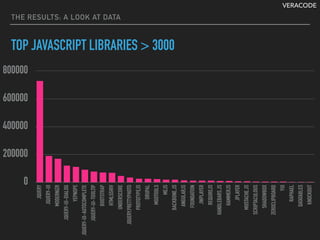

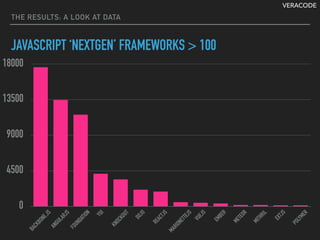

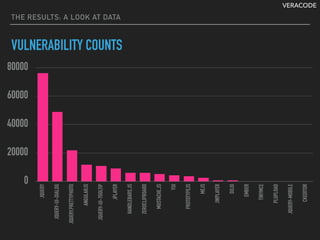

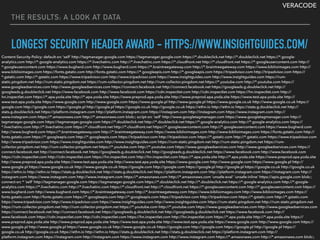

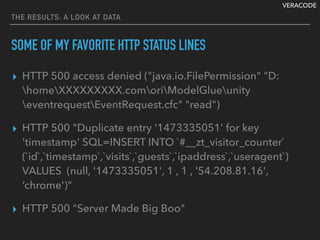

This presentation describes the development at Veracode of a scalable fingerprinting tool built on Chromium automation, covering its architecture, its components, and the challenges encountered over the years. Key elements include a message queue for distributing URLs, browser automation for capturing data, several code-level solutions to database storage challenges, and the results of the scanning project. The closing results report the number of security header scans and violations found, along with an analysis of prevalent JavaScript libraries and frameworks.

![VERACODE

AROUND THE WEB IN 80 HOURS: SCALABLE FINGERPRINTING WITH CHROMIUM AUTOMATION

HELPFUL NSQ FEATURES

// Create consumer

c.urlConsumer, err = nsq.NewConsumer(job.Topics["url"],

creeper_types.UrlChannel, cfg)

// Process up to numBrowsers messages concurrently (7)

c.urlConsumer.AddConcurrentHandlers(

nsq.HandlerFunc(c.processUrls),

numBrowsers)

// Job taking too long to handle/process a message?

msg.Touch() // notify we are still working on this message

// Need to requeue because chrome crashed?

msg.RequeueWithoutBackoff(-1)

// Need to change max # of inflight messages?

c.urlConsumer.ChangeMaxInFlight(c.getInflightCount())

](https://image.slidesharecdn.com/cb16dawsonja-161109051202/85/CB16-80-Web-by-Isaac-Dawson-8-320.jpg)

![VERACODE

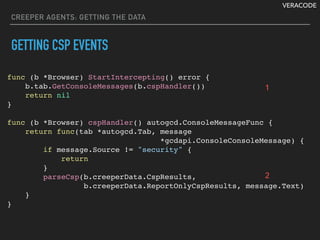

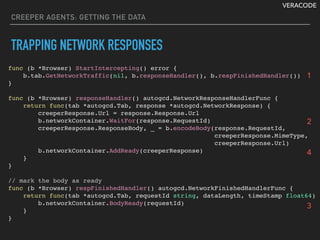

CREEPER AGENTS: GETTING THE DATA

GOOGLE CHROME REMOTE DEBUGGER

▸ Huge definition files: browser_protocol.json and js_protocol.json

{

"version": { "major": "1", "minor": "1" },

"domains": [{ "domain": "Inspector",

"hidden": true,

"types": [],

"commands": [{

"name": "enable",

"description": "Enables inspector domain...",

"handlers": ["browser", "renderer"]

}],

"events": [{

"name": "evaluateForTestInFrontend",

"parameters": [ … ]

}]

}]

}](https://image.slidesharecdn.com/cb16dawsonja-161109051202/85/CB16-80-Web-by-Isaac-Dawson-16-320.jpg)
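
Because the protocol definition files are plain JSON, a client can read them with a handful of structs. The sketch below is an illustration under stated assumptions, not the tooling used in the talk: the struct names and `methodNames` are hypothetical, only the fields visible on the slide are modeled, and the elided `parameters` list is replaced by an empty array so the sample parses.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// protocol models only the fields shown on the slide.
type protocol struct {
	Version struct {
		Major string `json:"major"`
		Minor string `json:"minor"`
	} `json:"version"`
	Domains []domain `json:"domains"`
}

type domain struct {
	Domain   string  `json:"domain"`
	Commands []named `json:"commands"`
	Events   []named `json:"events"`
}

type named struct {
	Name string `json:"name"`
}

// sample mirrors the slide excerpt; "parameters" is elided there and is
// left empty here purely as a placeholder.
const sample = `{
  "version": { "major": "1", "minor": "1" },
  "domains": [{
    "domain": "Inspector",
    "hidden": true,
    "types": [],
    "commands": [{ "name": "enable", "handlers": ["browser", "renderer"] }],
    "events": [{ "name": "evaluateForTestInFrontend", "parameters": [] }]
  }]
}`

// methodNames lists every command and event as "Domain.name", the form
// used on the wire by the remote debugging protocol.
func methodNames(raw []byte) ([]string, error) {
	var p protocol
	if err := json.Unmarshal(raw, &p); err != nil {
		return nil, err
	}
	var out []string
	for _, d := range p.Domains {
		for _, c := range d.Commands {
			out = append(out, d.Domain+"."+c.Name)
		}
		for _, e := range d.Events {
			out = append(out, d.Domain+"."+e.Name)
		}
	}
	return out, nil
}

func main() {
	names, err := methodNames([]byte(sample))
	if err != nil {
		fmt.Println("parse error:", err)
		return
	}
	for _, n := range names {
		fmt.Println(n)
	}
}
```

Unknown fields such as `hidden` and `types` are ignored by `encoding/json`, which keeps a sketch like this small even though the real files are large.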

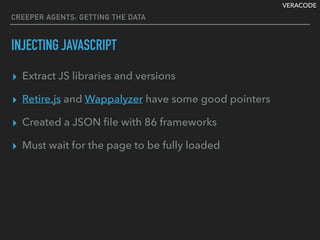

![VERACODE

CREEPER AGENTS: GETTING THE DATA

INJECTING JAVASCRIPT - THE QUERIES

{

"libraries": [ {

"url": "http://jquery.com/",

"key": "jquery",

"statement": "jQuery.fn.jquery"

}, {

"url": "https://jquerymobile.com/",

"key": "jquery-mobile",

"statement": "jQuery.mobile.version"

}, {

"url": "http://www.embeddedjs.com/",

"key": "embeddedjs 1.0",

"statement": "(typeof EJS === \"function\" && typeof EJS.Buffer === \"function\") ? \"ejs 1.0\" : \"\""

}, {

"url": "http://www.embeddedjs.com/",

"key": "embeddedjs 0.x",

"statement": "(typeof EJS === \"function\" && typeof EjsScanner === \"function\") ? \"ejs 0.x\" : \"\""

} ]

}](https://image.slidesharecdn.com/cb16dawsonja-161109051202/85/CB16-80-Web-by-Isaac-Dawson-22-320.jpg)
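
A query file like this one maps naturally onto a small pair of Go structs. The sketch below shows one way it might be loaded, assuming the field names on the slide; `jsLibrary`, `jsLibraries`, and `loadLibraries` are hypothetical names. Note that quotes inside a `statement` must be escaped for the file to be valid JSON.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// jsLibrary is one fingerprint query: a JavaScript statement whose result
// identifies a library and, where possible, its version.
type jsLibrary struct {
	URL       string `json:"url"`
	Key       string `json:"key"`
	Statement string `json:"statement"`
}

type jsLibraries struct {
	Libraries []jsLibrary `json:"libraries"`
}

// libsJSON holds two of the entries from the slide. Inside a Go raw string
// the sequence \" is passed through unchanged and decoded by encoding/json.
const libsJSON = `{
  "libraries": [
    { "url": "http://jquery.com/", "key": "jquery",
      "statement": "jQuery.fn.jquery" },
    { "url": "http://www.embeddedjs.com/", "key": "embeddedjs 1.0",
      "statement": "(typeof EJS === \"function\" && typeof EJS.Buffer === \"function\") ? \"ejs 1.0\" : \"\"" }
  ]
}`

// loadLibraries decodes a query file into memory.
func loadLibraries(raw []byte) (*jsLibraries, error) {
	var libs jsLibraries
	if err := json.Unmarshal(raw, &libs); err != nil {
		return nil, err
	}
	return &libs, nil
}

func main() {
	libs, err := loadLibraries([]byte(libsJSON))
	if err != nil {
		fmt.Println("load error:", err)
		return
	}
	for _, l := range libs.Libraries {
		fmt.Printf("%s => %s\n", l.Key, l.Statement)
	}
}
```

Keeping the queries in data rather than code means a new library can be fingerprinted by adding one JSON entry, with no change to the agent itself.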

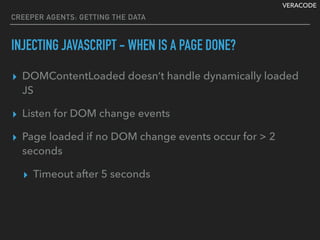

![VERACODE

CREEPER AGENTS: GETTING THE DATA

INJECTING JAVASCRIPT - INJECTING

for _, library := range JsLibs.Libraries {

res, err := b.ExecuteScript(library.Statement)

if err == nil && string(res) != "" {

log.Printf("%s library result was: %s\n",

library.Key,

string(res))

report.JavaScriptLibraries[library.Key] = string(res)

}

}](https://image.slidesharecdn.com/cb16dawsonja-161109051202/85/CB16-80-Web-by-Isaac-Dawson-23-320.jpg)
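
The injection loop above can be exercised without a browser by hiding `ExecuteScript` behind an interface. The sketch below assumes that method takes a statement and returns bytes plus an error, as the slide's usage suggests; the `scriptExecutor` interface, the `fakeBrowser` type, and the sample version string are hypothetical and exist only for illustration.

```go
package main

import "fmt"

// jsLibrary mirrors one entry of the query file.
type jsLibrary struct {
	Key       string
	Statement string
}

// scriptExecutor is an assumed interface matching how b.ExecuteScript is
// called on the slide; the real browser type may differ.
type scriptExecutor interface {
	ExecuteScript(statement string) ([]byte, error)
}

// fakeBrowser returns canned results so the loop can run without Chrome.
type fakeBrowser struct {
	results map[string]string
}

func (f fakeBrowser) ExecuteScript(statement string) ([]byte, error) {
	v, ok := f.results[statement]
	if !ok {
		// A real page would raise a ReferenceError for an undefined global.
		return nil, fmt.Errorf("evaluating %q failed", statement)
	}
	return []byte(v), nil
}

// detectLibraries runs every query and keeps the non-empty, error-free results.
func detectLibraries(b scriptExecutor, libs []jsLibrary) map[string]string {
	found := make(map[string]string)
	for _, lib := range libs {
		res, err := b.ExecuteScript(lib.Statement)
		if err == nil && string(res) != "" {
			found[lib.Key] = string(res)
		}
	}
	return found
}

func main() {
	libs := []jsLibrary{
		{Key: "jquery", Statement: "jQuery.fn.jquery"},
		{Key: "jquery-mobile", Statement: "jQuery.mobile.version"},
	}
	// Made-up page state: jQuery is present, jQuery Mobile is not.
	page := fakeBrowser{results: map[string]string{"jQuery.fn.jquery": "1.12.4"}}
	fmt.Println(detectLibraries(page, libs))
}
```

Treating an evaluation error as "library absent" matches the slide's `err == nil` check: a missing global simply fails to evaluate and is skipped.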

![VERACODE

DB WRITERS: STORING THE DATA

SOLUTION #1 BATCHER

func (b *Batcher) AddReport(r *creeper_types.CreeperReport) {

select {

case b.reportPool <- r:

atomic.AddInt32(&b.reportCount, 1)

}

}

func (b *Batcher) EmptyReports() []*creeper_types.CreeperReport {

reports := make([]*creeper_types.CreeperReport, 0)

for {

select {

case report := <-b.reportPool:

reports = append(reports, report)

default:

return reports

}

}

return nil

}](https://image.slidesharecdn.com/cb16dawsonja-161109051202/85/CB16-80-Web-by-Isaac-Dawson-39-320.jpg)
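
The batcher on this slide buffers reports in a channel and drains them without blocking. The following is a self-contained sketch of that technique with stand-in types: `report`, `newBatcher`, and `Count` are hypothetical, and decrementing the counter during the drain is an assumption added here, since the slide does not show where `reportCount` is reset.

```go
package main

import (
	"fmt"
	"sync/atomic"
)

// report is a hypothetical stand-in for creeper_types.CreeperReport.
type report struct {
	URL string
}

// batcher buffers reports in a channel until a writer drains them.
type batcher struct {
	reportPool  chan *report
	reportCount int32
}

func newBatcher(capacity int) *batcher {
	return &batcher{reportPool: make(chan *report, capacity)}
}

// AddReport queues a report; like the slide's version, it blocks when the
// pool is full.
func (b *batcher) AddReport(r *report) {
	b.reportPool <- r
	atomic.AddInt32(&b.reportCount, 1)
}

// EmptyReports drains whatever is buffered right now and returns it. The
// default case makes the receive non-blocking, so an empty pool returns at once.
func (b *batcher) EmptyReports() []*report {
	reports := make([]*report, 0)
	for {
		select {
		case r := <-b.reportPool:
			reports = append(reports, r)
			atomic.AddInt32(&b.reportCount, -1) // assumption: keep the count in step
		default:
			return reports
		}
	}
}

// Count reports how many reports are buffered; under concurrent use it is
// only approximate because the send and the increment are separate steps.
func (b *batcher) Count() int32 {
	return atomic.LoadInt32(&b.reportCount)
}

func main() {
	b := newBatcher(100)
	for _, u := range []string{"http://a.example", "http://b.example"} {
		b.AddReport(&report{URL: u})
	}
	batch := b.EmptyReports()
	fmt.Println("batch size:", len(batch), "remaining:", b.Count())
}
```

A database writer could call `EmptyReports` on a timer or once `Count` crosses a threshold, turning many single-row writes into one bulk insert.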