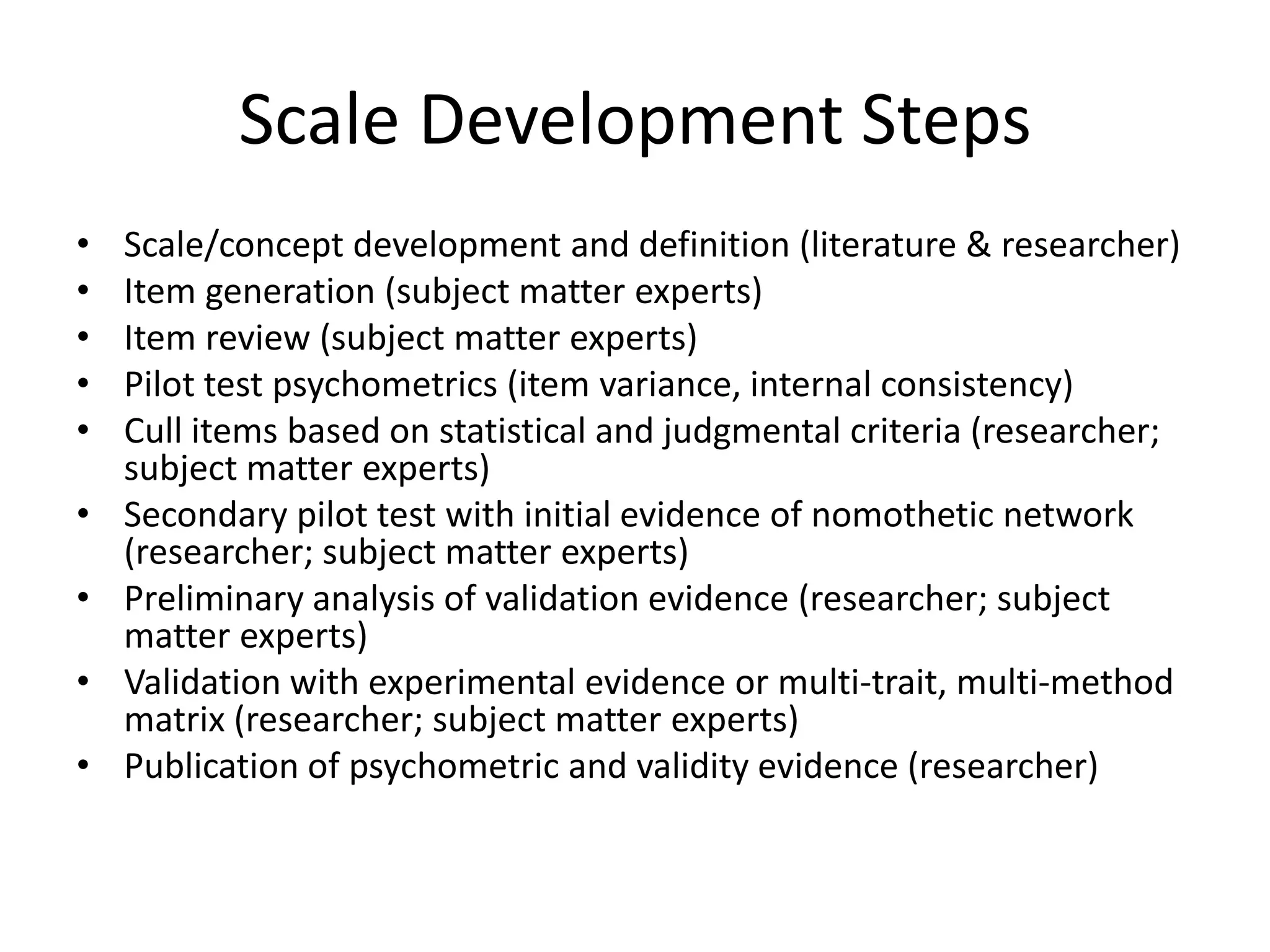

The document outlines the steps for developing a valid scale for use in web surveys: defining the construct, generating items, pilot testing, refining the scale, and validating it against other measures. Key aspects include consulting subject matter experts, evaluating items both statistically and conceptually, situating the scale within its nomological network, and publishing the validation evidence. The goal is a scale that is concise yet reliable and valid for measuring constructs online.
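As an illustration of what evaluating items statistically can look like during pilot testing, the sketch below computes Cronbach's alpha and corrected item-total correlations. The summary does not name specific statistics, so the choice of these two is an assumption; the function names and the pilot data are hypothetical and exist only for this example.

```python
from math import sqrt
from statistics import mean, variance


def cronbach_alpha(responses):
    """Cronbach's alpha for rows of item scores (one row per respondent)."""
    k = len(responses[0])
    items = list(zip(*responses))  # transpose: one tuple per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def corrected_item_total(responses):
    """Correlate each item with the sum of the remaining items."""
    k = len(responses[0])
    correlations = []
    for j in range(k):
        item = [row[j] for row in responses]
        rest = [sum(row) - row[j] for row in responses]
        correlations.append(pearson(item, rest))
    return correlations


if __name__ == "__main__":
    # Hypothetical pilot data: 6 respondents, 4 items on a 1-5 scale.
    pilot = [
        [4, 5, 4, 2],
        [2, 2, 3, 4],
        [5, 4, 5, 3],
        [3, 3, 3, 1],
        [1, 2, 1, 5],
        [4, 4, 5, 2],
    ]
    print("alpha:", round(cronbach_alpha(pilot), 3))
    for index, r in enumerate(corrected_item_total(pilot), start=1):
        print(f"item {index}: corrected item-total r = {r:.3f}")
```

An item with a low or negative corrected item-total correlation is a candidate for revision or removal, but a decision like that should also rest on the conceptual review of the item, not on the statistic alone.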