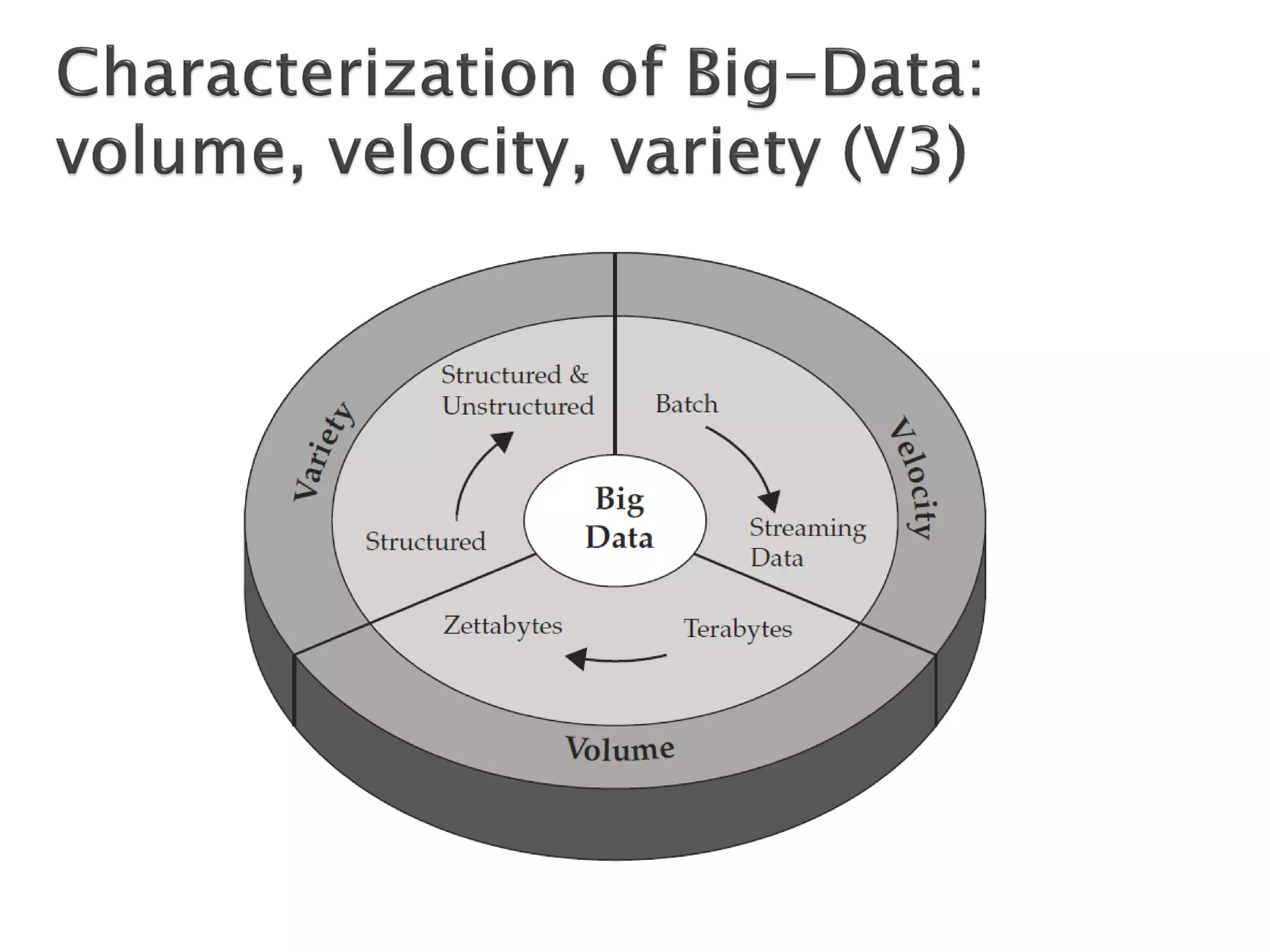

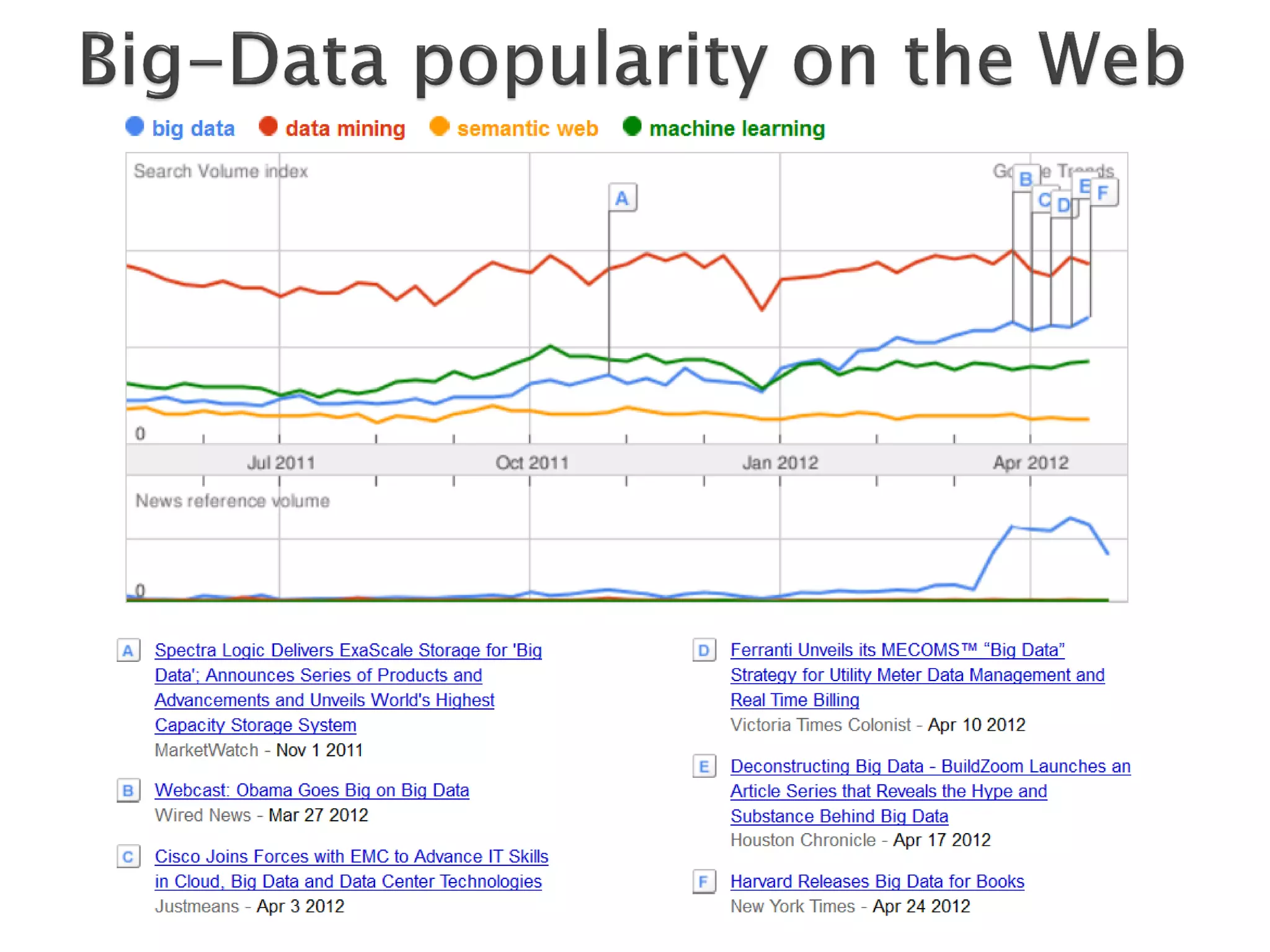

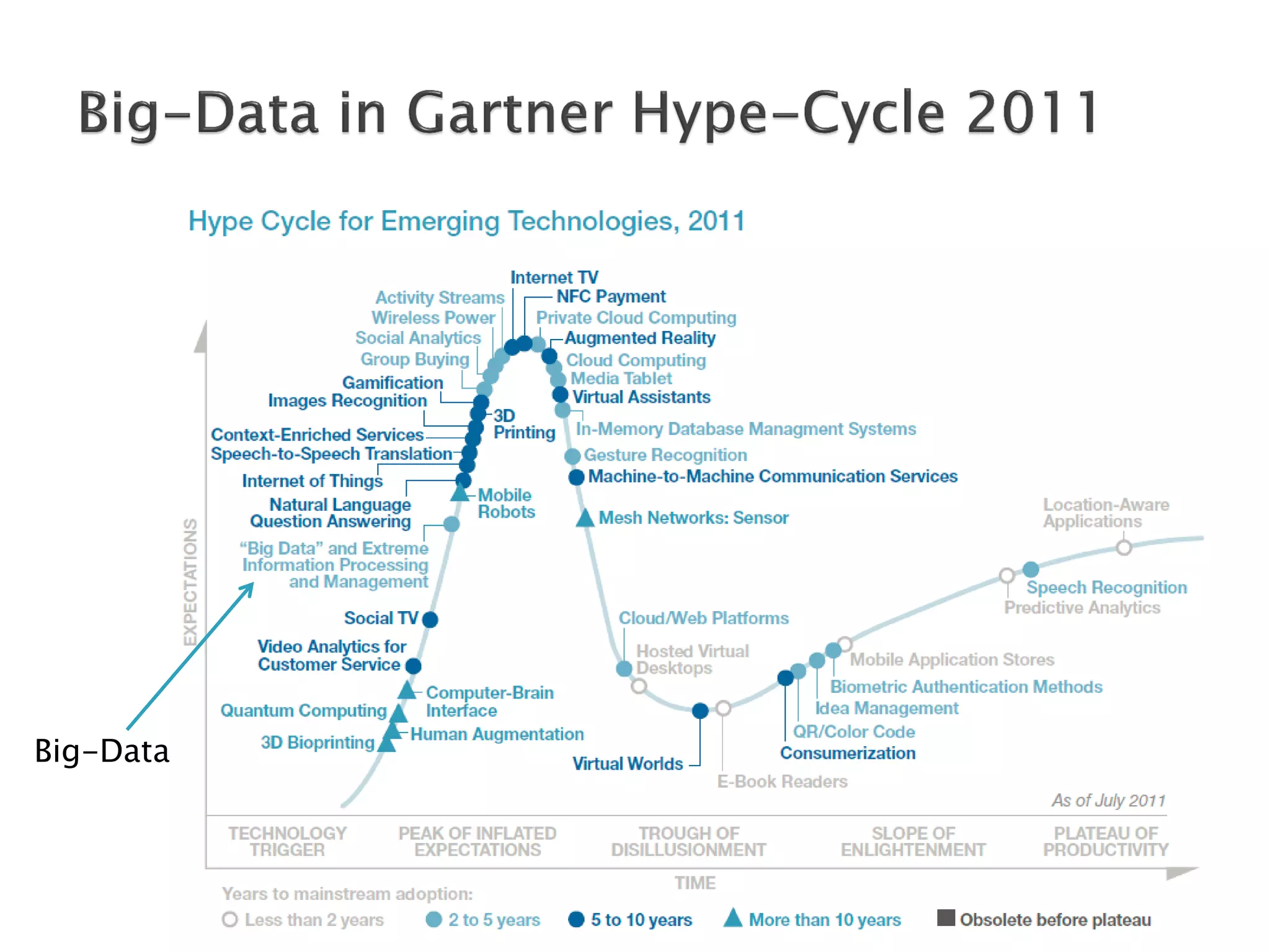

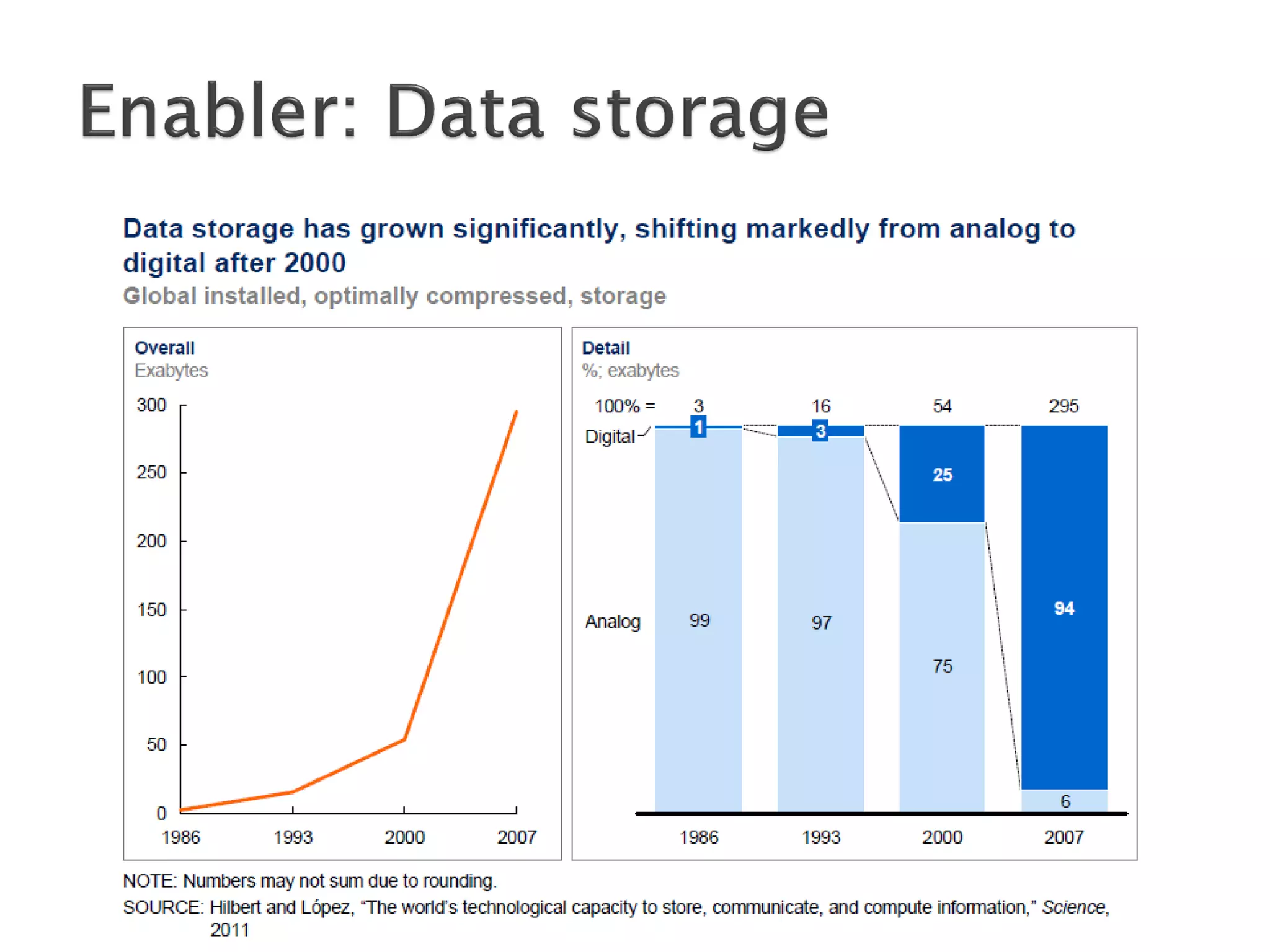

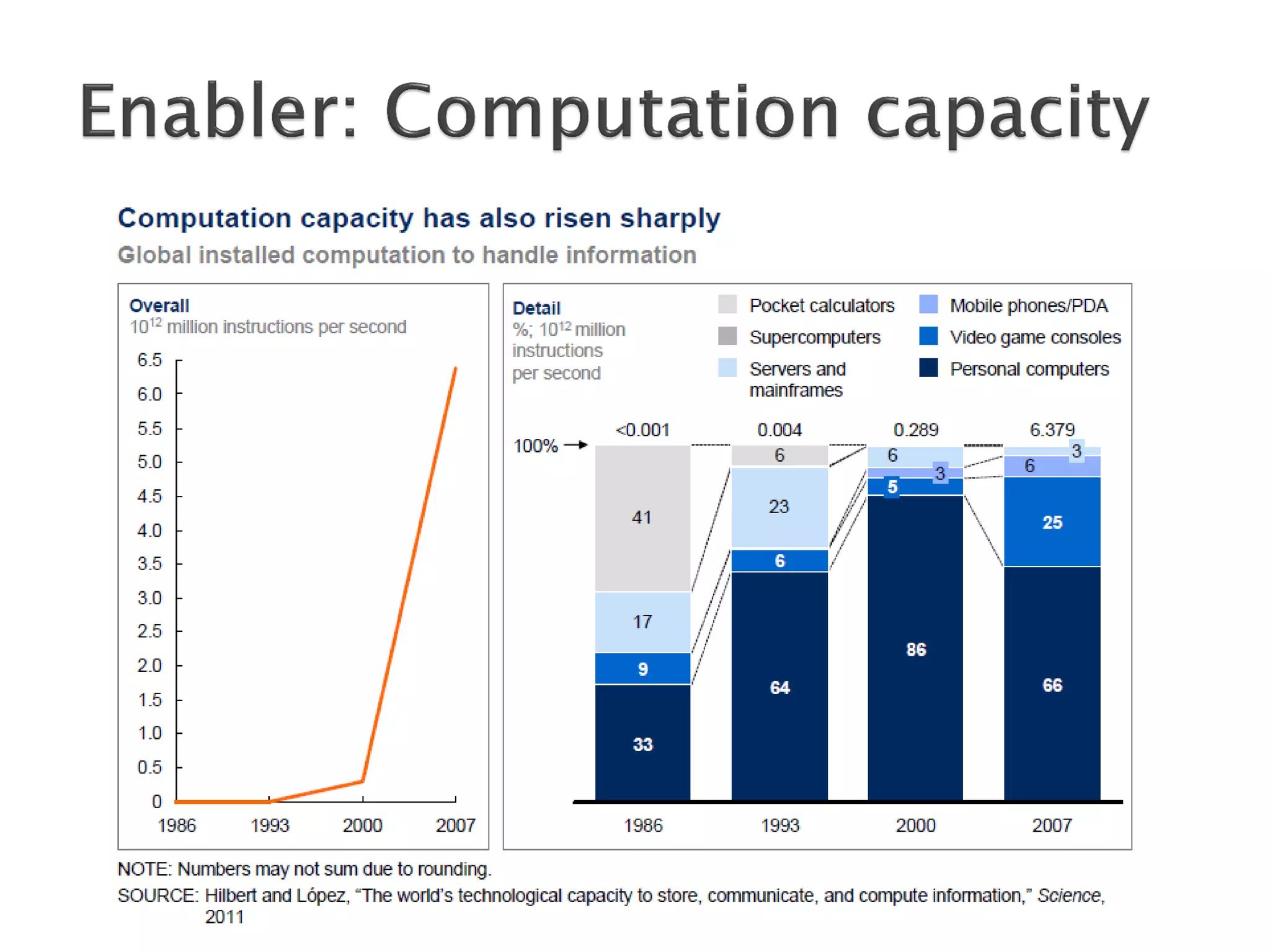

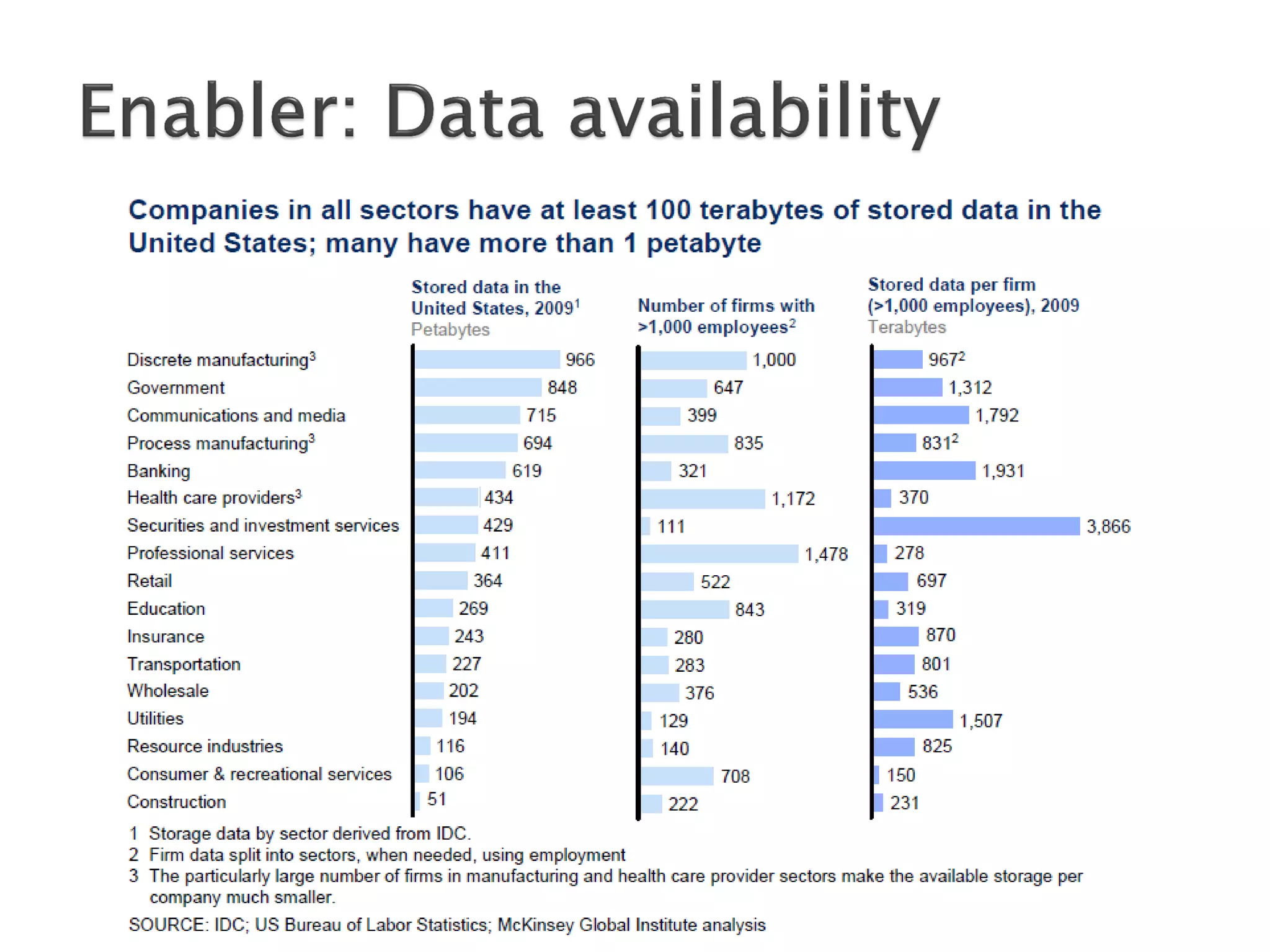

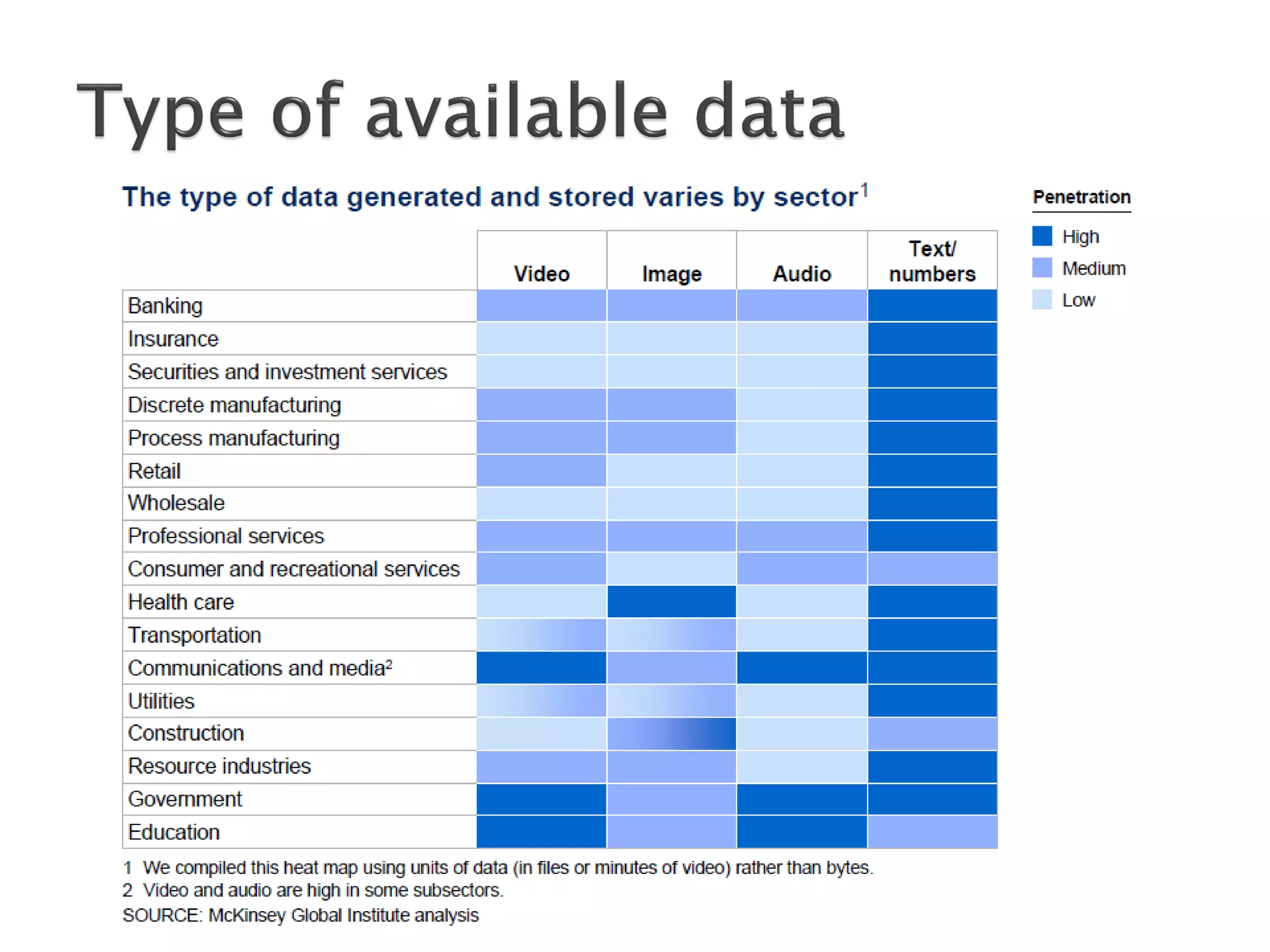

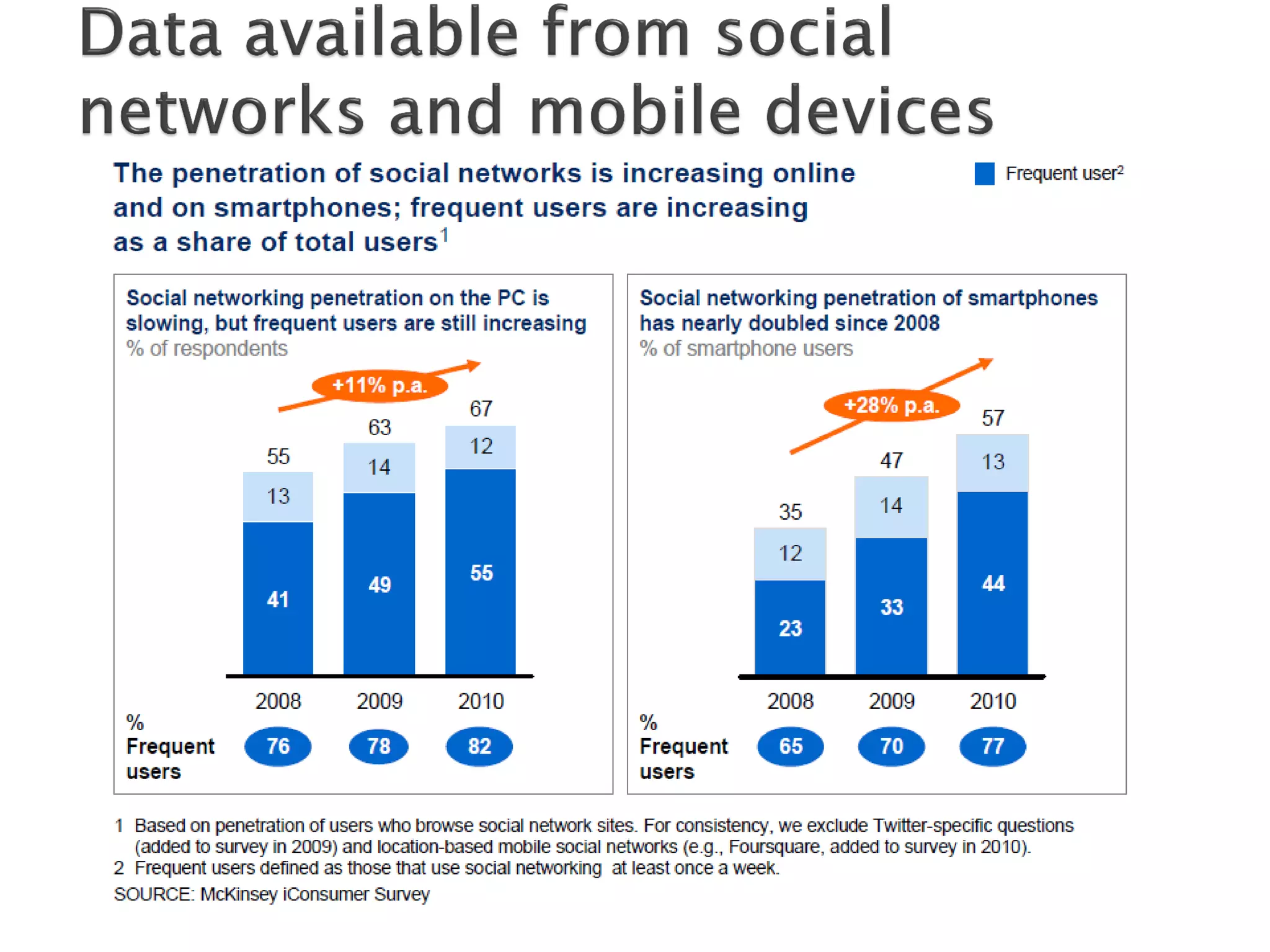

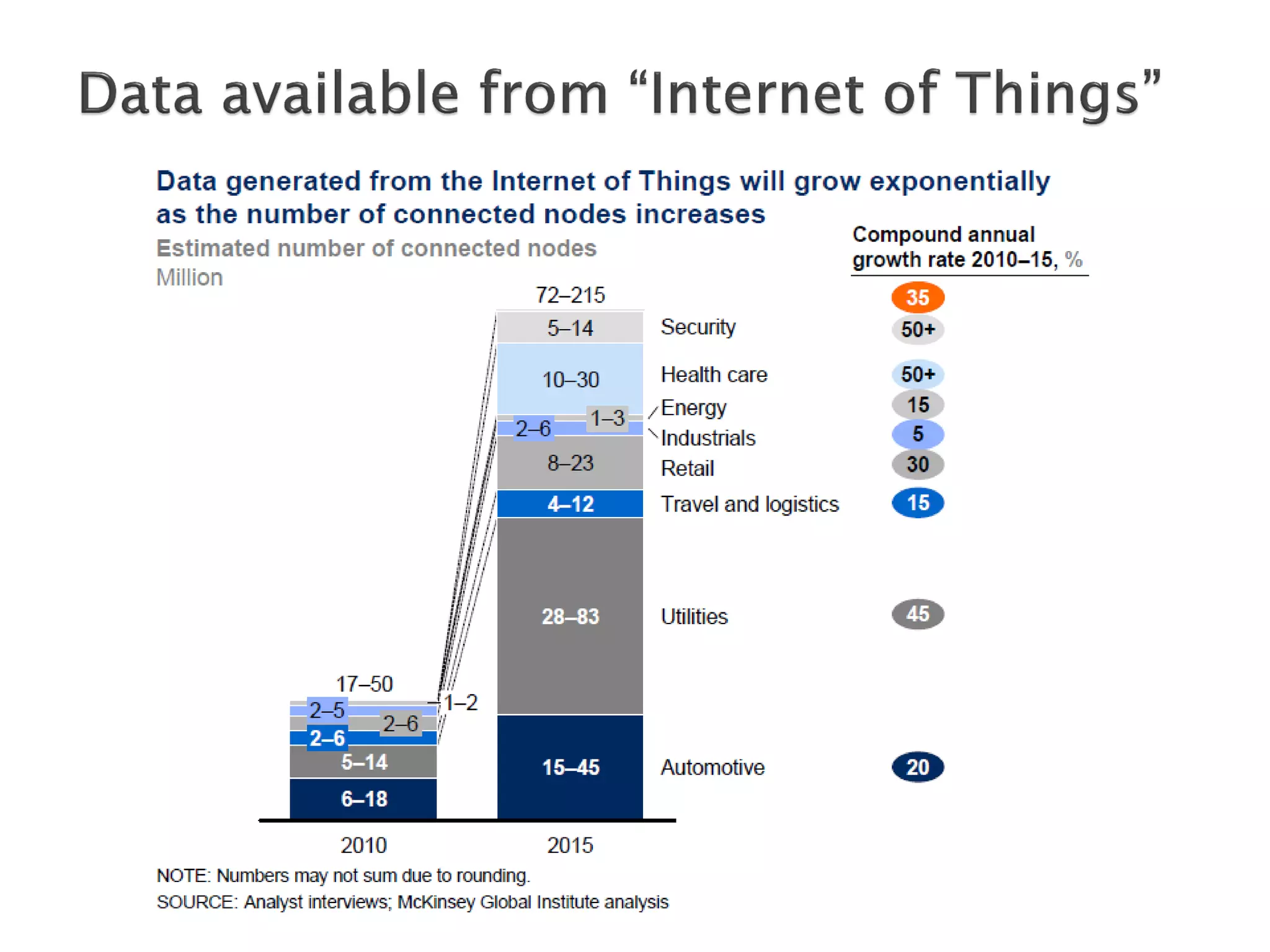

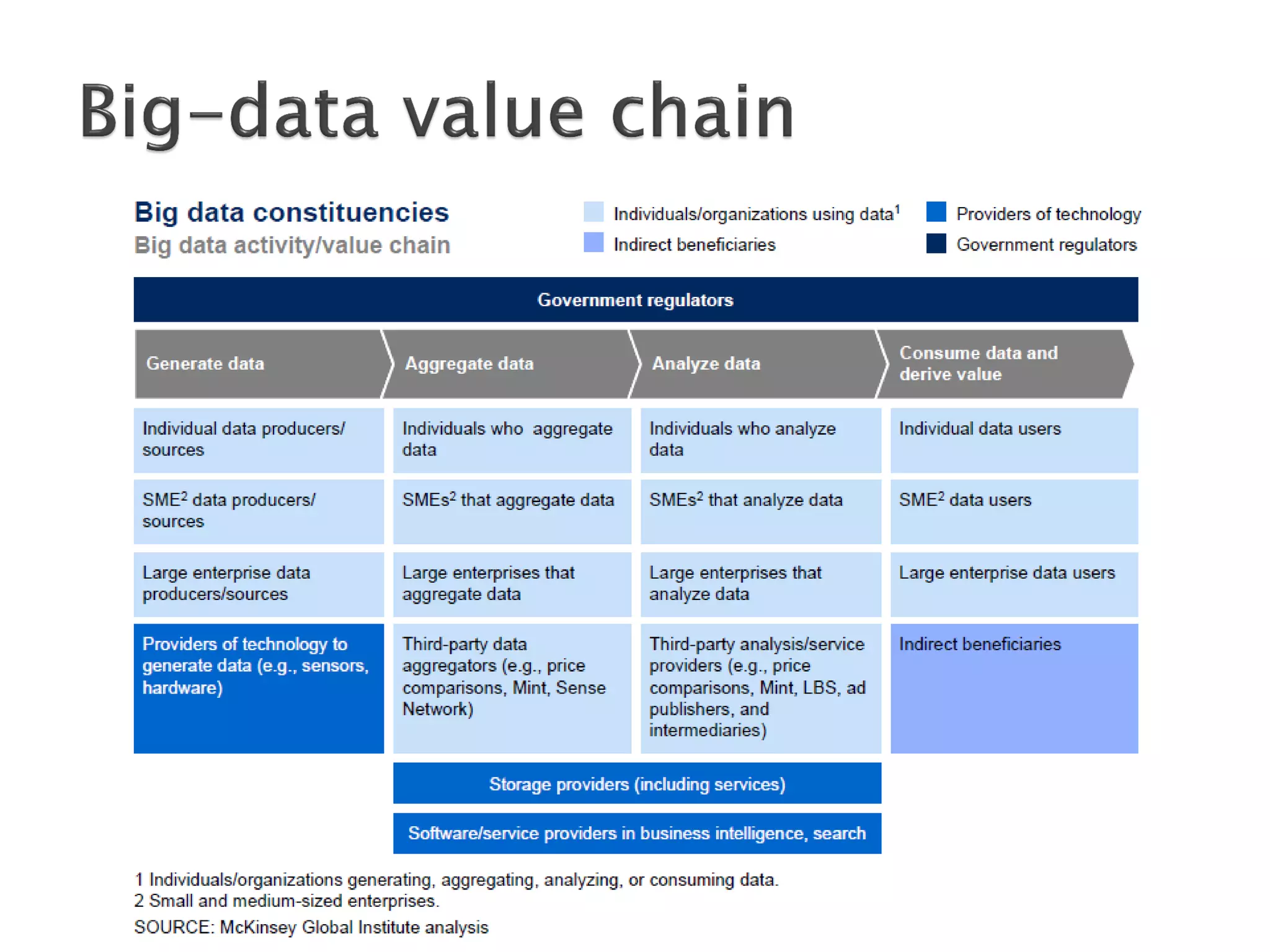

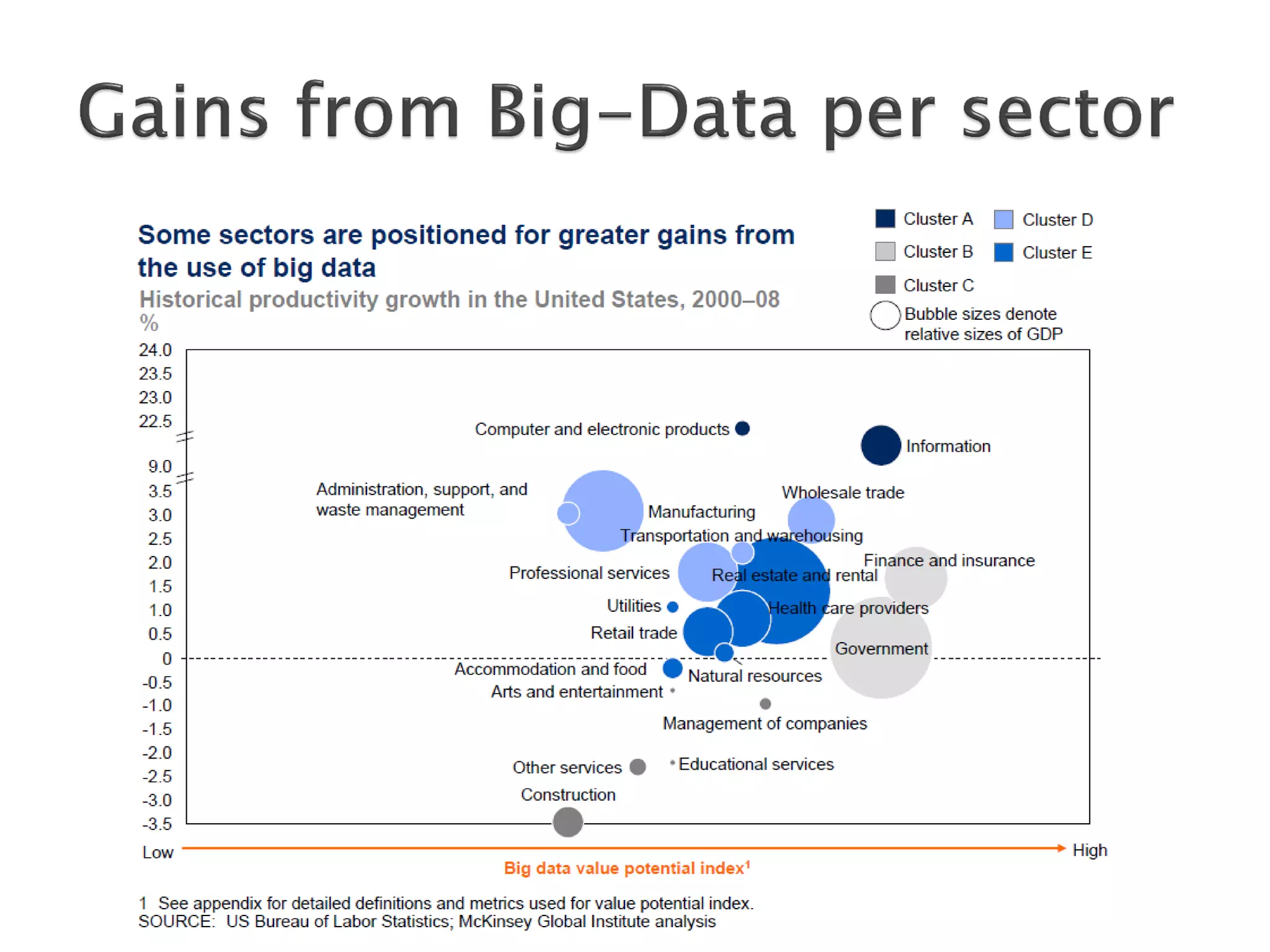

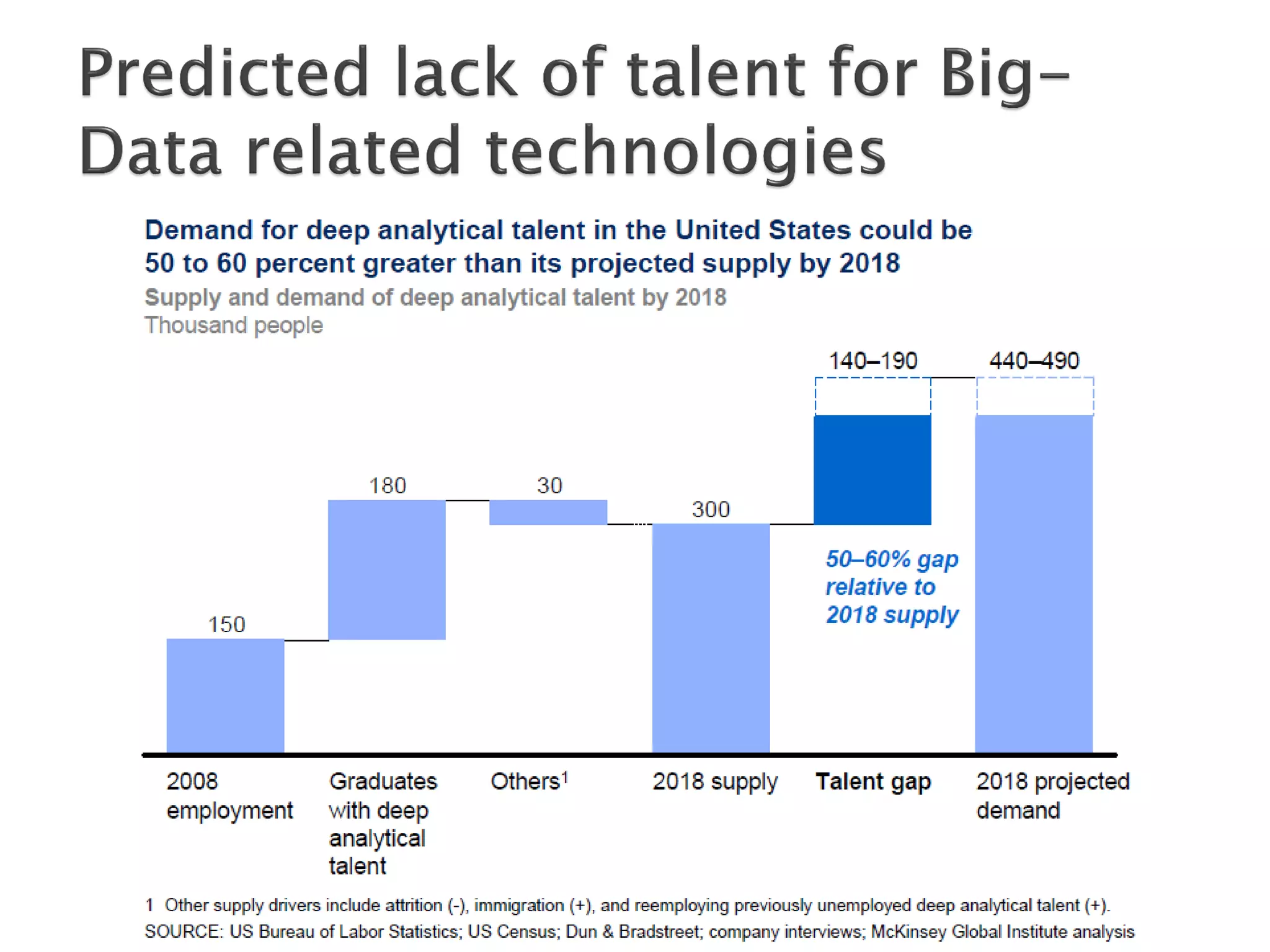

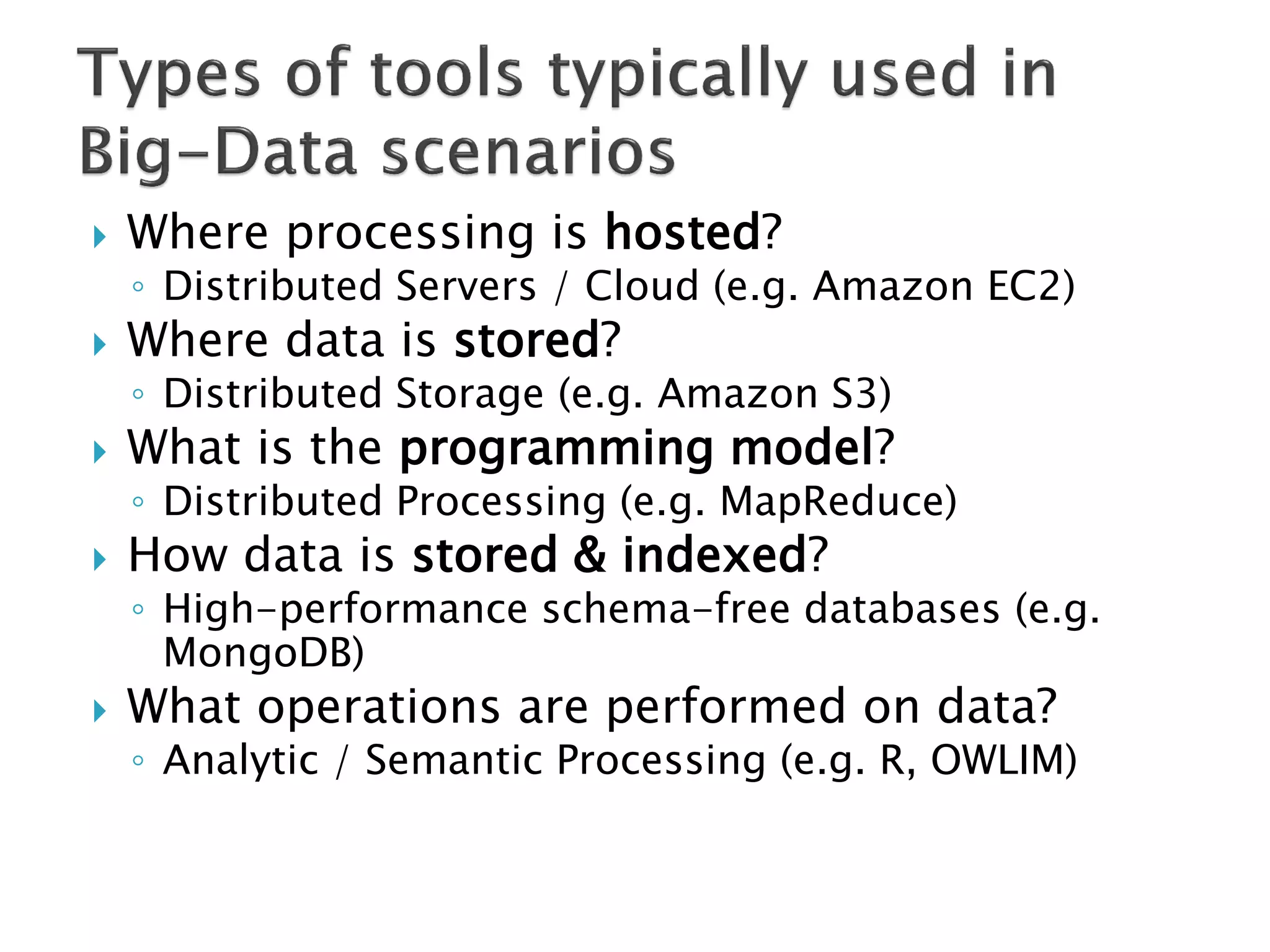

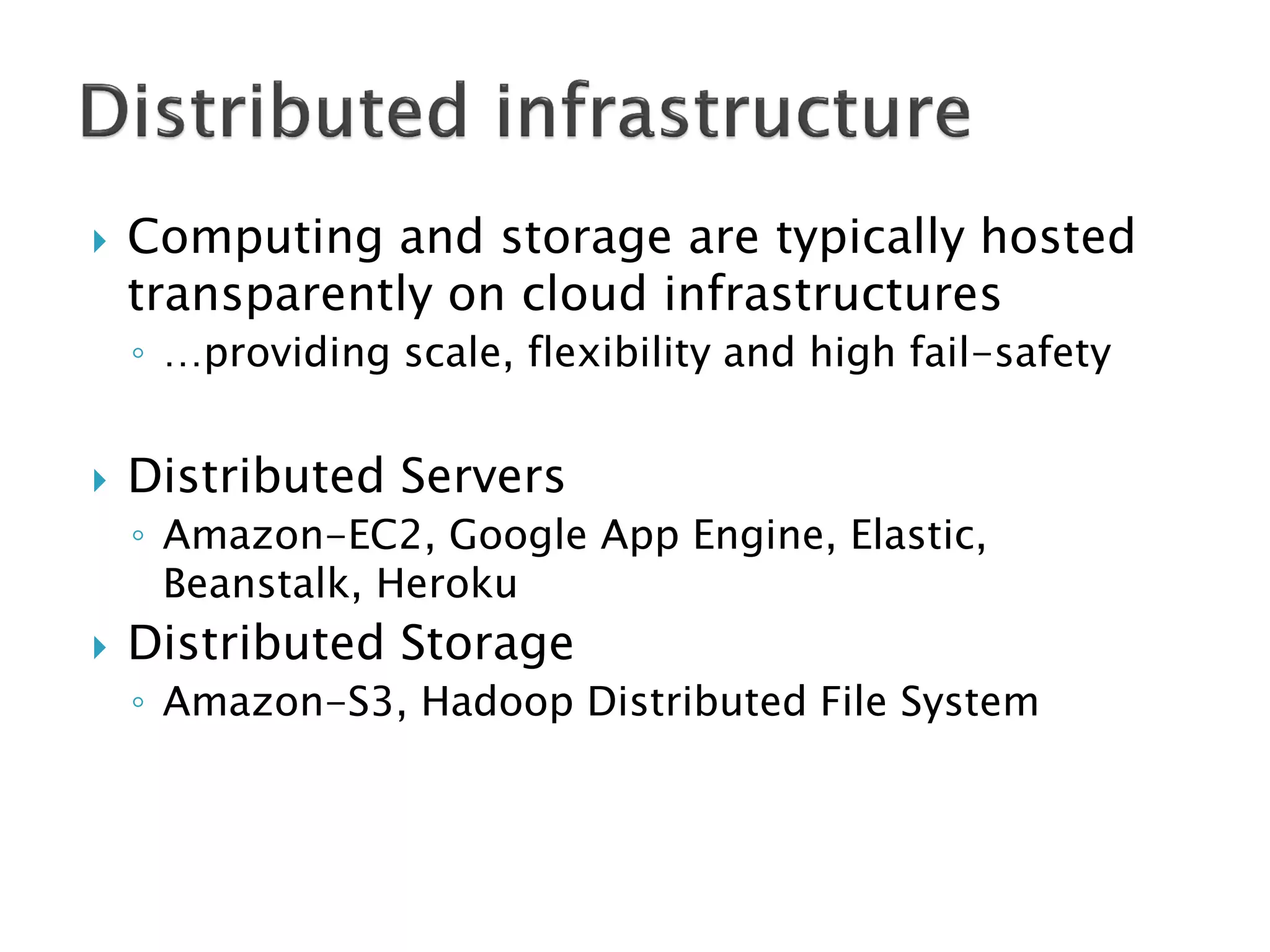

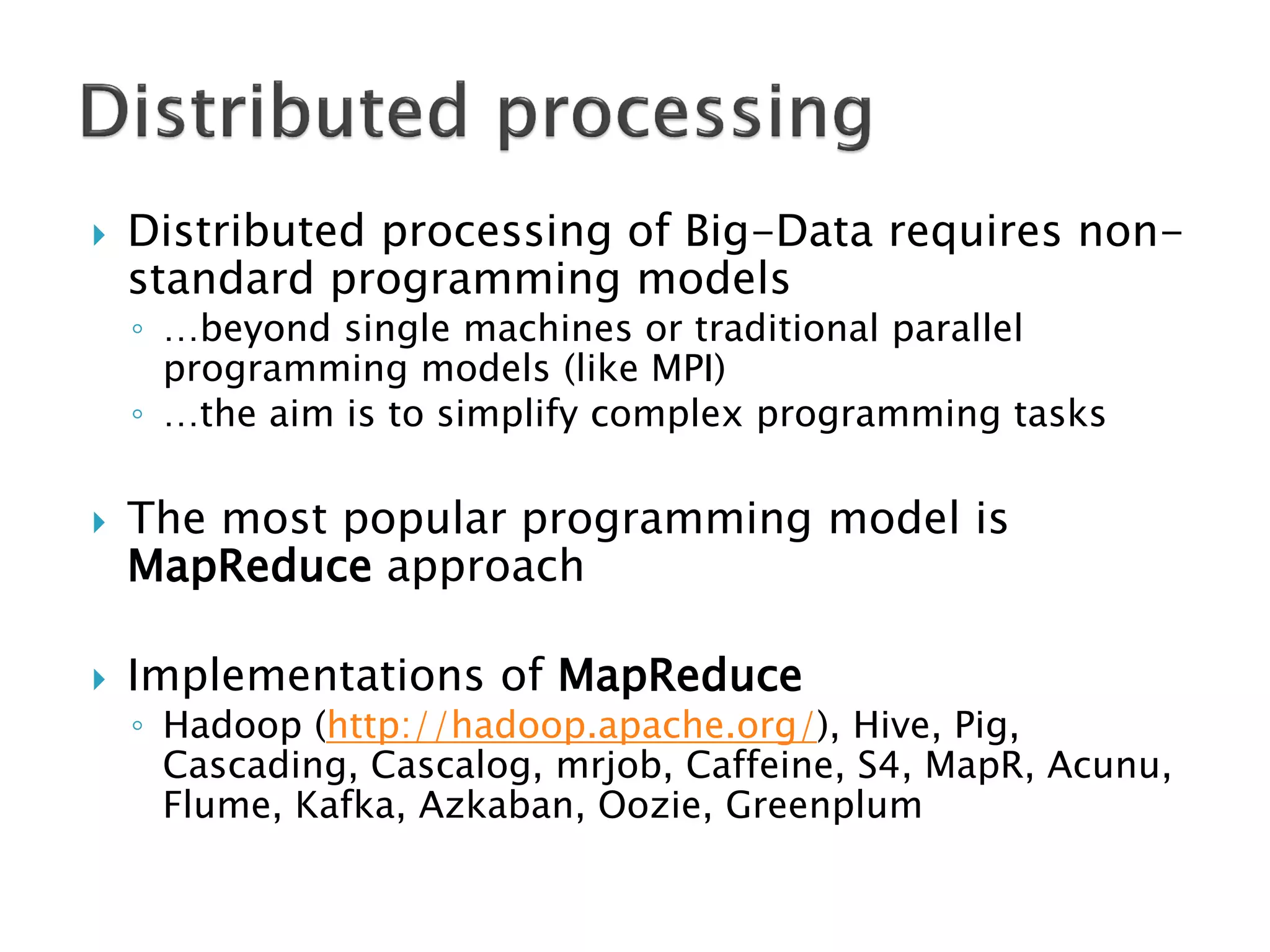

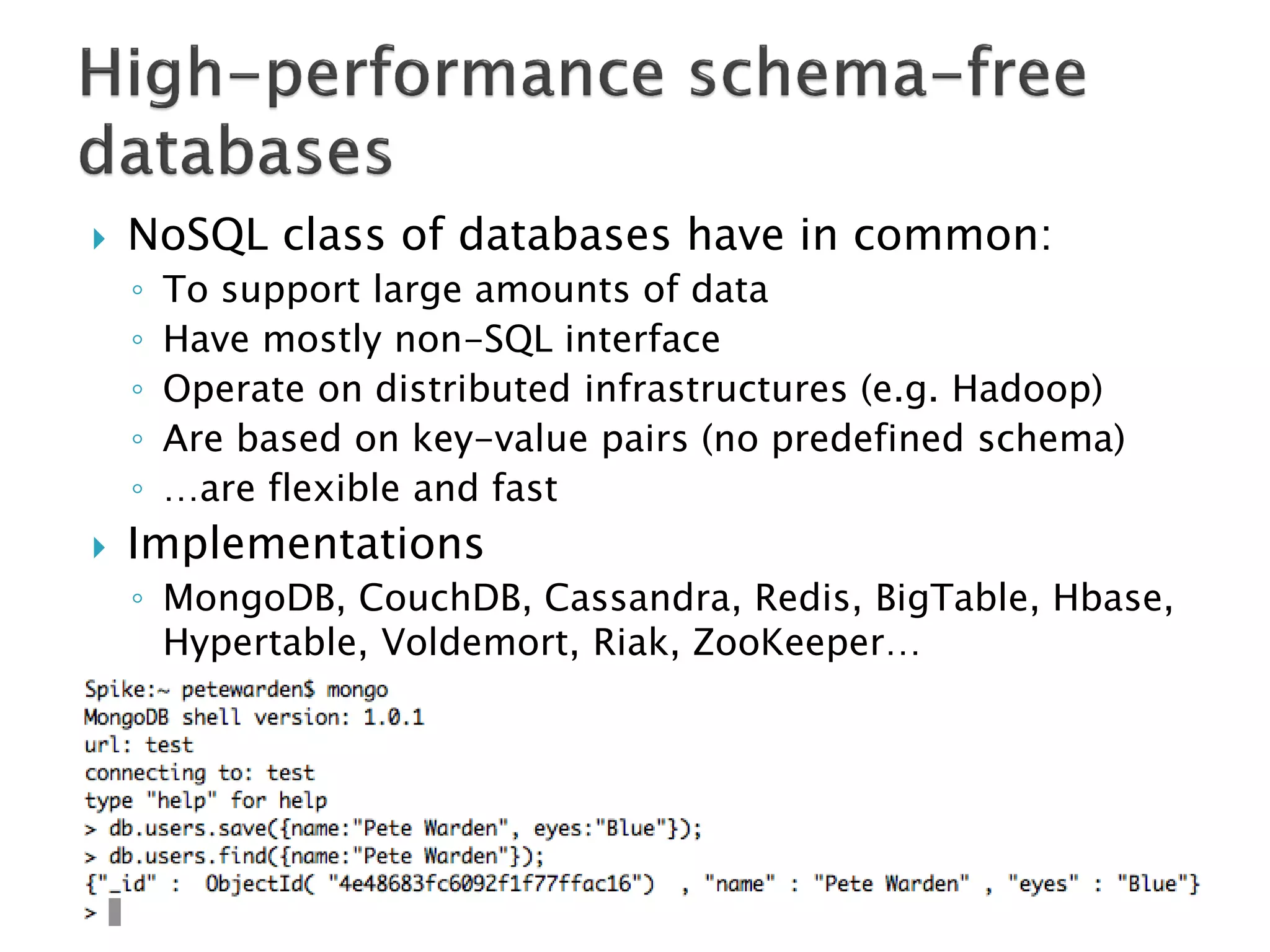

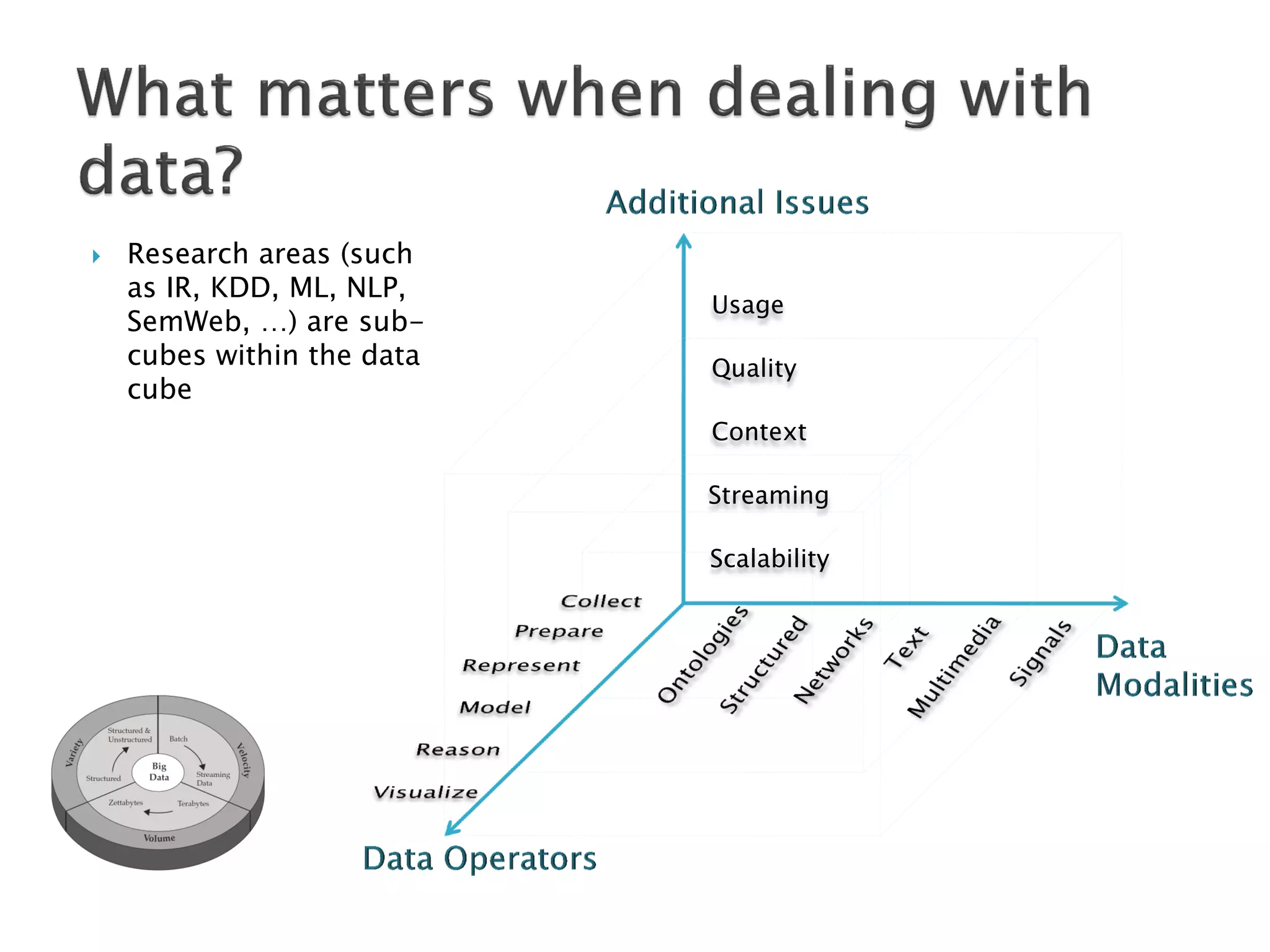

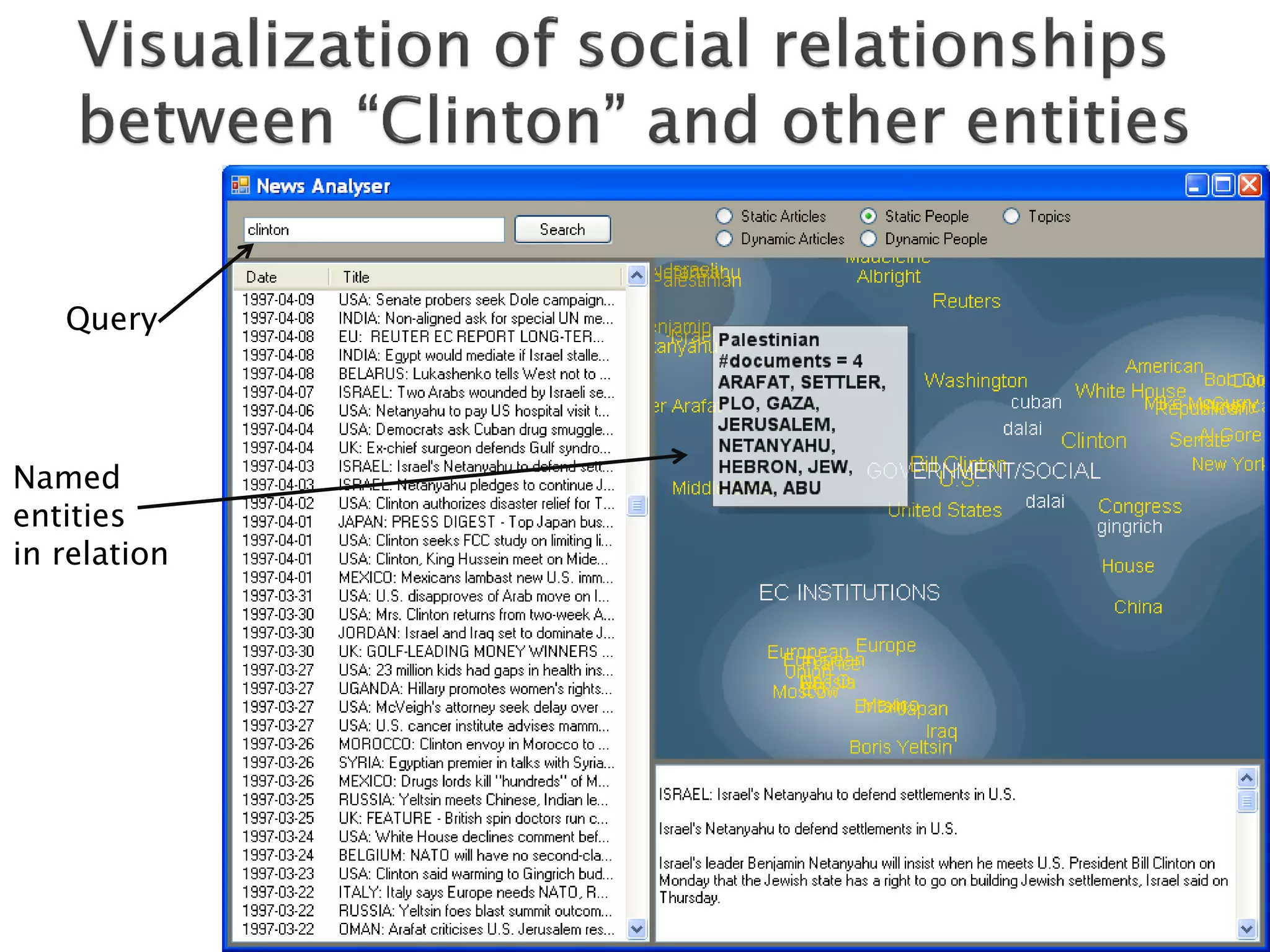

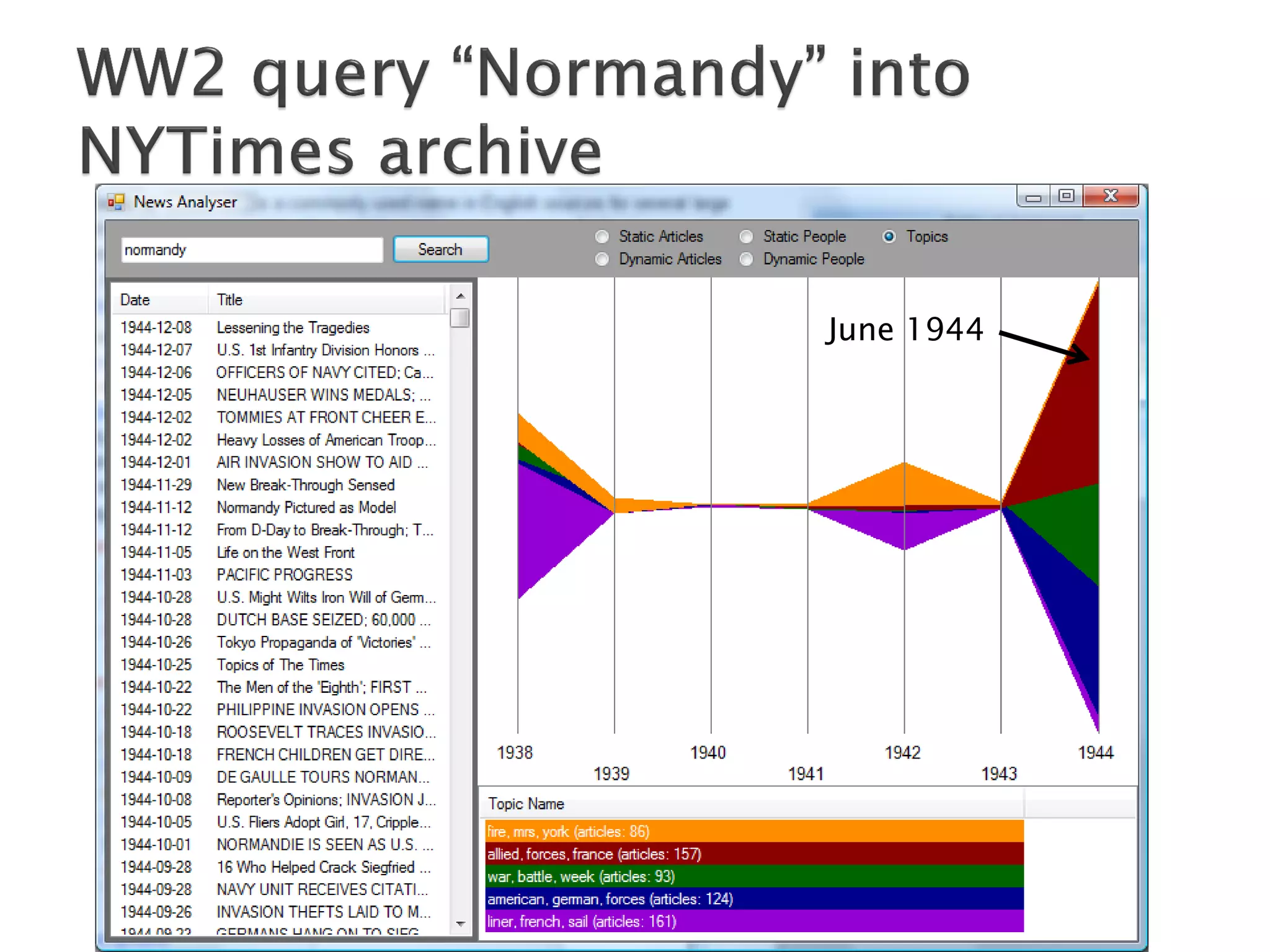

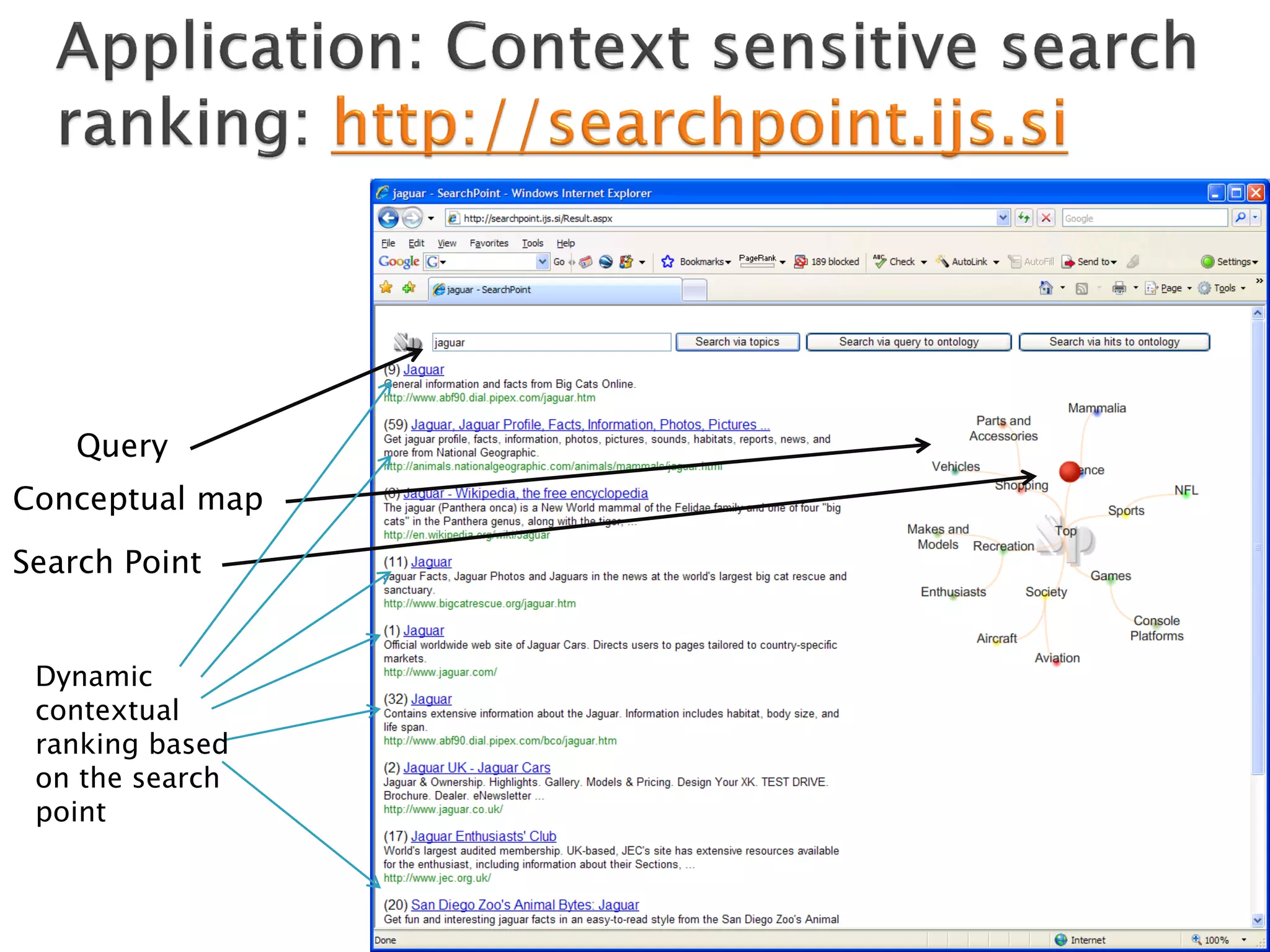

The document discusses big data, including what it is, key factors enabling its growth like increased storage and processing power, and techniques for handling big data like distributed processing and NoSQL databases. It provides examples of tools and applications for big data and discusses challenges like ensuring patterns found in big data analysis are actually meaningful.

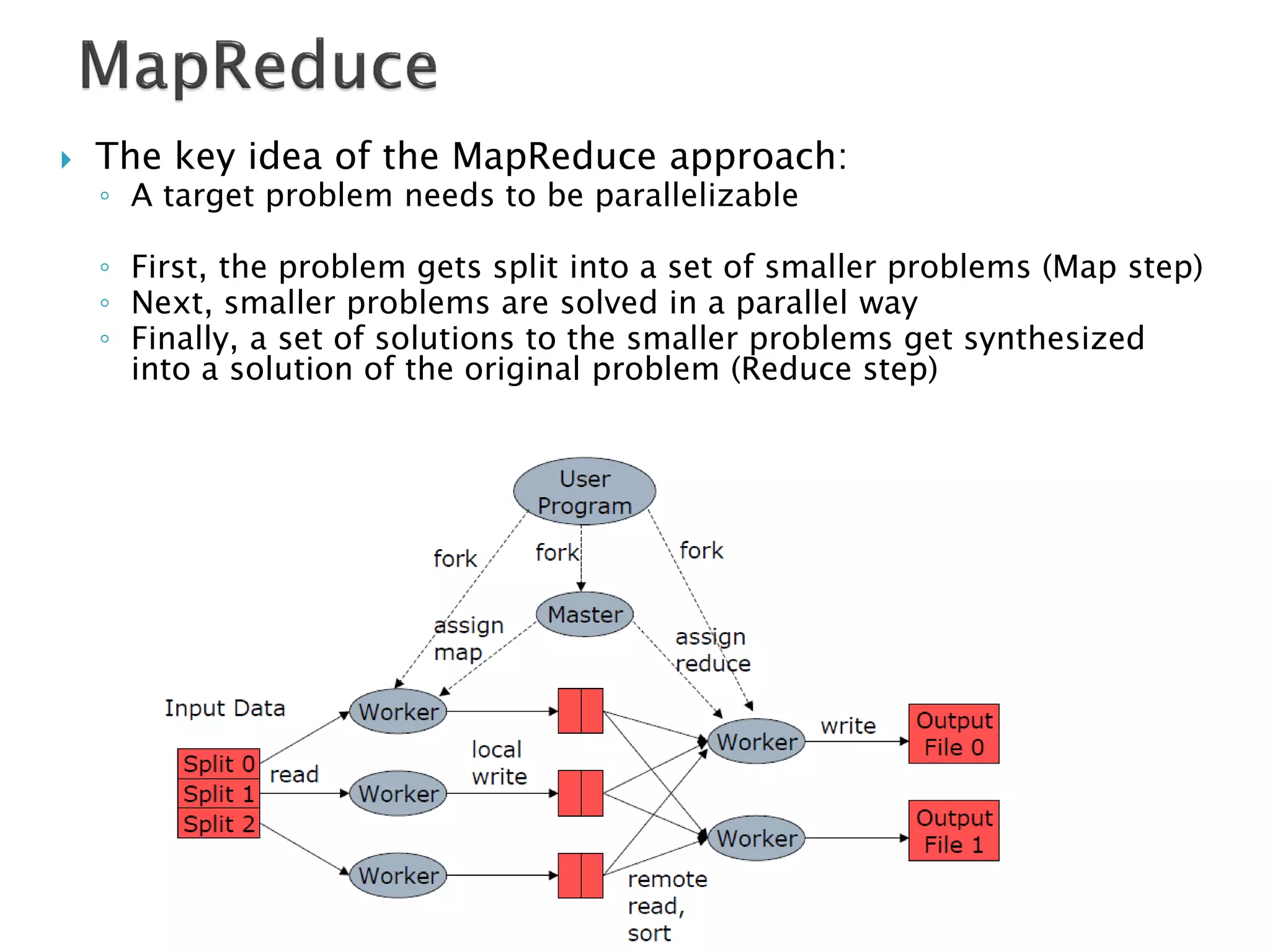

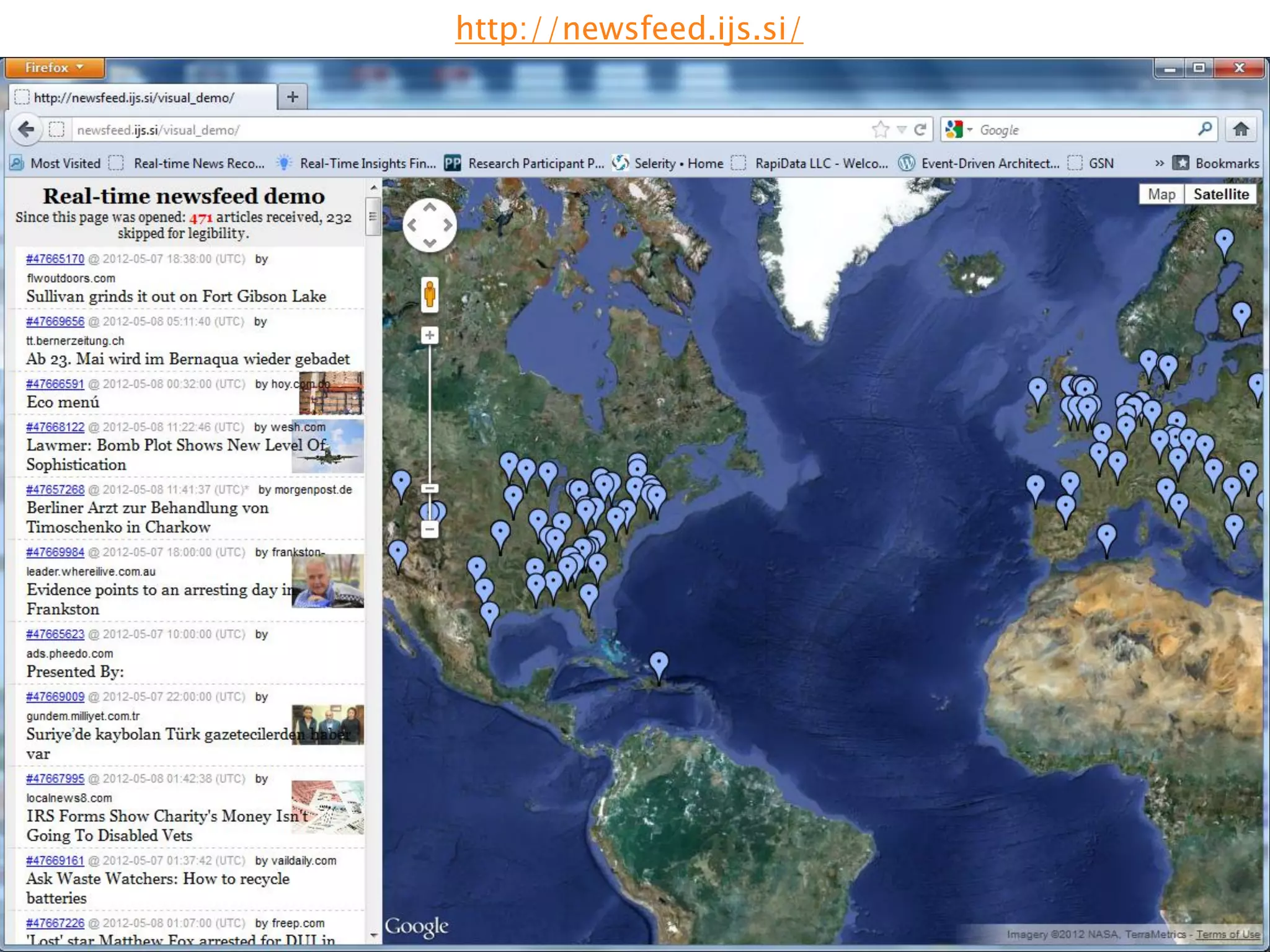

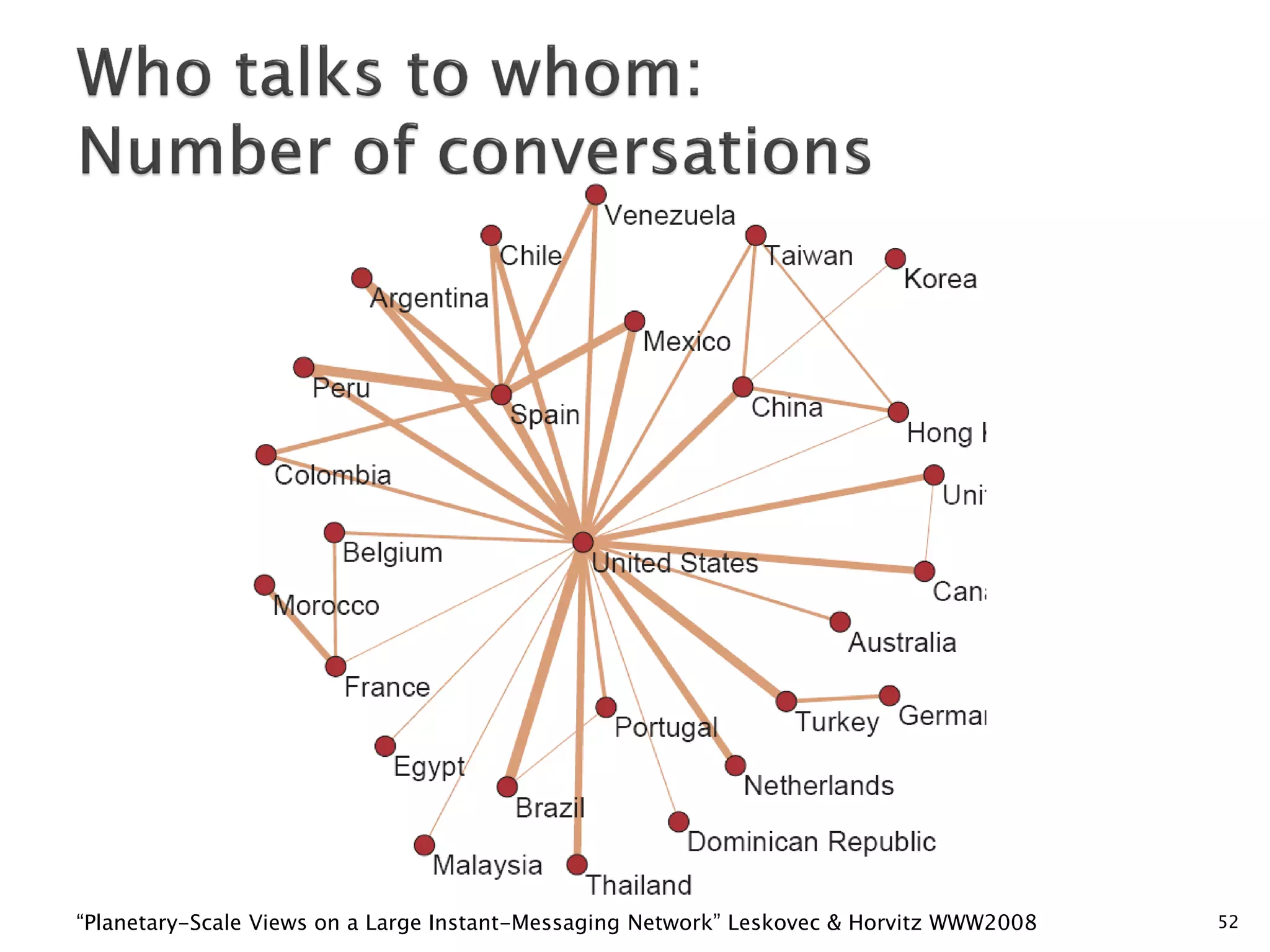

![Hops Nodes

1 10

2 78

3 396

4 8648

5 3299252

6 28395849

7 79059497

8 52995778

9 10321008

10 1955007

11 518410

12 149945

13 44616

14 13740

15 4476

16 1542

17 536

18 167

19 71

6 degrees of separation [Milgram ’60s] 20 29

Average distance between two random users is 6.622

21 16

10

90% of nodes can be reached in < 8 hops 23 3

24 2

“Planetary-Scale Views on a Large Instant-Messaging Network” Leskovec & Horvitz WWW2008 25 3](https://image.slidesharecdn.com/bigdatatutorial-markogrobelnik-kalamaki-25may2012-120621164119-phpapp02/75/Big-Data-Tutorial-Marko-Grobelnik-25-May-2012-56-2048.jpg)