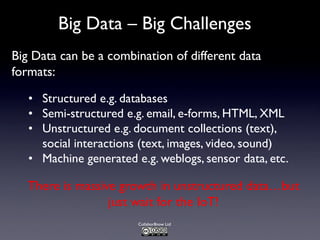

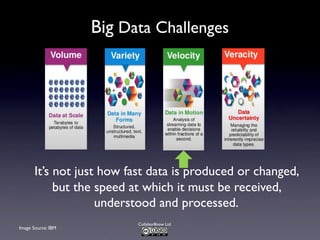

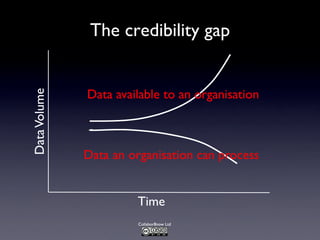

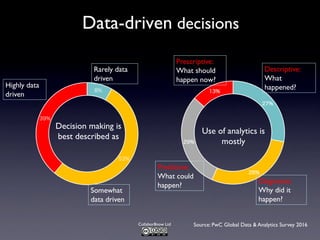

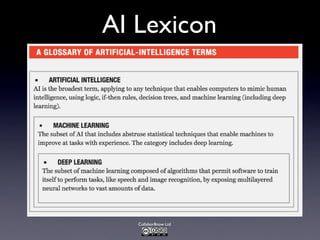

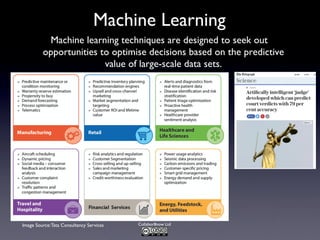

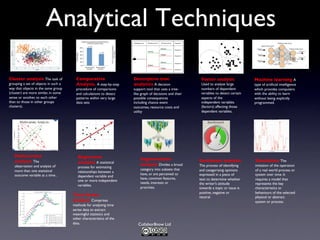

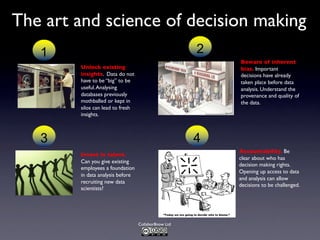

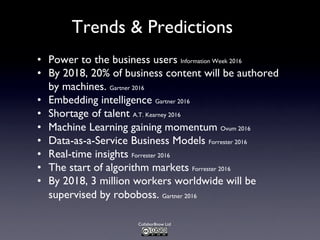

The document discusses the challenges and requirements of big data, highlighting its diverse nature and the need for advanced algorithms and techniques to manage and analyze it effectively. It emphasizes data-driven decision-making, the role of machine learning, and the need for businesses to invest in talent while staying aware of potential biases in how data is used. It also outlines trends and predictions, including the growing role of machine-generated content and the integration of artificial intelligence into decision-making.