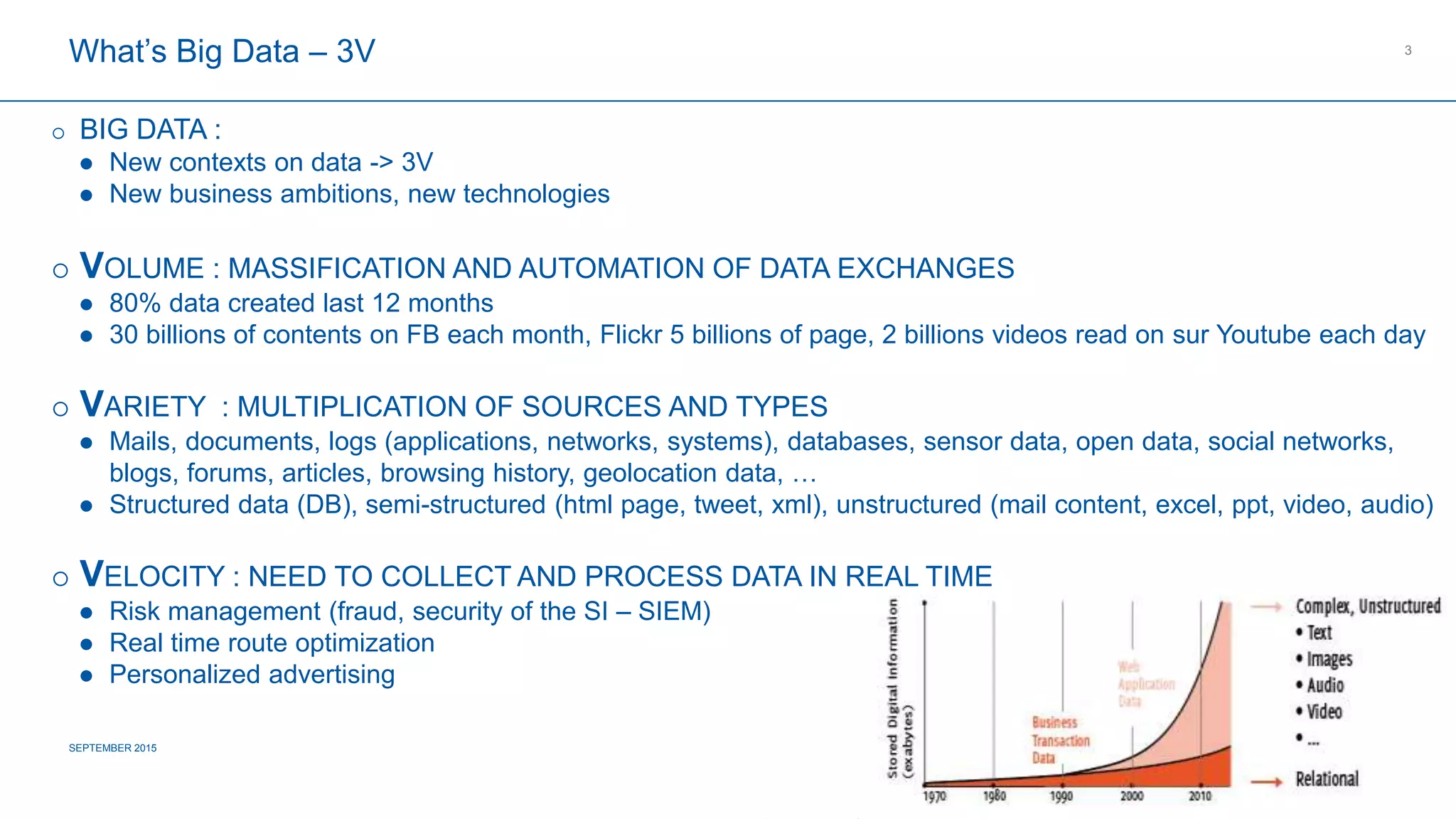

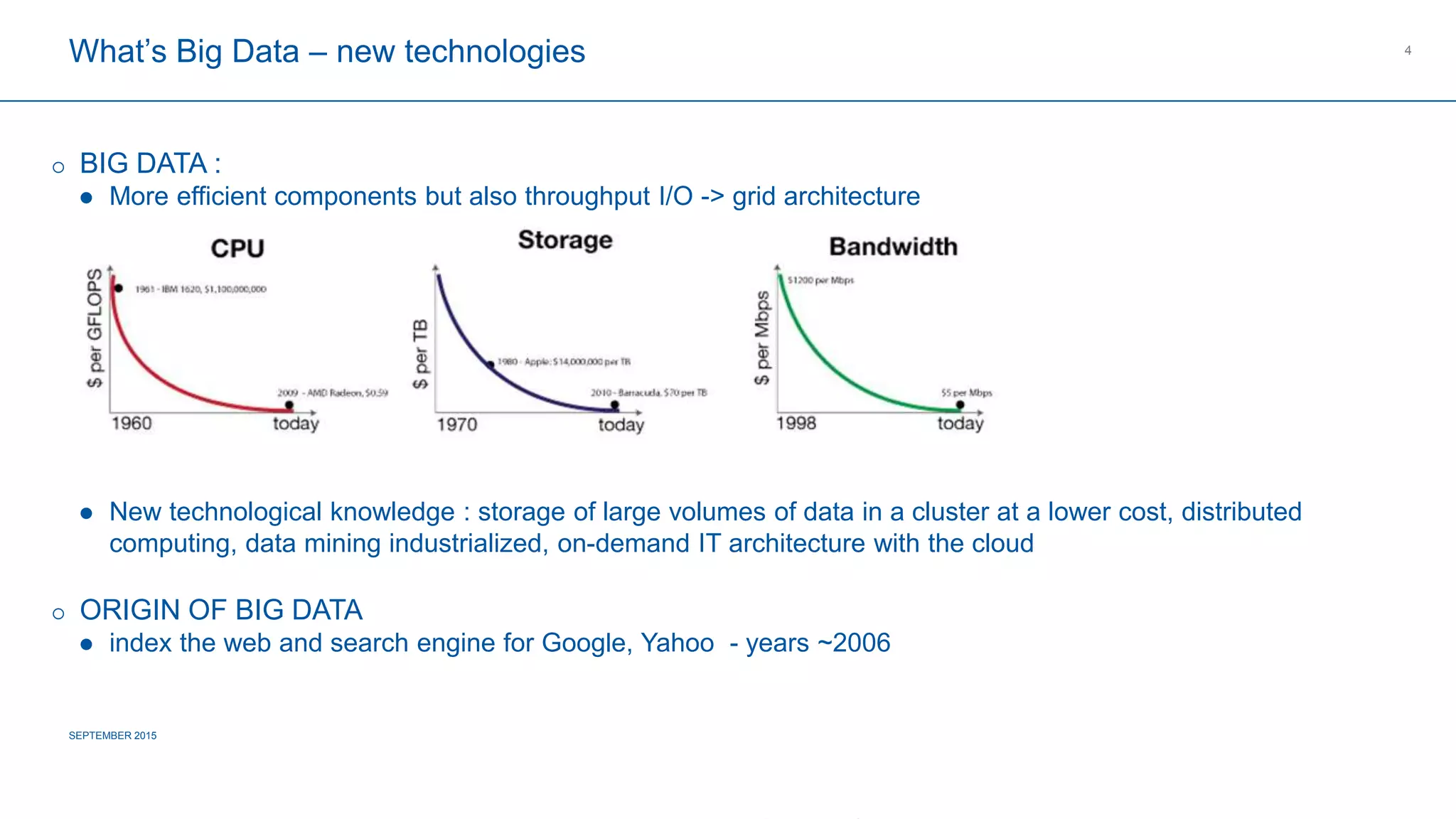

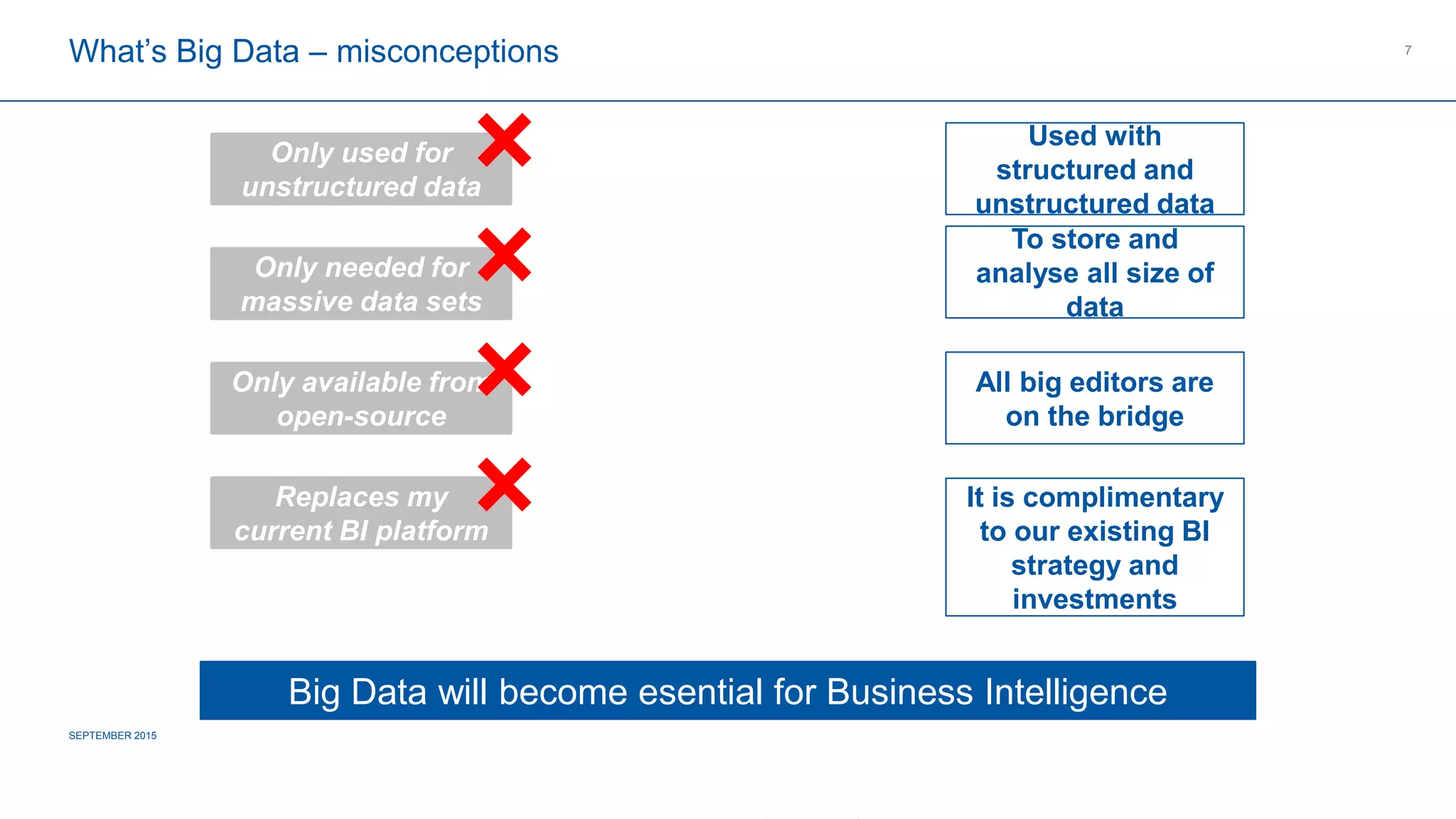

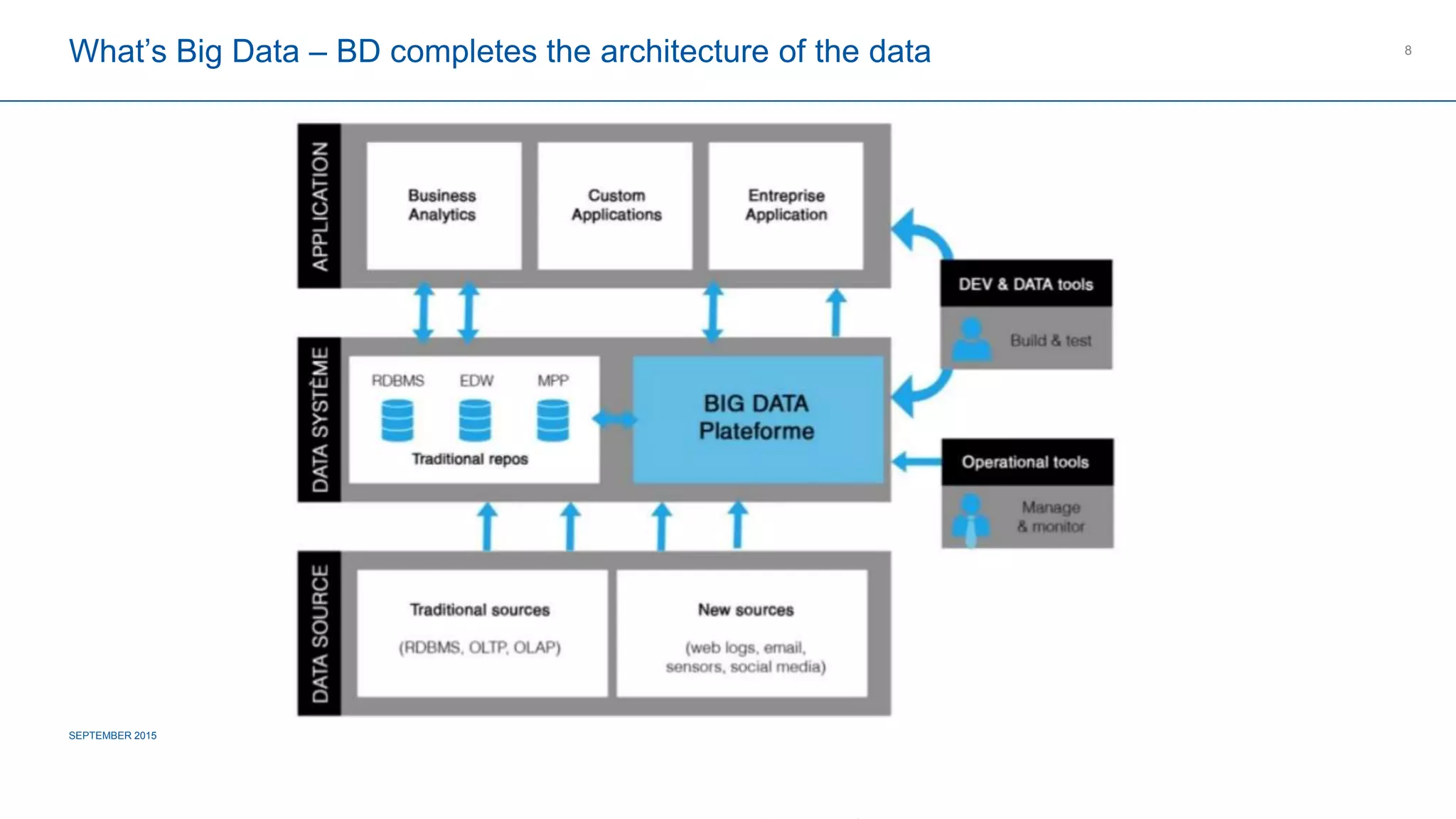

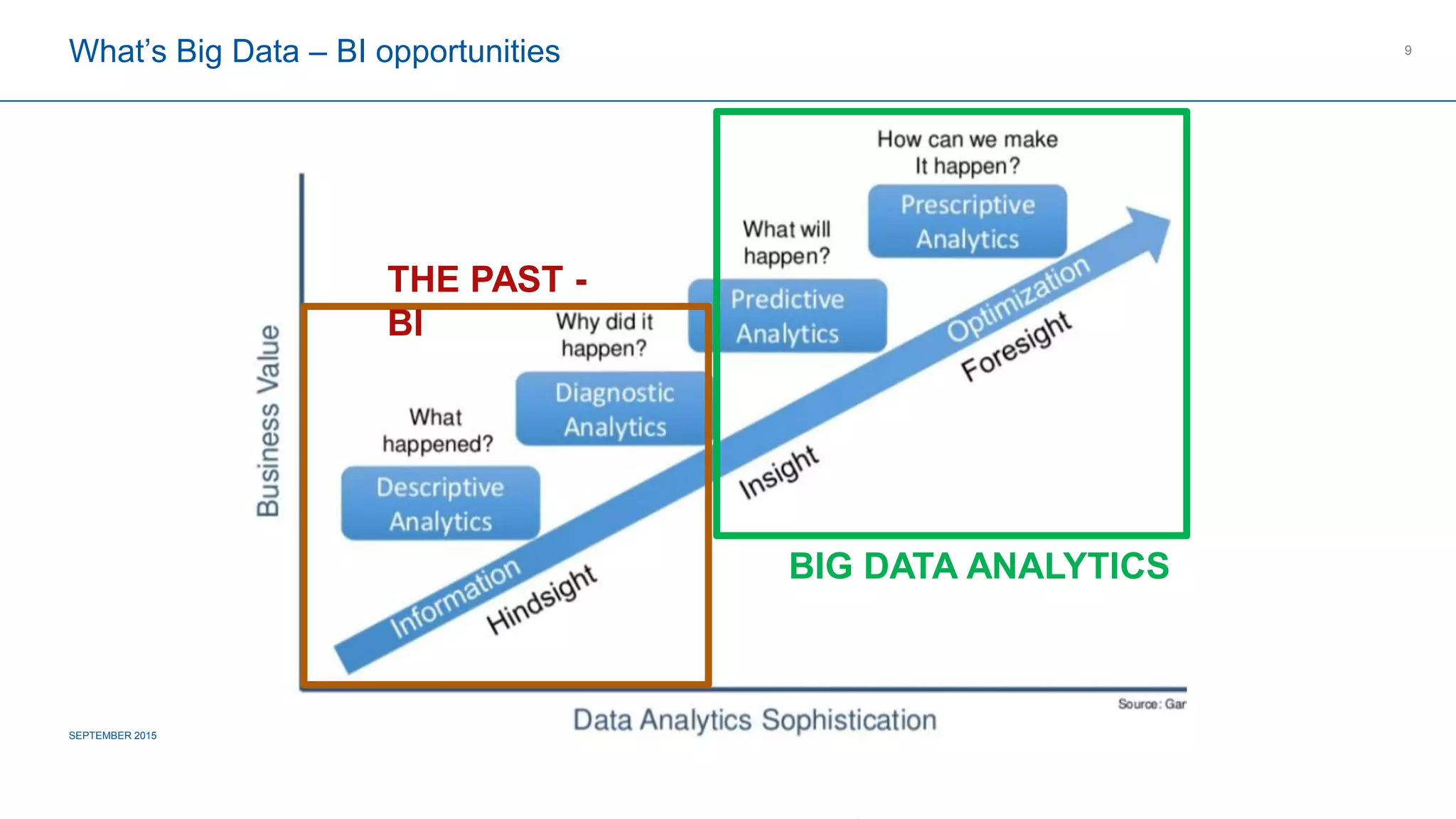

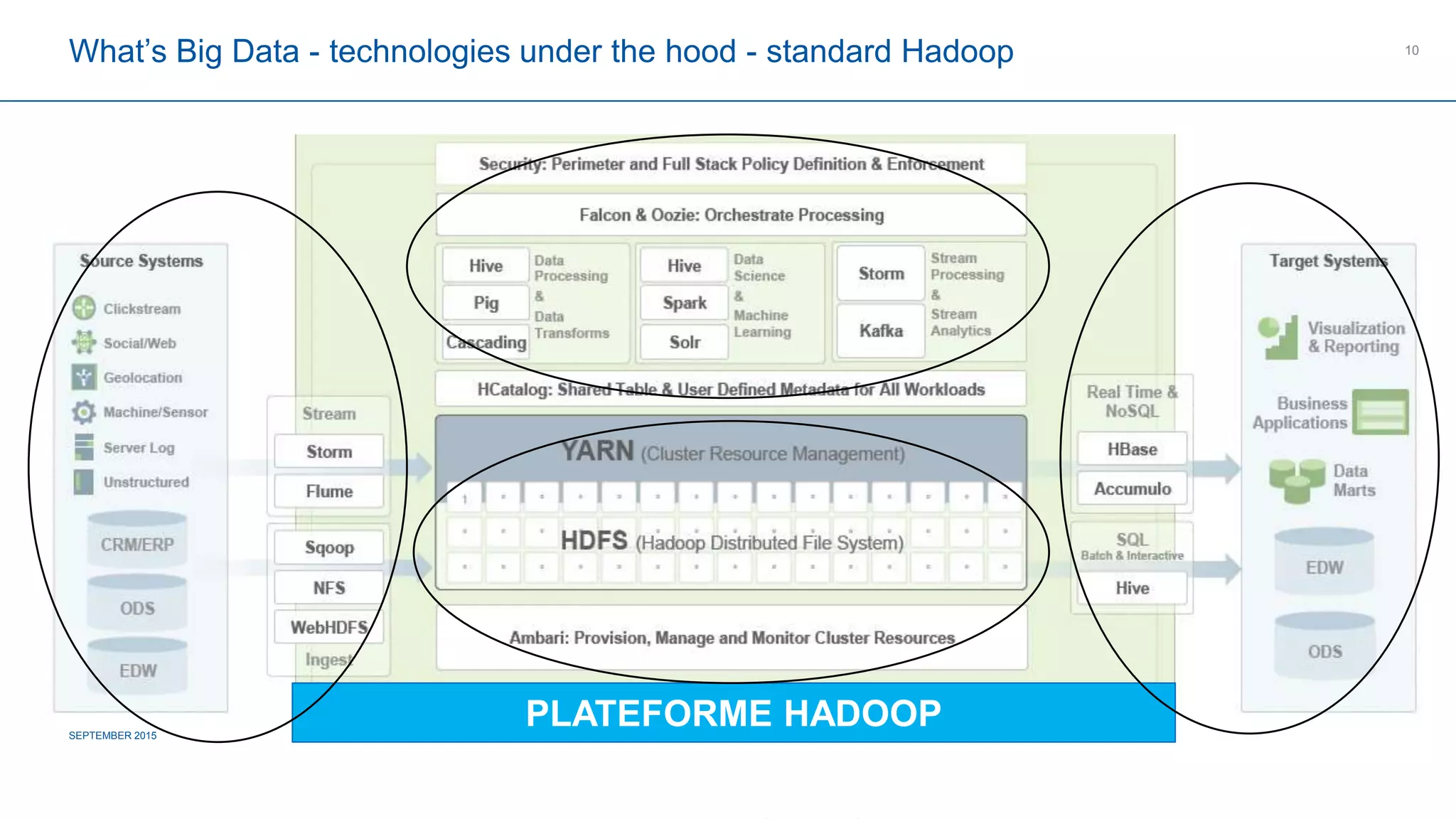

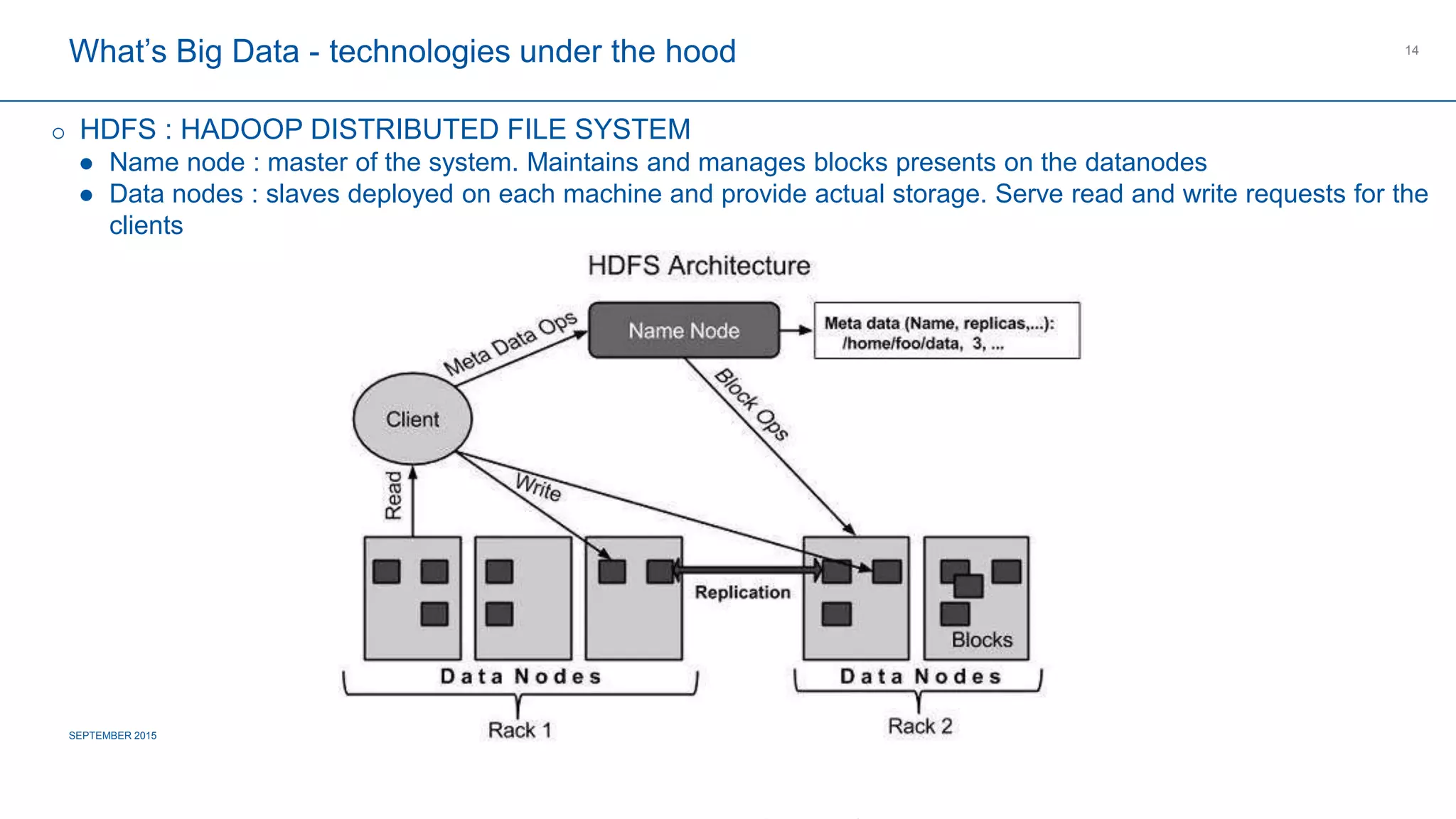

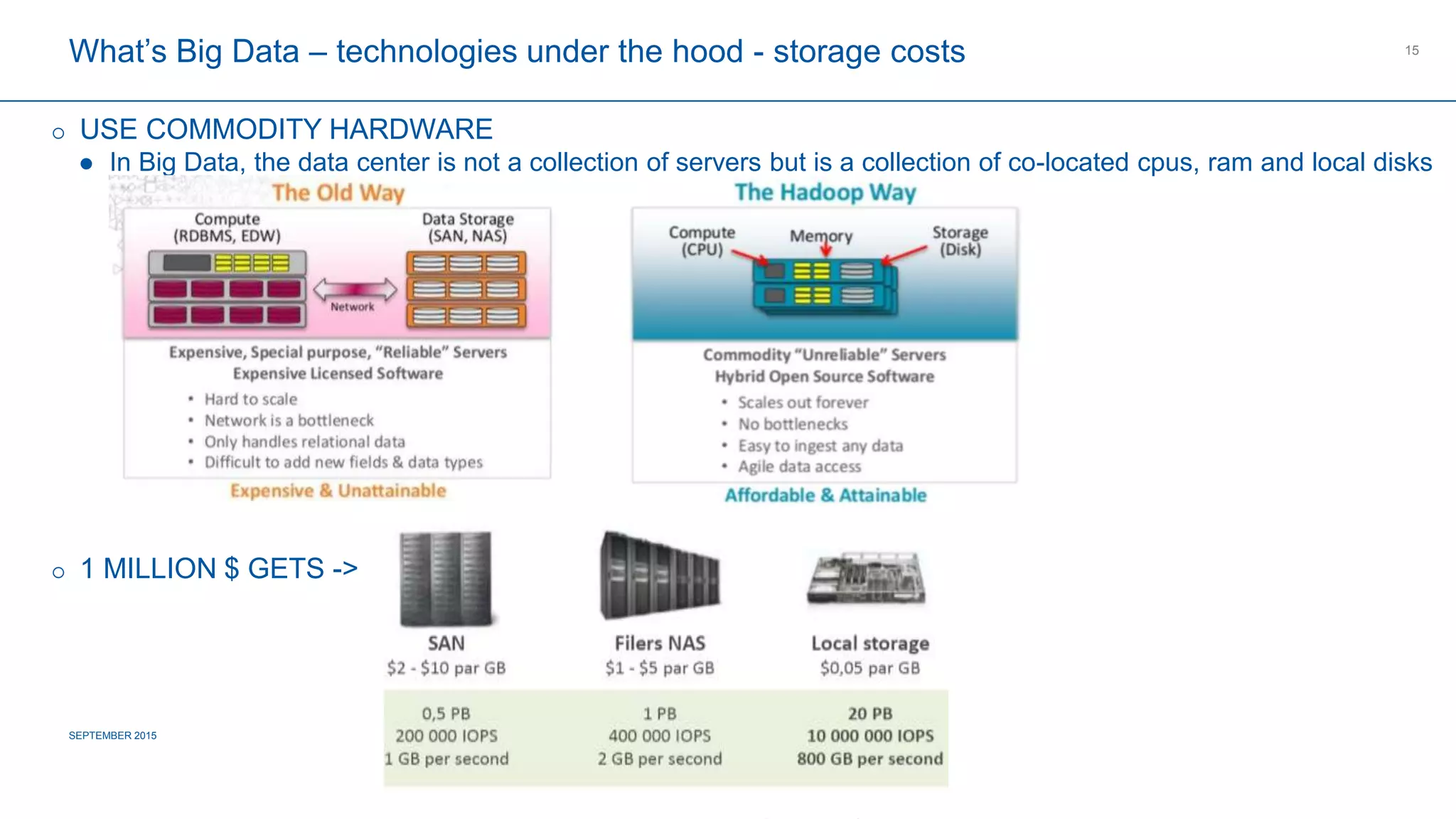

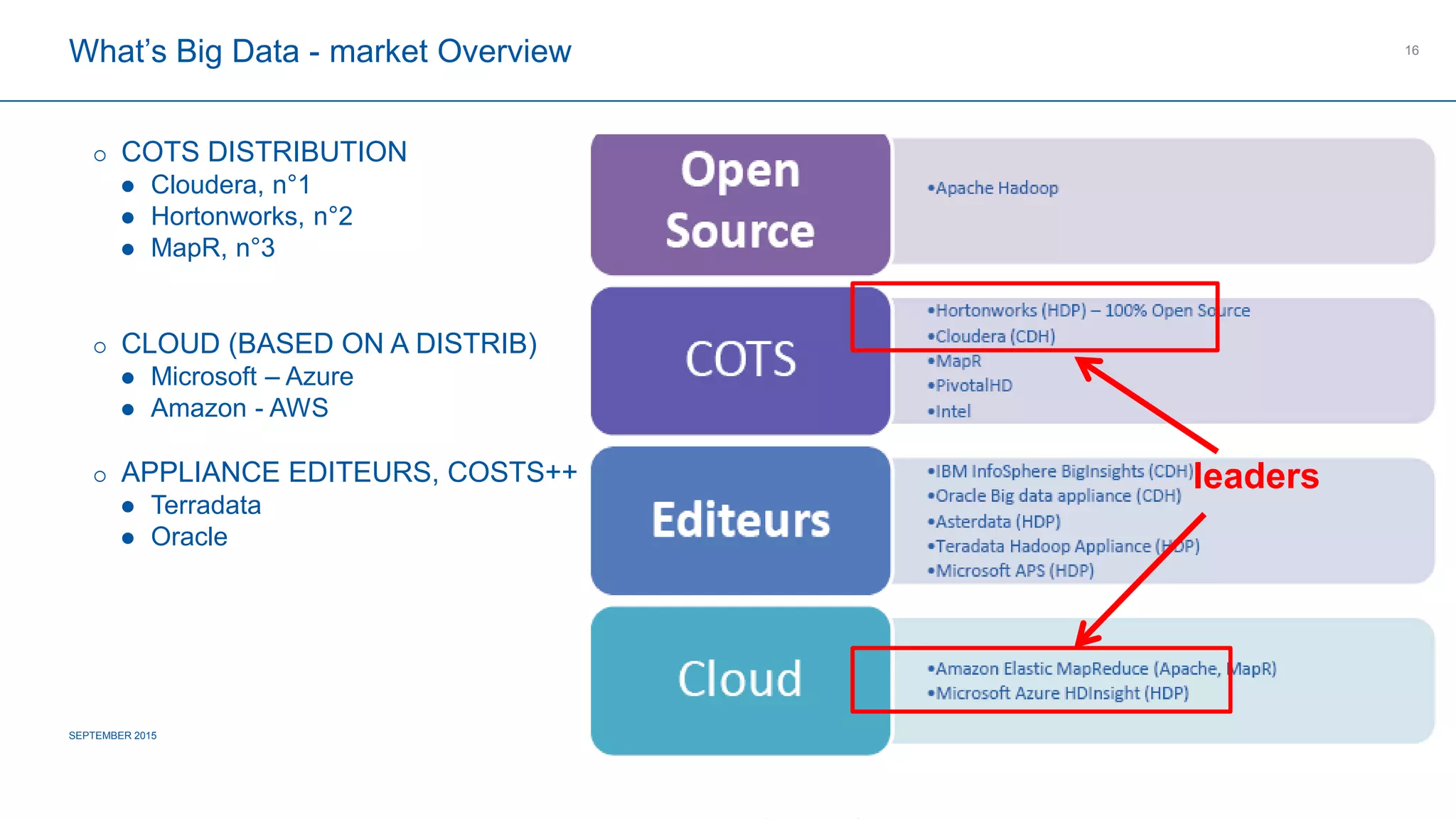

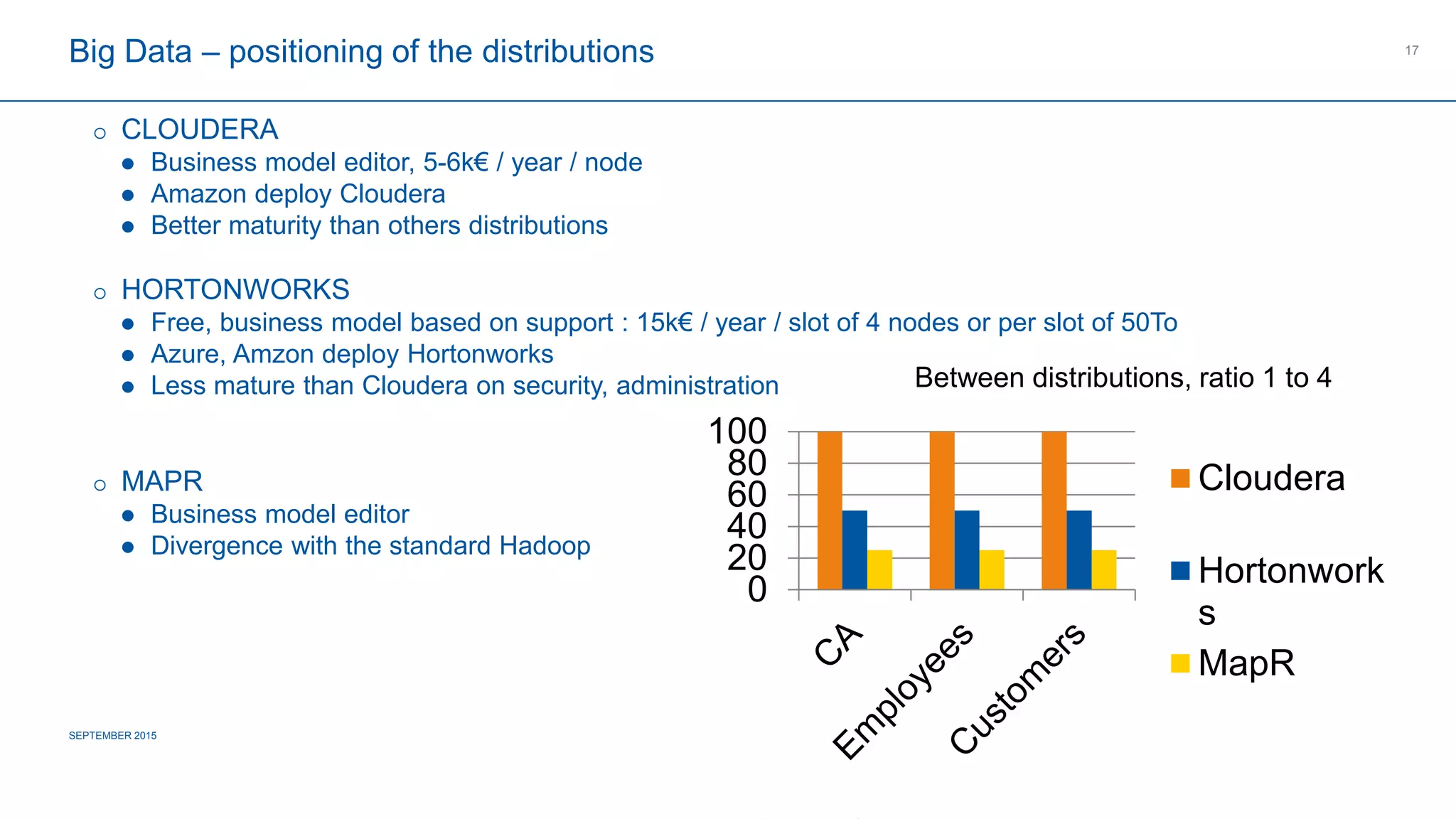

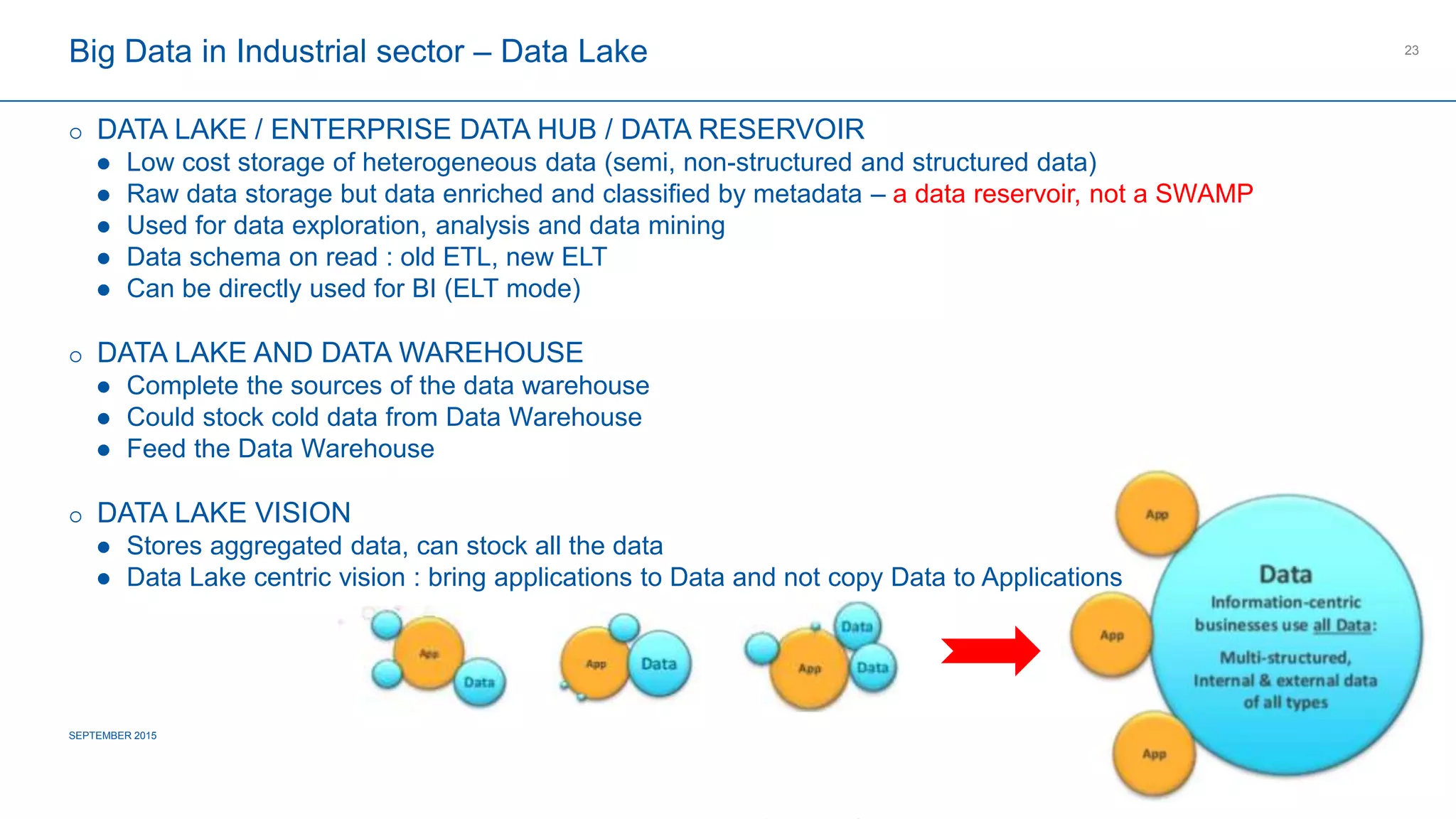

The document provides an overview of big data's definition, technologies, and industrial applications, highlighting its value in managing large volumes of diverse data. It discusses the importance of creating data lakes, using real-time data for predictive maintenance, and integrating big data with existing business intelligence strategies. It also outlines the market leaders and offers a roadmap for implementing big data across various sectors.