Big Data presents both opportunities and challenges for insurance companies. It allows for more customized products and services through improved segmentation, prediction, and risk analysis. However, it also requires developing a data-driven culture and trust in data governance to realize these benefits. Emerging techniques like predictive modeling, data clustering, sentiment analysis and web crawling can provide new insights but also raise concerns around data privacy and security with more personal customer information. Overall, insurance companies that embrace Big Data and make data-driven decisions are found to be 5% more productive and 6% more profitable.

![15

References

Diversity Limited. (2010). Moving your infrastructure to the cloud. [pdf]. Retrieved from

http://diversity.net.nz/wp-content/uploads/2011/01/Moving-to-the-Clouds.pdf

Economist Intelligence Unit. (2013). Fostering a data-driven culture. [pdf].

Retrieved from

http://www.economistinsights.com/search/node/sites%20default%20files%20downloads%20Tableau%20DataCu

lture%20130219%20pdf

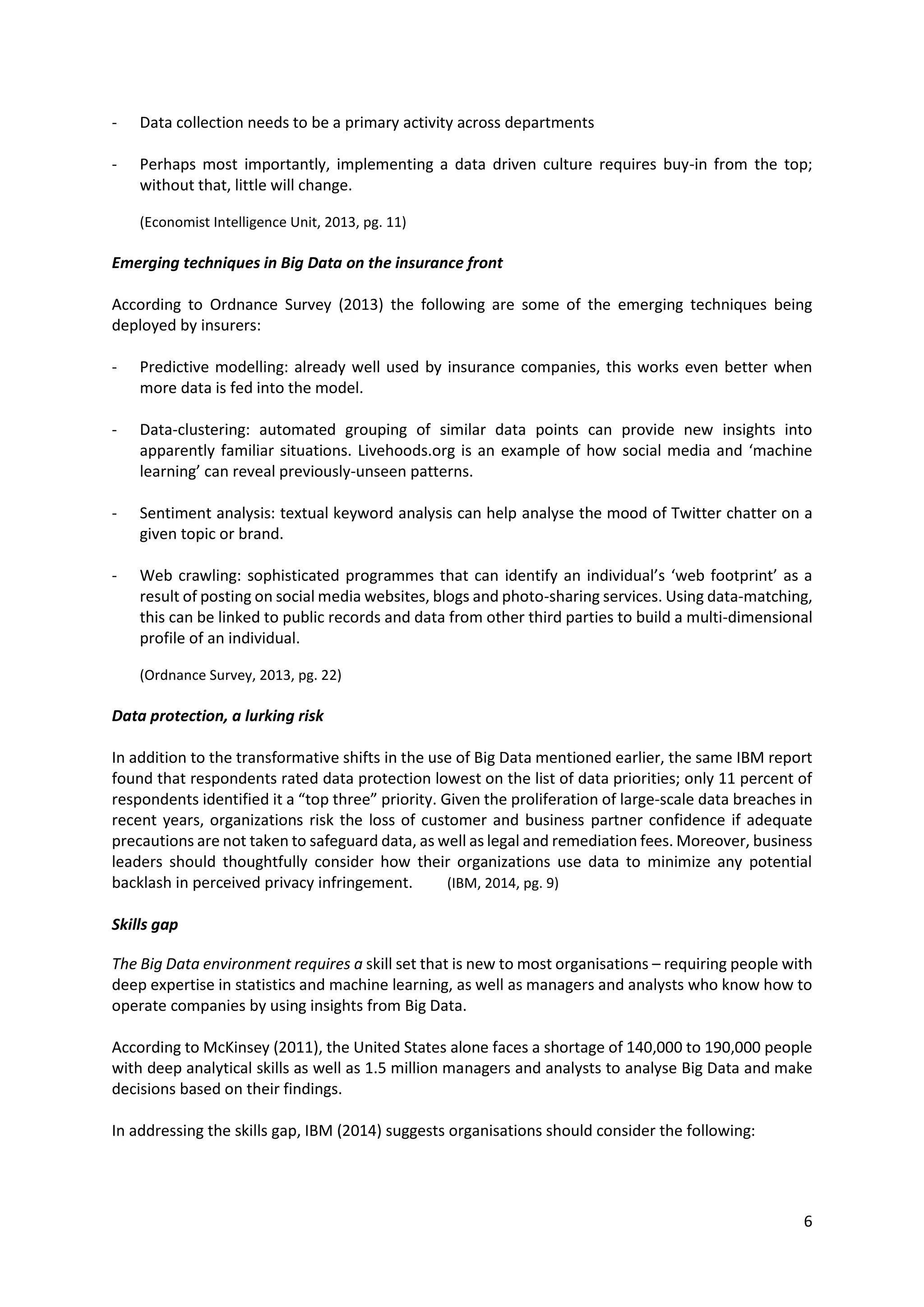

Gartner. (2013). Characteristics of the traditional versus the big data approach. [Table]. Retrieved from Gartner. (2013).

Big data business benefits are hampered by 'culture clash'. [pdf]. Retrieved from

https://www.gartner.com/doc/2588415

Gartner. (2013). Use big data to solve fraud and security problems. [pdf]. Retrieved from

https://www.gartner.com/doc/2397715

Gartner. (2013). How it should deepen big data analysis to support customer-centricity. [pdf].

Retrieved from https://www.gartner.com/doc/2531116

Gartner. (2013). Consistent view of the customer for big data. [Diagram]. Retrieved from Gartner. (2013). How it should

deepen big data analysis to support customer-centricity. [pdf].

Retrieved from https://www.gartner.com/doc/2531116

Gartner. (2014). Agenda overview for p&c and life insurance. [pdf].

Retrieved from https://www.gartner.com/doc/2643327

HBR. (2012). Big Data: The management revolution. [pdf].

Retrieved from https://hbr.org/2012/10/big-data-the-management-revolution/ar

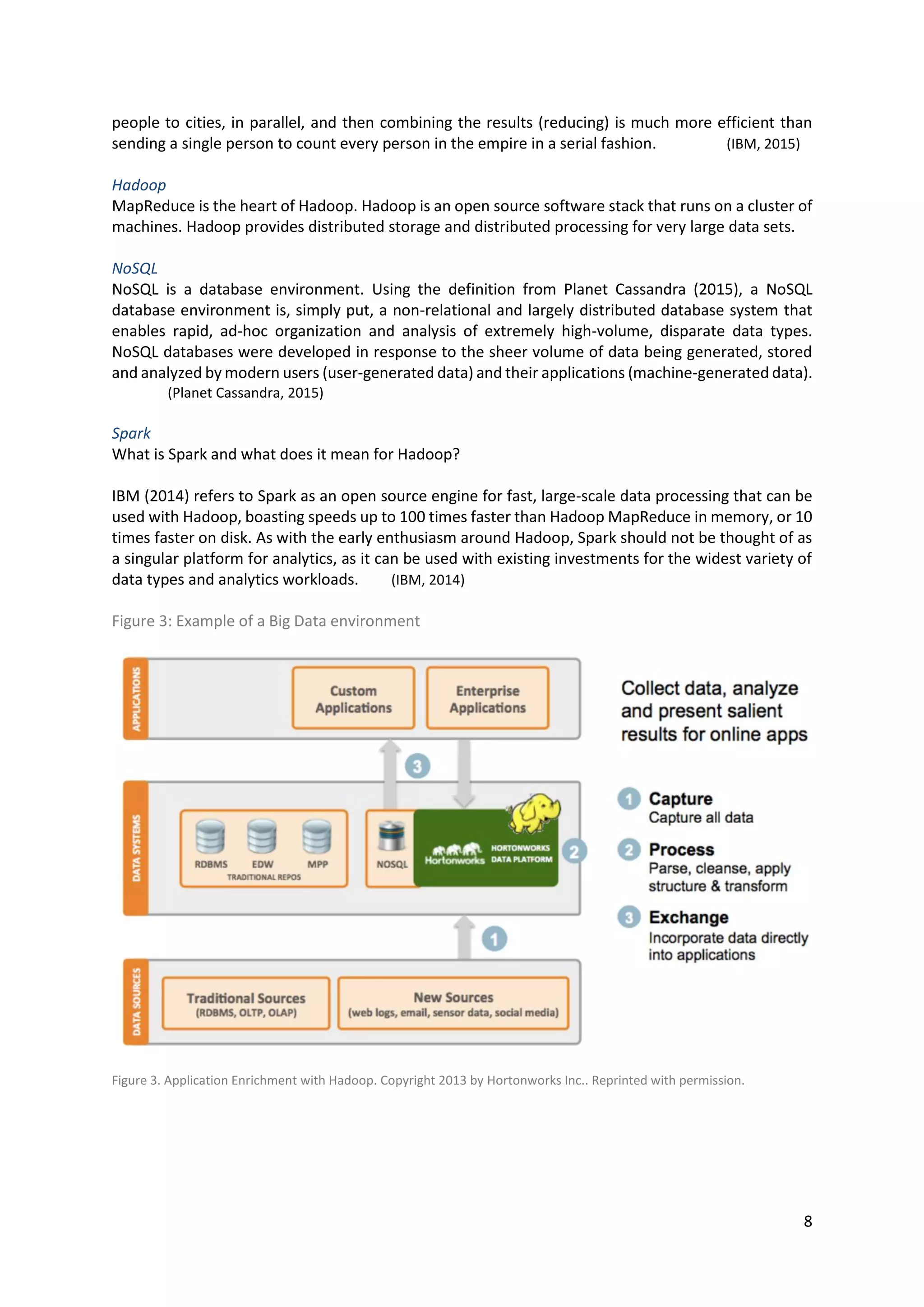

Hortonworks. (2013). Application enrichment with hadoop. [Diagram]. Retrieved from Hortonworks. (2013).

Apache Hadoop patterns of use. [pdf]. Retrieved from http://hortonworks.com/blog/apache-hadoop-patterns-of-

use-refine-enrich-and-explore/

IBM. (2012). Four dimensions of big data. [Diagram] Retrieved from IBM, (2012). Analytics: the real-world use of big

data. [pdf]. Retrieved from

http://public.dhe.ibm.com/common/ssi/ecm/en/gbe03519usen/GBE03519USEN.PDF

IBM. (2012). Analytics: the real-world use of big data. [pdf].

Retrieved from http://public.dhe.ibm.com/common/ssi/ecm/en/gbe03519usen/GBE03519USEN.PDF

IBM. (2012). Insurance bureau of Canada. [pdf]. Retrieved from

http://www-01.ibm.com/common/ssi/cgi-

bin/ssialias?subtype=AB&infotype=PM&appname=SWGE_IM_IM_USEN&htmlfid=IMC14775USEN&attachment=I

MC14775USEN.PDF

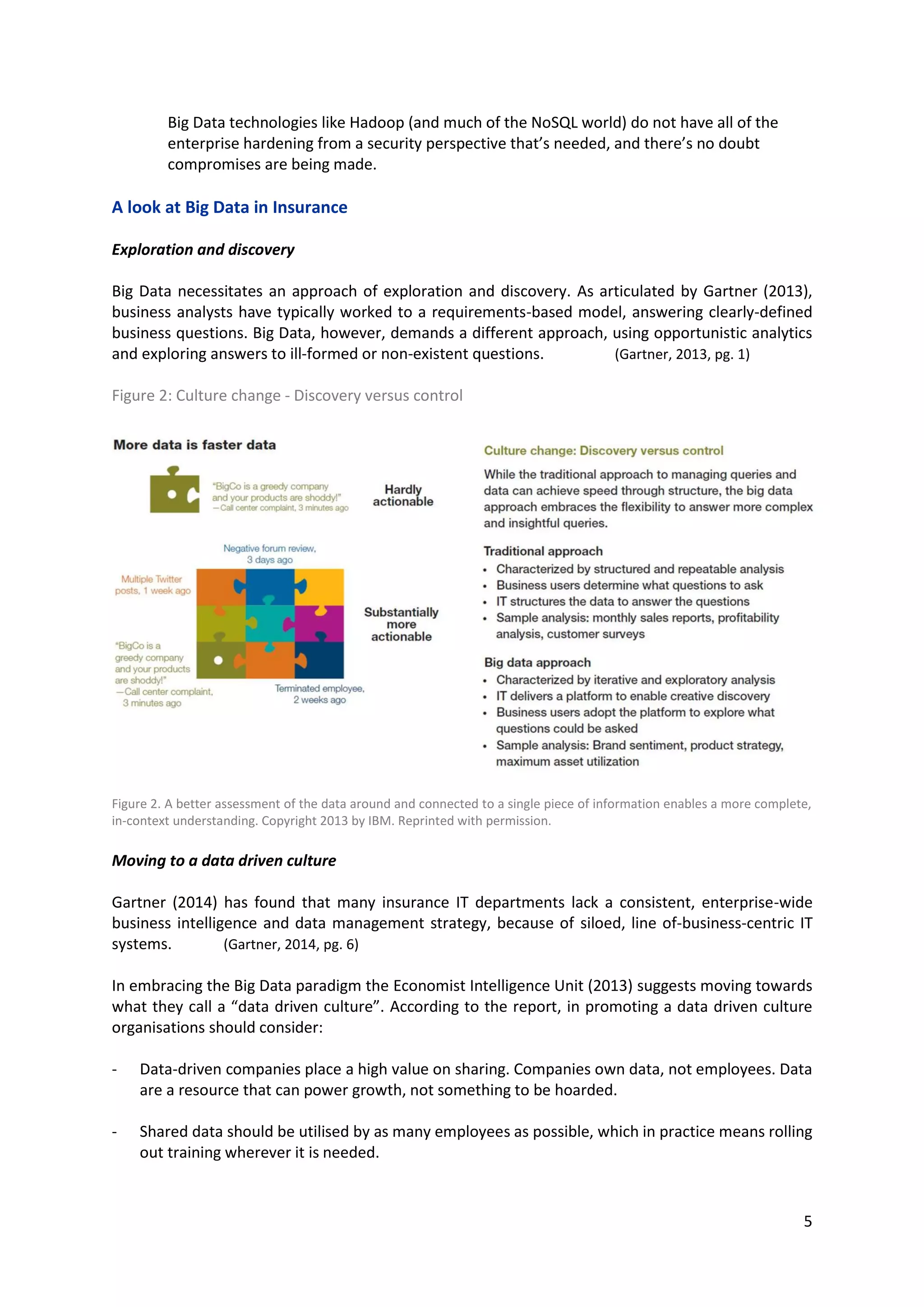

IBM. (2013). A better assessment of the data around and connected to a single piece of information enables a more

complete, in-context understanding. [Diagram]. Retrieved from IBM. (2013). The future of insurance. [pdf].

Retrieved from http://public.dhe.ibm.com/common/ssi/ecm/en/imw14671usen/IMW14671USEN.PDF

IBM. (2013). Harnessing the power of big data and analytics for insurance. [pdf]. Retrieved from

http://public.dhe.ibm.com/common/ssi/ecm/en/imw14672usen/IMW14672USEN.PDF

IBM. (2014). Analytics: The speed advantage. [pdf].

Retrieved from http://www-935.ibm.com/services/us/gbs/thoughtleadership/2014analytics/

IBM. (2014). IBM expands hadoop commitment with support for spark.. [blog].

Retrieved from http://www.ibmbigdatahub.com/blog/ibm-expands-hadoop-commitment-support-spark

IBM. (2015). Analytics: What is mapreduce. [web page].

Retrieved from http://www-01.ibm.com/software/data/infosphere/hadoop/mapreduce/](https://image.slidesharecdn.com/assignment1-436-greggbarrett-150715134753-lva1-app6891/75/Big-Data-Opportunities-Strategy-and-Challenges-16-2048.jpg)

![16

IBM. (2015). BigInsights for apache hadoop quick start edition. [pdf].

Retrieved from http://www-01.ibm.com/common/ssi/cgi-

bin/ssialias?infotype=PM&subtype=BR&htmlfid=IMB14164USEN#loaded

IBM. (2015). Making the case for hadoop and big data in the enterprise. [pdf].

Retrieved from http://www-01.ibm.com/common/ssi/cgi-

bin/ssialias?infotype=PM&subtype=BK&htmlfid=IMM14161USEN#loaded

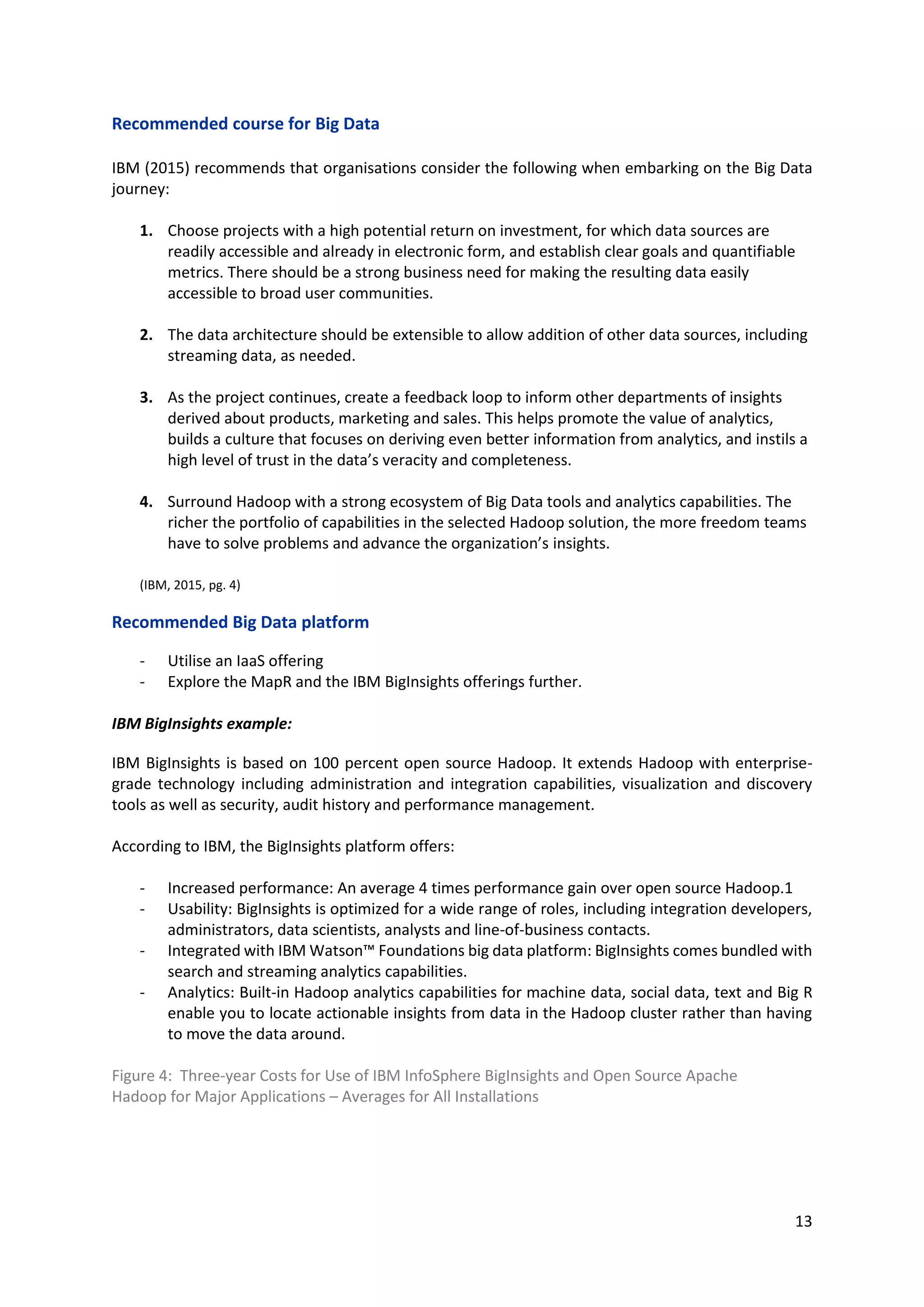

ITG. (2013). Business case for enterprise big data deployments. [pdf].

Retrieved from http://www-01.ibm.com/common/ssi/cgi-

bin/ssialias?htmlfid=IME14028USEN&appname=skmwww

ITG. (2013). Three-year Costs for Use of IBM InfoSphere BigInsights and Open Source Apache

Hadoop for Major Applications. [Diagram].

Retrieved from ITG. (2013). Business case for enterprise big data deployments. [pdf].

Retrieved from http://www-01.ibm.com/common/ssi/cgi-

bin/ssialias?htmlfid=IME14028USEN&appname=skmwww

Intel. (2015). Big data cloud technology. [pdf].

Retrieved from http://www.intel.co.za/content/dam/www/public/us/en/documents/product-briefs/big-data-

cloud-technologies-brief.pdf

McKinsey. (2011). Big data: The next frontier for innovation, competition, and productivity. [pdf].

Retrieved from

http://www.mckinsey.com/insights/business_technology/big_data_the_next_frontier_for_innovation

Ordnance Survey. (2013) The big data rush: how data analytics can yield underwriting gold. [pdf].

Retrieved from http://events.marketforce.eu.com/big-data-underwriting-report-email

Planet Cassandra. (2015). Nosql databases defined and explained. [web page].

Retrieved from http://www.planetcassandra.org/what-is-nosql/

Standish, J. (2013). Speed to detection - strategically leveraging advanced analytics for insurance fraud. [blog]. Retrieved

from

http://www.johnstandishconsultinggroup.com/JohnStandishConsultingGroup.com/Blog/Entries/2013/8/9_Speed

_to_Detection_-_Strategically_Leveraging_Advanced_Analytics_for_Insurance_Fraud.html

Strategy Meets Action. (2012). Data and analytics in insurance. [pdf].

Retrieved from https://www.acord.org/library/Documents/2012_SMA_Data_Analytics.pdf

Strategy Meets Action. (2013). Data and analytics in insurance: p&c plans and priorities for 2013 and beyond. [pdf].

Retrieved from https://strategymeetsaction.com/data-and-analytics-in-insurance-p-and-c-plans-and-priorities-

for-2013-and-beyond/

Zikopoulos, P., deRoos, D., Bienko, C., Buglio, R., Andrews, M. (2014). Big data beyond the hype. [pdf].

Retrieved from

https://www.ibm.com/developerworks/community/blogs/SusanVisser/entry/big_data_beyond_the_hype_a_gui

de_to_conversations_for_today_s_data_center?lang=en](https://image.slidesharecdn.com/assignment1-436-greggbarrett-150715134753-lva1-app6891/75/Big-Data-Opportunities-Strategy-and-Challenges-17-2048.jpg)