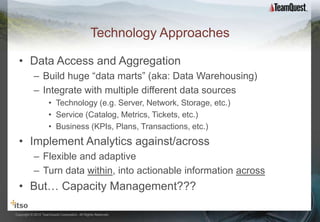

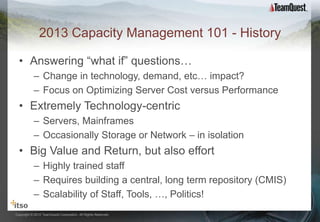

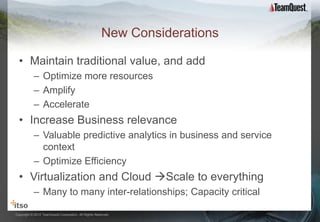

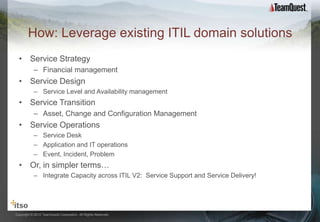

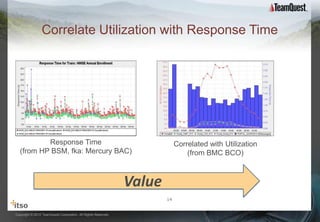

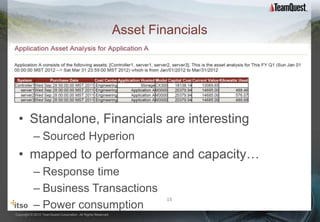

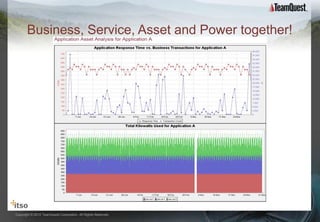

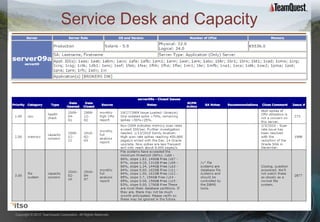

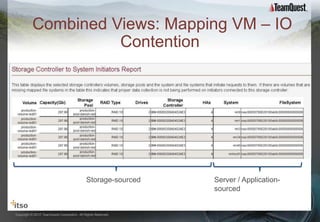

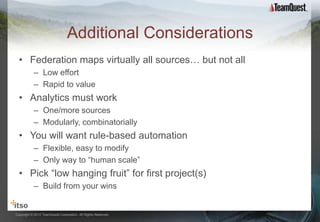

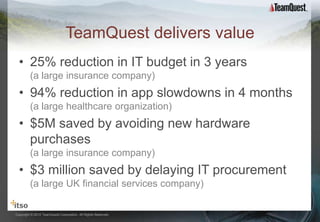

TeamQuest advocates applying "big data" approaches to capacity management, using faster and more scalable techniques to optimize resources. Traditional capacity management focused on technology alone and was staff-intensive. The newer approach uses federated analytics to integrate data from technology, service, and business sources. The result is a single view of capacity across the organization that correlates metrics such as response time, tickets, and financial data to surface new insights for improving efficiency.
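
As a rough illustration of what correlating metrics across sources can look like, the sketch below merges per-service records from three sources into one view and computes a Pearson correlation between two metrics. It is not TeamQuest's method or product API. The source names, field names (`response_ms`, `tickets`, `cost_usd`), the helper functions, and all sample values are hypothetical and made up for the example.

```python
from math import sqrt

# Hypothetical sample records, keyed by service name. The values are
# invented for illustration and do not come from any real system.
technology = {
    "checkout": {"response_ms": 100.0},
    "search": {"response_ms": 200.0},
    "billing": {"response_ms": 300.0},
    "reports": {"response_ms": 400.0},
    "legacy": {"response_ms": 900.0},  # absent from the other sources
}
services = {
    "checkout": {"tickets": 1},
    "search": {"tickets": 2},
    "billing": {"tickets": 3},
    "reports": {"tickets": 4},
}
business = {
    "checkout": {"cost_usd": 1200.0},
    "search": {"cost_usd": 800.0},
    "billing": {"cost_usd": 1500.0},
    "reports": {"cost_usd": 600.0},
}


def federate(*sources):
    """Merge per-service records, keeping services present in every source."""
    common = set(sources[0])
    for src in sources[1:]:
        common &= set(src)
    view = {}
    for name in sorted(common):
        row = {}
        for src in sources:
            row.update(src[name])
        view[name] = row
    return view


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def correlate(view, metric_a, metric_b):
    """Correlate two metrics across all services in the merged view."""
    rows = list(view.values())
    return pearson([r[metric_a] for r in rows], [r[metric_b] for r in rows])


view = federate(technology, services, business)
for pair in [("response_ms", "tickets"), ("tickets", "cost_usd")]:
    print(pair, round(correlate(view, *pair), 3))
```

The merge keeps only services that appear in every source, so the `legacy` entry is dropped from the combined view. A real deployment would also need to align time windows and handle missing values, which this sketch ignores.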