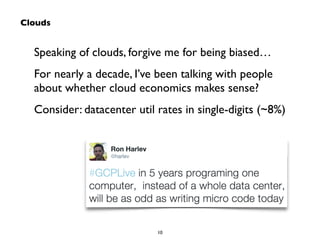

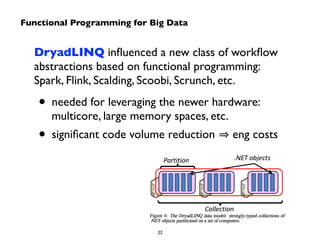

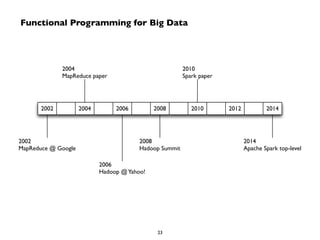

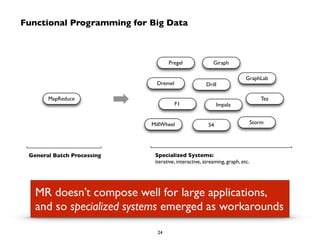

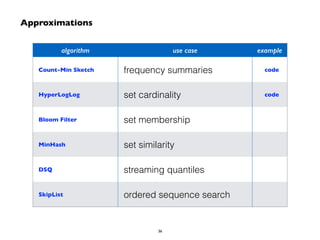

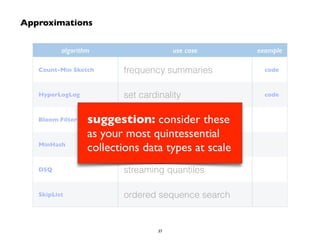

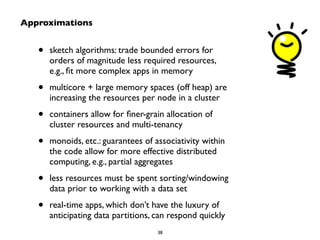

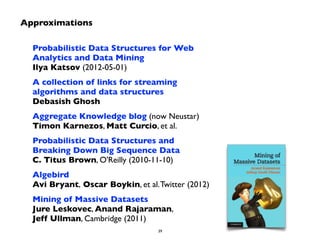

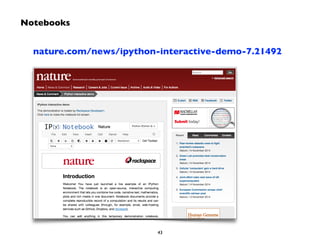

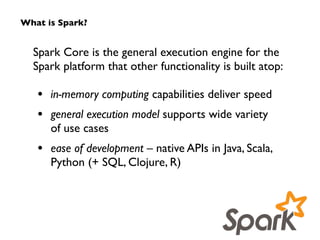

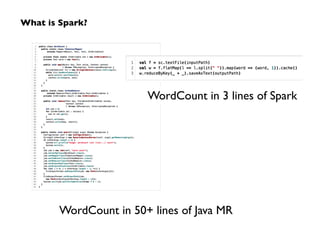

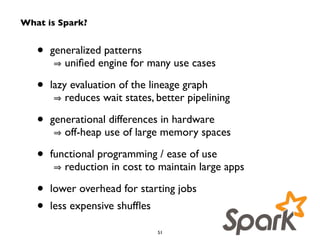

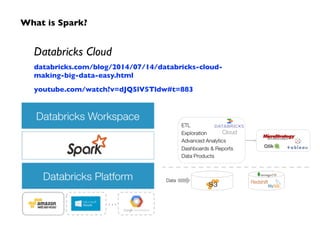

Big Data technologies are changing rapidly, driven by shifts in hardware, data types, and software frameworks. Incumbent Big Data technologies do not fully exploit newer hardware such as multicore processors and large memory spaces, whereas newer open source projects such as Spark have emerged to make better use of these resources. Containers, clouds, functional programming, databases, approximations, and notebooks are significant trends in how Big Data is managed and analyzed at scale.