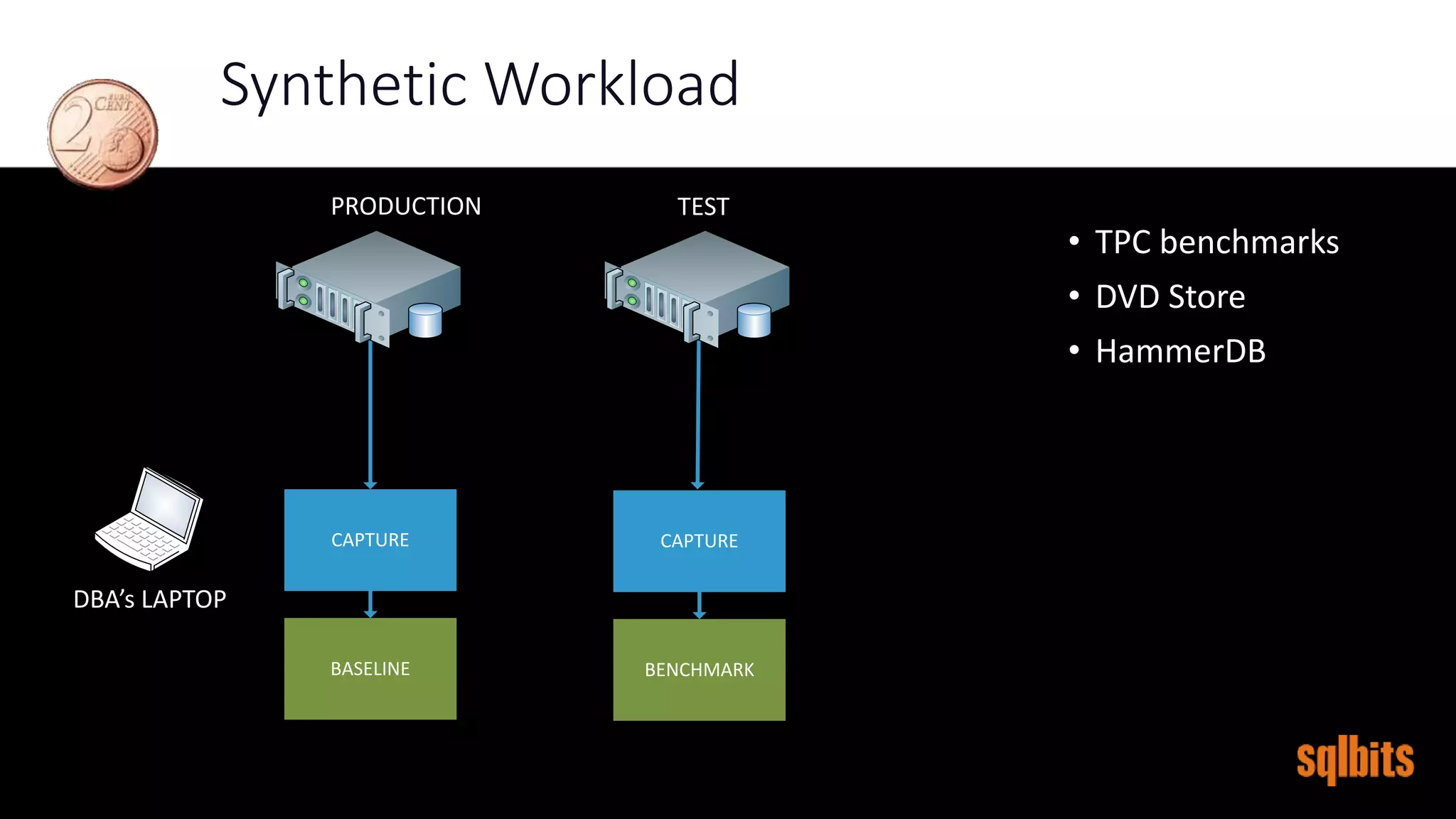

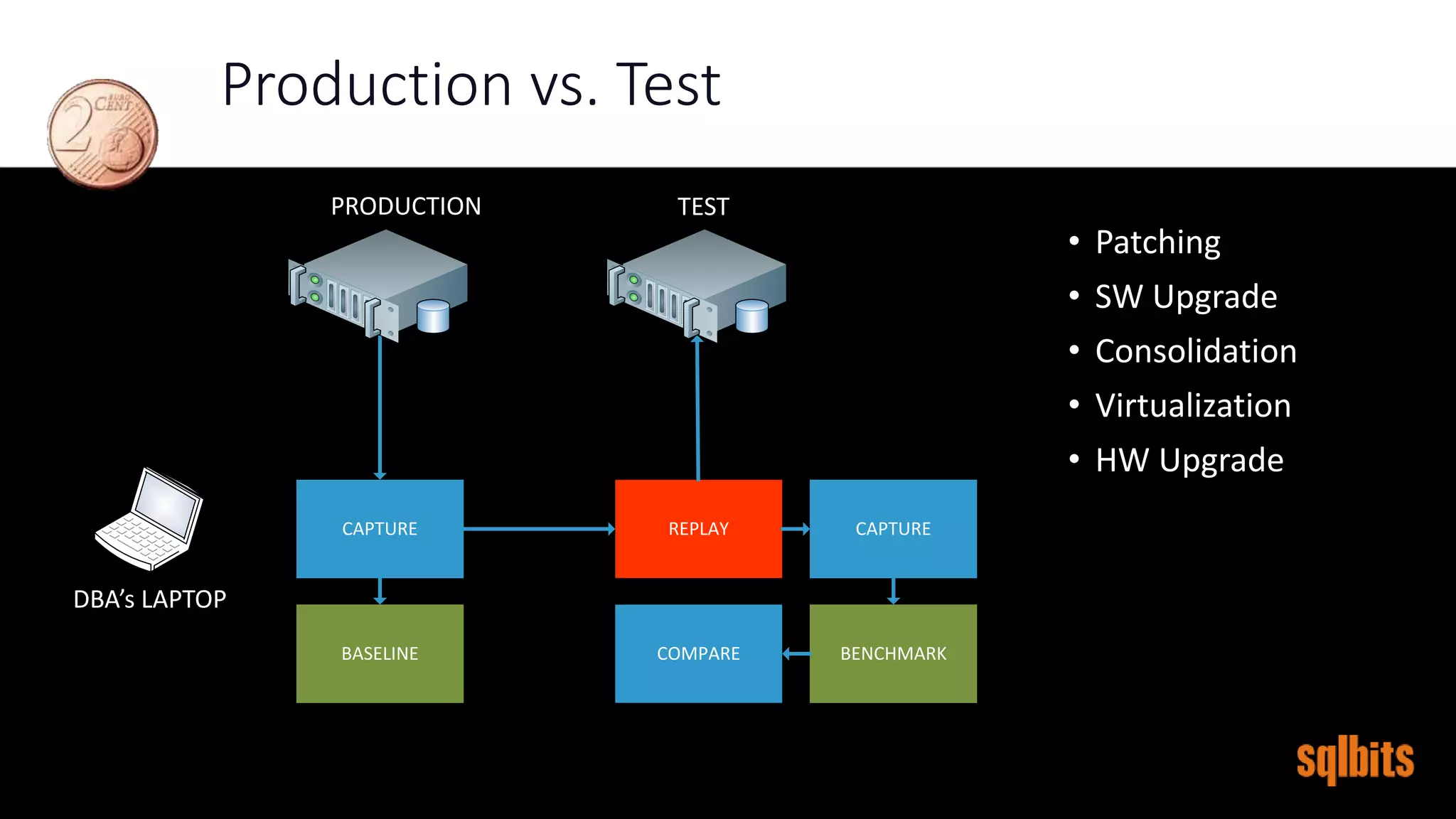

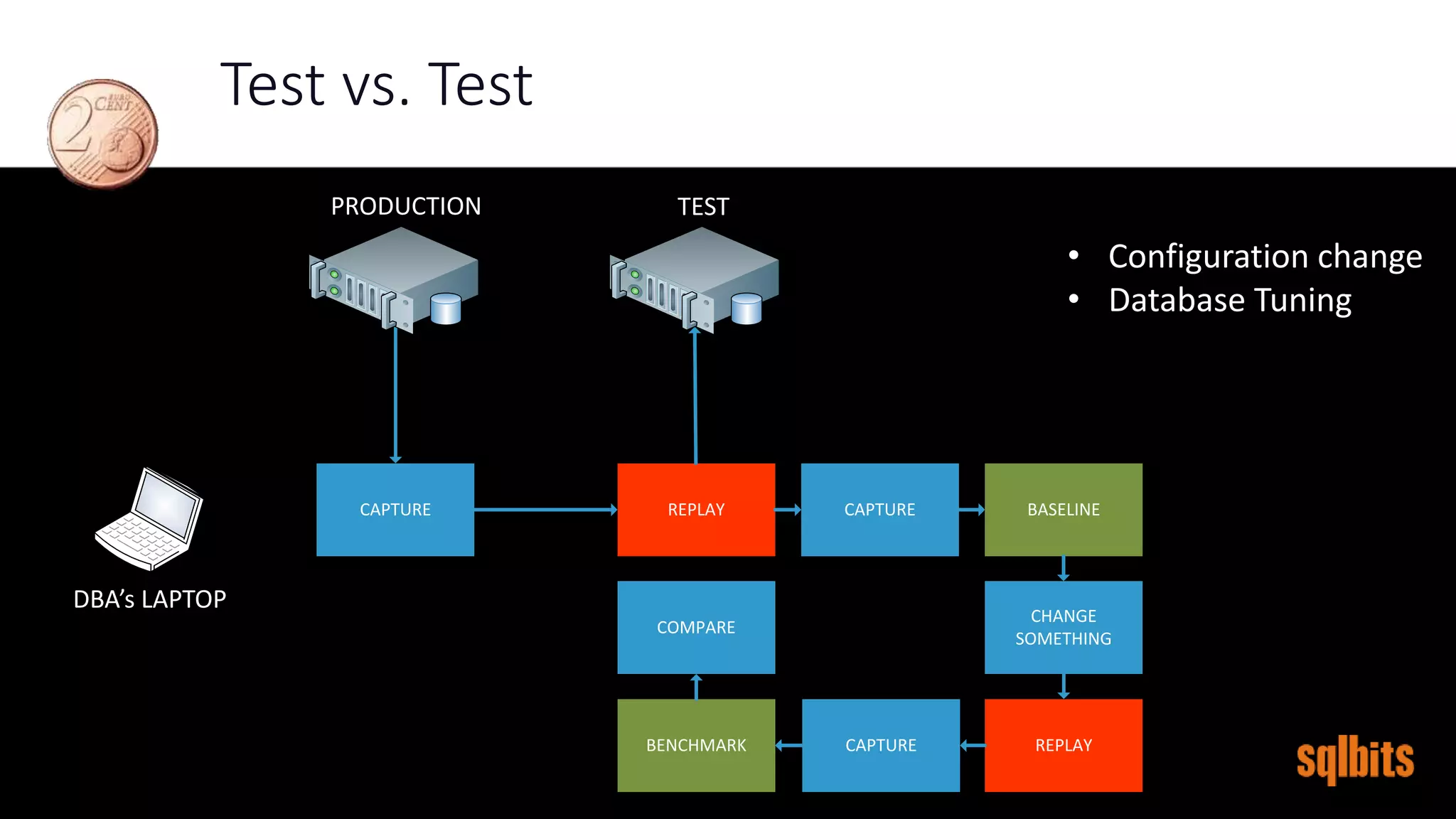

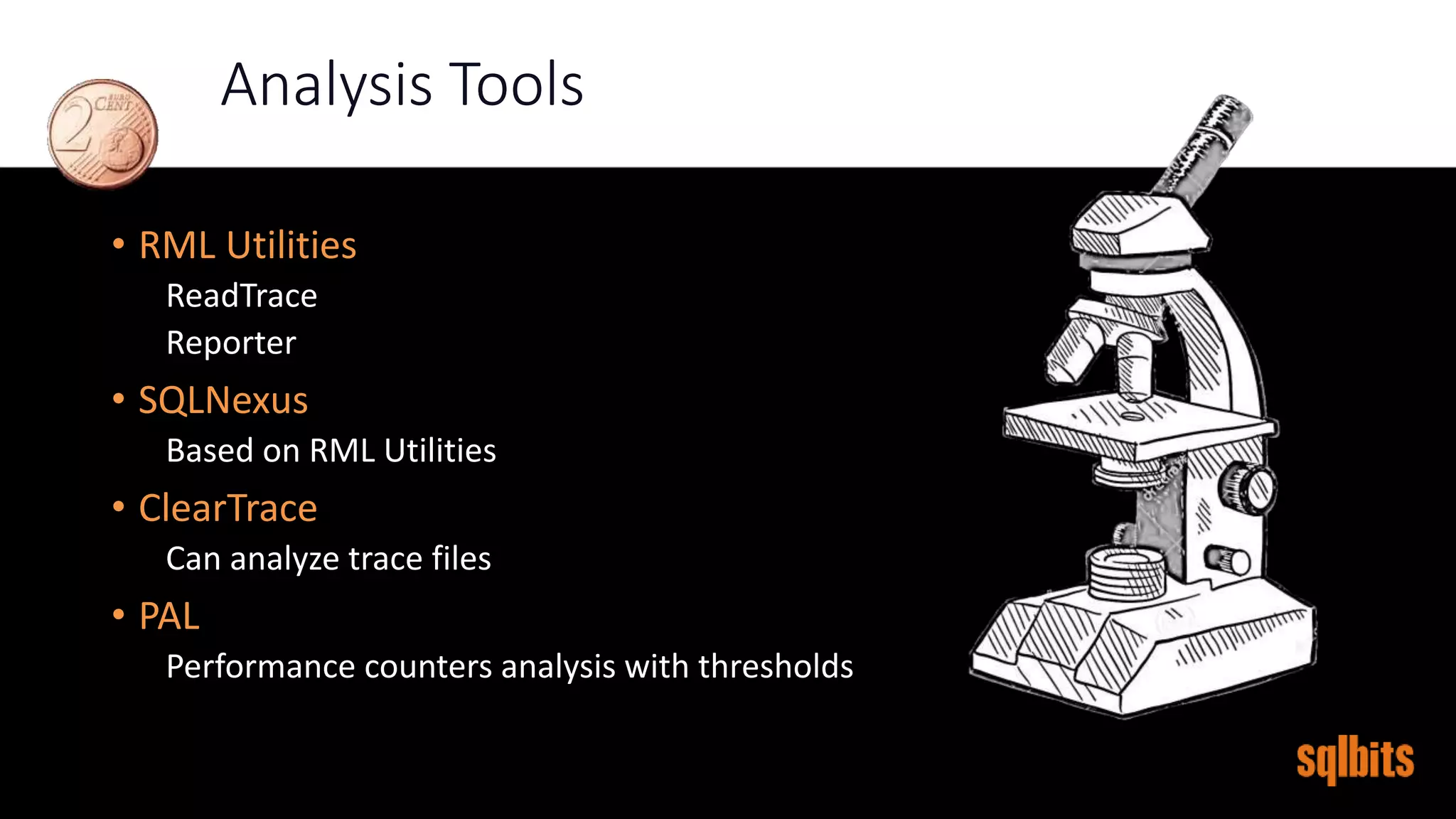

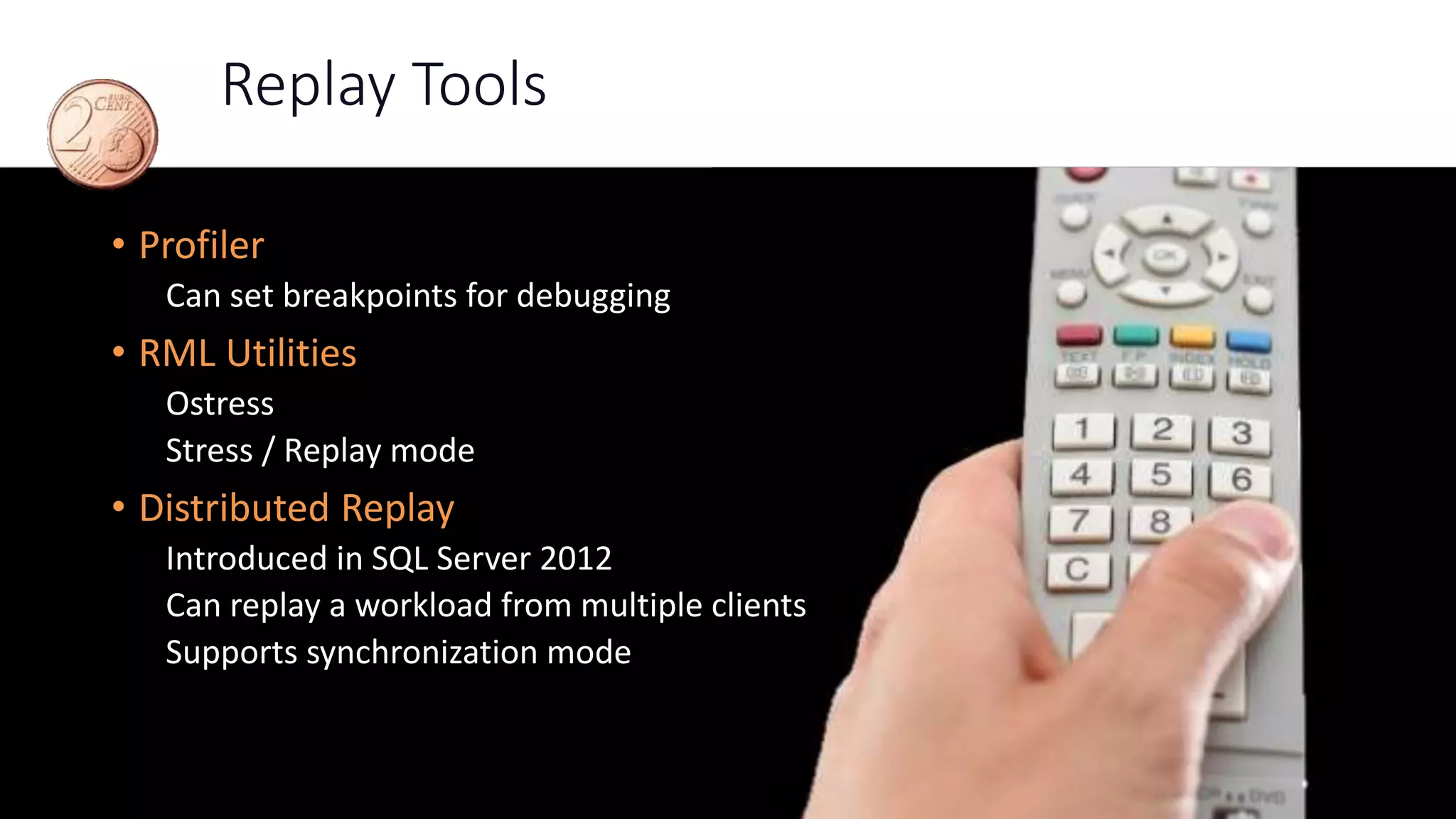

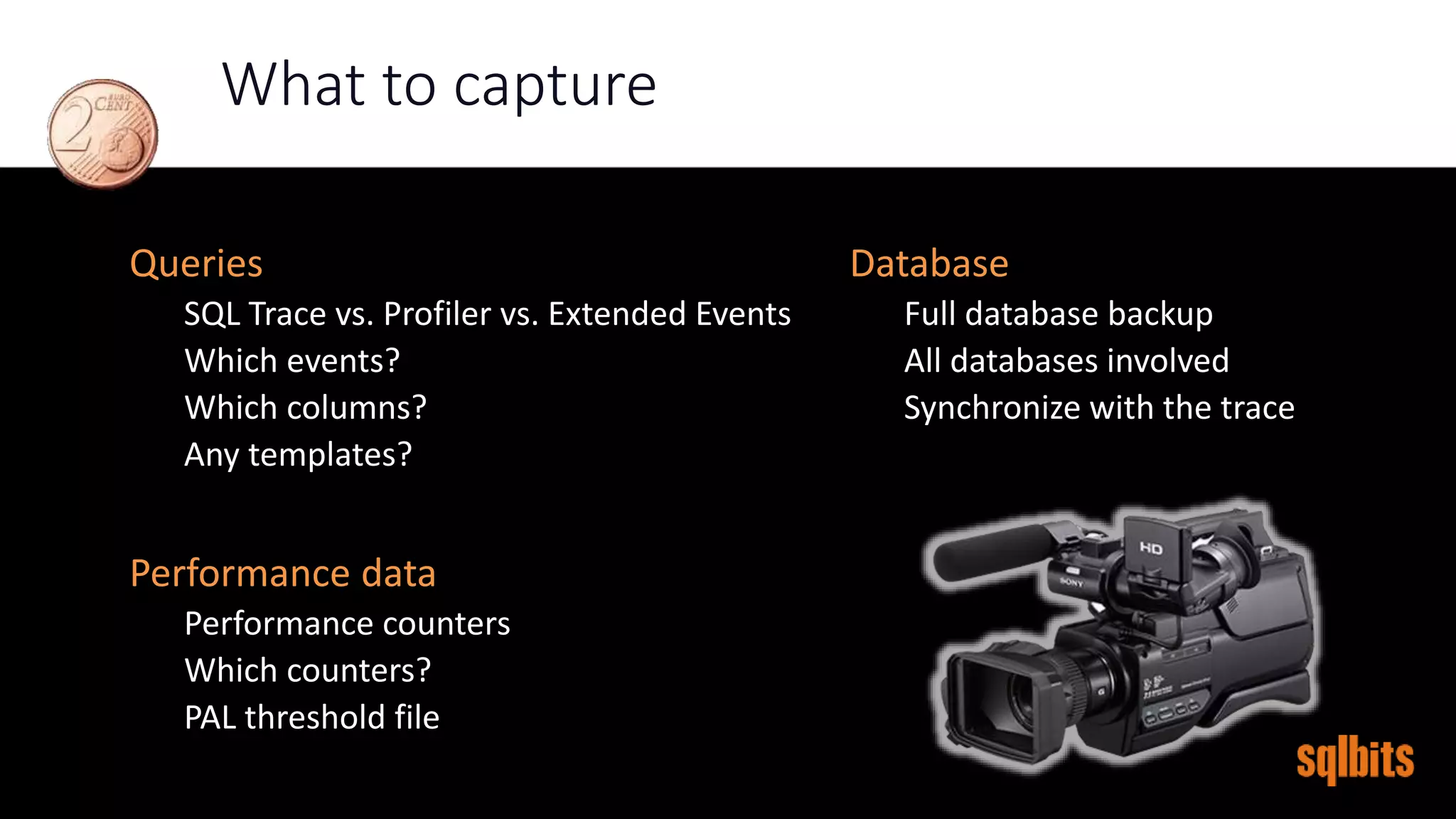

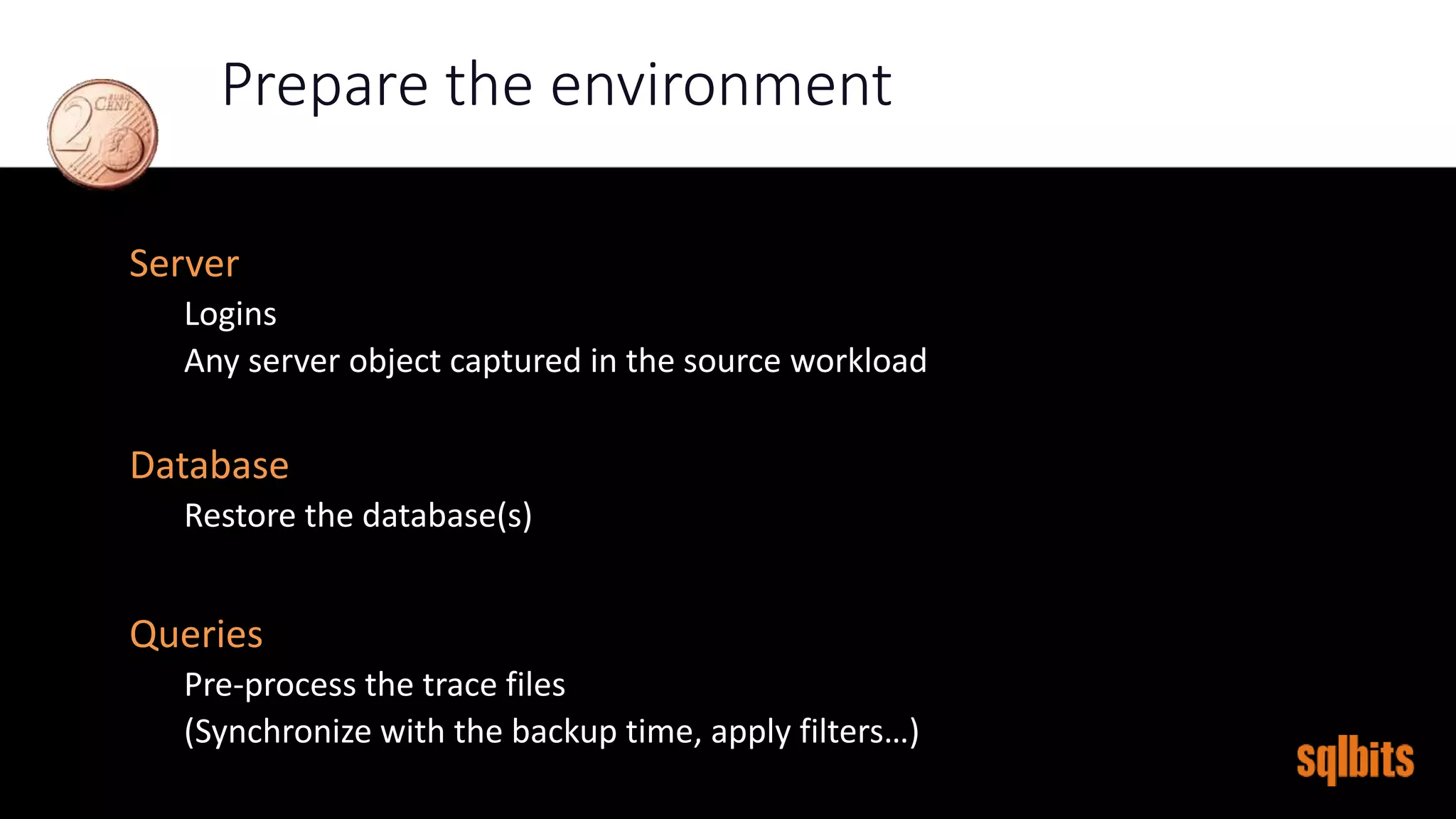

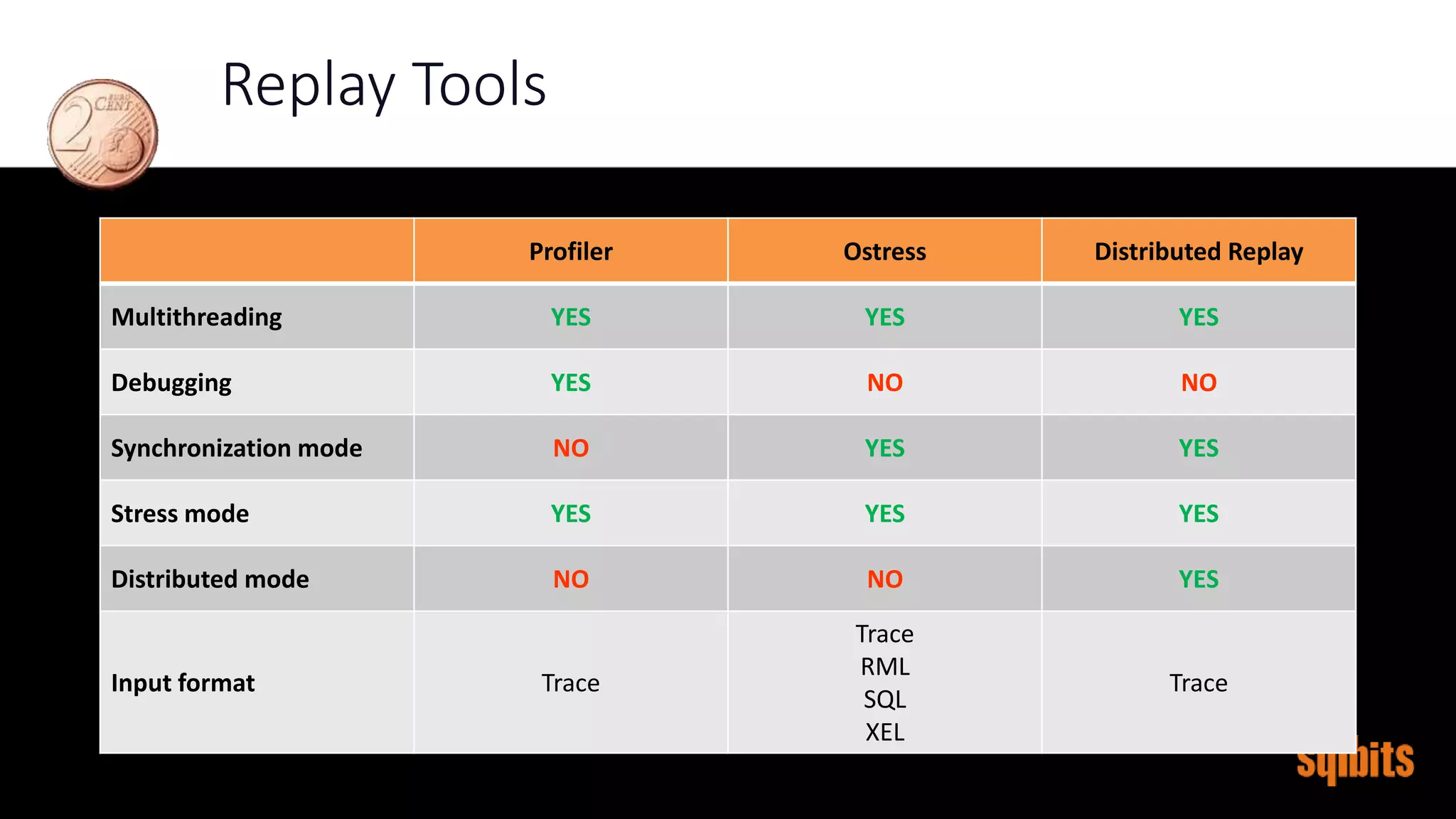

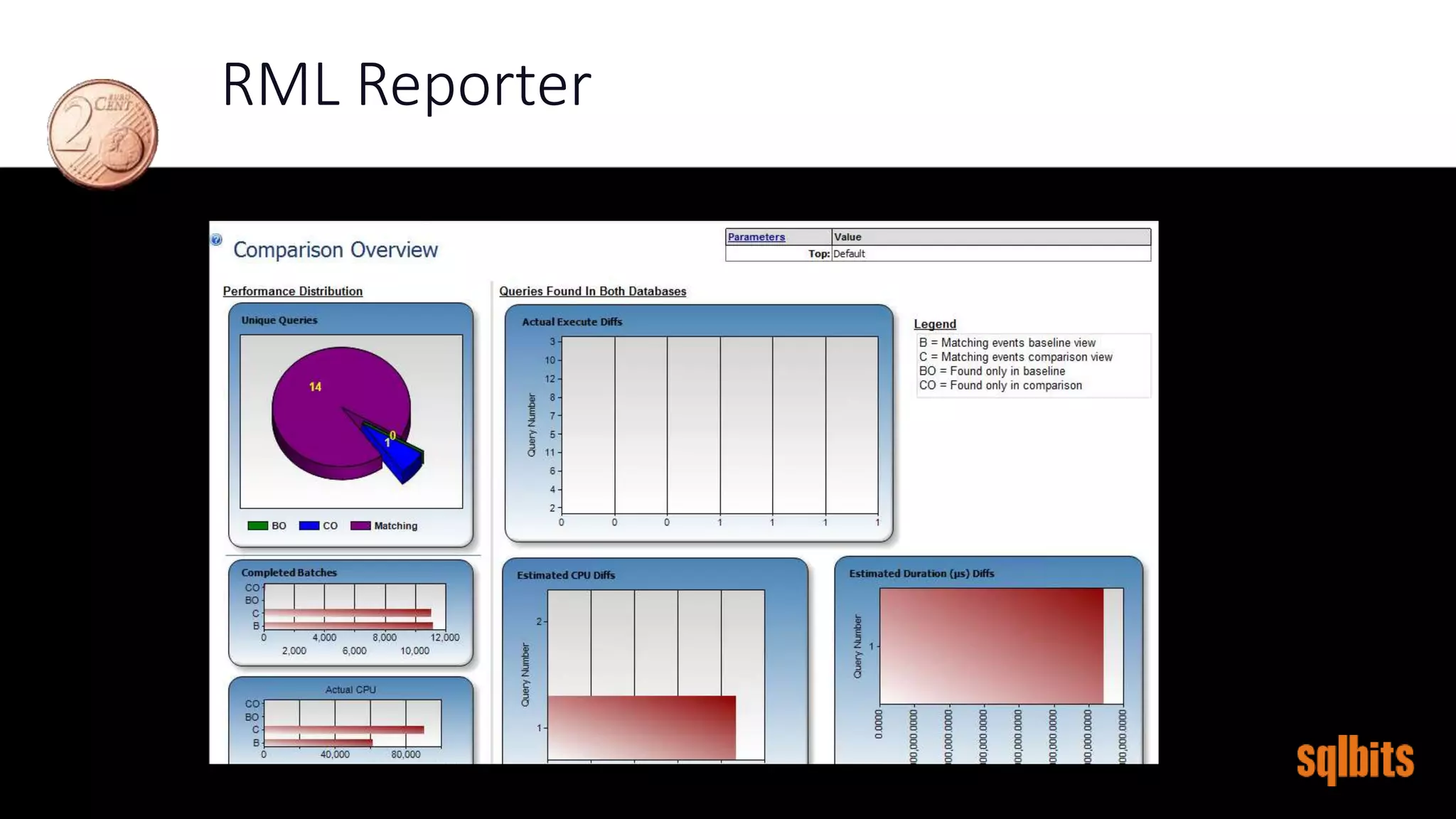

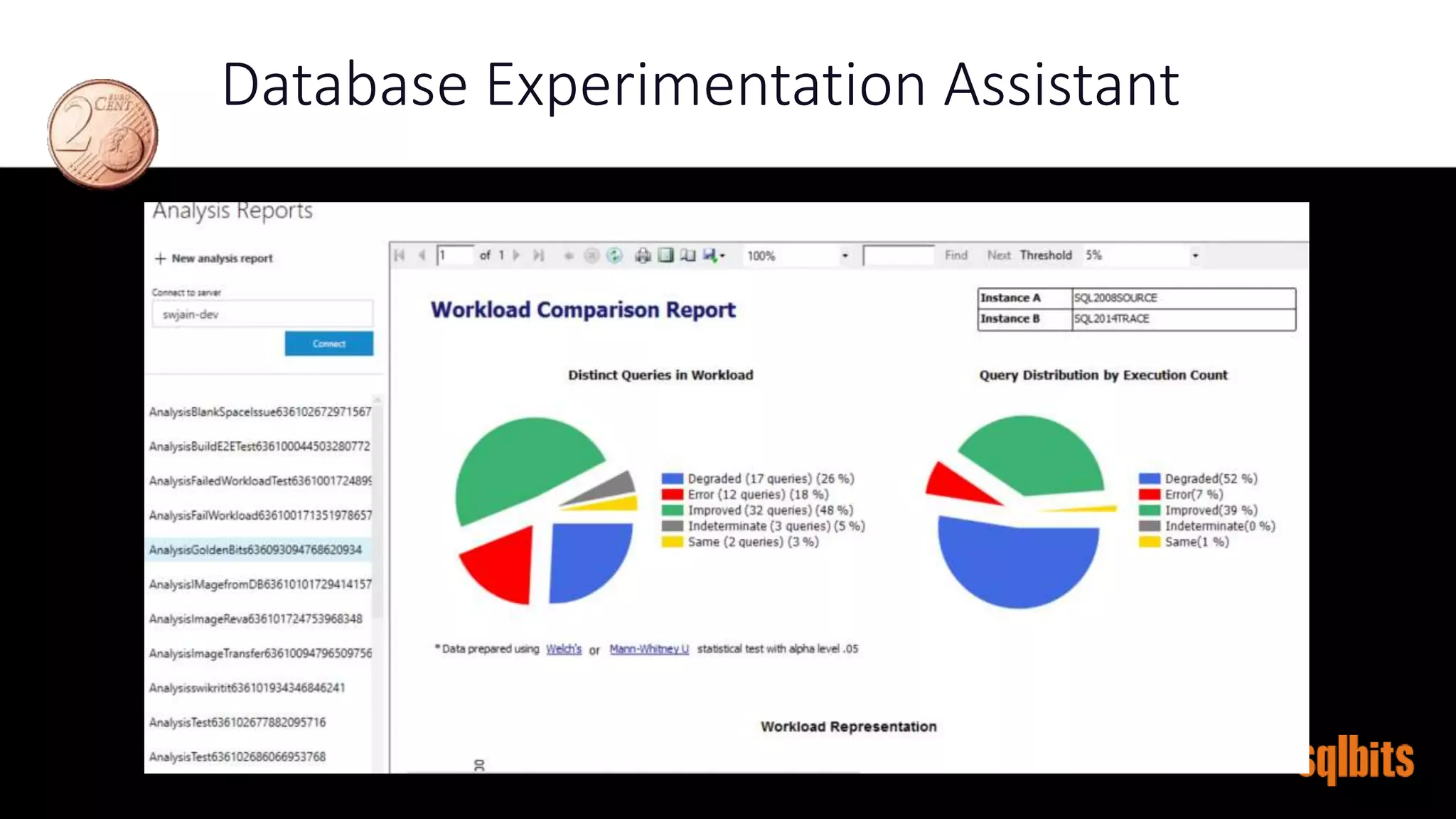

The document discusses benchmarking and baselining in SQL Server, covering the scenarios that call for them, the tools involved, and the process for capturing and replaying workloads. It highlights why benchmarks matter for evaluating performance across hardware and software upgrades, configuration changes, and database tuning. It mentions tools such as SQL Server Profiler, RML Utilities, and Extended Events, along with best practices for capturing and analyzing workload data.