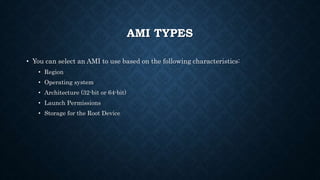

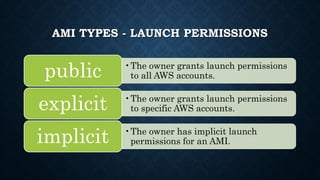

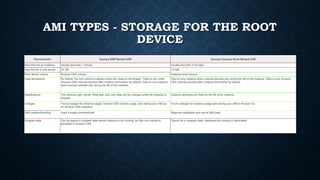

Amazon EC2 (Elastic Compute Cloud) provides a virtual computing environment in which users launch instances running a choice of operating systems. When launching an instance, users can specify an Availability Zone, a key pair, and one or more security groups. An Amazon Machine Image (AMI) contains the information required to launch an instance. AMIs can be shared with other AWS accounts, copied to other Regions, or deregistered when no longer needed. EC2 offers instance types optimized for different workloads, such as machine learning, graphics, storage, and high I/O. Other features include Elastic IP addresses, Auto Scaling, multiple locations (Regions and Availability Zones), and the Amazon Time Sync Service. Users pay only for the resources they actually consume.
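The launch options and AMI operations described above correspond to EC2 API calls. The following is a minimal sketch that assumes the AWS SDK for Python (boto3). Each helper takes an already-constructed EC2 client rather than creating one, so it can be exercised without AWS credentials. The helper names and the example values (AMI ID, key pair name, security group ID, account ID) are hypothetical placeholders.

```python
from typing import Any, Dict, List, Protocol


class EC2Client(Protocol):
    """Subset of a boto3 EC2 client used by the helpers below (assumed interface)."""

    def run_instances(self, **kwargs: Any) -> Dict[str, Any]: ...
    def copy_image(self, **kwargs: Any) -> Dict[str, Any]: ...
    def modify_image_attribute(self, **kwargs: Any) -> Dict[str, Any]: ...
    def deregister_image(self, **kwargs: Any) -> Dict[str, Any]: ...


def launch_instance(
    client: EC2Client,
    image_id: str,
    instance_type: str,
    availability_zone: str,
    key_name: str,
    security_group_ids: List[str],
) -> str:
    """Launch exactly one instance from an AMI and return its instance ID."""
    response = client.run_instances(
        ImageId=image_id,                     # AMI to boot from
        InstanceType=instance_type,           # e.g. "t3.micro"
        KeyName=key_name,                     # existing key pair used for login
        SecurityGroupIds=security_group_ids,  # firewall rules for the instance
        Placement={"AvailabilityZone": availability_zone},
        MinCount=1,
        MaxCount=1,
    )
    return response["Instances"][0]["InstanceId"]


def copy_ami_to_region(
    dest_client: EC2Client, source_image_id: str, source_region: str, name: str
) -> str:
    """Copy an AMI into the Region that dest_client is configured for; return the new AMI ID."""
    response = dest_client.copy_image(
        SourceImageId=source_image_id,
        SourceRegion=source_region,
        Name=name,
    )
    return response["ImageId"]


def share_ami(client: EC2Client, image_id: str, account_id: str) -> None:
    """Grant another AWS account permission to launch instances from this AMI."""
    client.modify_image_attribute(
        ImageId=image_id,
        LaunchPermission={"Add": [{"UserId": account_id}]},
    )


def deregister_ami(client: EC2Client, image_id: str) -> None:
    """Deregister an AMI so it can no longer be used to launch new instances."""
    client.deregister_image(ImageId=image_id)
```

To run these against AWS, pass a client such as `boto3.client("ec2", region_name="us-east-1")`. Note that `copy_image` is called on a client for the *destination* Region, with `SourceRegion` naming the Region the AMI comes from.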