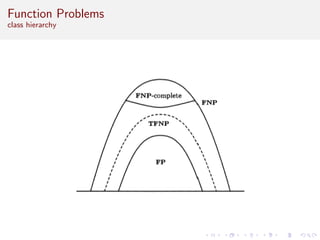

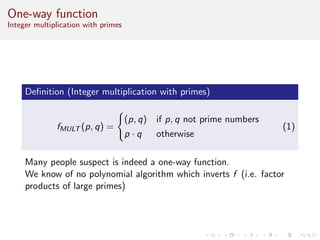

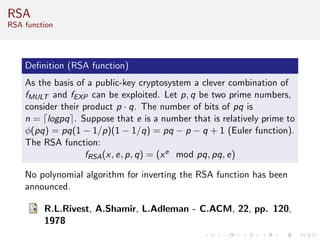

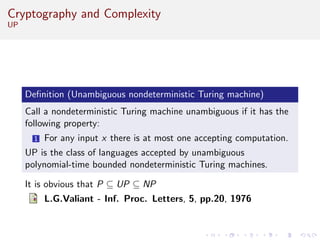

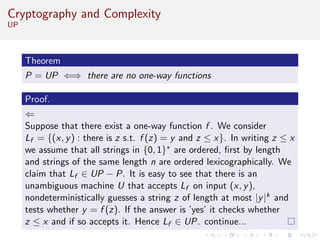

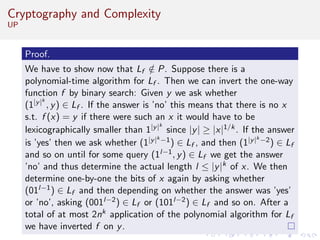

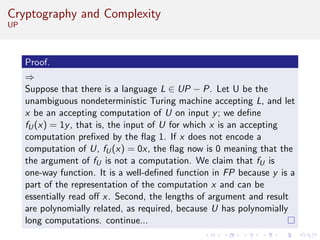

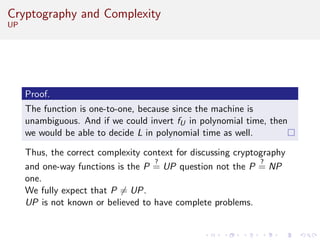

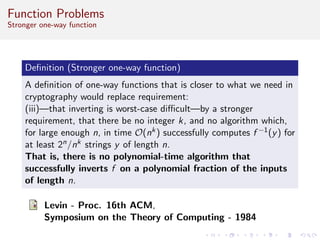

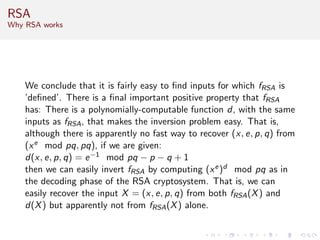

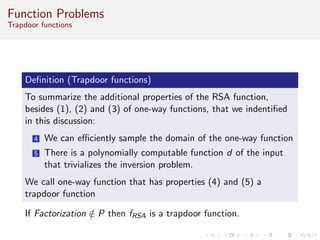

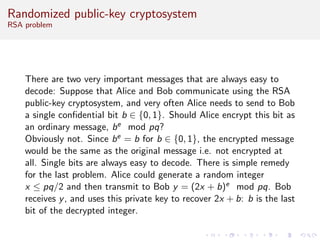

This document discusses algorithms and complexity in cryptography. It begins by defining function problems in computational complexity theory: computational problems whose expected output is more complex than a simple yes or no answer. It then discusses one-way functions, which are easy to compute (in polynomial time) but believed to be hard to invert. The document gives candidate one-way functions based on integer multiplication (inverting it means factoring), modular exponentiation (inverting it means computing discrete logarithms), and the RSA cryptosystem. It argues that if one-way functions exist, then the complexity classes P and UP are distinct, and that cryptography relies on the assumption that stronger versions of one-way functions exist.
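
The asymmetry between computing and inverting the three candidate functions can be sketched in a few lines of Python. This is an illustrative sketch only: the function names are hypothetical, the numbers are toy-sized, and the brute-force inverters are shown just to make the cost gap visible. They are not the best known attacks, and nothing here is a secure implementation.

```python
def multiply(p, q):
    """Forward direction of the multiplication candidate: fast."""
    return p * q


def factor_by_trial_division(n):
    """Naive inversion of multiply: time grows with sqrt(n),
    which is exponential in the bit length of n."""
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 1
    return 1, n  # n is prime (or 1)


def mod_exp(g, x, p):
    """Forward direction of the discrete-log candidate: g^x mod p."""
    return pow(g, x, p)


def discrete_log_brute_force(g, y, p):
    """Naive inversion of mod_exp: try every exponent in turn."""
    value = 1
    for x in range(p - 1):
        if value == y:
            return x
        value = value * g % p
    return None  # y is not a power of g modulo p


def rsa_encrypt(m, e, n):
    """Forward direction of the RSA candidate: m^e mod n."""
    return pow(m, e, n)


if __name__ == "__main__":
    # Toy parameters, assumed for illustration only.
    n = multiply(101, 103)
    print(n, factor_by_trial_division(n))

    y = mod_exp(5, 6, 23)
    print(y, discrete_log_brute_force(5, y, 23))

    # Textbook RSA toy key: n = 61 * 53, e = 17, d = 2753.
    c = rsa_encrypt(65, 17, 3233)
    print(c, pow(c, 2753, 3233))
```

In each pair the forward function runs in time polynomial in the number of digits of its input, while the naive inverse shown here takes time exponential in that length. With the private exponent `d` (the trapdoor), RSA decryption is again a single fast modular exponentiation.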