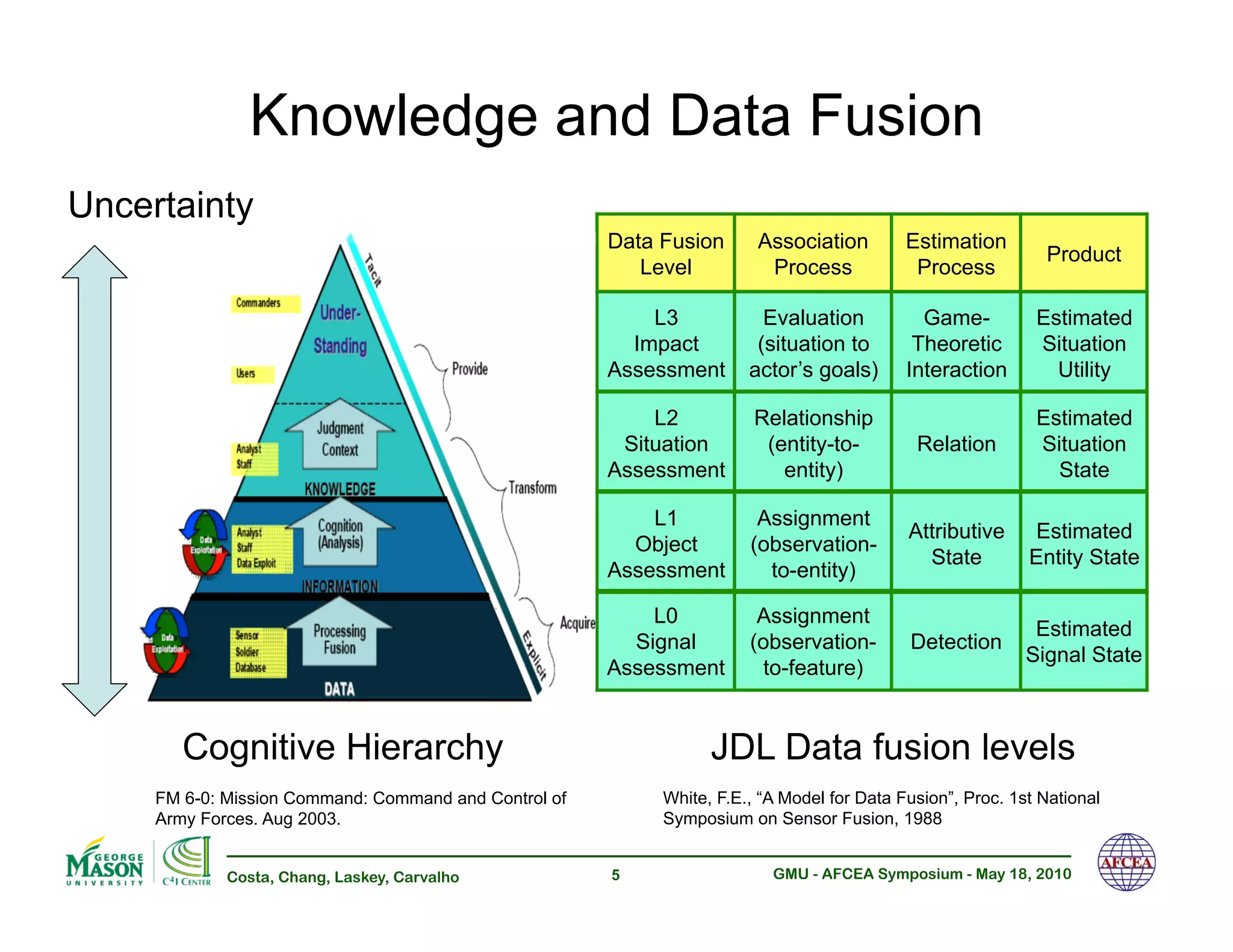

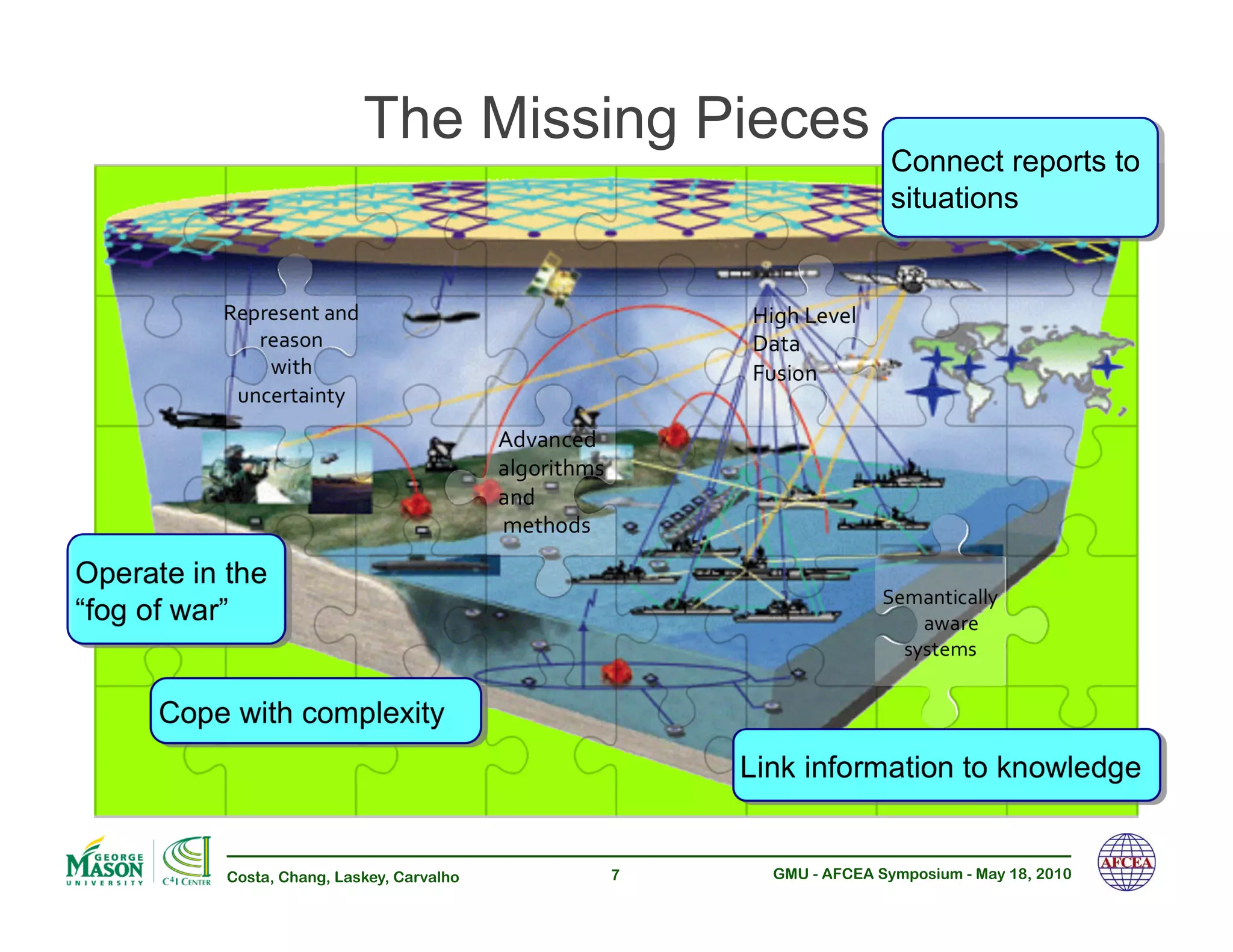

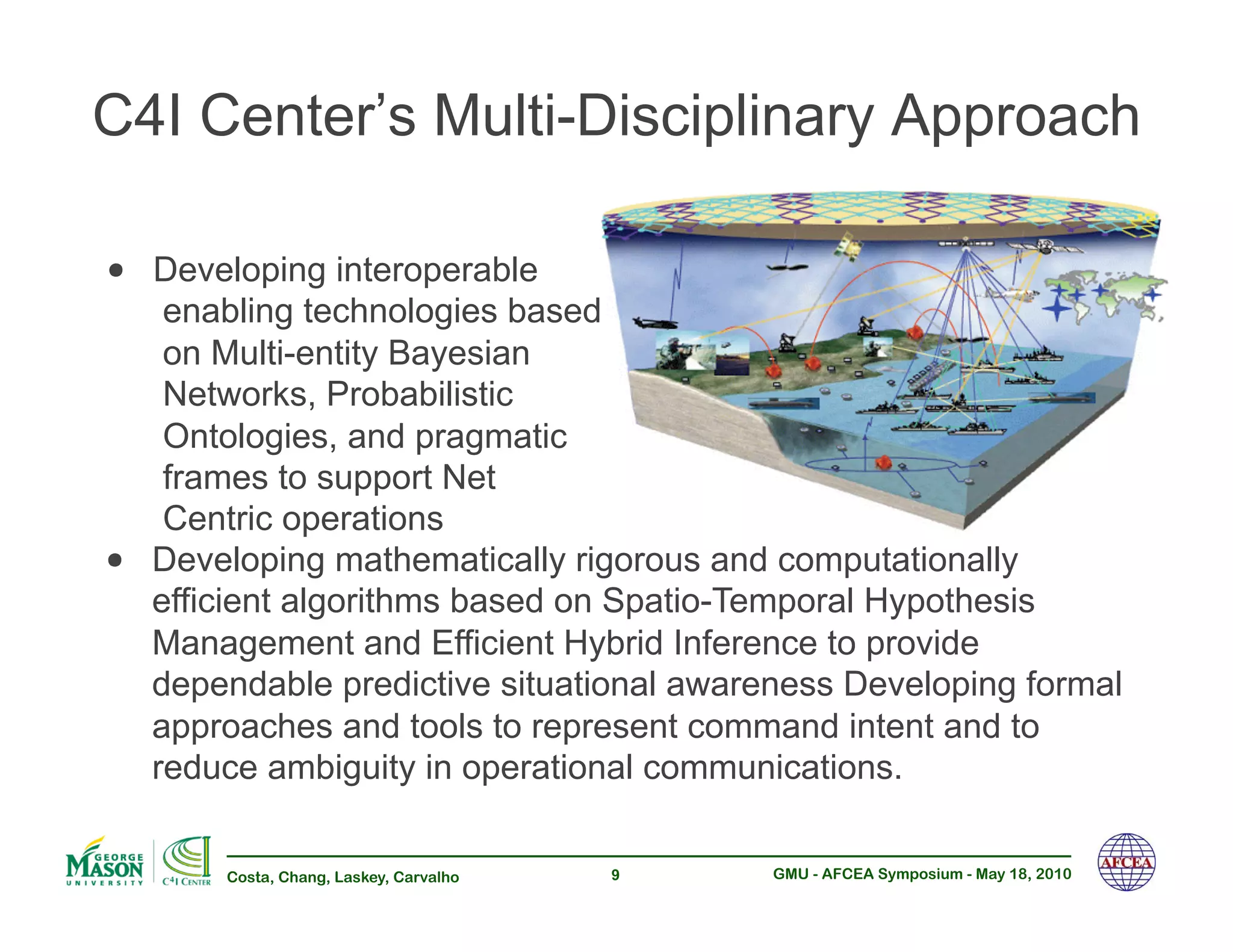

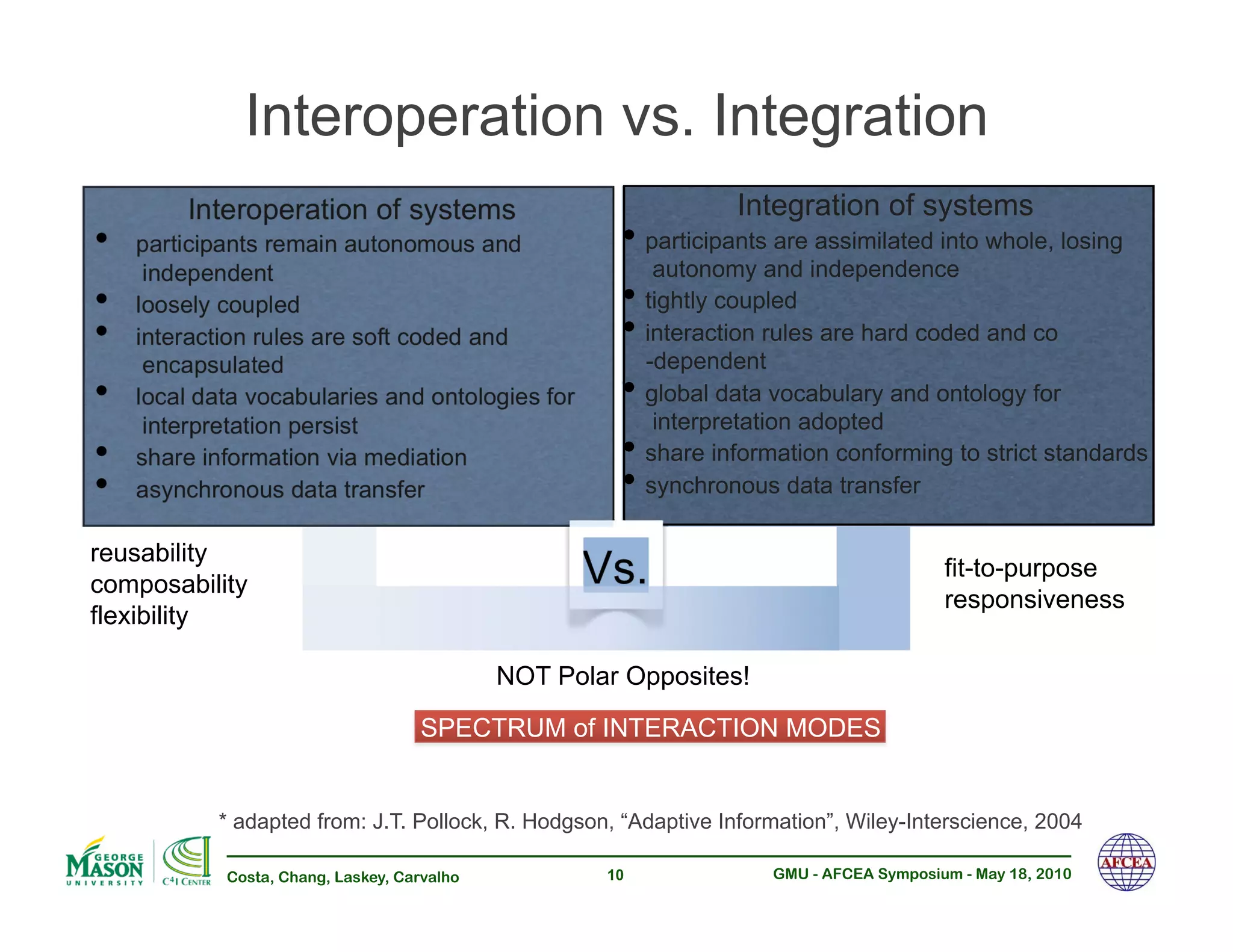

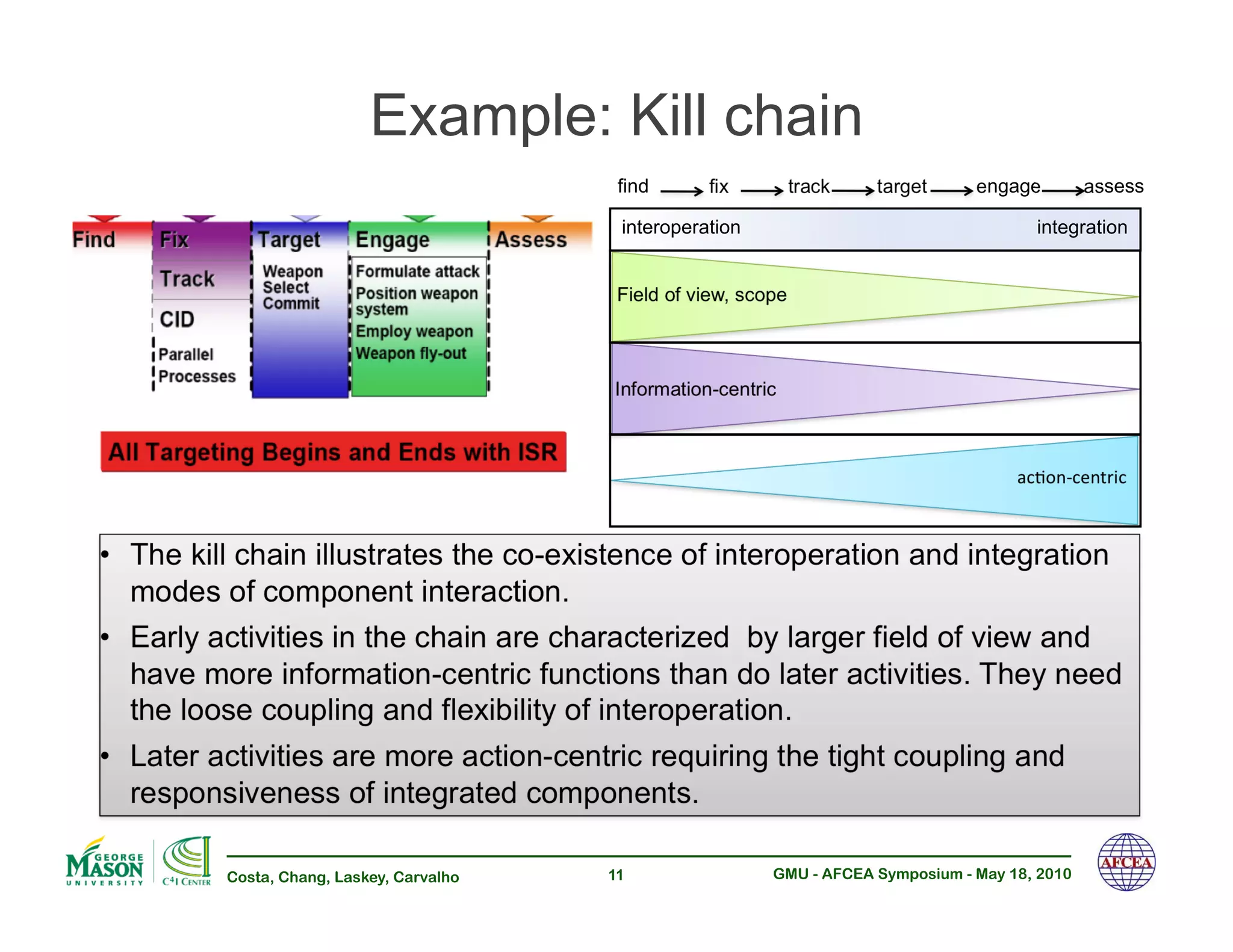

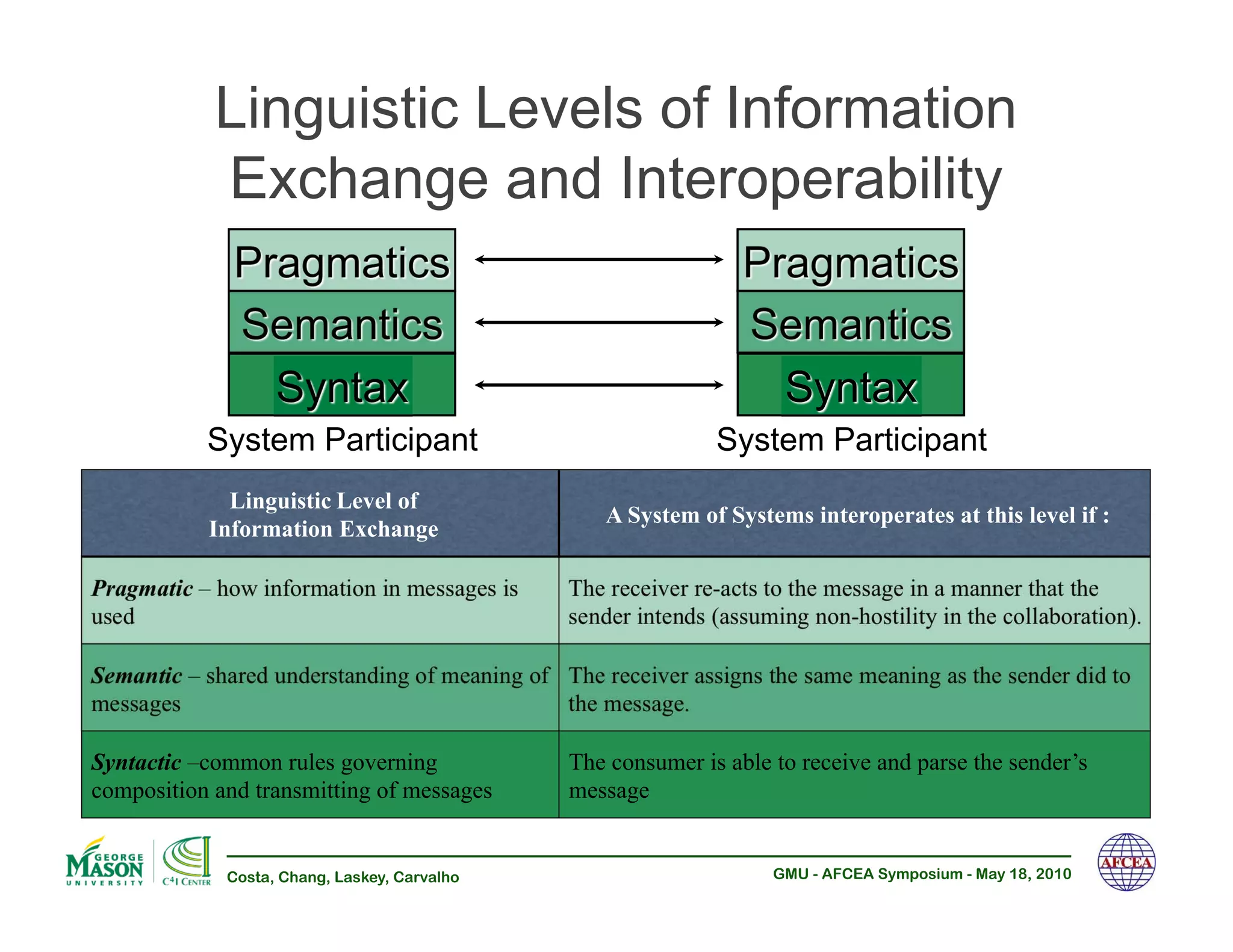

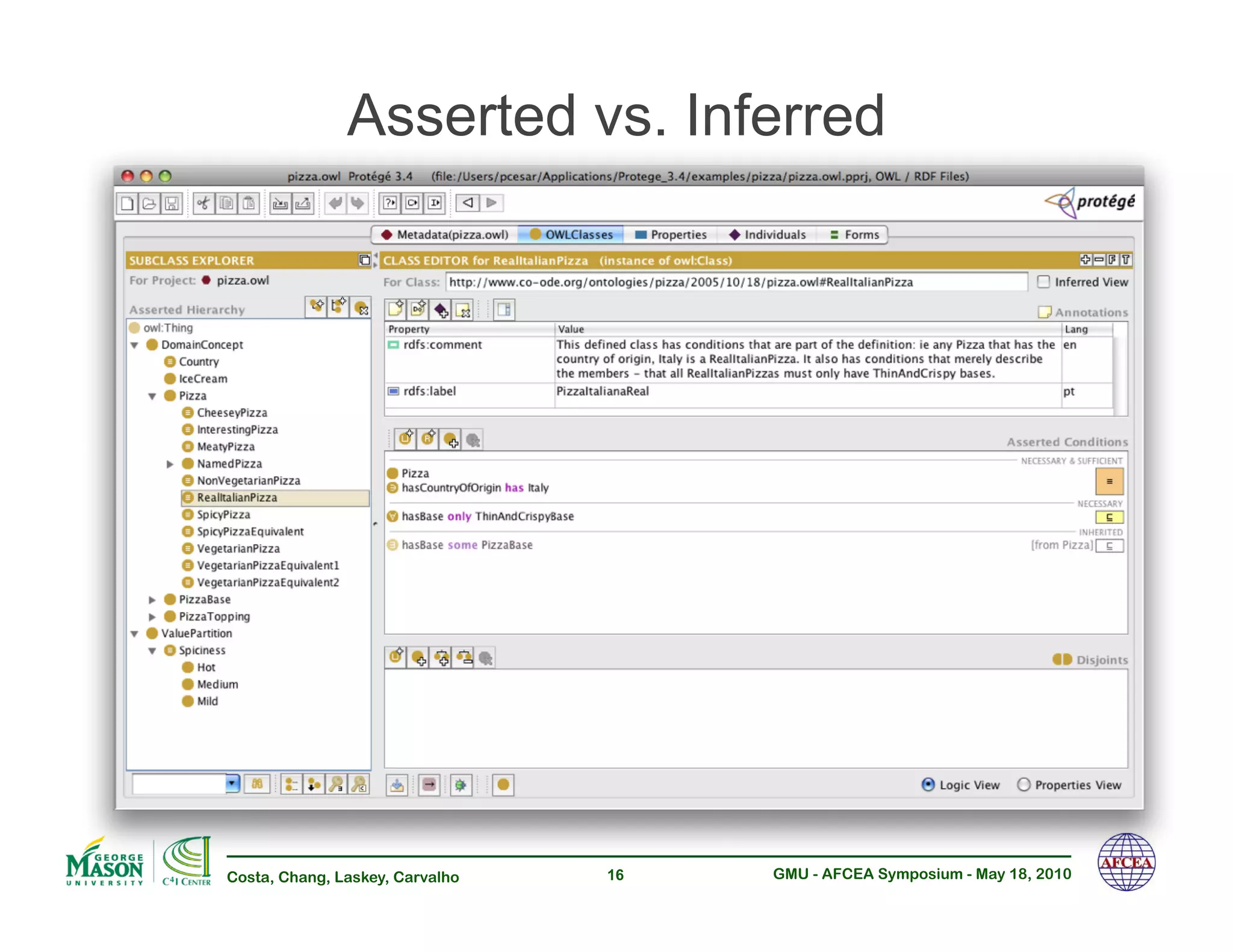

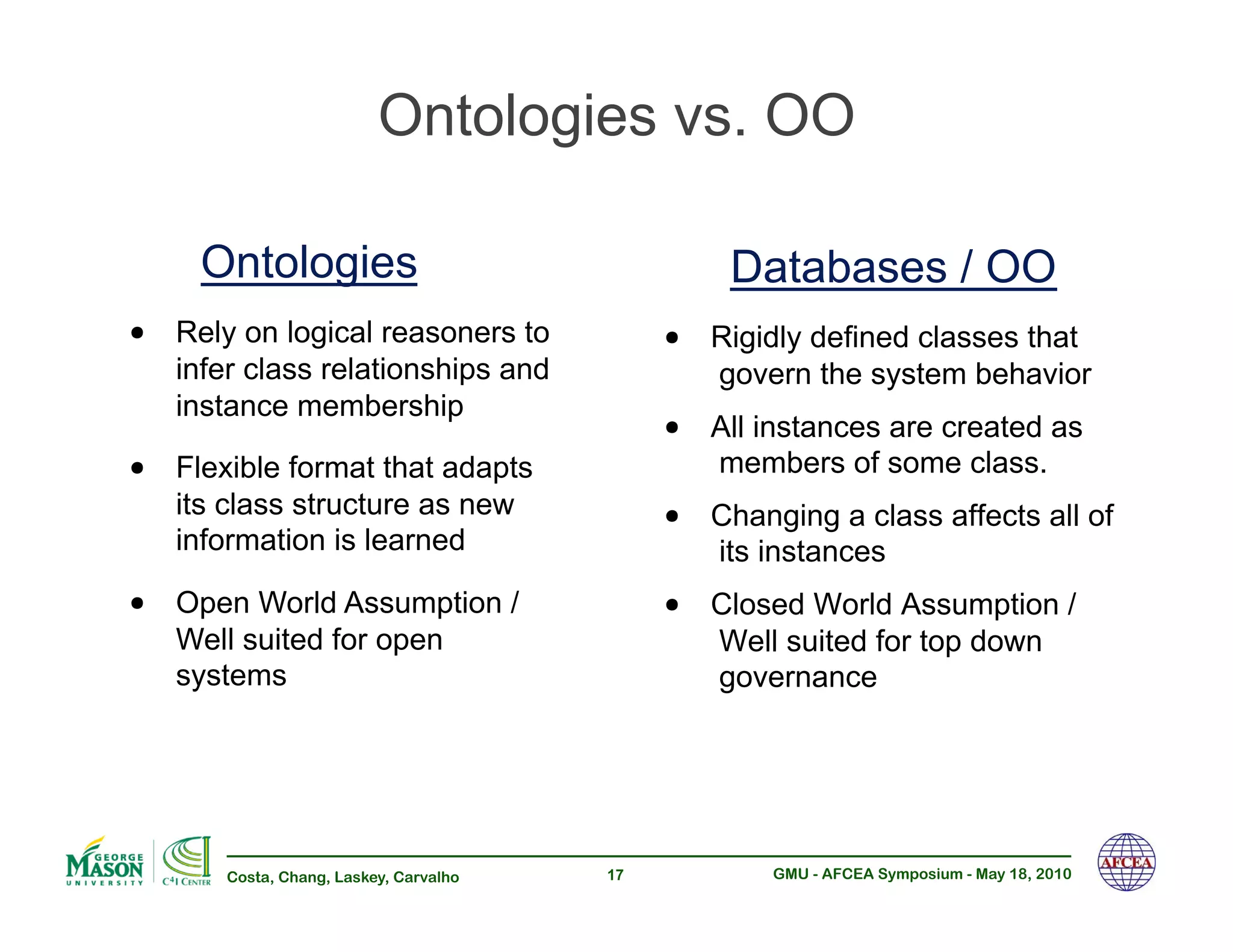

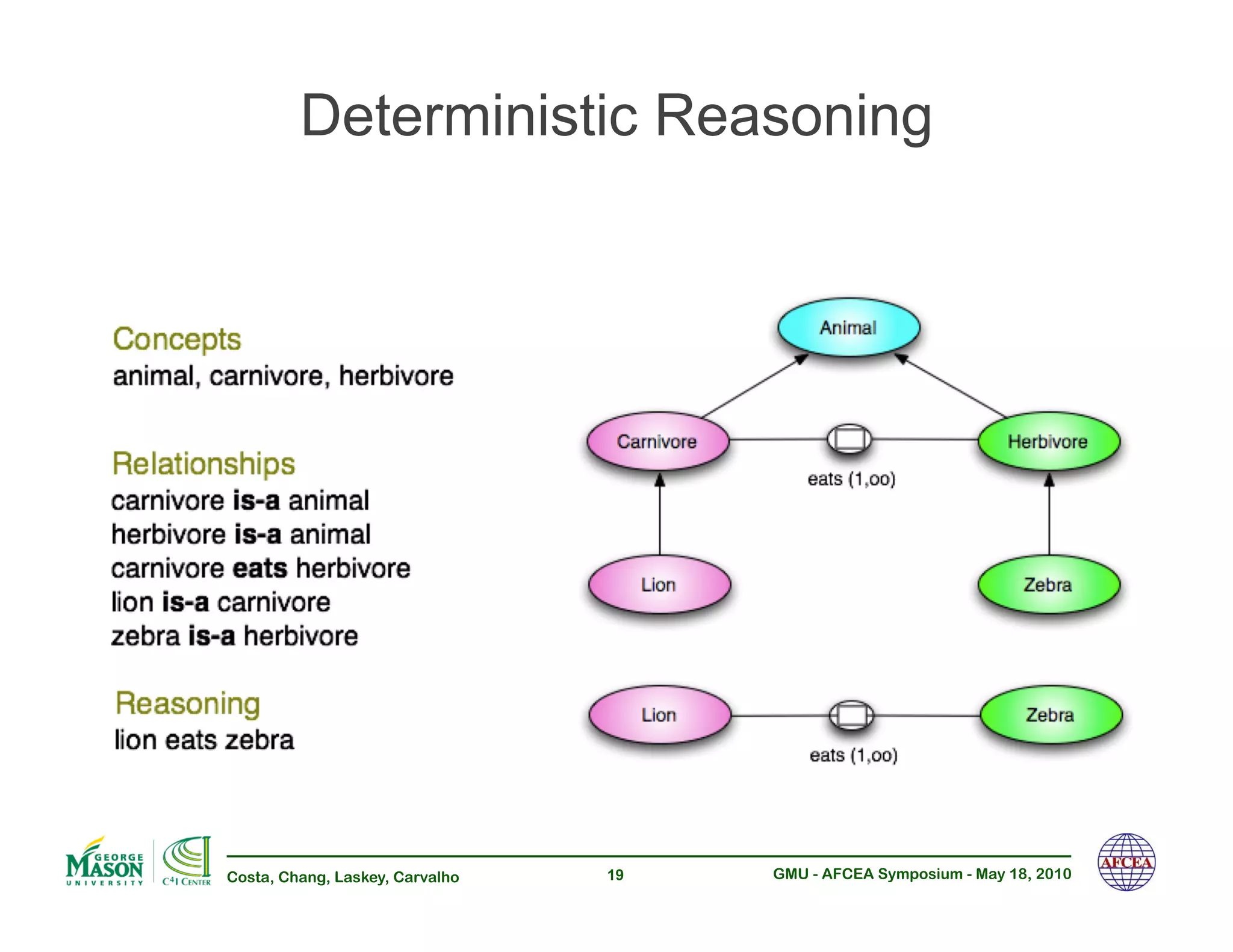

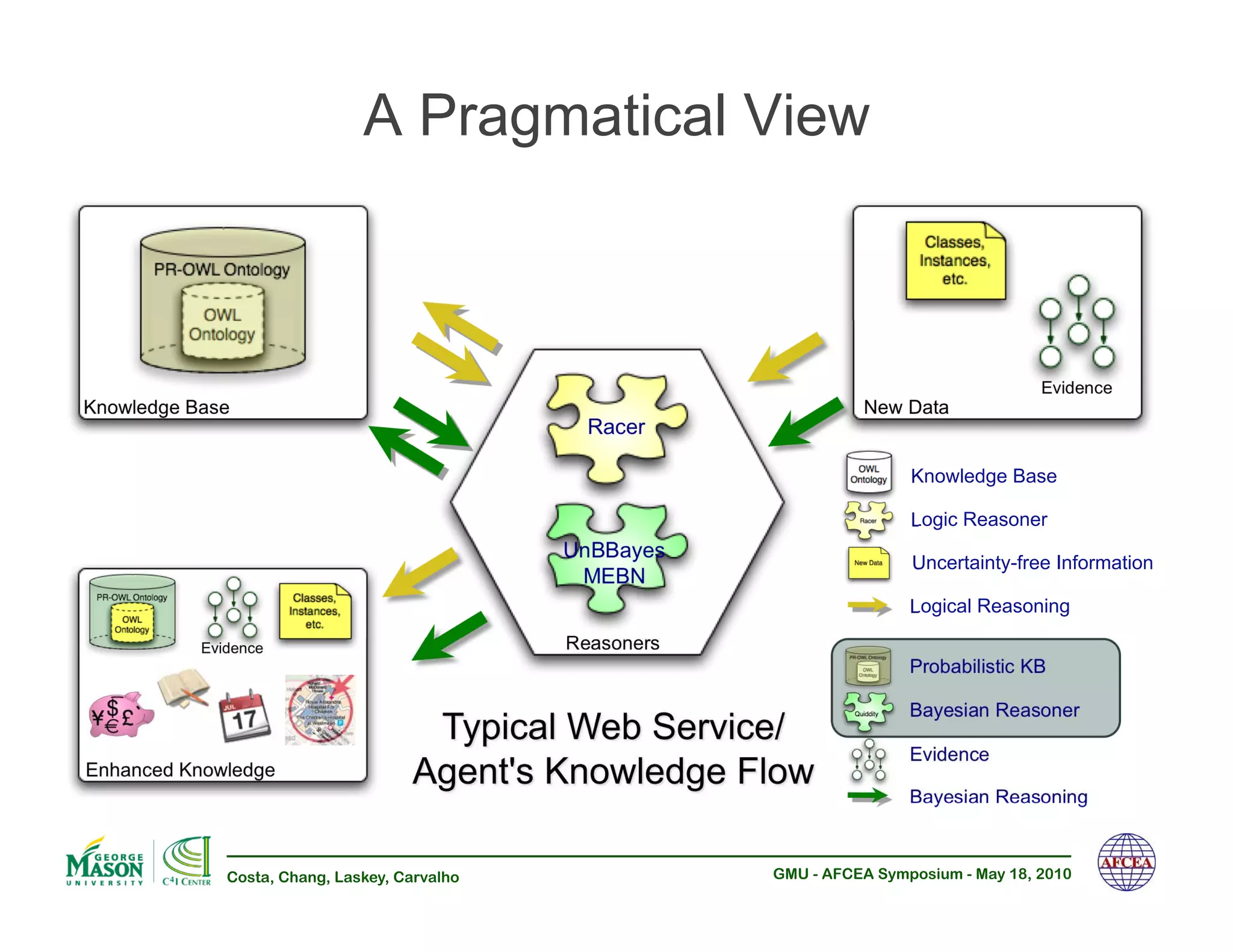

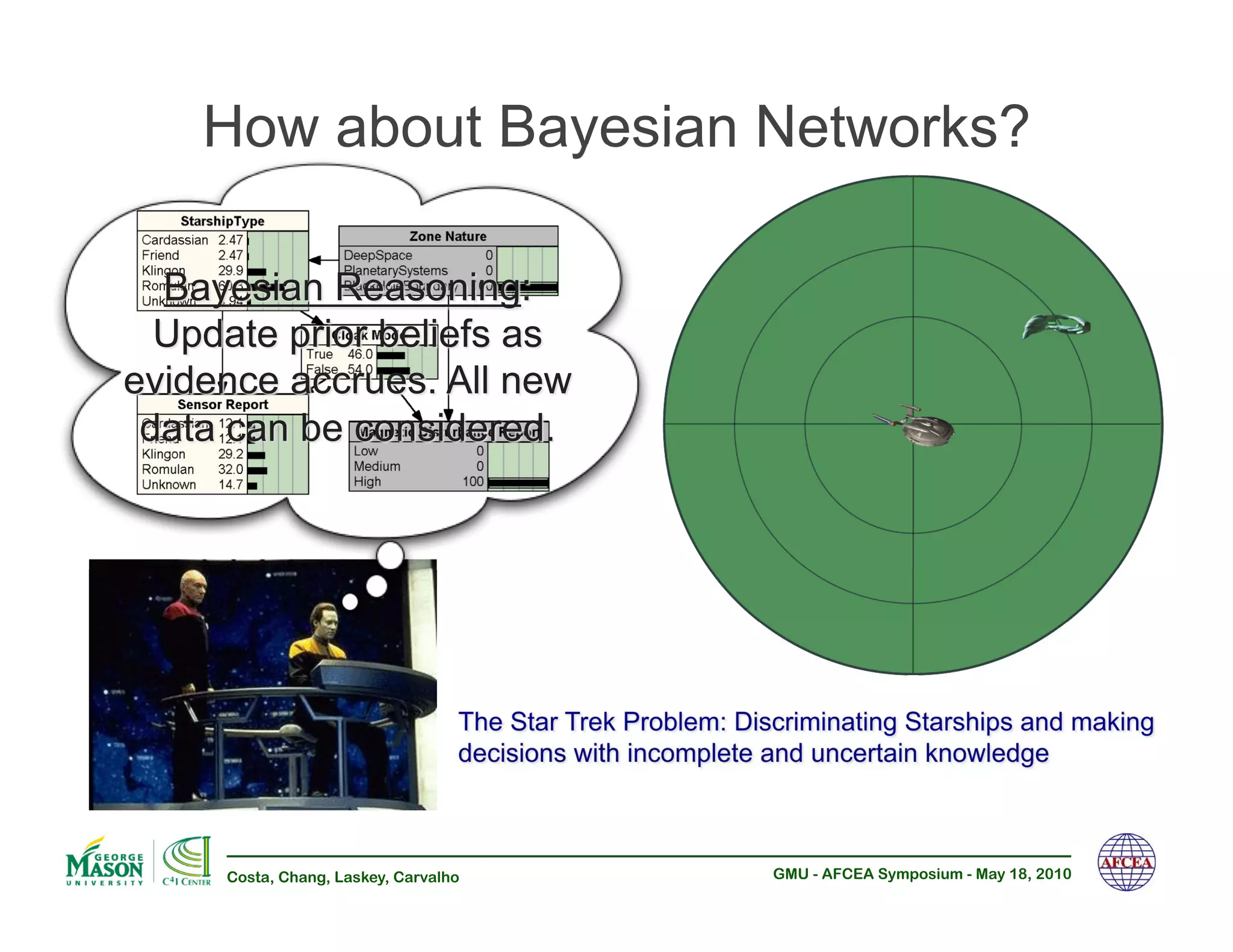

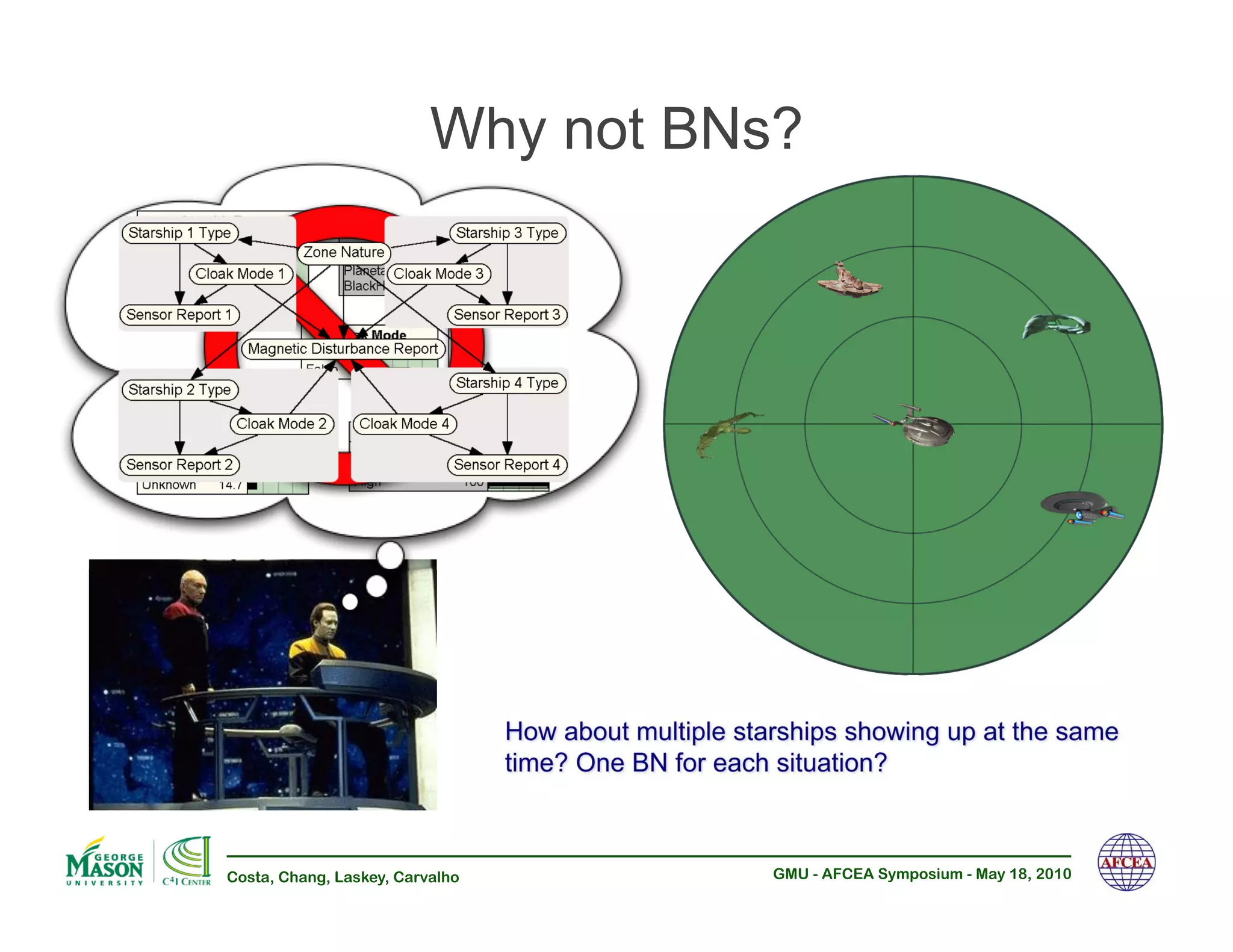

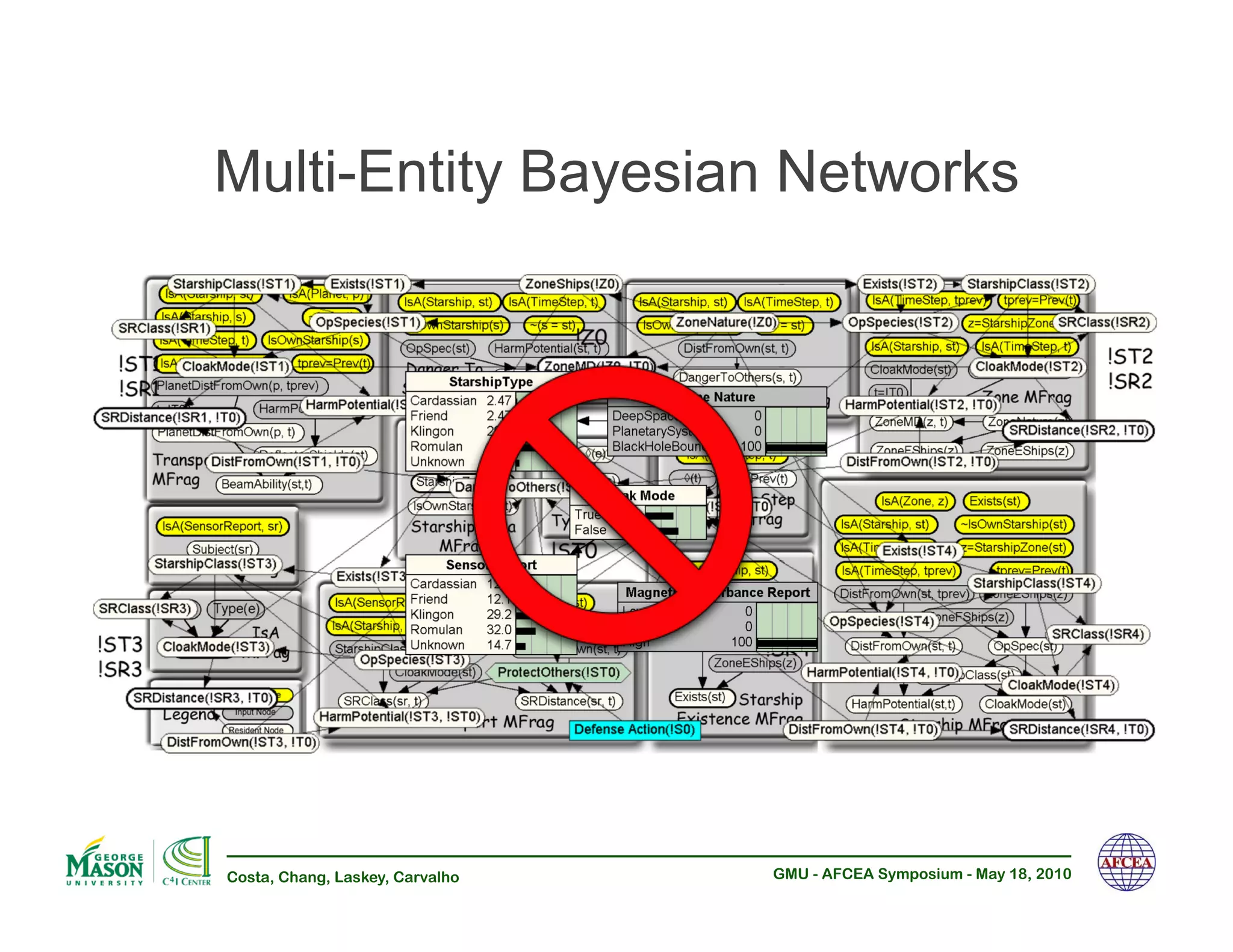

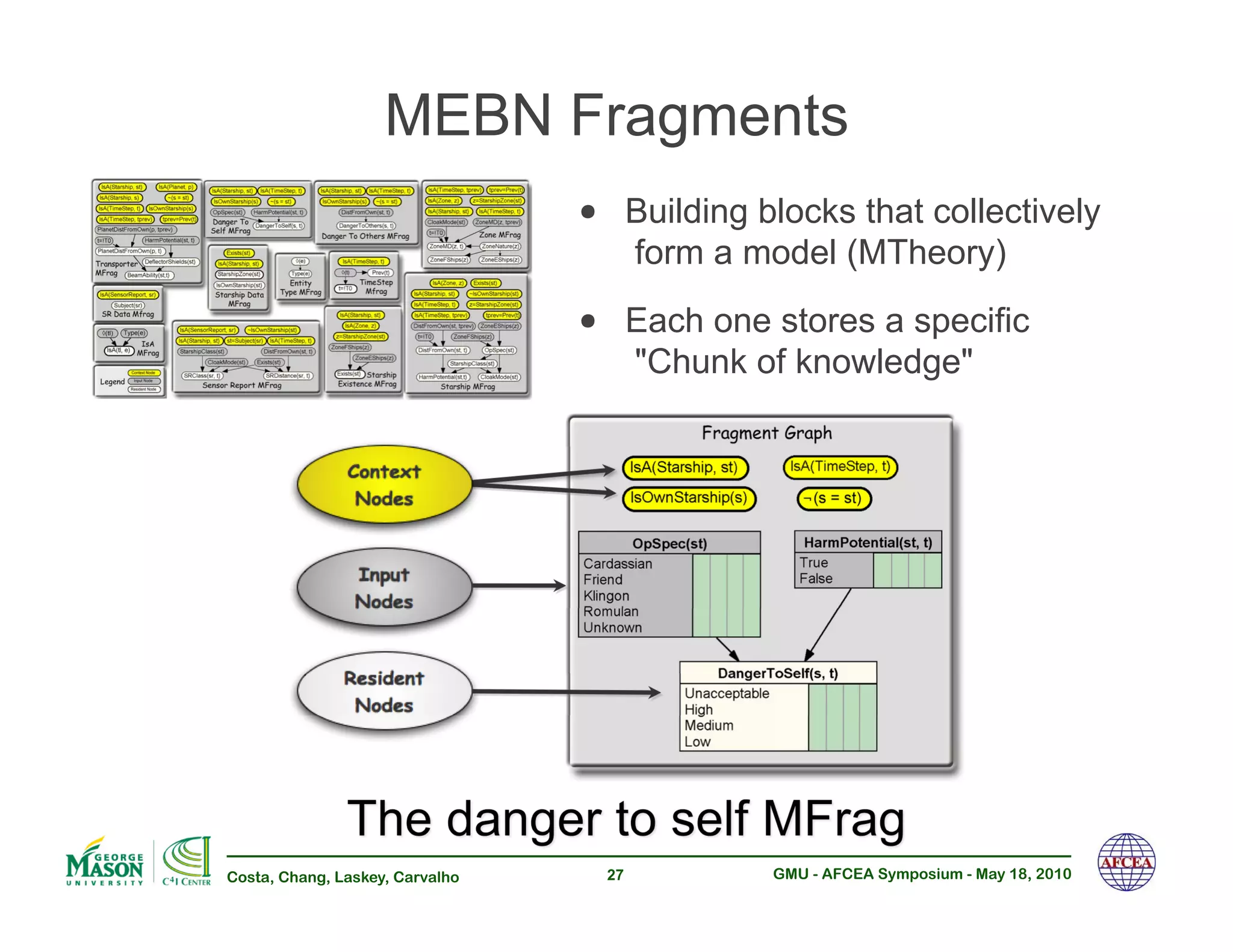

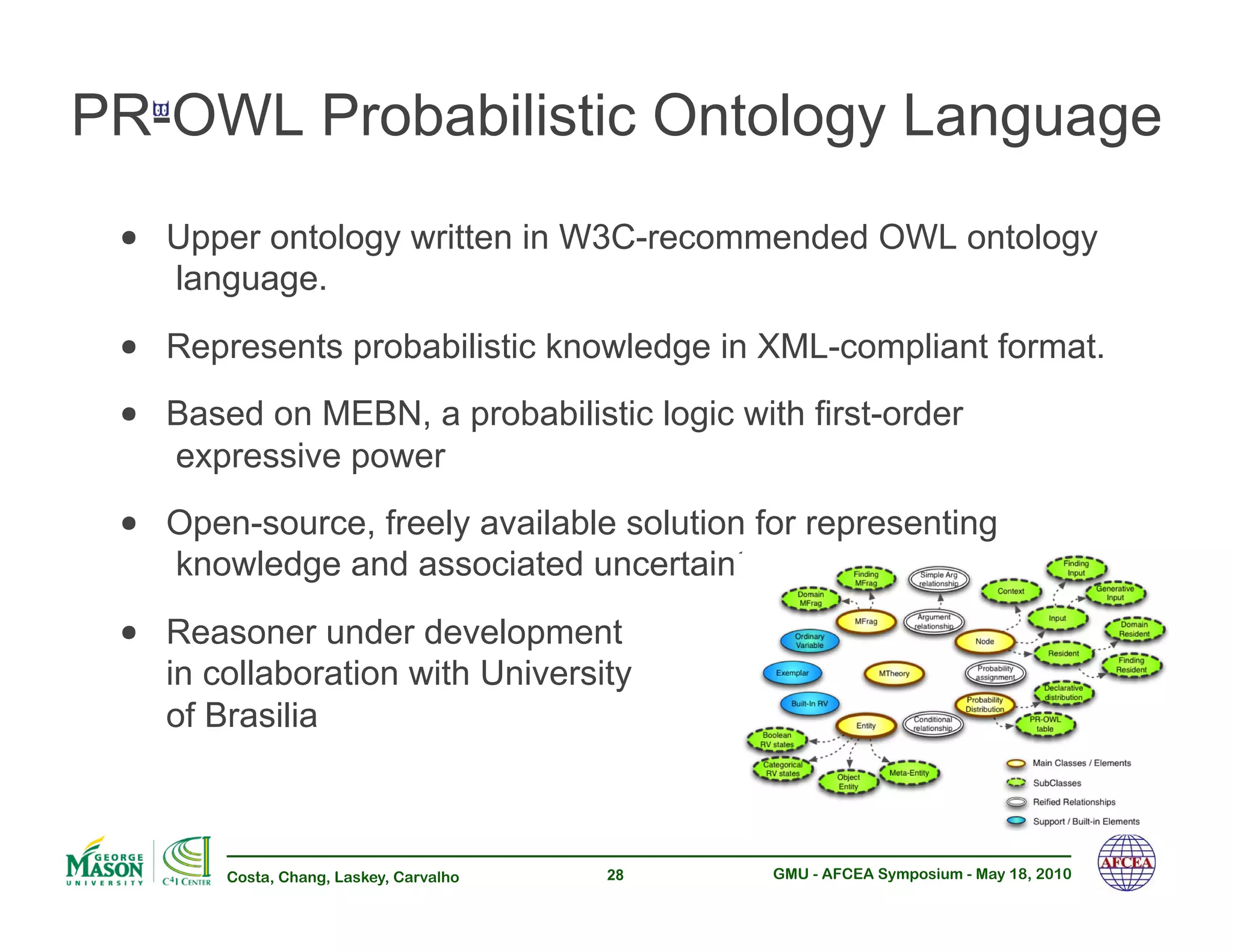

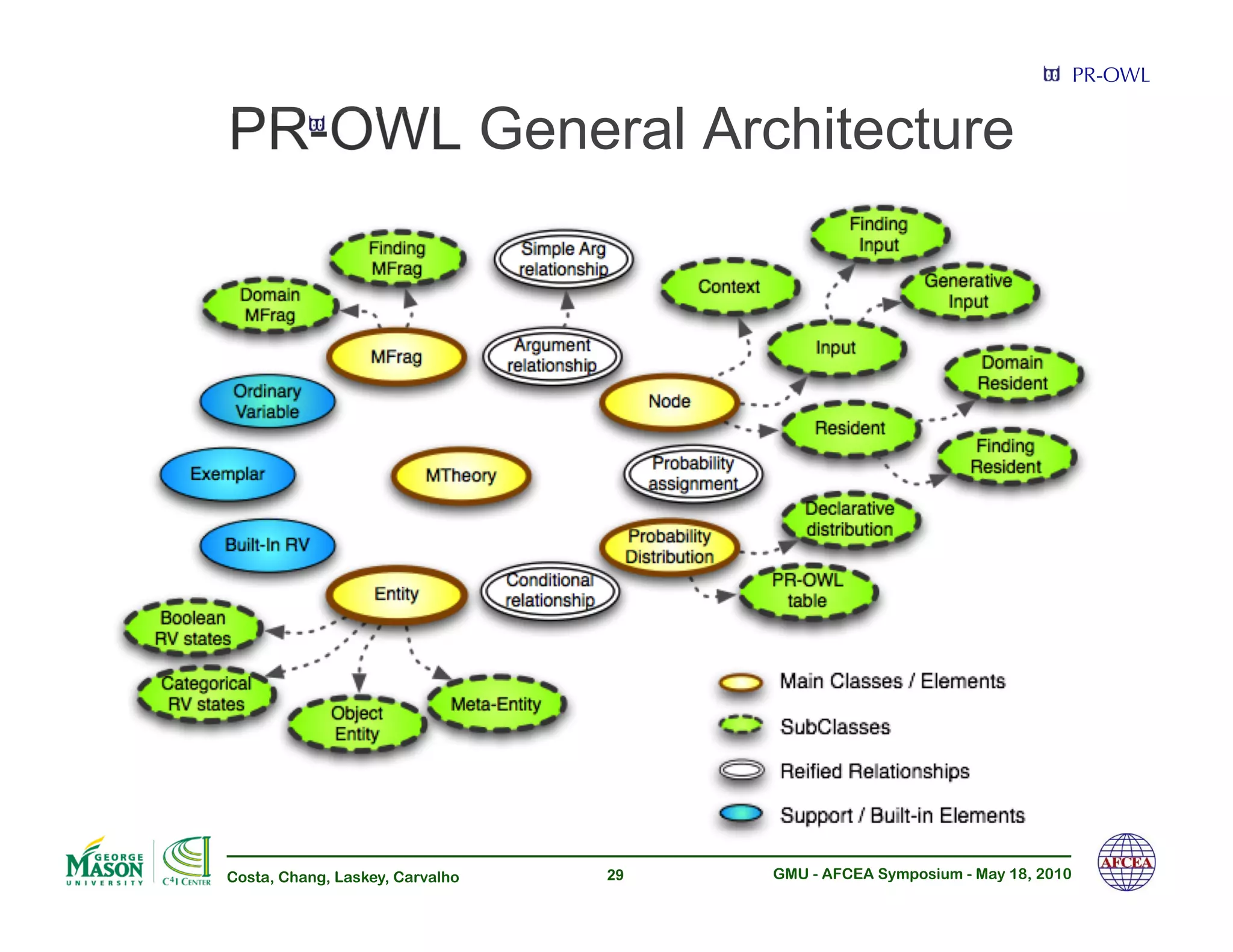

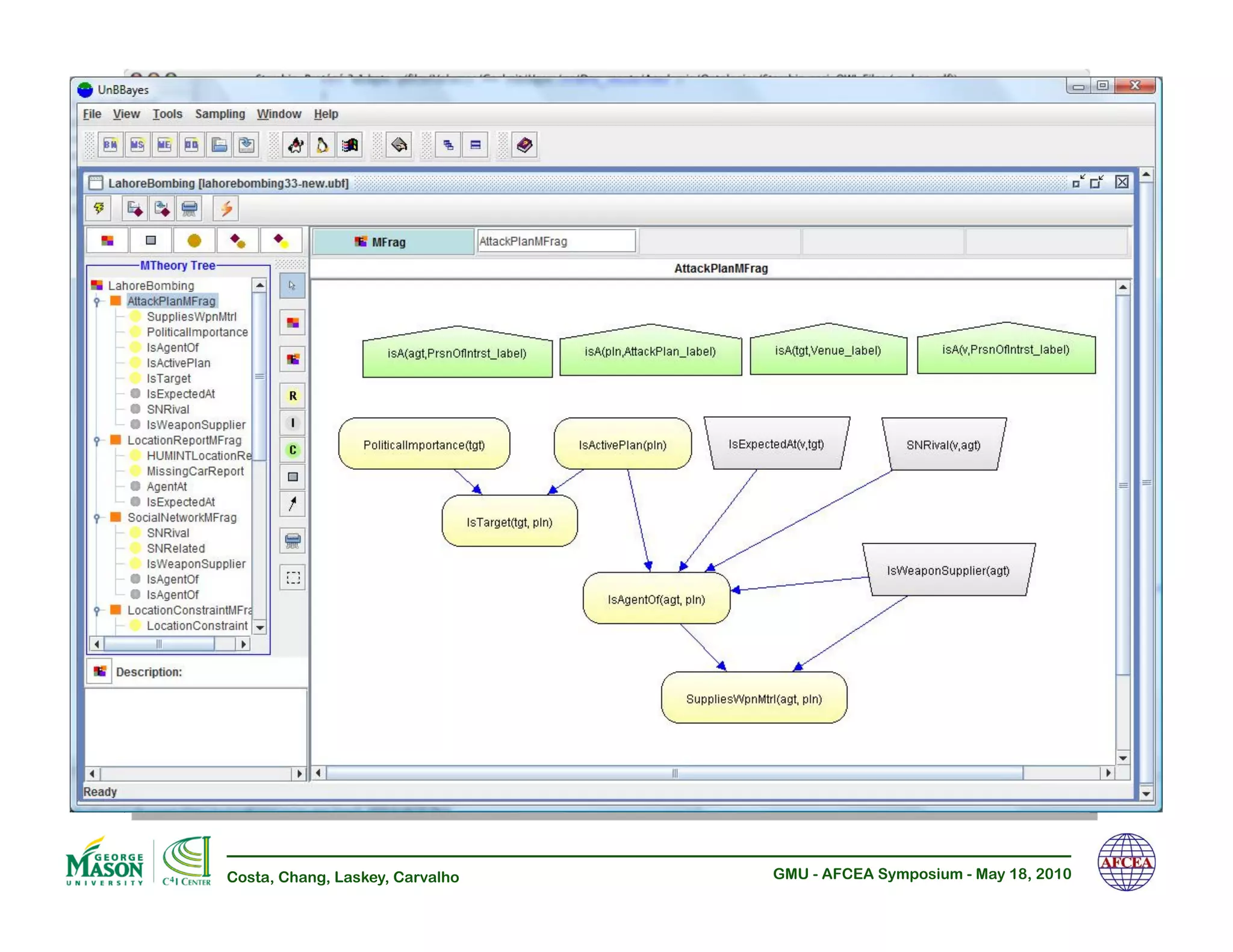

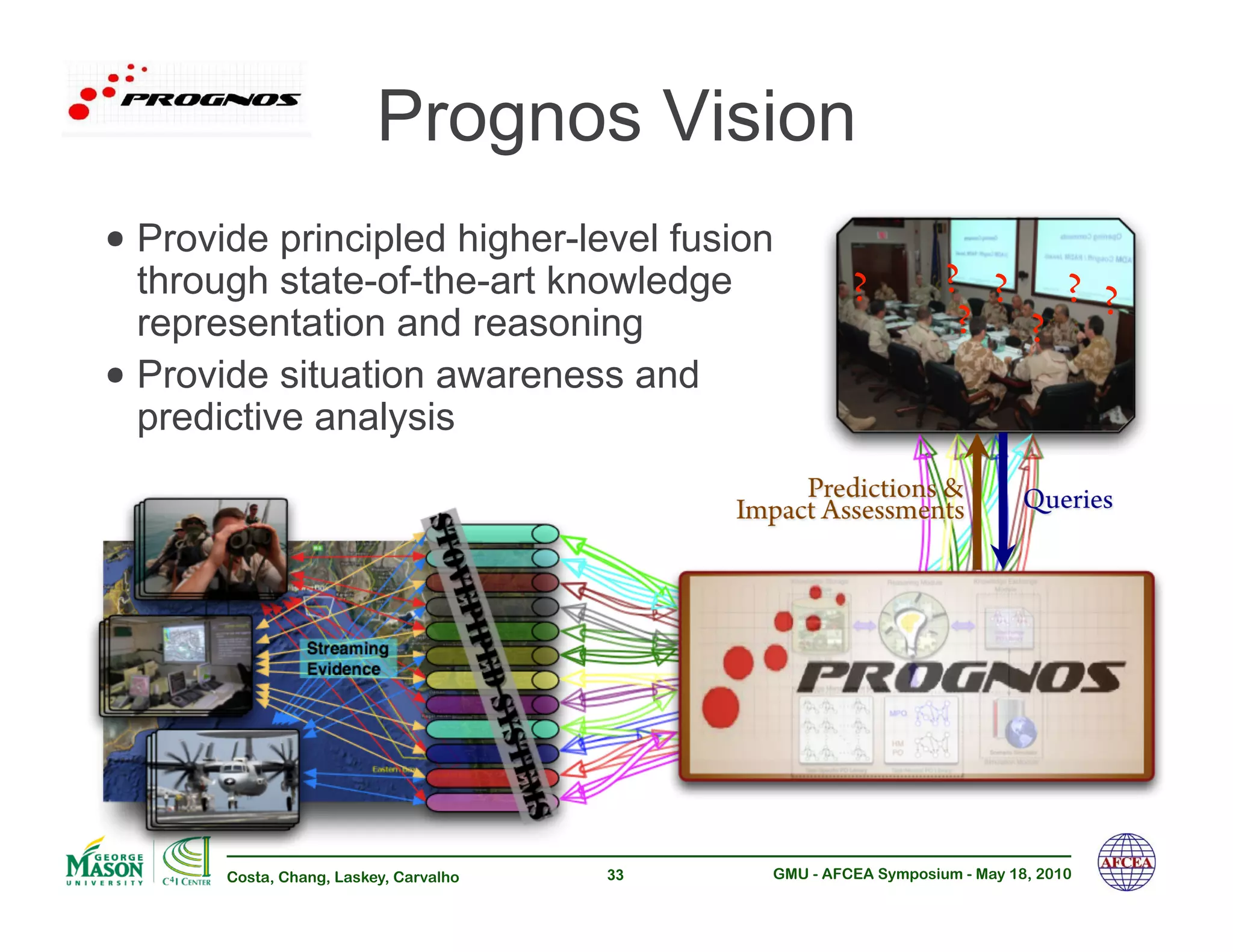

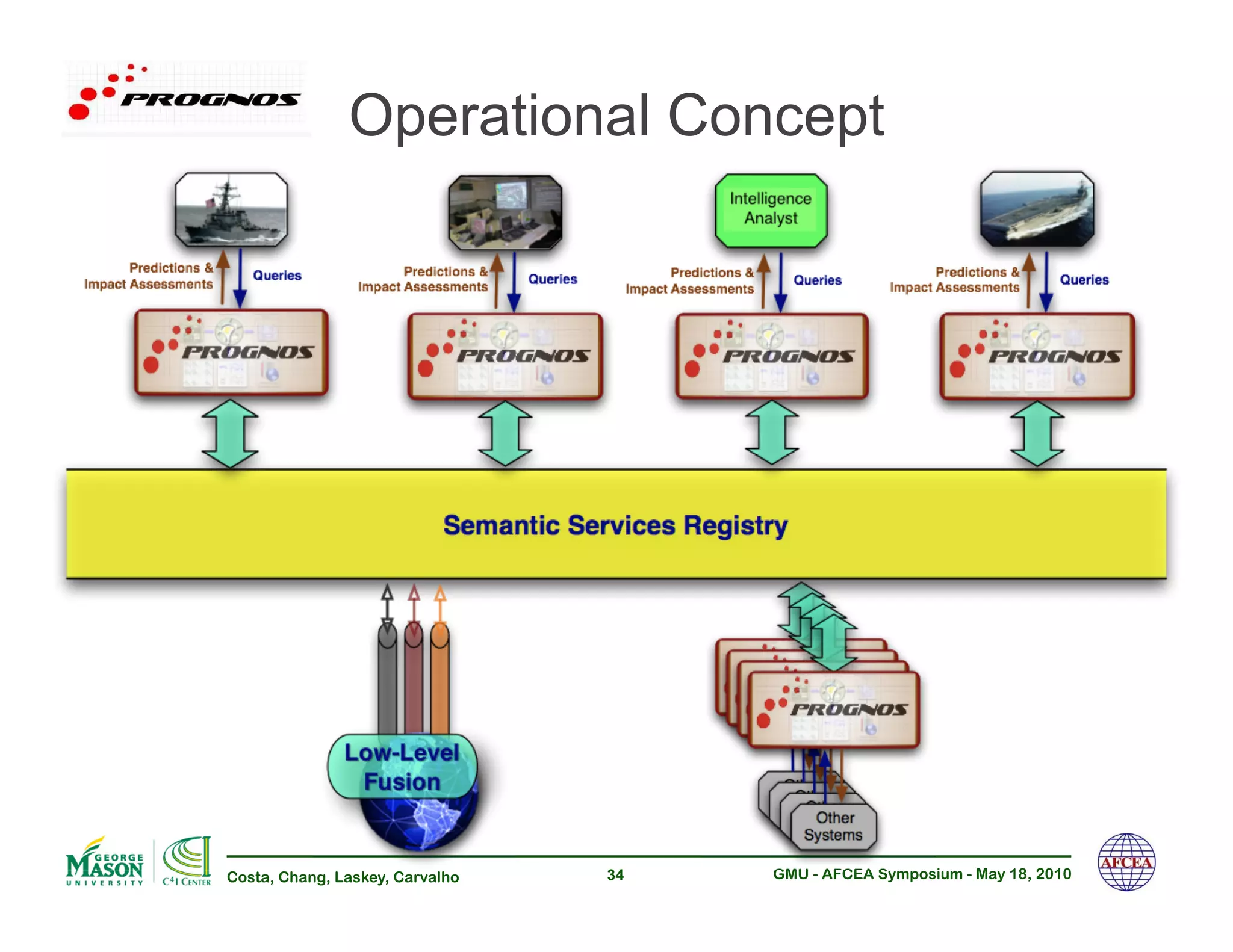

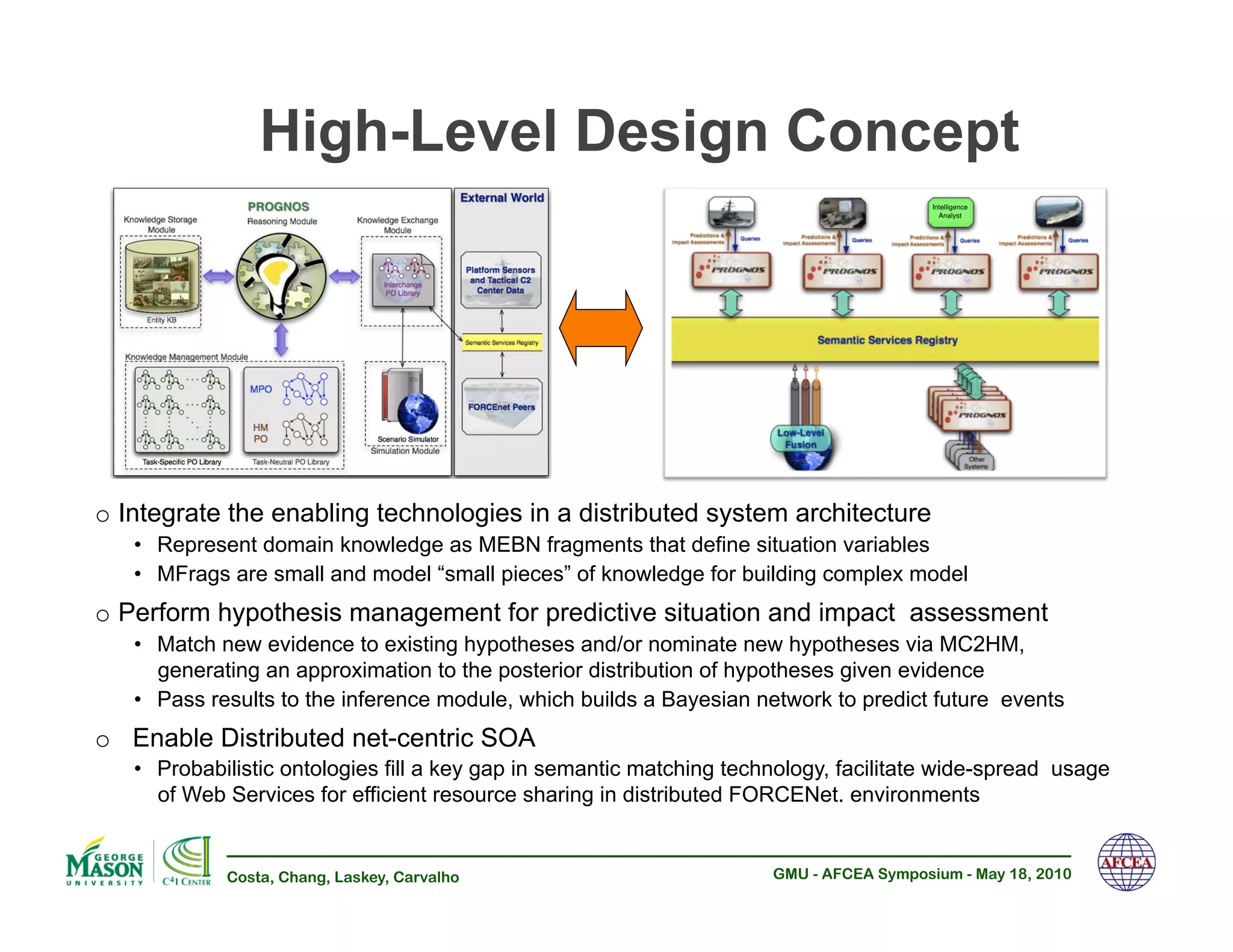

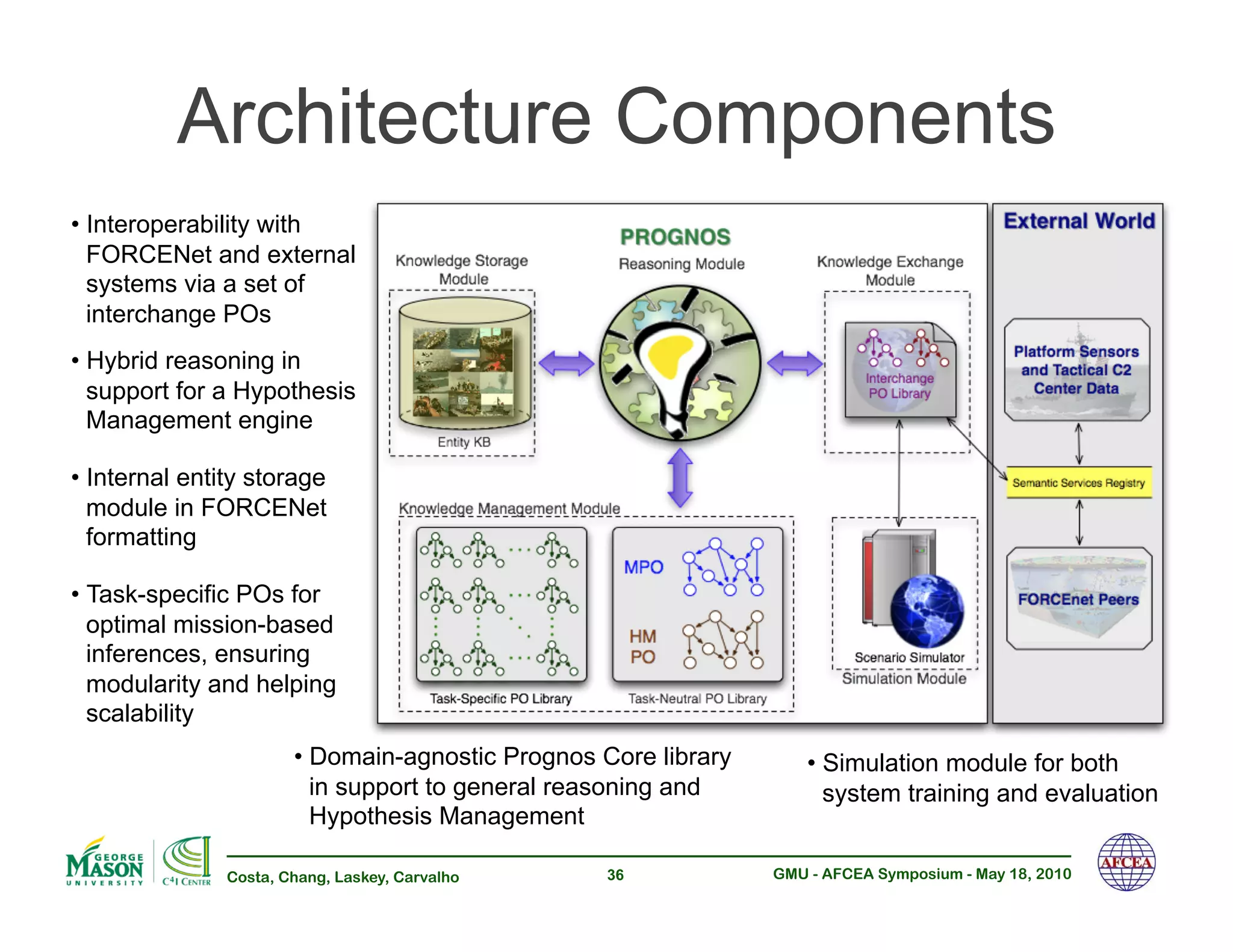

The document discusses how limitations in data integration, uncertainty handling, and information processing hinder effective net-centric operations in military contexts. It proposes developing probabilistic ontologies and multi-entity Bayesian networks to improve situational awareness and decision-making despite the complexity and uncertainty of battlefield data. The authors advocate rigorous mathematical foundations and interoperable methodologies to improve predictive situational assessment and support warfighter operations.