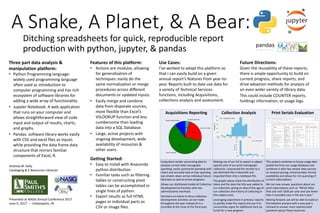

A Snake, a Planet, and a Bear: Ditching Spreadsheets for Quick, Reproducible Report Production with Python, Jupyter, and pandas

Presenter: Andrew Kelly, Cataloging & E-Resources Librarian, Paul Smith's College. This poster has two accompanying handouts: https://www.slideshare.net/NASIG/a-snake-a-planet-and-a-bear-ditching-spreadsheets-handout1 and https://www.slideshare.net/NASIG/a-snake-a-planet-and-a-bear-ditching-spreadsheets-handout2slides.

Recommended

Capturing and Analyzing Publication, Citation and Usage Data for Contextual C...

Libraries have long sought to demonstrate the value of their collections through a variety of usage statistics. Traditionally, a strong emphasis is placed on high usage statistics when evaluating journals in collection development discussions. However, as budget pressures persist, administrators are increasingly concerned with looking beyond traditional usage metrics to determine the real impact of library services and collections. By examining journal usage in the context of scholarly communication, we hope to gain a more holistic understanding of the use and impact of our library’s resources. In this session, we begin by outlining our methodology for gathering comprehensive publication and citation data for authors affiliated with Northwestern University’s Feinberg School of Medicine, utilizing Web of Science as our primary data source and leveraging a custom Python script to manage the data. Using this data we discuss various potential metrics that could be employed to measure and evaluate journals in institutional and field-specific contexts, including but not limited to: number of publications and references per journal, co-citation networks, percentage of references per journal, and increases or decreases of references over time per title. We then consider the development of normalized benchmarks and criteria for creating field-specific core journal lists. We also discuss a process for establishing usage thresholds to evaluate existing journal subscriptions and to highlight potential gaps in the collection. Finally, we apply and compare these metrics to traditional collection development tools like COUNTER usage reports, cost-per-use analysis, Inter-Library Loan statistics and turnaway reports, to determine what correlations or discrepancies might exist. 
We finish by highlighting some use-cases which demonstrate the value of considering publication and citation metrics, and provide suggestions for incorporating these metrics into library collection development practices.

Speakers: Joelen Pastva and Jonathan Shank, Northwestern University

Project GitHub page: https://goo.gl/2C2Pcy

How Accessible Is Our Collection? Performing an E-Resources Accessibility Review

Michael Fernandez, presenter

While the growth and adoption of electronic resources has been exponential, there has been a concurrent lag in ensuring that e-resources are accessible by users with disabilities. Vendors have become increasingly aware of this issue and are taking steps to address it; however, given the sheer size of the library marketplace, there is a noticeable lack of consistency across vendor platforms. In the Summer of 2016, American University Library began evaluating the accessibility of its web content as part of a university-wide initiative focusing on Section 508 compliance. This review entailed not only library hosted websites, but also third party platforms for databases, e-journals, and e-books. In order to assess the accessibility of the library’s subscribed e-resources, the Electronic Resources Management Unit created an accessibility inventory. All subscribed e-resources were evaluated to gauge the efforts being made by vendors to make their products accessible. The methodology for this inventory involved seeking out voluntary product accessibility templates (VPATs), identifying clearly marked accessibility statements on the vendor site or platform, and reviewing current license agreements for verbiage that ensures a commitment to accessibility regulations and allows for remediation of accessibility issues that may be identified. This inventory represented an initial but crucial step towards e-resource accessibility. AU Library was able to identify the vendors who have already taken measures, and for those who had not, we identified the opportunity to create a dialogue. In this presentation, I’ll detail methods and resources that can be used in order to assess the status of a collection’s accessibility. Additionally, I’ll describe how AU Library was able to collaborate on this shared goal by identifying allies across the university in the offices of assistive technology and procurement. 
Finally, I’ll discuss our strategies for further educating and engaging with vendors.

The Kaleidoscope of Impact: same data, different perspectives, constantly cha...

Scholars, scientists, academic institutions, publishers and funders are all interested in impact. We have different roles and goals, and therefore different reasons for needing to understand impact; we are therefore asking different questions about impact, and those questions continue to evolve, much as the concept of impact itself is evolving. To answer our different questions, do we need different data, in separate silos, or are we looking at the same data, from different angles? This session gathered researcher, library, publisher and metrics provider perspectives to consider who has an interest in impact, what data they are interested in, how they use it, and how the situation is evolving as e.g. business models and technical infrastructures shift.

Data Stories: Using Narratives to Reflect on a Data Purchase Pilot Program

Anita Foster and Gene R. Springs, presenters

The Ohio State University Libraries, driven by campus demand, developed and implemented a data resource purchase pilot program that took place over one fiscal year. Having previously only prioritized the purchasing of subject-related data resources on a small scale, this initiative included large data resources, most of which can meet the research and teaching needs of a variety of academic disciplines. Beginning the pilot with very few criteria for selection and potential acquisition, the Collections Strategist and Electronic Resources Officer encountered various challenges along the way, each requiring additional exploration, research, and eventual resolution. As the pilot program proceeded, other criteria emerged as important considerations when examining data resources, particularly for content and licensing.

To best develop an understanding of what was learned over the year of this pilot program, the Collections Strategist and Electronic Resources Officer collaborated in writing "data stories," or narratives about each of the data resource options investigated for acquisition. Each narrative is structured similarly, from the requestor and initial stated need through the end result. Any pertinent details regarding content, access, or licensing were incorporated to complete the narratives. The data stories will be further analyzed to track commonalities among both the successful and unsuccessful acquisitions, with the proposed outcome of developing tested criteria for future acquisition of data resources.

Datavi$: Negotiate Resource Pricing Using Data Visualization

Stephanie J. Spratt, presenter

Ready to ask for a reduction in the annual increase of an e-resource product but unclear on how to make your case? Want to try some innovative strategies to avoid spending more than your budget? Want to reduce the amount of heavy renewal work falling right at fiscal close? Attend this presentation to learn techniques on all of that and more!

The speaker will use commonly collected data to show how to combine and visualize metrics to help make a library’s case for requesting reductions in pricing, adjusting service fees, and asking for changes to subscription periods to balance out the renewal workload. Attendees will learn which data to analyze and combine as it relates to pricing negotiations along with the steps involved to make that data come alive in Excel graphs and charts. Alternate data visualization products will also be discussed. The data visualization techniques, not outcomes, will be the focus of this presentation with the goal of attendees taking back which techniques might be worthwhile endeavors at their own institutions. Attendees will also learn about negotiation strategies and internal and external considerations when preparing to negotiate.

Growing an awareness of negotiation techniques and factors in play both inside and outside the library will help librarians make their cases for equitable pricing and models for library resources. The data visualization techniques shown in this presentation will serve as a stepping-off point for any librarian who wishes to use honesty, directness, and real-world scenarios to negotiate pricing for content and other library expenditures.

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

National Information Standards Organization (NISO)

Serendipity in Digital Collections: Enhancing Discovery with Linked Data. Anna L. Creech, Head, Resource Acquisition and Delivery, Boatwright Memorial Library, University of Richmond

Accessibility Compliance: One State, Two Approaches

Accessibility compliance is a growing concern for academic institutions as it pertains to instructional materials on websites, course management systems, and in course documents. This extends to materials provided by academic libraries such as electronic resources. This presentation will discuss the approaches that both systems governing Tennessee public colleges and universities are using to ensure that vendors are compliant with standards as described in WCAG 2.0, EPUB 3, and Section 508 of the Rehabilitation Act of 1973.

The session will be divided into three parts as follows:

Introduction to the difference between accessibility and accommodation. Discussion of the types of disabilities of which librarians should be aware when acquiring and assessing different electronic resources. Brief mention of the laws and standards related to accessibility compliance.

An overview of the University of Tennessee System’s approach to encouraging accessibility compliance by incorporating detailed conformance language into licenses with the vendors and publishers of electronic and information technology.

A discussion of the Tennessee Board of Regents system’s approach to encouraging accessibility compliance by conducting an accessibility audit of resources held in common among the system’s libraries and through a collaborative process of compliance document collection from vendors/publishers and sharing in an AIMT (Accessible Instructional Materials and Technology) database. An introduction to the different types of documents and their content: Accessibility Statement, Voluntary Product Accessibility Template (VPAT), WCAG 2.0 (Web Content Accessibility Guidelines) Checklist, EPUB 3 Accessibility Checklist, and a Conformance and Remediation Form.

Stephanie J. Adams

Electronic Resources Librarian, Tennessee Tech University

Ms. Adams is the Electronic Resources Librarian at Tennessee Tech University where she is responsible for the acquisition and set-up of all electronic resources at the Volpe Library.

Corey S. Halaychik

The University of Tennessee, Knoxville

Licensing guy, negotiator of master agreements at the University of Tennessee Libraries, and co-chair of The Collective, I work to make libraries more efficient, saving time and money for institutions and the people they serve.

Jennifer Mezick

Pellissippi State Community College

Acquisitions and Collection Development Librarian at Pellissippi State Community College in Knoxville, TN. In addition to these roles, I manage the libraries' electronic resources and website, and provide instruction and research support to students and faculty.

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

National Information Standards Organization (NISO)

Smart Content applications at Elsevier

Michael Lauruhn, Disruptive Technology Director, Elsevier

The Road from Millennium to Alma: Two Tracks, One Destination

In 2016, two academic libraries migrated from Innovative Interfaces’ Millennium to Ex Libris’ Alma. Though both libraries came from a similar starting point in terms of library software, their migration environments were quite different: Colorado State University’s migration involved two campuses, CSU Fort Collins and CSU Pueblo, while Central Connecticut State University migrated with a newly formed consortium of 18 institutions. Even though both libraries share the same proprietary ILS, the environmental differences between the two libraries shape their experiences throughout the migration process. The presenters will share their libraries’ unique experiences while also addressing commonalities germane to the ILS migration process such as pre-migration data cleanup, data migration, training, and designing workflows. Particular attention will be paid to the data migration process, including the extraction process and the coordination of these efforts. Because Alma is designed on a different concept than III’s Millennium, the redesign of workflows is critical prior to the final cutover to the new system. In light of this, the presenters will address the engagement of staff during these discussions along with their professional growth. In addition to explaining the technical aspects of this migration, they will also delve beneath the surface of the intellectual labor required for implementation and examine the psychological impact on all constituents who will use the new system for their daily work.

Kristin D'Amato

Central Connecticut State University

Kristin D’Amato is the Head of Acquisitions and Serials at Central Connecticut State University’s Elihu Burritt Library. She received her master’s in Library and Information Science from SUNY Albany and her bachelor’s in English Literature from SUNY Geneseo.

Rachel Erb

Colorado State University

Rachel A. Erb is the Electronic Resources Management Librarian at Colorado State University’s Morgan Library. She received her master's in Library Science from Florida State University, a master's in Slavic Languages and Literatures from Ohio State University, and her bachelor’s in Russian from Dickinson College.

Investigating Perpetual Access

Speaker: Bethany Greene, Electronic Resources Management Librarian, Duke University

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

National Information Standards Organization (NISO)

Keynote Address: Linking Data: What Does It Take to Make It Happen?

Marjorie Hlava, President, Access Innovations, Inc. and Data Harmony

Research information management: making sense of it all

"Research information management: making sense of it all" - Julia Hawks, VP North America, Symplectic

Slides from Shaking It Up: Challenges and Solutions in Scholarly Information Management, San Francisco, April 22, 2015

Navigating the data management ecosystem - John Kratz

"Navigating the data management ecosystem" - John Kratz

Rodriguez No Free Lunch Sept 7

This presentation was provided by Allyson Rodriguez of the University of North Texas during a NISO Webinar on the topic of Open Access and Acquisitions, held on September 7, 2016

The Intersection of InterLibrary Loan and Acquisition Models: A review of rec...

Speaker: Sarah Paige, ILL Coordinator, Bailey/Howe Library, University of Vermont

Navigating the data management ecosystem - Dan Valen

"Navigating the data management ecosystem" - Dan Valen, figshare product specialist

Practical applications for altmetrics in a changing metrics landscape

"Practical applications for altmetrics in a changing metrics landscape" - Sara Rouhi, Altmetric product specialist, and Anirvan Chatterjee, Director Data Strategy for CTSI at UCSF

Beyond COUNTER Compliant: Ways to Assess E-Resources Reporting Tools

Kelly Marie Blanchat, presenter

The need to continually evaluate electronic resources should not be limited to a metric for how resources perform. The reporting tools that monitor and collect e-resource usage need to have their performance evaluated as well. This presentation will cover how vendor-provided systems, designed to aid in the decision-making process of the e-resources lifecycle, can be assessed for reporting accuracy. Following this session, participants will have an understanding of what data points to review when assessing vendor-provided usage statistic tools, and will have a method to begin evaluating their own systems. In summer 2015, Yale Library brought up ProQuest’s 360 COUNTER Data Retrieval Service (DRS), a service in which COUNTER-compliant usage statistics are uploaded, archived, and normalized into consolidated reports twice per year. To date, 360 COUNTER has freed up a significant amount of time for Yale's E-Resources Group, allowing staff resources to be allocated elsewhere in the e-resources lifecycle. This extra staff time also allowed time to “kick the tires” of the system, which resulted in an assessment workflow using Microsoft Excel to compare how raw COUNTER data uploaded to the system was affected by title normalization in the knowledgebase. This assessment workflow helped to identify the volume of data available in the system, and also clarified how the 360 COUNTER system works and what steps need to be taken, by both ProQuest and Yale Library, to improve reporting accuracy. Please note that this presentation will touch on issues found within the system, and on how ProQuest worked with Yale to trace their source to title normalization decisions and correct errors when possible. The primary purpose is to raise awareness of the need for reporting tool assessment, which can be applied to any assessment tool, not just 360 COUNTER.

Brave New eWorld: Struggles and Solutions

University of Toronto Libraries - Information Technology Services

American Library Association Conference 2013

Brooking Ingesting Metadata - FINAL

This presentation was provided by Diana Brooking of the University of Washington during the 11th Annual NISO-BISG Forum, Delivering the Integrated Information Experience, on June 23, 2017 and held at the ALA Annual Conference.

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

National Information Standards Organization (NISO)

Hyperconnected Learning: Linked Data at Pearson

Madi Weland Solomon, Director of Semantic Platforms & Metadata, Pearson Plc

Text mining 101 what you should know

Presenters:

Patricia Cleary, Global eProduct Development Manager, Springer

Kristen Garlock, ITHAKA/JSTOR

Denise D Novak, Acquisitions Librarian, Carnegie Mellon University

Ethen Pullman, Carnegie Mellon University

Academic libraries and publishers are fielding an increasing number of faculty/researcher text mining requests. This program will address these needs and offer some best practices. Specific examples from academic libraries will highlight the administrative and technical issues, while the resource provider perspective will focus on the challenges of rights management clearance and how to deliver the information, as well as the publisher philosophy on supporting digital scholarship efforts. The session will capture the issues from both sides and provide attendees with a framework for handling requests at their own institutions. In keeping with the theme "Embracing New Horizons" we will use this time to explore possibilities for better communication around digital scholarship issues, and the development of best practices, through appropriate channels.

Grant apr20-8

This presentation was provided by Carl Grant of the University of Oklahoma for a NISO virtual conference, held on April 20, 2016.

Lowe NISO virtual conf feb17

This talk was provided by Brian Lowe of Ontocale SRL during the NISO Virtual Conference, Using Open Source in Your Institution, held on February 17, 2016.

Web scale discovery service

This presentation was given at the IASLIC Seminar held at Gauhati University.

Big Data projects.pdf

The Big Data projects course includes five projects:

Data Engineering with PDF Summary Tool: Create a Streamlit app to summarize PDFs, comparing nougat and PyPDF libraries, and integrate architectural diagrams.

Large Language Models for SEC Document Summarization: Develop a tool for summarizing PDF documents, evaluating different libraries, and creating Jupyter notebooks and APIs for Streamlit integration.

Document Summarization with LLMs and RAG: Focus on automating embedding creation, data processing, and developing a client-facing application with secure login and search functionalities.

Data Engineering with Snowpark Python: Reproduce data pipeline steps, analyze datasets, design architectural diagrams, and integrate Streamlit with OpenAI for SQL query generation using natural language.

Project Redesign and Rearchitecture: Review existing architecture and redesign using open-source components and enterprise alternatives, focusing on flexible, scalable, and cost-effective solutions.

Agile Methodology Approach to SSRS Reporting

How to apply principles from the Agile project management process to create better SSRS reports.

More Related Content

What's hot

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

National Information Standards Organization (NISO)

Smart Content applications at Elsevier

Michael Lauruhn, Disruptive Technology Director, Elsevier

The Road from Millennium to Alma: Two Tracks, One Destination

In 2016, two academic libraries migrated from Innovative Interfaces’ Millennium to Ex Libris’ Alma. Though both libraries came from a similar starting point in terms of library software, their migration environments were quite different: Colorado State University’s migration involved two campuses, CSU Fort Collins and CSU Pueblo, while Central Connecticut State University migrated with a newly-formed consortium comprised of 18 institutions. Even though both libraries shared the same proprietary ILS, the environmental differences between the two libraries shaped their experiences throughout the migration process. The presenters will share their libraries’ unique experiences while also addressing commonalities germane to the ILS migration process such as pre-migration data clean up, data migration, training, and designing workflows. Particular attention will be paid to the data migration process, detailing the extraction process along with the coordination of these efforts. Because Alma is designed on a different concept than III’s Millennium, the redesign of workflows is critical prior to the final cutover to the new system. In light of this, the presenters will address the engagement of staff during these discussions along with their professional growth. In addition to explaining the technical aspects of this migration, they will also delve beneath the surface of the intellectual labor required for implementation and examine the psychological impact on all constituents who will use the new system for their daily work.

Kristin D'Amato

Central Connecticut State University

Kristin D’Amato is the Head of Acquisitions and Serials at Central Connecticut State University’s Elihu Burritt Library. She received her master’s in Library and Information Science from SUNY Albany and her bachelor’s in English Literature from SUNY Geneseo.

Rachel Erb

Colorado State University

Rachel A. Erb is the Electronic Resources Management Librarian at Colorado State University’s Morgan Library. She received her master's in Library Science from Florida State University, a master's in Slavic Languages and Literatures from Ohio State University, and her bachelor’s in Russian from Dickinson College.

Investigating Perpetual Access

Speaker: Bethany Greene, Electronic Resources Management Librarian, Duke University

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

National Information Standards Organization (NISO)

Keynote Address: Linking Data: What Does It Take to Make It Happen?

Marjorie Hlava, President, Access Innovations, Inc. and Data Harmony

Research information management: making sense of it all

"Research information management: making sense of it all" - Julia Hawks, VP North America, Symplectic

Slides from Shaking It Up: Challenges and Solutions in Scholarly Information Management, San Francisco, April 22, 2015

Navigating the data management ecosystem - John Kratz

"Navigating the data management ecosystem" - John Kratz

Rodriguez No Free Lunch Sept 7

This presentation was provided by Allyson Rodriguez of the University of North Texas during a NISO Webinar on the topic of Open Access and Acquisitions, held on September 7, 2016.

The Intersection of InterLibrary Loan and Acquisition Models: A review of rec...

Speaker: Sarah Paige, ILL Coordinator, Bailey/Howe Library, University of Vermont

Navigating the data management ecosystem - Dan Valen

"Navigating the data management ecosystem" - Dan Valen, figshare product specialist

Practical applications for altmetrics in a changing metrics landscape

"Practical applications for altmetrics in a changing metrics landscape" - Sara Rouhi, Altmetric product specialist, and Anirvan Chatterjee, Director Data Strategy for CTSI at UCSF

Beyond COUNTER Compliant: Ways to Assess E-Resources Reporting Tools

Kelly Marie Blanchat, presenter

The need to continually evaluate electronic resources should not be limited to a metric for how resources perform. The reporting tools that monitor and collect e-resource usage need to have their performance evaluated as well. This presentation will cover how vendor-provided systems, designed to aid the decision-making process of the e-resources lifecycle, can be assessed for reporting accuracy. Following this session, participants will have an understanding of what data points to review when assessing vendor-provided usage statistic tools, and will have a method to begin evaluating their own systems. In summer 2015, Yale Library brought up ProQuest’s 360 COUNTER Data Retrieval Service (DRS), a service in which COUNTER-compliant usage statistics are uploaded, archived, and normalized into consolidated reports twice per year. To date 360 COUNTER has freed up a significant amount of time for Yale's E-Resources Group, allowing staff resources to be allocated elsewhere in the e-resources lifecycle. This extra staff time also allowed time to “kick the tires” of the system, which resulted in an assessment workflow using Microsoft Excel to compare how raw COUNTER data uploaded to the system was affected by title normalization in the knowledgebase. This assessment workflow helped to identify the volume of data available in the system, and also gave clarity to how the 360 COUNTER system works and what steps need to be taken, by both ProQuest and Yale Library, to improve reporting accuracy. Please note that this presentation will touch on issues found within the system, and how ProQuest worked with Yale to identify the source through title normalization decisions and correct errors when possible. The primary purpose is to bring awareness to the need for reporting tool assessment, which can be applied to any assessment tool, not just 360 COUNTER.

What's hot (20)

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

The Road from Millennium to Alma: Two Tracks, One Destination

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

Research information management: making sense of it all

Navigating the data management ecosystem - John Kratz

The Intersection of InterLibrary Loan and Acquisition Models: A review of rec...

Navigating the data management ecosystem - Dan Valen

Library management and User Trends for SAGE Editors

Practical applications for altmetrics in a changing metrics landscape

Beyond COUNTER Compliant: Ways to Assess E-Resources Reporting Tools

NISO/NFAIS Joint Virtual Conference: Connecting the Library to the Wider Wor...

Similar to A snake, a planet, and a bear ditching spreadsheets for quick, reproducible report production with python, jupyter, and pandas

Accelerate Report Migrations from Cognos Power BI & Tableau

Learn how the Senturus Report Insights app decodes Cognos reports and models, accelerating report migrations to Power BI and Tableau. Save time. Save money. View this on-demand webinar with demos: https://senturus.com/resources/accelerate-report-migrations-from-cognos-to-power-bi-tableau/.

Senturus offers a full spectrum of services in business intelligence and training on Tableau, Power BI and Cognos. Our resource library has hundreds of free live and recorded webinars, blog posts, demos and unbiased product reviews available on our website at: http://www.senturus.com/senturus-resources/.

From Spreadsheets to SUSHI: Five Years of Assessing E-Resources

Charleston Conference

November 7, 2013

From Spreadsheets to SUSHI: Five Years of Assessing Use of E-Resources

Kristin Calvert (speaker), Leslie Farison (speaker)

Map reduce advantages over parallel databases report

I. Introduction

II. State of the art

a. Parallel Database Technology

b. MapReduce Technology

III. Comparative study

Conclusion

SSRS 2008 R2

SSRS 2008 R2 as presented by DataSite at BI data platform on 30/05/2010 at Dan Hotel, Tel Aviv, Israel

Translating SQL to Spreadsheet: A Survey

Spreadsheets are the most popular and conventional databases in use today. Because spreadsheets are visual, expression-based languages, research into their features is highly relevant. A spreadsheet can be viewed as a relational database: a sheet holds its information in rows, just as each table (relation) in an RDBMS does. Each row represents a record that belongs to one or more relations. Spreadsheets use formulae to extract the required information, but this requires expert knowledge of the tool and its usage. Spreadsheet usage can be extended in many directions, because the tool offers great flexibility in how data is stored and how stored data depends on other data. We surveyed research that has paid close attention to spreadsheets and their applicability in different functional cases, such as data visualization and SQL engines. Our survey focuses on QUERYSHEET, ES-SQL, MDSHEET and PrediCalc [3], [5], [4], [8]. This research motivates our survey and our interest in spreadsheets and their functional extensibility.

IntroductionThis report discusses the programming process whic.docx

Introduction

This report discusses the programming process that I developed and used to produce the data required for parts two and three. The main selection I used to generate the data is one region over a two-month period. Information for this period must be generated for the years 2011, 2012 and 2013. The data contains two types of information for that period: recorded climatic conditions and power consumption.

Climatic conditions

The program that I developed in C for the weather data focuses on years. It processes a dataset of climatic conditions recorded across various regions, reducing it to just the data values that are relevant to the desired region (Auckland) for January and February of particular years. The idea is to collect the desired 2011+2013 information and write it to a separate Excel file, and so on for 2013.

Power consumption

The same idea applies to power consumption: I used two processes on a very large data file to generate a filtered file. Although that file contained only the required years, it included unwanted months that had to be excluded. The first process used C code to extract the desired two months by printing out the first two months of each year. Printing had to stop at the end of the second month of each year and jump to the following year to complete the process. The second process combined every two rows of the filtered file, because each row held a 5-minute power consumption reading but the requirement was one 10-minute reading per row.

After completing these processes and generating the filtered files, we use the files with Weka to undertake a data modelling task, then apply different visualization techniques to see how well the model predicts. The following sections show how to use the generated weather and power consumption data in data mining and data visualization.

STAT390-14B (Ham): Directed Study Project

Individual Project Focus: Work vs. Play

Project co-ordinator: Associate Professor David Bainbridge

Process the weather data for Auckland in January and February in the given dataset (10 minute readings) and experiment with various data mining techniques to see if a model can be generated that predicts power consumption for Monday-Friday (work), Saturday, and Sunday (play). Is it easier to predict the power usage for one of the time periods? Trial having Saturday and Sunday represented as a single entity (i.e. the weekend) and as separate days.

The aim of this directed study project is to combine the programming skills learnt in COMP5002 (BoPP) with the Data Min.

DBMS CAPSTONE PPT (1).pptx

CAPSTONE PROJECT. OPEN-ONES PROJECT MANAGEMENT SYSTEM. CONTENTS Introduction Plan Requirement Design Implementation Summary Demo and QA.

Similar to A snake, a planet, and a bear ditching spreadsheets for quick, reproducible report production with python, jupyter, and pandas (20)

Accelerate Report Migrations from Cognos Power BI & Tableau

From Spreadsheets to SUSHI: Five Years of Assessing E-Resources

From Spreadsheets to SUSHI: Five Years of Assessing Use of E-Resources

Map reduce advantages over parallel databases report

Choosing a Data Visualization Tool for Data Scientists_Final

IntroductionThis report discusses the programming process whic.docx

More from NASIG

Ctrl + Alt + Repeat: Strategies for Regaining Authority Control after a Migra...

Speaker: Jamie Carlstone

This presentation is on how to regain authority control in a large research library catalog: first, dealing with a backlog of problems from years without authority control and second, creating a process for ongoing workflows to realistically maintain authority control when new records are added to the collection.

The Serial Cohort: A Confederacy of Catalogers

Speaker: Mandy Hurt

In 2018, at a time when our department was shrinking through attrition, the decision was made to further leverage the particular skill sets of a select group of monographic catalogers by training them to also undertake the complex copy cataloging of serials.

This presentation concerns the assumptions underlying how this decision was originally made, the initial plan for how this would be accomplished by CONSER Bridge Training, the eventual formation of the Serials Cohort with a view to creating an iterative process I would design and manage, and the problems, obstacles and time constraints faced and addressed along the way.

Calculating how much your University spends on Open Access and what to do abo...

Librarians are working hard to understand how much money their university is spending on open access article processing fees (APCs), and how much of what they subscribe to is available as OA. This information is useful when making subscription decisions, considering Read and Publish agreements, rethinking library open access budgets, and designing Institution-wide OA policies.

This session will talk concretely about how to calculate the impact of Open Access on *your* university. It will provide an overview on how to estimate the amount of money spent across a university on Open Access fees: we will discuss underlying concepts behind calculating OA article-processing fee (APC) spend and give an overview of useful data sources, including:

FlourishOA

Microsoft Academic Graph

PLOS API

Unpaywall Journals

We will also talk about Open Access on the subscription side, including how much of what you subscribe to is available as open access and how you can use that in your subscription decisions and negotiations.

The presenters are the cofounders of Our Research, the nonprofit company behind Unpaywall, the primary source of Open Access data worldwide.

Heather Piwowar, Co-founder, Our Research

Jason Priem, Co-founder, Our Research

Measure Twice and Cut Once: How a Budget Cut Impacted Subscription Renewals f...

Speakers: Ilda Cardenas, Keri Prelitz, Greg Yorba

The process of looking at subscriptions with the goal of proactively downsizing revealed that the library’s existing renewal workflows were outdated and in need of regular analysis to identify underused resources. Additionally, this project uncovered shortcomings of analysis that is reliant on usage data, the unexpected ramifications of large-scale subscription cancellations, as well as the need for improved communication within and between the many library departments affected by subscription cancellations.

Analyzing workflows and improving communication across departments

Presented by Jharina Pascual and Sarah Wallbank.

The presentation provides people with simple techniques for analyzing their local workflow and information-sharing practices, some ideas for interrogating and improving intra-technical services communication, and ideas for simple changes that can improve communication and build a sense of community/joint purpose within or across departments.

Supporting Students: OER and Textbook Affordability Initiatives at a Mid-Size...

Presented by Jennifer L. Pate.

With support from the president and provost of the university, Collier Library adopted strategic purchasing initiatives, including database purchases to support specific courses as well as purchasing reserve copies of textbooks for high-enrollment, required classes. In addition, the scholarly communications librarian became a founding member of the OER workgroup on campus. This group’s mission is to direct efforts for increasing faculty awareness and adoption of OER. This presentation discusses the structure of each of these programs from initial idea to implementation. Included will be discussions of assessment of faculty and student awareness, development of an OER grant program, starting a textbook purchasing program, promotion of efforts, funding, and future goals.

Access to Supplemental Journal Article Materials

Presented by Electra Enslow, Suzanne Fricke, Susan Shipman

The use of supplemental journal article materials is increasing in all disciplines. These materials may be datasets, source code, tables/figures, multimedia or other materials that previously went unpublished, were attached as appendices, or were included within the body of the work. Current emphasis on critical appraisal and reproducibility demands that researchers have access to the complete shared life cycle in order to fully evaluate research. As more libraries become dependent on secondary aggregators and interlibrary loan, we questioned if access to these materials is equitable and sustainable.

Communications and context: strategies for onboarding new e-resources librari...

Presented by Bonnie Thornton.

This presentation details onboarding strategies institutions can utilize to help acclimate new e-resources librarians with an emphasis on strategies for effectively establishing and perpetuating communications with stakeholders.

Full Text Coverage Ratios: A Simple Method of Article-Level Collections Analy...

Presented by Matthew Goddard.

This presentation describes a simple and efficient method of using a discovery layer to evaluate periodicals holdings at the article level, and suggests a variety of applications.

Web accessibility in the institutional repository crafting user centered sub...

Presented by Jenny Hoops and Margaret McLaughlin.

As web accessibility initiatives increase across institutions, it is important not only to reframe and rethink policies, but also to develop sustainable and tenable methods for enforcing accessibility efforts. For institutional repositories, it is imperative to determine the extent to which both the repository manager and the user are responsible for depositing accessible content. This presentation allows us to share our accessibility framework and help repository and content managers craft sustainable, long-term goals for accessible content in institutional repositories, while also providing openly available resources for short-term benefit.

Linked Data at Smithsonian Libraries

Linked Data is exploding in the library world, but the biggest problems libraries have are coming up with the time or money involved in converting their records, looking into Linked Data programs, finding community support, and all the various other issues that arise as part of developing new methods. Likewise, one of the biggest hurdles for libraries and linked data is that they do not know what to do to get involved. As we have fewer people available and smaller budgets each year, we would like to explore ways in which libraries can get involved in the process without expending an undue amount of their already dwindling resources. To see how linked data can be applied, we will look at the example of the Smithsonian Libraries (SIL). Over the past 18 months, SIL has been preparing for the transition from MARC to linked open data. This session will talk about various SIL projects and initiatives (such as the FAST headings project and the introduction of Wikidata and WikiBase); how to incorporate linked data elements into MARC records; and how to develop staff and give them proficiency with new tools and workflows.

Heidy Berthoud, Head, Resource Description, Smithsonian Libraries

Walk this way: Online content platform migration experiences and collaboration

In this session, a librarian and a publisher share their perspectives on content platform migrations, and the Working Group Co-chairs will describe the group’s efforts to-date and expected outcomes. Our publisher-side speaker will describe issues they must consider when their content migrates, such as providing continuous access, persistent linking, communicating with stakeholders, and working with vendors. Our librarian speaker will describe their experience and steps they take during migrations, such as receiving notifications about migrations, identifying affected e-resources, updating local systems to ensure continuous access, and communicating with their front-line staff and patrons.

Read & Publish – What It Takes to Implement a Seamless Model?

PANELISTS

Adam Chesler

Director of Global Sales

AIP Publishing

Sara Rotjan

Assistant Marketing Director, AIP Publishing

Keith Webster

Dean of Libraries and Director of Emerging and Integrative Media Initiatives

Carnegie Mellon University

Andre Anders

Director, Leibniz Institute of Surface Engineering (IOM)

Editor in Chief of Journal of Applied Physics

Professor of Applied Physics, Leipzig University

“Read & Publish” agreements continue to gain global attention. What’s rarely discussed when these new access and article processing models are introduced is the paperwork, back-end technology and overall management required to implement the new program that works for all involved. This panel, comprised of a librarian, publisher, and researcher, will focus on the complexities of developing, implementing and using the infrastructures of different Read & Publish models and the challenges of developing a seamless experience for everyone.

From article submission to publication to final reporting, the panel will discuss the “hidden” impact that new workflows will have on stakeholders in scholarly communications. Time will be allotted for Q&A and attendee participation is encouraged.

When to hold them when to fold them: reassessing big deals in 2020

This presentation goes into detail on each of the publishers’ big deals that we examined and presents the reasons why we cancelled them, with concrete examples from our experiences (four cancellations and two restructurings).

Getting on the Same Page: Aligning ERM and LIbGuides Content

This presentation gives background on the development of the initial processes, the review and revision of the processes, and the issues encountered in developing a workflow for importing data from one system to the other.

A multi-institutional model for advancing open access journals and reclaiming...

The presenters will provide brief overviews of CIL and PDXScholar, and they will detail the challenges and ultimate successes of this multi-institutional model for advancing open access journals and reclaiming control of the scholarly record.

Knowledge Bases: The Heart of Resource Management

This session will discuss the knowledge base metadata lifecycle, current and upcoming metadata standards, and the effect that knowledge bases have on discovery and e-resource management. The presenters will look at ways knowledge bases can be leveraged to create downstream tools for resource management and discovery. The session will also provide different perspectives on knowledge bases, including from librarians and product managers, as well as a discussion of the NISO's KBART Automation recommended practice and what this could mean for knowledge bases in the future. The session will also include a conversation regarding how leveraging knowledge bases can aid librarians in improving resource discovery within their own libraries and ultimately decrease the amount of time spent on metadata workflows. Through this presentation, we also aim to improve communication between the library community and metadata providers and creators.

Elizabeth Levkoff Derouchie, Metadata Librarian for Serials & Electronic Resources, Samford University Library

Beth Ashmore, Associate Head, Acquisitions & Discovery (Serials), North Carolina State University

Eric Van Gorden, Product Manager, EBSCO

Practical approaches to linked data

This session will talk about various SIL projects and initiatives (such as the FAST headings project and the introduction of Wikidata and WikiBase); how to incorporate linked data elements into MARC records; and how to develop staff and give them proficiency with new tools and workflows.

More from NASIG (20)

Ctrl + Alt + Repeat: Strategies for Regaining Authority Control after a Migra...

Calculating how much your University spends on Open Access and what to do abo...

Measure Twice and Cut Once: How a Budget Cut Impacted Subscription Renewals f...

Analyzing workflows and improving communication across departments

Supporting Students: OER and Textbook Affordability Initiatives at a Mid-Size...

Communications and context: strategies for onboarding new e-resources librari...

Full Text Coverage Ratios: A Simple Method of Article-Level Collections Analy...

Web accessibility in the institutional repository crafting user centered sub...

Walk this way: Online content platform migration experiences and collaboration

Read & Publish – What It Takes to Implement a Seamless Model?

Mapping Domain Knowledge for Leading and Managing Change

When to hold them when to fold them: reassessing big deals in 2020

Getting on the Same Page: Aligning ERM and LIbGuides Content

A multi-institutional model for advancing open access journals and reclaiming...

A snake, a planet, and a bear ditching spreadsheets for quick, reproducible report production with python, jupyter, and pandas