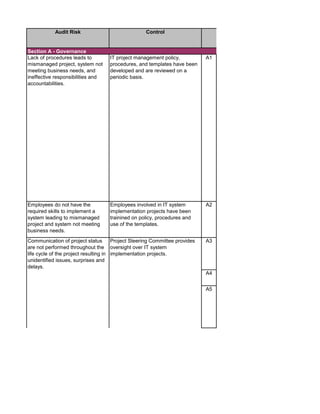

The document outlines an audit program for reviewing a system development lifecycle (SDLC) project. It includes steps for planning the audit, performing risk assessments, reviewing documentation for various project phases, issuing audit reports, and closing out the audit. The objectives are to assess project management, compliance with policies, security controls, internal controls, and whether the project will achieve its expected benefits and objectives. Risks relate to project management, system design, data conversion, and whether the new system will meet user needs and strategy. Controls involve governance policies, contract terms, project documentation, and testing procedures.

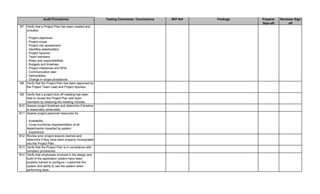

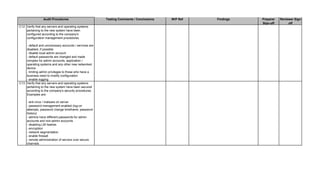

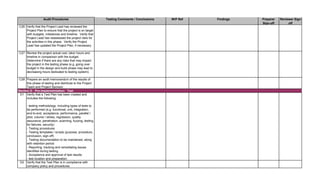

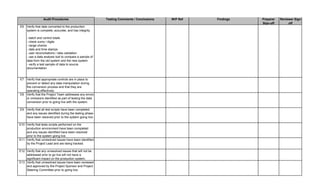

![Audit Step W/P Ref Preparer

Sign-off

Reviewer

Sign-off

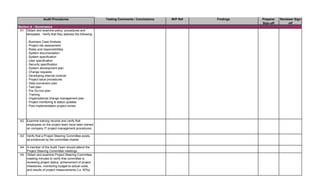

1. Prepare the audit announcement / notification letter informing

applicable people of the estimated start date of the audit, the

objective and the scope. E-mail it to addressee(s). Maintain e-mail

in audit file.

2. Prepare a budget of estimated audit hours by audit category. See

Audit Time Budget tab. Identify audit staff that will be assigned to

the engagement.

3. Review prior SDLC audits and permanent files to ensure

understanding of SDLC process and previously identified audit

findings. Document any risks noted in the Risk Assessment tab.

Update information in the permanent files, if necessary.

4. Perform pre-audit risk assessment. Map risks identified with

audit procedures by updating the Benchmarking and Detail Audit

Testing tabs as necessary.

5. Obtain and review the most current SDLC Policies and

Procedures manual from auditee. Update the Benchmarking and

Detail Audit Testing tabs as necessary.

6. Research industry best practices (ISACA, IIA, NIST, ISO,

PMBOK) and compliance requirements (PCI DSS, Privacy, HIPAA,

etc.) that are applicable to the system being implemented. Update

the Benchmarking and Detail Audit Testing tabs as necessary.

Scope

The audit of the SDLC process will review each phase of a system implementation project. The audit will

address the following areas: governance and risk management, compliance with company procedures and

regulation, project management methodology, budget, internal controls, and business processes.

5. Provide management with an evaluation of the project metrics / KPIs and expected benefits stated within the

project business case report.

AA - Planning

4. Provide management with an assessment of the adequacy of security controls implemented.

[INSERT NAME OF AUDIT AND AUDIT NUMBER]

Objectives

1. Provide management with an independent assessment of the progress, quality and attainment of project

objectives, at defined milestones within the project, based off of company policies and procedures.

3. Provide management with an evaluation of the internal controls of proposed business processes at a point in

the development cycle where enhancements can be easily implemented and processes adapted.

2. Provide management with an assessment of the adequacy of project management methodologies and that

the methodologies are applied consistently across all projects.](https://image.slidesharecdn.com/250250902-141-isaca-nacacs-auditing-it-projects-audit-program-230428063735-9b200bcf/75/250250902-141-ISACA-NACACS-Auditing-IT-Projects-Audit-Program-pdf-1-2048.jpg)

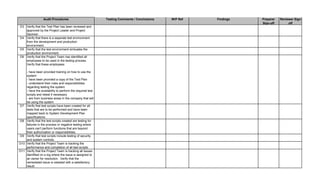

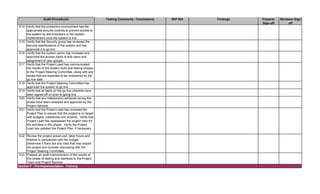

![Audit Step W/P Ref Preparer

Sign-off

Reviewer

Sign-off

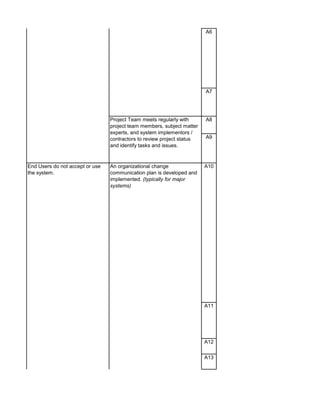

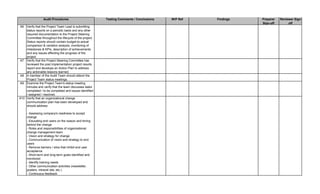

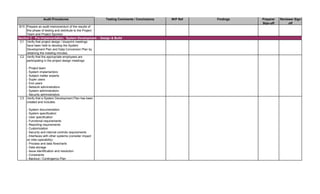

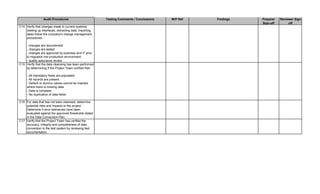

1. Perform follow-up procedures (if applicable) to ensure that

responses to audit findings have been implemented. Follow-up

procedures should be performed within 6 months of issuance date

of audit report. (Note: If management hasn't addressed findings,

schedule a meeting with applicable employees to discuss

implementation of action plan. Maintain meeting minutes.)

2. Prepare Follow-up Audit Report and e-mail it to direct

addressess(s) and management.

3. If management is not properly or timely addressing audit findings,

escalate the matter to the CAE. Document escalation in a

memorandum to the Audit Files.

1. Perform a residual risk assessment and incorporate risks into the

annual audit risk assessment.

2. Update permanent file, if necessary.

3. Review audit workpapers. Verify that all review notes have been

properly addressed and closed. Verify that audit sign-offs have

been performed by audit staff and reviewer(s).

4. Schedule a meeting of the audit team to discuss what worked

well during the audit and areas for improvement to consider for

future audits. Discuss with Audit Manager.

5. This audit has been completed in its entirety as of: [insert date]

6. The audit report and workpapers shall be retained for 7 years

from the date of completion, unless indicated otherwise (e.g.

permanent files). The retentation date is:

[insert date]

DD - Audit Close-Out & Retention Dates

CC - Follow-up Audit Procedures](https://image.slidesharecdn.com/250250902-141-isaca-nacacs-auditing-it-projects-audit-program-230428063735-9b200bcf/85/250250902-141-ISACA-NACACS-Auditing-IT-Projects-Audit-Program-pdf-3-320.jpg)
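The two deadlines stated in the follow-up and close-out steps (follow-up within 6 months of the report issuance date, retention for 7 years from the completion date) are simple date arithmetic. The following is a minimal sketch, not part of the audit program itself. The function names are hypothetical, and it assumes "6 months" and "7 years" mean calendar months, with the day clamped to the end of a shorter month.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the date `months` calendar months after `start`.

    Assumption: when the target month is shorter, the day is clamped to
    that month's last day (e.g. Aug 31 + 6 months -> end of February).
    """
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def follow_up_due(report_issued: date) -> date:
    """Latest date for follow-up procedures: 6 months after issuance."""
    return add_months(report_issued, 6)


def retention_date(audit_completed: date) -> date:
    """Date until which the report and workpapers are retained: 7 years."""
    return add_months(audit_completed, 7 * 12)


if __name__ == "__main__":
    issued = date(2023, 4, 28)  # hypothetical issuance date
    print("Follow-up due by:", follow_up_due(issued))
    print("Retain until:", retention_date(issued))
```

Permanent files are excluded from the 7-year rule in the step above, so a real tracker would need a flag for them; the sketch leaves that out.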

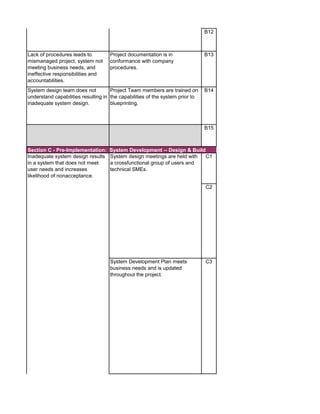

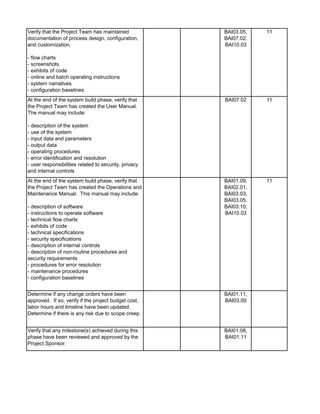

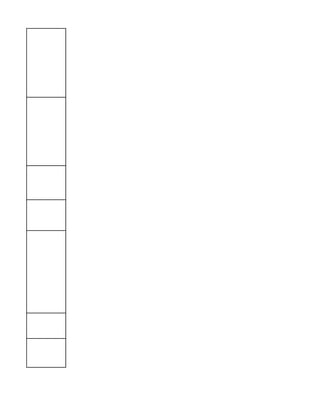

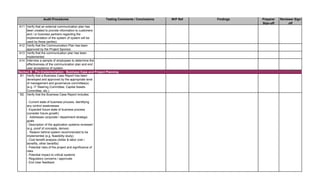

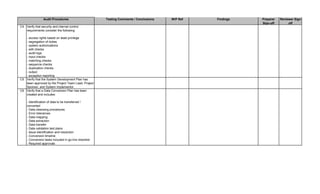

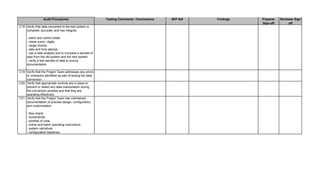

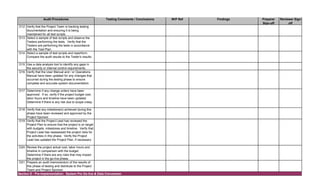

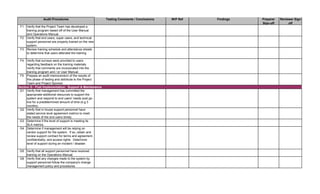

![Audit Charge Code:

Audit Manager: [Insert Auditor Name]

| Audit Area | Budget Hours | Actual Hours | Notes |
|---|---|---|---|
| Planning | | | |
| Reporting | | | |
| Follow-up Audit Procedures | | | |
| Audit Close-out | | | |
| Detailed Audit Testing | | | |
| Project Governance | | | |
| Pre Implementation - Business Case & Project Planning | | | |
| Pre Implementation - System Development | | | |
| Pre Implementation - Testing | | | |
| Pre Implementation - Pre Go-Live & Conversion | | | |
| Pre Implementation - Training | | | |
| Post Implementation - Support & Maintenance | | | |
| Post Implementation - Project Assessment | | | |
| Post Implementation - Internal Controls Assessment | | | |
| Total Hours | 0 | 0 | |

Audit Senior: [Insert Auditor Name]

| Audit Area | Budget Hours | Actual Hours | Notes |
|---|---|---|---|
| Planning | | | |
| Reporting | | | |
| Follow-up Audit Procedures | | | |
| Audit Close-out | | | |
| Detailed Audit Testing | | | |
| Project Governance | | | |
| Pre Implementation - Business Case & Project Planning | | | |
| Pre Implementation - System Development | | | |
| Pre Implementation - Testing | | | |
| Pre Implementation - Pre Go-Live & Conversion | | | |
| Pre Implementation - Training | | | |
| Post Implementation - Support & Maintenance | | | |
| Post Implementation - Project Assessment | | | |
| Post Implementation - Internal Controls Assessment | | | |
| Total Hours | 0 | 0 | |

](https://image.slidesharecdn.com/250250902-141-isaca-nacacs-auditing-it-projects-audit-program-230428063735-9b200bcf/85/250250902-141-ISACA-NACACS-Auditing-IT-Projects-Audit-Program-pdf-4-320.jpg)

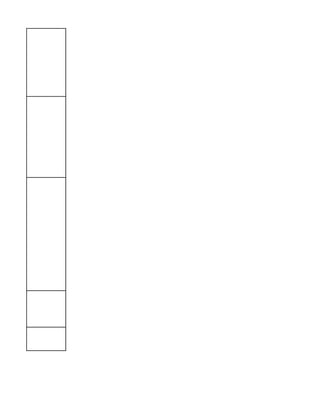

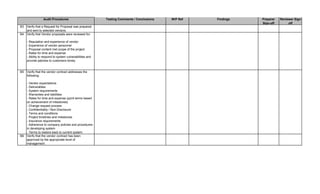

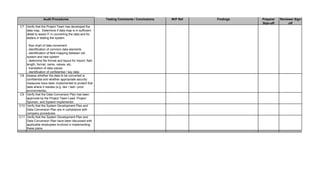

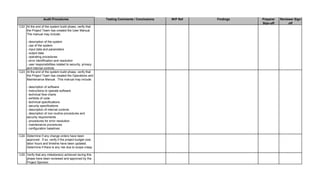

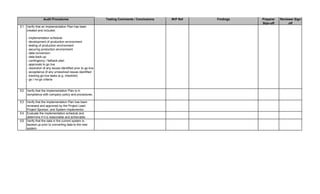

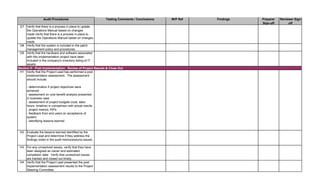

![Audit Charge Code:

Audit Staff: [Insert Auditor Name]

| Audit Area | Budget Hours | Actual Hours | Notes |
|---|---|---|---|
| Planning | | | |
| Reporting | | | |
| Follow-up Audit Procedures | | | |
| Audit Close-out | | | |
| Detailed Audit Testing | | | |
| Project Governance | | | |
| Pre Implementation - Business Case & Project Planning | | | |
| Pre Implementation - System Development | | | |
| Pre Implementation - Testing | | | |
| Pre Implementation - Pre Go-Live & Conversion | | | |
| Pre Implementation - Training | | | |
| Post Implementation - Support & Maintenance | | | |
| Post Implementation - Project Assessment | | | |
| Post Implementation - Internal Controls Assessment | | | |
| Total Hours | 0 | 0 | |

](https://image.slidesharecdn.com/250250902-141-isaca-nacacs-auditing-it-projects-audit-program-230428063735-9b200bcf/85/250250902-141-ISACA-NACACS-Auditing-IT-Projects-Audit-Program-pdf-5-320.jpg)
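The Audit Time Budget sheets track budget hours against actual hours per audit area and roll them up into a "Total Hours" row. As a rough illustration of that roll-up, here is a minimal sketch. The `BudgetLine` class, the helper functions, and the sample hours are all hypothetical and do not come from the audit program.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class BudgetLine:
    """One row of a time budget sheet (hypothetical structure)."""
    audit_area: str
    budget_hours: float = 0.0
    actual_hours: float = 0.0

    @property
    def variance(self) -> float:
        # Positive variance means more hours were spent than budgeted.
        return self.actual_hours - self.budget_hours


def total_hours(lines: List[BudgetLine]) -> Tuple[float, float]:
    """Return (total budget hours, total actual hours) for the sheet."""
    budget = sum(line.budget_hours for line in lines)
    actual = sum(line.actual_hours for line in lines)
    return budget, actual


def over_budget_areas(lines: List[BudgetLine]) -> List[str]:
    """List the audit areas where actual hours exceed budget hours."""
    return [line.audit_area for line in lines if line.variance > 0]


if __name__ == "__main__":
    # Illustrative figures only.
    sheet = [
        BudgetLine("Planning", budget_hours=16, actual_hours=20),
        BudgetLine("Reporting", budget_hours=12, actual_hours=10),
        BudgetLine("Project Governance", budget_hours=24, actual_hours=24),
    ]
    print("Total Hours:", total_hours(sheet))
    print("Over budget:", over_budget_areas(sheet))
```

Keeping one sheet per auditor, as the template does, means the engagement total would be the sum of the per-auditor totals.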

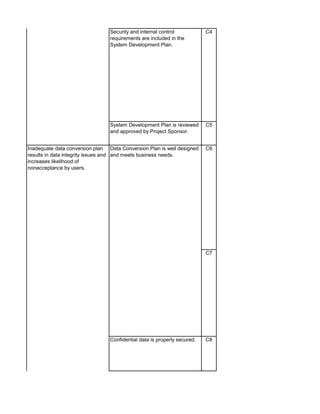

![[Provide a high-level overview of the area(s), function(s), business process(es), and current systems that will be affected by the system being implemented.]

General listing of common risks that may occur during a system implementation project.

• System does not align with strategic objectives

• End Users do not accept the system due to poor design

• Project mismanagement leads to scope creep, budget overruns, and delays

• Security vulnerabilities

• Internal control gaps

• Lack of data completeness, accuracy, and integrity

• Inability to adhere to regulation resulting in fines / penalties

• Damage to reputation (especially if system is used by external parties)

• Disruption of service

Risk Rating Definition

High Rating: The potential for a material impact on the company's earnings, assets, reputation, customers, and operations. This risk has a high likelihood of occurring.

Medium Rating: The potential for a significant impact on the company's earnings, assets, reputation, customers, and operations. This risk has a medium likelihood of occurring.

Low Rating: The potential for a significant impact on the company's earnings, assets, reputation, customers, and operations. This risk has a low likelihood of occurring.](https://image.slidesharecdn.com/250250902-141-isaca-nacacs-auditing-it-projects-audit-program-230428063735-9b200bcf/85/250250902-141-ISACA-NACACS-Auditing-IT-Projects-Audit-Program-pdf-6-320.jpg)
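The rating definitions above pair an impact level with a likelihood level. One way to apply them consistently is a small lookup, sketched below. The `risk_rating` function and the lowercase labels are hypothetical. The sketch encodes only the three combinations the definitions state and returns `None` for any other combination, since the definitions do not say how to rate those.

```python
from typing import Optional

# Only the (impact, likelihood) pairs spelled out in the rating definitions.
RATING_DEFINITIONS = {
    ("material", "high"): "High",
    ("significant", "medium"): "Medium",
    ("significant", "low"): "Low",
}


def risk_rating(impact: str, likelihood: str) -> Optional[str]:
    """Look up the rating for an impact/likelihood pair.

    Returns None for combinations the definitions do not cover; those
    are left to auditor judgment in this sketch.
    """
    key = (impact.strip().lower(), likelihood.strip().lower())
    return RATING_DEFINITIONS.get(key)


if __name__ == "__main__":
    print(risk_rating("Material", "High"))
    print(risk_rating("Significant", "Low"))
    print(risk_rating("Material", "Low"))  # not defined above
```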

![Risk Assessment Questionnaire | Audit Notes | Risk Rating | Audit Step

R25 Do you believe that the new system will require a significant amount of support from the Help Desk, IS, Super Users, or the Project Team members after it goes live?

R26 Did you assess the vendor's security process for the product you are purchasing, including its history of vulnerabilities, how it notifies customers of vulnerabilities, and how it remediates identified vulnerabilities through patching?

R27 Will you be using a software development model in implementing this system (e.g. rapid application development, joint application development, agile, spiral, prototype, or waterfall)?

R28 Will you be using any implementation tools on the cloud in implementing this system?

R29 Does this system or the data that will be contained within it fall under the scope of international / federal / state regulation?

R30 [Add additional risks as needed]

Audit Team Assessment

R31 Indicate risks stated in the annual audit risk assessment.

R32 Indicate risks identified in the review of prior SDLC audits performed.

R33 Indicate any control deficiencies identified in the area(s), function(s), or business process(es) that have occurred in the past two years.

R34 Determine if the members of the Project Team have the proper training and experience to manage an SDLC project.

R35 Are there any financial risks concerning this project (e.g. going over budget, impacting the financial statements, impacting customer billing or vendor payments, etc.)?

R36 Are there any fraud risks that need to be considered (programming backdoors, unauthorized access to / theft of data, intentionally misconfiguring the system, unauthorized individuals (internal employees and contractors) with access to data and / or systems)?

R37 Are there any security risks that need to be considered (server / OS, application, data, placement in network infrastructure (segmentation))?

R38 Review post implementation project assessment reports and identify any lessons learned that may pose a risk to this project.

R39 Assess if there are any risks related to the response in question #R27.

R40 Assess if there are any risks related to the response in question #R28.

R41 [Add additional risks as needed]](https://image.slidesharecdn.com/250250902-141-isaca-nacacs-auditing-it-projects-audit-program-230428063735-9b200bcf/85/250250902-141-ISACA-NACACS-Auditing-IT-Projects-Audit-Program-pdf-8-320.jpg)