Managing Data: storage, decisions and classification

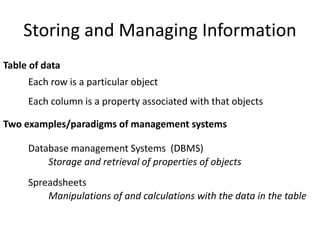

- 1. Storing and Managing Information
  - Table of data: each row is a particular object, and each column is a property associated with that object
  - Two examples/paradigms of management systems:
    - Database Management Systems (DBMS): storage and retrieval of properties of objects
    - Spreadsheets: manipulations of, and calculations with, the data in the table

- 2. Database Management System (DBMS): organizes data in sets of tables

- 3. Relational Database Management System (RDBMS): provides relationships between the data in the tables

  Table A

  | Name | Address | Parcel # |
  |---|---|---|
  | John Smith | 18 Lawyers Dr. | 756554 |
  | T. Brown | 14 Summers Tr. | 887419 |

  Table B

  | Parcel # | Assessed Value |
  |---|---|
  | 887419 | 152,000 |
  | 446397 | 100,000 |

- 4. Using SQL (Structured Query Language)
  - SQL is a standard database query language, adopted by most relational databases
  - Provides syntax for data:
    - Definition
    - Retrieval
    - Functions (COUNT, SUM, MIN, MAX, etc.)
    - Updates and deletes
  - SELECT list FROM table WHERE condition
    - list: a list of items, or * for all items
    - WHERE: a logical expression limiting the number of records selected
    - Conditions can be combined with Boolean logic: AND, OR, NOT
    - ORDER BY may be used to sort the results
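
A minimal sketch of these statements, run through Python's built-in sqlite3 module. The table names `owners` and `assessments` and their column names are assumptions chosen for illustration; the rows are those of Table A and Table B on slide 3.

```python
import sqlite3

# In-memory database; table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE owners (name TEXT, address TEXT, parcel INTEGER);
    CREATE TABLE assessments (parcel INTEGER, assessed_value INTEGER);
    INSERT INTO owners VALUES ('John Smith', '18 Lawyers Dr.', 756554);
    INSERT INTO owners VALUES ('T. Brown', '14 Summers Tr.', 887419);
    INSERT INTO assessments VALUES (887419, 152000);
    INSERT INTO assessments VALUES (446397, 100000);
""")

# SELECT list FROM table WHERE condition, sorted with ORDER BY.
rows = conn.execute(
    "SELECT name, parcel FROM owners WHERE parcel > 700000 ORDER BY name"
).fetchall()

# The relationship between the tables: match rows on the shared parcel number.
joined = conn.execute(
    "SELECT o.name, a.assessed_value "
    "FROM owners AS o JOIN assessments AS a ON o.parcel = a.parcel"
).fetchall()

# An aggregate function over one column.
total = conn.execute("SELECT SUM(assessed_value) FROM assessments").fetchone()[0]

print(rows, joined, total)
```

Only T. Brown's parcel appears in both tables, so the join returns a single row.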

- 5. Spreadsheets
  - Every row is a different “object” with a set of properties
  - Every column is a different property of the row object

- 6. Spreadsheet: Organization of elements
  - Columns: Column A, Column B, Column C
  - Rows: Row 1, Row 2, Row 3
  - Row and column indices give cells with addresses: A7, B4, C10, D5
  - Each cell is accessed through its address

- 7. Spreadsheet Formulas
  - Formula: a combination of values or cell references and mathematical operators such as +, -, /, *
  - The formula displays in the entry bar. In the example, the formula adds the values in four cells, and the sum is displayed in cell B7
  - The results of a formula display in the cell
  - There are also cell, row and column functions, e.g. average, sum, min, max
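
A rough sketch of how cell addresses and range functions behave, using a plain Python dictionary in place of a spreadsheet. The cell values and the range B3 to B6 are assumptions for illustration; the slide only states that four cells are summed into B7.

```python
from statistics import mean

# Hypothetical cell contents, keyed by cell address.
cells = {"B3": 10, "B4": 20, "B5": 30, "B6": 40}


def cell_range(column, first_row, last_row):
    """Return the values of the cells from column+first_row to column+last_row."""
    return [cells[f"{column}{row}"] for row in range(first_row, last_row + 1)]


values = cell_range("B", 3, 6)

# The formula's result is what the target cell displays.
cells["B7"] = sum(values)

summary = {
    "sum": sum(values),
    "average": mean(values),
    "min": min(values),
    "max": max(values),
}
print(cells["B7"], summary)
```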

- 9. Making Sense of Knowledge
  - “Time flies like an arrow” (proverb)
  - “Fruit flies like a banana” (Groucho Marx)
  - There are semantics and context behind all words:
    - Flies: 1. the act of flying; 2. the insect
    - Like: 1. similar to; 2. are fond of
  - There is also the elusive “common sense”, needed to choose among the possible readings:
    1. One type of fly, the fruit fly, is fond of bananas
    2. Fruit, in general, flies through the air just like a banana
    3. One type of fly, the fruit fly, is just like a banana
  - “Time flies” is a bit complicated because we are speaking metaphorically: time is not really an object, like a bird, which flies
  - Translation is not just doing a one-to-one search in the dictionary
  - Complex searching is not just searching for individual words
  - Example: Google Translate

- 10. Adding Semantics: Ontologies
  - Concept: conceptual entity of the domain
  - Attribute: property of a concept
  - Relation: relationship between concepts or properties
  - Axiom: coherent description between concepts / properties / relations via logical expressions
  - Example (figure): the concepts Person, Student, Professor and Lecture in an isA hierarchy (taxonomy); the attributes name, email, student nr., research field, topic and lecture nr.; the relations attends and holds
  - Ontologies structure:
    - Background knowledge
    - “Common sense” knowledge

- 11. Structure of an Ontology

  Ontologies typically have two distinct components:
  - Names for important concepts in the domain
    - Elephant is a concept whose members are a kind of animal
    - Herbivore is a concept whose members are exactly those animals who eat only plants or parts of plants
    - Adult_Elephant is a concept whose members are exactly those elephants whose age is greater than 20 years
  - Background knowledge/constraints on the domain
    - Adult_Elephants weigh at least 2,000 kg
    - All Elephants are either African_Elephants or Indian_Elephants
    - No individual can be both a Herbivore and a Carnivore
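
The two components can be illustrated with a small Python sketch: one function encodes the definition of Adult_Elephant, and another checks the three background constraints. This is only an illustration with plain data classes, not an ontology language; the `Individual` class, its field names and the example individual are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Individual:
    """A hypothetical individual with the concept names asserted for it."""
    name: str
    concepts: set = field(default_factory=set)
    age_years: float = 0.0
    weight_kg: float = 0.0


def is_adult_elephant(ind):
    # Definition: exactly those elephants whose age is greater than 20 years.
    return "Elephant" in ind.concepts and ind.age_years > 20


def violations(ind):
    """Return the background constraints that this individual breaks."""
    problems = []
    if {"Herbivore", "Carnivore"} <= ind.concepts:
        problems.append("no individual can be both Herbivore and Carnivore")
    if "Elephant" in ind.concepts and not (
        {"African_Elephant", "Indian_Elephant"} & ind.concepts
    ):
        problems.append("every Elephant is an African_Elephant or an Indian_Elephant")
    if is_adult_elephant(ind) and ind.weight_kg < 2000:
        problems.append("Adult_Elephants weigh at least 2,000 kg")
    return problems


# Made-up example individual that satisfies every constraint.
example = Individual(
    "example", {"Elephant", "African_Elephant", "Herbivore"},
    age_years=25, weight_kg=3000,
)
print(is_adult_elephant(example), violations(example))
```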

- 12. Ontology Definition: “Formal, explicit specification of a shared conceptualization” [Gruber93]
  - Formal: machine-readability with computational semantics
  - Explicit: unambiguous terminology definitions
  - Shared: commonly accepted understanding
  - Conceptualization: conceptual model of a domain (ontological theory)

- 13. The Semantic Web: ontology implementation

  "The Semantic Web is an extension of the current web in which information is given well-defined meaning, better enabling computers and people to work in cooperation." (Tim Berners-Lee)

  Figure: “the wedding cake”

- 14. Abstracting Knowledge

  There are several levels of, and reasons for, abstracting knowledge:
  - Feature abstraction: simplifying “reality” so the knowledge can be used in computer data structures and algorithms
  - Concept abstraction: organizing and making sense of the immense amount of data/knowledge we have
  - Modeling abstraction: making usable and predictive models of reality

- 15. Prediction Based on Bayes’ Theorem
  - Given training data X, the posterior probability of a hypothesis H, P(H|X), follows Bayes’ theorem:

    $$P(H \mid X) = \frac{P(X \mid H)\,P(H)}{P(X)}$$

  - Informally, this can be viewed as posterior = likelihood × prior / evidence
  - Predicts that X belongs to Ci iff the probability P(Ci|X) is the highest among all the P(Ck|X) for all the k classes
  - Practical difficulty: it requires initial knowledge of many probabilities, involving significant computational cost

- 16. Naïve Bayes Classifier

  | age | income | student | credit_rating | buys_computer |
  |---|---|---|---|---|
  | <=30 | high | no | fair | no |
  | <=30 | high | no | excellent | no |
  | 31…40 | high | no | fair | yes |
  | >40 | medium | no | fair | yes |
  | >40 | low | yes | fair | yes |
  | >40 | low | yes | excellent | no |
  | 31…40 | low | yes | excellent | yes |
  | <=30 | medium | no | fair | no |
  | <=30 | low | yes | fair | yes |
  | >40 | medium | yes | fair | yes |
  | <=30 | medium | yes | excellent | yes |
  | 31…40 | medium | no | excellent | yes |
  | 31…40 | high | yes | fair | yes |
  | >40 | medium | no | excellent | no |

  - Class: C1: buys_computer = ‘yes’; C2: buys_computer = ‘no’
  - P(buys_computer = “yes”) = 9/14 = 0.643
  - P(buys_computer = “no”) = 5/14 = 0.357
  - X = (age <= 30, income = medium, student = yes, credit_rating = fair)

- 17. Naïve Bayes Classifier (training data as in the table on slide 16)
  - Class: C1: buys_computer = ‘yes’; C2: buys_computer = ‘no’
  - Want to classify X = (age <= 30, income = medium, student = yes, credit_rating = fair)
  - Will X buy a computer?

- 18. Naïve Bayes Classifier
  - Key: conditional probability P(X|Y), the probability that X is true, given Y
    - P(not rain | sunny) > P(rain | sunny)
    - P(not rain | not sunny) < P(rain | not sunny)
  - Classifier: we have to include the probability of the condition, P(not rain | sunny) * P(sunny), that is, how often it really did not rain, taking into account how often it was actually sunny

- 19. Naïve Bayes Classifier
  - Class: C1: buys_computer = ‘yes’; C2: buys_computer = ‘no’
  - Want to classify X = (age <= 30, income = medium, student = yes, credit_rating = fair). Will X buy a computer?
  - Which “conditional probability” is greater?
    - P(X|C1) * P(C1) > P(X|C2) * P(C2): X will buy a computer
    - P(X|C1) * P(C1) < P(X|C2) * P(C2): X will not buy a computer

- 20. Naïve Bayes Classifier (training data as in the table on slide 16)
  - Class: C1: buys_computer = ‘yes’; C2: buys_computer = ‘no’
  - X = (age <= 30, income = medium, student = yes, credit_rating = fair)
  - P(age = “<=30” | buys_computer = “yes”) = 2/9 = 0.222
  - P(age = “<=30” | buys_computer = “no”) = 3/5 = 0.6

- 21. Naïve Bayes Classifier: compute P(X|Ci) for each class
  - P(age = “<=30” | buys_computer = “yes”) = 2/9 = 0.222
  - P(age = “<=30” | buys_computer = “no”) = 3/5 = 0.6
  - P(income = “medium” | buys_computer = “yes”) = 4/9 = 0.444
  - P(income = “medium” | buys_computer = “no”) = 2/5 = 0.4
  - P(student = “yes” | buys_computer = “yes”) = 6/9 = 0.667
  - P(student = “yes” | buys_computer = “no”) = 1/5 = 0.2
  - P(credit_rating = “fair” | buys_computer = “yes”) = 6/9 = 0.667
  - P(credit_rating = “fair” | buys_computer = “no”) = 2/5 = 0.4

- 22. Naïve Bayes Classifier
  - P(X|Ci):
    - P(X | buys_computer = “yes”) = 0.222 x 0.444 x 0.667 x 0.667 = 0.044
    - P(X | buys_computer = “no”) = 0.6 x 0.4 x 0.2 x 0.4 = 0.019
  - P(X|Ci) * P(Ci):
    - P(X | buys_computer = “yes”) * P(buys_computer = “yes”) = 0.028 (the bigger value)
    - P(X | buys_computer = “no”) * P(buys_computer = “no”) = 0.007
  - Therefore, X belongs to class (“buys_computer = yes”)
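
The calculation on slides 16 to 22 can be reproduced with a short Python sketch. It uses raw relative frequencies exactly as the slides do (no smoothing, which a practical classifier would normally add); the function and variable names are illustrative choices, not from the slides.

```python
from collections import Counter

# Training data from slide 16: (age, income, student, credit_rating, buys_computer)
DATA = [
    ("<=30", "high", "no", "fair", "no"),
    ("<=30", "high", "no", "excellent", "no"),
    ("31…40", "high", "no", "fair", "yes"),
    (">40", "medium", "no", "fair", "yes"),
    (">40", "low", "yes", "fair", "yes"),
    (">40", "low", "yes", "excellent", "no"),
    ("31…40", "low", "yes", "excellent", "yes"),
    ("<=30", "medium", "no", "fair", "no"),
    ("<=30", "low", "yes", "fair", "yes"),
    (">40", "medium", "yes", "fair", "yes"),
    ("<=30", "medium", "yes", "excellent", "yes"),
    ("31…40", "medium", "no", "excellent", "yes"),
    ("31…40", "high", "yes", "fair", "yes"),
    (">40", "medium", "no", "excellent", "no"),
]


def naive_bayes_scores(data, x):
    """Return {class: P(x | class) * P(class)} from raw relative frequencies."""
    class_counts = Counter(row[-1] for row in data)
    scores = {}
    for label, count in class_counts.items():
        score = count / len(data)  # prior P(Ci)
        for i, value in enumerate(x):
            matches = sum(1 for row in data if row[-1] == label and row[i] == value)
            score *= matches / count  # P(attribute i = value | Ci)
        scores[label] = score
    return scores


x = ("<=30", "medium", "yes", "fair")
scores = naive_bayes_scores(DATA, x)
prediction = max(scores, key=scores.get)
print({label: round(s, 3) for label, s in scores.items()}, prediction)
```

The class with the larger product is the prediction, which matches the comparison made on slide 19.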

- 23. Decision Tree Classifier (Ross Quinlan)

  Figure: scatter plot of Antenna Length against Abdomen Length, both axes running from 1 to 10, with the decision tree:
  - Abdomen Length > 7.1?
    - yes: Katydid
    - no: Antenna Length > 6.0?
      - yes: Katydid
      - no: Grasshopper

- 24. Decision trees predate computers

  Figure: a yes/no identification key with the tests “Antennae shorter than body?”, “3 Tarsi?” and “Foretibia has ears?”, leading to the classes Grasshopper, Cricket, Katydids and Camel Cricket.

- 25. Decision Tree Classification
  - Decision tree
    - A flow-chart-like tree structure
    - Internal node denotes a test on an attribute
    - Branch represents an outcome of the test
    - Leaf nodes represent class labels or class distribution
  - Decision tree generation consists of two phases
    - Tree construction
      - At start, all the training examples are at the root
      - Partition examples recursively based on selected attributes
    - Tree pruning
      - Identify and remove branches that reflect noise or outliers
  - Use of decision tree: classifying an unknown sample
    - Test the attribute values of the sample against the decision tree

- 26. How do we construct the decision tree?
  - Basic algorithm (a greedy algorithm)
    - Tree is constructed in a top-down recursive divide-and-conquer manner
    - At start, all the training examples are at the root
    - Attributes are categorical (if continuous-valued, they can be discretized in advance)
    - Examples are partitioned recursively based on selected attributes
    - Test attributes are selected on the basis of a heuristic or statistical measure (e.g., information gain)
  - Conditions for stopping partitioning
    - All samples for a given node belong to the same class
    - There are no remaining attributes for further partitioning (majority voting is employed for classifying the leaf)
    - There are no samples left
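
The greedy procedure above might be sketched as follows, applied to the buys_computer table from slide 16 and using information gain as the selection measure. This is a simplified illustration without pruning; the function names and the nested-tuple tree representation are assumptions made for the sketch.

```python
from collections import Counter
from math import log2

ATTRS = ("age", "income", "student", "credit_rating")
# Rows from slide 16; the last field is the class label buys_computer.
DATA = [
    ("<=30", "high", "no", "fair", "no"),
    ("<=30", "high", "no", "excellent", "no"),
    ("31…40", "high", "no", "fair", "yes"),
    (">40", "medium", "no", "fair", "yes"),
    (">40", "low", "yes", "fair", "yes"),
    (">40", "low", "yes", "excellent", "no"),
    ("31…40", "low", "yes", "excellent", "yes"),
    ("<=30", "medium", "no", "fair", "no"),
    ("<=30", "low", "yes", "fair", "yes"),
    (">40", "medium", "yes", "fair", "yes"),
    ("<=30", "medium", "yes", "excellent", "yes"),
    ("31…40", "medium", "no", "excellent", "yes"),
    ("31…40", "high", "yes", "fair", "yes"),
    (">40", "medium", "no", "excellent", "no"),
]


def entropy(rows):
    counts = Counter(row[-1] for row in rows)
    return -sum(c / len(rows) * log2(c / len(rows)) for c in counts.values())


def partition(rows, i):
    """Group rows by the value of attribute i."""
    groups = {}
    for row in rows:
        groups.setdefault(row[i], []).append(row)
    return groups


def gain(rows, i):
    children = partition(rows, i).values()
    return entropy(rows) - sum(len(c) / len(rows) * entropy(c) for c in children)


def build(rows, attrs):
    """Return a class label (leaf) or (attribute name, {value: subtree})."""
    labels = Counter(row[-1] for row in rows)
    # Stop: one class left, or no attributes left (then use majority voting).
    if len(labels) == 1 or not attrs:
        return labels.most_common(1)[0][0]
    best = max(attrs, key=lambda i: gain(rows, i))
    rest = [i for i in attrs if i != best]
    # Branches are created only for observed values, so no child set is empty.
    return (ATTRS[best], {v: build(sub, rest) for v, sub in partition(rows, best).items()})


tree = build(DATA, list(range(len(ATTRS))))
print(tree)
```

On this data the root test is on age; the 31…40 branch is already pure, while the other two branches need one more test each.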

- 27. Information Gain as a Splitting Criterion
  - Select the attribute with the highest information gain (information gain is the expected reduction in entropy)
  - Assume there are two classes, P and N
    - Let the set of examples S contain p elements of class P and n elements of class N
    - The amount of information needed to decide if an arbitrary example in S belongs to P or N is defined as

      $$E(S) = -\frac{p}{p+n}\log_2\frac{p}{p+n} - \frac{n}{p+n}\log_2\frac{n}{p+n}$$

    - 0 log(0) is defined as 0

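- 28. Information Gain in Decision Tree Induction
  - Assume that using attribute A, a current set will be partitioned into some number of child sets
  - The encoding information that would be gained by branching on A is

    $$Gain(A) = E(\text{current set}) - \sum E(\text{all child sets})$$

    where each child set's entropy is weighted by the fraction of the current set that falls into that child
  - Note: entropy is at its minimum if the collection of objects is completely uniform (all objects belong to the same class)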

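- 29. Example data

  | Person | Hair Length | Weight | Age | Class |
  |---|---|---|---|---|
  | Homer | 0” | 250 | 36 | M |
  | Marge | 10” | 150 | 34 | F |
  | Bart | 2” | 90 | 10 | M |
  | Lisa | 6” | 78 | 8 | F |
  | Maggie | 4” | 20 | 1 | F |
  | Abe | 1” | 170 | 70 | M |
  | Selma | 8” | 160 | 41 | F |
  | Otto | 10” | 180 | 38 | M |
  | Krusty | 6” | 200 | 45 | M |
  | Comic | 8” | 290 | 38 | ? |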

- 30. Let us try splitting on Hair Length: Hair Length <= 5?
  - Entropy(4F,5M) = -(4/9)log2(4/9) - (5/9)log2(5/9) = 0.9911
  - yes branch: 1F, 3M, entropy 0.8113; no branch: 3F, 2M, entropy 0.9710
  - Gain(Hair Length <= 5) = 0.9911 - (4/9 * 0.8113 + 5/9 * 0.9710) = 0.0911

- 31. Let us try splitting on Weight: Weight <= 160?
  - Entropy(4F,5M) = -(4/9)log2(4/9) - (5/9)log2(5/9) = 0.9911
  - yes branch: 4F, 1M, entropy 0.7219; no branch: 0F, 4M, entropy 0
  - Gain(Weight <= 160) = 0.9911 - (5/9 * 0.7219 + 4/9 * 0) = 0.5900

- 32. Let us try splitting on Age: Age <= 40?
  - Entropy(4F,5M) = -(4/9)log2(4/9) - (5/9)log2(5/9) = 0.9911
  - yes branch: 3F, 3M, entropy 1; no branch: 1F, 2M, entropy 0.9183
  - Gain(Age <= 40) = 0.9911 - (6/9 * 1 + 3/9 * 0.9183) = 0.0183
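
The three gains on slides 30 to 32 can be checked with a short Python sketch. The data are the nine labelled rows from slide 29; the function names and the tuple layout are illustrative assumptions.

```python
from math import log2

# (person, hair length in inches, weight, age, class) from slide 29
PEOPLE = [
    ("Homer", 0, 250, 36, "M"),
    ("Marge", 10, 150, 34, "F"),
    ("Bart", 2, 90, 10, "M"),
    ("Lisa", 6, 78, 8, "F"),
    ("Maggie", 4, 20, 1, "F"),
    ("Abe", 1, 170, 70, "M"),
    ("Selma", 8, 160, 41, "F"),
    ("Otto", 10, 180, 38, "M"),
    ("Krusty", 6, 200, 45, "M"),
]


def entropy(rows):
    """Entropy of the class labels; absent classes contribute 0."""
    total = 0.0
    for label in {r[-1] for r in rows}:
        p = sum(1 for r in rows if r[-1] == label) / len(rows)
        total -= p * log2(p)
    return total


def gain(rows, column, threshold):
    """Information gain of the binary test rows[column] <= threshold."""
    yes = [r for r in rows if r[column] <= threshold]
    no = [r for r in rows if r[column] > threshold]
    weighted = sum(len(c) / len(rows) * entropy(c) for c in (yes, no) if c)
    return entropy(rows) - weighted


gains = {
    "Hair Length <= 5": gain(PEOPLE, 1, 5),
    "Weight <= 160": gain(PEOPLE, 2, 160),
    "Age <= 40": gain(PEOPLE, 3, 40),
}
best = max(gains, key=gains.get)
print({name: round(g, 4) for name, g in gains.items()}, best)
```

Each child entropy is weighted by the share of examples in that child, which is how the slides arrive at 4/9 and 5/9, 5/9 and 4/9, and 6/9 and 3/9.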

- 33. Recursing on the remaining examples

  Of the 3 features we had, Weight was best. But while people who weigh over 160 are perfectly classified (as males), the people who weigh 160 or less are not perfectly classified… So we simply recurse! This time we find that we can split on Hair Length, and we are done!

  Figure: the test Weight <= 160? (yes / no), followed on the yes branch by the test Hair Length <= 2? (yes / no)

- 34. The final tree

  We don’t need to keep the data around, just the test conditions.
  - Weight <= 160?
    - no: Male
    - yes: Hair Length <= 2?
      - yes: Male
      - no: Female

  How would these people be classified?
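
Because only the test conditions are needed, the finished tree reduces to a small function. The sketch below is one way to write it in Python; the function name and argument order are illustrative, and the unlabelled “Comic” row from slide 29 is used as the unknown sample.

```python
def classify(hair_length, weight, age):
    """Apply the final tree. Age is accepted but never tested by the tree."""
    if weight > 160:
        return "Male"
    if hair_length <= 2:
        return "Male"
    return "Female"


# Labelled rows from slide 29: (person, hair length, weight, age, class)
TRAINING = [
    ("Homer", 0, 250, 36, "M"),
    ("Marge", 10, 150, 34, "F"),
    ("Bart", 2, 90, 10, "M"),
    ("Lisa", 6, 78, 8, "F"),
    ("Maggie", 4, 20, 1, "F"),
    ("Abe", 1, 170, 70, "M"),
    ("Selma", 8, 160, 41, "F"),
    ("Otto", 10, 180, 38, "M"),
    ("Krusty", 6, 200, 45, "M"),
]

# Count how many training rows the tree gets wrong.
errors = [
    name
    for name, hair, weight, age, label in TRAINING
    if classify(hair, weight, age)[0] != label
]

# The unlabelled row: Comic, hair 8", weight 290, age 38.
comic = classify(8, 290, 38)
print(errors, comic)
```

The tree classifies all nine training rows correctly, and the Comic row falls on the Weight > 160 branch.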