Towards Learning a Semantically Relevant Dictionary for Visual Category Recognition

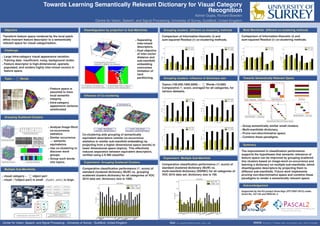

Ashish Gupta, Richard Bowden. Centre for Vision, Speech, and Signal Processing, University of Surrey, Guildford, United Kingdom.

Objective: Transform the feature space rendered by a local-patch, affine-invariant feature descriptor into a semantically relevant space for visual categorisation.

Challenge: Visual appearance varies greatly within a category. The training data are insufficient and noisy, with background clutter. The feature descriptor is high-dimensional and sparsely populated, and it renders highly inter-mixed vectors in feature space.

Topic ← Words: The feature space is assumed to have local semantic integrity, so grouping words into topics ameliorates intra-category appearance variance.

Grouping Scattered Clusters: Analyse image-word co-occurrence statistics; similar co-occurrence implies semantic equivalence. Use co-clustering to discover groups of such words, and group them into topics.

Multiple Sub-Manifolds: A visual category is composed of object parts. The visual variance σ² within an object part is small, while the distance d(part₁, part₂) between different parts is large.

Disambiguation by projection to sub-manifolds: Projecting descriptors onto different sub-manifolds separates inter-mixed descriptors. The dual objective of inter-vector distance and sub-manifold embedding overcomes the limitation of hard partitioning.

Influence of Co-clustering: Co-clustering aids the grouping of semantically equivalent descriptors (those with similar co-occurrence statistics or similar sub-manifold embeddings) by projecting them from a higher-dimensional space (words) to a lower-dimensional space (topics). This effectively reduces the separation between equivalent descriptors, which was verified using a K-NN classifier.

Experiment: Grouping Scattered Clusters. Comparative classification performance (F1 score) of the standard clustered dictionary (BoW) vs. the grouping-scattered-clusters dictionary for all categories of the VOC 2010 data set; dictionary size is 1000.

Grouping clusters: different co-clustering methods. Comparison of information-theoretic (i) and sum-squared residue (r) co-clustering methods.
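The word-to-topic grouping described above can be illustrated with a small sketch. It is not the authors' implementation and makes several assumptions: rows of the co-occurrence matrix are images and columns are visual words; it uses the simplest sum-squared residue criterion (each entry minus the mean of its co-cluster) rather than the information-theoretic variant; and it seeds the clusters with a deterministic farthest-first rule. The function names `cocluster` and `to_topic_histogram` and the toy counts are hypothetical.

```python
import math


def _sq_dist(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def _farthest_first_labels(vectors, k):
    """Assumed deterministic initialisation (not from the poster): choose k
    mutually distant seed vectors, then label each vector by its nearest seed."""
    seeds = [0]
    while len(seeds) < k:
        seeds.append(max(range(len(vectors)),
                         key=lambda i: min(_sq_dist(vectors[i], vectors[s]) for s in seeds)))
    return [min(range(k), key=lambda c: _sq_dist(v, vectors[seeds[c]])) for v in vectors]


def cocluster(A, k_rows, k_cols, n_iter=20):
    """Hypothetical sum-squared residue co-clustering (simple residue a_ij - block mean).

    A[i][j] counts visual word j in image i. Returns (image_labels, word_labels);
    the word labels are the topics, i.e. groups of words with similar co-occurrence.
    """
    n_rows, n_cols = len(A), len(A[0])
    rows = _farthest_first_labels(A, k_rows)
    cols = _farthest_first_labels([list(col) for col in zip(*A)], k_cols)

    def block_means():
        # Mean of every (image cluster, word cluster) block; empty blocks get 0.
        sums = [[0.0] * k_cols for _ in range(k_rows)]
        counts = [[0] * k_cols for _ in range(k_rows)]
        for i in range(n_rows):
            for j in range(n_cols):
                sums[rows[i]][cols[j]] += A[i][j]
                counts[rows[i]][cols[j]] += 1
        return [[s / n if n else 0.0 for s, n in zip(s_row, n_row)]
                for s_row, n_row in zip(sums, counts)]

    for _ in range(n_iter):
        mu = block_means()
        # Move each image to the row cluster whose block means reconstruct it best.
        rows = [min(range(k_rows),
                    key=lambda r: sum((A[i][j] - mu[r][cols[j]]) ** 2 for j in range(n_cols)))
                for i in range(n_rows)]
        mu = block_means()
        # Move each word to the column cluster (topic) that reconstructs it best.
        cols = [min(range(k_cols),
                    key=lambda c: sum((A[i][j] - mu[rows[i]][c]) ** 2 for i in range(n_rows)))
                for j in range(n_cols)]
    return rows, cols


def to_topic_histogram(word_hist, word_to_topic, n_topics):
    """Project a word (BoW) histogram to topic space by summing grouped word counts."""
    topic_hist = [0.0] * n_topics
    for count, topic in zip(word_hist, word_to_topic):
        topic_hist[topic] += count
    return topic_hist


if __name__ == "__main__":
    # Toy counts: words 0 and 1 co-occur in images 0-1, words 2 and 3 in images 2-3.
    A = [[5, 4, 0, 0],
         [4, 5, 0, 0],
         [0, 0, 5, 4],
         [0, 0, 4, 5]]
    _, topics = cocluster(A, k_rows=2, k_cols=2)
    print(topics)  # [0, 0, 1, 1]
    x, y, z = [3, 0, 0, 0], [0, 3, 0, 0], [0, 0, 3, 0]
    tx, ty, tz = (to_topic_histogram(h, topics, 2) for h in (x, y, z))
    print(math.dist(x, y), math.dist(tx, ty))  # ≈ 4.24 in word space, 0.0 in topic space
    print(math.dist(tx, tz))                   # ≈ 4.24: a different word group stays apart
```

On this toy data, two images that use different but co-occurring words collapse to the same point in topic space, while an image drawn from another word group stays as far away as before. This is the kind of reduced separation between equivalent descriptors that a K-NN classifier can pick up; the real dictionaries are far larger (e.g. 10,000 words grouped into 100 to 5000 topics).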
Grouping clusters: influence of dictionary size. Topics (100, 500, 1000, 5000) ← Words (10,000): comparative F1 score, averaged over all categories, for various datasets.

Experiment: Multiple Sub-Manifolds. Comparative classification performance (F1 score) of the standard clustered dictionary (BoW) vs. the multi-manifold dictionary (SSRBC) for all categories of the VOC 2010 data set; dictionary size is 100.

Multi-Manifolds: different co-clustering methods. Comparison of information-theoretic (i) and sum-squared residue (r) co-clustering methods.

Towards Semantically Relevant Space:
- Group semantically similar small clusters.
- Multi-manifold dictionary.
- Prune non-discriminative space.
- Combine these paradigms.

Summary: The improvement in classification performance supports the hypotheses that the semantic relevance of the feature space can be improved by grouping scattered tiny clusters based on image-word co-occurrence, and by learning a dictionary on multiple sub-manifolds, which disambiguates descriptors by projecting them onto different sub-manifolds. Future work will implement pruning of the non-discriminative space and combine these paradigms to render a semantically relevant space.

Acknowledgement: Supported by the EU project Dicta-Sign (FP7/2007-2013) under Grant No. 231135 and by PASCAL 2.

Mail: a.gupta@surrey.ac.uk. WWW: http://www.ee.surrey.ac.uk/cvssp