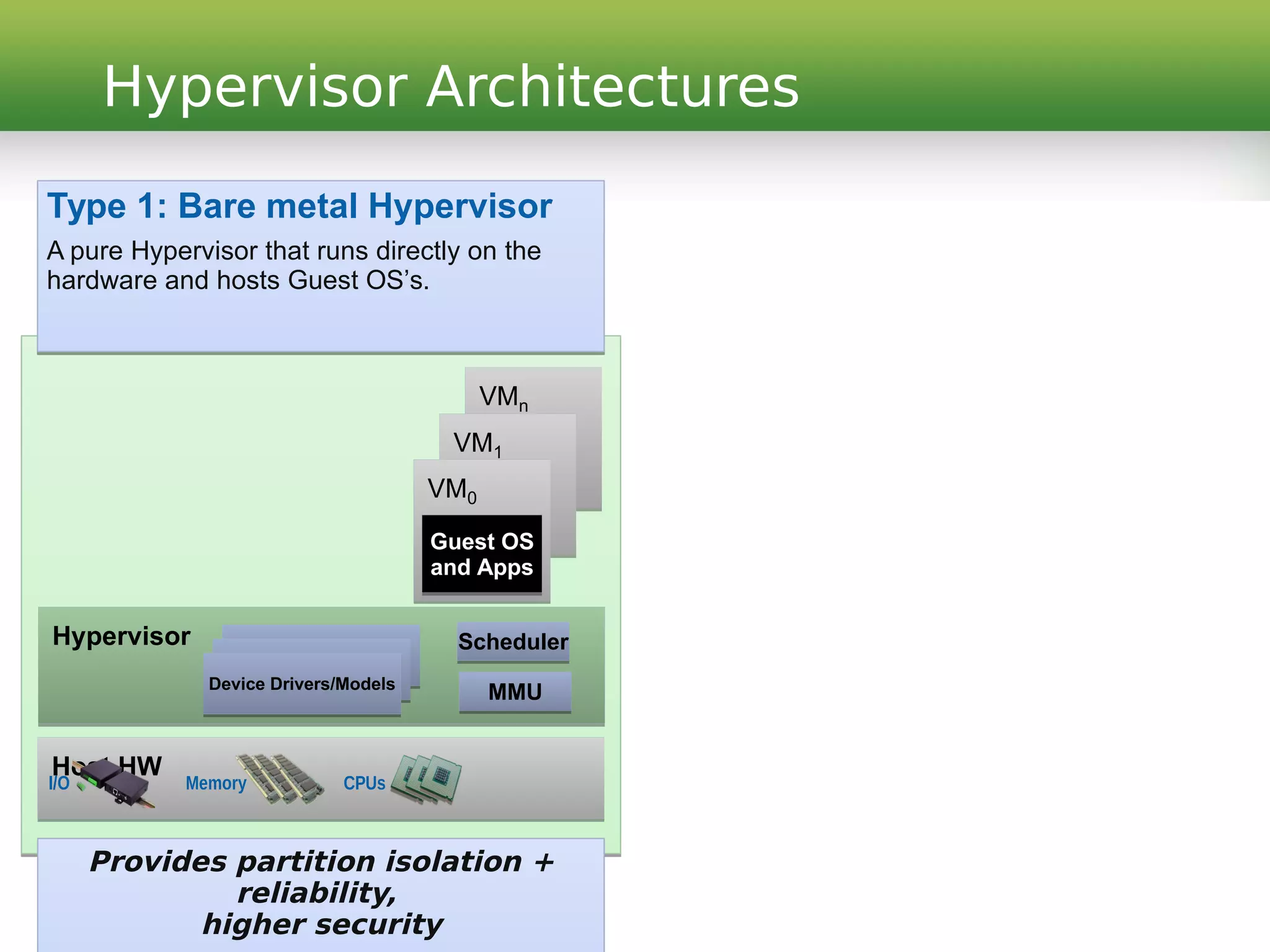

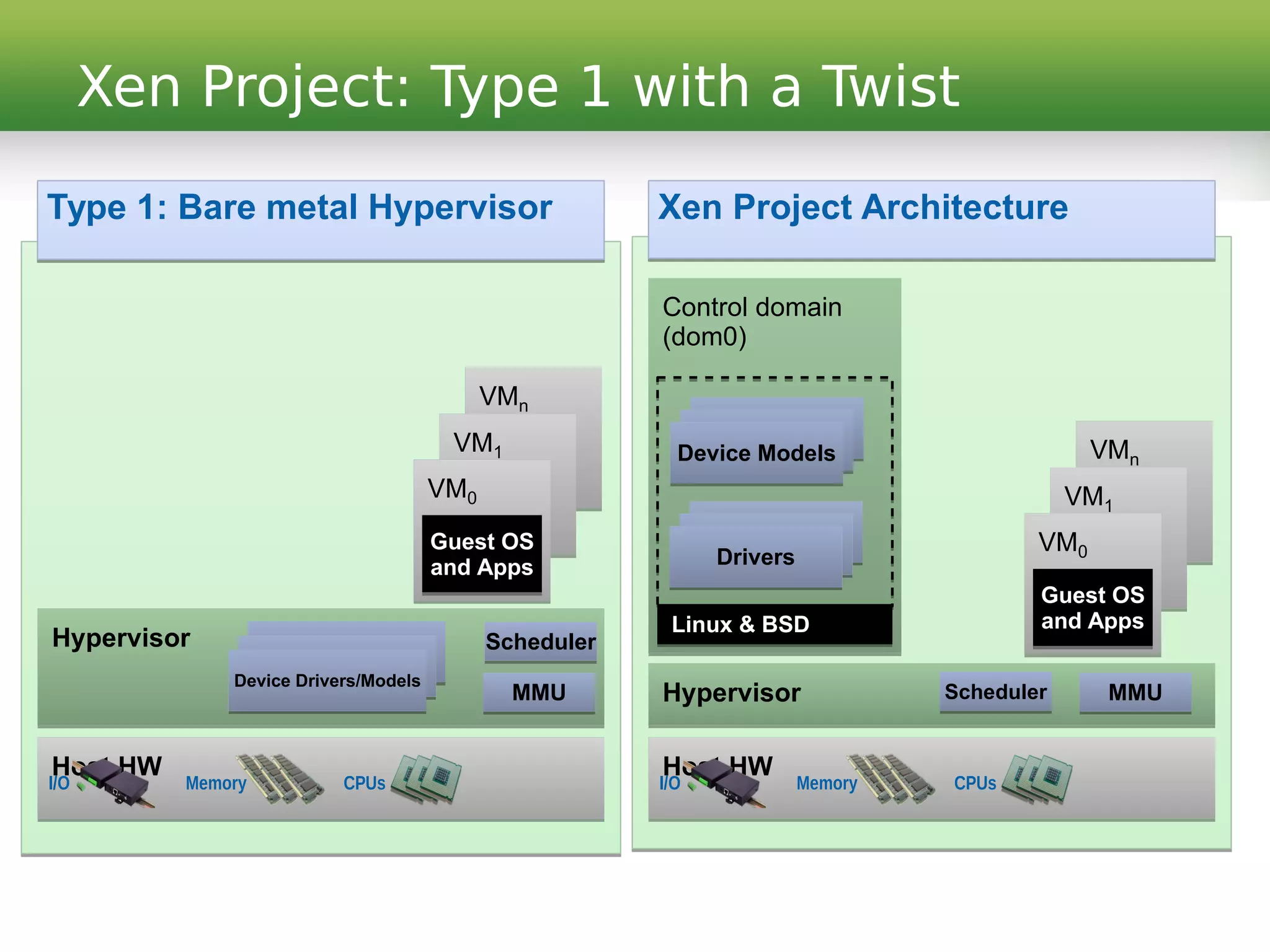

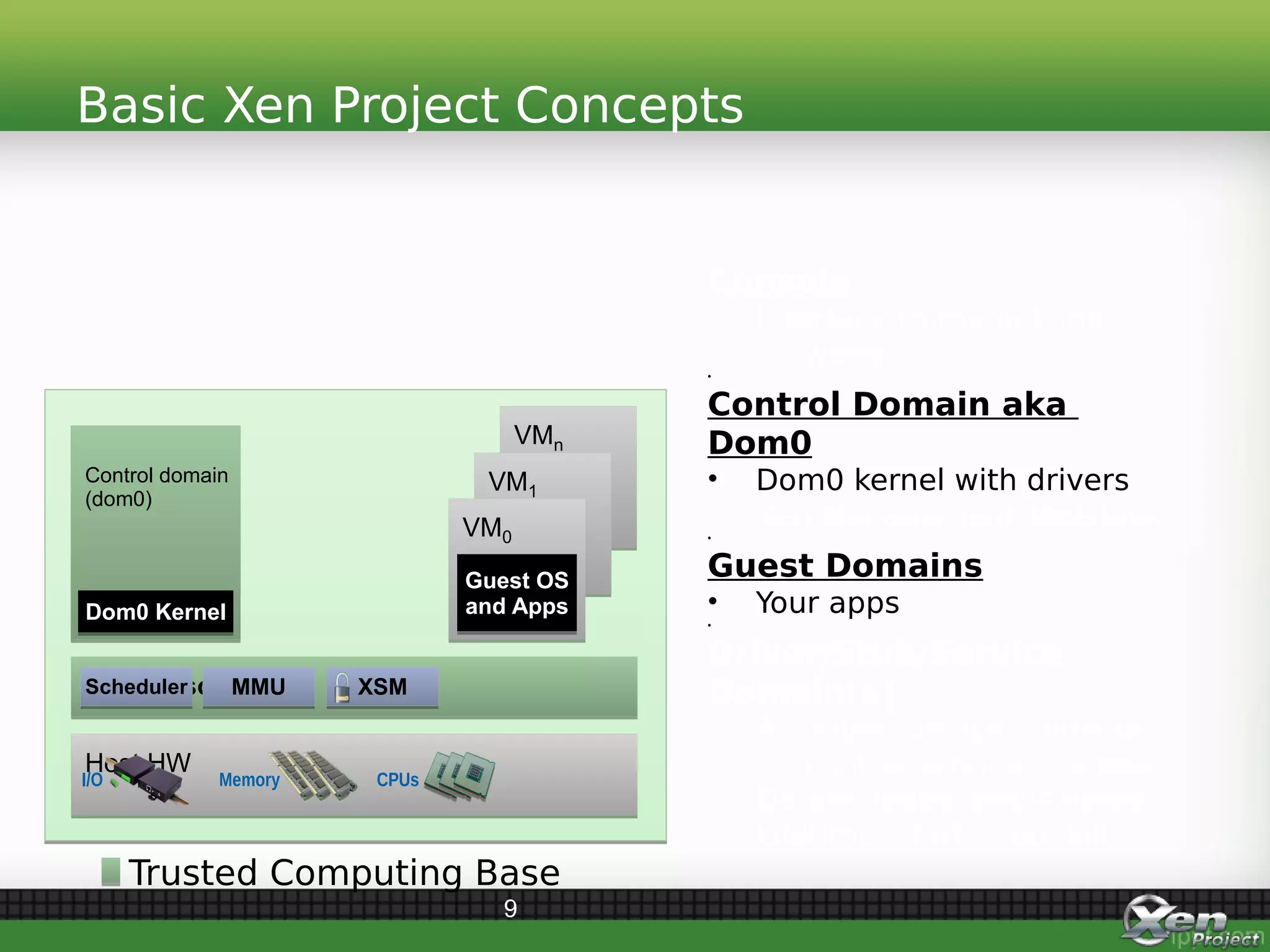

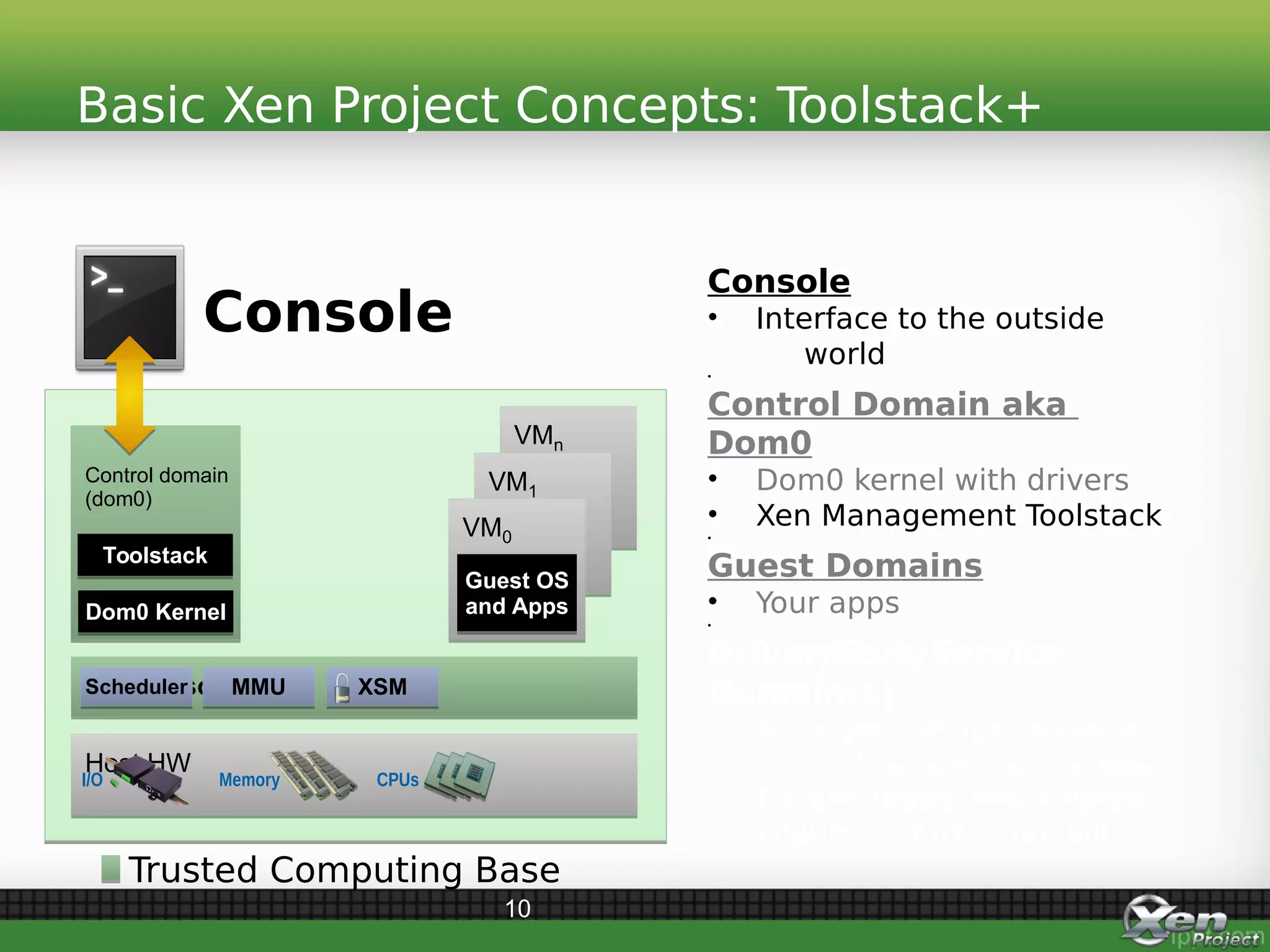

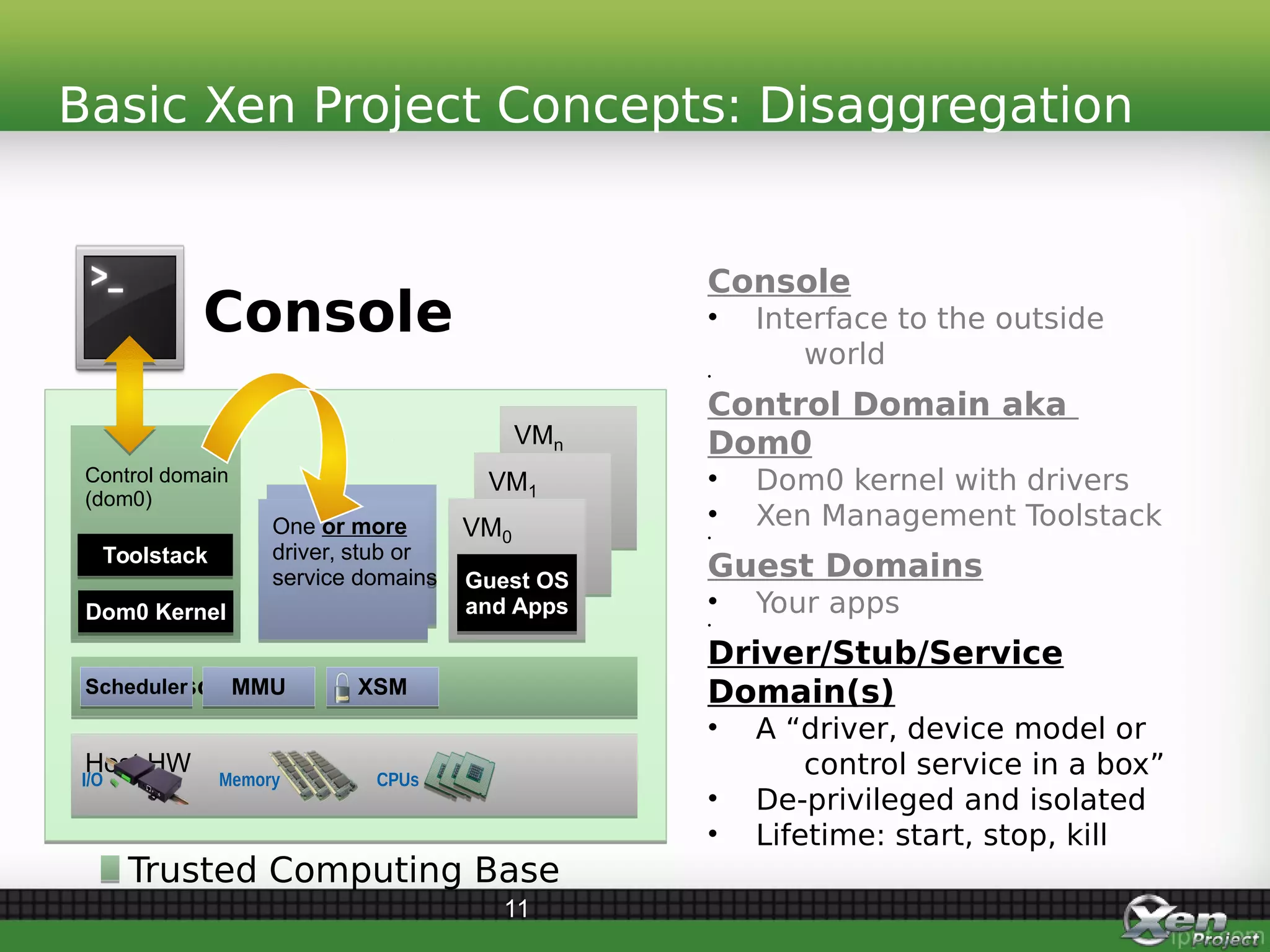

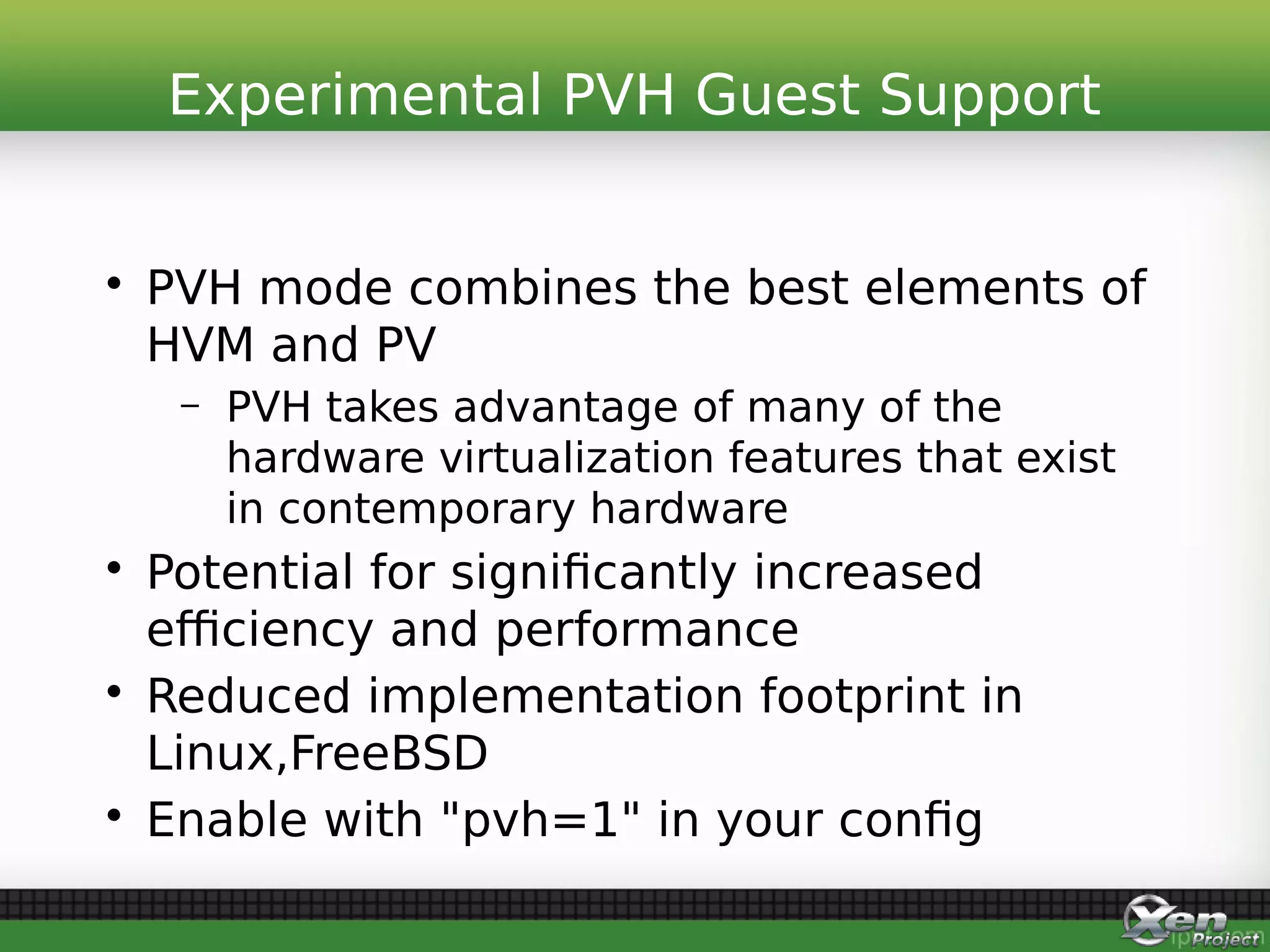

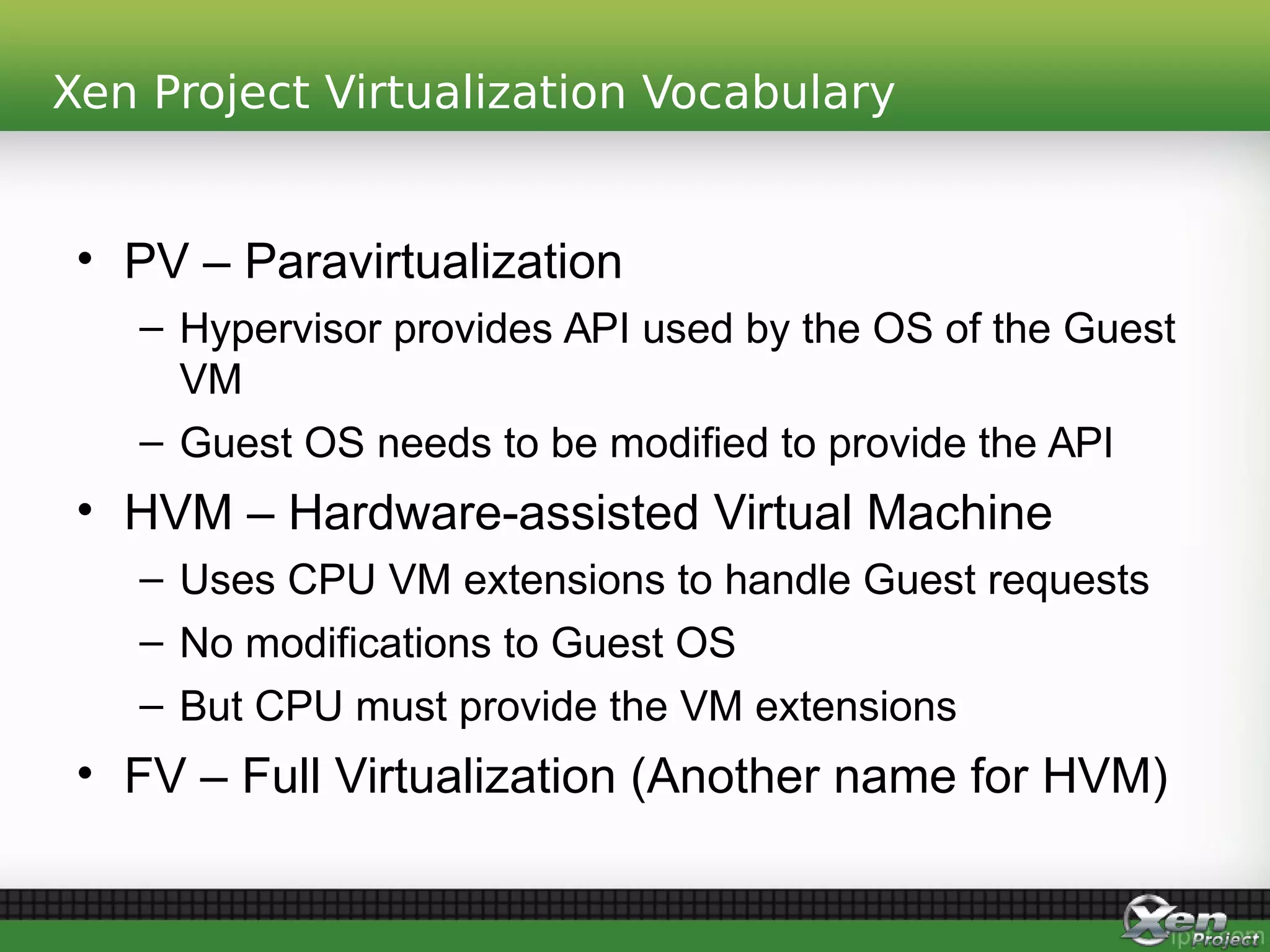

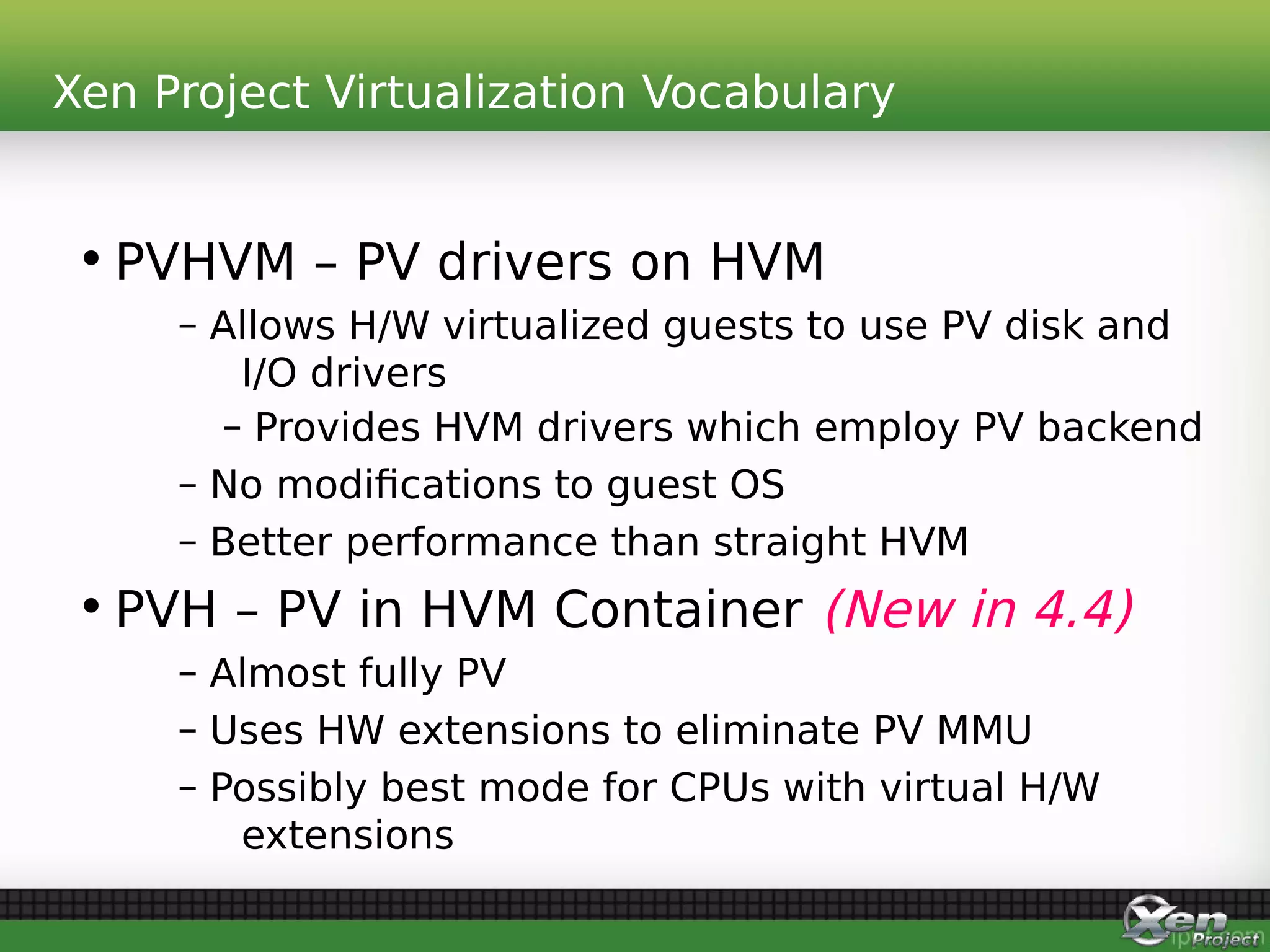

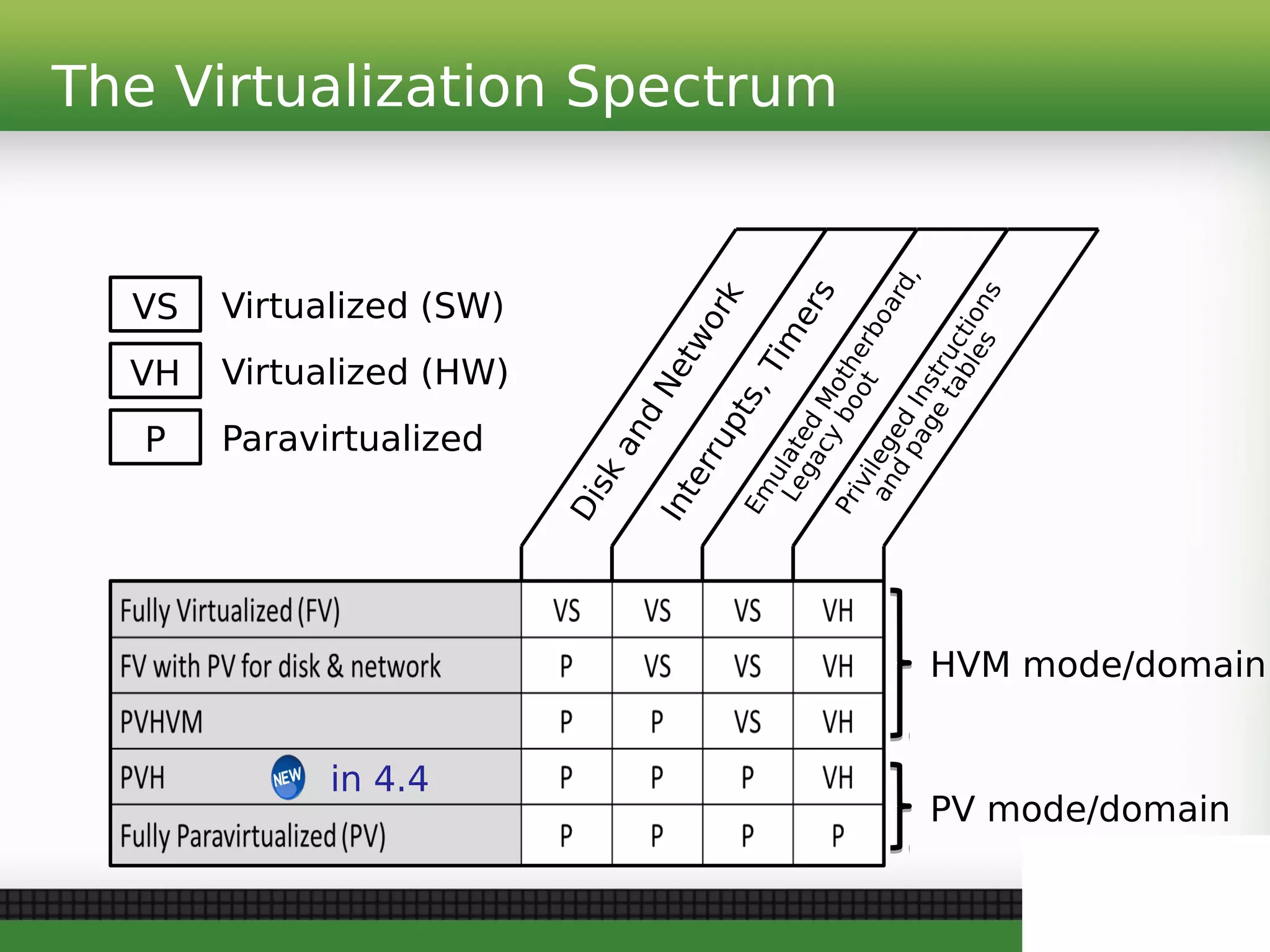

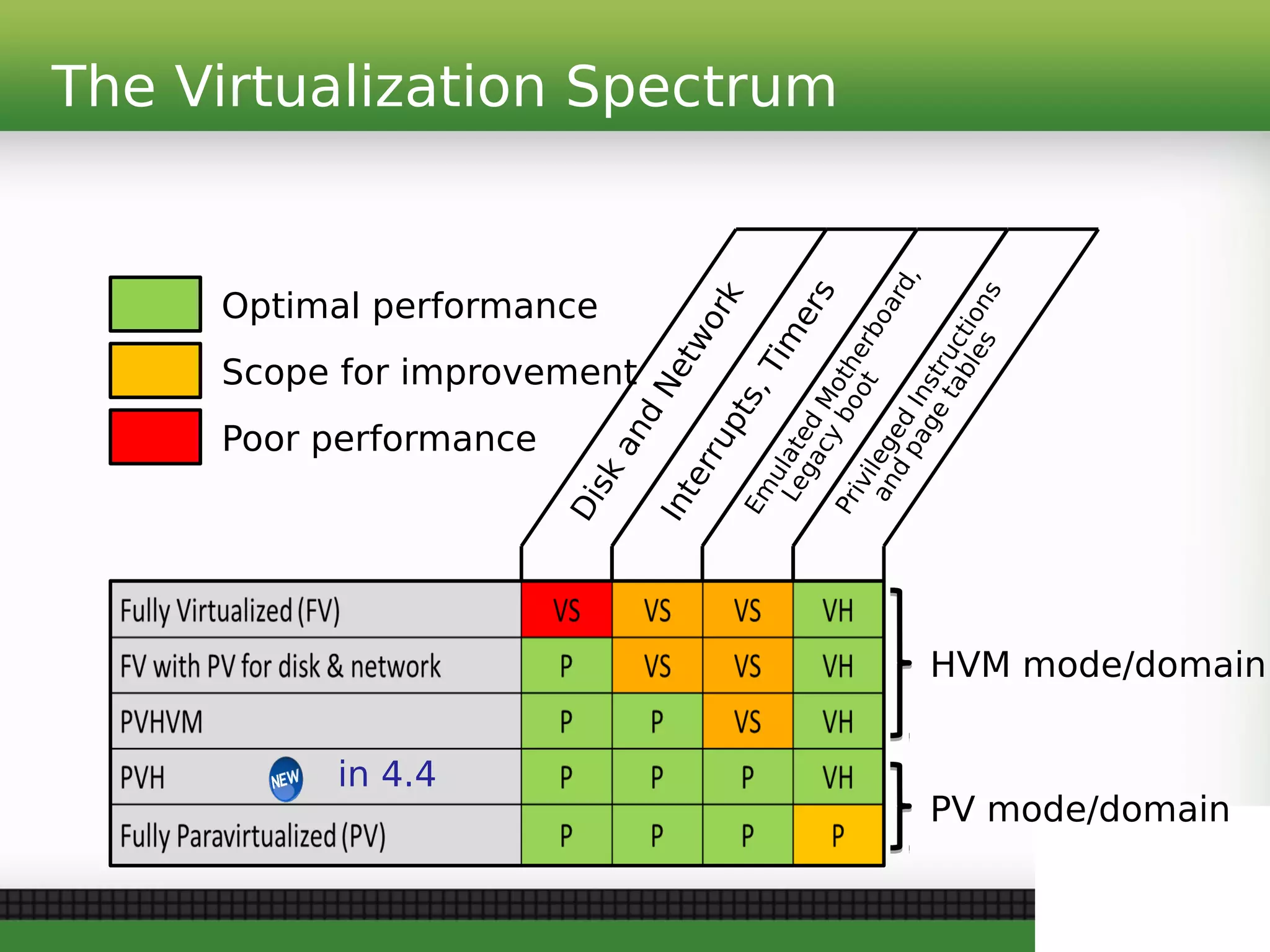

The document discusses the release of Xen Project 4.4, covering its features, supported architectures, and the enhancements delivered after 8 months of development. Key advances include improved support for nested virtualization, a new and more efficient PVH mode, and better integration with modern storage systems. It also outlines future directions for Xen, including stronger support for cloud operating systems, automotive applications, and further development of virtualization capabilities.