Database development life cycle

Database development life cycle, Database Planning, Systems Definition, Database Design, Application Design, Prototyping

Recommended

Database development life cycle unit 2 part 1

The document discusses the database development life cycle (DDLC), which is a six-phase process for designing, implementing, and maintaining a database system to meet an organization's information needs. The six phases are: 1) database initial study, 2) database design, 3) implementation and loading, 4) testing and evaluation, 5) operation, and 6) maintenance and evolution. Each phase is described in detail, from analyzing requirements in the initial study to ongoing maintenance activities once the database is operational.

Data models

This document provides an overview of data modeling concepts. It discusses the importance of data modeling, the basic building blocks of data models including entities, attributes, and relationships. It also covers different types of data models such as conceptual, logical, and physical models. The document discusses relational and non-relational data models as well as emerging models like object-oriented, XML, and big data models. Business rules and their role in database design are also summarized.

Database Design

The document discusses database design and the design process. It explains that database design involves determining the logical structure of tables and relationships between data elements. The design process consists of steps like determining relationships between data, dividing information into tables, specifying primary keys, and applying normalization rules. The document also covers entity-relationship diagrams and designing inputs and outputs, including input controls and designing report formats.

Introduction to Database

This document defines databases and DBMSs and describes their advantages over file-based systems, such as data independence and integrity. It explains database system components and architecture, including physical and logical data models. Key aspects covered are the data definition language used to create schemas, the data manipulation language used to query data, and transaction management for handling concurrent access and recovery. It also provides a brief history of database systems and discusses database users and the critical role of database administrators.

Database Normalization by Dr. Kamal Gulati

Amity University | FMS - DU | IMT | Stratford University | KKMI International Institute | AIMA | DTU

The presentation explains that database normalization restructures the logical data model of a database to:

Eliminate Redundancy

Organize Data Efficiently

Reduce the potential for Data Anomalies.

Integrity Constraints

Integrity constraints are rules used to maintain data quality and ensure accuracy in a relational database. The main types of integrity constraints are domain constraints, which define valid value sets for attributes; NOT NULL constraints, which enforce non-null values; UNIQUE constraints, which require unique values; and CHECK constraints, which specify value ranges. Referential integrity links data between tables through foreign keys, preventing orphaned records. Integrity constraints are enforced by the database to guard against accidental data damage.
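The constraint types named in this summary can be tried out in a few lines. The following is a minimal sketch using Python's built-in `sqlite3` module. The `department` and `employee` tables, their columns, and the helper names `build_demo_db` and `is_rejected` are hypothetical and chosen only for illustration; they do not come from the summarized document.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database whose tables declare each constraint type."""
    conn = sqlite3.connect(":memory:")
    # SQLite does not enforce foreign keys unless this pragma is switched on.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.execute(
        """
        CREATE TABLE department (
            dept_id INTEGER PRIMARY KEY,
            name    TEXT NOT NULL UNIQUE
        )
        """
    )
    conn.execute(
        """
        CREATE TABLE employee (
            emp_id  INTEGER PRIMARY KEY,
            email   TEXT NOT NULL UNIQUE,               -- NOT NULL and UNIQUE
            salary  REAL NOT NULL CHECK (salary >= 0),  -- CHECK on a value range
            dept_id INTEGER NOT NULL
                    REFERENCES department(dept_id)      -- referential integrity
        )
        """
    )
    conn.execute("INSERT INTO department (dept_id, name) VALUES (1, 'Sales')")
    conn.execute("INSERT INTO employee VALUES (1, 'a@example.com', 1000.0, 1)")
    return conn


def is_rejected(conn: sqlite3.Connection, sql: str) -> bool:
    """Return True if the database refuses the statement on integrity grounds."""
    try:
        conn.execute(sql)
    except sqlite3.IntegrityError:
        return True
    return False


conn = build_demo_db()
violations = {
    "NOT NULL": "INSERT INTO employee VALUES (2, NULL, 10.0, 1)",
    "UNIQUE": "INSERT INTO employee VALUES (3, 'a@example.com', 10.0, 1)",
    "CHECK": "INSERT INTO employee VALUES (4, 'b@example.com', -5.0, 1)",
    "FOREIGN KEY": "INSERT INTO employee VALUES (5, 'c@example.com', 10.0, 99)",
}
for label, statement in violations.items():
    print(label, "rejected:", is_rejected(conn, statement))
```

Each statement in `violations` breaks exactly one rule, so the database itself refuses the row, with no application-level validation involved. Other database systems may differ in syntax and defaults.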

Introduction to database & sql

The document provides an introduction to databases and SQL. It defines what a database is as a collection of related data containing information relevant to an enterprise. It then discusses the properties of databases, what a database management system (DBMS) is, the typical functionality of a DBMS including defining, constructing, manipulating databases, and providing security. It also summarizes the components of a database system including fields, records, queries, and reports. The document then introduces SQL and its uses for data manipulation, definition, and administration. It provides examples of SQL statements for creating tables, inserting, querying, updating, and deleting data.

Introduction to Database Management System

Data & Information, Drawbacks of File system, What is Database Management Systems, What is the need of DBMS, Examples of DBMS, Database Types, Applications of DBMS, Advantage of DBMS over file system, Disadvantages of DBMS, DBMS vs. File System

data modeling and models

This document provides an overview of data modeling, including definitions of key concepts like data models and data modeling. It describes the evolution of popular data models from hierarchical to network to relational to entity-relationship to object-oriented models. For each model, it outlines the basic concepts, advantages, and disadvantages. The document emphasizes that newer data models aimed to address shortcomings of previous approaches and capture real-world data and relationships.

Lecture 01 introduction to database

This document provides an overview of databases and database management systems (DBMS). It discusses how databases evolved from file systems to address flaws in data management. It describes what a DBMS is and its functions in managing the database structure and controlling data access. The document also summarizes different database models including hierarchical, network, relational, entity-relationship, and object-oriented models. It highlights advantages and disadvantages of each model.

Dbms architecture

Three level architecture is also called ANSI/SPARC architecture or three schema architecture

This framework is used for describing the structure of specific database systems (small systems may not support all aspects of the architecture)

In this architecture the database schemas can be defined at three levels explained in next slide

Data abstraction in DBMS

The document discusses three levels of data abstraction - view level, logical level, and physical level. It also discusses three schema architecture - external schema, conceptual schema, and internal schema. The levels and schemas describe how data is represented and accessed at different levels of abstraction, hiding low-level implementation details from users.

Data Modeling PPT

The document discusses data modeling, which involves creating a conceptual model of the data required for an information system. There are three types of data models - conceptual, logical, and physical. A conceptual data model describes what the system contains, a logical model describes how the system will be implemented regardless of the database, and a physical model describes the implementation using a specific database. Common elements of a data model include entities, attributes, and relationships. Data modeling is used to standardize and communicate an organization's data requirements and establish business rules.

DATABASE MANAGEMENT SYSTEM

A database management system (DBMS) is a collection of programs that enables users to create and maintain databases and control all access to them. The primary goal of a DBMS is to provide an environment that is both convenient and efficient for users to retrieve and store information.

Databases: Normalisation

Normalisation is a process that structures data in a relational database to minimize duplication and redundancy while preserving information. It aims to ensure data is structured efficiently and consistently through multiple forms. The stages of normalization include first normal form (1NF), second normal form (2NF), third normal form (3NF), Boyce-Codd normal form (BCNF), fourth normal form (4NF) and fifth normal form (5NF). Higher normal forms eliminate more types of dependencies to optimize the database structure.

Database Administration

The document provides an overview of the role and responsibilities of a database administrator (DBA). It discusses that a DBA supervises databases and database management systems to ensure availability. Key responsibilities include database security, monitoring, backup/recovery, and performance tuning. DBAs must have both technical skills and knowledge of database platforms. While important, the DBA role is challenging as it involves being available to resolve various technical issues at any time from different stakeholders. The document also provides salary data for DBA roles from an external source.

Object oriented database

An object database is a database management system in which information is represented in the form of objects as used in object-oriented programming. Object databases differ from relational databases, which are table-oriented. Object-relational databases are a hybrid of both approaches.

Types of Database Models

The document discusses different database models including hierarchical, network, relational, entity-relationship, object-oriented, object-relational, and semi-structured models. It provides details on the characteristics, structures, advantages and disadvantages of each model. It also includes examples and diagrams to illustrate concepts like hierarchical structure, network structure, relational schema, entity relationship diagrams, object oriented diagrams, and XML schema. The document appears to be teaching materials for a database management course that provides an overview of various database models.

Rdbms

This document discusses Relational Database Management Systems (RDBMS). It provides an overview of early database systems like hierarchical and network models. It then describes the key concepts of RDBMS including relations, attributes, and using tables, rows, and columns. RDBMS uses Structured Query Language (SQL) and has advantages over early systems by allowing data to be spread across multiple tables and accessed simultaneously by users.

Introduction to Database

This document provides an introduction to databases. It defines what a database is, the steps to create one, and benefits such as fast querying and flexibility. It describes database models like hierarchical, network, entity-relationship, and relational. Key database concepts are explained, including entities, attributes, primary keys, and foreign keys. Finally, it outlines database management system components, common users, and introduces Microsoft Access.

Data Models

A data model is a set of concepts that define the structure of data in a database. The three main types of data models are the hierarchical model, network model, and relational model. The hierarchical model uses a tree structure with parent-child relationships, while the network model allows many-to-many relationships but is more complex. The relational model - which underlies most modern databases - uses tables with rows and columns to represent data, and relationships are represented by values in columns.

Rdbms

~!!! Please Remember in my prayers May Almighty Allah or God bless you all with great happiness Ameen. !!!~

Advanced Database System

This presentation covers the progression from traditional database systems to advanced database systems and introduces newer database features.

SQL - Structured query language introduction

SQL is a language used to define, manipulate, and control relational databases. It has four main components: DDL for defining schemas; DML for manipulating data within schemas; DCL for controlling access privileges; and DQL for querying data. Some key SQL concepts covered include data definition using CREATE, ALTER, DROP statements; data manipulation using SELECT, INSERT, UPDATE, DELETE; and joining data across tables using conditions. Advanced topics include views, aggregation, subqueries, and modifying databases.
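The statement categories listed in this summary can be illustrated with a short sketch using Python's built-in `sqlite3` module. The `customer` and `orders` tables and their data are hypothetical, invented only for this example. SQLite has no `GRANT` or `REVOKE`, so the DCL category appears only as a comment.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# DDL: define and alter the schema.
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
conn.execute(
    "CREATE TABLE orders ("
    " id INTEGER PRIMARY KEY,"
    " customer_id INTEGER REFERENCES customer(id),"
    " total REAL)"
)
conn.execute("ALTER TABLE customer ADD COLUMN city TEXT")

# DML: insert, update, and delete rows.
conn.executemany(
    "INSERT INTO customer (id, name, city) VALUES (?, ?, ?)",
    [(1, "Ann", "Oslo"), (2, "Bob", "Rome")],
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(1, 20.0), (1, 5.0), (2, 7.5)],
)
conn.execute("UPDATE customer SET city = 'Bergen' WHERE id = 1")
conn.execute("DELETE FROM orders WHERE total < 6")

# DCL (for example GRANT SELECT ON customer TO some_user) is not available
# in SQLite, so it is not executed in this sketch.

# DQL: join the two tables on a condition and aggregate per customer.
rows = conn.execute(
    """
    SELECT c.name, c.city, SUM(o.total)
    FROM customer AS c
    JOIN orders AS o ON o.customer_id = c.id
    GROUP BY c.id, c.name, c.city
    ORDER BY c.id
    """
).fetchall()
print(rows)
```

The final query combines a join condition with aggregation, two of the topics the summary mentions. On a server-based DBMS the same DDL, DML, and query statements would typically sit alongside access-control statements.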

Database administrator

A database administrator is responsible for installing, configuring, upgrading, administering, monitoring and maintaining databases. Key responsibilities include database design, performance and capacity issues, data replication, and table maintenance. DBAs ensure proper data organization and management through their skills in SQL, database design, and knowledge of database management systems and operating systems. There are several types of DBAs based on their specific roles like system DBA, database architect, and data warehouse administrator.

Database concepts

This document provides an overview of database concepts and terminology. It discusses different types of databases based on number of users (single, multi, workgroup, enterprise), number of computers used (centralized, distributed), and how up-to-date the data is (production, data warehouse). It also covers database categorizations, the relational model, entity types and occurrences, relationship types and occurrences, attributes, keys, and E.F. Codd's 12 rules for relational databases.

D B M S Animate

The document discusses database concepts including:

- What a database is and its components like data, hardware, software, and users.

- Database management systems (DBMS) that enable users to define, create and maintain databases.

- Data models like hierarchical, network, and relational models. Relational databases using SQL are now most common.

- Database design including logical design, physical implementation, and application development.

- Key concepts like data abstraction, instances and schemas, normalization, and integrity rules.

Database Management System

The document provides an overview of database management systems (DBMS). It defines DBMS as software that creates, organizes, and manages databases. It discusses key DBMS concepts like data models, schemas, instances, and database languages. Components of a database system including users, software, hardware, and data are described. Popular DBMS examples like Oracle, SQL Server, and MS Access are listed along with common applications of DBMS in various industries.

Structure of this ChapterIn Section 11.1Section 11.docx

Structure of this Chapter

In Section 11.1 we discuss when a database developer might use fact-finding techniques. (Throughout this book we use the term “database developer” to refer to a person or group of people responsible for the analysis, design, and implementation of a database system.) In Section 11.2 we illustrate the types of facts that should be collected and the documentation that should be produced at each stage of the database system development lifecycle. In Section 11.3 we describe the five most commonly used fact-finding techniques and identify the advantages and disadvantages of each. In Section 11.4 we demonstrate how fact-finding techniques can be used to develop a database system for a case study called DreamHome, a property management company. We begin this section by providing an overview of the DreamHome case study. We then examine the first three stages of the database system development lifecycle, namely database planning, system definition, and requirements collection and analysis. For each stage we demonstrate the process of collecting data using fact-finding techniques and describe the documentation produced.

We finally demonstrate how some of these techniques may be used during the earlier stages of the database system development lifecycle using a property management company called DreamHome. The DreamHome case study is used throughout this book.

11.1 When Are Fact-Finding Techniques Used?

There are many occasions for fact-finding during the database system development life cycle. However, fact-finding is particularly crucial to the early stages of the lifecycle, including the database planning, system definition, and requirements collection and analysis stages. It is during these early stages that the database developer captures the essential facts necessary to build the required database. Fact-finding is also used during database design and the later stages of the lifecycle, but to a lesser extent. For example, during physical database design, fact-finding becomes technical as the database developer attempts to learn more about the DBMS selected for the database system. Also, during the final stage, operational maintenance, fact-finding is used to determine whether a system requires tuning to improve performance or further development to include new requirements.

Note that it is important to have a rough estimate of how much time and effort is to be spent on fact-finding for a database project. As we mentioned in Chapter 10, too much study too soon leads to paralysis by analysis. However, too little thought can result in an unnecessary waste of both time and.

Database management system (part 1)

This document discusses database management systems and the database development lifecycle. It defines DBMS as software that manages databases and provides functions like data definition, retrieval, updating and administration. It describes the characteristics of data in databases and advantages like redundancy control and data sharing. The document outlines the planning, analysis, design, implementation and maintenance phases of both the software development lifecycle and database development lifecycle. It also covers different database models like hierarchical, network and relational.

More Related Content

What's hot

data modeling and models

This document provides an overview of data modeling, including definitions of key concepts like data models and data modeling. It describes the evolution of popular data models from hierarchical to network to relational to entity-relationship to object-oriented models. For each model, it outlines the basic concepts, advantages, and disadvantages. The document emphasizes that newer data models aimed to address shortcomings of previous approaches and capture real-world data and relationships.

Lecture 01 introduction to database

This document provides an overview of databases and database management systems (DBMS). It discusses how databases evolved from file systems to address flaws in data management. It describes what a DBMS is and its functions in managing the database structure and controlling data access. The document also summarizes different database models including hierarchical, network, relational, entity-relationship, and object-oriented models. It highlights advantages and disadvantages of each model.

Dbms architecture

Dbms

architecture

Three level architecture is also called ANSI/SPARC architecture or three schema architecture

This framework is used for describing the structure of specific database systems (small systems may not support all aspects of the architecture)

In this architecture the database schemas can be defined at three levels explained in next slide

Data abstraction in DBMS

The document discusses three levels of data abstraction - view level, logical level, and physical level. It also discusses three schema architecture - external schema, conceptual schema, and internal schema. The levels and schemas describe how data is represented and accessed at different levels of abstraction, hiding low-level implementation details from users.

Data Modeling PPT

The document discusses data modeling, which involves creating a conceptual model of the data required for an information system. There are three types of data models - conceptual, logical, and physical. A conceptual data model describes what the system contains, a logical model describes how the system will be implemented regardless of the database, and a physical model describes the implementation using a specific database. Common elements of a data model include entities, attributes, and relationships. Data modeling is used to standardize and communicate an organization's data requirements and establish business rules.

DATABASE MANAGEMENT SYSTEM

A database management system (DBMS) is a collection of programs that enables users to create and maintain databases and control all access to them. The primary goal of a DBMS is to provide an environment that is both convenient and efficient for users to retrieve and store information.

Databases: Normalisation

Normalisation is a process that structures data in a relational database to minimize duplication and redundancy while preserving information. It aims to ensure data is structured efficiently and consistently through multiple forms. The stages of normalization include first normal form (1NF), second normal form (2NF), third normal form (3NF), Boyce-Codd normal form (BCNF), fourth normal form (4NF) and fifth normal form (5NF). Higher normal forms eliminate more types of dependencies to optimize the database structure.

Database Administration

The document provides an overview of the role and responsibilities of a database administrator (DBA). It discusses that a DBA supervises databases and database management systems to ensure availability. Key responsibilities include database security, monitoring, backup/recovery, and performance tuning. DBAs must have both technical skills and knowledge of database platforms. While important, the DBA role is challenging as it involves being available to resolve various technical issues at any time from different stakeholders. The document also provides salary data for DBA roles from an external source.

Object oriented database

An object database is a database management system in which information is represented in the form of objects as used in object-oriented programming. Object databases are different from relational databases which are table-oriented. Object-relational databases are a hybrid of both approaches

Types of Database Models

The document discusses different database models including hierarchical, network, relational, entity-relationship, object-oriented, object-relational, and semi-structured models. It provides details on the characteristics, structures, advantages and disadvantages of each model. It also includes examples and diagrams to illustrate concepts like hierarchical structure, network structure, relational schema, entity relationship diagrams, object oriented diagrams, and XML schema. The document appears to be teaching materials for a database management course that provides an overview of various database models.

Rdbms

This document discusses Relational Database Management Systems (RDBMS). It provides an overview of early database systems like hierarchical and network models. It then describes the key concepts of RDBMS including relations, attributes, and using tables, rows, and columns. RDBMS uses Structured Query Language (SQL) and has advantages over early systems by allowing data to be spread across multiple tables and accessed simultaneously by users.

Introduction to Database

This document provides an introduction to databases. It defines what a database is, the steps to create one, and benefits such as fast querying and flexibility. It describes database models like hierarchical, network, entity-relationship, and relational. Key database concepts are explained, including entities, attributes, primary keys, and foreign keys. Finally, it outlines database management system components, common users, and introduces Microsoft Access.

Data Models

A data model is a set of concepts that define the structure of data in a database. The three main types of data models are the hierarchical model, network model, and relational model. The hierarchical model uses a tree structure with parent-child relationships, while the network model allows many-to-many relationships but is more complex. The relational model - which underlies most modern databases - uses tables with rows and columns to represent data, and relationships are represented by values in columns.

Rdbms

~!!! Please Remember in my prayers May Almighty Allah or God bless you all with great happiness Ameen. !!!~

Advanced Database System

Here you can gain knowledge from traditional database system to advanced database system and also know about that new features of database.

SQL - Structured query language introduction

SQL is a language used to define, manipulate, and control relational databases. It has four main components: DDL for defining schemas; DML for manipulating data within schemas; DCL for controlling access privileges; and DQL for querying data. Some key SQL concepts covered include data definition using CREATE, ALTER, DROP statements; data manipulation using SELECT, INSERT, UPDATE, DELETE; and joining data across tables using conditions. Advanced topics include views, aggregation, subqueries, and modifying databases.

Database administrator

A database administrator is responsible for installing, configuring, upgrading, administering, monitoring and maintaining databases. Key responsibilities include database design, performance and capacity issues, data replication, and table maintenance. DBAs ensure proper data organization and management through their skills in SQL, database design, and knowledge of database management systems and operating systems. There are several types of DBAs based on their specific roles like system DBA, database architect, and data warehouse administrator.

Database concepts

This document provides an overview of database concepts and terminology. It discusses different types of databases based on number of users (single, multi, workgroup, enterprise), number of computers used (centralized, distributed), and how up-to-date the data is (production, data warehouse). It also covers database categorizations, the relational model, entity types and occurrences, relationship types and occurrences, attributes, keys, and E.F. Codd's 12 rules for relational databases.

D B M S Animate

The document discusses database concepts including:

- What a database is and its components like data, hardware, software, and users.

- Database management systems (DBMS) that enable users to define, create and maintain databases.

- Data models like hierarchical, network, and relational models. Relational databases using SQL are now most common.

- Database design including logical design, physical implementation, and application development.

- Key concepts like data abstraction, instances and schemas, normalization, and integrity rules.

Database Management System

The document provides an overview of database management systems (DBMS). It defines DBMS as software that creates, organizes, and manages databases. It discusses key DBMS concepts like data models, schemas, instances, and database languages. Components of a database system including users, software, hardware, and data are described. Popular DBMS examples like Oracle, SQL Server, and MS Access are listed along with common applications of DBMS in various industries.

What's hot (20)

Similar to Database development life cycle

Structure of this ChapterIn Section 11.1Section 11.docx

Structure of this Chapter

In Section 11.1 we discuss when a database developer might use fact-finding techniques. (Throughout this book we use the term “database developer” to refer to a person or group of people responsible for the analysis, design, and implementation of a database system.) In Section 11.2 we illustrate the types of facts that should be collected and the documentation that should be produced at each stage of the database system development lifecycle. In Section 11.3 we describe the five most commonly used fact-finding techniques and identify the advantages and disadvantages of each. In Section 11.4 we demonstrate how fact-finding techniques can be used to develop a database system for a case study called DreamHome, a property management company. We begin this section by providing an overview of the DreamHome case study. We then examine the first three stages of the database system development lifecycle, namely database planning, system definition, and requirements collection and analysis. For each stage we demonstrate the process of collecting data using fact-finding techniques and describe the documentation produced.

of each. We finally demonstrate how some of these techniques may be used during the earlier stages of the database system development lifecycle using a property management company called DreamHome. The DreamHome case study is used throughout this book.

11.1 When Are Fact-Finding Techniques Used?

There are many occasions for fact-finding during the database system development life cycle. However, fact-finding is particularly crucial to the early stages of the lifecycle, including the database planning, system definition, and requirements collection and analysis stages. It is during these early stages that the database developer captures the essential facts necessary to build the required database. Fact-finding is also used during database design and the later stages of the lifecycle, but to a lesser extent. For example, during physical database design, fact-finding becomes technical as the database developer attempts to learn more about the DBMS selected for the database system. Also, during the final stage, operational maintenance, fact-finding is used to determine whether a system requires tuning to improve performance or further development to include new requirements.

Note that it is important to have a rough estimate of how much time and effort is to be spent on fact-finding for a database project. As we mentioned in Chapter 10, too much study too soon leads to paralysis by analysis. However, too little thought can result in an unnecessary waste of both time and.

Database management system (part 1)

This document discusses database management systems and the database development lifecycle. It defines DBMS as software that manages databases and provides functions like data definition, retrieval, updating and administration. It describes the characteristics of data in databases and advantages like redundancy control and data sharing. The document outlines the planning, analysis, design, implementation and maintenance phases of both the software development lifecycle and database development lifecycle. It also covers different database models like hierarchical, network and relational.

Database Management Systems 2

The document discusses the database development life cycle (DBLC), which follows a similar process to the systems development life cycle (SDLC). The DBLC involves gathering requirements, database analysis, design, implementation, testing and evaluation, and maintenance. It describes each stage in detail, including conceptual, logical, and physical data modeling during the design stage. The goal is to systematically plan and develop a database to meet requirements while ensuring completeness, integrity, flexibility, and usability.

Week 7 Database Development Process

Database Development Process: A core aspect of software engineering is the subdivision of the development process into a series of phases, or steps, each of which focuses on one part of the development.

Db lecture 2

This lecture gives a detailed explanation of the database development life cycle. It presents the software development cycle in terms of databases, explains the architecture of a database, and covers the three-level ANSI-SPARC architecture.

A CRUD Matrix

This document discusses various techniques used in software project management including CRUD matrices, Gantt charts, PERT charts, feasibility analysis, and cost-benefit analysis. A CRUD matrix identifies the database tables involved in create, read, update, and delete operations for different user scenarios of a website. Gantt charts show project activities and timelines while PERT charts illustrate task dependencies in a project. Feasibility analysis evaluates the technical, economic, operational, and legal viability of a project. Cost-benefit analysis compares monetary costs and benefits to determine if a project's benefits outweigh its costs. These techniques help software project managers effectively plan, schedule, and control development projects.
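As a rough illustration of the CRUD matrix idea described above, the sketch below records which tables each user scenario creates, reads, updates, or deletes. The scenario names, table names, and helper functions are all invented for this example and do not come from the summarized document.

```python
# Hypothetical CRUD matrix: scenario -> {table: CRUD letters}.
# All scenario and table names are invented for illustration.
CRUD_MATRIX = {
    "register_user": {"users": "C"},
    "place_order": {"users": "R", "orders": "C", "products": "RU"},
    "cancel_order": {"orders": "RD", "products": "U"},
}


def tables_for(operation):
    """Return, per table, the scenarios that perform the given CRUD letter."""
    result = {}
    for scenario, tables in CRUD_MATRIX.items():
        for table, ops in tables.items():
            if operation in ops:
                result.setdefault(table, []).append(scenario)
    return result


def uncovered_operations(table):
    """Return the CRUD letters that no scenario performs on the table."""
    used = set()
    for tables in CRUD_MATRIX.values():
        used.update(tables.get(table, ""))
    return set("CRUD") - used
```

A check such as `uncovered_operations("users")` can point to gaps in the design, for example a table that is created and read but never updated or deleted by any scenario.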

Database Development Lifecycle (DBMS DDLC)

The document discusses the database development life cycle (DDLC). It outlines the 6 phases of the DDLC: database planning, database design, implementation and downloading, testing and evaluation, operation, and maintenance and evolution. The database design phase is described as the most important, involving conceptual, logical, and physical design activities like data modeling, normalization, and validating the data model. The goals and key activities of each phase are summarized.

Project report

This document provides information about a student details management system (SDMS) software project created by a student. It includes an introduction describing the purpose of automating a student information system. It also includes sections on the objectives, theoretical background of databases, MySQL and Python, problem definition and analysis, and system design including database and code details. The overall aim is to develop a program with a graphical user interface to allow users to view and update student information stored in a centralized database.

Advance database system (part 2)

Introduction to File Processing Systems. Advantages of Database Approach. (Database) System Development Life Cycle (SDLC).

Bi Capacity Planning

Traditional operational views of capacity planning are not the same as BI capacity planning. I created this presentation to help establish a BI infrastructure capacity planning process.

Web Database integration

Database integration allows data from multiple applications to be stored in a central database so it can be easily accessed and used across different applications. This involves developing a plan so that all client applications are accounted for. Integrating a database with a website allows web visitors to add, remove, and update information in the database using a web browser and often search the database. Requirements analysis determines what type of database is needed based on factors like daily data volume and amount of master file data.

Notes of DBMS Introduction to Database Design

This document outlines the six steps of database design: requirements analysis, conceptual design, logical design, schema refinement, physical design, and application/security design. It describes each step in detail. Requirements analysis involves understanding user needs. Conceptual design models the data using an entity-relationship diagram. Logical design maps the conceptual model to a relational schema. Schema refinement improves normalization. Physical design optimizes performance. Application/security design specifies user roles and access permissions.

Sdlc1

The document discusses the system development life cycle (SDLC), which includes various phases for developing and maintaining systems. The key phases are: system investigation, feasibility study, system analysis, system design, coding, testing, implementation, and maintenance. The feasibility study phase evaluates the technical, operational, economic, motivational, and schedule feasibility of a proposed system. The system analysis phase involves studying user requirements and the current system. System design then specifies how the new system will meet requirements through elements like data design, user interface design, and process design. This produces specifications for the system.

Data Warehouses & Deployment By Ankita dubey

This document contains the notes about data warehouses and life cycle for data warehouse deployment project. This can be useful for students or working professionals to gain the basic knowledge about Data warehouses.

Database Design

The document discusses database design and the waterfall approach to systems development. It describes the waterfall approach as involving a series of sequential phases from strategy and planning to maintenance. Some problems with the waterfall approach are that users may not properly communicate requirements or understand their own needs, and analysts can misunderstand requirements. The document also discusses conceptual, logical, and physical phases of database design, moving from requirements to implementing database technology.

Ems

This document describes the development of an employee management system. It discusses:

1) The programming tools used - Microsoft Access for the database and C# with .NET Framework for the application. Access allows constructing relational databases while C# provides an object-oriented interface.

2) The database design, which includes 6 tables - one main employee table and 5 child tables for additional employee details like work history, time records, and contact information. The tables are related through primary and foreign keys.

3) The development process, which first analyzed user needs, designed the database structure, then constructed the graphical user interface in the application to interact with the database according to its structure.
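The parent and child table relationship described above can be sketched as follows. This is only an illustration under stated assumptions: the summarized project used Microsoft Access and C#, whereas this sketch swaps in Python's built-in `sqlite3` module, shows one child table instead of five, and uses invented table and column names.

```python
import sqlite3


def build_schema():
    """Create an in-memory database with one parent and one child table."""
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this pragma is switched on.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(
        """
        CREATE TABLE employee (
            employee_id INTEGER PRIMARY KEY,   -- primary key of the main table
            full_name   TEXT NOT NULL
        );
        CREATE TABLE work_history (
            history_id  INTEGER PRIMARY KEY,
            employee_id INTEGER NOT NULL
                        REFERENCES employee(employee_id),  -- foreign key to parent
            employer    TEXT NOT NULL
        );
        """
    )
    return conn
```

With the foreign key in place, inserting a `work_history` row for an `employee_id` that does not exist raises `sqlite3.IntegrityError`, which is how the database keeps child rows tied to a valid parent.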

Project PlanFor our Project Plan, we are going to develop.docx

The document outlines a project plan to develop a new payroll system for an organization using the waterfall development methodology. It estimates the project will take 33.3 person-months to complete based on industry standards of allocating 15% of effort to planning, 20% to analysis, 35% to design, and 30% to implementation. It also discusses developing a work plan, staffing the project, and coordinating project activities to manage the system development life cycle.
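The phase breakdown implied by these figures can be worked out with a few lines of arithmetic. The sketch below uses only the total and the percentages quoted above; the function name and the choice of `Decimal` (to avoid binary floating-point rounding) are assumptions made for this example.

```python
from decimal import Decimal

# Figures quoted in the summary above.
TOTAL_EFFORT = Decimal("33.3")  # person-months
PHASE_SHARES = {
    "planning": Decimal("0.15"),
    "analysis": Decimal("0.20"),
    "design": Decimal("0.35"),
    "implementation": Decimal("0.30"),
}


def allocate(total, shares):
    """Split the total effort across phases according to their shares."""
    if sum(shares.values()) != 1:
        raise ValueError("phase shares must sum to 100%")
    return {phase: total * share for phase, share in shares.items()}


if __name__ == "__main__":
    for phase, effort in allocate(TOTAL_EFFORT, PHASE_SHARES).items():
        print(f"{phase}: {effort} person-months")
```

Under these shares, design is the largest single phase, at a little over a third of the 33.3 person-months.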

Journal of Physics Conference SeriesPAPER • OPEN ACCESS.docx

Journal of Physics: Conference Series

PAPER • OPEN ACCESS

The methodology of database design in organization management systems

To cite this article: I L Chudinov et al 2017 J. Phys.: Conf. Ser. 803 012030


https://doi.org/10.1088/1742-6596/803/1/012030


The methodology of database design in organization management systems

I L Chudinov, V V Osipova, Y V Bobrova

Tomsk Polytechnic University, 30, Lenina ave., Tomsk, 634050, Russia

E-mail: [email protected]

Abstract. The paper describes the unified methodology of database design for management information systems. Designing the conceptual information model for the domain area is the most important and labor-intensive stage in database design. Based on the proposed integrated approach to designing the conceptual information model, the main principles of developing relational databases are provided and users’ information needs are considered. According to the methodology, the process of designing the conceptual information model includes three basic stages, which are defined in detail. Finally, the article describes the process of performing the results of analyzing users’ information needs and the rationale for the use of classifiers.

1. Introduction

Management information systems are among the most important components of the information technologies (IT) used in a company. They are usually classified by function into the following systems: Manufacturing Execution Systems (MES), Human Resource Management (HRM), Enterprise Content Management (ECM), Customer Relationship Management (CRM), etc. [1]. Such systems use a special structured database and are required for reengineering of the whole enterprise management system, while the integration makes it difficult to use them. These systems are expensive enough and particularly devel.

Fulltext01

The document describes the development of an employee management system. It discusses analyzing the data needed for the system and designing relational database tables to store employee information. This includes tables for employee details, work history, time records, salary, contacts, and holidays. The document also covers using C# and Microsoft Access to build the graphical user interface and connect it to the backend database. Functions are implemented to retrieve, add, update and delete employee records from the database.


More from Afrasiyab Haider

How to know value of a company

How to know value of a company

How to merge

When to merge

How to acquire

when to acquire

What is contracted R&D

Providing feedback for effective listening

Communication Skills: Providing feedback for effective listening, presentation, techniques, types of feedback

Rectification of errors (Financial Accounting)

This document discusses the rectification of accounting errors. It defines rectification of errors as the systematic correction of errors in accounting records. It outlines two types of errors: two-sided errors, which do not affect the trial balance, and one-sided errors, which do affect the trial balance. Specific two-sided errors discussed include errors of omission, commission, original entry, principal, and compensating errors. A suspense account is introduced as an account used to temporarily record amounts from one-sided errors until the proper account can be identified.

Octal to binary and octal to hexa decimal conversions

The document summarizes various number systems and coding techniques:

1. It explains how to perform addition, subtraction, and conversion in octal number systems with examples.

2. It describes binary coded decimals (BCD) and provides the counting table.

3. It explains ASCII codes and provides the code table.

4. It describes excess-3 code and provides the code table.

5. It summarizes Unicode and some common encoding formats like UTF-8.

6. It explains Gray code and provides an example code table.
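The octal conversions named in the title can be sketched as below. This is a minimal illustration of the usual digit-grouping method (each octal digit expands to three bits, and bits are regrouped in fours for hexadecimal); the function names are invented here and the summarized document may present the method differently.

```python
# Each octal digit maps to exactly three binary digits.
OCTAL_DIGIT_BITS = {str(d): format(d, "03b") for d in range(8)}


def octal_to_binary(octal):
    """Convert an octal string to binary by expanding each digit to 3 bits."""
    bits = "".join(OCTAL_DIGIT_BITS[digit] for digit in octal)
    # Drop leading zeros, but keep a single "0" for the value zero.
    return bits.lstrip("0") or "0"


def octal_to_hex(octal):
    """Convert octal to hexadecimal by regrouping the binary digits in fours."""
    bits = octal_to_binary(octal)
    # Pad on the left so the length is a multiple of four.
    padded = bits.zfill((len(bits) + 3) // 4 * 4)
    groups = (padded[i:i + 4] for i in range(0, len(padded), 4))
    return "".join(format(int(group, 2), "X") for group in groups)
```

For example, octal 725 expands to 111 010 101, which regroups as 0001 1101 0101 and therefore reads as 1D5 in hexadecimal.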

RUP - Rational Unified Process

The Rational Unified Process is a software development approach that uses the Rational Objectory model to develop software according to predefined principles.

Normalization in Database

what is normalization, what is 1st normal form, what is 2nd normal form, what is 3rd normal form, what is Anomaly, how to normalize table in database,

Facts finding techniques in Database

Fact finding techniques, Database, Facts finding techniques in database,Methods of facts finding in Database

File organization in database

1. File organization in databases involves grouping related data records into tables and storing the tables as files in memory for efficient data access and querying.

2. There are three main types of file organization: sequential, indexed, and hashed. Sequential organization stores records one after the other, indexed uses a record key to order records, and hashed uses a hash function to directly map records to storage blocks.

3. The objectives of file organization are to optimally select and access records quickly, allow efficient data modification, avoid duplicate records, and minimize storage costs.
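Of the three organizations listed above, the hashed one is the easiest to sketch in a few lines: a hash function maps each record key straight to a storage block. The block count, class name, and the use of `zlib.crc32` as the hash function are assumptions made for this illustration, and in-memory dictionaries stand in for disk blocks.

```python
import zlib

NUM_BLOCKS = 4  # hypothetical number of storage blocks


def block_for(key, num_blocks=NUM_BLOCKS):
    """Map a record key to a block number with a deterministic hash."""
    return zlib.crc32(key.encode("utf-8")) % num_blocks


class HashedFile:
    """Toy hashed file: each block is a dict standing in for a disk block."""

    def __init__(self, num_blocks=NUM_BLOCKS):
        self.blocks = [{} for _ in range(num_blocks)]

    def insert(self, key, record):
        # Writing under the same key replaces the old record, so no duplicates.
        self.blocks[block_for(key, len(self.blocks))][key] = record

    def lookup(self, key):
        # Only the one block chosen by the hash needs to be inspected.
        return self.blocks[block_for(key, len(self.blocks))].get(key)
```

A lookup touches a single block regardless of how many records are stored, which is the direct-access property the summary attributes to hashed organization. A sequential file, by contrast, would scan records in order.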

Software development life cycle

Software development is a process that involves planning, designing, coding, testing, and maintaining software. It includes identifying requirements, analyzing requirements, designing the software architecture and components, programming, testing, and maintaining the software. There are various software development models that guide the process, such as waterfall, rapid application development, and agile development. Choosing the right development model and tools, clearly defining requirements, managing changes, and testing thoroughly are important best practices for successful software projects.

Expected value of random variables

The document defines expected value as the sum of each possible value of a random variable multiplied by its probability. For a random variable X that can take on values x1, x2, x3, etc. with probabilities p(x1), p(x2), p(x3), etc., the expected value is defined as E(X) = ∑ xk p(xk). An example is provided calculating the expected value of Y, the number of microcomputers getting out of order per year at a university, which is determined to be 1.4 based on the given probabilities.
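The definition E(X) = ∑ xk p(xk) translates directly into code. The sketch below is an illustration only: the summary does not give the probabilities behind its microcomputer example, so a fair six-sided die is used as a stand-in distribution, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction


def expected_value(distribution):
    """Compute E(X) = sum of x * p(x) for a {value: probability} mapping."""
    if sum(distribution.values()) != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(x * p for x, p in distribution.items())


# Stand-in example (not from the summarized document): a fair six-sided die.
fair_die = {face: Fraction(1, 6) for face in range(1, 7)}

if __name__ == "__main__":
    print(expected_value(fair_die))  # prints 7/2
```

The result 7/2 (that is, 3.5) is not a value the die can actually show, which is a useful reminder that an expected value is a probability-weighted average and not a likely outcome.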

Class diagram of school management system (OOP)

The document describes a class diagram for a school management system with the following classes: Student, Employee, Examination, Accounts, and Show. The Student class stores student information like name, age, roll number, class, and contact details. The Employee class models employee data such as name, type, designation, salary and contact information. The Examination class keeps student exam results linked to their name, roll number and class. The Accounts class manages fee and expense records. And the Show class displays student records, employee information, fees and expenses.

What is difference between dbms and rdbms

DBMS stores data as files while RDBMS stores data in tabular form with relationships between tables. DBMS is meant for small organizations and single users, does not support normalization, and lacks security features. RDBMS supports large data, multiple users, normalization, security, distributed databases, and examples include MySQL, PostgreSQL, and Oracle. The key difference is that RDBMS represents data in tables with relationships while DBMS stores data as files without relationships.

Relation of psychology and IT

The document discusses the impact of information technology and psychology on human life. It outlines several ways that IT has advantages such as globalization, lower costs, and new jobs, but also disadvantages like unemployment and privacy issues. Psychology is defined as the study of the mind and behavior, and its uses in daily life include building relationships and self-confidence. The document then analyzes the impact of IT on several domains including positive effects on business communication but high costs, and positive effects on education through online learning but also potential misinformation. The impact of IT on society, agriculture, and banking are also summarized, highlighting both benefits and disadvantages.

What is Psychology and variables in psychology?

Psychology is the scientific study of the human mind and behavior. There are different types of variables studied in psychology like the dependent and independent variables. Controls are used in experiments to minimize the influence of outside factors other than the independent variable being tested. Some common research methods in psychology include experimental methods, clinical methods, and systematic observation. Experimental methods use controlled experiments and the scientific method. Clinical methods involve diagnosing and treating patients in clinical settings. Systematic observation methodically observes and collects data to support or disprove hypotheses.

History of operating systems

What is operating system? , what is history of operating system? , history of operating system? , operating system,


File organization in database

1. File organization in databases involves grouping related data records into tables and storing the tables as files in memory for efficient data access and querying.

2. There are several methods of file organization including sequential, indexed, and hashed. Sequential organization stores records one after the other, indexed uses a record key to order records, and hashed uses a hash function to directly map records to storage blocks.

3. The objectives of file organization are to optimally select and access records quickly, allow efficient data modification, avoid duplicate records, and minimize storage costs.

Should prisoners be allowed to cast vote?

Prisoners should have the right to vote according to the principles of democracy. Around 2 million prisoners in the US are denied this right. While some countries and US states restrict prisoner voting, Vermont and Maine allow all prisoners to vote. The 15th Amendment states the right to vote cannot be denied based on race or past actions, but this is not applied to prisoners in most states. Advocates argue that denying prisoners the right to vote is inconsistent with democratic values of participation and that their votes could influence election outcomes.

Politics and martial law in pakistan

Politics of Pakistan

History of politics in Pakistan

Martial laws in Pakistan

Dictatorship in Pakistan

Html and its tags

What is HTML?

HTML basics

What are tags of HTML?

How to write its tags?

Sample of HTML pages.


Recently uploaded

The Microsoft 365 Migration Tutorial For Beginner.pptx

This presentation will help you understand the power of Microsoft 365. However, we have mentioned every productivity app included in Office 365. Additionally, we have suggested the migration situation related to Office 365 and how we can help you.

You can also read: https://www.systoolsgroup.com/updates/office-365-tenant-to-tenant-migration-step-by-step-complete-guide/

Demystifying Knowledge Management through Storytelling

The Department of Veteran Affairs (VA) invited Taylor Paschal, Knowledge & Information Management Consultant at Enterprise Knowledge, to speak at a Knowledge Management Lunch and Learn hosted on June 12, 2024. All Office of Administration staff were invited to attend and received professional development credit for participating in the voluntary event.

The objectives of the Lunch and Learn presentation were to:

- Review what KM ‘is’ and ‘isn’t’

- Understand the value of KM and the benefits of engaging

- Define and reflect on your “what’s in it for me?”

- Share actionable ways you can participate in Knowledge Capture & Transfer

[OReilly Superstream] Occupy the Space: A grassroots guide to engineering (an...

The typical problem in product engineering is not bad strategy, so much as “no strategy”. This leads to confusion, lack of motivation, and incoherent action. The next time you look for a strategy and find an empty space, instead of waiting for it to be filled, I will show you how to fill it in yourself. If you’re wrong, it forces a correction. If you’re right, it helps create focus. I’ll share how I’ve approached this in the past, both what works and lessons for what didn’t work so well.

"$10 thousand per minute of downtime: architecture, queues, streaming and fin...

Direct losses from downtime in 1 minute = $5-$10 thousand dollars. Reputation is priceless.

As part of the talk, we will consider the architectural strategies necessary for the development of highly loaded fintech solutions. We will focus on using queues and streaming to efficiently work and manage large amounts of data in real-time and to minimize latency.

We will focus special attention on the architectural patterns used in the design of the fintech system, microservices and event-driven architecture, which ensure scalability, fault tolerance, and consistency of the entire system.

What is an RPA CoE? Session 1 – CoE Vision

In the first session, we will review the organization's vision and how this has an impact on the COE Structure.

Topics covered:

• The role of a steering committee

• How do the organization’s priorities determine CoE Structure?

Speaker:

Chris Bolin, Senior Intelligent Automation Architect Anika Systems

A Deep Dive into ScyllaDB's Architecture

This talk will cover ScyllaDB Architecture from the cluster-level view and zoom in on data distribution and internal node architecture. In the process, we will learn the secret sauce used to get ScyllaDB's high availability and superior performance. We will also touch on the upcoming changes to ScyllaDB architecture, moving to strongly consistent metadata and tablets.

AWS Certified Solutions Architect Associate (SAA-C03)

AWS Certified Solutions Architect Associate (SAA-C03)

"Frontline Battles with DDoS: Best practices and Lessons Learned", Igor Ivaniuk

In this talk we will discuss DDoS protection tools and best practices, network architectures, and what AWS has to offer. We will also look into one of the largest DDoS attacks on Ukrainian infrastructure, which happened in February 2022. We'll see what techniques helped to keep web resources available for Ukrainians and how AWS improved DDoS protection for all customers based on the Ukraine experience.

Day 2 - Intro to UiPath Studio Fundamentals

In our second session, we shall learn all about the main features and fundamentals of UiPath Studio that enable us to use the building blocks for any automation project.

📕 Detailed agenda:

Variables and Datatypes

Workflow Layouts

Arguments

Control Flows and Loops

Conditional Statements

💻 Extra training through UiPath Academy:

Variables, Constants, and Arguments in Studio

Control Flow in Studio

Getting the Most Out of ScyllaDB Monitoring: ShareChat's Tips

ScyllaDB monitoring provides a lot of useful information. But sometimes it’s not easy to find the root of the problem if something is wrong or even estimate the remaining capacity by the load on the cluster. This talk shares our team's practical tips on: 1) How to find the root of the problem by metrics if ScyllaDB is slow 2) How to interpret the load and plan capacity for the future 3) Compaction strategies and how to choose the right one 4) Important metrics which aren’t available in the default monitoring setup.

GraphRAG for LifeSciences Hands-On with the Clinical Knowledge Graph

Tomaz Bratanic

Graph ML and GenAI Expert - Neo4j

"Choosing proper type of scaling", Olena Syrota

Imagine an IoT processing system that is already quite mature and production-ready and for which client coverage is growing and scaling and performance aspects are life and death questions. The system has Redis, MongoDB, and stream processing based on ksqldb. In this talk, firstly, we will analyze scaling approaches and then select the proper ones for our system.

From Natural Language to Structured Solr Queries using LLMs

This talk draws on experimentation to enable AI applications with Solr. One important use case is to use AI for better accessibility and discoverability of the data: while User eXperience techniques, lexical search improvements, and data harmonization can take organizations to a good level of accessibility, a structural (or “cognitive”) gap remains between the data user needs and the data producer constraints.

That is where AI – and most importantly, Natural Language Processing and Large Language Model techniques – could make a difference. This natural language, conversational engine could facilitate access and usage of the data leveraging the semantics of any data source.

The objective of the presentation is to propose a technical approach and a way forward to achieve this goal.

The key concept is to enable users to express their search queries in natural language, which the LLM then enriches, interprets, and translates into structured queries based on the Solr index’s metadata.

This approach leverages the LLM’s ability to understand the nuances of natural language and the structure of documents within Apache Solr.

The LLM acts as an intermediary agent, offering a transparent experience to users automatically and potentially uncovering relevant documents that conventional search methods might overlook. The presentation will include the results of this experimental work, lessons learned, best practices, and the scope of future work that should improve the approach and make it production-ready.

Dandelion Hashtable: beyond billion requests per second on a commodity server

This slide deck presents DLHT, a concurrent in-memory hashtable. Despite efforts to optimize hashtables, that go as far as sacrificing core functionality, state-of-the-art designs still incur multiple memory accesses per request and block request processing in three cases. First, most hashtables block while waiting for data to be retrieved from memory. Second, open-addressing designs, which represent the current state-of-the-art, either cannot free index slots on deletes or must block all requests to do so. Third, index resizes block every request until all objects are copied to the new index. Defying folklore wisdom, DLHT forgoes open-addressing and adopts a fully-featured and memory-aware closed-addressing design based on bounded cache-line-chaining. This design (1) offers lock-free index operations and deletes that free slots instantly, (2) completes most requests with a single memory access, (3) utilizes software prefetching to hide memory latencies, and (4) employs a novel non-blocking and parallel resizing. In a commodity server and a memory-resident workload, DLHT surpasses 1.6B requests per second and provides 3.5x (12x) the throughput of the state-of-the-art closed-addressing (open-addressing) resizable hashtable on Gets (Deletes).

inQuba Webinar Mastering Customer Journey Management with Dr Graham Hill

HERE IS YOUR WEBINAR CONTENT! 'Mastering Customer Journey Management with Dr. Graham Hill'. We hope you find the webinar recording both insightful and enjoyable.

In this webinar, we explored essential aspects of Customer Journey Management and personalization. Here’s a summary of the key insights and topics discussed:

Key Takeaways:

Understanding the Customer Journey: Dr. Hill emphasized the importance of mapping and understanding the complete customer journey to identify touchpoints and opportunities for improvement.

Personalization Strategies: We discussed how to leverage data and insights to create personalized experiences that resonate with customers.

Technology Integration: Insights were shared on how inQuba’s advanced technology can streamline customer interactions and drive operational efficiency.

GlobalLogic Java Community Webinar #18 “How to Improve Web Application Perfor...

Під час доповіді відповімо на питання, навіщо потрібно підвищувати продуктивність аплікації і які є найефективніші способи для цього. А також поговоримо про те, що таке кеш, які його види бувають та, основне — як знайти performance bottleneck?

Відео та деталі заходу: https://bit.ly/45tILxj

"What does it really mean for your system to be available, or how to define w...

We will talk about system monitoring from a few different angles. We will start by covering the basics, then discuss SLOs, how to define them, and why understanding the business well is crucial for success in this exercise.

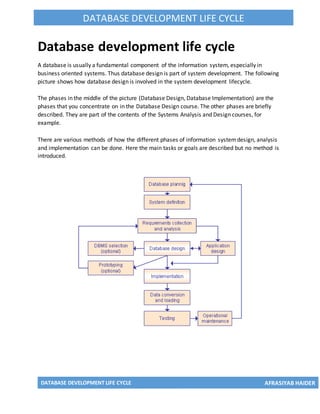

Database development life cycle

- 1. DATABASE DEVELOPMENT LIFE CYCLE AFRASIYAB HAIDER

  A database is usually a fundamental component of an information system, especially in business-oriented systems, so database design is part of system development. The following picture shows how database design fits into the system development lifecycle.

  The phases in the middle of the picture (Database Design, Database Implementation) are the ones the Database Design course concentrates on. The other phases are described only briefly; they are covered in, for example, the Systems Analysis and Design courses.

  There are various methods for carrying out the phases of information system analysis, design and implementation. Here the main tasks and goals of each phase are described, but no particular method is introduced.

- 2. Database Planning

  Database planning covers the activities that allow the stages of the database system development lifecycle to be realized as efficiently and effectively as possible. This phase must be integrated with the overall information system strategy of the organization.

  The first step in database planning is to define the mission statement and objectives for the database system, that is:

  - the major aims of the database system
  - the purpose of the database system
  - the tasks the database system supports
  - the resources of the database system

  Systems Definition

  In the systems definition phase, the scope and boundaries of the database application are described. The description includes:

  - links with the other information systems of the organization
  - what the planned system is going to do now and in the future
  - who the users are now and in the future

  The major user views are also described, i.e. what is required of the database system from the perspective of particular job roles or enterprise application areas.

  Requirements Collection and Analysis

  During this phase, information about the part of the enterprise to be served by the database is collected and analyzed. The results may include, for example:

  - a description of the data used or generated
  - details of how the data is to be used or generated
  - any additional requirements for the new database system

  Database Design

  The database design phase is divided into three steps:

  - conceptual database design
  - logical database design
  - physical database design

- 3. In the conceptual database design phase, a model of the data is constructed that is independent of all physical considerations. The model is based on the requirements specification of the system.

  In the logical database design phase, a model of the data is constructed that is based on a specific data model, e.g. the relational data model, but is independent of any particular database management system.

  In the physical database design phase, a description of the implementation of the database on secondary storage is created. The base relations, indexes, integrity constraints, security, etc. are defined using SQL.

  Database Management System Selection

  This is an optional phase, carried out when a new database management system (DBMS) is needed. A DBMS is a product such as Access, SQL Server, MySQL or Oracle. First the criteria for the new DBMS are defined, then several products are evaluated against the criteria, and finally a recommendation for the selection is made.

  Application Design

  In the application design phase, the user interface and the application programs that use and process the database are designed.

  Prototyping

  The purpose of a prototype is to let users try out a model of the system on the computer in order to identify the features the system should have. There are horizontal and vertical prototypes. A horizontal prototype has many features (e.g. user interfaces), but they are not working. A vertical prototype has very few features, but they are working. See the following picture.

  Implementation

  During the implementation phase, the database and application designs are physically realized. This is the programming phase of systems development.
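The physical design step described above, where base relations, indexes and integrity constraints are defined in SQL and then realized during implementation, can be sketched as follows. This is a minimal illustration, not part of the original slides: it assumes SQLite (via Python's standard `sqlite3` module) as the DBMS, and the `customer` and `customer_order` tables, their columns, and the helper names `create_database` and `violates_constraint` are hypothetical. Security (e.g. user privileges) is left out because SQLite has no privilege system.

```python
import sqlite3

# Hypothetical physical design: two base relations, integrity
# constraints (PRIMARY KEY, UNIQUE, NOT NULL, CHECK, foreign key)
# and one secondary index. All names are assumptions for illustration.
SCHEMA = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    email       TEXT NOT NULL UNIQUE
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    total_cents INTEGER NOT NULL CHECK (total_cents >= 0)
);
CREATE INDEX idx_order_customer ON customer_order(customer_id);
"""


def create_database(path=":memory:"):
    """Create the database and apply the schema (the implementation step)."""
    conn = sqlite3.connect(path)
    # SQLite only enforces foreign keys when this pragma is enabled.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
    return conn


def violates_constraint(conn, sql, params):
    """Return True if the statement is rejected by an integrity constraint."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(sql, params)
    except sqlite3.IntegrityError:
        return True
    return False


if __name__ == "__main__":
    conn = create_database()
    insert_order = (
        "INSERT INTO customer_order (customer_id, total_cents) VALUES (?, ?)"
    )
    # No customer 99 exists yet, so the foreign key rejects this row.
    print(violates_constraint(conn, insert_order, (99, 100)))
```

Declaring the constraints in the schema, rather than only in application code, means that every program using the database is held to the same rules.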
  Data Conversion and Loading

  This phase is needed when a new database replaces an old system. During this phase the existing data is transferred into the new database.
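As a rough sketch of what data conversion and loading can look like, the example below reads a CSV export from an old system, converts each row to the new format, and loads it into the new database, setting aside rows that cannot be converted. Everything specific here is an assumption made for illustration: the legacy column names `CUST_NO` and `EMAIL`, the target `customer` table, the cleaning rules, and the function name `load_legacy_customers`. SQLite is used only because it ships with Python.

```python
import csv
import io
import sqlite3


def load_legacy_customers(conn, legacy_csv):
    """Convert rows from a legacy CSV export and load them into `customer`.

    Returns (loaded, rejected): the number of rows loaded and the list of
    raw rows that failed conversion or violated a constraint.
    """
    # Hypothetical target table in the new database.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customer ("
        " customer_id INTEGER PRIMARY KEY,"
        " email TEXT NOT NULL UNIQUE)"
    )
    loaded, rejected = 0, []
    for row in csv.DictReader(legacy_csv):
        # Conversion: normalize legacy values to the new format.
        raw_id = (row.get("CUST_NO") or "").strip()
        email = (row.get("EMAIL") or "").strip().lower()
        if not raw_id.isdigit() or "@" not in email:
            rejected.append(row)
            continue
        try:
            with conn:  # one transaction per row: commit or roll back
                conn.execute(
                    "INSERT INTO customer (customer_id, email) VALUES (?, ?)",
                    (int(raw_id), email),
                )
            loaded += 1
        except sqlite3.IntegrityError:
            # e.g. a duplicate email that the old system tolerated
            rejected.append(row)
    return loaded, rejected


if __name__ == "__main__":
    legacy = io.StringIO("CUST_NO,EMAIL\n1, Ann@Example.com \n2,bob@example.com\n")
    connection = sqlite3.connect(":memory:")
    print(load_legacy_customers(connection, legacy))
```

Collecting rejected rows instead of stopping at the first bad one lets the old data be reviewed and corrected before a second loading pass.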

- 4. Testing

  Before the new system goes live, it should be thoroughly tested. The goal of testing is to find errors, not to prove that the software works well.

  Operational Maintenance

  Operational maintenance is the process of monitoring and maintaining the database system. Monitoring means observing the performance of the system; if performance falls below an acceptable level, tuning or reorganization of the database may be required. Maintaining and upgrading the database system means that, when new requirements arise, they are taken through the development lifecycle again.

  Source: Connolly, Begg. 2005. Database Systems. A Practical Approach to Design, Implementation, and Management. Addison Wesley. Chapter 9. Database Planning, Design and Administration.