
Lecture Slides.pdf

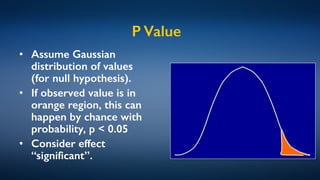

- 1. P Value • Assume a Gaussian distribution of values under the null hypothesis. • If the observed value falls in the orange region, a value this extreme can occur by chance with probability p < 0.05. • Consider the effect “significant”.
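The Gaussian p-value test on this slide can be sketched in a few lines. This is a minimal sketch, assuming a two-sided test where the null distribution's mean and standard deviation are known. The function names `two_sided_p_value` and `is_significant` are hypothetical. The slide's figure might shade only one tail, in which case the one-sided p-value is half of this value.

```python
import math


def two_sided_p_value(observed: float, mean: float, sd: float) -> float:
    """P-value of a value at least this far from the mean under a Normal(mean, sd) null.

    Assumes the null parameters are known (hypothetical helper, two-sided test).
    """
    z = abs(observed - mean) / sd
    # For a standard normal Z, P(|Z| >= z) = erfc(z / sqrt(2)).
    return math.erfc(z / math.sqrt(2))


def is_significant(p: float, alpha: float = 0.05) -> bool:
    # The slide's convention: call the effect "significant" when p < 0.05.
    return p < alpha


p = two_sided_p_value(observed=2.1, mean=0.0, sd=1.0)
print(round(p, 4), is_significant(p))
```

A z-score of about 1.96 sits right at the 0.05 boundary. Nothing in this calculation checks whether the Gaussian assumption actually holds.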

- 2. Many Assumptions • Is the distribution nicely bell-shaped? • Did we test only once?
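One quick way to see whether data is "nicely bell-shaped" is to compute its sample skewness, which is 0 for a perfectly symmetric sample. This is a minimal sketch using the biased moment estimator g1 = m3 / m2^1.5. The helper name `sample_skewness` is hypothetical. The sketch gives only a rough diagnostic and is not a formal normality test.

```python
def sample_skewness(xs: list[float]) -> float:
    """Moment-based skewness g1 = m3 / m2**1.5 (biased estimator, hypothetical helper)."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n  # second central moment
    m3 = sum((x - mean) ** 3 for x in xs) / n  # third central moment
    return m3 / m2 ** 1.5


print(sample_skewness([1, 2, 3, 4, 5]))    # symmetric sample
print(sample_skewness([1, 1, 1, 2, 10]))   # one large outlier: right-skewed
```

A strongly skewed sample suggests that a Gaussian-based p-value may be misleading.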

- 3. p-Hacking • Multiple hypothesis testing • For a single hypothesis, a p-value of 0.05 says that, if the hypothesis is not true (there is no real effect), there is only a 5% probability of observing values at least this extreme by chance. • What if you test 100 independent hypotheses? • One gene chip can have 20,000 genes.
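The arithmetic behind "what if you test 100 independent hypotheses?" can be worked out directly. This is a minimal sketch, assuming every null hypothesis is true, the tests are independent, and each uses a threshold of α = 0.05. The function names are hypothetical.

```python
def family_wise_error_rate(m: int, alpha: float = 0.05) -> float:
    """P(at least one false positive) among m independent tests when all nulls are true."""
    return 1 - (1 - alpha) ** m


def expected_false_positives(m: int, alpha: float = 0.05) -> float:
    """Expected number of tests that pass p < alpha purely by chance."""
    return m * alpha


for m in (1, 100, 20_000):
    print(m, round(family_wise_error_rate(m), 4), expected_false_positives(m))
```

With 100 tests, the chance of at least one spurious "significant" result is about 99.4%, and about 5 false positives are expected. For a chip with 20,000 genes, the expected count rises to about 1,000.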

- 4. Unreported Failures • For independent hypotheses tested in parallel, statistical corrections for multiple testing have been developed. • What about sequential hypotheses, each slightly different from the previous one? • E.g., a pharma company develops dozens of drug candidates and tests them independently. – Most fail; a few succeed.
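Two standard examples of multiple-testing corrections are the Bonferroni and Holm procedures. The slides do not name a specific method, so these are illustrative choices. This is a minimal sketch that returns a per-hypothesis "reject the null" flag at family-wise level α.

```python
def bonferroni(p_values: list[float], alpha: float = 0.05) -> list[bool]:
    """Reject H_i when p_i <= alpha / m."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def holm(p_values: list[float], alpha: float = 0.05) -> list[bool]:
    """Holm step-down procedure: compare sorted p-values to alpha / (m - rank)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        # rank is 0-based, so the smallest p-value is compared with alpha / m.
        if p_values[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # stop at the first hypothesis that is not rejected
    return reject


ps = [0.01, 0.015, 0.04]
print(bonferroni(ps), holm(ps))
```

Both corrections depend on knowing m, the true number of tests. When failed candidates go unreported, m is understated and no correction can recover the lost information.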

- 5. Exploratory Analysis • What if you devised your hypothesis to fit the observed data? • Exploration is often the first phase of data analysis. • Keep exploratory (training) data separate from the test data on which evaluation is reported.
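The advice to keep exploratory data separate from test data can be sketched as a seeded random split, made once before any exploration begins. The helper name `explore_test_split` and the 30% test fraction are hypothetical choices for illustration.

```python
import random


def explore_test_split(records, test_fraction: float = 0.3, seed: int = 0):
    """Shuffle once with a fixed seed, then hold out a test set that exploration never touches."""
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    shuffled = list(records)
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    explore, test = shuffled[n_test:], shuffled[:n_test]
    return explore, test


explore, test = explore_test_split(range(100))
print(len(explore), len(test))
```

Hypotheses are then formed on `explore`, and only the final, pre-specified test is run on `test` and reported.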

- 6. Algorithmic Fairness • Humans have many biases. – No human is perfectly fair, even with the best of intentions. • Biases in algorithms are usually easier to measure, even if the outcome is no fairer. • Mathematical definitions of fairness can be applied to prove an algorithm fair, at least within the scope of their assumptions.
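Demographic parity is one common mathematical definition of fairness. It compares the rate of positive decisions across groups. The slides do not commit to a particular definition, so this is only one illustrative choice. This is a minimal sketch, assuming binary predictions (0/1) and a single group label per individual. The function names are hypothetical.

```python
def positive_rate(preds: list[int]) -> float:
    return sum(preds) / len(preds)


def demographic_parity_difference(preds: list[int], groups: list[str]):
    """Largest gap in positive-prediction rate between any two groups (0 means parity)."""
    by_group: dict[str, list[int]] = {}
    for p, g in zip(preds, groups):
        by_group.setdefault(g, []).append(p)
    rates = {g: positive_rate(ps) for g, ps in by_group.items()}
    return max(rates.values()) - min(rates.values()), rates


gap, rates = demographic_parity_difference(
    [1, 1, 0, 0, 1, 0, 0, 0], ["A"] * 4 + ["B"] * 4
)
print(gap, rates)
```

A gap of 0 satisfies this definition. That result holds only within its assumptions, because other fairness definitions can still be violated.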

- 7. Attributions • Cartoon by Scott Hampson is licensed CC BY-NC-ND. • Headline from the Wall Street Journal Blog is reproduced as Fair Use. • Staples company logo is reproduced as Fair Use. • All other graphics are the creation of the author.