
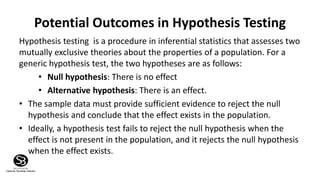

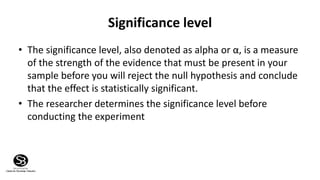

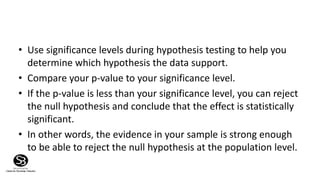

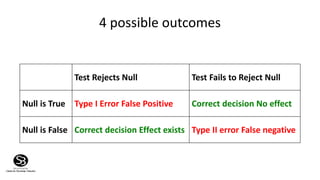

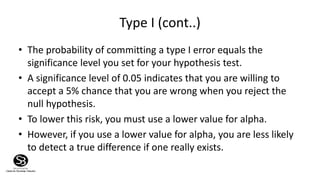

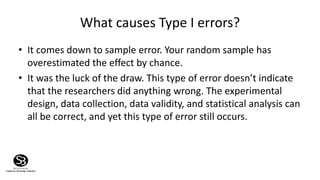

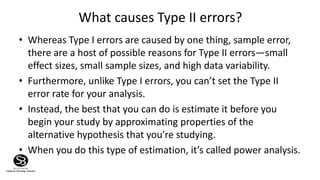

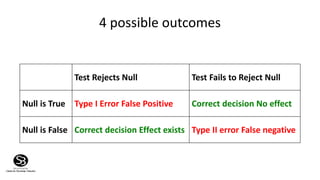

The document covers the fundamentals of hypothesis testing in research, explaining significance levels, p-values, sampling error, and the two types of error in statistical tests. A hypothesis test weighs two competing claims about a population property, the null hypothesis (which assumes no effect) and the alternative hypothesis, and asks whether the sample provides enough evidence to reject the null. The document emphasizes setting an appropriate significance level to balance the risk of a Type I error (rejecting a true null hypothesis) against the risk of a Type II error (failing to reject a false one).
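To make the relationship between the p-value and the significance level concrete, here is a minimal sketch of a two-sided one-sample z-test using only the Python standard library. The choice of a z-test with a known population standard deviation, the function names `two_sided_z_test` and `decide`, and the sample values are all assumptions made for illustration; none of them come from the presentation itself.

```python
from math import sqrt
from statistics import NormalDist, mean


def two_sided_z_test(sample, mu0, sigma):
    """Return (z, p_value) for H0: population mean == mu0.

    Assumes the population standard deviation `sigma` is known,
    which is what makes a z-test (rather than a t-test) applicable.
    """
    n = len(sample)
    # Standardize the gap between the sample mean and the hypothesized mean.
    z = (mean(sample) - mu0) / (sigma / sqrt(n))
    # Two-sided p-value: probability of a result at least this extreme under H0.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def decide(p_value, alpha=0.05):
    """Compare the p-value with the significance level alpha."""
    return "reject H0" if p_value < alpha else "fail to reject H0"


# Invented measurements, for illustration only.
sample = [5.1, 4.9, 5.6, 5.8, 5.4, 5.2, 5.7, 5.5, 5.3]
z, p = two_sided_z_test(sample, mu0=5.0, sigma=0.5)

for alpha in (0.05, 0.01):
    print(f"alpha={alpha}: z={z:.3f}, p={p:.4f} -> {decide(p, alpha)}")
```

With this invented sample the same p-value leads to rejection at a significance level of 0.05 but not at 0.01. A stricter level lowers the chance of a Type I error, but it also makes it harder to detect a real effect, which raises the chance of a Type II error.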