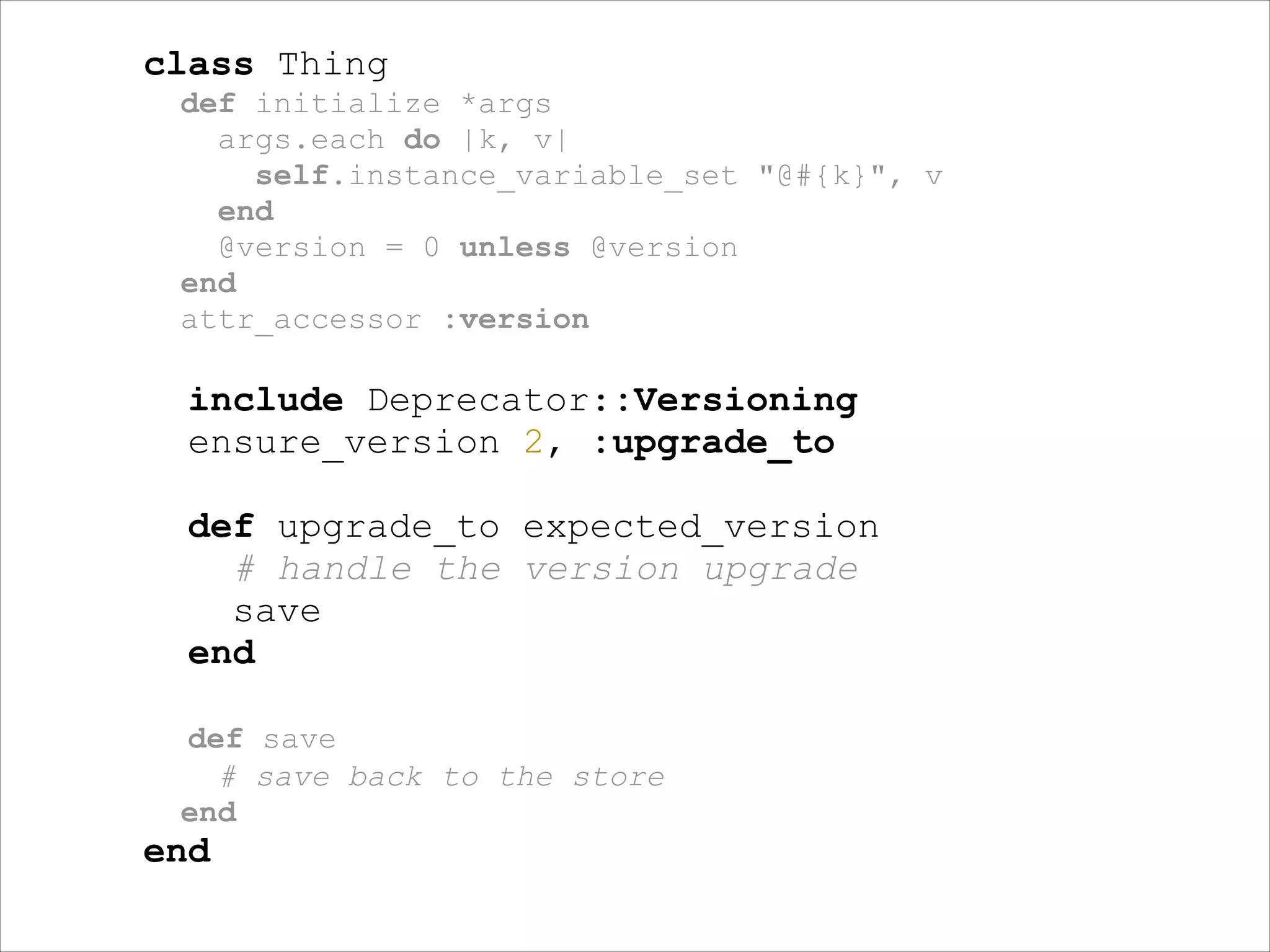

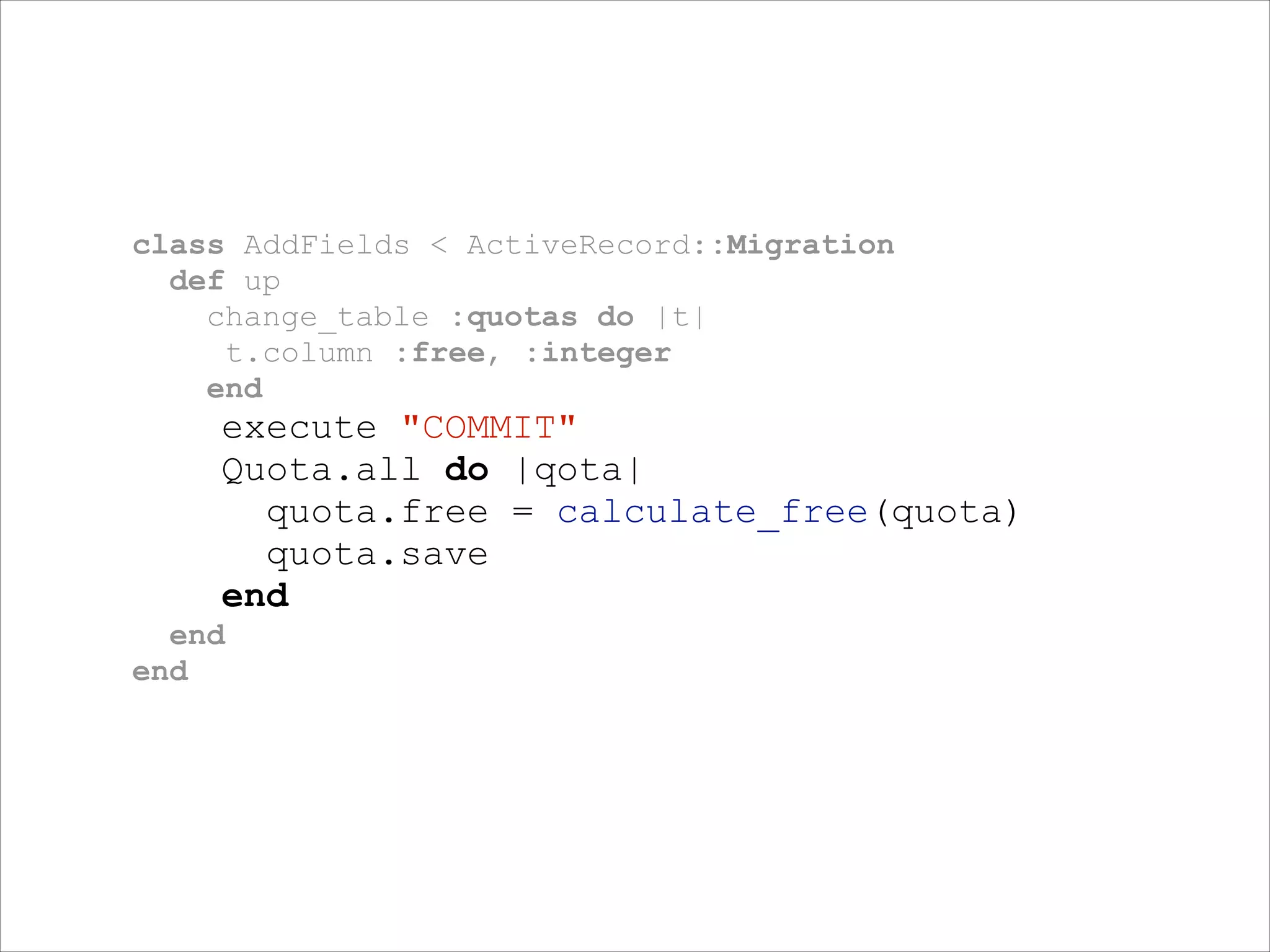

The document discusses evolving schemas in NoSQL databases. It describes starting with a simple data structure and search index, then enhancing it to support dynamic filtering and cached previews without hitting the main data store. It also covers approaches for migrating data to a new format, such as adding new fields, while the system is live using techniques like versioning the data and writing upgrade functions. Finally, it recommends some lessons learned, such as that schemaless does not mean no schema, changes should be painless, and agile code needs agile data.

![module DB

STORE = {

keyOne: [1, 2],

keyTwo: [1, 3, 4]

}

!

def self.find_all keys

keys.map { |k| STORE.fetch(k.to_sym, []) }

end

end](https://image.slidesharecdn.com/yougotschemainmyjson-140202075136-phpapp02/75/You-got-schema-in-my-json-9-2048.jpg)

![def get_search_results keys

results = DB::find_all(keys)

results.flatten.uniq

end

!

get_search_results ["keyOne", “keyTwo"]

!

# => [1, 2, 3, 4]](https://image.slidesharecdn.com/yougotschemainmyjson-140202075136-phpapp02/75/You-got-schema-in-my-json-10-2048.jpg)

![module DB

STORE = {

keyOne: [{id:

{id:

keyTwo: [{id:

{id:

{id:

}

1,

2,

1,

3,

4,

prop:

prop:

prop:

prop:

prop:

"bar"},

"foo"}],

"bar"},

"bar"},

"bar"}]

!

def self.find_all keys, prop

keys.map { |k|

STORE.fetch(k.to_sym, []).map { |e|

e if e[:prop] == prop

}.compact

}

# => [[{:id=>1, :prop=>”bar"}], …]

end

end](https://image.slidesharecdn.com/yougotschemainmyjson-140202075136-phpapp02/75/You-got-schema-in-my-json-16-2048.jpg)

![def get_search_results keys, prop = "bar"

results = DB::find_all(keys, prop)

results.flatten.uniq.map { |e| e[:id] }

end

!

get_search_results ["keyOne", “keyTwo"]

!

# => [1, 3, 4]](https://image.slidesharecdn.com/yougotschemainmyjson-140202075136-phpapp02/75/You-got-schema-in-my-json-21-2048.jpg)

![module DB

STORE = {

keyOne: [{id:

{id:

keyTwo: [{id:

{id:

{id:

}

1,

2,

1,

3,

4,

prop:

prop:

prop:

prop:

prop:

"bar", vsn: 2, free: 20 },

"foo", vsn: 2, free: 10 }],

"bar"},

"bar"},

"bar"}]

!

def self.find_all keys, prop

keys.map { |k|

STORE.fetch(k.to_sym, []).map { |e|

e if e[:prop] == prop

}.compact

}

end

end](https://image.slidesharecdn.com/yougotschemainmyjson-140202075136-phpapp02/75/You-got-schema-in-my-json-38-2048.jpg)

![def is_version2? data; data[:vsn] == 2; end

def get_free id; 20; end

def save data; data; end

!

def transfrom_to_v2 data

return data if is_version2?(data)

!

data[:vsn] = 2

data[:free] = calculate_free(data[:id])

save data

end](https://image.slidesharecdn.com/yougotschemainmyjson-140202075136-phpapp02/75/You-got-schema-in-my-json-39-2048.jpg)

![def get_search_results keys

results = DB::find_all(keys, "bar")

results.flatten

.map { |data| transfrom_to_v2 data }

.uniq

end

!

get_search_results ["keyOne", “keyTwo"]

!

# =>

[{:id=>1, :prop=>"bar", :vsn=>2, :free=>20},

{:id=>3, :prop=>"bar", :vsn=>2, :free=>20},

{:id=>4, :prop=>"bar", :vsn=>2, :free=>20}]](https://image.slidesharecdn.com/yougotschemainmyjson-140202075136-phpapp02/75/You-got-schema-in-my-json-40-2048.jpg)