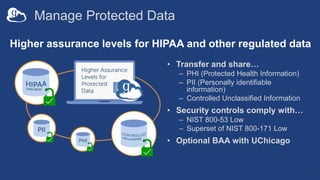

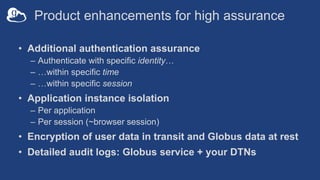

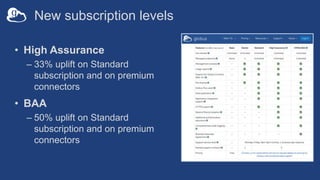

Globus reports over 6,900 active shared endpoints and 675 petabytes of data transferred. It offers enhanced security features for high-assurance data management, including compliance with HIPAA and NIST standards, and has introduced new subscription levels along with price increases. Upcoming development focuses on enhancing the platform's core transfer capabilities, building interoperable applications, and transitioning to an open-source model that allows more customizable solutions.